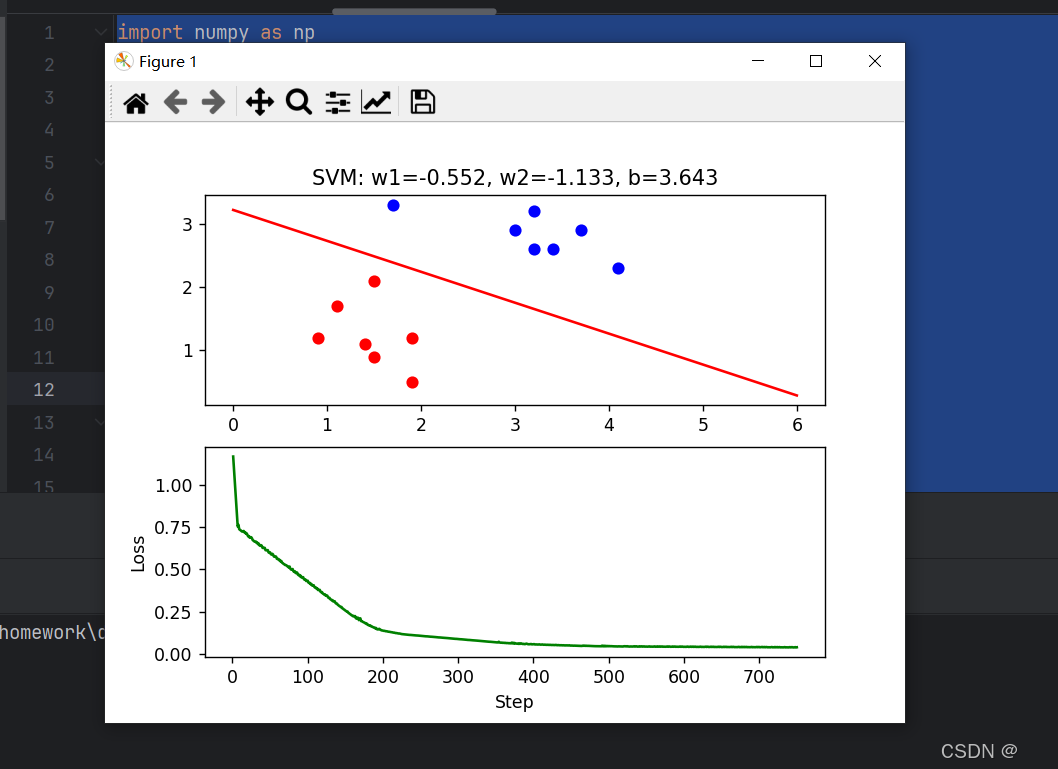

Custom dataset: SVM classification with the svm package in scikit-learn

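Code

The script below trains a linear SVM on the custom dataset by subgradient descent on the hinge loss, written directly in NumPy, and redraws the decision boundary and the loss curve every 50 steps.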

    import numpy as np
    import matplotlib.pyplot as plt

    # Custom dataset: two classes of 2-D points
    class1_points = np.array([[1.9, 1.2], [1.5, 2.1], [1.9, 0.5], [1.5, 0.9],
                              [0.9, 1.2], [1.1, 1.7], [1.4, 1.1]])
    class2_points = np.array([[3.2, 3.2], [3.7, 2.9], [3.2, 2.6], [1.7, 3.3],
                              [3.4, 2.6], [4.1, 2.3], [3.0, 2.9]])

    # Split the features into two vectors; labels are +1 (class 1) and -1 (class 2)
    x1_data = np.concatenate((class1_points[:, 0], class2_points[:, 0]))
    x2_data = np.concatenate((class1_points[:, 1], class2_points[:, 1]))
    y = np.concatenate((np.ones(class1_points.shape[0]),
                        -np.ones(class2_points.shape[0])))

    # Initial parameters and hyperparameters
    w1 = 0.1
    w2 = 0.1
    b = 0.0
    learning_rate = 0.05
    num_iterations = 1000
    display_frequency = 50  # redraw the plots every 50 steps

    fig, (ax1, ax2) = plt.subplots(2, 1)
    step_list = []    # step indices for the loss curve
    loss_values = []  # mean hinge loss at each step

    for n in range(1, num_iterations + 1):
        # Hinge loss per sample: max(0, 1 - y * (w1*x1 + w2*x2 + b))
        z = w1 * x1_data + w2 * x2_data + b
        loss = np.maximum(0, 1 - y * z)
        hinge_loss = np.mean(loss)
        loss_values.append(hinge_loss)
        step_list.append(n)

        # Subgradient: only samples with positive loss contribute
        gradient_w1 = 0.0
        gradient_w2 = 0.0
        gradient_b = 0.0
        for i in range(len(y)):
            if loss[i] > 0:
                gradient_w1 += -y[i] * x1_data[i]
                gradient_w2 += -y[i] * x2_data[i]
                gradient_b += -y[i]
        gradient_w1 /= len(y)
        gradient_w2 /= len(y)
        gradient_b /= len(y)

        # Gradient descent update
        w1 -= learning_rate * gradient_w1
        w2 -= learning_rate * gradient_w2
        b -= learning_rate * gradient_b

        if n % display_frequency == 0 or n == 1:
            # Skip drawing when the boundary is (almost) vertical
            if np.abs(w2) < 1e-5:
                continue
            # Decision boundary: w1*x1 + w2*x2 + b = 0
            x1_min, x1_max = 0, 6
            x2_min = -(w1 * x1_min + b) / w2
            x2_max = -(w1 * x1_max + b) / w2

            ax1.clear()
            ax1.scatter(x1_data[:len(class1_points)], x2_data[:len(class1_points)],
                        c='red', label='Class 1')
            ax1.scatter(x1_data[len(class1_points):], x2_data[len(class1_points):],
                        c='blue', label='Class 2')
            ax1.plot((x1_min, x1_max), (x2_min, x2_max), 'r-')
            ax1.legend()
            # Format directly so this works for Python floats and NumPy scalars
            ax1.set_title(f"SVM: w1={w1:.3f}, w2={w2:.3f}, b={b:.3f}")

            ax2.clear()
            ax2.plot(step_list, loss_values, 'g-')
            ax2.set_xlabel("Step")
            ax2.set_ylabel("Loss")

            # Refresh the figure
            plt.pause(1)

    plt.show()

Results
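To check the result numerically rather than only from the plot, here is a minimal sketch that repeats the same training with a vectorized subgradient and reports the hinge loss before and after training together with the training accuracy. The names `X`, `hinge_loss_and_grad` and `train` are assumptions introduced for this illustration; the data, initial weights, learning rate and iteration count are taken from the script above.

```python
import numpy as np

# Same points as the training script above.
class1_points = np.array([[1.9, 1.2], [1.5, 2.1], [1.9, 0.5], [1.5, 0.9],
                          [0.9, 1.2], [1.1, 1.7], [1.4, 1.1]])
class2_points = np.array([[3.2, 3.2], [3.7, 2.9], [3.2, 2.6], [1.7, 3.3],
                          [3.4, 2.6], [4.1, 2.3], [3.0, 2.9]])
X = np.vstack((class1_points, class2_points))
y = np.concatenate((np.ones(len(class1_points)), -np.ones(len(class2_points))))


def hinge_loss_and_grad(w, b, X, y):
    """Return the mean hinge loss and one subgradient with respect to w and b."""
    margins = y * (X @ w + b)
    loss = np.maximum(0.0, 1.0 - margins)
    active = loss > 0  # samples that violate the margin
    grad_w = -(y[active][:, None] * X[active]).sum(axis=0) / len(y)
    grad_b = -y[active].sum() / len(y)
    return loss.mean(), grad_w, grad_b


def train(X, y, learning_rate=0.05, num_iterations=1000):
    """Plain subgradient descent, starting from the same initial values."""
    w = np.array([0.1, 0.1])
    b = 0.0
    for _ in range(num_iterations):
        _, grad_w, grad_b = hinge_loss_and_grad(w, b, X, y)
        w = w - learning_rate * grad_w
        b = b - learning_rate * grad_b
    return w, b


initial_loss, initial_grad_w, initial_grad_b = hinge_loss_and_grad(
    np.array([0.1, 0.1]), 0.0, X, y)
w, b = train(X, y)
final_loss, _, _ = hinge_loss_and_grad(w, b, X, y)
accuracy = np.mean(np.sign(X @ w + b) == y)

print(f"hinge loss: {initial_loss:.4f} -> {final_loss:.4f}")
print(f"w = {w}, b = {b:.3f}, training accuracy = {accuracy:.2f}")
```

The boolean mask `active` plays the role of the `if loss[i] > 0` test in the loop version, so both variants follow the same update rule.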

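The title mentions the svm package in scikit-learn, while the script in the Code section is written from scratch in NumPy. For comparison, here is a minimal sketch that fits `sklearn.svm.SVC` with a linear kernel on the same points, assuming scikit-learn is installed. The value `C=1.0` and the two query points are illustrative choices, not taken from the original post.

```python
import numpy as np
from sklearn.svm import SVC

# Same custom dataset as in the Code section.
class1_points = np.array([[1.9, 1.2], [1.5, 2.1], [1.9, 0.5], [1.5, 0.9],
                          [0.9, 1.2], [1.1, 1.7], [1.4, 1.1]])
class2_points = np.array([[3.2, 3.2], [3.7, 2.9], [3.2, 2.6], [1.7, 3.3],
                          [3.4, 2.6], [4.1, 2.3], [3.0, 2.9]])
X = np.vstack((class1_points, class2_points))
y = np.concatenate((np.ones(len(class1_points)), -np.ones(len(class2_points))))

# Linear soft-margin SVM; C=1.0 is an assumed, illustrative setting.
clf = SVC(kernel="linear", C=1.0)
clf.fit(X, y)

# For a linear kernel the boundary is w1*x1 + w2*x2 + b = 0.
w1, w2 = clf.coef_[0]
b = clf.intercept_[0]
print(f"w1={w1:.3f}, w2={w2:.3f}, b={b:.3f}")
print("training accuracy:", clf.score(X, y))

# Classify two hypothetical query points, one near each cluster.
predictions = clf.predict(np.array([[1.0, 1.0], [3.5, 3.0]]))
print("predictions:", predictions)
```

The fitted `w1`, `w2` and `b` can be passed to the same boundary-plotting code as the hand-written version, since both describe a line in the same form.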