Preface

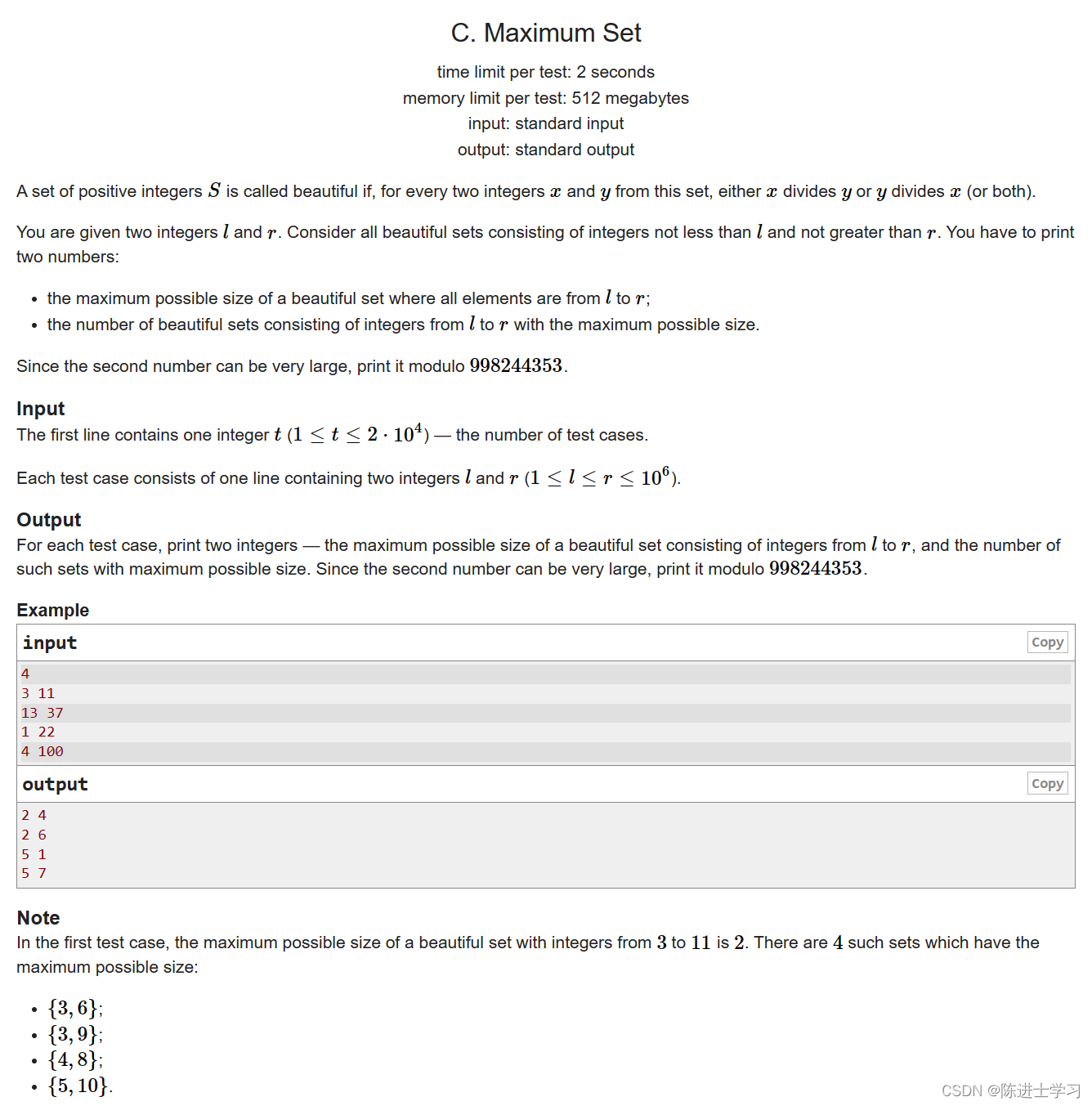

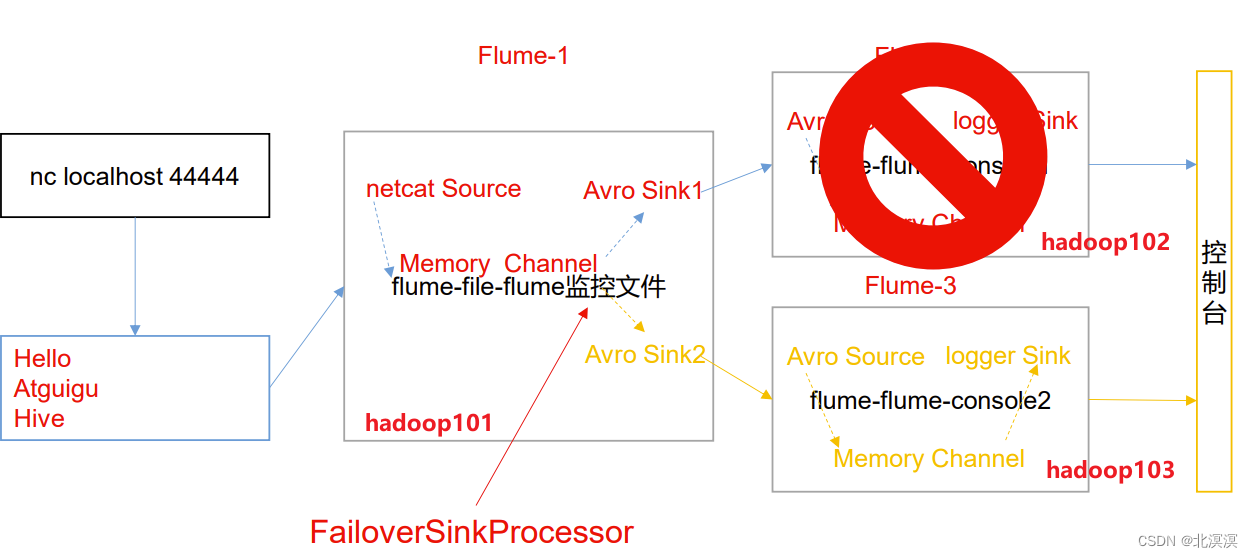

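In this section we build a failover example for Flume data collection using three servers. One server collects data sent through nc, a sink processor in failover mode handles the failover of the collected data, and the Flume agents pass events to each other over Avro. The overall architecture is shown below: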

Main Content

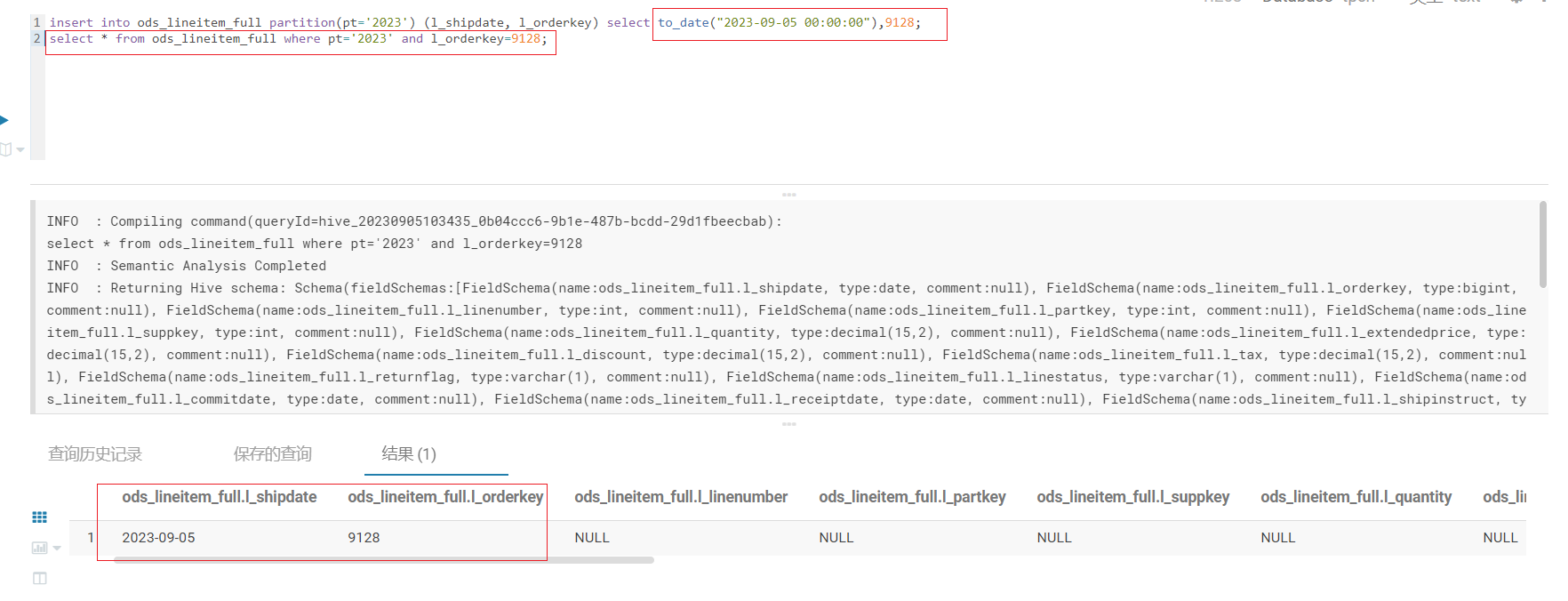

① On the hadoop101 server, create the configuration file job-nc-flume-avro.conf in the /opt/module/apache-flume-1.9.0/job directory. This agent listens for nc data and forwards it through avro sinks.

- Contents of job-nc-flume-avro.conf:

      # Name the components on this agent
      a1.sources = r1
      a1.channels = c1
      a1.sinkgroups = g1
      a1.sinks = k1 k2

      # Describe/configure the source
      a1.sources.r1.type = netcat
      a1.sources.r1.bind = localhost
      a1.sources.r1.port = 44444

      # Configure the failover sink group (a larger priority value wins)
      a1.sinkgroups.g1.processor.type = failover
      a1.sinkgroups.g1.processor.priority.k1 = 5
      a1.sinkgroups.g1.processor.priority.k2 = 10
      a1.sinkgroups.g1.processor.maxpenalty = 10000

      # Describe the sinks
      a1.sinks.k1.type = avro
      a1.sinks.k1.hostname = hadoop102
      a1.sinks.k1.port = 4141
      a1.sinks.k2.type = avro
      a1.sinks.k2.hostname = hadoop103
      a1.sinks.k2.port = 4142

      # Describe the channel
      a1.channels.c1.type = memory
      a1.channels.c1.capacity = 1000
      a1.channels.c1.transactionCapacity = 100

      # Bind the source and sinks to the channel
      a1.sources.r1.channels = c1
      a1.sinkgroups.g1.sinks = k1 k2
      a1.sinks.k1.channel = c1
      a1.sinks.k2.channel = c1
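
To make the priority settings concrete, here is a minimal Python sketch of how a failover group chooses its active sink: among the sinks that are still live, the one with the largest priority value gets the events. It is a simplified model for illustration, not Flume's implementation; the function name `pick_active_sink` is hypothetical, and only the sink names and priorities come from the configuration above.

```python
# Simplified model of a failover sink group (illustration only, not Flume code).
# Sink names and priorities mirror job-nc-flume-avro.conf.

def pick_active_sink(priorities, failed):
    """Return the live sink with the largest priority, or None if all have failed."""
    live = {name: prio for name, prio in priorities.items() if name not in failed}
    if not live:
        return None
    return max(live, key=live.get)


# k1 targets hadoop102:4141, k2 targets hadoop103:4142
priorities = {"k1": 5, "k2": 10}

print(pick_active_sink(priorities, failed=set()))         # k2
print(pick_active_sink(priorities, failed={"k2"}))        # k1
print(pick_active_sink(priorities, failed={"k1", "k2"}))  # None
```

With both sinks healthy the model selects k2, which is why hadoop103 is expected to receive the data first in the test later on.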

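② On the hadoop102 server, create the configuration file job-avro-flume-console102.conf in the /opt/module/apache-flume-1.9.0/job directory. This agent receives data through an avro source and prints it to the console.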

- Contents of job-avro-flume-console102.conf:

      # Name the components on this agent
      a1.sources = r1
      a1.sinks = k1
      a1.channels = c1

      # Describe/configure the source
      a1.sources.r1.type = avro
      a1.sources.r1.bind = hadoop102
      a1.sources.r1.port = 4141

      # Describe the sink
      a1.sinks.k1.type = logger

      # Describe the channel
      a1.channels.c1.type = memory
      a1.channels.c1.capacity = 1000
      a1.channels.c1.transactionCapacity = 100

      # Bind the source and sink to the channel
      a1.sources.r1.channels = c1
      a1.sinks.k1.channel = c1

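③ On the hadoop103 server, create the configuration file job-avro-flume-console103.conf in the /opt/module/apache-flume-1.9.0/job directory. This agent receives data through an avro source and prints it to the console.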

- Contents of job-avro-flume-console103.conf:

      # Name the components on this agent
      a1.sources = r1
      a1.sinks = k1
      a1.channels = c1

      # Describe/configure the source
      a1.sources.r1.type = avro
      a1.sources.r1.bind = hadoop103
      a1.sources.r1.port = 4142

      # Describe the sink
      a1.sinks.k1.type = logger

      # Describe the channel
      a1.channels.c1.type = memory
      a1.channels.c1.capacity = 1000
      a1.channels.c1.transactionCapacity = 100

      # Bind the source and sink to the channel
      a1.sources.r1.channels = c1
      a1.sinks.k1.channel = c1
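
Each avro sink on hadoop101 has to point at the exact host and port on which a downstream avro source is bound, and a mismatch here is an easy mistake to make. The following Python sketch checks that pairing on excerpts copied from the three files. The helper names `parse_properties` and `avro_endpoints` are hypothetical, and the parser handles only simple `key = value` lines, which is all these files use.

```python
# Sanity check (illustrative sketch): every avro sink target on hadoop101
# should match an avro source bind address on hadoop102 or hadoop103.

UPSTREAM = """
a1.sinks.k1.type = avro
a1.sinks.k1.hostname = hadoop102
a1.sinks.k1.port = 4141
a1.sinks.k2.type = avro
a1.sinks.k2.hostname = hadoop103
a1.sinks.k2.port = 4142
"""

DOWNSTREAM = [
    """
a1.sources.r1.type = avro
a1.sources.r1.bind = hadoop102
a1.sources.r1.port = 4141
""",
    """
a1.sources.r1.type = avro
a1.sources.r1.bind = hadoop103
a1.sources.r1.port = 4142
""",
]


def parse_properties(text):
    """Parse simple 'key = value' lines, skipping blanks and # comments."""
    props = {}
    for line in text.splitlines():
        line = line.strip()
        if not line or line.startswith("#"):
            continue
        key, _, value = line.partition("=")
        props[key.strip()] = value.strip()
    return props


def avro_endpoints(props, kind, host_key):
    """Collect (host, port) for every avro component under a1.<kind>."""
    prefix = f"a1.{kind}."
    found = set()
    for key, value in props.items():
        if key.startswith(prefix) and key.endswith(".type") and value == "avro":
            name = key[len(prefix):-len(".type")]
            host = props[f"{prefix}{name}.{host_key}"]
            port = int(props[f"{prefix}{name}.port"])
            found.add((host, port))
    return found


sink_targets = avro_endpoints(parse_properties(UPSTREAM), "sinks", "hostname")
source_binds = set()
for text in DOWNSTREAM:
    source_binds |= avro_endpoints(parse_properties(text), "sources", "bind")

print("sinks without a matching source:", sorted(sink_targets - source_binds))
print("sources without a matching sink:", sorted(source_binds - sink_targets))
```

Both lists come out empty for the configuration in this article, since k1 pairs with hadoop102:4141 and k2 with hadoop103:4142.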

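④ Start the Flume job job-avro-flume-console102.conf on hadoop102. The paths in the command are relative, so run it from the Flume home directory, /opt/module/apache-flume-1.9.0.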

- Command:

      bin/flume-ng agent -c conf/ -n a1 -f job/job-avro-flume-console102.conf -Dflume.root.logger=INFO,console

⑤ Start the Flume job job-avro-flume-console103.conf on hadoop103

- Command:

      bin/flume-ng agent -c conf/ -n a1 -f job/job-avro-flume-console103.conf -Dflume.root.logger=INFO,console

⑥ Start the Flume job job-nc-flume-avro.conf on hadoop101

- Command:

      bin/flume-ng agent -c conf/ -n a1 -f job/job-nc-flume-avro.conf -Dflume.root.logger=INFO,console

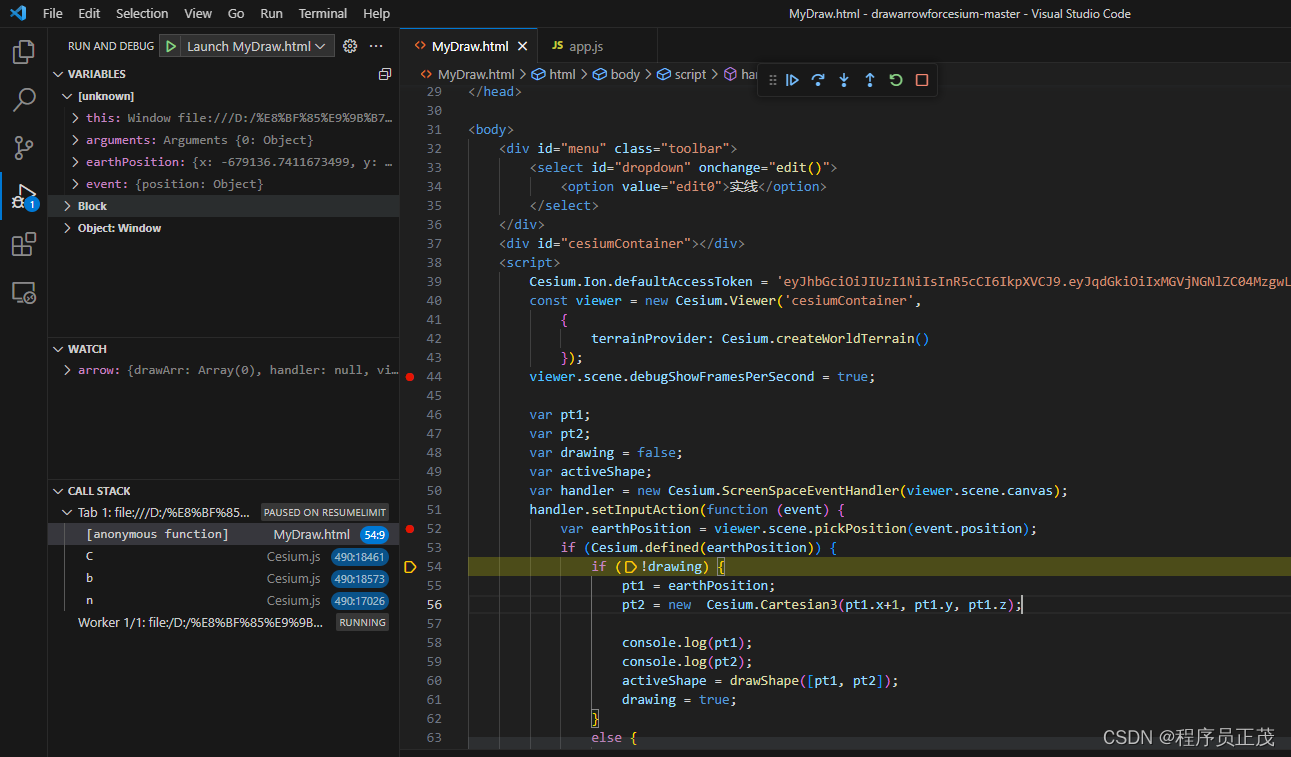
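
The two downstream agents are started first because the avro sinks on hadoop101 connect to hadoop102:4141 and hadoop103:4142. If you want to confirm that both avro sources are listening before moving on, a small TCP probe is enough. The sketch below uses only the Python standard library; the function name `is_port_open` is hypothetical, and the host names assume the same name resolution the Flume agents rely on.

```python
import socket


def is_port_open(host, port, timeout=2.0):
    """Return True if a TCP connection to host:port succeeds within the timeout."""
    try:
        with socket.create_connection((host, port), timeout=timeout):
            return True
    except OSError:
        # Covers refused connections, timeouts, and unresolved host names.
        return False


if __name__ == "__main__":
    # Endpoints taken from the avro source settings in this article.
    for host, port in [("hadoop102", 4141), ("hadoop103", 4142)]:
        state = "listening" if is_port_open(host, port) else "not reachable"
        print(f"{host}:{port} is {state}")
```

Run it from hadoop101. A port reported as not reachable usually means the corresponding agent has not started yet or is bound to a different address.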

⑦ On hadoop101, use nc to send data to the local listening port 44444

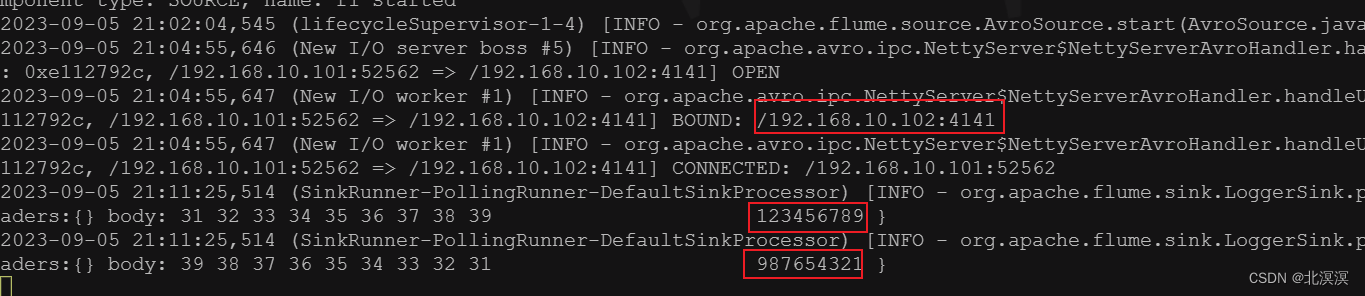
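
If nc is not installed, the same test data can be sent with a few lines of Python. This is a sketch under a few assumptions: it runs on hadoop101, where the netcat source is bound to localhost:44444, each newline-terminated line becomes one event, and the source answers each event with an acknowledgement line (its default behaviour). The function name `send_lines` is hypothetical.

```python
import socket


def send_lines(lines, host="localhost", port=44444, timeout=5.0):
    """Send each string as one newline-terminated line and return the server's reply."""
    payload = "".join(line + "\n" for line in lines).encode("utf-8")
    with socket.create_connection((host, port), timeout=timeout) as conn:
        conn.sendall(payload)
        conn.shutdown(socket.SHUT_WR)  # tell the server no more input is coming
        chunks = []
        while True:
            data = conn.recv(4096)
            if not data:  # server closed the connection
                break
            chunks.append(data)
    return b"".join(chunks).decode("utf-8", errors="replace")


if __name__ == "__main__":
    try:
        reply = send_lines(["hello", "flume failover test"])
        print("server replied:", reply.split())
    except OSError as exc:
        print(f"could not talk to the netcat source: {exc}")
```

Each line sent this way should show up as one event in the console of whichever downstream agent is currently active.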

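- Because the avro sink that targets hadoop103 (k2, priority 10) has a higher priority than the avro sink that targets hadoop102 (k1, priority 5), hadoop103 receives the data sent through nc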

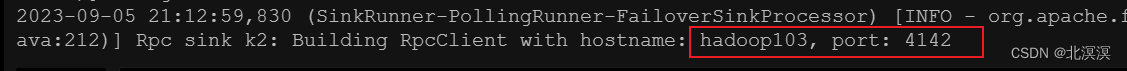

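- Now stop the Flume job on hadoop103 and keep sending data through nc. The avro sink that targets hadoop102 takes over, so hadoop102 continues to receive the data and prints it to the console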

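- Then restart the Flume job on hadoop103 and keep sending data through nc. Once the failed sink's cool-down period has passed (capped at 10000 ms by maxpenalty) and the sink is retried successfully, the data is again delivered to hadoop103 through the higher-priority avro sink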
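
The switch back to hadoop103 is not necessarily immediate, because a failed sink sits out a cool-down period that grows with consecutive failures and is capped by maxpenalty. The Python sketch below models that idea. Only the 10000 ms cap comes from the configuration in this article; the 1000 ms base and the doubling rule are assumptions made for illustration, and the function name `penalty_ms` is hypothetical.

```python
# Illustrative model of the cool-down applied to a failed sink.
# ASSUMPTION: the delay doubles per consecutive failure from a 1000 ms base.
# Only the cap (maxpenalty = 10000) is taken from job-nc-flume-avro.conf.

def penalty_ms(sequential_failures, max_penalty_ms=10000, base_ms=1000):
    """Cool-down in milliseconds before a failed sink is tried again."""
    return min(max_penalty_ms, base_ms * (2 ** sequential_failures))


for failures in range(1, 6):
    print(f"{failures} consecutive failure(s): wait {penalty_ms(failures)} ms")
```

Under these assumptions the wait reaches the 10000 ms cap after four consecutive failures, so in the worst case the traffic moves back to hadoop103 roughly ten seconds after its agent is restarted.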

Conclusion

That wraps up this hands-on failover example for Flume data collection. See you next time.