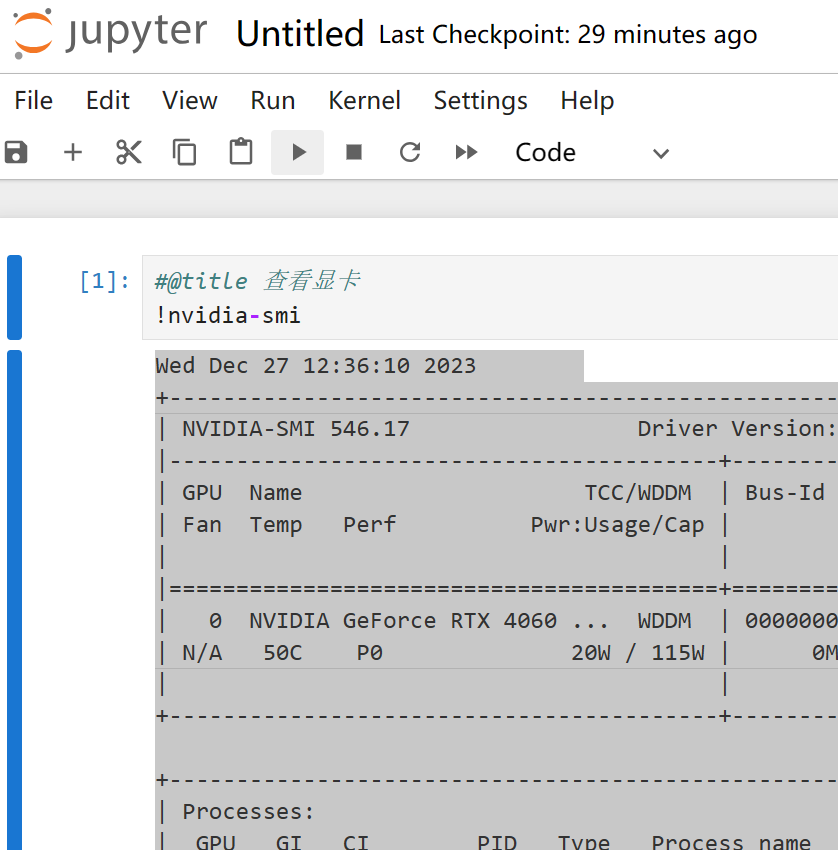

背景:

公司后台管理系统有个需求,需要上传体积比较大的文件:500M-1024M;此时普通的文件上传显然有些吃力了,加上我司服务器配置本就不高,带宽也不大,所以必须考虑多线程异步上传来提速;所以这里就要用到文件分片上传技术了。

技术选型:

直接问GPT实现大文件分片上传比较好的解决方案,它给的答案是webUploader(链接是官方文档);这是由 Baidu FEX 团队开发的一款以 HTML5 为主,FLASH 为辅的现代文件上传组件。在现代的浏览器里面能充分发挥 HTML5 的优势,同时又不摒弃主流IE浏览器,沿用原来的 FLASH 运行时,兼容 IE6+,iOS 6+, android 4+。采用大文件分片并发上传,极大的提高了文件上传效率;功能强大且齐全,支持对文件内容的Hash计算和分片上传,可实现上传进度条等功能。

实现原理:

文件分片上传比较简单,就不画图了,前端(webUploader)将用户选择的文件根据开发者配置的分片参数进行分片计算,将文件分成N个小文件多次调用后端提供的分片文件上传接口(webUploader插件有默认的一套参数规范,文件ID及分片相关字段,后端将对保存分片临时文件),后端记录并判断当前文件所有分片是否上传完毕,若已上传完则将所有分片合并成完整的文件,完成后建议删除分片临时文件(若考虑做分片下载可以保留)。

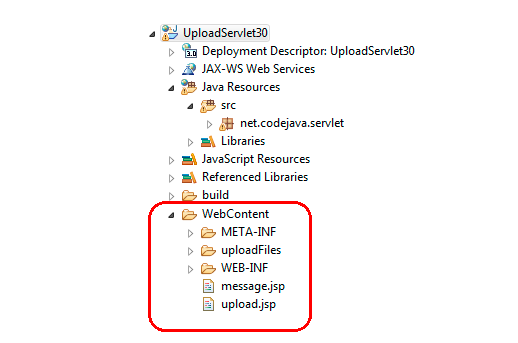

前端引入webUploader:

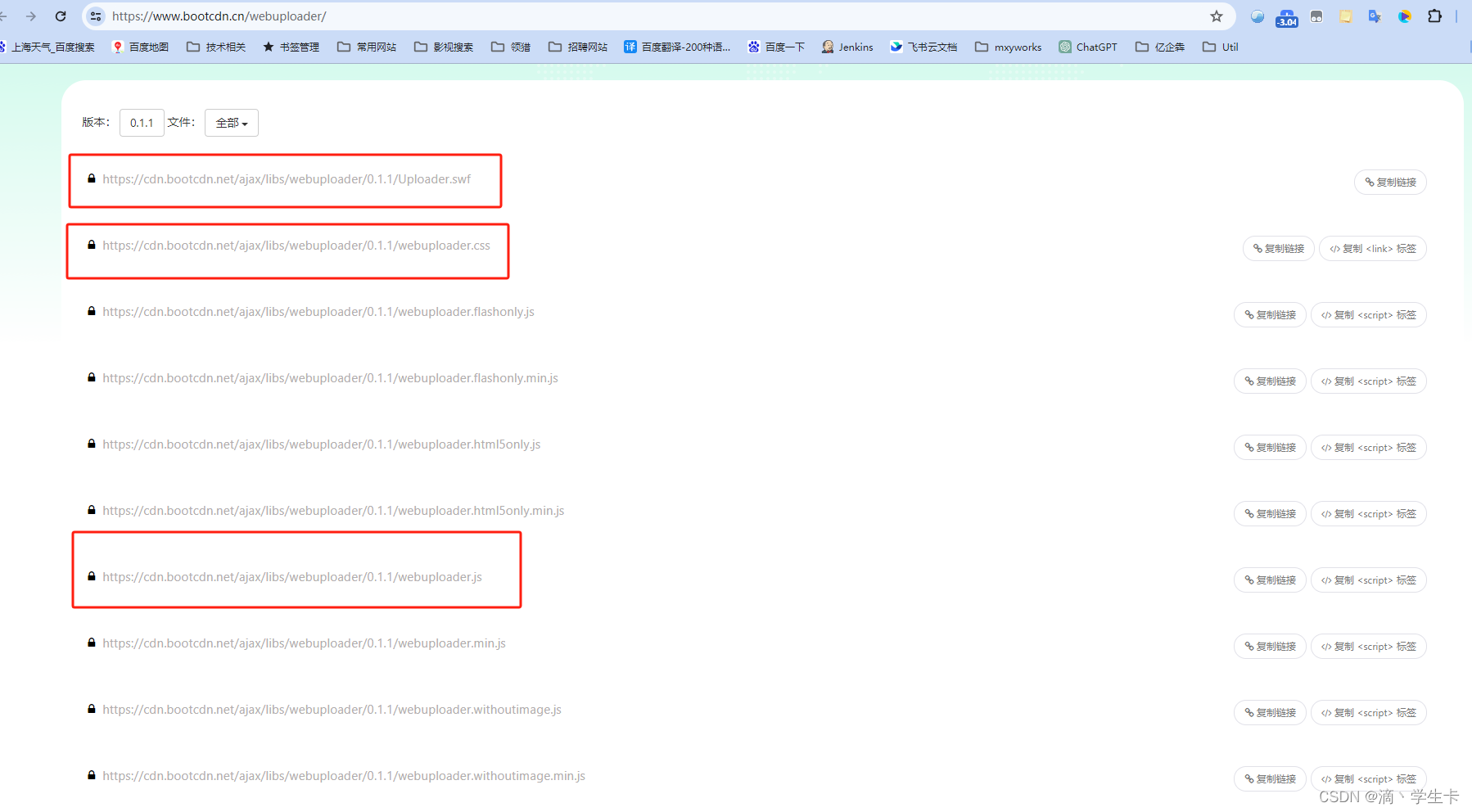

这里推荐去CDN下载静态资源:

记得要先引入JQuery,webUploader依赖JQuery;前端页面引入CSS和JS文件即可,Uploader.swf文件在创建webUploader对象时指定,貌似用来做兼容的。

前端(笔者前端用的layui)核心代码:

//百度文件上传插件 WebUploaderlet uploader = WebUploader.create({// 选完文件后,是否自动上传。auto: true,// swf文件路径swf: contextPath + '/static/plugin/webuploader/Uploader.swf',pick: {id: '#webUploader',multiple: false},// 文件接收服务端。server: contextPath + '/common/file/shard/upload',// 文件分片上传相关配置chunked: true,chunkSize: 5 * 1024 * 1024, // 分片大小为 5MBchunkRetry: 3, // 上传失败最大重试次数threads: 5, // 同时最大上传线程数});//文件上传临时对象let fileUpload = {idPrefix: '' //文件id前缀, genIdPrefix: function () {this.idPrefix = new Date().getTime() + '_';}, mergeLoading: null //合并文件加载层, lastUploadResponse: null // 最后一次上传返回值, chunks: 0 // 文件分片数, uploadedChunks: 0 // 已上传文件分片数, sumUploadChunk: function () {if (this.chunks > 0) {this.uploadedChunks++;}}, checkResult: function () {if (this.uploadedChunks < this.chunks) {layer.open({title: '系统提示', content: '文件上传失败,请重新上传!', btn: ['我知道了']});}}};// 某个文件开始上传前触发,一个文件只会触发一次uploader.on('uploadStart', function (file) {$('#uploadProgressBar').show();// 生成文件id前缀fileUpload.genIdPrefix();});// 当某个文件的分块在发送前触发,主要用来询问是否要添加附带参数,大文件在开起分片上传的前提下此事件可能会触发多次uploader.on('uploadBeforeSend', function (object, data, header) {// 重写文件id生成规则data.id = fileUpload.idPrefix + data.name;fileUpload.chunks = data.chunks != null ? data.chunks : 0;});uploader.on('uploadProgress', function (file, percentage) {// 更新进度条let value = Math.round(percentage * 100);element.progress('progressBar', value + '%');if (value == 100) {fileUpload.mergeLoading = layer.load();}});// 获取最后上传成功的文件信息,每个分片文件上传都会回调uploader.on('uploadAccept', function (file, response) {if (response == null || response.code !== '0000') {return;}fileUpload.sumUploadChunk();if (response.data != null && response.data.fileAccessPath != null) {fileUpload.lastUploadResponse = response.data;}});// 文件上传成功时触发uploader.on('uploadSuccess', function (file, response) {console.log('File ' + file.name + ' uploaded successfully.');layer.msg('文件上传成功!');$('#fileName').val(fileUpload.lastUploadResponse.fileOriginalName);$('#fileRelativePath').val(fileUpload.lastUploadResponse.fileRelativePath);})uploader.on('uploadComplete', function (file) {console.log('File' + file.name + 'uploaded complete.');console.log('总分片:' + fileUpload.chunks + ' 已上传:' + fileUpload.uploadedChunks);fileUpload.checkResult();$('#uploadProgressBar').hide();layer.close(fileUpload.mergeLoading);});其中几个关键的节点的事件回调都提供了,使用起来很方便;其中“uploadProgress”事件实现了上传的实时进度条展示。

后端Controller代码:

/*** 文件分片上传* * * @param file* @param fileUploadInfoDTO* @return*/@PostMapping(value = "shard/upload")public Layui<FileUploadService.FileBean> uploadFileByShard(@RequestParam("file") MultipartFile file,FileUploadInfoDTO fileUploadInfoDTO) {if (null == fileUploadInfoDTO) {return Layui.error("文件信息为空");}if (null == file || file.getSize() <= 0) {return Layui.error("文件内容为空");}log.info("fileName=[{}]", file.getName());log.info("fileSize=[{}]", file.getSize());log.info("fileShardUpload=[{}]", JSONUtil.toJsonStr(fileUploadInfoDTO));FileUploadService.FileBean fileBean = fileShardUploadService.uploadFileByShard(fileUploadInfoDTO, file);return Layui.success(fileBean);}/*** @Author: XiangPeng* @Date: 2023/12/22 12:01*/@Getter

@Setter

public class FileUploadInfoDTO implements Serializable {private static final long serialVersionUID = -1L;/*** 文件 ID*/private String id;/*** 文件名*/private String name;/*** 文件类型*/private String type;/*** 文件最后修改日期*/private String lastModifiedDate;/*** 文件大小*/private Long size;/*** 分片总数*/private int chunks;/*** 当前分片序号*/private int chunk;

}@Getter

@Setter

public class FileUploadCacheDTO implements Serializable {private static final long serialVersionUID = 1L;/*** 文件 ID*/private String id;/*** 文件名*/private String name;/*** 分片总数*/private int chunks;/*** 当前已上传分片索引*/private List<Integer> uploadedChunkIndex;public FileUploadCacheDTO(FileUploadInfoDTO fileUploadInfoDTO) {this.id = fileUploadInfoDTO.getId();this.name = fileUploadInfoDTO.getName();this.chunks = fileUploadInfoDTO.getChunks();this.uploadedChunkIndex = Lists.newArrayList();}public FileUploadCacheDTO() {}

}后端Service层代码:

/*** 文件分片上传* * @param fileUploadInfoDTO* @param file* @return*/public FileBean uploadFileByShard(FileUploadInfoDTO fileUploadInfoDTO, MultipartFile file) {if (fileUploadInfoDTO == null || file == null) {throw new ServiceException("文件上传失败!");}// 无需分片的小文件直接上传if (fileUploadInfoDTO.getChunks() <= 0) {return super.commonUpload(file);}String fileId = fileUploadInfoDTO.getId();// 生成分片临时文件,文件名格式:文件id_分片序号FileBean fileBean = super.commonUpload(fileId + StrUtil.UNDERLINE + fileUploadInfoDTO.getChunk(), file);// redis缓存数据FileUploadCacheDTO fileUploadInfo = null;synchronized (this) {// 查询文件id是否存在,不存在则创建,存在则更新已上传分片数fileUploadInfo = (FileUploadCacheDTO) redisService.get(genRedisKey(fileId));// 第一个分片文件上传if (fileUploadInfo == null) {fileUploadInfo = new FileUploadCacheDTO(fileUploadInfoDTO);}fileUploadInfo.getUploadedChunkIndex().add(fileUploadInfoDTO.getChunk());redisService.set(genRedisKey(fileId), fileUploadInfo);// 判断所有分片文件是否上传完成if ((fileUploadInfo.getUploadedChunkIndex().size()) < fileUploadInfo.getChunks()) {return fileBean;}}// 合并文件return mergeChunks(fileUploadInfo);}/*** 分片文件全部上传完成则合并文件,清除缓存并返回文件地址* * @param fileUploadCache* @return*/private FileBean mergeChunks(FileUploadCacheDTO fileUploadCache) {String mergeFileRelativePath = super.getCommonPath().getFileRelativePath() + fileUploadCache.getId();String mergeFilePath = super.getCommonPath().getBasePath() + mergeFileRelativePath;RandomAccessFile mergedFile = null;File chunkTempFile = null;RandomAccessFile chunkFile = null;try {mergedFile = new RandomAccessFile(mergeFilePath, "rw");for (int i = 0; i < fileUploadCache.getChunks(); i++) {// 读取分片文件chunkTempFile = new File(super.getCommonPath().getFileFullPath() + fileUploadCache.getId() + StrUtil.UNDERLINE + i);byte[] buffer = new byte[1024 * 1024];int bytesRead;chunkFile = new RandomAccessFile(chunkTempFile, "r");// 合并分片文件while ((bytesRead = chunkFile.read(buffer)) != -1) {mergedFile.write(buffer, 0, bytesRead);}chunkFile.close();}} catch (IOException e) {log.error("merge file chunk error, fileId=[{}]", fileUploadCache.getId(), e);} finally {try {if (mergedFile != null) {mergedFile.close();}} catch (IOException e) {}redisService.remove(genRedisKey(fileUploadCache.getId()));// 删除分片文件removeChunkFiles(super.getCommonPath().getFileFullPath(), fileUploadCache);}return FileBean.builder().fileOriginalName(fileUploadCache.getName()).fileRelativePath(mergeFileRelativePath).fileAccessPath(super.getNginxPath() + mergeFileRelativePath).build();}private void removeChunkFiles(String fileFullPathPrefix, FileUploadCacheDTO fileUploadCache) {taskExecutor.execute(() -> {try {// 延迟1秒删除TimeUnit.SECONDS.sleep(1);String fileFullPath;for (int i = 0; i < fileUploadCache.getChunks(); i++) {try {fileFullPath = fileFullPathPrefix + fileUploadCache.getId() + StrUtil.UNDERLINE + i;FileUtil.del(fileFullPath);log.info("file[{}] delete success.", fileFullPath);} catch (Exception e) {log.error("delete temp file error.", e);}}} catch (Exception e) {log.error("delete temp chunk file error.", e);}});}private String genRedisKey(String id) {return FILE_SHARD_UPLOAD_KEY + id;}