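Contents

1. Overview

2. Node name - nodeName

3. Node selector - nodeSelector

4. Node affinity and anti-affinity

4.1 Affinity and anti-affinity

4.2 Hard node affinity

4.3 Soft node affinity

4.4 Node anti-affinity

4.5 Caveats

5. Pod affinity and anti-affinity

5.1 Affinity and anti-affinity

5.2 Pod affinity/anti-affinity

6. Summary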

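1. Overview

In Kubernetes, Pod scheduling is handled by the kube-scheduler component. The whole process is automatic, so by default we cannot tell in advance which node a Pod will end up on. In practice, however, we often need to steer a Pod to particular nodes. Typical scenarios include:

- Machine learning applications that should be scheduled onto nodes with GPU hardware;

- Database applications that need nodes with SSDs;

- For high availability, the Pods of one application should be spread across different nodes, so that the failure of a single machine does not force all of them to be rebuilt at once;

- Two different applications that need to run on the same node.

To cover these scenarios, k8s provides several ways to influence which node a Pod is scheduled to: specifying a node name, node selectors, node affinity/anti-affinity, and Pod affinity/anti-affinity.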

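2. Node name - nodeName

nodeName is the name of a node. If the Pod manifest explicitly sets the nodeName field, the scheduler is bypassed: because nodeName is non-empty, the scheduler treats the Pod as already scheduled and ignores it. In other words, nodeName is a manual binding of the Pod to a specific node by the user.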

$ kubectl get nodes

NAME STATUS ROLES AGE VERSION

controlplane Ready control-plane 25d v1.26.0

node01 Ready <none> 25d v1.26.0

Create the Pod manifest:

vim node-name-pod.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      nodeName: node01 # schedule the Pod onto node01
      containers:
      - name: nginx
        image: nginx

Create the Pod and check where it runs:

$ kubectl apply -f node-name-pod.yaml

deployment.apps/nginx created

$ kubectl get pod -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-86dcc5bdc6-wwrzx 1/1 Running 0 26s 192.168.1.3 node01 <none> <none>

As expected, the Pod landed on node01. Selecting a node with nodeName has some limitations, though:

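- If the named node does not exist, the Pod will not run, and in some cases it may be deleted automatically.

- If the named node does not have the resources to accommodate the Pod, the Pod fails, and the failure reason indicates why, for example insufficient memory or CPU.

- Node names in cloud environments are not always predictable or stable.

For these reasons, nodeName is generally not used in production.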

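3. Node selector - nodeSelector

nodeSelector schedules a Pod onto nodes that carry the specified labels. Only nodes whose labels match every entry in the selector can run the Pod; if no node satisfies the selector, the Pod stays in the Pending state.

The following simple example shows how nodeSelector is used. First, create the Pod manifest:

vim node-selector-pod.yaml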

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      containers:
      - name: nginx
        image: nginx
      nodeSelector:
        role: master # select nodes that carry the label role=master

This requires the Pod to be scheduled onto nodes labeled role=master. Create the Pod:

# List the labels currently on the nodes

$ kubectl get nodes --show-labels

NAME STATUS ROLES AGE VERSION LABELS

controlplane Ready control-plane 25d v1.26.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=controlplane,kubernetes.io/os=linux,node-role.kubernetes.io/control-plane=,node.kubernetes.io/exclude-from-external-load-balancers=

node01 Ready <none> 25d v1.26.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=node01,kubernetes.io/os=linux

$ kubectl apply -f node-selector-pod.yaml

deployment.apps/nginx created

$ kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-cc84c8d74-79dqx 0/1 Pending 0 11s

The Pod is Pending: no node currently carries the label role=master, so it cannot be scheduled. The Pod's events confirm this:

# Show the Pod's detailed description

$ kubectl describe pod nginx-cc84c8d74-79dqx

Name: nginx-cc84c8d74-79dqx

Namespace: default

Priority: 0

Service Account: default

Node: <none>

Labels: app=nginx
        pod-template-hash=cc84c8d74

Annotations: <none>

Status: Pending

IP:

IPs: <none>

Controlled By: ReplicaSet/nginx-cc84c8d74

Containers:
  nginx:
    Image: nginx
    Port: <none>
    Host Port: <none>
    Environment: <none>
    Mounts:
      /var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-rk2mj (ro)
Conditions:
  Type Status
  PodScheduled False
Volumes:
  kube-api-access-rk2mj:
    Type: Projected (a volume that contains injected data from multiple sources)
    TokenExpirationSeconds: 3607
    ConfigMapName: kube-root-ca.crt
    ConfigMapOptional: <nil>
    DownwardAPI: true
QoS Class: BestEffort
Node-Selectors: role=master
Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s
             node.kubernetes.io/unreachable:NoExecute op=Exists for 300s
Events:
  Type Reason Age From Message
  ---- ------ ---- ---- -------
  Warning FailedScheduling 2m25s default-scheduler 0/2 nodes are available: 2 node(s) didn't match Pod's node affinity/selector. preemption: 0/2 nodes are available: 2 Preemption is not helpful for scheduling..

The FailedScheduling event at the bottom shows that scheduling failed because no node satisfies the Pod's nodeSelector or nodeAffinity (node affinity) rules. Now add the label role=master to node01:

# Add the label role=master to node01

$ kubectl label nodes node01 role=master

node/node01 labeled

# Check the labels on node01

$ kubectl get node/node01 --show-labels

NAME STATUS ROLES AGE VERSION LABELS

node01 Ready <none> 25d v1.26.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=node01,kubernetes.io/os=linux,role=master

With the label in place, check the Pod's status again:

$ kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-cc84c8d74-79dqx 1/1 Running 0 4m45s

The Pod has now been scheduled onto node01, which is exactly what the nodeSelector node selector is for.


Note that the conditions in nodeSelector are mandatory. If no node satisfies them, the Pod stays Pending until a node that matches the nodeSelector becomes available.
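To illustrate the "every condition must be met" rule, the sketch below lists two labels under nodeSelector; a node has to carry both to be eligible. The Pod name is hypothetical. kubernetes.io/os is one of the built-in labels visible in the node listing above, and role=master is the custom label added with kubectl label.

```yaml
# Minimal sketch: a bare Pod whose nodeSelector lists two labels.
# A node is eligible only if it carries BOTH of them.
apiVersion: v1
kind: Pod
metadata:
  name: nginx-selector-demo   # hypothetical name, for illustration only
spec:
  nodeSelector:
    kubernetes.io/os: linux   # built-in label set by the kubelet
    role: master              # custom label added via `kubectl label nodes node01 role=master`
  containers:
  - name: nginx
    image: nginx
```

In the two-node cluster used here, only node01 would qualify once it has been labeled role=master.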

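4. Node affinity and anti-affinity

4.1 Affinity and anti-affinity

k8s currently supports two kinds of node affinity:

- 1. requiredDuringSchedulingIgnoredDuringExecution (hard affinity)

Hard affinity means the specified conditions must be met; if they are not, the Pod is not scheduled. It expresses that a Pod must be placed on a node that satisfies the conditions.

- 2. preferredDuringSchedulingIgnoredDuringExecution (soft affinity)

Soft affinity means the specified conditions should be met if possible, with no guarantee. If they can be satisfied, good; if not, scheduling still proceeds. It expresses that we would like the Pod to be placed on a node that satisfies the conditions.

Note that for a Pod that is already running, a change in node labels does not evict it, even if the Pod no longer satisfies the affinity/anti-affinity conditions as a result.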

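In k8s, node affinity is specified through the nodeAffinity field under the affinity field of the Pod spec. The supported operators are In, NotIn, Exists, DoesNotExist, Gt, and Lt; anti-affinity is achieved with NotIn and DoesNotExist. The operators behave as follows:

- In: the value of the label is in the given set;

- NotIn: the value of the label is not in the given set;

- Gt: the label value, parsed as an integer, is greater than the given value;

- Lt: the label value, parsed as an integer, is less than the given value;

- Exists: a label with the given key exists;

- DoesNotExist: no label with the given key exists.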
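The operators that take no value list or a single integer are easy to get wrong, so here is a minimal sketch that combines Gt and Exists. The Pod name and both label keys (example.com/cpu-cores and example.com/gpu) are hypothetical; they are not labels Kubernetes sets on its own and would have to be added to the nodes beforehand.

```yaml
# Minimal sketch, assuming nodes were labeled by hand with the
# hypothetical keys example.com/cpu-cores and example.com/gpu.
apiVersion: v1
kind: Pod
metadata:
  name: nginx-operators-demo        # hypothetical name
spec:
  affinity:
    nodeAffinity:
      requiredDuringSchedulingIgnoredDuringExecution:
        nodeSelectorTerms:
        - matchExpressions:
          - key: example.com/cpu-cores
            operator: Gt            # label value is parsed as an integer
            values:
            - "8"                   # exactly one value, written as a string
          - key: example.com/gpu
            operator: Exists        # only the key matters, so no values are listed
  containers:
  - name: nginx
    image: nginx
```

With these two expressions, a node labeled example.com/cpu-cores=16 and example.com/gpu=true would match, while a node with example.com/cpu-cores=8 would not, because Gt is a strict comparison.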

4.2 Hard node affinity

Hard node affinity can achieve the same result as the nodeSelector example above. First, define the Pod manifest:

vim hard-node-affinity.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      affinity:
        nodeAffinity: # node affinity
          requiredDuringSchedulingIgnoredDuringExecution: # hard affinity
            nodeSelectorTerms: # node selection condition: the node must carry the label role=master
            - matchExpressions:
              - key: role
                operator: In
                values:
                - master
      containers:
      - name: nginx
        image: nginx

Through the nodeSelectorTerms field under requiredDuringSchedulingIgnoredDuringExecution, we require the Pod to run on nodes labeled role=master.

Create and view the Pod:

$ kubectl apply -f hard-node-affinity.yaml

deployment.apps/nginx created

$ kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-798c8486f6-rft9w 0/1 Pending 0 7s

$ kubectl describe pod nginx-798c8486f6-rft9w

Name: nginx-798c8486f6-rft9w

Namespace: default

Priority: 0

Service Account: default

Node: <none>

Labels: app=nginx
        pod-template-hash=798c8486f6

Annotations: <none>

Status: Pending

IP:

IPs: <none>

Controlled By: ReplicaSet/nginx-798c8486f6

Containers:
  nginx:
    Image: nginx
    Port: <none>
    Host Port: <none>
    Environment: <none>
    Mounts:
      /var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-nxwvb (ro)
Conditions:
  Type Status
  PodScheduled False
Volumes:
  kube-api-access-nxwvb:
    Type: Projected (a volume that contains injected data from multiple sources)
    TokenExpirationSeconds: 3607
    ConfigMapName: kube-root-ca.crt
    ConfigMapOptional: <nil>
    DownwardAPI: true
QoS Class: BestEffort
Node-Selectors: <none>
Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s
             node.kubernetes.io/unreachable:NoExecute op=Exists for 300s
Events:
  Type Reason Age From Message
  ---- ------ ---- ---- -------
  Warning FailedScheduling 20s default-scheduler 0/2 nodes are available: 2 node(s) didn't match Pod's node affinity/selector. preemption: 0/2 nodes are available: 2 Preemption is not helpful for scheduling..

# No node currently carries the label role=master

$ kubectl get node --show-labels

NAME STATUS ROLES AGE VERSION LABELS

controlplane Ready control-plane 25d v1.26.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=controlplane,kubernetes.io/os=linux,node-role.kubernetes.io/control-plane=,node.kubernetes.io/exclude-from-external-load-balancers=

node01 Ready <none> 25d v1.26.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=node01,kubernetes.io/os=linux

Again the Pod is Pending, for the same reason: no node labeled role=master was found, so it cannot be scheduled. As before, label node01 with role=master and check the Pod again:

$ kubectl label nodes node01 role=master

node/node01 labeled

$ kubectl get node node01 --show-labels

NAME STATUS ROLES AGE VERSION LABELS

node01 Ready <none> 25d v1.26.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=node01,kubernetes.io/os=linux,role=master

$ kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-798c8486f6-rft9w 1/1 Running 0 3m29s

$ kubectl describe pod nginx-798c8486f6-rft9w

Name: nginx-798c8486f6-rft9w

Namespace: default

Priority: 0

Service Account: default

Node: node01/172.30.2.2

Start Time: Tue, 17 Jan 2023 01:51:48 +0000

Labels: app=nginx
        pod-template-hash=798c8486f6
Annotations: cni.projectcalico.org/containerID: c13cf68653c462145216fbf2cd94c8b6e7ce55b0c9969eeb21ce153d94ad24af
             cni.projectcalico.org/podIP: 192.168.1.3/32
             cni.projectcalico.org/podIPs: 192.168.1.3/32

Status: Running

IP: 192.168.1.3

IPs:
  IP: 192.168.1.3
Controlled By: ReplicaSet/nginx-798c8486f6
Containers:
  nginx:
    Container ID: containerd://7ce8f5d9048b2272ac51c4358b2c142bfae28ca7144b1958836223f9b0857711
    Image: nginx
    Image ID: docker.io/library/nginx@sha256:b8f2383a95879e1ae064940d9a200f67a6c79e710ed82ac42263397367e7cc4e
    Port: <none>
    Host Port: <none>
    State: Running
      Started: Tue, 17 Jan 2023 01:51:54 +0000
    Ready: True
    Restart Count: 0
    Environment: <none>
    Mounts:
      /var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-nxwvb (ro)
Conditions:
  Type Status
  Initialized True
  Ready True
  ContainersReady True
  PodScheduled True
Volumes:
  kube-api-access-nxwvb:
    Type: Projected (a volume that contains injected data from multiple sources)
    TokenExpirationSeconds: 3607
    ConfigMapName: kube-root-ca.crt
    ConfigMapOptional: <nil>
    DownwardAPI: true
QoS Class: BestEffort
Node-Selectors: <none>
Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s
             node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

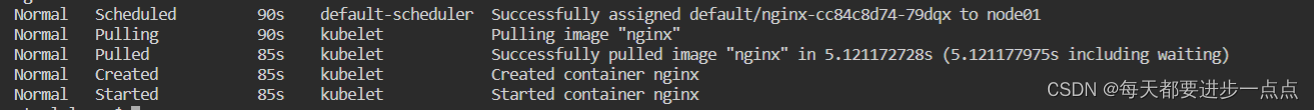

Events:
  Type Reason Age From Message
  ---- ------ ---- ---- -------
  Warning FailedScheduling 3m37s default-scheduler 0/2 nodes are available: 2 node(s) didn't match Pod's node affinity/selector. preemption: 0/2 nodes are available: 2 Preemption is not helpful for scheduling..
  Normal Scheduled 27s default-scheduler Successfully assigned default/nginx-798c8486f6-rft9w to node01
  Normal Pulling 26s kubelet Pulling image "nginx"
  Normal Pulled 21s kubelet Successfully pulled image "nginx" in 5.200031738s (5.200036683s including waiting)
  Normal Created 21s kubelet Created container nginx
  Normal Started 21s kubelet Started container nginx

As soon as node01 received the label role=master, the Pod was scheduled onto it. This is hard affinity (requiredDuringSchedulingIgnoredDuringExecution): a node that satisfies the conditions must exist before the Pod can be scheduled.

4.3 Soft node affinity

First, define the Pod manifest:

vim soft-node-affinity.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
spec:
  replicas: 1
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      affinity:
        nodeAffinity: # node affinity
          preferredDuringSchedulingIgnoredDuringExecution: # soft affinity
          - weight: 1 # weight; the higher a node's total weight across matching terms, the more it is preferred
            preference:
              matchExpressions:
              - key: role
                operator: In
                values:
                - slave
      containers:
      - name: nginx
        image: nginx

Through preferredDuringSchedulingIgnoredDuringExecution under nodeAffinity, we ask the scheduler to prefer nodes labeled role=slave, with weight set to 1. The weight is used for scoring: when several preference terms are specified, the weights of the terms a node matches are added up, and the node with the highest total is preferred.
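The weight arithmetic is easier to see with more than one preference term. The fragment below is a sketch of only the affinity part of a Pod spec; the disktype label and both weight values are assumptions chosen for illustration (weights must lie between 1 and 100).

```yaml
# Sketch of the affinity section only; disktype=ssd is a hypothetical label.
affinity:
  nodeAffinity:
    preferredDuringSchedulingIgnoredDuringExecution:
    - weight: 80                 # strong preference for SSD nodes
      preference:
        matchExpressions:
        - key: disktype
          operator: In
          values:
          - ssd
    - weight: 20                 # weaker preference for role=slave nodes
      preference:
        matchExpressions:
        - key: role
          operator: In
          values:
          - slave
```

Under these assumptions, a node matching both terms collects 80 + 20 = 100 from this rule, an SSD-only node 80, a role=slave-only node 20, and a node matching neither 0. The scheduler combines this sum with the scores from its other scoring plugins, so the rule biases placement rather than dictating it.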

Create and view the Pod:

$ kubectl get nodes --show-labels

NAME STATUS ROLES AGE VERSION LABELS

controlplane Ready control-plane 25d v1.26.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=controlplane,kubernetes.io/os=linux,node-role.kubernetes.io/control-plane=,node.kubernetes.io/exclude-from-external-load-balancers=

node01 Ready <none> 25d v1.26.0 beta.kubernetes.io/arch=amd64,beta.kubernetes.io/os=linux,kubernetes.io/arch=amd64,kubernetes.io/hostname=node01,kubernetes.io/os=linux,role=master

$ vim soft-node-affinity.yaml

$ kubectl apply -f soft-node-affinity.yaml

deployment.apps/nginx created

$ kubectl get pod

NAME READY STATUS RESTARTS AGE

nginx-649b779d98-cd9rd 1/1 Running 0 6s

$ kubectl describe pod nginx-649b779d98-cd9rd

Name: nginx-649b779d98-cd9rd

Namespace: default

Priority: 0

Service Account: default

Node: node01/172.30.2.2

Start Time: Tue, 17 Jan 2023 02:03:27 +0000

Labels: app=nginx

pod-template-hash=649b779d98

Annotations: cni.projectcalico.org/containerID: a512d1c7f0b3fd9d690a3ad1c68909d36a80abe50c91522f16e0a01f65d4f9ca

cni.projectcalico.org/podIP: 192.168.1.5/32

cni.projectcalico.org/podIPs: 192.168.1.5/32

Status: Running

IP: 192.168.1.5

IPs:

IP: 192.168.1.5

Controlled By: ReplicaSet/nginx-649b779d98

Containers:

nginx:

Container ID: containerd://20b43750d4323d92b4b5f27c4ffbc917fbed2d92e5bc94d69db3127b43a62dff

Image: nginx

Image ID: docker.io/library/nginx@sha256:b8f2383a95879e1ae064940d9a200f67a6c79e710ed82ac42263397367e7cc4e

Port: <none>

Host Port: <none>

State: Running

Started: Tue, 17 Jan 2023 02:03:29 +0000

Ready: True

Restart Count: 0

Environment: <none>

Mounts:

/var/run/secrets/kubernetes.io/serviceaccount from kube-api-access-sw59z (ro)

Conditions:

Type Status

Initialized True

Ready True

ContainersReady True

PodScheduled True

Volumes:

kube-api-access-sw59z:

Type: Projected (a volume that contains injected data from multiple sources)

TokenExpirationSeconds: 3607

ConfigMapName: kube-root-ca.crt

ConfigMapOptional: <nil>

DownwardAPI: true

QoS Class: BestEffort

Node-Selectors: <none>

Tolerations: node.kubernetes.io/not-ready:NoExecute op=Exists for 300s

node.kubernetes.io/unreachable:NoExecute op=Exists for 300s

Events:

Type Reason Age From Message

---- ------ ---- ---- -------

Normal Scheduled 20s default-scheduler Successfully assigned default/nginx-649b779d98-cd9rd to node01

Normal Pulling 19s kubelet Pulling image "nginx"

Normal Pulled 19s kubelet Successfully pulled image "nginx" in 480.824291ms (480.831812ms including waiting)

Normal Created 19s kubelet Created container nginx

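Normal Started 18s kubelet Started container nginx

Neither node in the cluster (node01, controlplane) carries the label role=slave, yet the Pod was still scheduled successfully. This is soft affinity (preferredDuringSchedulingIgnoredDuringExecution): the scheduler tries to find a node that satisfies the conditions, but if none exists, the Pod is scheduled anyway.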

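4.4 Node anti-affinity

Node anti-affinity is implemented with the NotIn and DoesNotExist operators. A configuration looks like this:

spec:
  affinity:
    nodeAffinity: # node anti-affinity
      requiredDuringSchedulingIgnoredDuringExecution: # hard rule
        nodeSelectorTerms:
        - matchExpressions:
          - key: role
            operator: NotIn # the Pod must not be placed on nodes labeled role=master
            values:
            - master

4.5 Caveats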

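- 1. If you specify both nodeSelector and nodeAffinity, both must be satisfied for the Pod to be scheduled onto a candidate node.

# The Pod must run on a node that has a label with key a and also carries the label role=master

affinity:
  nodeAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
      nodeSelectorTerms:
      - matchExpressions:
        - key: a
          operator: Exists
          values: []
nodeSelector:
  role: master

- 2. If you specify multiple terms in the nodeSelectorTerms associated with a nodeAffinity type, the Pod can be scheduled onto a node as long as any one of the terms is satisfied (the terms are combined with logical OR).

# The Pod must run on a node that has a label with key a or a label with key role

affinity:
  nodeAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
      nodeSelectorTerms:
      - matchExpressions:
        - key: a
          operator: Exists
          values: []
      - matchExpressions:
        - key: role
          operator: Exists
          values: []

- 3. If you specify multiple expressions in a single matchExpressions field associated with a term in nodeSelectorTerms, the Pod can be scheduled onto a node only if all of the expressions are satisfied (the expressions are combined with logical AND).

# The Pod must run on a node that has both a label with key a and a label with key role

affinity:
  nodeAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
      nodeSelectorTerms:
      - matchExpressions:
        - key: a
          operator: Exists
          values: []
        - key: role
          operator: Exists
          values: []

5. Pod affinity and anti-affinity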

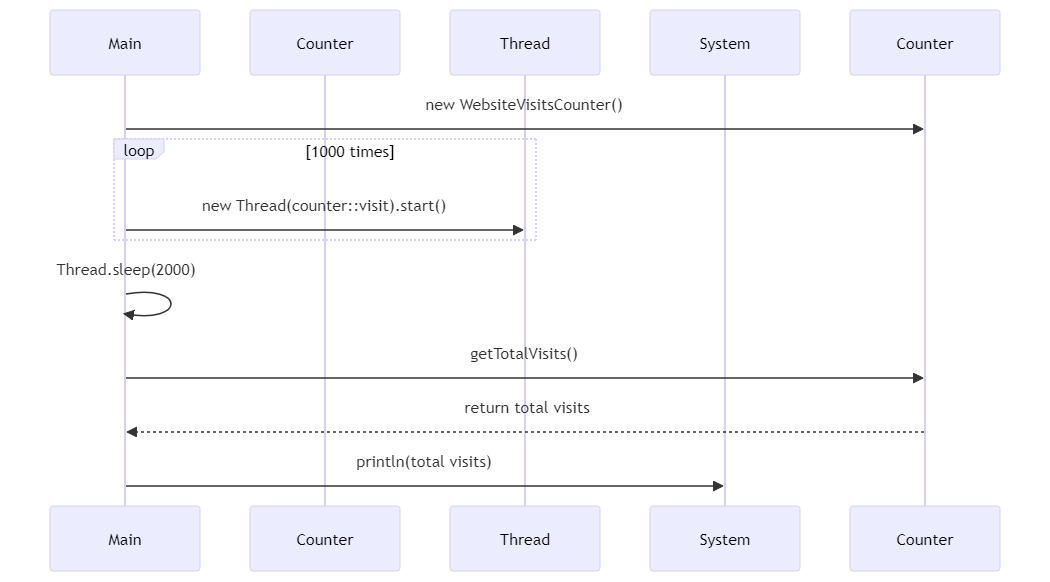

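5.1 Affinity and anti-affinity

Pod affinity defines affinity between Pods, that is, which Pods a Pod prefers to be placed together with. The opposite, which Pods a Pod prefers not to be placed with, is called Pod anti-affinity.

"Together" means in the same location as the other Pod. A location can be defined per host or per region, so defining what counts as a location is essential: it is the criterion that decides where the Pod may run. For example, we can make two Pods run on the same node, or make two Pods run in different regions. The topologyKey field controls this partitioning; its most commonly used value is kubernetes.io/hostname.

Likewise, Pod affinity comes in two kinds: requiredDuringSchedulingIgnoredDuringExecution (hard affinity) and preferredDuringSchedulingIgnoredDuringExecution (soft affinity).

Pod affinity and anti-affinity are specified through the podAffinity and podAntiAffinity fields under the affinity field of the Pod spec. Only the operators In, NotIn, Exists, and DoesNotExist are supported.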
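To show how topologyKey changes the meaning of "together", here is a sketch of a Pod affinity rule scoped to a zone instead of a host. It assumes the nodes carry the well-known label topology.kubernetes.io/zone, which cloud providers usually set but a local test cluster may lack. The app=tomcat selector mirrors the example used in the next section.

```yaml
# Sketch of the affinity section only. Assumes nodes are labeled with
# topology.kubernetes.io/zone; without that label no node can match.
affinity:
  podAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
    - labelSelector:
        matchExpressions:
        - key: app
          operator: In
          values:
          - tomcat
      # Nodes with the same value for this label form one location, so the
      # Pod may land on ANY node in a zone that already runs a tomcat Pod.
      topologyKey: topology.kubernetes.io/zone
```

With kubernetes.io/hostname as the key, the same rule would instead pin the Pod to the exact node that runs a tomcat Pod.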

5.2 Pod affinity/anti-affinity

First, run a Pod labeled app=tomcat:

vim tomcat-pod.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: tomcat
spec:
  replicas: 1
  selector:
    matchLabels:
      app: tomcat
  template:
    metadata:
      labels:
        app: tomcat
    spec:
      containers:
      - name: tomcat
        image: tomcat:8.5-jre10-slim
        ports:
        - containerPort: 8080

Create and view the tomcat Pod:

$ kubectl apply -f tomcat-pod.yaml

deployment.apps/tomcat created

$ kubectl get pod -o wide --show-labels

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES LABELS

tomcat-ff7c8b896-fh9zb 1/1 Running 0 22s 192.168.1.5 node01 <none> <none> app=tomcat,pod-template-hash=ff7c8b896

The tomcat Pod is currently running on node01.

Next, define an nginx Pod with both Pod affinity and Pod anti-affinity rules:

vim pod-anti-affinity.yaml

apiVersion: apps/v1
kind: Deployment
metadata:
  name: nginx
spec:
  replicas: 2
  selector:
    matchLabels:
      app: nginx
  template:
    metadata:
      labels:
        app: nginx
    spec:
      affinity:
        podAffinity: # Pod affinity
          requiredDuringSchedulingIgnoredDuringExecution: # hard rule: the target node must already run a Pod labeled app=tomcat; otherwise scheduling fails
          - labelSelector:
              matchExpressions:
              - key: app
                operator: In
                values:
                - tomcat
            topologyKey: kubernetes.io/hostname # node label that defines a location; nodes with the same value for this label count as one location when looking for matching Pods
        podAntiAffinity: # Pod anti-affinity
          requiredDuringSchedulingIgnoredDuringExecution: # hard rule: no two nginx Pods may share a node, i.e. a node that already runs a Pod labeled app=nginx is excluded
          - labelSelector:
              matchExpressions:
              - key: app
                operator: In
                values:
                - nginx
            topologyKey: kubernetes.io/hostname
      containers:
      - name: nginx
        image: nginx

In this manifest, podAffinity sets a hard rule that the nginx Pod must be scheduled onto a node where a tomcat Pod labeled app=tomcat is currently running (node01 here), and podAntiAffinity sets a hard rule that no two nginx Pods may be placed on the same node.

Run and view the Pods:

$ kubectl apply -f pod-anti-affinity.yaml

deployment.apps/nginx created

$ kubectl get pod -o wide --show-labels

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES LABELS

nginx-5689b4dd7-6twt8 0/1 Pending 0 34s <none> <none> <none> <none> app=nginx,pod-template-hash=5689b4dd7

nginx-5689b4dd7-z8m2j 1/1 Running 0 34s 192.168.1.6 node01 <none> <none> app=nginx,pod-template-hash=5689b4dd7

tomcat-ff7c8b896-fh9zb 1/1 Running 0 5m19s 192.168.1.5 node01 <none> <none> app=tomcat,pod-template-hash=ff7c8b896

The manifest sets the nginx replica count to 2; one replica is Running and the other is Pending.

- The Running replica, nginx-5689b4dd7-z8m2j, satisfies both rules. The affinity rule first restricts it to nodes that run a Pod labeled app=tomcat, which here is node01. The anti-affinity rule then requires that the node not already run an nginx Pod. Since node01 had none at that point, nginx-5689b4dd7-z8m2j was scheduled onto node01.

- The Pending replica, nginx-5689b4dd7-6twt8, cannot satisfy both rules at once. The affinity rule again leaves node01 as the only candidate, but node01 already runs an nginx Pod (nginx-5689b4dd7-z8m2j), so the anti-affinity rule excludes it. With no eligible node left, nginx-5689b4dd7-6twt8 stays in the Pending state.
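If keeping both replicas running matters more than strict separation, one option is to relax the anti-affinity rule from hard to soft. The fragment below is a sketch of only the affinity part of the nginx Pod template, reusing the labels from the manifest above; the weight of 100 is an arbitrary illustrative choice, not a value from the original setup.

```yaml
# Sketch: hard Pod affinity to tomcat, but only a soft preference
# against sharing a node with another nginx Pod.
affinity:
  podAffinity:
    requiredDuringSchedulingIgnoredDuringExecution:
    - labelSelector:
        matchExpressions:
        - key: app
          operator: In
          values:
          - tomcat
      topologyKey: kubernetes.io/hostname
  podAntiAffinity:
    preferredDuringSchedulingIgnoredDuringExecution:
    - weight: 100                      # illustrative value; valid range is 1-100
      podAffinityTerm:                 # the soft form wraps the term in podAffinityTerm
        labelSelector:
          matchExpressions:
          - key: app
            operator: In
            values:
            - nginx
        topologyKey: kubernetes.io/hostname
```

With this variant the second replica would be expected to land on node01 as well, since the anti-affinity rule now only lowers the node's score instead of excluding it.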

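6. Summary

Whether it is node affinity/anti-affinity or inter-Pod affinity/anti-affinity, overly fine-grained rules require a great deal of computation and filtering and depend on a large amount of Pod and node state. In a large k8s cluster this can noticeably slow down the scheduler. The official Kubernetes documentation makes this point about inter-Pod affinity and anti-affinity in particular and does not recommend using them in clusters larger than several hundred nodes. When there are many nodes, filtering rules should not be made too fine-grained.

Reference: Assigning Pods to Nodes | Kubernetes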
