I. Download Kafka (the standard release already includes the Windows scripts under bin\windows)

Official site: Apache Kafka

II. Start Kafka

Open a cmd window and change to the Kafka installation directory:

1: Start ZooKeeper from cmd

.\bin\windows\zookeeper-server-start.bat .\config\zookeeper.properties

2: Start the Kafka server from a second cmd window

.\bin\windows\kafka-server-start.bat .\config\server.properties

3: Start a console producer from another cmd window:

.\bin\windows\kafka-console-producer.bat --bootstrap-server localhost:9092 --topic Topic1

4: Start a console consumer from another cmd window:

.\bin\windows\kafka-console-consumer.bat --bootstrap-server localhost:9092 --topic Topic1
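Before moving on to the Spring Boot side, it can help to confirm that both processes are actually listening. The following is a minimal sketch, not part of the original setup: the class name `PortCheck` and the method `isListening` are hypothetical, and it assumes ZooKeeper is on its stock client port 2181 and the broker on 9092 as in the commands above.

```java
import java.io.IOException;
import java.net.InetSocketAddress;
import java.net.Socket;

public class PortCheck {

    /** Returns true if a TCP connection to host:port succeeds within timeoutMs. */
    public static boolean isListening(String host, int port, int timeoutMs) {
        try (Socket socket = new Socket()) {
            socket.connect(new InetSocketAddress(host, port), timeoutMs);
            return true;
        } catch (IOException e) {
            // Connection refused, host unreachable, or connect timed out.
            return false;
        }
    }

    public static void main(String[] args) {
        // Assumption: 2181 is the clientPort in the stock zookeeper.properties,
        // 9092 is the broker port used by the console commands.
        System.out.println("ZooKeeper (2181): " + isListening("localhost", 2181, 1000));
        System.out.println("Kafka     (9092): " + isListening("localhost", 9092, 1000));
    }
}
```

An open port only shows that something accepted the connection; it does not prove the broker is healthy, so the console producer and consumer above remain the real end-to-end check.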

III. Add the Kafka dependency

    <!-- Kafka dependency -->
    <dependency>
        <groupId>org.springframework.kafka</groupId>
        <artifactId>spring-kafka</artifactId>
    </dependency>

IV. Configuration file

    server:
      port: 8080
    spring:
      application:
        name: kafka-demo
      kafka:
        bootstrap-servers: localhost:9092
        producer: # producer settings
          retries: 0 # number of retries
          acks: 1 # ack level: how many partition replicas must have the record before the producer is acknowledged (0, 1, or all/-1)
          batch-size: 16384 # batch size in bytes
          buffer-memory: 33554432 # producer-side buffer size in bytes
          key-serializer: org.apache.kafka.common.serialization.StringSerializer
          # value-serializer: com.itheima.demo.config.MySerializer
          value-serializer: org.apache.kafka.common.serialization.StringSerializer
        consumer: # consumer settings
          group-id: javagroup # default consumer group ID
          enable-auto-commit: true # whether offsets are committed automatically
          auto-commit-interval: 100 # interval between automatic offset commits, in milliseconds
          # earliest: if a partition has a committed offset, resume from it; otherwise consume from the beginning
          # latest: if a partition has a committed offset, resume from it; otherwise consume only newly produced records
          # none: if every partition has a committed offset, resume from it; if any partition lacks one, throw an exception
          auto-offset-reset: earliest
          key-deserializer: org.apache.kafka.common.serialization.StringDeserializer
          # value-deserializer: com.itheima.demo.config.MyDeserializer
          value-deserializer: org.apache.kafka.common.serialization.StringDeserializer

V. Writing the producer

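1: Asynchronous send

    import javax.annotation.Resource;

    import com.alibaba.fastjson.JSON; // assumed: JSON.toJSONString matches fastjson's API
    import io.swagger.annotations.Api;
    import org.springframework.kafka.core.KafkaTemplate;
    import org.springframework.web.bind.annotation.GetMapping;
    import org.springframework.web.bind.annotation.PathVariable;
    import org.springframework.web.bind.annotation.RequestMapping;
    import org.springframework.web.bind.annotation.RestController;

    // Message is the project's own POJO with a "message" property; import it from your package.

    @RestController
    @Api(tags = "Asynchronous API")
    @RequestMapping("/kafka")
    public class KafkaProducer {

        @Resource
        private KafkaTemplate<String, Object> kafkaTemplate;

        // With the class-level mapping, the full path is /kafka/kafka/test/{msg}.
        @GetMapping("/kafka/test/{msg}")
        public void sendMessage(@PathVariable("msg") String msg) {
            Message message = new Message();
            message.setMessage(msg);
            // send() returns a future immediately; the result is not awaited here.
            kafkaTemplate.send("Topic3", JSON.toJSONString(message));
        }
    }

2: Synchronous send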

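    // Test synchronous sending together with the listener

    import java.util.concurrent.TimeUnit;
    import javax.annotation.Resource;

    import com.alibaba.fastjson.JSON; // assumed: JSON.toJSONString matches fastjson's API
    import io.swagger.annotations.Api;
    import org.slf4j.Logger;
    import org.slf4j.LoggerFactory;
    import org.springframework.kafka.core.KafkaTemplate;
    import org.springframework.kafka.support.SendResult;
    import org.springframework.util.concurrent.ListenableFuture;
    import org.springframework.web.bind.annotation.GetMapping;
    import org.springframework.web.bind.annotation.PathVariable;
    import org.springframework.web.bind.annotation.RequestMapping;
    import org.springframework.web.bind.annotation.RestController;

    @RestController
    @Api(tags = "Synchronous API")
    @RequestMapping("/kafka")
    public class AsyncProducer {

        private static final Logger logger = LoggerFactory.getLogger(AsyncProducer.class);

        @Resource
        private KafkaTemplate<String, Object> kafkaTemplate;

        // Synchronous send. With the class-level mapping, the full path is /kafka/kafka/sync/{msg}.
        @GetMapping("/kafka/sync/{msg}")
        public void sync(@PathVariable("msg") String msg) throws Exception {
            Message message = new Message();
            message.setMessage(msg);
            // spring-kafka 2.x returns ListenableFuture here; 3.x returns CompletableFuture.
            ListenableFuture<SendResult<String, Object>> future =
                    kafkaTemplate.send("Topic3", JSON.toJSONString(message));
            // Wait at most 3 seconds; after that, get() throws TimeoutException and stops waiting.
            SendResult<String, Object> result = future.get(3, TimeUnit.SECONDS);
            logger.info("send result:{}", result.getProducerRecord().value());
        }
    }

VI. Writing the consumer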

@Component

public class KafkaConsumer {private final Logger logger = LoggerFactory.getLogger(KafkaConsumer.class);//不指定group,默认取yml里配置的@KafkaListener(topics = {"Topic3"})public void onMessage1(ConsumerRecord<?, ?> consumerRecord) {Optional<?> optional = Optional.ofNullable(consumerRecord.value());if (optional.isPresent()) {Object msg = optional.get();logger.info("message:{}", msg);}}

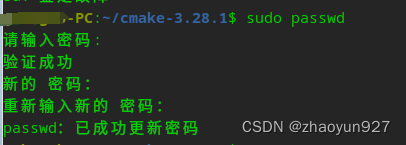

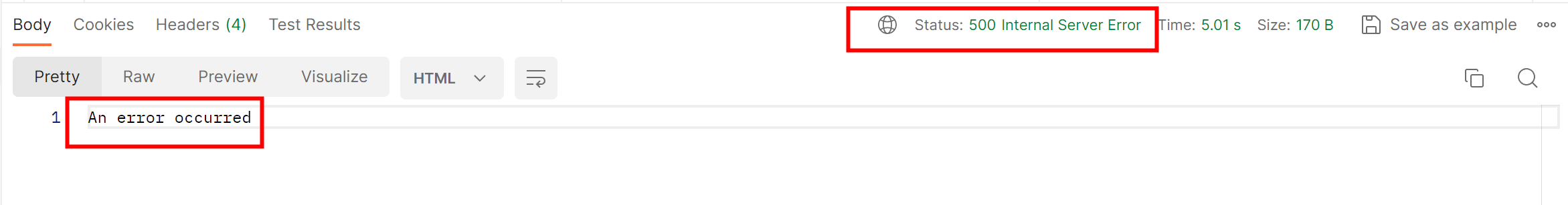

}通过swagger,进行生产者发送消息,观察控制台结果
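When trying the two producer endpoints, the practical difference between the asynchronous and synchronous senders is how the future returned by `send` is used: one registers a callback and moves on, the other blocks with a bounded `get`. The sketch below reproduces that distinction with the JDK alone so it can be run without a broker. Everything in it is illustrative: `fakeSend` is a hypothetical stand-in for `kafkaTemplate.send(...)`, and `CompletableFuture` is used in place of the `ListenableFuture` shown earlier (spring-kafka 3.x returns `CompletableFuture` from `send`; 2.x returns `ListenableFuture`).

```java
import java.util.concurrent.CompletableFuture;
import java.util.concurrent.ExecutionException;
import java.util.concurrent.TimeUnit;
import java.util.concurrent.TimeoutException;

public class SendFutureSketch {

    /** Hypothetical stand-in for kafkaTemplate.send(...): completes after delayMs. */
    public static CompletableFuture<String> fakeSend(String payload, long delayMs) {
        return CompletableFuture.supplyAsync(() -> {
            try {
                Thread.sleep(delayMs); // simulated broker round trip
            } catch (InterruptedException e) {
                Thread.currentThread().interrupt();
                throw new IllegalStateException(e);
            }
            return "sent:" + payload;
        });
    }

    /** Synchronous style: block for at most timeoutMs, then give up on the result. */
    public static String sendAndWait(String payload, long delayMs, long timeoutMs) {
        try {
            return fakeSend(payload, delayMs).get(timeoutMs, TimeUnit.MILLISECONDS);
        } catch (TimeoutException e) {
            return "timeout";
        } catch (InterruptedException e) {
            Thread.currentThread().interrupt();
            return "interrupted";
        } catch (ExecutionException e) {
            return "failed:" + e.getCause();
        }
    }

    public static void main(String[] args) {
        // Asynchronous style: register a callback and return immediately.
        CompletableFuture<String> async = fakeSend("hello", 50)
                .whenComplete((result, error) ->
                        System.out.println(error == null ? result : "error: " + error));

        // Synchronous style: the caller waits, but only up to the timeout.
        System.out.println(sendAndWait("fast", 10, 1000));
        System.out.println(sendAndWait("slow", 2000, 100));

        async.join(); // only so the demo does not exit before the callback runs
    }
}
```

Note that a timeout on `get` only stops the caller from waiting; it does not cancel the send itself, so the record may still reach the topic afterwards.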

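That completes a simple integration of Kafka with Spring Boot.

More Kafka content will follow in later posts (follow along if you are interested!).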