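I am currently writing a training framework that needs the following features:

1. Save the model

2. Resume training from a checkpoint

3. Load the model

4. Inspect training records with TensorBoard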

Command:

tensorboard --logdir=runs --host=192.168.112.5

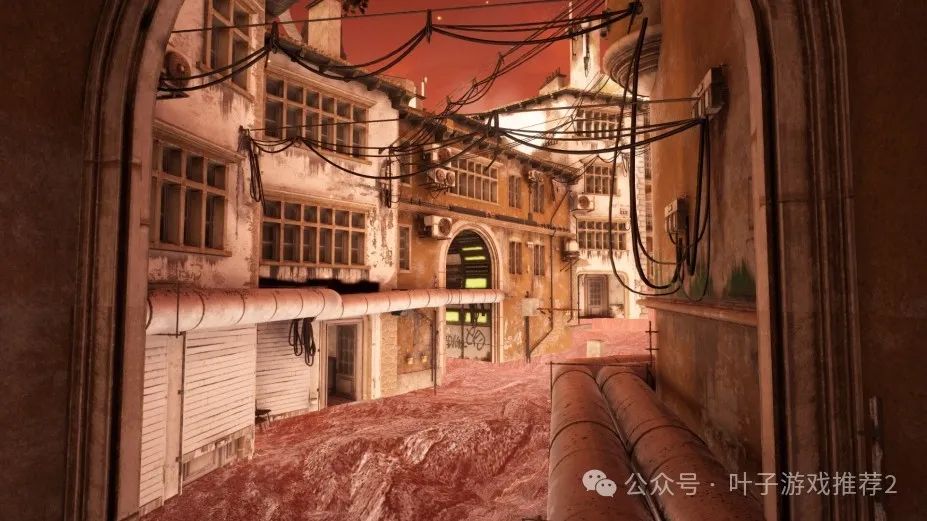

Result:

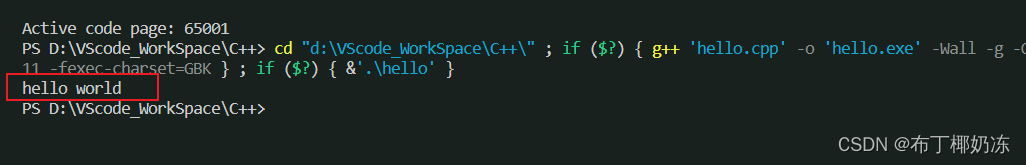

    import torch
    import torch.nn as nn
    import torch.optim as optim
    from torch.utils.tensorboard import SummaryWriter

    # Assume a training dataset and its corresponding labels, for example:
    data = torch.tensor([[1.0], [2.0], [3.0]])
    labels = torch.tensor([[2.0], [4.0], [6.0]])


    class MYdataset(torch.utils.data.Dataset):
        def __init__(self, features, labels):
            self.features = features
            self.labels = labels

        def __getitem__(self, index):
            return self.features[index], self.labels[index]

        def __len__(self):
            return len(self.features)


    BATCH_SIZE = 1
    train_ds = MYdataset(data, labels)
    train_dl = torch.utils.data.DataLoader(train_ds, batch_size=BATCH_SIZE, shuffle=True)

    # Define the model, loss function, and optimizer
    model = nn.Linear(1, 1)
    criterion = nn.MSELoss()
    optimizer = optim.SGD(model.parameters(), lr=0.01)

    # Create the summary writer
    writer = SummaryWriter('runs/loss_visualization')

    for epoch in range(10):  # train for only 10 epochs
        # train_dl is the data loader; inputs/targets avoid shadowing data/labels above
        for i, (inputs, targets) in enumerate(train_dl):
            # Forward pass
            outputs = model(inputs)
            loss = criterion(outputs, targets)

            # Backward pass
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

            # Log the loss to TensorBoard, using the global step as the x-axis
            print("Loss:", loss.item())
            writer.add_scalar('Loss', loss.item(), epoch * len(train_dl) + i)

    # Close the summary writer
    writer.close()
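The script above only covers the TensorBoard part of the feature list. Saving, loading, and resuming could be sketched roughly as follows. This is a minimal sketch, not the framework's actual implementation: the helper names `save_checkpoint`, `load_checkpoint`, and `train`, the file name `checkpoint.pt`, and the choice to checkpoint after every epoch are all assumptions made for illustration. It relies only on `torch.save`, `torch.load`, `state_dict()`, and `load_state_dict()`.

```python
import os
import tempfile

import torch
import torch.nn as nn
import torch.optim as optim


def save_checkpoint(path, model, optimizer, epoch):
    """Hypothetical helper: store weights, optimizer state, and the last finished epoch."""
    torch.save(
        {
            "epoch": epoch,
            "model_state_dict": model.state_dict(),
            "optimizer_state_dict": optimizer.state_dict(),
        },
        path,
    )


def load_checkpoint(path, model, optimizer=None):
    """Hypothetical helper: restore state in place and return the epoch to start from."""
    checkpoint = torch.load(path, map_location="cpu")
    model.load_state_dict(checkpoint["model_state_dict"])
    if optimizer is not None:  # skip when loading for inference only
        optimizer.load_state_dict(checkpoint["optimizer_state_dict"])
    return checkpoint["epoch"] + 1


def train(model, optimizer, criterion, x, y, start_epoch, num_epochs, ckpt_path):
    """Full-batch training loop that writes a checkpoint after every epoch."""
    for epoch in range(start_epoch, num_epochs):
        loss = criterion(model(x), y)
        optimizer.zero_grad()
        loss.backward()
        optimizer.step()
        save_checkpoint(ckpt_path, model, optimizer, epoch)


# Assumed location for the checkpoint file (a temporary directory here).
ckpt_path = os.path.join(tempfile.mkdtemp(), "checkpoint.pt")
x = torch.tensor([[1.0], [2.0], [3.0]])
y = torch.tensor([[2.0], [4.0], [6.0]])
criterion = nn.MSELoss()

# First run: train epochs 0..4, then stop as if the job had been interrupted.
model = nn.Linear(1, 1)
optimizer = optim.SGD(model.parameters(), lr=0.01)
train(model, optimizer, criterion, x, y, 0, 5, ckpt_path)

# Second run: build fresh objects, restore their state, and continue with epochs 5..9.
resumed_model = nn.Linear(1, 1)
resumed_optimizer = optim.SGD(resumed_model.parameters(), lr=0.01)
start_epoch = load_checkpoint(ckpt_path, resumed_model, resumed_optimizer)
train(resumed_model, resumed_optimizer, criterion, x, y, start_epoch, 10, ckpt_path)
```

To load the model for inference only, call `load_checkpoint(ckpt_path, model)` without an optimizer and then `model.eval()`. Storing `state_dict()` values rather than pickling the whole model object keeps the checkpoint independent of where the class definition lives, which is why the sketch takes that route.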