Running Keras computations on the GPU on a local Mac

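- I. Problem background

- II. Technical background

- III. Experimental verification

- Machine configuration

- Installing PlaidML

- Installing plaidml-keras

- Configuring the default GPU

- Running the code on the CPU

- Step 1: import the keras package and load the cifar10 dataset. This may involve a download from an external network; if you run into problems, see [Basic Keras usage issues](https://editor.csdn.net/md/?articleId=140331142)

- Step 2: load the model. If the model data is not available locally it is downloaded automatically; if you run into problems, see [Basic Keras usage issues](https://editor.csdn.net/md/?articleId=140331142)

- Step 3: compile the model

- Step 4: run one prediction

- Step 5: run 10 predictions

- Running the code on the GPU

- Using the GPU metal_intel(r)_uhd_graphics_630.0

- Step 0: import keras through plaidml before doing anything else with keras

- Step 1: import the keras package and load the cifar10 dataset

- Step 2: load the model; if the model data is not available locally it is downloaded automatically

- Step 3: compile the model

- Step 4: run one prediction

- Step 5: run 10 predictions

- Using the GPU metal_amd_radeon_pro_5300m.0

- Step 0: import keras through plaidml before doing anything else with keras

- Step 1: import the keras package and load the cifar10 dataset

- Step 2: load the model; if the model data is not available locally it is downloaded automatically

- Step 3: compile the model

- Step 4: run one prediction

- Step 5: run 10 predictions

- IV. Evaluation and discussion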

一、问题背景

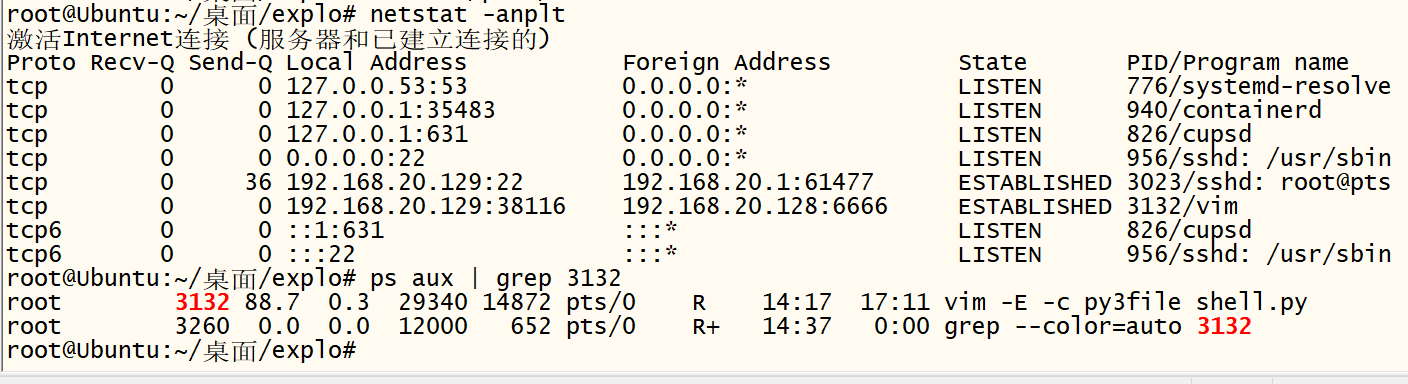

从上一篇文章中,我们已经发现在大模型的运算中,采用cpu进行运算时,对于cpu的使用消耗很大。因此我们这里会想到如果机器中有GPU显卡,如何能够发挥显卡在向量运算中的优势,将机器学习相关的运算做的又快又好。

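II. Technical background

The GPUs with the best mainstream support for machine learning today are NVIDIA cards (informally, "N cards"), but Macs usually ship with AMD GPUs. The two hardware families have different instruction sets, so the software stack above them needs different implementations.

For AMD GPUs, however, there is PlaidML, a tool that abstracts away the differences between GPUs.

PlaidML project: https://github.com/plaidml/plaidml

PlaidML currently supports tools such as Keras, ONNX, and nGraph, so a model built directly in Keras can use the GPU on a MacBook with little effort.

With PlaidML, deep-learning training can run on NVIDIA, AMD, and Intel GPUs alike.

Reference: "Using PlaidML on a Mac to accelerate reinforcement learning training" (Mac使用PlaidML加速强化学习训练)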

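III. Experimental verification

This experiment takes an ordinary Keras workload, runs several rounds of computation on the CPU and on the GPU, and records the elapsed time of each for comparison.

Machine configuration

The software and hardware specifications of the Mac used here are as follows.

Installing PlaidML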
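The comparison described above boils down to timing repeated calls of the same function. A minimal sketch of such a helper, using only the standard library, is shown here; `time_batches` and `fake_predict` are hypothetical names introduced for illustration and are not part of Keras or PlaidML.

```python
import time


def time_batches(run_batch, n_batches=10):
    """Call run_batch() n_batches times and return cumulative elapsed seconds."""
    elapsed = []
    start = time.perf_counter()
    for _ in range(n_batches):
        run_batch()
        # Time since the clock was started, not since the previous batch.
        elapsed.append(time.perf_counter() - start)
    return elapsed


def fake_predict():
    # Stand-in for model.predict(...); sleeps briefly to simulate work.
    time.sleep(0.01)


cumulative = time_batches(fake_predict, n_batches=3)
print(["{:.3f}".format(t) for t in cumulative])
```

In the real experiment, `run_batch` would wrap the `model.predict(...)` call, and the same helper could be reused for the CPU and GPU runs.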

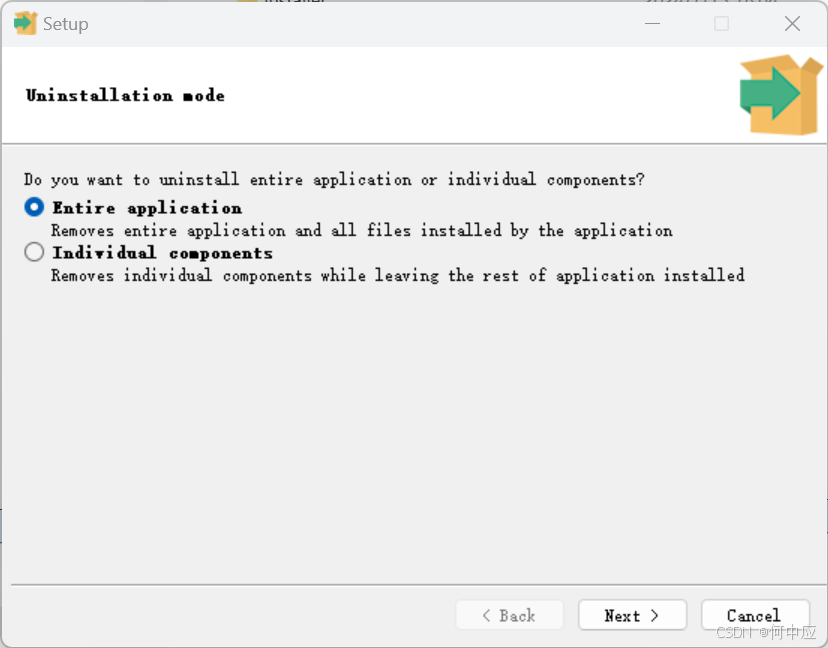

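Installing the dependencies requires interactive command-line input, so the PlaidML packages are installed from the terminal, while the code itself is run in Jupyter.

Using a virtual environment with Jupyter requires creating a kernel for it, so for now it is simpler to verify everything in the base Python environment. Readers who know how to configure Jupyter for virtual environments can try working in one.

Installing plaidml-keras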
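Before running the notebook it can help to confirm which interpreter the Jupyter kernel actually uses, because packages installed from the terminal are only visible to the interpreter they were installed for. A small sketch using only the standard library follows; `in_virtualenv` is a hypothetical helper name.

```python
import sys


def in_virtualenv():
    """Return True when the running interpreter belongs to a venv-style environment."""
    # In a venv, sys.prefix points at the environment while sys.base_prefix
    # points at the interpreter the environment was created from.
    return sys.prefix != getattr(sys, "base_prefix", sys.prefix)


print("Interpreter:", sys.executable)
print("Virtual environment:", in_virtualenv())
```

Running this both in the terminal and in a notebook cell shows whether the two are using the same Python installation.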

pip3 install plaidml-keras

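Installing with pip3 install plaidml-keras gave the author the latest plaidml-keras, version 0.7.0, which hit a bug during initialization; after downgrading to 0.6.4 everything ran normally. When 0.7.0 was installed again later, however, it also worked.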
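Since behavior differed between 0.6.4 and 0.7.0, it is worth checking which versions are actually installed before debugging further. The sketch below uses the standard-library `importlib.metadata` module (Python 3.8+); `installed_version` is a hypothetical helper name.

```python
from importlib import metadata


def installed_version(package):
    """Return the installed version string of a package, or None if it is not installed."""
    try:
        return metadata.version(package)
    except metadata.PackageNotFoundError:
        return None


for name in ("plaidml", "plaidml-keras"):
    print(name, installed_version(name))
```

A specific version can be pinned with pip's usual syntax, for example pip3 install plaidml-keras==0.6.4.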

PlaidML on GitHub

Configuring the default GPU

Run the following on the command line:

plaidml-setup

The interactive session looks like this:

(venv) tsingj@tsingjdeMacBook-Pro-2 ~ # plaidml-setup

PlaidML Setup (0.6.4)

Thanks for using PlaidML!

Some Notes:

* Bugs and other issues: https://github.com/plaidml/plaidml

* Questions: https://stackoverflow.com/questions/tagged/plaidml

* Say hello: https://groups.google.com/forum/#!forum/plaidml-dev

* PlaidML is licensed under the Apache License 2.0

Default Config Devices:

metal_intel(r)_uhd_graphics_630.0 : Intel(R) UHD Graphics 630 (Metal)

metal_amd_radeon_pro_5300m.0 : AMD Radeon Pro 5300M (Metal)

Experimental Config Devices:

llvm_cpu.0 : CPU (LLVM)

metal_intel(r)_uhd_graphics_630.0 : Intel(R) UHD Graphics 630 (Metal)

opencl_amd_radeon_pro_5300m_compute_engine.0 : AMD AMD Radeon Pro 5300M Compute Engine (OpenCL)

opencl_cpu.0 : Intel CPU (OpenCL)

opencl_intel_uhd_graphics_630.0 : Intel Inc. Intel(R) UHD Graphics 630 (OpenCL)

metal_amd_radeon_pro_5300m.0 : AMD Radeon Pro 5300M (Metal)

Using experimental devices can cause poor performance, crashes, and other nastiness.

Enable experimental device support? (y,n)[n]:

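The tool lists the devices it currently supports and asks whether to use only the 2 GPUs supported by default or all 6 devices available with experimental support.

The 2 GPUs supported by default are the two shown in the first screenshot. For stability during testing, choose n here and press Enter.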

Multiple devices detected (You can override by setting PLAIDML_DEVICE_IDS).

Please choose a default device:

1 : metal_intel(r)_uhd_graphics_630.0

2 : metal_amd_radeon_pro_5300m.0

Default device? (1,2)[1]:1

Selected device:

metal_intel(r)_uhd_graphics_630.0

Next, pick one default device from the listed choices. Here we first set metal_intel(r)_uhd_graphics_630.0 as the default. This device is actually fairly slow, and we will later switch the default to metal_amd_radeon_pro_5300m.0 for comparison.

Type 1 and press Enter.

Almost done. Multiplying some matrices...

Tile code:

function (B[X,Z], C[Z,Y]) -> (A) { A[x,y : X,Y] = +(B[x,z] * C[z,y]); }

Whew. That worked.

Save settings to /Users/tsingj/.plaidml? (y,n)[y]:y

Success!

Press Enter to write the settings to the default configuration file. This completes the configuration.
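To double-check what plaidml-setup saved, the settings file can be inspected from Python. The sketch below assumes the file at ~/.plaidml is JSON, which is an assumption about PlaidML's on-disk format rather than something shown in the session above; `read_plaidml_settings` is a hypothetical helper that returns None when the file is missing or cannot be parsed.

```python
import json
import os


def read_plaidml_settings(path="~/.plaidml"):
    """Return the parsed settings, or None if the file is missing or not valid JSON."""
    full_path = os.path.expanduser(path)
    try:
        with open(full_path, "r", encoding="utf-8") as handle:
            return json.load(handle)
    except (OSError, ValueError):
        # OSError: file missing or unreadable; ValueError: content is not JSON.
        return None


settings = read_plaidml_settings()
print(settings)
```

If the assumption holds, the printed settings should mention the device chosen during setup.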

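Running the code on the CPU

This section runs a simple piece of code in Jupyter and measures how long it takes.

Step 1: import the keras package and load the cifar10 dataset. This may involve a download from an external network; if you run into problems, see "Basic Keras usage issues".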

#!/usr/bin/env python

import numpy as np

import os

import time

import keras

import keras.applications as kapp

from keras.datasets import cifar10

(x_train, y_train_cats), (x_test, y_test_cats) = cifar10.load_data()

batch_size = 8

x_train = x_train[:batch_size]

x_train = np.repeat(np.repeat(x_train, 7, axis=1), 7, axis=2)

Note that the default Keras backend here appears to be TensorFlow, as the output shows:

2024-07-11 14:36:02.753107: I tensorflow/core/platform/cpu_feature_guard.cc:210] This TensorFlow binary is optimized to use available CPU instructions in performance-critical operations.

To enable the following instructions: AVX2 FMA, in other operations, rebuild TensorFlow with the appropriate compiler flags.
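The nested np.repeat call in step 1 enlarges each 32x32 CIFAR-10 image by a factor of 7 along both height and width, which gives the 224x224 input size that VGG19 expects by default. A quick shape check on dummy data illustrates this; zeros stand in for the real images so that nothing has to be downloaded.

```python
import numpy as np

batch_size = 8
# Dummy batch with the CIFAR-10 image shape: (batch, height, width, channels).
x = np.zeros((batch_size, 32, 32, 3), dtype=np.uint8)

# Repeat every pixel 7 times along height (axis 1), then along width (axis 2).
x_big = np.repeat(np.repeat(x, 7, axis=1), 7, axis=2)

print(x.shape, "->", x_big.shape)
```

This is a nearest-neighbor style upscaling: each source pixel simply becomes a 7x7 block, so no interpolation is involved.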

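Step 2: load the model. If the model data is not available locally it is downloaded automatically; if you run into problems, see "Basic Keras usage issues".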

model = kapp.VGG19()
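Keras caches downloaded datasets and model weights under ~/.keras by default, in the datasets and models subdirectories. To see whether a download will be needed, the cache can be listed. In the sketch below, `list_keras_cache` is a hypothetical helper, not a Keras API, and honoring the KERAS_HOME environment variable is an assumption that may not apply to every Keras version.

```python
import os


def list_keras_cache(subdir):
    """Return sorted file names in a Keras cache subdirectory, or [] if it does not exist."""
    base = os.environ.get("KERAS_HOME", os.path.join(os.path.expanduser("~"), ".keras"))
    folder = os.path.join(base, subdir)
    if not os.path.isdir(folder):
        return []
    return sorted(os.listdir(folder))


print("datasets:", list_keras_cache("datasets"))
print("models:", list_keras_cache("models"))
```

An empty models list suggests that the first call to kapp.VGG19() will trigger a download of the weights.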

Step 3: compile the model

model.compile(optimizer='sgd', loss='categorical_crossentropy',metrics=['accuracy'])

Step 4: run one prediction

print("Running initial batch (compiling tile program)")

y = model.predict(x=x_train, batch_size=batch_size)

Running initial batch (compiling tile program)

1/1 ━━━━━━━━━━━━━━━━━━━━ 1s 1s/step

Step 5: run 10 predictions

# Now start the clock and run 10 batches

print("Timing inference...")

start = time.time()

for i in range(10):

    y = model.predict(x=x_train, batch_size=batch_size)

    # Elapsed time is cumulative: the clock is not reset between batches

    print("Ran in {} seconds".format(time.time() - start))

1/1 ━━━━━━━━━━━━━━━━━━━━ 1s 891ms/step

Ran in 0.9295139312744141 seconds

1/1 ━━━━━━━━━━━━━━━━━━━━ 1s 923ms/step

Ran in 1.8894760608673096 seconds

1/1 ━━━━━━━━━━━━━━━━━━━━ 1s 893ms/step

Ran in 2.818492889404297 seconds

1/1 ━━━━━━━━━━━━━━━━━━━━ 1s 932ms/step

Ran in 3.7831668853759766 seconds

1/1 ━━━━━━━━━━━━━━━━━━━━ 1s 892ms/step

Ran in 4.71358585357666 seconds

1/1 ━━━━━━━━━━━━━━━━━━━━ 1s 860ms/step

Ran in 5.609835863113403 seconds

1/1 ━━━━━━━━━━━━━━━━━━━━ 1s 878ms/step

Ran in 6.5182459354400635 seconds

1/1 ━━━━━━━━━━━━━━━━━━━━ 1s 871ms/step

Ran in 7.423128128051758 seconds

1/1 ━━━━━━━━━━━━━━━━━━━━ 1s 896ms/step

Ran in 8.352543830871582 seconds

1/1 ━━━━━━━━━━━━━━━━━━━━ 1s 902ms/step

Ran in 9.288795948028564 seconds
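Because start is never reset inside the loop, each "Ran in" line above reports the cumulative time since the first batch. The per-batch latency can be recovered by differencing consecutive values; the numbers below are copied from the output above and rounded to four decimals.

```python
# Cumulative seconds after each of the 10 CPU batches (rounded from the output).
cumulative = [0.9295, 1.8895, 2.8185, 3.7832, 4.7136,
              5.6098, 6.5182, 7.4231, 8.3525, 9.2888]

# Difference consecutive cumulative times to get the time of each batch.
per_batch = [b - a for a, b in zip([0.0] + cumulative[:-1], cumulative)]
mean_latency = sum(per_batch) / len(per_batch)

print(["{:.3f}".format(t) for t in per_batch])
print("mean per batch: {:.3f} s".format(mean_latency))
```

On the CPU this works out to roughly 0.93 s per batch of 8 images.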

Running the code on the GPU

Using the GPU metal_intel(r)_uhd_graphics_630.0

Step 0: import keras through plaidml before doing anything else with keras

# Importing PlaidML. Make sure you follow this order

import plaidml.keras

plaidml.keras.install_backend()

import os

os.environ["KERAS_BACKEND"] = "plaidml.keras.backend"

Notes:

1. With plaidml 0.7.0, the plaidml.keras.install_backend() call raised an error.

2. This step imports keras through plaidml and sets the backend compute engine to plaidml instead of TensorFlow.
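The ordering matters because the backend is swapped in before keras is first imported. A defensive sketch of step 0 could check for that and fall back gracefully when PlaidML is not installed. `install_plaidml_backend` is a hypothetical wrapper; the only PlaidML call it uses is plaidml.keras.install_backend(), as in the code above.

```python
import importlib
import sys


def install_plaidml_backend():
    """Install the PlaidML backend; return True on success, False if PlaidML is missing."""
    if "keras" in sys.modules:
        # Assumption: the backend has to be swapped before keras is first imported.
        raise RuntimeError("keras is already imported; restart the kernel first")
    try:
        plaidml_keras = importlib.import_module("plaidml.keras")
    except ImportError:
        return False
    plaidml_keras.install_backend()
    return True


installed = install_plaidml_backend()
print("PlaidML backend installed:", installed)
```

In a notebook, this would go in the very first cell, before any cell that imports keras.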

Step 1: import the keras package and load the cifar10 dataset

#!/usr/bin/env python

import numpy as np

import os

import time

import keras

import keras.applications as kapp

from keras.datasets import cifar10

(x_train, y_train_cats), (x_test, y_test_cats) = cifar10.load_data()

batch_size = 8

x_train = x_train[:batch_size]

x_train = np.repeat(np.repeat(x_train, 7, axis=1), 7, axis=2)

Step 2: load the model; if the model data is not available locally it is downloaded automatically

model = kapp.VGG19()

The first run prints the GPU information:

INFO:plaidml:Opening device "metal_intel(r)_uhd_graphics_630.0"

Step 3: compile the model

model.compile(optimizer='sgd', loss='categorical_crossentropy',metrics=['accuracy'])

Step 4: run one prediction

print("Running initial batch (compiling tile program)")

y = model.predict(x=x_train, batch_size=batch_size)

Running initial batch (compiling tile program)

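Only this one line is printed here; unlike the TensorFlow run, no per-step progress bar appears.

Step 5: run 10 predictions

# Now start the clock and run 10 batches

print("Timing inference...")

start = time.time()

for i in range(10):

    y = model.predict(x=x_train, batch_size=batch_size)

    # Elapsed time is cumulative: the clock is not reset between batches

    print("Ran in {} seconds".format(time.time() - start))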

Ran in 4.241918087005615 seconds

Ran in 8.452141046524048 seconds

Ran in 12.665411949157715 seconds

Ran in 16.849968910217285 seconds

Ran in 21.025720834732056 seconds

Ran in 25.212764024734497 seconds

Ran in 29.405478954315186 seconds

Ran in 33.594977140426636 seconds

Ran in 37.7886438369751 seconds

Ran in 41.98136305809021 seconds

Using the GPU metal_amd_radeon_pro_5300m.0

At the device-selection stage of plaidml-setup, choose metal_amd_radeon_pro_5300m.0 this time instead of metal_intel(r)_uhd_graphics_630.0.

(venv) tsingj@tsingjdeMacBook-Pro-2 ~ # plaidml-setup

PlaidML Setup (0.6.4)

Thanks for using PlaidML!

Some Notes:

* Bugs and other issues: https://github.com/plaidml/plaidml

* Questions: https://stackoverflow.com/questions/tagged/plaidml

* Say hello: https://groups.google.com/forum/#!forum/plaidml-dev

* PlaidML is licensed under the Apache License 2.0

Default Config Devices:

metal_intel(r)_uhd_graphics_630.0 : Intel(R) UHD Graphics 630 (Metal)

metal_amd_radeon_pro_5300m.0 : AMD Radeon Pro 5300M (Metal)

Experimental Config Devices:

llvm_cpu.0 : CPU (LLVM)

metal_intel(r)_uhd_graphics_630.0 : Intel(R) UHD Graphics 630 (Metal)

opencl_amd_radeon_pro_5300m_compute_engine.0 : AMD AMD Radeon Pro 5300M Compute Engine (OpenCL)

opencl_cpu.0 : Intel CPU (OpenCL)

opencl_intel_uhd_graphics_630.0 : Intel Inc. Intel(R) UHD Graphics 630 (OpenCL)

metal_amd_radeon_pro_5300m.0 : AMD Radeon Pro 5300M (Metal)

Using experimental devices can cause poor performance, crashes, and other nastiness.

Enable experimental device support? (y,n)[n]:n

Multiple devices detected (You can override by setting PLAIDML_DEVICE_IDS).

Please choose a default device:

1 : metal_intel(r)_uhd_graphics_630.0

2 : metal_amd_radeon_pro_5300m.0

Default device? (1,2)[1]:2

Selected device:

metal_amd_radeon_pro_5300m.0

Almost done. Multiplying some matrices...

Tile code:

function (B[X,Z], C[Z,Y]) -> (A) { A[x,y : X,Y] = +(B[x,z] * C[z,y]); }

Whew. That worked.

Save settings to /Users/tsingj/.plaidml? (y,n)[y]:y

Success!
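The setup output notes that the device choice can be overridden with PLAIDML_DEVICE_IDS. As an alternative to re-running plaidml-setup, a sketch of switching devices from inside the notebook could look like this. It assumes the variable is read when PlaidML opens the device, so it is set before plaidml.keras is imported, and it uses a device ID copied from the list above.

```python
import os

# Device ID copied from the plaidml-setup device list.
os.environ["PLAIDML_DEVICE_IDS"] = "metal_amd_radeon_pro_5300m.0"
# Select the PlaidML Keras backend, as in step 0.
os.environ["KERAS_BACKEND"] = "plaidml.keras.backend"

print(os.environ["PLAIDML_DEVICE_IDS"])
```

If the override takes effect, the INFO:plaidml:Opening device line printed when the model is created should name the AMD device.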

Step 0: import keras through plaidml before doing anything else with keras

# Importing PlaidML. Make sure you follow this order

import plaidml.keras

plaidml.keras.install_backend()

import os

os.environ["KERAS_BACKEND"] = "plaidml.keras.backend"

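Notes:

1. With plaidml 0.7.0, the plaidml.keras.install_backend() call raised an error.

2. This step imports keras through plaidml and sets the backend compute engine to plaidml instead of TensorFlow.

Step 1: import the keras package and load the cifar10 dataset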

#!/usr/bin/env python

import numpy as np

import os

import time

import keras

import keras.applications as kapp

from keras.datasets import cifar10

(x_train, y_train_cats), (x_test, y_test_cats) = cifar10.load_data()

batch_size = 8

x_train = x_train[:batch_size]

x_train = np.repeat(np.repeat(x_train, 7, axis=1), 7, axis=2)

Step 2: load the model; if the model data is not available locally it is downloaded automatically

model = kapp.VGG19()

INFO:plaidml:Opening device "metal_amd_radeon_pro_5300m.0"

Note that the GPU information is printed on the first run.

Step 3: compile the model

model.compile(optimizer='sgd', loss='categorical_crossentropy',metrics=['accuracy'])

Step 4: run one prediction

print("Running initial batch (compiling tile program)")

y = model.predict(x=x_train, batch_size=batch_size)

Running initial batch (compiling tile program)

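Only this one line is printed here; unlike the TensorFlow run, no per-step progress bar appears.

Step 5: run 10 predictions

# Now start the clock and run 10 batches

print("Timing inference...")

start = time.time()

for i in range(10):

    y = model.predict(x=x_train, batch_size=batch_size)

    # Elapsed time is cumulative: the clock is not reset between batches

    print("Ran in {} seconds".format(time.time() - start))

Output: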

Ran in 0.43606019020080566 seconds

Ran in 0.8583459854125977 seconds

Ran in 1.2787911891937256 seconds

Ran in 1.70143723487854 seconds

Ran in 2.1235032081604004 seconds

Ran in 2.5464580059051514 seconds

Ran in 2.9677979946136475 seconds

Ran in 3.390064001083374 seconds

Ran in 3.8117799758911133 seconds

Ran in 4.236911058425903 seconds

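IV. Evaluation and discussion

The metal_intel(r)_uhd_graphics_630.0 GPU has 1536 MB of memory. Although it is a GPU, it performed worse than the machine's 6-core CPU in this test: 10 batches took about 42.0 s, versus about 9.3 s on the CPU.

The metal_amd_radeon_pro_5300m.0 GPU has 4 GB of memory and ran about 2.2 times as fast as the CPU: 10 batches took about 4.2 s, versus about 9.3 s.

These results show the strong advantage that a capable GPU offers for machine-learning computation.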
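The ratios behind this comparison can be reproduced from the final "Ran in" line of each run. The short calculation below uses only numbers reported earlier in this article; the dictionary keys are labels chosen here for readability.

```python
# Total seconds for 10 batches, taken from the final "Ran in" line of each run.
totals = {
    "cpu": 9.288795948028564,
    "metal_intel(r)_uhd_graphics_630.0": 41.98136305809021,
    "metal_amd_radeon_pro_5300m.0": 4.236911058425903,
}

baseline = totals["cpu"]
# Values above 1 mean faster than the CPU, values below 1 mean slower.
speedups = {device: baseline / seconds for device, seconds in totals.items()}

for device, ratio in speedups.items():
    print("{}: {:.2f}x the CPU speed".format(device, ratio))
```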