hrnet人体关键点检测模型适配atlas

模型转换

将end2end.onnx模型转换为om模型

转换模型前激活:

source /usr/local/Ascend/ascend-toolkit/set_env.sh

转模型

atc --model=end2end.onnx --framework=5 --output=end2end --soc_version=Ascend310P3

可修改Atlas开发板版本Ascend310P3、Ascend310B1、Ascend310等

推理代码

import time

import cv2

import numpy as np

from ais_bench.infer.interface import InferSessionmodel_path = "/tmp/pose/end2_16.om"

IMG_PATH = "/tmp/pose/ztest.jpg"import loggingdef print_outputs(outputs):"""将 outputs 的完整内容打印到日志中。Args:outputs (numpy.ndarray): 模型的输出结果。"""logging.basicConfig(level=logging.INFO, format='%(message)s')for i, output_slice in enumerate(outputs):logging.info(f"Output slice {i}:")for j, row in enumerate(output_slice):row_str = ', '.join([f'{x:.6f}' for x in row])logging.info(f"[{row_str}]")logging.info('')def bbox_xywh2cs(bbox, aspect_ratio, padding, pixel_std):"""Transform the bbox format from (x,y,w,h) into (center, scale)Args:bbox (ndarray): Single bbox in (x, y, w, h)aspect_ratio (float): The expected bbox aspect ratio (w over h)padding (float): Bbox padding factor that will be multilied to scale.Default: 1.0pixel_std (float): The scale normalization factor. Default: 200.0Returns:tuple: A tuple containing center and scale.- np.ndarray[float32](2,): Center of the bbox (x, y).- np.ndarray[float32](2,): Scale of the bbox w & h."""x, y, w, h = bbox[:4]center = np.array([x + w * 0.5, y + h * 0.5], dtype=np.float32)if w > aspect_ratio * h:h = w * 1.0 / aspect_ratioelif w < aspect_ratio * h:w = h * aspect_ratioscale = np.array([w, h], dtype=np.float32) / pixel_stdscale = scale * paddingreturn center, scaledef rotate_point(pt, angle_rad):"""Rotate a point by an angle.Args:pt (list[float]): 2 dimensional point to be rotatedangle_rad (float): rotation angle by radianReturns:list[float]: Rotated point."""assert len(pt) == 2sn, cs = np.sin(angle_rad), np.cos(angle_rad)new_x = pt[0] * cs - pt[1] * snnew_y = pt[0] * sn + pt[1] * csrotated_pt = [new_x, new_y]return rotated_ptdef _get_3rd_point(a, b):"""To calculate the affine matrix, three pairs of points are required. Thisfunction is used to get the 3rd point, given 2D points a & b.The 3rd point is defined by rotating vector `a - b` by 90 degreesanticlockwise, using b as the rotation center.Args:a (np.ndarray): point(x,y)b (np.ndarray): point(x,y)Returns:np.ndarray: The 3rd point."""assert len(a) == 2assert len(b) == 2direction = a - bthird_pt = b + np.array([-direction[1], direction[0]], dtype=np.float32)return third_ptdef get_affine_transform(center,scale,rot,output_size,shift=(0., 0.),inv=False):"""Get the affine transform matrix, given the center/scale/rot/output_size.Args:center (np.ndarray[2, ]): Center of the bounding box (x, y).scale (np.ndarray[2, ]): Scale of the bounding boxwrt [width, height].rot (float): Rotation angle (degree).output_size (np.ndarray[2, ] | list(2,)): Size of thedestination heatmaps.shift (0-100%): Shift translation ratio wrt the width/height.Default (0., 0.).inv (bool): Option to inverse the affine transform direction.(inv=False: src->dst or inv=True: dst->src)Returns:np.ndarray: The transform matrix."""assert len(center) == 2assert len(scale) == 2assert len(output_size) == 2assert len(shift) == 2# pixel_std is 200.scale_tmp = scale * 210shift = np.array(shift)src_w = scale_tmp[0]dst_w = output_size[0]dst_h = output_size[1]rot_rad = np.pi * rot / 180src_dir = rotate_point([0., src_w * -0.5], rot_rad)dst_dir = np.array([0., dst_w * -0.5])src = np.zeros((3, 2), dtype=np.float32)src[0, :] = center + scale_tmp * shiftsrc[1, :] = center + src_dir + scale_tmp * shiftsrc[2, :] = _get_3rd_point(src[0, :], src[1, :])dst = np.zeros((3, 2), dtype=np.float32)dst[0, :] = [dst_w * 0.5, dst_h * 0.5]dst[1, :] = np.array([dst_w * 0.5, dst_h * 0.5]) + dst_dirdst[2, :] = _get_3rd_point(dst[0, :], dst[1, :])if inv:trans = cv2.getAffineTransform(np.float32(dst), np.float32(src))else:trans = cv2.getAffineTransform(np.float32(src), np.float32(dst))return transdef bbox_xyxy2xywh(bbox_xyxy):"""Transform the bbox format from x1y1x2y2 to xywh.Args:bbox_xyxy (np.ndarray): Bounding boxes (with scores), shaped (n, 4) or(n, 5). (left, top, right, bottom, [score])Returns:np.ndarray: Bounding boxes (with scores),shaped (n, 4) or (n, 5). (left, top, width, height, [score])"""bbox_xywh = bbox_xyxy.copy()bbox_xywh[:, 2] = bbox_xywh[:, 2] - bbox_xywh[:, 0]bbox_xywh[:, 3] = bbox_xywh[:, 3] - bbox_xywh[:, 1]return bbox_xywhdef _get_max_preds(heatmaps):"""Get keypoint predictions from score maps.Note:batch_size: Nnum_keypoints: Kheatmap height: Hheatmap width: WArgs:heatmaps (np.ndarray[N, K, H, W]): model predicted heatmaps.Returns:tuple: A tuple containing aggregated results.- preds (np.ndarray[N, K, 2]): Predicted keypoint location.- maxvals (np.ndarray[N, K, 1]): Scores (confidence) of the keypoints."""assert isinstance(heatmaps,np.ndarray), ('heatmaps should be numpy.ndarray')assert heatmaps.ndim == 4, 'batch_images should be 4-ndim'N, K, _, W = heatmaps.shapeheatmaps_reshaped = heatmaps.reshape((N, K, -1))idx = np.argmax(heatmaps_reshaped, 2).reshape((N, K, 1))maxvals = np.amax(heatmaps_reshaped, 2).reshape((N, K, 1))preds = np.tile(idx, (1, 1, 2)).astype(np.float32)preds[:, :, 0] = preds[:, :, 0] % Wpreds[:, :, 1] = preds[:, :, 1] // Wpreds = np.where(np.tile(maxvals, (1, 1, 2)) > 0.0, preds, -1)return preds, maxvalsdef transform_preds(coords, center, scale, output_size, use_udp=False):"""Get final keypoint predictions from heatmaps and apply scaling andtranslation to map them back to the image.Note:num_keypoints: KArgs:coords (np.ndarray[K, ndims]):* If ndims=2, corrds are predicted keypoint location.* If ndims=4, corrds are composed of (x, y, scores, tags)* If ndims=5, corrds are composed of (x, y, scores, tags,flipped_tags)center (np.ndarray[2, ]): Center of the bounding box (x, y).scale (np.ndarray[2, ]): Scale of the bounding boxwrt [width, height].output_size (np.ndarray[2, ] | list(2,)): Size of thedestination heatmaps.use_udp (bool): Use unbiased data processingReturns:np.ndarray: Predicted coordinates in the images."""assert coords.shape[1] in (2, 4, 5)assert len(center) == 2assert len(scale) == 2assert len(output_size) == 2# Recover the scale which is normalized by a factor of 200.scale = scale * 200if use_udp:scale_x = scale[0] / (output_size[0] - 1.0)scale_y = scale[1] / (output_size[1] - 1.0)else:scale_x = scale[0] / output_size[0]scale_y = scale[1] / output_size[1]target_coords = np.ones_like(coords)target_coords[:, 0] = coords[:, 0] * scale_x + center[0] - scale[0] * 0.5target_coords[:, 1] = coords[:, 1] * scale_y + center[1] - scale[1] * 0.5return target_coordsdef keypoints_from_heatmaps(heatmaps,center,scale,unbiased=False,post_process='default',kernel=11,valid_radius_factor=0.0546875,use_udp=False,target_type='GaussianHeatmap'):# Avoid being affectedheatmaps = heatmaps.copy()N, K, H, W = heatmaps.shapepreds, maxvals = _get_max_preds(heatmaps)# add +/-0.25 shift to the predicted locations for higher acc.for n in range(N):for k in range(K):heatmap = heatmaps[n][k]px = int(preds[n][k][0])py = int(preds[n][k][1])if 1 < px < W - 1 and 1 < py < H - 1:diff = np.array([heatmap[py][px + 1] - heatmap[py][px - 1],heatmap[py + 1][px] - heatmap[py - 1][px]])preds[n][k] += np.sign(diff) * .25if post_process == 'megvii':preds[n][k] += 0.5# Transform back to the imagefor i in range(N):preds[i] = transform_preds(preds[i], center[i], scale[i], [W, H], use_udp=use_udp)if post_process == 'megvii':maxvals = maxvals / 255.0 + 0.5return preds, maxvalsdef decode(output, center, scale, score_, batch_size=1):c = np.zeros((batch_size, 2), dtype=np.float32)s = np.zeros((batch_size, 2), dtype=np.float32)score = np.ones(batch_size)for i in range(batch_size):c[i, :] = centers[i, :] = scalescore[i] = np.array(score_).reshape(-1)preds, maxvals = keypoints_from_heatmaps(output,c,s,False,'default',11,0.0546875,False,'GaussianHeatmap')all_preds = np.zeros((batch_size, preds.shape[1], 3), dtype=np.float32)all_boxes = np.zeros((batch_size, 6), dtype=np.float32)all_preds[:, :, 0:2] = preds[:, :, 0:2]all_preds[:, :, 2:3] = maxvalsall_boxes[:, 0:2] = c[:, 0:2]all_boxes[:, 2:4] = s[:, 0:2]all_boxes[:, 4] = np.prod(s * 200.0, axis=1)all_boxes[:, 5] = scoreresult = {}result['preds'] = all_predsresult['boxes'] = all_boxesprint(result)return resultdef draw(bgr, predict_dict, skeleton, box):cv2.rectangle(bgr, (int(box[0]), int(box[1])), (int(box[0]) + int(box[2]), int(box[1]) + int(box[3])),(255, 0, 0))all_preds = predict_dict["preds"]for all_pred in all_preds:for x, y, s in all_pred:cv2.circle(bgr, (int(x), int(y)), 3, (0, 255, 120), -1)for sk in skeleton:if sk[0] < len(all_pred) and sk[1] < len(all_pred):x0 = int(all_pred[sk[0]][0])y0 = int(all_pred[sk[0]][1])x1 = int(all_pred[sk[1]][0])y1 = int(all_pred[sk[1]][1])cv2.line(bgr, (x0, y0), (x1, y1), (0, 255, 0), 1)cv2.imwrite("new_test.jpg", bgr)if __name__ == "__main__":# Create RKNN objectmodel = InferSession(0, model_path)print("done")#[x1,y1,w,h,conf],根据图像中人的目标框进行关键点检测bbox = [1, 185, 272, 225, 9]image_size = [192, 256]src_img = cv2.imread(IMG_PATH)img = cv2.cvtColor(src_img, cv2.COLOR_BGR2RGB) # hwc rgb# RE_img = cv2.resize(img, (256, 192))aspect_ratio = image_size[0] / image_size[1]# aspect_ratio =1.33print('aspect_ratio', aspect_ratio)img_height = img.shape[0]img_width = img.shape[1]img_sz = [img_width, img_height]print('image shape', src_img.shape)padding = 1.2pixel_std = 220center, scale = bbox_xywh2cs(bbox,aspect_ratio,padding,pixel_std)# print(scale)# center =np.array([484.5, 395.5], dtype=np.float32)# scale = np.array([0.87, 0.653], dtype=np.float32)print(center, scale)trans = get_affine_transform(center, scale, 0, image_size)img = cv2.warpAffine(img,trans, (int(image_size[0]), int(image_size[1])),flags=cv2.INTER_LINEAR)print('trans', trans)print(img.shape)img = img / 255.0 # 归一化到0~1img = img.transpose(2, 0, 1)img = np.ascontiguousarray(img, dtype=np.float16)# Inferenceprint("--> Running model")outputs = model.infer([img])[0]print('outputs', outputs)predict_dict = decode(outputs, center, scale, bbox[-1])inv_trans = cv2.invertAffineTransform(trans)skeleton = [[15, 13], [13, 11], [16, 14], [14, 12], [11, 12], [5, 11], [6, 12], [5, 6], [5, 7], [6, 8], [7, 9],[8, 10], [1, 2], [0, 1], [0, 2], [1, 3], [2, 4], [3, 5], [4, 6]]# draw(src_img, {"preds": [original_points]}, skeleton,[x_min, y_min, width, height])draw(src_img, predict_dict, skeleton, bbox)运行代码需安装aclruntime,安装教程:

https://gitee.com/ascend/msit/pulls/1124.diff

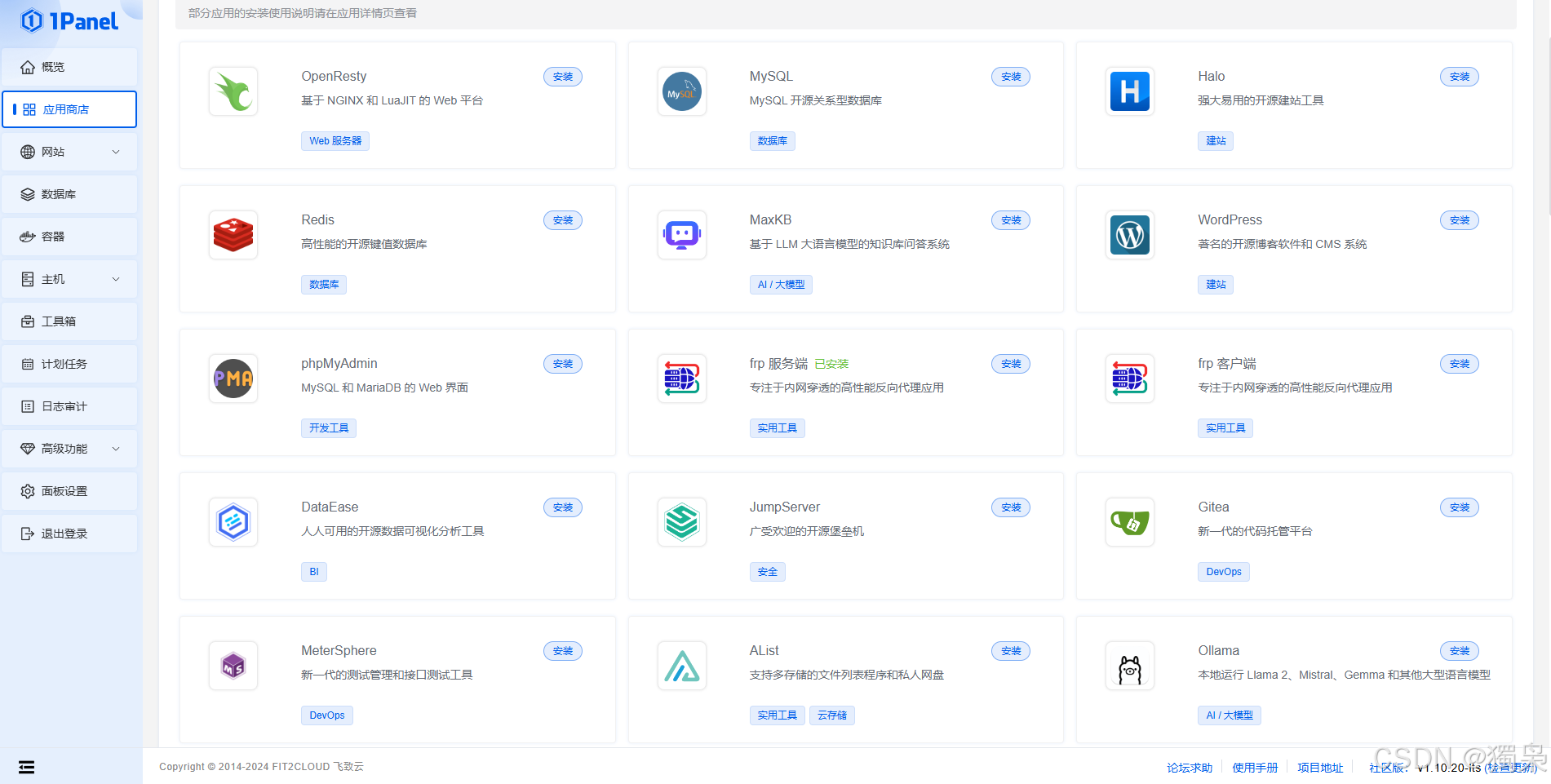

此外,需下载ais_bench包

下载链接:https://download.csdn.net/download/qq_40357993/89994844?spm=1001.2014.3001.5503

![[Codesys]常用功能块应用分享-BMOV功能块功能介绍及其使用实例说明](https://i-blog.csdnimg.cn/direct/42e93b825ee24940b6a7601b90554c97.png)

![[GXYCTF2019]BabyUpload--详细解析](https://i-blog.csdnimg.cn/direct/cee21f09e3674f42b886613662e745c9.png)