雅各比矩阵与梯度:区别与联系

在数学与机器学习中,梯度(Gradient) 和 雅各比矩阵(Jacobian Matrix) 是两个核心概念。虽然它们都描述了函数的变化率,但应用场景和具体形式有所不同。本文将通过深入解析它们的定义、区别与联系,并结合实际数值模拟,帮助读者全面理解两者,尤其是雅各比矩阵在深度学习与大模型领域的作用。

1. 梯度与雅各比矩阵的定义

1.1 梯度(Gradient)

梯度是标量函数(输出是一个标量)的变化率的向量化表示。

设函数 ( f : R n → R f: \mathbb{R}^n \to \mathbb{R} f:Rn→R ),其梯度是一个 ( n n n )-维向量:

∇ f ( x ) = [ ∂ f ∂ x 1 ∂ f ∂ x 2 ⋮ ∂ f ∂ x n ] , \nabla f(x) = \begin{bmatrix} \frac{\partial f}{\partial x_1} \\ \frac{\partial f}{\partial x_2} \\ \vdots \\ \frac{\partial f}{\partial x_n} \end{bmatrix}, ∇f(x)= ∂x1∂f∂x2∂f⋮∂xn∂f ,

表示在每个方向上 ( f f f ) 的变化率。

1.2 雅各比矩阵(Jacobian Matrix)

雅各比矩阵描述了向量函数(输出是一个向量)在输入点的变化率。

设函数 ( f : R n → R m \mathbf{f}: \mathbb{R}^n \to \mathbb{R}^m f:Rn→Rm ),即输入是 ( n n n )-维向量,输出是 ( m m m )-维向量,其雅各比矩阵为一个 ( m × n m \times n m×n ) 的矩阵:

D f ( x ) = [ ∂ f 1 ∂ x 1 ∂ f 1 ∂ x 2 ⋯ ∂ f 1 ∂ x n ∂ f 2 ∂ x 1 ∂ f 2 ∂ x 2 ⋯ ∂ f 2 ∂ x n ⋮ ⋮ ⋱ ⋮ ∂ f m ∂ x 1 ∂ f m ∂ x 2 ⋯ ∂ f m ∂ x n ] . Df(x) = \begin{bmatrix} \frac{\partial f_1}{\partial x_1} & \frac{\partial f_1}{\partial x_2} & \cdots & \frac{\partial f_1}{\partial x_n} \\ \frac{\partial f_2}{\partial x_1} & \frac{\partial f_2}{\partial x_2} & \cdots & \frac{\partial f_2}{\partial x_n} \\ \vdots & \vdots & \ddots & \vdots \\ \frac{\partial f_m}{\partial x_1} & \frac{\partial f_m}{\partial x_2} & \cdots & \frac{\partial f_m}{\partial x_n} \end{bmatrix}. Df(x)= ∂x1∂f1∂x1∂f2⋮∂x1∂fm∂x2∂f1∂x2∂f2⋮∂x2∂fm⋯⋯⋱⋯∂xn∂f1∂xn∂f2⋮∂xn∂fm .

- 每一行是某个标量函数 ( f i ( x ) f_i(x) fi(x) ) 的梯度;

- 雅各比矩阵描述了函数在各输入维度上的整体变化。

2. 梯度与雅各比矩阵的区别与联系

| 方面 | 梯度 | 雅各比矩阵 |

|---|---|---|

| 适用范围 | 标量函数 ( f : R n → R f: \mathbb{R}^n \to \mathbb{R} f:Rn→R ) | 向量函数 ( f : R n → R m f: \mathbb{R}^n \to \mathbb{R}^m f:Rn→Rm ) |

| 形式 | 一个 ( n n n )-维向量 | 一个 ( m × n m \times n m×n ) 的矩阵 |

| 含义 | 表示函数 ( f f f ) 在输入空间的变化率 | 表示向量函数 ( f f f ) 的所有输出分量对所有输入变量的变化率 |

| 联系 | 梯度是雅各比矩阵的特殊情况(当 ( m = 1 m = 1 m=1 ) 时,雅各比矩阵退化为梯度) | 梯度可以看作雅各比矩阵的行之一(当输出是标量时只有一行) |

3. 数值模拟:梯度与雅各比矩阵

示例函数

假设有函数 ( f : R 2 → R 2 \mathbf{f}: \mathbb{R}^2 \to \mathbb{R}^2 f:R2→R2 ),定义如下:

f ( x 1 , x 2 ) = [ x 1 2 + x 2 x 1 x 2 ] . \mathbf{f}(x_1, x_2) = \begin{bmatrix} x_1^2 + x_2 \\ x_1 x_2 \end{bmatrix}. f(x1,x2)=[x12+x2x1x2].

3.1 梯度计算(标量函数场景)

若我们关注第一个输出分量 ( f 1 ( x ) = x 1 2 + x 2 f_1(x) = x_1^2 + x_2 f1(x)=x12+x2 ),则其梯度为:

∇ f 1 ( x ) = [ ∂ f 1 ∂ x 1 ∂ f 1 ∂ x 2 ] = [ 2 x 1 1 ] . \nabla f_1(x) = \begin{bmatrix} \frac{\partial f_1}{\partial x_1} \\ \frac{\partial f_1}{\partial x_2} \end{bmatrix} = \begin{bmatrix} 2x_1 \\ 1 \end{bmatrix}. ∇f1(x)=[∂x1∂f1∂x2∂f1]=[2x11].

3.2 雅各比矩阵计算(向量函数场景)

对整个函数 ( f \mathbf{f} f ),其雅各比矩阵为:

D f ( x ) = [ ∂ f 1 ∂ x 1 ∂ f 1 ∂ x 2 ∂ f 2 ∂ x 1 ∂ f 2 ∂ x 2 ] = [ 2 x 1 1 x 2 x 1 ] . Df(x) = \begin{bmatrix} \frac{\partial f_1}{\partial x_1} & \frac{\partial f_1}{\partial x_2} \\ \frac{\partial f_2}{\partial x_1} & \frac{\partial f_2}{\partial x_2} \end{bmatrix} = \begin{bmatrix} 2x_1 & 1 \\ x_2 & x_1 \end{bmatrix}. Df(x)=[∂x1∂f1∂x1∂f2∂x2∂f1∂x2∂f2]=[2x1x21x1].

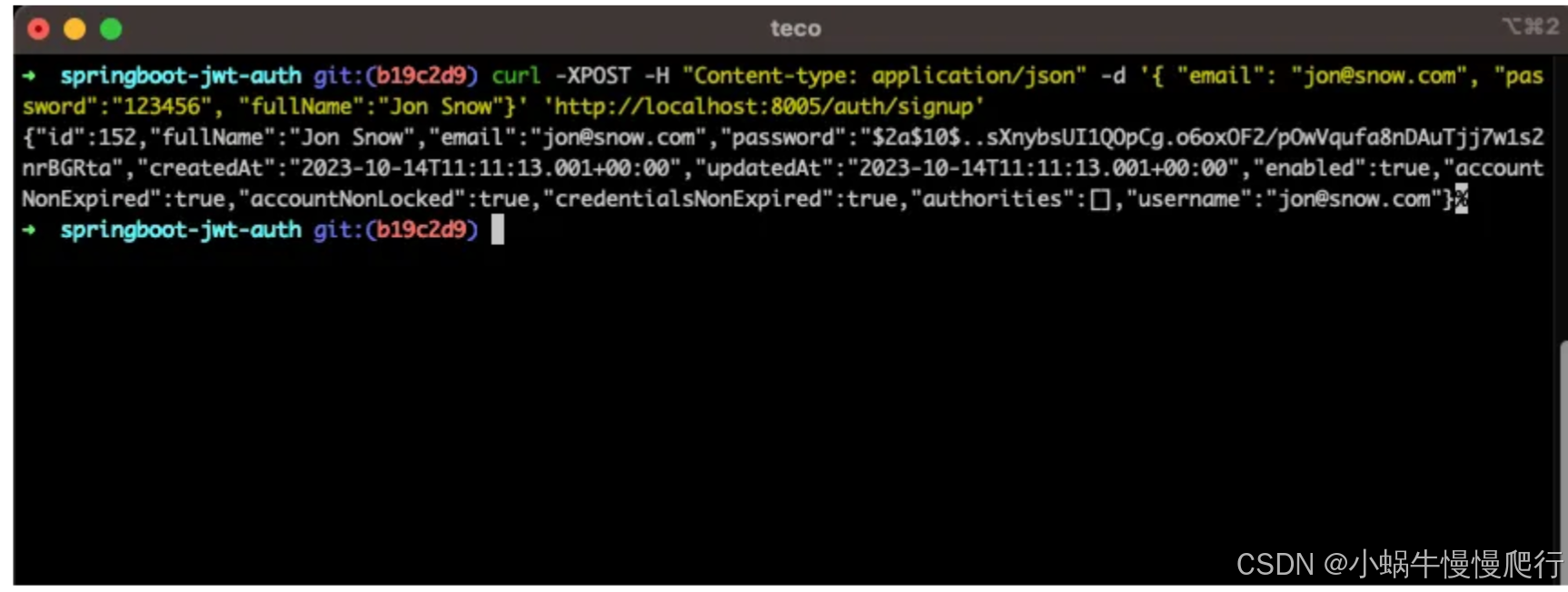

3.3 Python 实现

以下代码演示了梯度和雅各比矩阵的数值计算:

import numpy as np# 定义函数

def f(x):return np.array([x[0]**2 + x[1], x[0] * x[1]])# 定义雅各比矩阵

def jacobian_f(x):return np.array([[2 * x[0], 1],[x[1], x[0]]])# 计算梯度和雅各比矩阵

x = np.array([1.0, 2.0]) # 输入点

gradient_f1 = np.array([2 * x[0], 1]) # f1 的梯度

jacobian = jacobian_f(x) # 雅各比矩阵print("Gradient of f1:", gradient_f1)

print("Jacobian matrix of f:", jacobian)

运行结果:

Gradient of f1: [2. 1.]

Jacobian matrix of f:

[[2. 1.][2. 1.]]

4. 在机器学习和深度学习中的作用

4.1 梯度的作用

在深度学习中,梯度主要用于反向传播。当损失函数是标量时,其梯度指示了参数需要如何调整以最小化损失。例如:

- 对于神经网络的参数 ( θ \theta θ ),损失函数 ( L ( θ ) L(\theta) L(θ) ) 的梯度 ( ∇ L ( θ ) \nabla L(\theta) ∇L(θ) ) 用于优化器(如 SGD 或 Adam)更新参数。

4.2 雅各比矩阵的作用

-

多输出问题

雅各比矩阵用于多任务学习和多输出模型(例如,Transformer 的输出是一个序列,维度为 ( m m m )),描述多个输出对输入的变化关系。 -

对抗样本生成

在对抗攻击中,雅各比矩阵被用来计算输入的小扰动如何同时影响多个输出。 -

深度学习中的 Hessian-Free 方法

雅各比矩阵是二阶优化方法(如 Newton 方法)中的重要组成部分,因为 Hessian 矩阵的计算通常依赖雅各比矩阵。 -

大模型推理与精调

在大语言模型中,雅各比矩阵被用于研究模型对输入扰动的敏感性,或指导精调时的梯度裁剪与更新。

5. 总结

- 梯度 是描述标量函数变化率的向量;

- 雅各比矩阵 是描述向量函数所有输出对输入变化的矩阵;

- 两者紧密相关:梯度是雅各比矩阵的特例。

在机器学习与深度学习中,梯度用于优化,雅各比矩阵在多任务学习、对抗训练和大模型分析中有广泛应用。通过数值模拟,我们可以直观理解它们的区别与联系,掌握它们在实际场景中的重要性。

英文版

Jacobian Matrix vs Gradient: Differences and Connections

In mathematics and machine learning, the gradient and the Jacobian matrix are essential concepts that describe the rate of change of functions. While they are closely related, they serve different purposes and are used in distinct scenarios. This blog will explore their definitions, differences, and connections through examples, particularly emphasizing the Jacobian matrix’s role in deep learning and large-scale models.

1. Definition of Gradient and Jacobian Matrix

1.1 Gradient

The gradient is a vector representation of the rate of change for a scalar-valued function.

For a scalar function ( f : R n → R f: \mathbb{R}^n \to \mathbb{R} f:Rn→R ), the gradient is an ( n n n )-dimensional vector:

∇ f ( x ) = [ ∂ f ∂ x 1 ∂ f ∂ x 2 ⋮ ∂ f ∂ x n ] . \nabla f(x) = \begin{bmatrix} \frac{\partial f}{\partial x_1} \\ \frac{\partial f}{\partial x_2} \\ \vdots \\ \frac{\partial f}{\partial x_n} \end{bmatrix}. ∇f(x)= ∂x1∂f∂x2∂f⋮∂xn∂f .

This represents the direction and magnitude of the steepest ascent of ( f f f ).

1.2 Jacobian Matrix

The Jacobian matrix describes the rate of change for a vector-valued function.

For a vector function ( f : R n → R m \mathbf{f}: \mathbb{R}^n \to \mathbb{R}^m f:Rn→Rm ), where the input is ( n n n )-dimensional and the output is ( m m m )-dimensional, the Jacobian matrix is an ( m × n m \times n m×n ) matrix:

D f ( x ) = [ ∂ f 1 ∂ x 1 ∂ f 1 ∂ x 2 ⋯ ∂ f 1 ∂ x n ∂ f 2 ∂ x 1 ∂ f 2 ∂ x 2 ⋯ ∂ f 2 ∂ x n ⋮ ⋮ ⋱ ⋮ ∂ f m ∂ x 1 ∂ f m ∂ x 2 ⋯ ∂ f m ∂ x n ] . Df(x) = \begin{bmatrix} \frac{\partial f_1}{\partial x_1} & \frac{\partial f_1}{\partial x_2} & \cdots & \frac{\partial f_1}{\partial x_n} \\ \frac{\partial f_2}{\partial x_1} & \frac{\partial f_2}{\partial x_2} & \cdots & \frac{\partial f_2}{\partial x_n} \\ \vdots & \vdots & \ddots & \vdots \\ \frac{\partial f_m}{\partial x_1} & \frac{\partial f_m}{\partial x_2} & \cdots & \frac{\partial f_m}{\partial x_n} \end{bmatrix}. Df(x)= ∂x1∂f1∂x1∂f2⋮∂x1∂fm∂x2∂f1∂x2∂f2⋮∂x2∂fm⋯⋯⋱⋯∂xn∂f1∂xn∂f2⋮∂xn∂fm .

- Each row is the gradient of a scalar function ( f i ( x ) f_i(x) fi(x) );

- The Jacobian matrix encapsulates all partial derivatives of ( f \mathbf{f} f ) with respect to its inputs.

2. Differences and Connections Between Gradient and Jacobian Matrix

| Aspect | Gradient | Jacobian Matrix |

|---|---|---|

| Scope | Scalar function ( f : R n → R f: \mathbb{R}^n \to \mathbb{R} f:Rn→R ) | Vector function ( f : R n → R m f: \mathbb{R}^n \to \mathbb{R}^m f:Rn→Rm ) |

| Form | An ( n n n )-dimensional vector | An ( m × n m \times n m×n ) matrix |

| Meaning | Represents the rate of change of ( f f f ) in the input space | Represents the rate of change of all outputs w.r.t. all inputs |

| Connection | The gradient is a special case of the Jacobian (when ( m = 1 m = 1 m=1 )) | Each row of the Jacobian matrix is a gradient of ( f i ( x ) f_i(x) fi(x) ) |

3. Numerical Simulation: Gradient and Jacobian Matrix

Example Function

Consider the function ( f : R 2 → R 2 \mathbf{f}: \mathbb{R}^2 \to \mathbb{R}^2 f:R2→R2 ) defined as:

f ( x 1 , x 2 ) = [ x 1 2 + x 2 x 1 x 2 ] . \mathbf{f}(x_1, x_2) = \begin{bmatrix} x_1^2 + x_2 \\ x_1 x_2 \end{bmatrix}. f(x1,x2)=[x12+x2x1x2].

3.1 Gradient Computation (Scalar Function Case)

If we focus on the first output component ( f 1 ( x ) = x 1 2 + x 2 f_1(x) = x_1^2 + x_2 f1(x)=x12+x2 ), the gradient is:

∇ f 1 ( x ) = [ ∂ f 1 ∂ x 1 ∂ f 1 ∂ x 2 ] = [ 2 x 1 1 ] . \nabla f_1(x) = \begin{bmatrix} \frac{\partial f_1}{\partial x_1} \\ \frac{\partial f_1}{\partial x_2} \end{bmatrix} = \begin{bmatrix} 2x_1 \\ 1 \end{bmatrix}. ∇f1(x)=[∂x1∂f1∂x2∂f1]=[2x11].

3.2 Jacobian Matrix Computation (Vector Function Case)

For the full vector function ( f \mathbf{f} f ), the Jacobian matrix is:

D f ( x ) = [ ∂ f 1 ∂ x 1 ∂ f 1 ∂ x 2 ∂ f 2 ∂ x 1 ∂ f 2 ∂ x 2 ] = [ 2 x 1 1 x 2 x 1 ] . Df(x) = \begin{bmatrix} \frac{\partial f_1}{\partial x_1} & \frac{\partial f_1}{\partial x_2} \\ \frac{\partial f_2}{\partial x_1} & \frac{\partial f_2}{\partial x_2} \end{bmatrix} = \begin{bmatrix} 2x_1 & 1 \\ x_2 & x_1 \end{bmatrix}. Df(x)=[∂x1∂f1∂x1∂f2∂x2∂f1∂x2∂f2]=[2x1x21x1].

3.3 Python Implementation

The following Python code demonstrates how to compute the gradient and Jacobian matrix numerically:

import numpy as np# Define the function

def f(x):return np.array([x[0]**2 + x[1], x[0] * x[1]])# Define the Jacobian matrix

def jacobian_f(x):return np.array([[2 * x[0], 1],[x[1], x[0]]])# Input point

x = np.array([1.0, 2.0])# Compute the gradient of f1

gradient_f1 = np.array([2 * x[0], 1]) # Gradient of the first output component# Compute the Jacobian matrix

jacobian = jacobian_f(x)print("Gradient of f1:", gradient_f1)

print("Jacobian matrix of f:", jacobian)

Output:

Gradient of f1: [2. 1.]

Jacobian matrix of f:

[[2. 1.][2. 1.]]

4. Applications in Machine Learning and Deep Learning

4.1 Gradient Applications

In deep learning, the gradient is critical for backpropagation. When the loss function is a scalar, its gradient indicates how to adjust the parameters to minimize the loss. For example:

- For a neural network with parameters ( θ \theta θ ), the loss function ( L ( θ ) L(\theta) L(θ) ) has a gradient ( ∇ L ( θ ) \nabla L(\theta) ∇L(θ) ), which is used by optimizers (e.g., SGD, Adam) to update the parameters.

4.2 Jacobian Matrix Applications

-

Multi-Output Models

The Jacobian matrix is essential for multi-task learning or models with multiple outputs (e.g., transformers where the output is a sequence). It describes how each input affects all outputs. -

Adversarial Examples

In adversarial attacks, the Jacobian matrix helps compute how small perturbations in input affect multiple outputs simultaneously. -

Hessian-Free Methods

In second-order optimization methods (e.g., Newton’s method), the Jacobian matrix is used to compute the Hessian matrix, which is crucial for convergence. -

Large Model Fine-Tuning

For large language models, the Jacobian matrix is used to analyze how sensitive a model is to input perturbations, guiding techniques like gradient clipping or parameter-efficient fine-tuning (PEFT).

5. Summary

- The gradient is a vector describing the rate of change of a scalar function, while the Jacobian matrix is a matrix describing the rate of change of a vector function.

- The gradient is a special case of the Jacobian matrix (when there is only one output dimension).

- In machine learning, gradients are essential for optimization, whereas Jacobian matrices are widely used in multi-output models, adversarial training, and fine-tuning large models.

Through numerical simulations and real-world applications, understanding the gradient and Jacobian matrix can significantly enhance your knowledge of optimization, deep learning, and large-scale model analysis.

后记

2024年12月19日15点30分于上海,在GPT4o大模型辅助下完成。