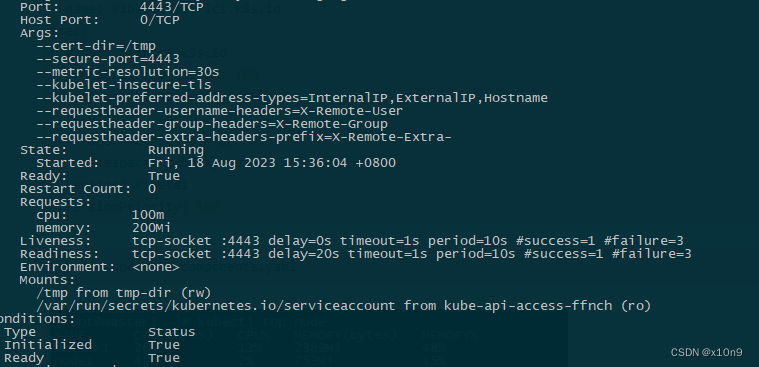

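There was only one problem: the original httpGet liveness and readiness probes never passed, so I switched them to tcpSocket, after which the pod ran normally.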

wget https://github.com/kubernetes-sigs/metrics-server/releases/latest/download/components.yaml

The modified YAML file, with the image switched to the Alibaba Cloud registry:

    apiVersion: v1
    kind: ServiceAccount
    metadata:
      labels:
        k8s-app: metrics-server
      name: metrics-server
      namespace: kube-system
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      labels:
        k8s-app: metrics-server
        rbac.authorization.k8s.io/aggregate-to-admin: "true"
        rbac.authorization.k8s.io/aggregate-to-edit: "true"
        rbac.authorization.k8s.io/aggregate-to-view: "true"
      name: system:aggregated-metrics-reader
    rules:
    - apiGroups:
      - metrics.k8s.io
      resources:
      - pods
      - nodes
      verbs:
      - get
      - list
      - watch
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      labels:
        k8s-app: metrics-server
      name: system:metrics-server
    rules:
    - apiGroups:
      - ""
      resources:
      - nodes/metrics
      verbs:
      - get
    - apiGroups:
      - ""
      resources:
      - pods
      - nodes
      verbs:
      - get
      - list
      - watch
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: RoleBinding
    metadata:
      labels:
        k8s-app: metrics-server
      name: metrics-server-auth-reader
      namespace: kube-system
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: Role
      name: extension-apiserver-authentication-reader
    subjects:
    - kind: ServiceAccount
      name: metrics-server
      namespace: kube-system
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      labels:
        k8s-app: metrics-server
      name: metrics-server:system:auth-delegator
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: system:auth-delegator
    subjects:
    - kind: ServiceAccount
      name: metrics-server
      namespace: kube-system
    ---
    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRoleBinding
    metadata:
      labels:
        k8s-app: metrics-server
      name: system:metrics-server
    roleRef:
      apiGroup: rbac.authorization.k8s.io
      kind: ClusterRole
      name: system:metrics-server
    subjects:
    - kind: ServiceAccount
      name: metrics-server
      namespace: kube-system
    ---
    apiVersion: v1
    kind: Service
    metadata:
      labels:
        k8s-app: metrics-server
      name: metrics-server
      namespace: kube-system
    spec:
      ports:
      - name: https
        port: 443
        protocol: TCP
        targetPort: https
      selector:
        k8s-app: metrics-server
    ---
    apiVersion: apps/v1
    kind: Deployment
    metadata:
      labels:
        k8s-app: metrics-server
      name: metrics-server
      namespace: kube-system
    spec:
      selector:
        matchLabels:
          k8s-app: metrics-server
      strategy:
        rollingUpdate:
          maxUnavailable: 0
      template:
        metadata:
          labels:
            k8s-app: metrics-server
        spec:
          containers:
          - args:
            # - --cert-dir=/tmp
            # - --secure-port=4443
            # - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
            # - --kubelet-use-node-status-port
            # - --metric-resolution=15s
            - --cert-dir=/tmp
            - --secure-port=4443
            - --metric-resolution=30s
            - --kubelet-insecure-tls
            - --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname
            - --requestheader-username-headers=X-Remote-User
            - --requestheader-group-headers=X-Remote-Group
            - --requestheader-extra-headers-prefix=X-Remote-Extra-
            image: registry.aliyuncs.com/google_containers/metrics-server:v0.6.4
            imagePullPolicy: IfNotPresent
            livenessProbe:
              failureThreshold: 3
              tcpSocket:
                port: 4443
              periodSeconds: 10
            name: metrics-server
            ports:
            - containerPort: 4443
              name: https
              protocol: TCP
            readinessProbe:
              failureThreshold: 3
              tcpSocket:
                port: 4443
              initialDelaySeconds: 20
              periodSeconds: 10
            resources:
              requests:
                cpu: 100m
                memory: 200Mi
            securityContext:
              allowPrivilegeEscalation: false
              readOnlyRootFilesystem: true
              runAsNonRoot: true
              runAsUser: 1000
            volumeMounts:
            - mountPath: /tmp
              name: tmp-dir
          nodeSelector:
            kubernetes.io/os: linux
          priorityClassName: system-cluster-critical
          serviceAccountName: metrics-server
          volumes:
          - emptyDir: {}
            name: tmp-dir
    ---
    apiVersion: apiregistration.k8s.io/v1
    kind: APIService
    metadata:
      labels:
        k8s-app: metrics-server
      name: v1beta1.metrics.k8s.io
    spec:
      group: metrics.k8s.io
      groupPriorityMinimum: 100
      insecureSkipTLSVerify: true
      service:
        name: metrics-server
        namespace: kube-system
      version: v1beta1
      versionPriority: 100

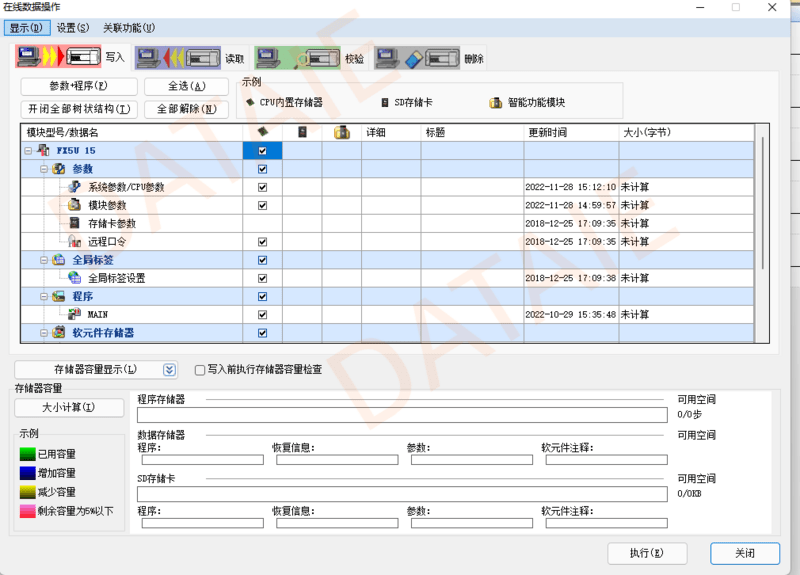

kubectl apply -f components.yaml
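
Once the manifest is applied, it helps to confirm that the tcpSocket probes pass and the metrics API is being served. The following is a minimal sketch rather than part of the original steps; the function name `check_metrics_server` and the 120s timeout are illustrative assumptions, while the resource names come from the manifest above.

```shell
# Sketch: confirm metrics-server is healthy after `kubectl apply -f components.yaml`.
check_metrics_server() {
  # Wait for the Deployment to finish rolling out (timeout value is arbitrary).
  kubectl -n kube-system rollout status deployment/metrics-server --timeout=120s || return 1
  # The aggregated API registered by the APIService should be listed as available.
  kubectl get apiservice v1beta1.metrics.k8s.io || return 1
  # Finally, request real node metrics through the metrics API.
  kubectl top nodes
}
```

Call `check_metrics_server` from a shell that has access to the cluster; a non-zero exit status means one of the three checks failed.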

Deploying kube-prometheus

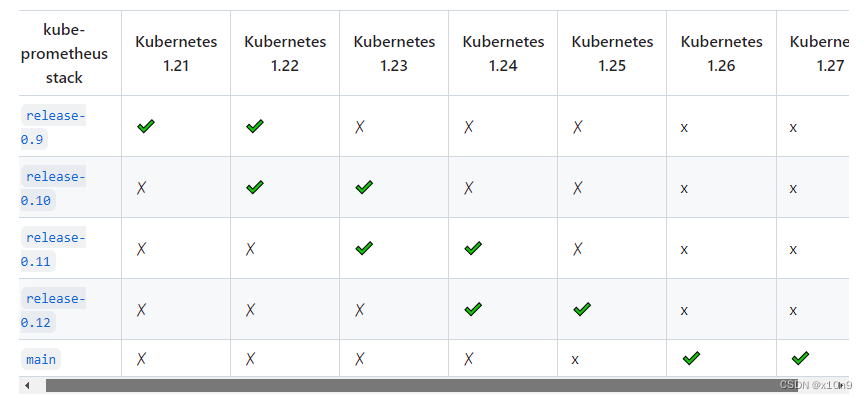

The branch compatible with Kubernetes 1.27 is main.

Clone only the main branch:

git clone --single-branch --branch main https://github.com/prometheus-operator/kube-prometheus.git

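cd kube-prometheus

Running kubectl apply -f manifests/setup/ on its own fails with a "metadata annotations too long" error:

Error from server (Invalid): error when creating "manifests/setup/0prometheusCustomResourceDefinition.yaml": CustomResourceDefinition.apiextensions.k8s.io "prometheuses.monitoring.coreos.com" is invalid: metadata.annotations: Too long: must have at most 262144 bytes

Use the following sequence instead. The second command uses server-side apply, which does not store the large last-applied-configuration annotation on the object:

1. kubectl apply -f manifests/setup/

2. kubectl apply --server-side -f manifests/setup

Once the CRDs have been created successfully, run: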

kubectl apply -f manifests
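
The sequence above can also be wrapped in a small function that waits for the CRDs to be established before applying the rest. This is a sketch under assumptions: the function name `install_kube_prometheus` is made up for illustration, and the `kubectl wait` step is an addition that the steps above do not include.

```shell
# Sketch: server-side apply of the CRDs, wait until they are established,
# then apply the remaining manifests. $1 is the manifests directory.
install_kube_prometheus() {
  local dir="${1:-manifests}"
  # Server-side apply avoids the oversized last-applied-configuration annotation.
  kubectl apply --server-side -f "$dir/setup" || return 1
  # Block until every CRD reports the Established condition.
  kubectl wait --for condition=Established --all CustomResourceDefinition --namespace=monitoring || return 1
  kubectl apply -f "$dir/"
}
```

Run it from inside the cloned repository as `install_kube_prometheus manifests`.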

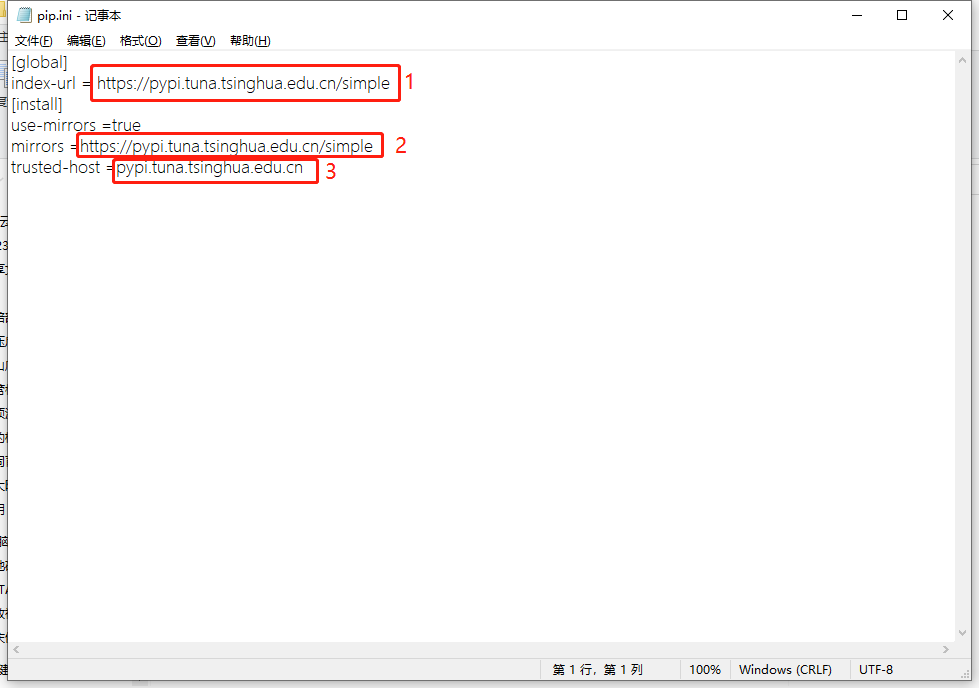

To pull the images, use the method from this project: https://github.com/DaoCloud/public-image-mirror, which is to prepend m.daocloud.io to the image name.
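
To make the prefix rule concrete, here is a small sketch of a helper that rewrites an image reference. The function name `mirror_image` is hypothetical, and the handling of short names (adding `docker.io/` or `docker.io/library/`) is an assumption based on how Docker Hub references are normally expanded, not something taken from the steps above.

```shell
# Sketch: print the m.daocloud.io mirror reference for an image.
mirror_image() {
  local ref="$1"
  local first="${ref%%/*}"   # text before the first slash
  case "$ref" in
    */*)
      case "$first" in
        *.*|*:*|localhost) ;;          # first component is already a registry host
        *) ref="docker.io/$ref" ;;     # e.g. grafana/grafana:9.5.3
      esac
      ;;
    *) ref="docker.io/library/$ref" ;; # e.g. busybox:1.36
  esac
  printf 'm.daocloud.io/%s\n' "$ref"
}
```

For example, `mirror_image quay.io/prometheus/prometheus:v2.46.0` prints `m.daocloud.io/quay.io/prometheus/prometheus:v2.46.0`, which can then be substituted into the `image:` field of the manifest.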

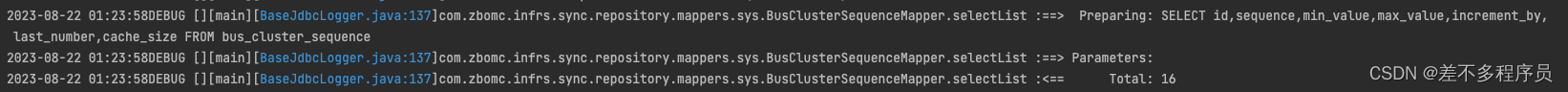

The prometheus-k8s-0 pod reports insufficient permissions:

ts=2023-08-21T03:14:34.582Z caller=klog.go:116 level=error component=k8s_client_runtime func=ErrorDepth msg="pkg/mod/k8s.io/client-go@v0.27.3/tools/cache/reflector.go:231: Failed to watch *v1.Pod: failed to list *v1.Pod: pods is forbidden: User \"system:serviceaccount:monitoring:prometheus-k8s\" cannot list resource \"pods\" in API group \"\" at the cluster scope"

Fix:

Modify prometheus-clusterRole.yaml:

    apiVersion: rbac.authorization.k8s.io/v1
    kind: ClusterRole
    metadata:
      labels:
        app.kubernetes.io/component: prometheus
        app.kubernetes.io/name: prometheus
        app.kubernetes.io/part-of: kube-prometheus
        app.kubernetes.io/version: 2.26.0
      name: prometheus-k8s
    rules:
    - apiGroups:
      - ""
      resources:
      - nodes/metrics
      - services
      - endpoints
      - pods
      verbs:
      - get
      - list
      - watch
    - nonResourceURLs:
      - /metrics
      verbs:
      - get
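
After re-applying the ClusterRole, the permission can be checked without waiting for the next error in the pod log. The sketch below impersonates the service account named in the error message; the function name `can_prometheus_list` is an illustrative assumption.

```shell
# Sketch: ask the API server whether the prometheus-k8s service account
# may list a resource across all namespaces. Prints "yes" or "no".
can_prometheus_list() {
  local resource="$1"
  kubectl auth can-i list "$resource" --all-namespaces \
    --as=system:serviceaccount:monitoring:prometheus-k8s
}
```

`can_prometheus_list pods` should answer `yes` once the updated ClusterRole is in place; the same check works for `services` and `endpoints`.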

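Fixing pod IPs in the monitoring namespace that cannot be pinged across nodes, which leaves Prometheus targets in an abnormal state:

Delete the NetworkPolicy manifests under kube-prometheus/manifests: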

alertmanager-networkPolicy.yaml

blackboxExporter-networkPolicy.yaml

grafana-networkPolicy.yaml

kubeStateMetrics-networkPolicy.yaml

nodeExporter-networkPolicy.yaml

prometheusAdapter-networkPolicy.yaml

prometheus-networkPolicy.yaml

prometheusOperator-networkPolicy.yaml
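
If the manifests were already applied, deleting the files alone leaves the NetworkPolicy objects in the cluster. The following sketch removes both; the function name `remove_network_policies` is hypothetical, and it assumes every file to delete matches the `*-networkPolicy.yaml` pattern seen in the list above.

```shell
# Sketch: delete each NetworkPolicy from the cluster, then remove its manifest
# so that a later `kubectl apply -f manifests` does not recreate it.
remove_network_policies() {
  local dir="${1:-kube-prometheus/manifests}"
  local f
  for f in "$dir"/*-networkPolicy.yaml; do
    [ -e "$f" ] || continue   # the glob matched nothing
    # Only remove the file if the cluster-side delete succeeded.
    kubectl delete --ignore-not-found -f "$f" && rm -f "$f"
  done
}
```

Note that this opens all traffic to and from the monitoring pods; keeping the policies and adjusting them would be the stricter alternative.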

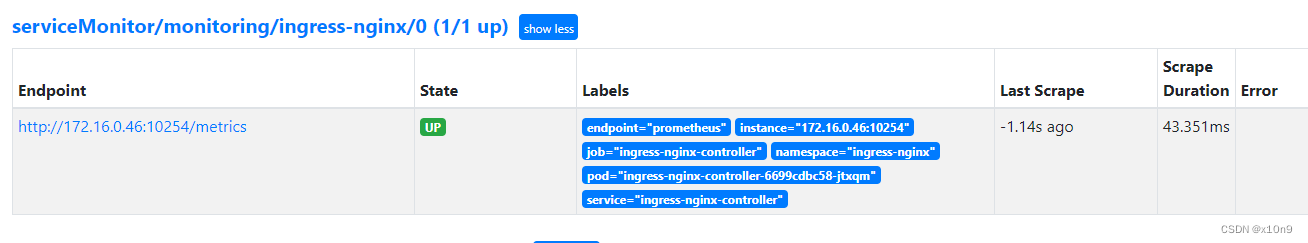

Adding monitoring with a ServiceMonitor:

Using ingress-nginx as an example.

Modify the Service in ingress-nginx.yaml:

    apiVersion: v1
    kind: Service
    metadata:
      annotations:
        prometheus.io/port: '10254'   # added
        prometheus.io/scrape: 'true'  # added
      labels:
        app.kubernetes.io/component: controller
        app.kubernetes.io/instance: ingress-nginx
        app.kubernetes.io/name: ingress-nginx
        app.kubernetes.io/part-of: ingress-nginx
        app.kubernetes.io/version: 1.8.1
      name: ingress-nginx-controller
      namespace: ingress-nginx
    spec:
      externalTrafficPolicy: Local
      ipFamilies:
      - IPv4
      ipFamilyPolicy: SingleStack
      ports:
      - appProtocol: http
        name: http
        port: 80
        protocol: TCP
        targetPort: http
      - appProtocol: https
        name: https
        port: 443
        protocol: TCP
        targetPort: https
      - appProtocol: http   # added
        name: prometheus    # added
        port: 10254         # added
        protocol: TCP       # added
        targetPort: 10254   # added
      selector:
        app.kubernetes.io/component: controller
        app.kubernetes.io/instance: ingress-nginx
        app.kubernetes.io/name: ingress-nginx
      type: LoadBalancer

ingress-nginx-monitor.yaml:

    apiVersion: monitoring.coreos.com/v1
    kind: ServiceMonitor
    metadata:
      name: ingress-nginx    # name of the ServiceMonitor
      namespace: monitoring  # namespace the ServiceMonitor lives in
    spec:
      endpoints:             # scrape endpoints; an array that may hold several entries, each with interval, path, and port
      - interval: 15s        # how often Prometheus scrapes
        path: /metrics       # path Prometheus scrapes
        port: prometheus     # name of the Service port; the Service is chosen by spec.selector and this port name is matched within it
      namespaceSelector:     # namespaces in which Services are discovered
        any: true            # when true, matching Services are discovered in all namespaces
      selector:
        matchLabels:         # labels used to select the Service
          app.kubernetes.io/component: controller
          app.kubernetes.io/instance: ingress-nginx
          app.kubernetes.io/name: ingress-nginx
          app.kubernetes.io/part-of: ingress-nginx
          app.kubernetes.io/version: 1.8.1

kubectl apply -f ingress-nginx-monitor.yaml

Confirm that the ingress-nginx ServiceMonitor has been added:

kubectl -n monitoring get servicemonitors.monitoring.coreos.com

    NAME                      AGE
    alertmanager-main         70m
    blackbox-exporter         70m
    coredns                   70m
    grafana                   70m
    ingress-nginx             24m
    kube-apiserver            70m
    kube-controller-manager   70m
    kube-scheduler            70m
    kube-state-metrics        70m
    kubelet                   70m
    node-exporter             70m
    prometheus-adapter        70m
    prometheus-k8s            70m
    prometheus-operator       70m

Monitoring an external etcd:

Add the following to the etcd configuration file: ETCD_LISTEN_METRICS_URLS="http://0.0.0.0:2381"
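
Before wiring the endpoint into Prometheus, it is worth checking from a cluster node that etcd actually serves metrics on the new port. A minimal sketch, assuming etcd has been restarted with the setting above; the function name `etcd_has_leader` is illustrative, and the default URL is the target address used later in etcd-job.yaml.

```shell
# Sketch: succeed if the etcd metrics endpoint is reachable and the member
# reports that it has a leader (etcd_server_has_leader is a standard etcd gauge).
etcd_has_leader() {
  local url="${1:-http://172.16.0.157:2381}"
  curl -fsS "$url/metrics" | grep -q '^etcd_server_has_leader 1$'
}
```

Usage: `etcd_has_leader && echo "etcd metrics OK"`. A failure means either the port is not reachable or the member has no leader.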

etcd-job.yaml:

    - job_name: "etcd-server"
      scrape_interval: 30s
      scrape_timeout: 10s
      static_configs:
      - targets: ["172.16.0.157:2381"]
        labels:
          instance: etcdserver

Create the secret:

kubectl create secret generic etcd-secret --from-file=etcd-job.yaml -n monitoring

Append the following configuration to the end of kube-prometheus/manifests/prometheus-prometheus.yaml (the lines marked "added"):

apiVersion: monitoring.coreos.com/v1

kind: Prometheus

metadata:labels:app.kubernetes.io/component: prometheusapp.kubernetes.io/instance: k8sapp.kubernetes.io/name: prometheusapp.kubernetes.io/part-of: kube-prometheusapp.kubernetes.io/version: 2.46.0name: k8snamespace: monitoring

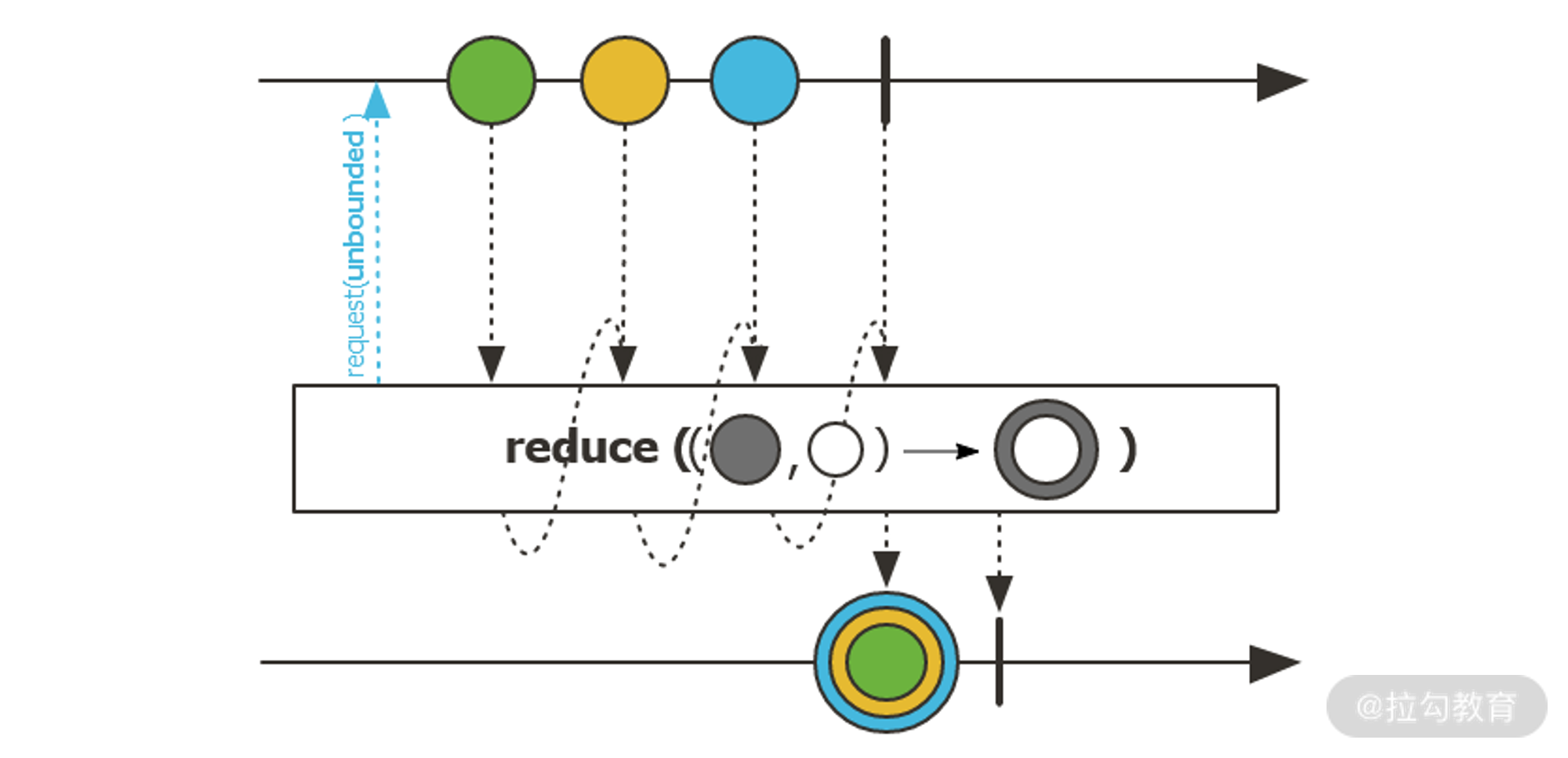

spec:alerting:alertmanagers:- apiVersion: v2name: alertmanager-mainnamespace: monitoringport: webenableFeatures: []externalLabels: {}image: quay.io/prometheus/prometheus:v2.46.0nodeSelector:kubernetes.io/os: linuxpodMetadata:labels:app.kubernetes.io/component: prometheusapp.kubernetes.io/instance: k8sapp.kubernetes.io/name: prometheusapp.kubernetes.io/part-of: kube-prometheusapp.kubernetes.io/version: 2.46.0podMonitorNamespaceSelector: {}podMonitorSelector: {}probeNamespaceSelector: {}probeSelector: {}replicas: 2resources:requests:memory: 400MiruleNamespaceSelector: {}ruleSelector: {}securityContext:fsGroup: 2000runAsNonRoot: truerunAsUser: 1000serviceAccountName: prometheus-k8sserviceMonitorNamespaceSelector: {}serviceMonitorSelector: {}version: 2.46.0additionalScrapeConfigs: #新增name: etcd-secret #新增key: etcd-job.yaml #新增

kubectl apply -f kube-prometheus/manifests/prometheus-prometheus.yaml

Import dashboard template 3070 into Grafana.

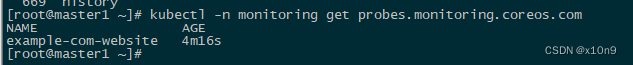

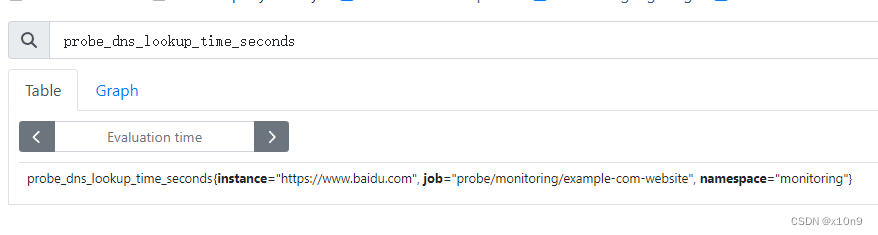

HTTP liveness check:

    kind: Probe
    apiVersion: monitoring.coreos.com/v1
    metadata:
      name: example-com-website
      namespace: monitoring
    spec:
      interval: 60s
      module: http_2xx
      prober:
        url: blackbox-exporter.monitoring.svc.cluster.local:19115
      targets:
        staticConfig:
          static:
          - https://www.baidu.com
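
The Probe resource makes Prometheus call the blackbox exporter's /probe endpoint with the module and target shown above. The same request can be issued by hand to debug a failing probe. This is a sketch under assumptions: the function name `probe_ok` is made up, and it presumes the exporter is reachable at the base URL you pass, for instance through a port-forward to the blackbox-exporter Service on port 19115.

```shell
# Sketch: run one http_2xx probe through the blackbox exporter and succeed
# only if the exporter reports probe_success 1.
# $1 = exporter base URL, $2 = target URL to probe.
probe_ok() {
  local base="$1" target="$2"
  curl -fsS -G "$base/probe" \
    --data-urlencode "module=http_2xx" \
    --data-urlencode "target=$target" | grep -q '^probe_success 1$'
}
```

For example, with `kubectl -n monitoring port-forward svc/blackbox-exporter 19115:19115` running in another terminal, `probe_ok http://127.0.0.1:19115 https://www.baidu.com` mirrors what the Probe above does every 60s.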

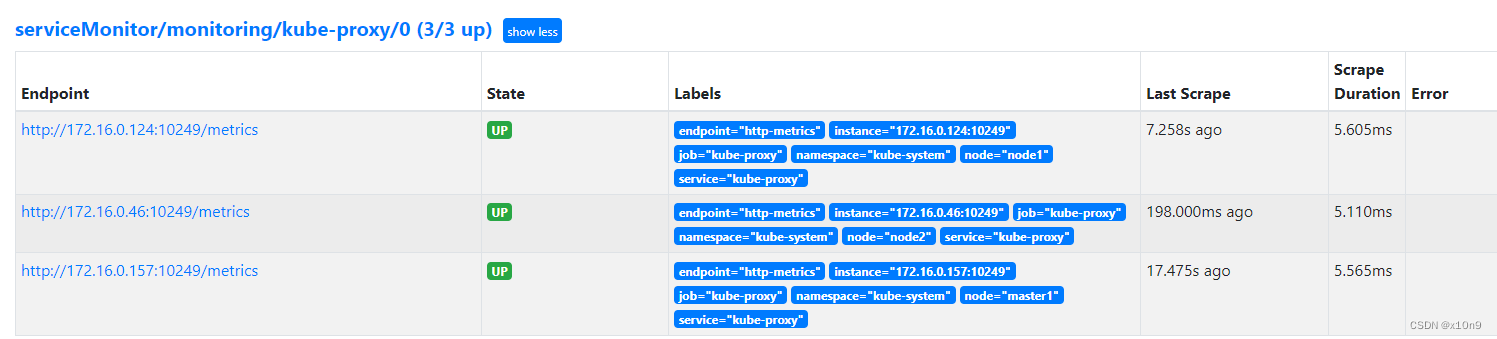

Add kube-proxy monitoring; its metrics endpoint uses plain HTTP by default:

    ---
    apiVersion: monitoring.coreos.com/v1
    kind: ServiceMonitor
    metadata:
      labels:
        app.kubernetes.io/name: kube-proxy
        app.kubernetes.io/part-of: kube-prometheus
      name: kube-proxy
      namespace: monitoring
    spec:
      endpoints:
      - bearerTokenFile: /var/run/secrets/kubernetes.io/serviceaccount/token
        interval: 30s
        port: http-metrics
        scheme: http
        tlsConfig:
          insecureSkipVerify: true
      jobLabel: app.kubernetes.io/name
      namespaceSelector:
        matchNames:
        - kube-system
      selector:
        matchLabels:
          app.kubernetes.io/name: kube-proxy
    ---
    apiVersion: v1
    kind: Endpoints
    metadata:
      name: kube-proxy
      namespace: kube-system
      labels:
        app.kubernetes.io/name: kube-proxy
    subsets:
    - addresses:
      - ip: 172.16.0.157
        targetRef:
          kind: Node
          name: master1
      - ip: 172.16.0.124
        targetRef:
          kind: Node
          name: node1
      - ip: 172.16.0.46
        targetRef:
          kind: Node
          name: node2
      ports:
      - name: http-metrics
        port: 10249
        protocol: TCP
    ---
    apiVersion: v1
    kind: Service
    metadata:
      name: kube-proxy
      namespace: kube-system
      labels:
        app.kubernetes.io/name: kube-proxy
    spec:
      type: ClusterIP
      clusterIP: None
      ports:
      - name: http-metrics
        port: 10249
        targetPort: 10249
        protocol: TCP
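
Because the Endpoints object lists the node IPs by hand, a quick reachability check of port 10249 on each node can save a round of debugging in the Prometheus targets page. The sketch below is illustrative only; the function name `check_kube_proxy_metrics` and the 5 second timeout are assumptions.

```shell
# Sketch: report, for each node IP given, whether kube-proxy metrics answer on :10249.
# Returns non-zero if any node is unreachable.
check_kube_proxy_metrics() {
  local ip rc=0
  for ip in "$@"; do
    if curl -fsS --max-time 5 "http://$ip:10249/metrics" >/dev/null 2>&1; then
      echo "$ip ok"
    else
      echo "$ip unreachable"
      rc=1
    fi
  done
  return "$rc"
}
```

Run it as `check_kube_proxy_metrics 172.16.0.157 172.16.0.124 172.16.0.46`. If a node shows as unreachable, one thing worth checking is kube-proxy's metricsBindAddress setting, which defaults to 127.0.0.1:10249 and would then need to listen on an address Prometheus can reach.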