目录

一. CNN卷积神经网络与传统神经网络的不同

1. 模型图

2. 参数分布情况

3. 卷积神经网络和传统神经网络的层次结构

4. 传统神经网络的缺点:

二. CNN的基本操作

1. 卷积

2. 池化

三. CNN实现过程

1. 算法流程图

2. 输入层

3. 卷积层

4. 激活层

5. 池化层

6. 全连接层

二. CNN卷积神经网络的过程

1. 正向传播过程

2. 反向传播过程(算法核心)

三. 卷积神经网络代码

1. CnnLayer类

2. Dataset类

3. FullCnn类

4. LayerBuilder类

5. LayerTypeEnum类

6. MathUtils类

7. Size类

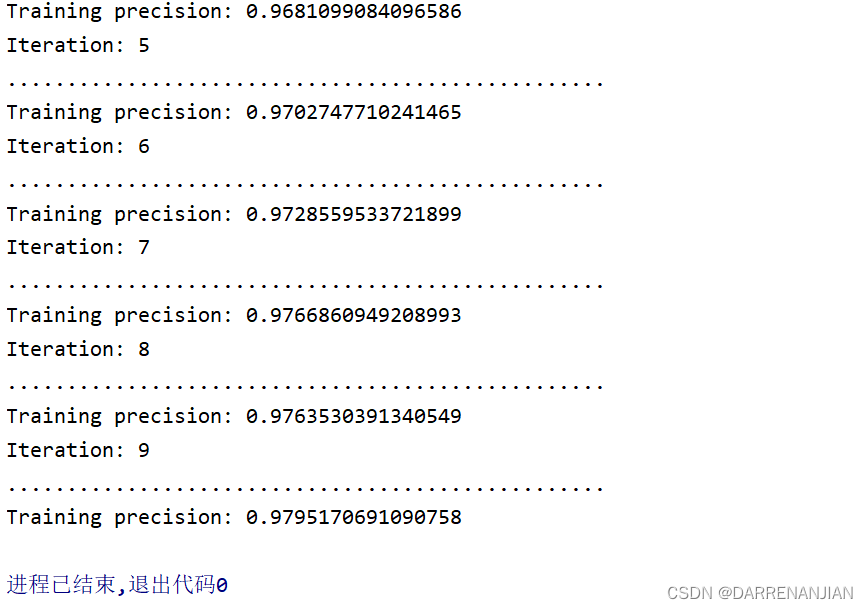

四. 运行结果

I. How CNNs Differ from Traditional Neural Networks

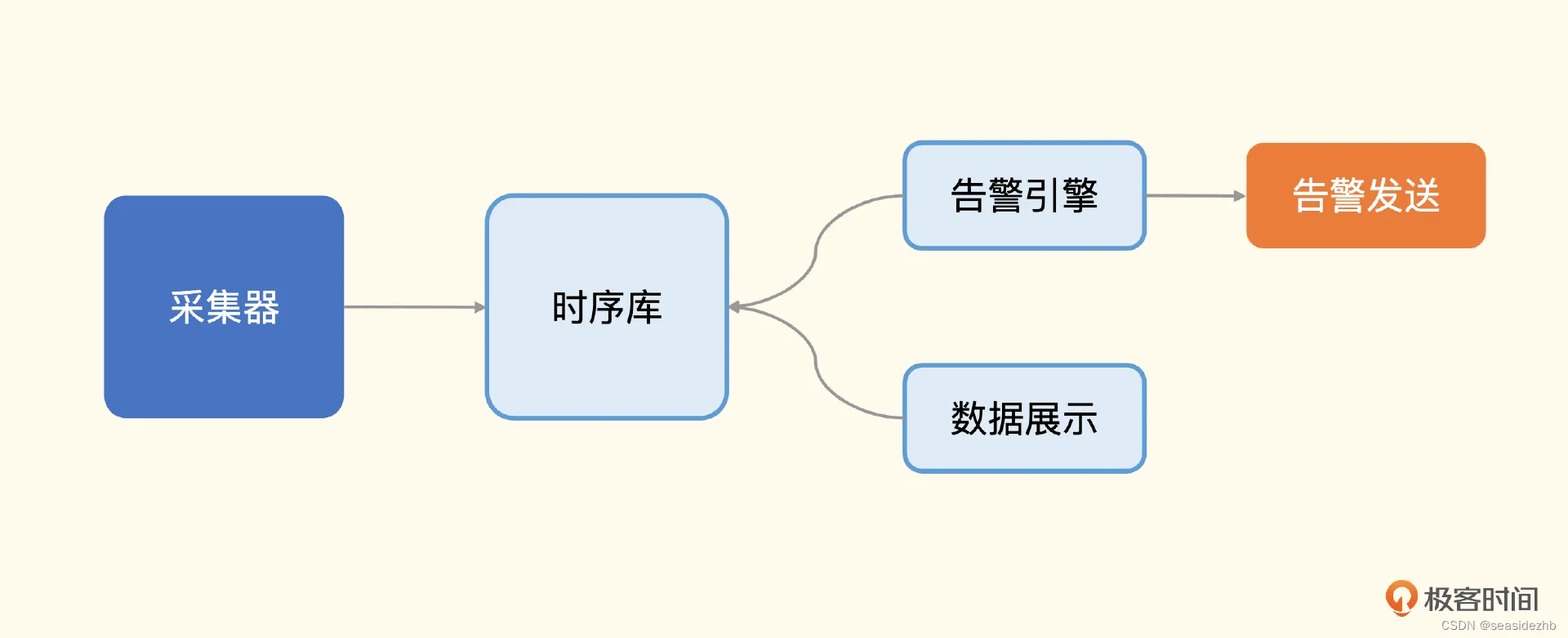

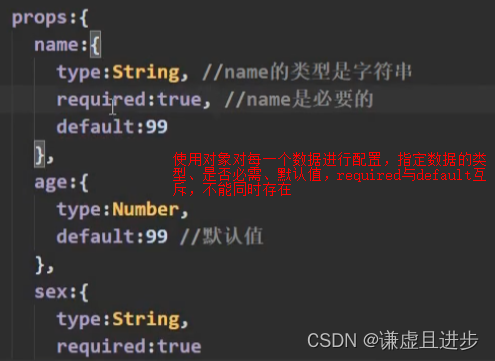

1. Model diagrams

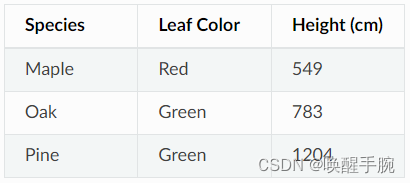

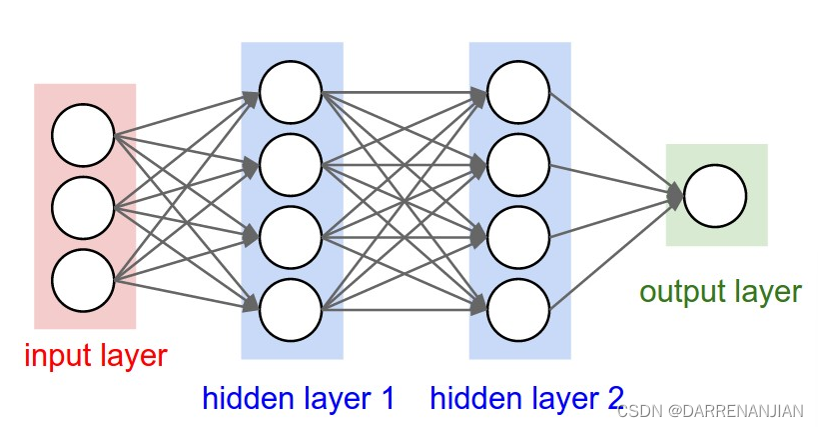

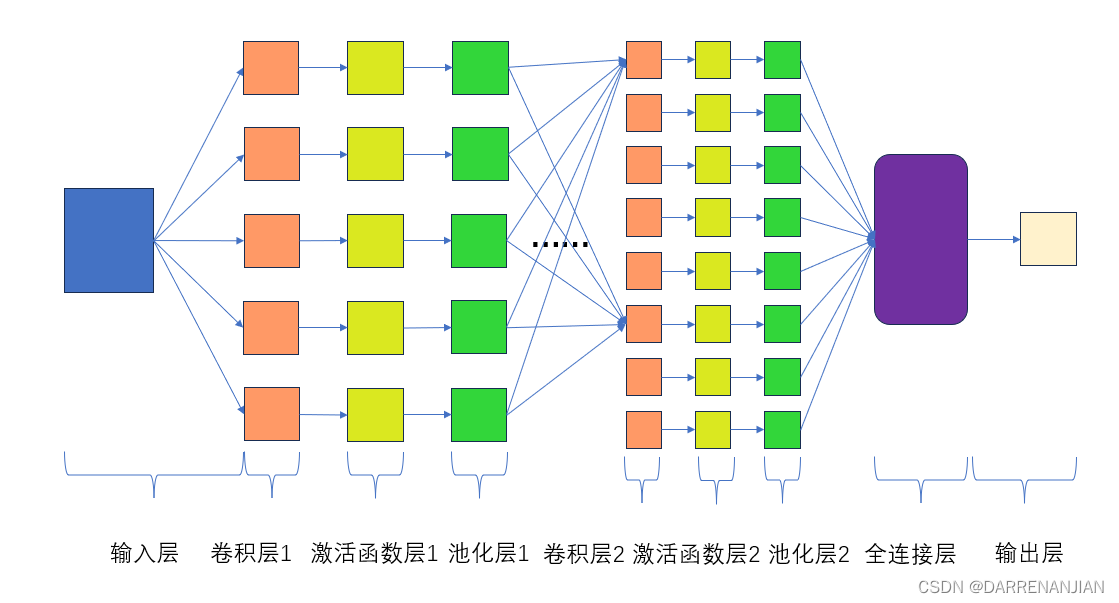

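The figures in this section sketch the rough structure of a traditional neural network and of a convolutional neural network.

2. Parameter distribution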

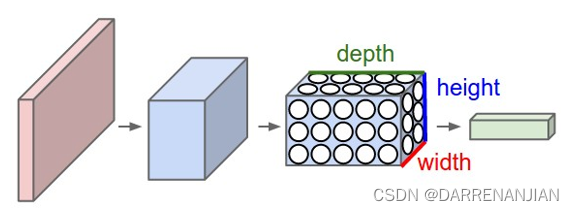

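We start from how the data is laid out for the matrix operations. A traditional neural network processes one-dimensional data: its input is a single vector. A convolutional neural network (CNN) processes multi-dimensional data: its input is an image with a depth, a height, and a width.

In a CNN, each convolutional layer consists of several feature maps, each feature map consists of many neurons, and the convolution kernels hold the weights w. In a traditional neural network, each layer is a single column of neurons; each neuron corresponds to one column vector Xi of the input matrix, and the connections between neurons carry the weights W. Every pixel value is a neuron, and every neuron represents one feature.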

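3. Layer structure of CNNs and traditional neural networks

Traditional neural network:

- Input layer: each neuron represents one feature

- Hidden layer: feature extraction

- Output layer: the number of output neurons equals the number of class labels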

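Convolutional neural network:

- Multi-channel input and multiple convolution kernels; the number of output channels equals the number of kernels. The layers are ordered input layer -> convolutional layer -> activation layer -> pooling layer -> fully connected layer.

- Input layer: preprocesses the raw data so that the network performs better.

- Convolutional layer: extracts features (both the size and the number of the matrices change); the kernel slides over every pixel of the image.

- Activation layer: passes each result through an activation function to introduce nonlinearity, which is what lets the network approximate nonlinear functions.

- Pooling layer: compresses the data, keeps the dominant features, and reduces network complexity. It leaves the depth unchanged and shrinks each matrix (the matrices get smaller, their number stays the same), which reduces the parameters needed by later layers.

- Fully connected layer: acts as the classifier and maps the features to the label space; in essence it is a matrix transformation. The feature maps are flattened into a one-dimensional vector and every feature point is treated as a neuron for the classification task. All local features are combined into global features, which are used to compute the final score of each class.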
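The fully connected step in the last bullet can be sketched in a few lines of Java. This is only a minimal illustration, not the implementation used later in this article: the class name `FullyConnectedDemo`, the helper names, and the tiny weights are assumptions chosen so the arithmetic is easy to follow.

```java
import java.util.Arrays;

/** Sketch of a fully connected layer; all names and sizes are illustrative assumptions. */
class FullyConnectedDemo {
    /** Flattens a stack of feature maps into one vector, map by map, row by row. */
    static double[] flatten(double[][][] maps) {
        int total = 0;
        for (double[][] map : maps) {
            for (double[] row : map) {
                total += row.length;
            }
        }
        double[] out = new double[total];
        int pos = 0;
        for (double[][] map : maps) {
            for (double[] row : map) {
                System.arraycopy(row, 0, out, pos, row.length);
                pos += row.length;
            }
        }
        return out;
    }

    /** One score per class: scores[c] = sum over k of weights[c][k] * x[k], plus bias[c]. */
    static double[] scores(double[] x, double[][] weights, double[] bias) {
        double[] out = new double[weights.length];
        for (int c = 0; c < weights.length; c++) {
            double sum = bias[c];
            for (int k = 0; k < x.length; k++) {
                sum += weights[c][k] * x[k];
            }
            out[c] = sum;
        }
        return out;
    }

    /** Index of the largest score, i.e. the predicted class. */
    static int argMax(double[] values) {
        int best = 0;
        for (int i = 1; i < values.length; i++) {
            if (values[i] > values[best]) {
                best = i;
            }
        }
        return best;
    }

    public static void main(String[] args) {
        double[][][] maps = {{{1, 2}}, {{3, 4}}};          // two 1x2 feature maps
        double[][] weights = {{1, 0, 0, 0}, {0, 0, 0, 1}}; // two classes, four inputs
        double[] bias = {0, 0.5};
        double[] s = scores(flatten(maps), weights, bias);
        System.out.println(Arrays.toString(s) + " -> class " + argMax(s));
    }
}
```

In a trained network the weights and biases would be learned; here they are hand-picked so that class 1 wins.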

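4. Drawbacks of traditional neural networks

First, flattening an image into a vector discards spatial information and breaks local correlations. Second, the large number of parameters makes the network inefficient and hard to train. Third, so many parameters quickly lead to overfitting.

By contrast, a convolutional neural network (CNN) is a deep learning model that handles high-dimensional data such as images effectively. Its defining feature is the use of convolutional and pooling layers to extract local image features and reduce dimensionality, which cuts both the parameter count and the amount of computation.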
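To make the difference in parameter count concrete, here is a rough back-of-the-envelope sketch. The 28×28 input and the six 5×5 kernels match the first convolution layer built in `FullCnn.main` later in this article; the hidden layer of 100 neurons is an assumption chosen only for comparison, and the class and method names are hypothetical.

```java
/** Compares trainable-parameter counts; the hidden-layer size is an illustrative assumption. */
class ParamCountDemo {
    /** Weights plus biases of a fully connected layer. */
    static long fullyConnectedParams(int inputs, int outputs) {
        return (long) inputs * outputs + outputs;
    }

    /** Weights plus biases of a convolutional layer (one bias per output map). */
    static long convParams(int inMaps, int outMaps, int kernelWidth, int kernelHeight) {
        return (long) inMaps * outMaps * kernelWidth * kernelHeight + outMaps;
    }

    public static void main(String[] args) {
        // A 28x28 grayscale image flattened to 784 inputs, fully connected to 100 neurons.
        System.out.println("Fully connected: " + fullyConnectedParams(28 * 28, 100));
        // One input map convolved with six 5x5 kernels.
        System.out.println("Convolution:     " + convParams(1, 6, 5, 5));
    }
}
```

Under these assumptions the fully connected layer needs 78,500 parameters while the convolutional layer needs 156, because each kernel is shared across every position of the image.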

II. Basic CNN Operations

1. Convolution

With CNNs and traditional neural networks introduced, we move on to a basic concept: convolution.

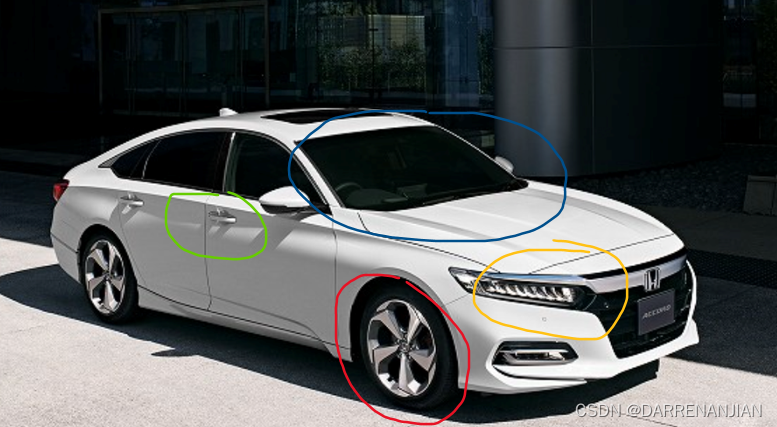

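When we humans recognize an ordinary picture, the first thing we do is work out what features it has. This step is crucial. Take the picture of a car shown here: how do we decide what it is?

We check whether it has wheels, headlights, a windshield, door handles, and so on. Once we have seen these, we can conclude that it is a car.

Looking back at this process, the key step is feature extraction, and convolution is one method for extracting features.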

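Let us start from the definition of convolution, which many people find strange when they first meet it. Given two functions $f$ and $g$, their convolution is defined as

$$(f * g)(t) = \int_{-\infty}^{\infty} f(\tau)\, g(t - \tau)\, d\tau$$

In other words, we take two functions $f$ and $g$ and convolve them into $f * g$, constructed as above. Physically, it can be read roughly as: the output of a system at a given moment is the combined (superimposed) effect of many inputs. In image analysis, $f$ can be understood as the source pixels; all the source pixels together make up the original image. $g$ can be called the acting points; all the acting points together form the convolution kernel. Each acting point of the kernel is applied to (multiplied with) the corresponding source pixel, and the linear superposition of these products is the output of the convolution, which is the result we want.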
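For sampled data the integral in the convolution definition becomes a sum, (f * g)[n] = Σ_k f[k]·g[n − k]. The following is a minimal Java sketch of that discrete form; the class name `Conv1dDemo` and the sample values are assumptions made for illustration.

```java
import java.util.Arrays;

/** Discrete 1D convolution; names and sample values are illustrative assumptions. */
class Conv1dDemo {
    /** Full discrete convolution: out[n] = sum over k of f[k] * g[n - k]. */
    static double[] convolve(double[] f, double[] g) {
        double[] out = new double[f.length + g.length - 1];
        for (int n = 0; n < out.length; n++) {
            for (int k = 0; k < f.length; k++) {
                int m = n - k; // index into g
                if (m >= 0 && m < g.length) {
                    out[n] += f[k] * g[m];
                }
            }
        }
        return out;
    }

    public static void main(String[] args) {
        double[] f = {1, 2, 3};   // "source" samples
        double[] g = {0, 1, 0.5}; // "kernel" samples
        System.out.println(Arrays.toString(convolve(f, g)));
        // prints [0.0, 1.0, 2.5, 4.0, 1.5]
    }
}
```

Each output sample is a weighted superposition of several input samples, which is the "many inputs acting together" reading given above.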

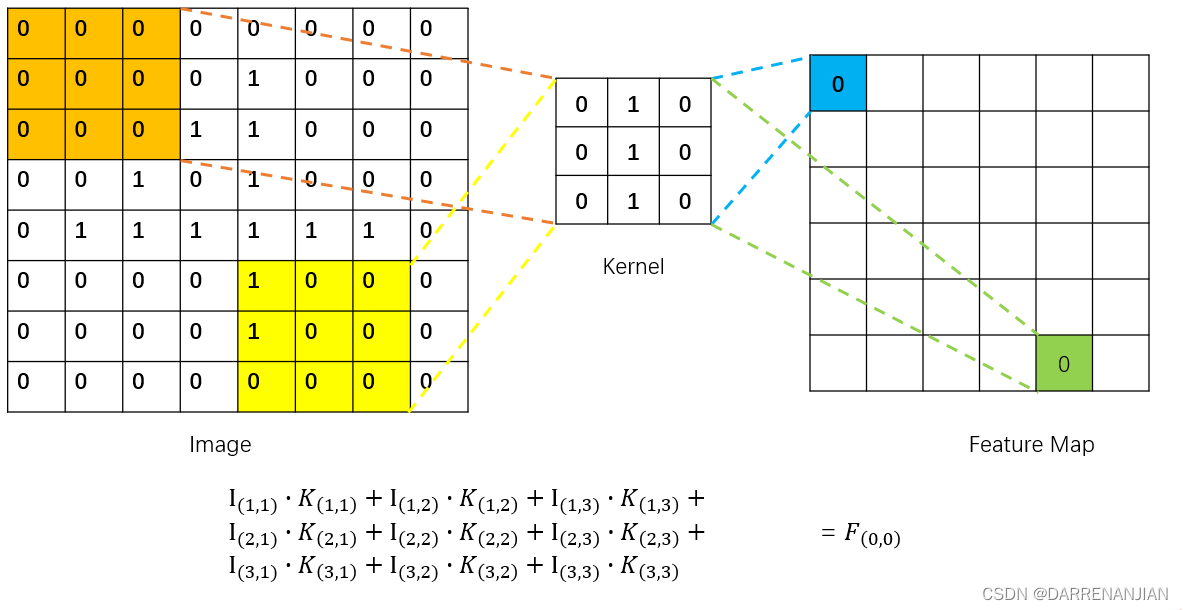

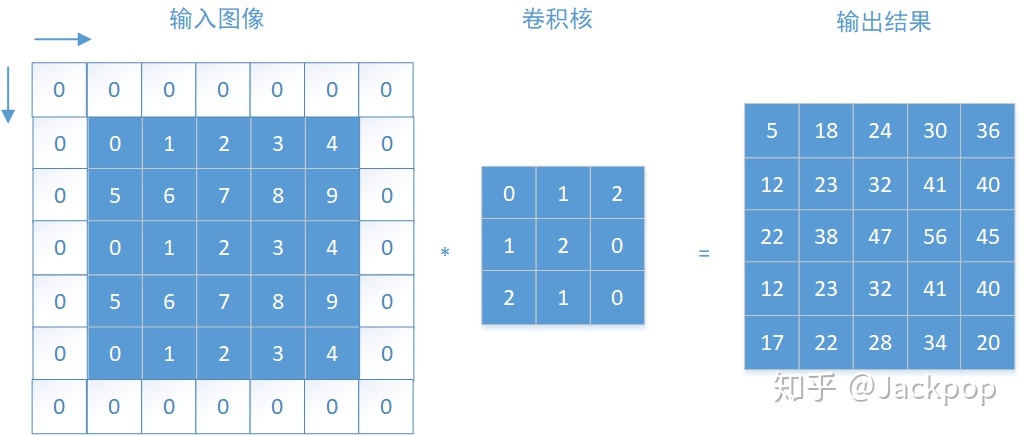

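Now let us look at convolution on images. Every photo is really a matrix of numbers. In a color photo each pixel takes values from a wider range rather than just 0 or 1; to keep things simple, we assume a black-and-white image whose pixels are 0 or 1. Suppose we have an image whose pixels look like this: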

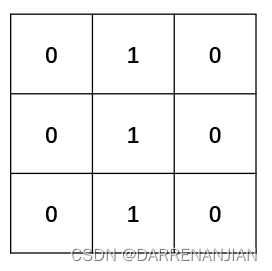

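We now need a feature extractor, also called a convolution kernel. It extracts from the image the features that the kernel responds to and assembles them into a new matrix, which carries those features of the input image. Suppose we have the kernel shown in the figure below:

The extraction works as follows: take a patch of image with the same size as kernel, multiply the entries at corresponding positions, and sum the products, that is,

$$\text{FeatureMap}(i, j) = \sum_{u}\sum_{v} \text{image}(i + u,\, j + v) \cdot \text{kernel}(u, v)$$

To make this process easier to follow, there is an animated GIF below.

When this process finishes we obtain a feature map, the result of extracting features from the original image with the kernel. This is only one feature map: we can define many kernels, each extracting a different feature of the image, and so obtain many feature maps. Together they capture the essence of the image and are used to recognize which features it contains.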
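The sliding-window computation described above can be sketched as follows. Because the original figures are not reproduced here, the 5×5 binary image and the 3×3 kernel in this example are assumptions, as are the class and method names. Like most CNN code, the sketch does not flip the kernel (strictly speaking it computes a cross-correlation); for the symmetric kernel used here the result is the same either way.

```java
import java.util.Arrays;

/** "Valid" 2D convolution as used in CNNs; image and kernel values are illustrative. */
class Conv2dDemo {
    /** Slides the kernel over the image with stride 1 and no padding. */
    static double[][] convValid(double[][] image, double[][] kernel) {
        int outRows = image.length - kernel.length + 1;
        int outCols = image[0].length - kernel[0].length + 1;
        double[][] out = new double[outRows][outCols];
        for (int i = 0; i < outRows; i++) {
            for (int j = 0; j < outCols; j++) {
                double sum = 0;
                for (int u = 0; u < kernel.length; u++) {
                    for (int v = 0; v < kernel[0].length; v++) {
                        // Multiply corresponding positions and accumulate.
                        sum += image[i + u][j + v] * kernel[u][v];
                    }
                }
                out[i][j] = sum;
            }
        }
        return out;
    }

    public static void main(String[] args) {
        double[][] image = {
            {1, 1, 1, 0, 0},
            {0, 1, 1, 1, 0},
            {0, 0, 1, 1, 1},
            {0, 0, 1, 1, 0},
            {0, 1, 1, 0, 0}
        };
        double[][] kernel = {
            {1, 0, 1},
            {0, 1, 0},
            {1, 0, 1}
        };
        System.out.println(Arrays.deepToString(convValid(image, kernel)));
        // prints [[4.0, 3.0, 4.0], [2.0, 4.0, 3.0], [2.0, 3.0, 4.0]]
    }
}
```

The 5×5 input shrinks to a 3×3 feature map, which illustrates why the output size changes after each convolution.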

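As the process above shows, every convolution changes the size of the data (the output is smaller than the input). How can we keep the output the same size? The answer is zero padding: extra pixels, all with value 0, are added around the border of the original image.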
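Zero padding itself is a small operation. The sketch below pads a matrix with a border of zeros; the class name `ZeroPadDemo` and the parameter `pad` (the number of zero rows and columns added on each side) are assumptions introduced for this example.

```java
import java.util.Arrays;

/** Zero padding around a 2D matrix; names are illustrative assumptions. */
class ZeroPadDemo {
    /** Returns a copy of the matrix surrounded by 'pad' rows and columns of zeros. */
    static double[][] zeroPad(double[][] matrix, int pad) {
        int rows = matrix.length;
        int cols = matrix[0].length;
        // Java initializes double arrays to 0.0, so only the interior must be copied.
        double[][] out = new double[rows + 2 * pad][cols + 2 * pad];
        for (int i = 0; i < rows; i++) {
            System.arraycopy(matrix[i], 0, out[i + pad], pad, cols);
        }
        return out;
    }

    public static void main(String[] args) {
        double[][] padded = zeroPad(new double[][] {{1, 2}, {3, 4}}, 1);
        for (double[] row : padded) {
            System.out.println(Arrays.toString(row));
        }
    }
}
```

With a 3×3 kernel and stride 1, padding one pixel on each side keeps the output the same size as the input.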

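Finally, the convolution process shows that the size of the feature map depends on the size of the input image and of the kernel. Suppose the image has size $W \times H$, the kernel has size $F \times F$, the stride is $S$, there are $K$ kernels in total, and $P$ columns of zeros are padded on each side. The output feature maps then have size:

$$\left(\left\lfloor \frac{W - F + 2P}{S} \right\rfloor + 1\right) \times \left(\left\lfloor \frac{H - F + 2P}{S} \right\rfloor + 1\right) \times K$$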

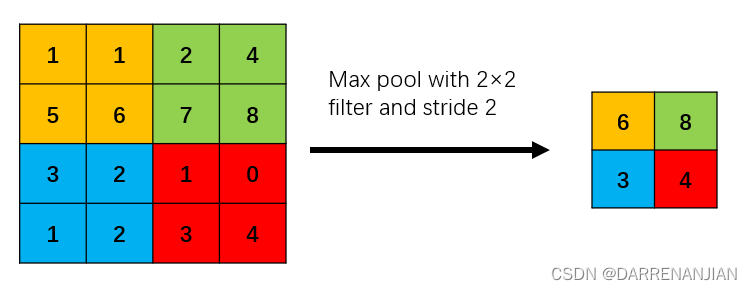
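The usual output-size rule for one spatial dimension, floor((input − kernel + 2·padding) / stride) + 1, is easy to check in code. This is a small sketch under that standard formula; the class, method, and parameter names are assumptions. The first call reproduces the 24 = 28 + 1 − 5 noted in the `FullCnn.main` comments later in this article.

```java
/** Output size of a convolution along one dimension; names are illustrative assumptions. */
class OutputSizeDemo {
    /** floor((input - kernel + 2 * padding) / stride) + 1, for non-negative operands. */
    static int outputSize(int input, int kernel, int stride, int padding) {
        return (input - kernel + 2 * padding) / stride + 1;
    }

    public static void main(String[] args) {
        System.out.println(outputSize(28, 5, 1, 0)); // 24: no padding, stride 1
        System.out.println(outputSize(5, 3, 1, 1));  // 5: padding 1 keeps the size
        System.out.println(outputSize(7, 3, 2, 0));  // 3: stride 2 roughly halves it
    }
}
```

The number of output maps is simply the number of kernels, so it does not appear in this per-dimension helper.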

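2. Pooling

Pooling usually follows convolution. In essence, pooling is sampling: it takes an input feature map and compresses it by some rule to reduce its dimensions and speed up computation. The most widely used variant is max pooling, which works as follows:

Pooling resembles convolution. As shown in the figure above, a 2×2 filter with stride 2 scans a 4×4 feature map, and the maximum value in each neighborhood is passed to the next layer. This is max pooling.

Another variant is average pooling, which replaces the maximum of each region with its average. It works as follows:

As shown in the figure above, a 2×2 filter with stride 2 scans a 4×4 feature map, and the average value of each neighborhood is passed to the next layer. This is average pooling.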
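Both pooling variants can be sketched with the same scanning loop. The 4×4 feature map below is made up for illustration (the original figures are not reproduced here), and the class and method names are assumptions.

```java
import java.util.Arrays;

/** Max and average pooling over square windows; the input values are illustrative. */
class PoolingDemo {
    /** Keeps the largest value in each size x size window. */
    static double[][] maxPool(double[][] map, int size, int stride) {
        int outRows = (map.length - size) / stride + 1;
        int outCols = (map[0].length - size) / stride + 1;
        double[][] out = new double[outRows][outCols];
        for (int i = 0; i < outRows; i++) {
            for (int j = 0; j < outCols; j++) {
                double best = Double.NEGATIVE_INFINITY;
                for (int u = 0; u < size; u++) {
                    for (int v = 0; v < size; v++) {
                        best = Math.max(best, map[i * stride + u][j * stride + v]);
                    }
                }
                out[i][j] = best;
            }
        }
        return out;
    }

    /** Replaces each size x size window with the mean of its values. */
    static double[][] averagePool(double[][] map, int size, int stride) {
        int outRows = (map.length - size) / stride + 1;
        int outCols = (map[0].length - size) / stride + 1;
        double[][] out = new double[outRows][outCols];
        for (int i = 0; i < outRows; i++) {
            for (int j = 0; j < outCols; j++) {
                double sum = 0;
                for (int u = 0; u < size; u++) {
                    for (int v = 0; v < size; v++) {
                        sum += map[i * stride + u][j * stride + v];
                    }
                }
                out[i][j] = sum / (size * size);
            }
        }
        return out;
    }

    public static void main(String[] args) {
        double[][] map = {
            {1, 3, 2, 1},
            {4, 6, 5, 7},
            {8, 2, 0, 1},
            {3, 4, 9, 6}
        };
        System.out.println(Arrays.deepToString(maxPool(map, 2, 2)));
        // prints [[6.0, 7.0], [8.0, 9.0]]
        System.out.println(Arrays.deepToString(averagePool(map, 2, 2)));
        // prints [[3.5, 3.75], [4.25, 4.0]]
    }
}
```

Either way the 4×4 map becomes 2×2, and no trainable parameters are involved.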

III. Implementing a CNN

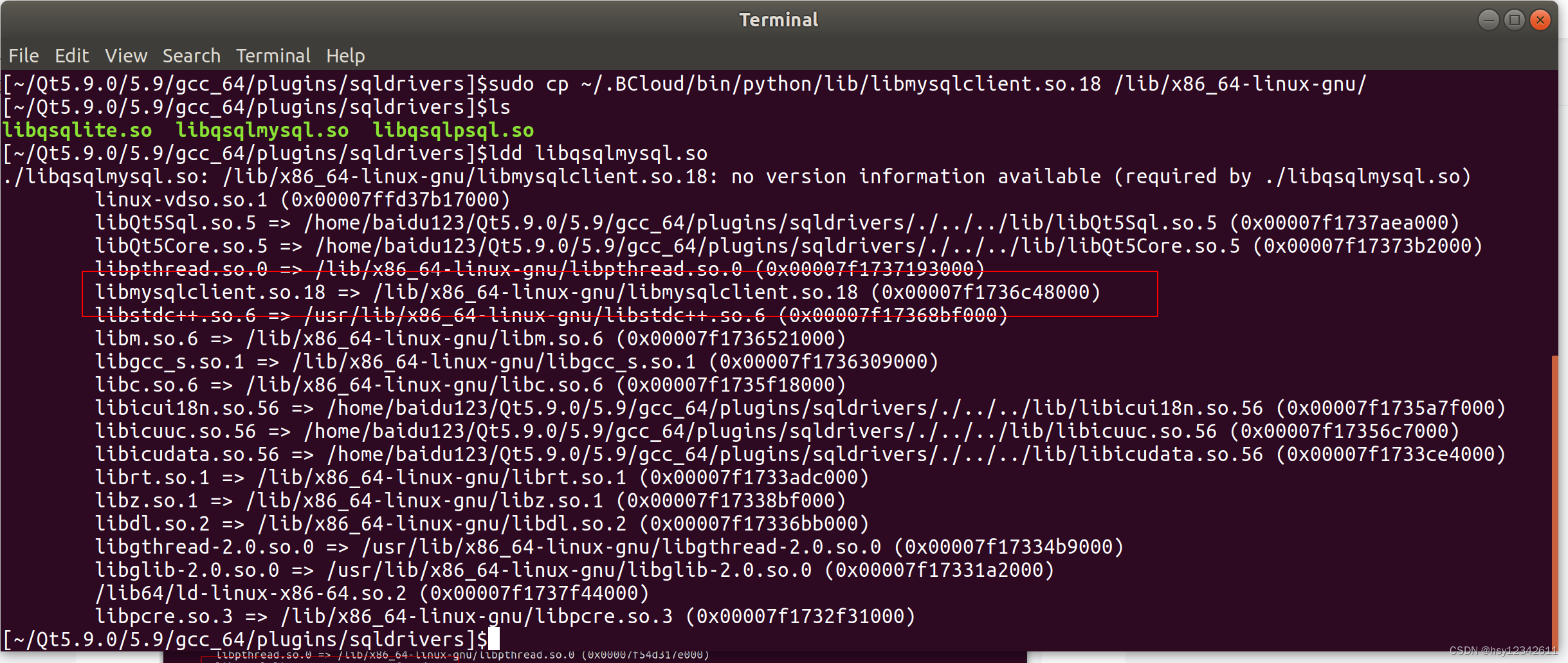

1. Algorithm flowchart

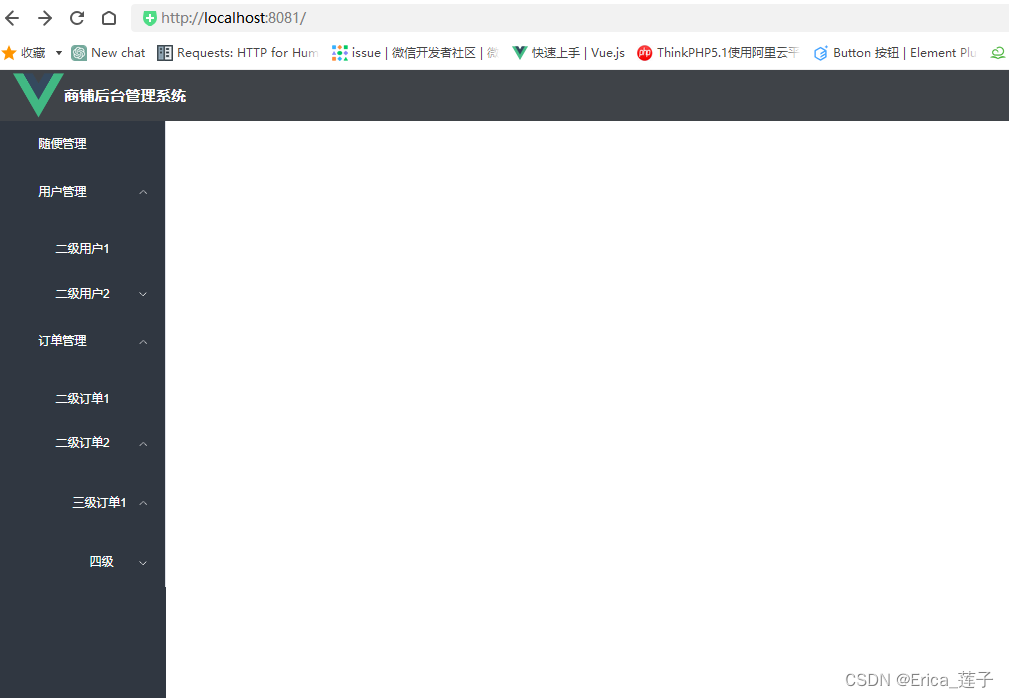

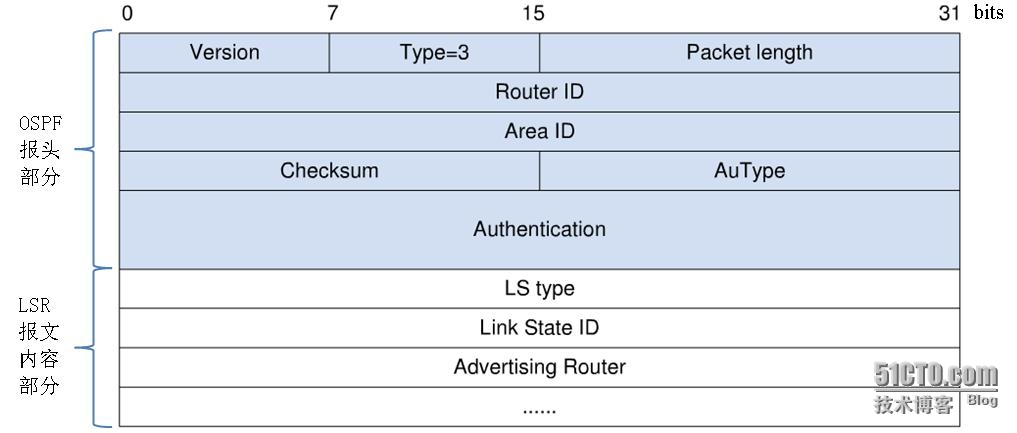

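The traditional fully connected neural network model is not covered in detail here; interested readers can refer to the article on that topic. A convolutional neural network is generally built from the following layers:

- Input layer

- Convolutional layer (CONV layer)

- ReLU activation layer (ReLU layer)

- Pooling layer

- Fully connected layer (FC layer)

The corresponding model pipeline is shown in Figure 1 below: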

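2. Input layer

The main point here is to be clear about what the input to the whole CNN is. As is well known, an image is just a very large matrix. The input layer typically takes an n×m×3 RGB image, unlike an ordinary fully connected neural network, whose input is an n×1 vector.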
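The code later in this article feeds the network single-channel 28×28 images that are stored as flat arrays of 784 values, and `setInputLayerOutput` copies them into a 2D map using the index `height * i + j`. The sketch below isolates that reshaping step; the class and method names are assumptions, and it handles one channel only (an RGB image would need three such maps).

```java
import java.util.Arrays;

/** Reshapes a flat attribute vector into a 2D input map; names are illustrative. */
class InputLayerDemo {
    /** map[i][j] = attributes[height * i + j], the indexing used by setInputLayerOutput. */
    static double[][] toMap(double[] attributes, int width, int height) {
        if (attributes.length != width * height) {
            throw new IllegalArgumentException("input record does not match the map size.");
        }
        double[][] map = new double[width][height];
        for (int i = 0; i < width; i++) {
            for (int j = 0; j < height; j++) {
                map[i][j] = attributes[height * i + j];
            }
        }
        return map;
    }

    public static void main(String[] args) {
        double[] flat = {0, 1, 2, 3, 4, 5};
        System.out.println(Arrays.deepToString(toMap(flat, 2, 3)));
        // prints [[0.0, 1.0, 2.0], [3.0, 4.0, 5.0]]
    }
}
```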

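3. Convolutional layer

We covered the concept of convolution earlier; here are a few additional points that make the rest of the article easier to follow.

3.1 Why convolution?

You may ask yourself why we apply convolution first instead of flattening the input image matrix right away. The answer is that flattening would leave us with an enormous number of parameters to train, and most of us do not have the hardware to handle such computationally expensive tasks quickly. In addition, because a convolutional neural network has fewer parameters, it is less prone to overfitting.

3.2 Convolution over multiple feature maps

Earlier I described convolution on a single image, which is the foundation of the operation. But when we reach convolutional layer 2, how do we convolve an input that consists of several images?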

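Answer: the input now consists of several feature maps, whereas the earlier convolution handled a single image (a single input). Suppose the input maps to be convolved are $X_1, X_2, \dots, X_n$ and the kernels are $K_{ij}$, where kernel $K_{ij}$ connects input map $i$ to output map $j$. The convolution result is then:

$$Y_j = \sum_{i=1}^{n} X_i * K_{ij}, \qquad j = 1, \dots, m$$

The number of output maps equals the number of kernel groups, $m$.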
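The `FullCnn` code later in this article stores kernels as `kernel[front map][out map]` and builds each output map by summing the convolutions of all input maps. The sketch below mirrors that idea in isolation; bias and activation are left out, and the class name, helper names, and test values are assumptions.

```java
import java.util.Arrays;

/** Convolution over several input maps; kernels[i][j] links input map i to output map j. */
class MultiMapConvDemo {
    /** Valid convolution of one map with one kernel (stride 1, no padding). */
    static double[][] convValid(double[][] map, double[][] kernel) {
        int outRows = map.length - kernel.length + 1;
        int outCols = map[0].length - kernel[0].length + 1;
        double[][] out = new double[outRows][outCols];
        for (int i = 0; i < outRows; i++) {
            for (int j = 0; j < outCols; j++) {
                for (int u = 0; u < kernel.length; u++) {
                    for (int v = 0; v < kernel[0].length; v++) {
                        out[i][j] += map[i + u][j + v] * kernel[u][v];
                    }
                }
            }
        }
        return out;
    }

    /** Output map j is the sum over all input maps i of convValid(inputs[i], kernels[i][j]). */
    static double[][][] convolveMaps(double[][][] inputs, double[][][][] kernels) {
        int outMapNum = kernels[0].length;
        double[][][] outputs = new double[outMapNum][][];
        for (int j = 0; j < outMapNum; j++) {
            double[][] sum = null;
            for (int i = 0; i < inputs.length; i++) {
                double[][] part = convValid(inputs[i], kernels[i][j]);
                if (sum == null) {
                    sum = part; // first input map
                } else {
                    for (int r = 0; r < sum.length; r++) {
                        for (int c = 0; c < sum[r].length; c++) {
                            sum[r][c] += part[r][c];
                        }
                    }
                }
            }
            outputs[j] = sum;
        }
        return outputs;
    }

    /** Helper: a rows x cols matrix filled with one value. */
    static double[][] filled(int rows, int cols, double value) {
        double[][] m = new double[rows][cols];
        for (double[] row : m) {
            Arrays.fill(row, value);
        }
        return m;
    }

    public static void main(String[] args) {
        double[][][] inputs = {filled(3, 3, 1), filled(3, 3, 2)}; // two input maps
        double[][][][] kernels = new double[2][3][][];            // three output maps
        for (int i = 0; i < 2; i++) {
            for (int j = 0; j < 3; j++) {
                kernels[i][j] = filled(2, 2, 1);
            }
        }
        double[][][] outputs = convolveMaps(inputs, kernels);
        System.out.println(outputs.length + " output maps, first: "
                + Arrays.deepToString(outputs[0]));
    }
}
```

Two input maps and three kernel groups give three output maps, each 2×2, which matches the statement that the output count follows the kernels rather than the inputs.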

4. Activation layer

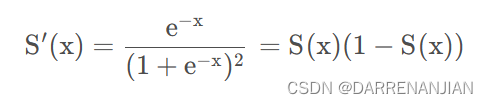

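This part serves the same purpose as the activation function in the BP neural network I wrote about earlier, so I will not go into detail; I only list the activation function used later. The sigmoid function is an S-shaped function common in biology, also known as the S-shaped growth curve. In deep learning it is often used as a neural-network activation function because it is monotonically increasing (as is its inverse) and maps its input into the interval (0, 1).

Sigmoid function:

$$\sigma(x) = \frac{1}{1 + e^{-x}}$$

Derivative of the sigmoid function:

$$\sigma'(x) = \sigma(x)\,\bigl(1 - \sigma(x)\bigr)$$

Graph: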
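The sigmoid and its derivative translate directly into code. This is a small stand-alone sketch with assumed names; the article's own code calls a helper named `MathUtils.sigmod`, which is not shown in this part.

```java
/** Sigmoid activation and its derivative; the class name is an illustrative assumption. */
class SigmoidDemo {
    /** sigma(x) = 1 / (1 + e^(-x)), always strictly between 0 and 1. */
    static double sigmoid(double x) {
        return 1.0 / (1.0 + Math.exp(-x));
    }

    /** sigma'(x) = sigma(x) * (1 - sigma(x)). */
    static double sigmoidDerivative(double x) {
        double s = sigmoid(x);
        return s * (1.0 - s);
    }

    public static void main(String[] args) {
        for (double x : new double[] {-4, -1, 0, 1, 4}) {
            System.out.printf("x=%5.1f  sigmoid=%.4f  derivative=%.4f%n",
                    x, sigmoid(x), sigmoidDerivative(x));
        }
    }
}
```

The derivative peaks at 0.25 for x = 0 and shrinks toward 0 for large |x|, which is why backpropagation code can compute it from the layer output alone as o·(1 − o).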

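5. Pooling layer

See Section II.2 above.

6. Fully connected layer

Look first at Figure 1 above: through repeated convolution and pooling, a high-dimensional image is reduced to a low-dimensional one...

To be completed...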

IV. The CNN Computation Process

1. Forward propagation

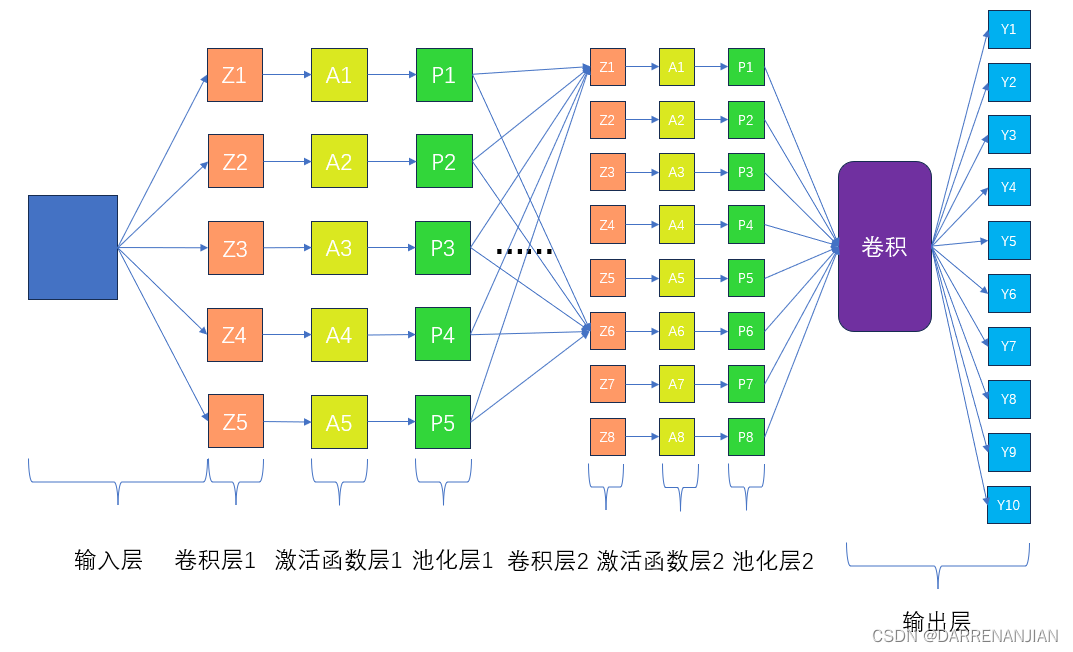

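All the mathematical derivations below are based on Figure 2.

Forward propagation is fairly simple: just compute step by step, following the flow in Figure 2.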

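Input layer:

Suppose the input is an L×W×H image (a matrix).

Convolutional layer 1:

For convolutional layer 1, we first randomly generate several convolution kernels (of the required size; for the concepts involved, see Section II.1, Convolution), which gives us a set of convolutions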
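As a rough check on the forward pass, the map sizes can be traced layer by layer. The sketch below follows the network built in `FullCnn.main` later in this article (28×28 input, 5×5 kernels, 2×2 sampling, and an output layer whose kernel covers the whole remaining map); the class and method names are assumptions.

```java
import java.util.Arrays;

/** Traces the side length of the maps through the network built in FullCnn.main. */
class ShapeTraceDemo {
    /** Valid convolution with stride 1: size + 1 - kernel. */
    static int afterConvolution(int size, int kernel) {
        return size - kernel + 1;
    }

    /** Sampling (pooling) with a square scale: size / scale. */
    static int afterSampling(int size, int scale) {
        return size / scale;
    }

    /** Sizes after input, conv, sampling, conv, sampling, and the output layer. */
    static int[] trace(int inputSize, int kernel, int scale) {
        int c1 = afterConvolution(inputSize, kernel);
        int s1 = afterSampling(c1, scale);
        int c2 = afterConvolution(s1, kernel);
        int s2 = afterSampling(c2, scale);
        // The output layer uses a kernel as large as the previous map, leaving 1x1.
        int out = afterConvolution(s2, s2);
        return new int[] {inputSize, c1, s1, c2, s2, out};
    }

    public static void main(String[] args) {
        System.out.println(Arrays.toString(trace(28, 5, 2)));
        // prints [28, 24, 12, 8, 4, 1]
    }
}
```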

2. Backpropagation (the core of the algorithm)

To be completed...
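Although this section is still a placeholder, the `FullCnn` code later in this article shows how the output-layer error is computed in `setOutputLayerErrors`: for each output o with one-hot target t, the error is o·(1 − o)·(t − o), where o·(1 − o) is the sigmoid derivative. The following is a stand-alone sketch of that one step only; the class and method names are assumptions, and the hidden-layer errors and parameter updates are not covered.

```java
import java.util.Arrays;

/** Output-layer error for sigmoid outputs and a one-hot target; names are illustrative. */
class OutputErrorDemo {
    /** errors[m] = o * (1 - o) * (t - o), with t = 1 for the true label and 0 otherwise. */
    static double[] outputErrors(double[] outputs, int label) {
        double[] errors = new double[outputs.length];
        for (int m = 0; m < outputs.length; m++) {
            double target = (m == label) ? 1.0 : 0.0;
            errors[m] = outputs[m] * (1 - outputs[m]) * (target - outputs[m]);
        }
        return errors;
    }

    public static void main(String[] args) {
        double[] outputs = {0.5, 0.5};
        System.out.println(Arrays.toString(outputErrors(outputs, 0)));
        // prints [0.125, -0.125]
    }
}
```

A positive error pushes the output for the true class up, and a negative error pushes the other outputs down.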

V. CNN Code

1. The CnnLayer class

package Day_81;

/**
 * One layer, support all four layer types. The code mainly initializes, gets,
 * and sets variables. Essentially no algorithm is implemented.
 *
 * @author Fan Min minfanphd@163.com.
 */

public class CnnLayer {/*** The type of the layer.*/LayerTypeEnum type;/*** The number of out map.*/int outMapNum;/*** The map size.*/Size mapSize;/*** The kernel size.*/Size kernelSize;/*** The scale size.*/Size scaleSize;/*** The index of the class (label) attribute.*/int classNum = -1;/*** Kernel. Dimensions: [front map][out map][width][height].*/private double[][][][] kernel;/*** Bias. The length is outMapNum.*/private double[] bias;/*** Out maps. Dimensions:* [batchSize][outMapNum][mapSize.width][mapSize.height].*/private double[][][][] outMaps;/*** Errors.*/private double[][][][] errors;/*** For batch processing.*/private static int recordInBatch = 0;/************************** The first constructor.** @param paraNum* When the type is CONVOLUTION, it is the out map number. when* the type is OUTPUT, it is the class number.* @param paraSize* When the type is INPUT, it is the map size; when the type is* CONVOLUTION, it is the kernel size; when the type is SAMPLING,* it is the scale size.************************/public CnnLayer(LayerTypeEnum paraType, int paraNum, Size paraSize) {type = paraType;switch (type) {case INPUT:outMapNum = 1;mapSize = paraSize; // No deep copy.break;case CONVOLUTION:outMapNum = paraNum;kernelSize = paraSize;break;case SAMPLING:scaleSize = paraSize;break;case OUTPUT:classNum = paraNum;mapSize = new Size(1, 1);outMapNum = classNum;break;default:System.out.println("Internal error occurred in AbstractLayer.java constructor.");}// Of switch}// Of the first constructor/************************** Initialize the kernel.** @param paraNum* When the type is CONVOLUTION, it is the out map number. 
when************************/public void initKernel(int paraFrontMapNum) {kernel = new double[paraFrontMapNum][outMapNum][][];for (int i = 0; i < paraFrontMapNum; i++) {for (int j = 0; j < outMapNum; j++) {kernel[i][j] = MathUtils.randomMatrix(kernelSize.width, kernelSize.height, true);} // Of for j} // Of for i}// Of initKernel/************************** Initialize the output kernel. The code is revised to invoke* initKernel(int).************************/public void initOutputKernel(int paraFrontMapNum, Size paraSize) {kernelSize = paraSize;initKernel(paraFrontMapNum);}// Of initOutputKernel/************************** Initialize the bias. No parameter. "int frontMapNum" is claimed however* not used.************************/public void initBias() {bias = MathUtils.randomArray(outMapNum);}// Of initBias/************************** Initialize the errors.** @param paraBatchSize* The batch size.************************/public void initErrors(int paraBatchSize) {errors = new double[paraBatchSize][outMapNum][mapSize.width][mapSize.height];}// Of initErrors/************************** Initialize out maps.** @param paraBatchSize* The batch size.************************/public void initOutMaps(int paraBatchSize) {outMaps = new double[paraBatchSize][outMapNum][mapSize.width][mapSize.height];}// Of initOutMaps/************************** Prepare for a new batch.************************/public static void prepareForNewBatch() {recordInBatch = 0;}// Of prepareForNewBatch/************************** Prepare for a new record.************************/public static void prepareForNewRecord() {recordInBatch++;}// Of prepareForNewRecord/************************** Set one value of outMaps.************************/public void setMapValue(int paraMapNo, int paraX, int paraY, double paraValue) {outMaps[recordInBatch][paraMapNo][paraX][paraY] = paraValue;}// Of setMapValue/************************** Set values of the whole map.************************/public void setMapValue(int paraMapNo, 
double[][] paraOutMatrix) {outMaps[recordInBatch][paraMapNo] = paraOutMatrix;}// Of setMapValue/************************** Getter.************************/public Size getMapSize() {return mapSize;}// Of getMapSize/************************** Setter.************************/public void setMapSize(Size paraMapSize) {mapSize = paraMapSize;}// Of setMapSize/************************** Getter.************************/public LayerTypeEnum getType() {return type;}// Of getType/************************** Getter.************************/public int getOutMapNum() {return outMapNum;}// Of getOutMapNum/************************** Setter.************************/public void setOutMapNum(int paraOutMapNum) {outMapNum = paraOutMapNum;}// Of setOutMapNum/************************** Getter.************************/public Size getKernelSize() {return kernelSize;}// Of getKernelSize/************************** Getter.************************/public Size getScaleSize() {return scaleSize;}// Of getScaleSize/************************** Getter.************************/public double[][] getMap(int paraIndex) {return outMaps[recordInBatch][paraIndex];}// Of getMap/************************** Getter.************************/public double[][] getKernel(int paraFrontMap, int paraOutMap) {return kernel[paraFrontMap][paraOutMap];}// Of getKernel/************************** Setter. Set one error.************************/public void setError(int paraMapNo, int paraMapX, int paraMapY, double paraValue) {errors[recordInBatch][paraMapNo][paraMapX][paraMapY] = paraValue;}// Of setError/************************** Setter. Set one error matrix.************************/public void setError(int paraMapNo, double[][] paraMatrix) {errors[recordInBatch][paraMapNo] = paraMatrix;}// Of setError/************************** Getter. Get one error matrix.************************/public double[][] getError(int paraMapNo) {return errors[recordInBatch][paraMapNo];}// Of getError/************************** Getter. 
Get the whole error tensor.************************/public double[][][][] getErrors() {return errors;}// Of getErrors/************************** Setter. Set one kernel.************************/public void setKernel(int paraLastMapNo, int paraMapNo, double[][] paraKernel) {kernel[paraLastMapNo][paraMapNo] = paraKernel;}// Of setKernel/************************** Getter.************************/public double getBias(int paraMapNo) {return bias[paraMapNo];}// Of getBias/************************** Setter.************************/public void setBias(int paraMapNo, double paraValue) {bias[paraMapNo] = paraValue;}// Of setBias/************************** Getter.************************/public double[][][][] getMaps() {return outMaps;}// Of getMaps/************************** Getter.************************/public double[][] getError(int paraRecordId, int paraMapNo) {return errors[paraRecordId][paraMapNo];}// Of getError/************************** Getter.************************/public double[][] getMap(int paraRecordId, int paraMapNo) {return outMaps[paraRecordId][paraMapNo];}// Of getMap/************************** Getter.************************/public int getClassNum() {return classNum;}// Of getClassNum/************************** Getter. Get the whole kernel tensor.************************/public double[][][][] getKernel() {return kernel;} // Of getKernel

}// Of class CnnLayer

2. The Dataset class

package Day_81;

import java.io.BufferedReader;
import java.io.File;
import java.io.FileReader;
import java.io.IOException;
import java.util.ArrayList;
import java.util.Arrays;
import java.util.List;

/**
 * Manage the dataset.
 *
 * @author Fan Min minfanphd@163.com.
 */

public class Dataset {/*** All instances organized by a list.*/private List<Instance> instances;/*** The label index.*/private int labelIndex;/*** The max label (label start from 0).*/private double maxLabel = -1;/************************** The first constructor.************************/public Dataset() {labelIndex = -1;instances = new ArrayList<Instance>();}// Of the first constructor/************************** The second constructor.** @param paraFilename* The filename.* @param paraSplitSign* Often comma.* @param paraLabelIndex* Often the last column.************************/public Dataset(String paraFilename, String paraSplitSign, int paraLabelIndex) {instances = new ArrayList<Instance>();labelIndex = paraLabelIndex;File tempFile = new File(paraFilename);try {BufferedReader tempReader = new BufferedReader(new FileReader(tempFile));String tempLine;while ((tempLine = tempReader.readLine()) != null) {String[] tempDatum = tempLine.split(paraSplitSign);if (tempDatum.length == 0) {continue;} // Of ifdouble[] tempData = new double[tempDatum.length];for (int i = 0; i < tempDatum.length; i++)tempData[i] = Double.parseDouble(tempDatum[i]);Instance tempInstance = new Instance(tempData);append(tempInstance);} // Of whiletempReader.close();} catch (IOException e) {e.printStackTrace();System.out.println("Unable to load " + paraFilename);System.exit(0);}//Of try}// Of the second constructor/************************** Append an instance.** @param paraInstance* The given record.************************/public void append(Instance paraInstance) {instances.add(paraInstance);}// Of append/************************** Append an instance specified by double values.************************/public void append(double[] paraAttributes, Double paraLabel) {instances.add(new Instance(paraAttributes, paraLabel));}// Of append/************************** Getter.************************/public Instance getInstance(int paraIndex) {return instances.get(paraIndex);}// Of 
getInstance/************************** Getter.************************/public int size() {return instances.size();}// Of size/************************** Getter.************************/public double[] getAttributes(int paraIndex) {return instances.get(paraIndex).getAttributes();}// Of getAttrs/************************** Getter.************************/public Double getLabel(int paraIndex) {return instances.get(paraIndex).getLabel();}// Of getLabel/************************** Unit test.***********************D:\data*/public static void main(String args[]) {Dataset tempData = new Dataset("D:/data/train.format", ",", 784);Instance tempInstance = tempData.getInstance(0);System.out.println("The first instance is: " + tempInstance);}// Of main/************************** An instance.************************/public class Instance {/*** Conditional attributes.*/private double[] attributes;/*** Label.*/private Double label;/************************** The first constructor.************************/private Instance(double[] paraAttrs, Double paraLabel) {attributes = paraAttrs;label = paraLabel;}//Of the first constructor/************************** The second constructor.************************/public Instance(double[] paraData) {if (labelIndex == -1)// No labelattributes = paraData;else {label = paraData[labelIndex];if (label > maxLabel) {// It is a new labelmaxLabel = label;} // Of ifif (labelIndex == 0) {// The first column is the labelattributes = Arrays.copyOfRange(paraData, 1, paraData.length);} else {// The last column is the labelattributes = Arrays.copyOfRange(paraData, 0, paraData.length - 1);} // Of if} // Of if}// Of the second constructor/************************** Getter.************************/public double[] getAttributes() {return attributes;}// Of getAttributes/************************** Getter.************************/public Double getLabel() {if (labelIndex == -1)return null;return label;}// Of getLabel/************************** 
toString.************************/public String toString(){return Arrays.toString(attributes) + ", " + label;}//Of toString}// Of class Instance

}// Of class Dataset

3. The FullCnn class

package Day_81;

import Day_81.Dataset.Instance;
import Day_81.MathUtils.Operator;

import java.util.Arrays;

/**
 * CNN.
 *
 * @author Fan Min minfanphd@163.com.
 */

public class FullCnn {/*** The value changes.*/private static double ALPHA = 0.85;/*** A constant.*/public static double LAMBDA = 0;/*** Manage layers.*/private static LayerBuilder layerBuilder;/*** Train using a number of instances simultaneously.*/private int batchSize;/*** Divide the batch size with the given value.*/private Operator divideBatchSize;/*** Multiply alpha with the given value.*/private Operator multiplyAlpha;/*** Multiply lambda and alpha with the given value.*/private Operator multiplyLambda;/************************** The first constructor.*************************/public FullCnn(LayerBuilder paraLayerBuilder, int paraBatchSize) {layerBuilder = paraLayerBuilder;batchSize = paraBatchSize;setup();initOperators();}// Of the first constructor/************************** Initialize operators using temporary classes.************************/private void initOperators() {divideBatchSize = new Operator() {private static final long serialVersionUID = 7424011281732651055L;@Overridepublic double process(double value) {return value / batchSize;}// Of process};multiplyAlpha = new Operator() {private static final long serialVersionUID = 5761368499808006552L;@Overridepublic double process(double value) {return value * ALPHA;}// Of process};multiplyLambda = new Operator() {private static final long serialVersionUID = 4499087728362870577L;@Overridepublic double process(double value) {return value * (1 - LAMBDA * ALPHA);}// Of process};}// Of initOperators/************************** Setup according to the layer builder.************************/public void setup() {CnnLayer tempInputLayer = layerBuilder.getLayer(0);tempInputLayer.initOutMaps(batchSize);for (int i = 1; i < layerBuilder.getNumLayers(); i++) {CnnLayer tempLayer = layerBuilder.getLayer(i);CnnLayer tempFrontLayer = layerBuilder.getLayer(i - 1);int tempFrontMapNum = tempFrontLayer.getOutMapNum();switch (tempLayer.getType()) {case INPUT:// Should not be input. 
Maybe an error should be thrown out.break;case CONVOLUTION:tempLayer.setMapSize(tempFrontLayer.getMapSize().subtract(tempLayer.getKernelSize(), 1));tempLayer.initKernel(tempFrontMapNum);tempLayer.initBias();tempLayer.initErrors(batchSize);tempLayer.initOutMaps(batchSize);break;case SAMPLING:tempLayer.setOutMapNum(tempFrontMapNum);tempLayer.setMapSize(tempFrontLayer.getMapSize().divide(tempLayer.getScaleSize()));tempLayer.initErrors(batchSize);tempLayer.initOutMaps(batchSize);break;case OUTPUT:tempLayer.initOutputKernel(tempFrontMapNum, tempFrontLayer.getMapSize());tempLayer.initBias();tempLayer.initErrors(batchSize);tempLayer.initOutMaps(batchSize);break;}// Of switch} // Of for i}// Of setup/************************** Forward computing.************************/private void forward(Instance instance) {setInputLayerOutput(instance);for (int l = 1; l < layerBuilder.getNumLayers(); l++) {CnnLayer tempCurrentLayer = layerBuilder.getLayer(l);CnnLayer tempLastLayer = layerBuilder.getLayer(l - 1);switch (tempCurrentLayer.getType()) {case CONVOLUTION:setConvolutionOutput(tempCurrentLayer, tempLastLayer);break;case SAMPLING:setSampOutput(tempCurrentLayer, tempLastLayer);break;case OUTPUT:setConvolutionOutput(tempCurrentLayer, tempLastLayer);break;default:break;}// Of switch} // Of for l}// Of forward/************************** Set the in layer output. 
Given a record, copy its values to the input* map.************************/private void setInputLayerOutput(Instance paraRecord) {CnnLayer tempInputLayer = layerBuilder.getLayer(0);Size tempMapSize = tempInputLayer.getMapSize();double[] tempAttributes = paraRecord.getAttributes();if (tempAttributes.length != tempMapSize.width * tempMapSize.height)throw new RuntimeException("input record does not match the map size.");for (int i = 0; i < tempMapSize.width; i++) {for (int j = 0; j < tempMapSize.height; j++) {tempInputLayer.setMapValue(0, i, j, tempAttributes[tempMapSize.height * i + j]);} // Of for j} // Of for i}// Of setInputLayerOutput/************************** Compute the convolution output according to the output of the last layer.** @param paraLastLayer* the last layer.* @param paraLayer* the current layer.************************/private void setConvolutionOutput(final CnnLayer paraLayer, final CnnLayer paraLastLayer) {// int mapNum = paraLayer.getOutMapNum();final int lastMapNum = paraLastLayer.getOutMapNum();// Attention: paraLayer.getOutMapNum() may not be right.for (int j = 0; j < paraLayer.getOutMapNum(); j++) {double[][] tempSumMatrix = null;for (int i = 0; i < lastMapNum; i++) {double[][] lastMap = paraLastLayer.getMap(i);double[][] kernel = paraLayer.getKernel(i, j);if (tempSumMatrix == null) {// On the first map.tempSumMatrix = MathUtils.convnValid(lastMap, kernel);} else {// Sum up convolution mapstempSumMatrix = MathUtils.matrixOp(MathUtils.convnValid(lastMap, kernel),tempSumMatrix, null, null, MathUtils.plus);} // Of if} // Of for i// Activation.final double bias = paraLayer.getBias(j);tempSumMatrix = MathUtils.matrixOp(tempSumMatrix, new Operator() {private static final long serialVersionUID = 2469461972825890810L;@Overridepublic double process(double value) {return MathUtils.sigmod(value + bias);}});paraLayer.setMapValue(j, tempSumMatrix);} // Of for j}// Of setConvolutionOutput/************************** Compute the convolution output according 
to the output of the last layer.** @param paraLastLayer* the last layer.* @param paraLayer* the current layer.************************/private void setSampOutput(final CnnLayer paraLayer, final CnnLayer paraLastLayer) {// int tempLastMapNum = paraLastLayer.getOutMapNum();// Attention: paraLayer.outMapNum may not be right.for (int i = 0; i < paraLayer.outMapNum; i++) {double[][] lastMap = paraLastLayer.getMap(i);Size scaleSize = paraLayer.getScaleSize();double[][] sampMatrix = MathUtils.scaleMatrix(lastMap, scaleSize);paraLayer.setMapValue(i, sampMatrix);} // Of for i}// Of setSampOutput/************************** Train the cnn.************************/public void train(Dataset paraDataset, int paraRounds) {for (int t = 0; t < paraRounds; t++) {System.out.println("Iteration: " + t);int tempNumEpochs = paraDataset.size() / batchSize;if (paraDataset.size() % batchSize != 0)tempNumEpochs++;// logger.info("第{}次迭代,epochsNum: {}", t, epochsNum);double tempNumCorrect = 0;int tempCount = 0;for (int i = 0; i < tempNumEpochs; i++) {int[] tempRandomPerm = MathUtils.randomPerm(paraDataset.size(), batchSize);CnnLayer.prepareForNewBatch();for (int index : tempRandomPerm) {boolean isRight = train(paraDataset.getInstance(index));if (isRight)tempNumCorrect++;tempCount++;CnnLayer.prepareForNewRecord();} // Of for indexupdateParameters();if (i % 50 == 0) {System.out.print("..");if (i + 50 > tempNumEpochs)System.out.println();}}double p = 1.0 * tempNumCorrect / tempCount;if (t % 10 == 1 && p > 0.96) {ALPHA = 0.001 + ALPHA * 0.9;// logger.info("设置 alpha = {}", ALPHA);} // Of iffSystem.out.println("Training precision: " + p);// logger.info("计算精度: {}/{}={}.", right, count, p);} // Of for i}// Of train/************************** Train the cnn with only one record.** @param paraRecord* The given record.************************/private boolean train(Instance paraRecord) {forward(paraRecord);boolean result = backPropagation(paraRecord);return result;}// Of train/************************** 
Back-propagation.** @param paraRecord* The given record.************************/private boolean backPropagation(Instance paraRecord) {boolean result = setOutputLayerErrors(paraRecord);setHiddenLayerErrors();return result;}// Of backPropagation/************************** Update parameters.************************/private void updateParameters() {for (int l = 1; l < layerBuilder.getNumLayers(); l++) {CnnLayer layer = layerBuilder.getLayer(l);CnnLayer lastLayer = layerBuilder.getLayer(l - 1);switch (layer.getType()) {case CONVOLUTION:case OUTPUT:updateKernels(layer, lastLayer);updateBias(layer, lastLayer);break;default:break;}// Of switch} // Of for l}// Of updateParameters/************************** Update bias.************************/private void updateBias(final CnnLayer paraLayer, CnnLayer paraLastLayer) {final double[][][][] errors = paraLayer.getErrors();// int mapNum = paraLayer.getOutMapNum();// Attention: getOutMapNum() may not be correct.for (int j = 0; j < paraLayer.getOutMapNum(); j++) {double[][] error = MathUtils.sum(errors, j);double deltaBias = MathUtils.sum(error) / batchSize;double bias = paraLayer.getBias(j) + ALPHA * deltaBias;paraLayer.setBias(j, bias);} // Of for j}// Of updateBias/************************** Update kernels.************************/private void updateKernels(final CnnLayer paraLayer, final CnnLayer paraLastLayer) {// int mapNum = paraLayer.getOutMapNum();int tempLastMapNum = paraLastLayer.getOutMapNum();// Attention: getOutMapNum() may not be rightfor (int j = 0; j < paraLayer.getOutMapNum(); j++) {for (int i = 0; i < tempLastMapNum; i++) {double[][] tempDeltaKernel = null;for (int r = 0; r < batchSize; r++) {double[][] error = paraLayer.getError(r, j);if (tempDeltaKernel == null)tempDeltaKernel = MathUtils.convnValid(paraLastLayer.getMap(r, i), error);else {tempDeltaKernel = MathUtils.matrixOp(MathUtils.convnValid(paraLastLayer.getMap(r, i), error),tempDeltaKernel, null, null, MathUtils.plus);} // Of if} // Of for 
rtempDeltaKernel = MathUtils.matrixOp(tempDeltaKernel, divideBatchSize);if (!rangeCheck(tempDeltaKernel, -10, 10)) {System.exit(0);} // Of ifdouble[][] kernel = paraLayer.getKernel(i, j);tempDeltaKernel = MathUtils.matrixOp(kernel, tempDeltaKernel, multiplyLambda,multiplyAlpha, MathUtils.plus);paraLayer.setKernel(i, j, tempDeltaKernel);} // Of for i} // Of for j}// Of updateKernels/************************** Set errors of all hidden layers.************************/private void setHiddenLayerErrors() {// System.out.println("setHiddenLayerErrors");for (int l = layerBuilder.getNumLayers() - 2; l > 0; l--) {CnnLayer layer = layerBuilder.getLayer(l);CnnLayer nextLayer = layerBuilder.getLayer(l + 1);// System.out.println("layertype = " + layer.getType());switch (layer.getType()) {case SAMPLING:setSamplingErrors(layer, nextLayer);break;case CONVOLUTION:setConvolutionErrors(layer, nextLayer);break;default:break;}// Of switch} // Of for l}// Of setHiddenLayerErrors/************************** Set errors of a sampling layer.************************/private void setSamplingErrors(final CnnLayer paraLayer, final CnnLayer paraNextLayer) {// int mapNum = layer.getOutMapNum();int tempNextMapNum = paraNextLayer.getOutMapNum();// Attention: getOutMapNum() may not be correctfor (int i = 0; i < paraLayer.getOutMapNum(); i++) {double[][] sum = null;for (int j = 0; j < tempNextMapNum; j++) {double[][] nextError = paraNextLayer.getError(j);double[][] kernel = paraNextLayer.getKernel(i, j);if (sum == null) {sum = MathUtils.convnFull(nextError, MathUtils.rot180(kernel));} else {sum = MathUtils.matrixOp(MathUtils.convnFull(nextError, MathUtils.rot180(kernel)), sum, null,null, MathUtils.plus);} // Of if} // Of for jparaLayer.setError(i, sum);if (!rangeCheck(sum, -2, 2)) {System.out.println("setSampErrors, error out of range.\r\n" + Arrays.deepToString(sum));} // Of if} // Of for i}// Of setSamplingErrors/************************** Set errors of a sampling 
layer.************************/private void setConvolutionErrors(final CnnLayer paraLayer, final CnnLayer paraNextLayer) {// System.out.println("setConvErrors");for (int m = 0; m < paraLayer.getOutMapNum(); m++) {Size tempScale = paraNextLayer.getScaleSize();double[][] tempNextLayerErrors = paraNextLayer.getError(m);double[][] tempMap = paraLayer.getMap(m);double[][] tempOutMatrix = MathUtils.matrixOp(tempMap, MathUtils.cloneMatrix(tempMap),null, MathUtils.one_value, MathUtils.multiply);tempOutMatrix = MathUtils.matrixOp(tempOutMatrix,MathUtils.kronecker(tempNextLayerErrors, tempScale), null, null,MathUtils.multiply);paraLayer.setError(m, tempOutMatrix);// System.out.println("range check nextError");if (!rangeCheck(tempNextLayerErrors, -10, 10)) {System.out.println("setConvErrors, nextError out of range:\r\n"+ Arrays.deepToString(tempNextLayerErrors));System.out.println("the new errors are:\r\n" + Arrays.deepToString(tempOutMatrix));System.exit(0);} // Of ifif (!rangeCheck(tempOutMatrix, -10, 10)) {System.out.println("setConvErrors, error out of range.");System.exit(0);} // Of if} // Of for m}// Of setConvolutionErrors/************************** Set errors of a sampling layer.************************/private boolean setOutputLayerErrors(Instance paraRecord) {CnnLayer tempOutputLayer = layerBuilder.getOutputLayer();int tempMapNum = tempOutputLayer.getOutMapNum();double[] tempTarget = new double[tempMapNum];double[] tempOutMaps = new double[tempMapNum];for (int m = 0; m < tempMapNum; m++) {double[][] outmap = tempOutputLayer.getMap(m);tempOutMaps[m] = outmap[0][0];} // Of for mint tempLabel = paraRecord.getLabel().intValue();tempTarget[tempLabel] = 1;// Log.i(record.getLable() + "outmaps:" +// Util.fomart(outmaps)// + Arrays.toString(target));for (int m = 0; m < tempMapNum; m++) {tempOutputLayer.setError(m, 0, 0,tempOutMaps[m] * (1 - tempOutMaps[m]) * (tempTarget[m] - tempOutMaps[m]));} // Of for mreturn tempLabel == MathUtils.getMaxIndex(tempOutMaps);}// Of 
setOutputLayerErrors

    /**
     ***********************
     * Setup the network.
     ***********************
     */
    public void setup(int paraBatchSize) {
        CnnLayer tempInputLayer = layerBuilder.getLayer(0);
        tempInputLayer.initOutMaps(paraBatchSize);

        for (int i = 1; i < layerBuilder.getNumLayers(); i++) {
            CnnLayer tempLayer = layerBuilder.getLayer(i);
            CnnLayer tempLastLayer = layerBuilder.getLayer(i - 1);
            int tempLastMapNum = tempLastLayer.getOutMapNum();
            switch (tempLayer.getType()) {
            case INPUT:
                break;
            case CONVOLUTION:
                // Valid convolution: new size = last size - kernel size + 1.
                tempLayer.setMapSize(tempLastLayer.getMapSize().subtract(tempLayer.getKernelSize(), 1));
                tempLayer.initKernel(tempLastMapNum);
                tempLayer.initBias();
                tempLayer.initErrors(paraBatchSize);
                tempLayer.initOutMaps(paraBatchSize);
                break;
            case SAMPLING:
                // Sampling keeps the number of maps and shrinks each map by the scale.
                tempLayer.setOutMapNum(tempLastMapNum);
                tempLayer.setMapSize(tempLastLayer.getMapSize().divide(tempLayer.getScaleSize()));
                tempLayer.initErrors(paraBatchSize);
                tempLayer.initOutMaps(paraBatchSize);
                break;
            case OUTPUT:
                tempLayer.initOutputKernel(tempLastMapNum, tempLastLayer.getMapSize());
                tempLayer.initBias();
                tempLayer.initErrors(paraBatchSize);
                tempLayer.initOutMaps(paraBatchSize);
                break;
            }// Of switch
        } // Of for i
    }// Of setup

    /**
     ***********************
     * Predict for the dataset.
     ***********************
     */
    public int[] predict(Dataset paraDataset) {
        System.out.println("Predicting ... ");
        CnnLayer.prepareForNewBatch();

        int[] resultPredictions = new int[paraDataset.size()];
        double tempCorrect = 0.0;

        Instance tempRecord;
        for (int i = 0; i < paraDataset.size(); i++) {
            tempRecord = paraDataset.getInstance(i);
            forward(tempRecord);
            CnnLayer outputLayer = layerBuilder.getOutputLayer();

            // Each output map is 1*1; collect one score per class.
            int tempMapNum = outputLayer.getOutMapNum();
            double[] tempOut = new double[tempMapNum];
            for (int m = 0; m < tempMapNum; m++) {
                double[][] outmap = outputLayer.getMap(m);
                tempOut[m] = outmap[0][0];
            } // Of for m

            resultPredictions[i] = MathUtils.getMaxIndex(tempOut);
            if (resultPredictions[i] == tempRecord.getLabel().intValue()) {
                tempCorrect++;
            } // Of if
        } // Of for

        System.out.println("Accuracy: " + tempCorrect / paraDataset.size());
        return resultPredictions;
    }// Of predict

    /**
     ***********************
     * Range check, only for debugging.
     *
     * @param paraMatrix
     *            The given matrix.
     * @param paraLowerBound
     *            The lower bound.
     * @param paraUpperBound
     *            The upper bound.
     ***********************
     */
    public boolean rangeCheck(double[][] paraMatrix, double paraLowerBound, double paraUpperBound) {
        for (int i = 0; i < paraMatrix.length; i++) {
            for (int j = 0; j < paraMatrix[0].length; j++) {
                if ((paraMatrix[i][j] < paraLowerBound) || (paraMatrix[i][j] > paraUpperBound)) {
                    System.out.println("" + paraMatrix[i][j] + " out of range (" + paraLowerBound
                            + ", " + paraUpperBound + ")\r\n");
                    return false;
                } // Of if
            } // Of for j
        } // Of for i

        return true;
    }// Of rangeCheck

    /**
     ***********************
     * The main entrance.
     ***********************
     */
    public static void main(String[] args) {
        LayerBuilder builder = new LayerBuilder();
        // Input layer, the maps are 28*28
        builder.addLayer(new CnnLayer(LayerTypeEnum.INPUT, -1, new Size(28, 28)));
        // Convolution output has size 24*24, 24=28+1-5
        builder.addLayer(new CnnLayer(LayerTypeEnum.CONVOLUTION, 6, new Size(5, 5)));
        // Sampling output has size 12*12, 12=24/2
        builder.addLayer(new CnnLayer(LayerTypeEnum.SAMPLING, -1, new Size(2, 2)));
        // Convolution output has size 8*8, 8=12+1-5
        builder.addLayer(new CnnLayer(LayerTypeEnum.CONVOLUTION, 12, new Size(5, 5)));
        // Sampling output has size 4*4, 4=8/2
        builder.addLayer(new CnnLayer(LayerTypeEnum.SAMPLING, -1, new Size(2, 2)));
        // Output layer, digits 0 - 9.
        builder.addLayer(new CnnLayer(LayerTypeEnum.OUTPUT, 10, null));
        // Construct the full CNN.
        FullCnn tempCnn = new FullCnn(builder, 10);

        Dataset tempTrainingSet = new Dataset("d:/data/train.format", ",", 784);

        // Train the model.
        tempCnn.train(tempTrainingSet, 10);
        // tempCnn.predict(tempTrainingSet);
    }// Of main

}// Of class FullCnn

4. LayerBuilder class

package Day_81;

import java.util.ArrayList;
import java.util.List;

/**
 * CnnLayer builder.
 *
 * @author Fan Min minfanphd@163.com.
 */
public class LayerBuilder {
    /**
     * Layers.
     */
    private List<CnnLayer> layers;

    /**
     ***********************
     * The first constructor.
     ***********************
     */
    public LayerBuilder() {
        layers = new ArrayList<CnnLayer>();
    }// Of the first constructor

    /**
     ***********************
     * The second constructor.
     ***********************
     */
    public LayerBuilder(CnnLayer paraLayer) {
        this();
        layers.add(paraLayer);
    }// Of the second constructor

    /**
     ***********************
     * Add a layer.
     *
     * @param paraLayer
     *            The new layer.
     ***********************
     */
    public void addLayer(CnnLayer paraLayer) {
        layers.add(paraLayer);
    }// Of addLayer

    /**
     ***********************
     * Get the specified layer.
     *
     * @param paraIndex
     *            The index of the layer.
     ***********************
     */
    public CnnLayer getLayer(int paraIndex) throws RuntimeException {
        if (paraIndex >= layers.size()) {
            throw new RuntimeException("CnnLayer " + paraIndex + " is out of range: "
                    + layers.size() + ".");
        } // Of if

        return layers.get(paraIndex);
    }// Of getLayer

    /**
     ***********************
     * Get the output layer.
     ***********************
     */
    public CnnLayer getOutputLayer() {
        return layers.get(layers.size() - 1);
    }// Of getOutputLayer

    /**
     ***********************
     * Get the number of layers.
     ***********************
     */
    public int getNumLayers() {
        return layers.size();
    }// Of getNumLayers
}// Of class LayerBuilder

5. LayerTypeEnum class

package Day_81;

/**
 * Enumerate all layer types.
 *
 * @author Fan Min minfanphd@163.com.
 */
public enum LayerTypeEnum {
    INPUT, CONVOLUTION, SAMPLING, OUTPUT;
}// Of enum LayerTypeEnum

6. MathUtils class

package Day_81;

import java.io.Serializable;
import java.util.Arrays;
import java.util.HashSet;
import java.util.Random;
import java.util.Set;

/**
 * Math operations.
 *
 * Adapted from cnn-master.
 */
public class MathUtils {

    /**
     * An interface for different on-demand operators.
     */
    public interface Operator extends Serializable {
        public double process(double value);
    }// Of interface Operator

    /**
     * The one-minus-the-value operator.
     */
    public static final Operator one_value = new Operator() {
        private static final long serialVersionUID = 3752139491940330714L;

        @Override
        public double process(double value) {
            return 1 - value;
        }// Of process
    };

    /**
     * The sigmoid operator.
     */
    public static final Operator sigmoid = new Operator() {
        private static final long serialVersionUID = -1952718905019847589L;

        @Override
        public double process(double value) {
            return 1 / (1 + Math.pow(Math.E, -value));
        }// Of process
    };

    /**
     * An interface for operations with two operands.
     */
    interface OperatorOnTwo extends Serializable {
        public double process(double a, double b);
    }// Of interface OperatorOnTwo

    /**
     * Plus.
     */
    public static final OperatorOnTwo plus = new OperatorOnTwo() {
        private static final long serialVersionUID = -6298144029766839945L;

        @Override
        public double process(double a, double b) {
            return a + b;
        }// Of process
    };

    /**
     * Multiply.
     */
    public static OperatorOnTwo multiply = new OperatorOnTwo() {
        private static final long serialVersionUID = -7053767821858820698L;

        @Override
        public double process(double a, double b) {
            return a * b;
        }// Of process
    };

    /**
     * Minus.
     */
    public static OperatorOnTwo minus = new OperatorOnTwo() {
        private static final long serialVersionUID = 7346065545555093912L;

        @Override
        public double process(double a, double b) {
            return a - b;
        }// Of process
    };

    /**
     ***********************
     * Print a matrix.
     ***********************
     */
    public static void printMatrix(double[][] matrix) {
        for (int i = 0; i < matrix.length; i++) {
            String line = Arrays.toString(matrix[i]);
            line = line.replaceAll(", ", "\t");
            System.out.println(line);
        } // Of for i
        System.out.println();
    }// Of printMatrix

    /**
     ***********************
     * Rotate the matrix 180 degrees. The input is cloned, so it is not changed.
     ***********************
     */
    public static double[][] rot180(double[][] matrix) {
        matrix = cloneMatrix(matrix);
        int m = matrix.length;
        int n = matrix[0].length;
        // Mirror every row (swap columns).
        for (int i = 0; i < m; i++) {
            for (int j = 0; j < n / 2; j++) {
                double tmp = matrix[i][j];
                matrix[i][j] = matrix[i][n - 1 - j];
                matrix[i][n - 1 - j] = tmp;
            }
        }
        // Mirror every column (swap rows).
        for (int j = 0; j < n; j++) {
            for (int i = 0; i < m / 2; i++) {
                double tmp = matrix[i][j];
                matrix[i][j] = matrix[m - 1 - i][j];
                matrix[m - 1 - i][j] = tmp;
            }
        }
        return matrix;
    }// Of rot180

    private static Random myRandom = new Random(2);

    /**
     ***********************
     * Generate a random matrix with the given size. Each value takes value in
     * [-0.005, 0.095].
     ***********************
     */
    public static double[][] randomMatrix(int x, int y, boolean b) {
        double[][] matrix = new double[x][y];
        // int tag = 1;
        for (int i = 0; i < x; i++) {
            for (int j = 0; j < y; j++) {
                matrix[i][j] = (myRandom.nextDouble() - 0.05) / 10;
            } // Of for j
        } // Of for i
        return matrix;
    }// Of randomMatrix

    /**
     ***********************
     * Generate an array with the given length. Random initialization is
     * commented out, so every value is currently 0.
     ***********************
     */
    public static double[] randomArray(int len) {
        double[] data = new double[len];
        for (int i = 0; i < len; i++) {
            // data[i] = myRandom.nextDouble() / 10 - 0.05;
            data[i] = 0;
        } // Of for i
        return data;
    }// Of randomArray

    /**
     ***********************
     * Generate a random perm with the batch size: batchSize distinct indices
     * drawn from [0, size).
     ***********************
     */
    public static int[] randomPerm(int size, int batchSize) {
        Set<Integer> set = new HashSet<Integer>();
        while (set.size() < batchSize) {
            set.add(myRandom.nextInt(size));
        }
        int[] randPerm = new int[batchSize];
        int i = 0;
        for (Integer value : set)
            randPerm[i++] = value;
        return randPerm;
    }// Of randomPerm

    /**
     ***********************
     * Clone a matrix. Do not use its reference directly.
     ***********************
     */
    public static double[][] cloneMatrix(final double[][] matrix) {
        final int m = matrix.length;
        int n = matrix[0].length;
        final double[][] outMatrix = new double[m][n];

        for (int i = 0; i < m; i++) {
            for (int j = 0; j < n; j++) {
                outMatrix[i][j] = matrix[i][j];
            } // Of for j
        } // Of for i
        return outMatrix;
    }// Of cloneMatrix

    /**
     ***********************
     * Matrix operation with the given operator on single operand. The matrix
     * is modified in place and returned.
     ***********************
     */
    public static double[][] matrixOp(final double[][] ma, Operator operator) {
        final int m = ma.length;
        int n = ma[0].length;
        for (int i = 0; i < m; i++) {
            for (int j = 0; j < n; j++) {
                ma[i][j] = operator.process(ma[i][j]);
            } // Of for j
        } // Of for i
        return ma;
    }// Of matrixOp

    /**
     ***********************
     * Matrix operation with the given operator on two operands. The result
     * overwrites mb, which is also returned.
     ***********************
     */
    public static double[][] matrixOp(final double[][] ma, final double[][] mb,
            final Operator operatorA, final Operator operatorB, OperatorOnTwo operator) {
        final int m = ma.length;
        int n = ma[0].length;
        if (m != mb.length || n != mb[0].length)
            throw new RuntimeException("ma.length:" + ma.length + " mb.length:" + mb.length);

        for (int i = 0; i < m; i++) {
            for (int j = 0; j < n; j++) {
                double a = ma[i][j];
                if (operatorA != null)
                    a = operatorA.process(a);
                double b = mb[i][j];
                if (operatorB != null)
                    b = operatorB.process(b);
                mb[i][j] = operator.process(a, b);
            } // Of for j
        } // Of for i
        return mb;
    }// Of matrixOp

    /**
     ***********************
     * Extend the matrix to a bigger one (a number of times).
     ***********************
     */
    public static double[][] kronecker(final double[][] matrix, final Size scale) {
        final int m = matrix.length;
        int n = matrix[0].length;
        final double[][] outMatrix = new double[m * scale.width][n * scale.height];

        for (int i = 0; i < m; i++) {
            for (int j = 0; j < n; j++) {
                for (int ki = i * scale.width; ki < (i + 1) * scale.width; ki++) {
                    for (int kj = j * scale.height; kj < (j + 1) * scale.height; kj++) {
                        outMatrix[ki][kj] = matrix[i][j];
                    }
                }
            }
        }
        return outMatrix;
    }// Of kronecker

    /**
     ***********************
     * Scale the matrix: each output value is the mean of one scale-sized block.
     ***********************
     */
    public static double[][] scaleMatrix(final double[][] matrix, final Size scale) {
        int m = matrix.length;
        int n = matrix[0].length;
        final int sm = m / scale.width;
        final int sn = n / scale.height;
        final double[][] outMatrix = new double[sm][sn];
        if (sm * scale.width != m || sn * scale.height != n)
            throw new RuntimeException("scale matrix");
        final int size = scale.width * scale.height;
        for (int i = 0; i < sm; i++) {
            for (int j = 0; j < sn; j++) {
                double sum = 0.0;
                for (int si = i * scale.width; si < (i + 1) * scale.width; si++) {
                    for (int sj = j * scale.height; sj < (j + 1) * scale.height; sj++) {
                        sum += matrix[si][sj];
                    } // Of for sj
                } // Of for si
                outMatrix[i][j] = sum / size;
            } // Of for j
        } // Of for i
        return outMatrix;
    }// Of scaleMatrix

    /**
     ***********************
     * Convolution full to obtain a bigger size. It is used in back-propagation.
     ***********************
     */
    public static double[][] convnFull(double[][] matrix, final double[][] kernel) {
        int m = matrix.length;
        int n = matrix[0].length;
        final int km = kernel.length;
        final int kn = kernel[0].length;
        // Zero-pad by (kernel size - 1) on every side.
        final double[][] extendMatrix = new double[m + 2 * (km - 1)][n + 2 * (kn - 1)];
        for (int i = 0; i < m; i++) {
            for (int j = 0; j < n; j++) {
                extendMatrix[i + km - 1][j + kn - 1] = matrix[i][j];
            } // Of for j
        } // Of for i
        return convnValid(extendMatrix, kernel);
    }// Of convnFull

    /**
     ***********************
     * Convolution operation, from a given matrix and a kernel, sliding and sum
     * to obtain the result matrix. It is used in forward.
     ***********************
     */
    public static double[][] convnValid(final double[][] matrix, double[][] kernel) {
        // kernel = rot180(kernel);
        int m = matrix.length;
        int n = matrix[0].length;
        final int km = kernel.length;
        final int kn = kernel[0].length;
        int kns = n - kn + 1;
        final int kms = m - km + 1;
        final double[][] outMatrix = new double[kms][kns];

        for (int i = 0; i < kms; i++) {
            for (int j = 0; j < kns; j++) {
                double sum = 0.0;
                for (int ki = 0; ki < km; ki++) {
                    for (int kj = 0; kj < kn; kj++)
                        sum += matrix[i + ki][j + kj] * kernel[ki][kj];
                }
                outMatrix[i][j] = sum;
            }
        }
        return outMatrix;
    }// Of convnValid

    /**
     ***********************
     * Convolution on a tensor.
     ***********************
     */
    public static double[][] convnValid(final double[][][][] matrix, int mapNoX,
            double[][][][] kernel, int mapNoY) {
        int m = matrix.length;
        int n = matrix[0][mapNoX].length;
        int h = matrix[0][mapNoX][0].length;
        int km = kernel.length;
        int kn = kernel[0][mapNoY].length;
        int kh = kernel[0][mapNoY][0].length;
        int kms = m - km + 1;
        int kns = n - kn + 1;
        int khs = h - kh + 1;
        if (matrix.length != kernel.length)
            throw new RuntimeException("length");
        final double[][][] outMatrix = new double[kms][kns][khs];
        for (int i = 0; i < kms; i++) {
            for (int j = 0; j < kns; j++)
                for (int k = 0; k < khs; k++) {
                    double sum = 0.0;
                    for (int ki = 0; ki < km; ki++) {
                        for (int kj = 0; kj < kn; kj++)
                            for (int kk = 0; kk < kh; kk++) {
                                sum += matrix[i + ki][mapNoX][j + kj][k + kk]
                                        * kernel[ki][mapNoY][kj][kk];
                            }
                    }
                    outMatrix[i][j][k] = sum;
                }
        }
        return outMatrix[0];
    }// Of convnValid

    /**
     ***********************
     * The sigmoid operation.
     ***********************
     */
    public static double sigmod(double x) {
        return 1 / (1 + Math.pow(Math.E, -x));
    }// Of sigmod

    /**
     ***********************
     * Sum all values of a matrix.
     ***********************
     */
    public static double sum(double[][] error) {
        int m = error.length;
        int n = error[0].length;
        double sum = 0.0;
        for (int i = 0; i < m; i++) {
            for (int j = 0; j < n; j++) {
                sum += error[i][j];
            }
        }
        return sum;
    }// Of sum

    /**
     ***********************
     * Ad hoc sum: add up map j over all instances (the first dimension).
     ***********************
     */
    public static double[][] sum(double[][][][] errors, int j) {
        int m = errors[0][j].length;
        int n = errors[0][j][0].length;
        double[][] result = new double[m][n];
        for (int mi = 0; mi < m; mi++) {
            for (int nj = 0; nj < n; nj++) {
                double sum = 0;
                for (int i = 0; i < errors.length; i++)
                    sum += errors[i][j][mi][nj];
                result[mi][nj] = sum;
            }
        }
        return result;
    }// Of sum

    /**
     ***********************
     * Get the index of the maximal value for the final classification.
     ***********************
     */
    public static int getMaxIndex(double[] out) {
        double max = out[0];
        int index = 0;
        for (int i = 1; i < out.length; i++)
            if (out[i] > max) {
                max = out[i];
                index = i;
            }
        return index;
    }// Of getMaxIndex
}// Of MathUtils
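The difference between the two convolution modes in MathUtils is easiest to see on a tiny input. The sketch below is a standalone illustration, not part of the original project: the class name `ConvolutionDemo` is hypothetical, and it re-implements the same sliding-sum logic as `convnValid` and `convnFull` so that it runs without the other classes. As in the listing, the kernel is not rotated.

```java
import java.util.Arrays;

/** Hypothetical demo class; it copies the logic of the two routines so it runs on its own. */
final class ConvolutionDemo {

    /** Valid mode: slide the kernel only where it fits entirely inside the matrix. */
    static double[][] convnValid(double[][] matrix, double[][] kernel) {
        int km = kernel.length;
        int kn = kernel[0].length;
        int rows = matrix.length - km + 1;
        int cols = matrix[0].length - kn + 1;
        double[][] out = new double[rows][cols];
        for (int i = 0; i < rows; i++) {
            for (int j = 0; j < cols; j++) {
                double sum = 0.0;
                for (int ki = 0; ki < km; ki++) {
                    for (int kj = 0; kj < kn; kj++) {
                        sum += matrix[i + ki][j + kj] * kernel[ki][kj];
                    }
                }
                out[i][j] = sum;
            }
        }
        return out;
    }

    /** Full mode: zero-pad by (kernel size - 1) on every side, then run valid mode. */
    static double[][] convnFull(double[][] matrix, double[][] kernel) {
        int km = kernel.length;
        int kn = kernel[0].length;
        int m = matrix.length;
        int n = matrix[0].length;
        double[][] padded = new double[m + 2 * (km - 1)][n + 2 * (kn - 1)];
        for (int i = 0; i < m; i++) {
            for (int j = 0; j < n; j++) {
                padded[i + km - 1][j + kn - 1] = matrix[i][j];
            }
        }
        return convnValid(padded, kernel);
    }

    public static void main(String[] args) {
        double[][] matrix = { { 1, 2, 3 }, { 4, 5, 6 }, { 7, 8, 9 } };
        double[][] kernel = { { 1, 0 }, { 0, 1 } };
        // Valid mode shrinks 3*3 to 2*2; full mode grows it to 4*4.
        System.out.println(Arrays.deepToString(convnValid(matrix, kernel)));
        System.out.println(Arrays.deepToString(convnFull(matrix, kernel)));
    }
}
```

With a 3*3 input and a 2*2 kernel, valid mode yields a 2*2 result (3 - 2 + 1) and full mode a 4*4 result (3 + 2 - 1). The listing uses the shrinking mode in the forward pass and the growing mode in back-propagation.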

7. Size class

package Day_81;

/**
 * The size of a convolution kernel.
 *
 * @author Fan Min minfanphd@163.com.
 */
public class Size {
    /**
     * Cannot be changed after initialization.
     */
    public final int width;

    /**
     * Cannot be changed after initialization.
     */
    public final int height;

    /**
     ***********************
     * The first constructor.
     *
     * @param paraWidth
     *            The given width.
     * @param paraHeight
     *            The given height.
     ***********************
     */
    public Size(int paraWidth, int paraHeight) {
        width = paraWidth;
        height = paraHeight;
    }// Of the first constructor

    /**
     ***********************
     * Divide a scale with another one. For example (4, 12) / (2, 3) = (2, 4).
     *
     * @param paraScaleSize
     *            The given scale size.
     * @return The new size.
     ***********************
     */
    public Size divide(Size paraScaleSize) {
        int resultWidth = width / paraScaleSize.width;
        int resultHeight = height / paraScaleSize.height;
        // Only exact division is allowed.
        if (resultWidth * paraScaleSize.width != width
                || resultHeight * paraScaleSize.height != height)
            throw new RuntimeException("Unable to divide " + this + " with " + paraScaleSize);
        return new Size(resultWidth, resultHeight);
    }// Of divide

    /**
     ***********************
     * Subtract a scale with another one, and add a value. For example (4, 12) -
     * (2, 3) + 1 = (3, 10).
     *
     * @param paraScaleSize
     *            The given scale size.
     * @param paraAppend
     *            The appended size to both dimensions.
     * @return The new size.
     ***********************
     */
    public Size subtract(Size paraScaleSize, int paraAppend) {
        int resultWidth = width - paraScaleSize.width + paraAppend;
        int resultHeight = height - paraScaleSize.height + paraAppend;
        return new Size(resultWidth, resultHeight);
    }// Of subtract

    /**
     ***********************
     * @return The string showing itself.
     ***********************
     */
    public String toString() {
        String resultString = "(" + width + ", " + height + ")";
        return resultString;
    }// Of toString

    /**
     ***********************
     * Unit test.
     ***********************
     */
    public static void main(String[] args) {
        Size tempSize1 = new Size(4, 6);
        Size tempSize2 = new Size(2, 2);
        System.out.println("" + tempSize1 + " divide " + tempSize2 + " = " + tempSize1.divide(tempSize2));
        System.out.printf("a");

        try {
            System.out.println("" + tempSize2 + " divide " + tempSize1 + " = " + tempSize2.divide(tempSize1));
        } catch (Exception ee) {
            System.out.println(ee);
        } // Of try

        System.out.println("" + tempSize1 + " - " + tempSize2 + " + 1 = " + tempSize1.subtract(tempSize2, 1));
    }// Of main
}// Of class Size
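The two Size operations are what determine the feature-map sizes quoted in the comments of the network's main method (28, 24, 12, 8, 4). The following sketch traces that arithmetic on one dimension only. `MapSizeTrace` and its method names are hypothetical helpers written for this illustration; they mirror `subtract(kernel, 1)` and `divide(scale)` but do not use the Size class itself.

```java
import java.util.Arrays;

/** Hypothetical helper for illustration; it is not part of the original code. */
final class MapSizeTrace {

    /** Valid convolution: output = input - kernel + 1, as in Size.subtract(kernel, 1). */
    static int afterConvolution(int input, int kernel) {
        return input - kernel + 1;
    }

    /** Non-overlapping sampling: output = input / scale, exact division only, as in Size.divide. */
    static int afterSampling(int input, int scale) {
        if (input % scale != 0) {
            throw new IllegalArgumentException("Unable to divide " + input + " with " + scale);
        }
        return input / scale;
    }

    /** Map width after each layer: input, conv 5*5, sampling 2*2, conv 5*5, sampling 2*2. */
    static int[] trace(int input) {
        int conv1 = afterConvolution(input, 5);
        int pool1 = afterSampling(conv1, 2);
        int conv2 = afterConvolution(pool1, 5);
        int pool2 = afterSampling(conv2, 2);
        return new int[] { input, conv1, pool1, conv2, pool2 };
    }

    public static void main(String[] args) {
        // Prints [28, 24, 12, 8, 4]
        System.out.println(Arrays.toString(trace(28)));
    }
}
```

The exact-division check matters when choosing kernel sizes: for instance, a 4*4 kernel on a 28*28 input would give 25*25 maps, which a 2*2 sampling layer cannot divide evenly, so the setup would fail with an exception.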

IV. Run Results