https://tianfeng.space/1947.html

Preliminary concepts

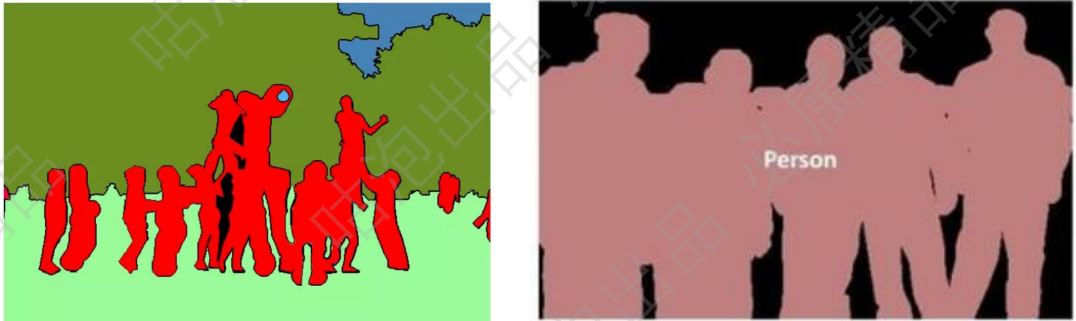

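Image segmentation

A segmentation task finds the objects you care about in the original image, pixel by pixel.

Semantic segmentation

Every pixel is given a class label (this pixel is a person, a tree, background, and so on).

(Semantic segmentation only distinguishes classes; it does not distinguish the individual objects within a class.)

Instance segmentation

Instance segmentation has to distinguish not only the classes but also every individual object within a class.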
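To make the difference concrete, here is a minimal sketch with NumPy. The 4x6 image, the class id `1` for "person", and the instance ids are all made up for illustration; they are not taken from the figures in this post.

```python
import numpy as np

# Hypothetical 4x6 image with two people (class 1) on background (class 0).
# Semantic mask: one class id per pixel, so both people share the label 1.
semantic = np.array([
    [0, 1, 1, 0, 1, 1],
    [0, 1, 1, 0, 1, 1],
    [0, 0, 0, 0, 1, 1],
    [0, 0, 0, 0, 0, 0],
])

# Instance mask: one id per individual, so person A is 1 and person B is 2.
instance = np.array([
    [0, 1, 1, 0, 2, 2],
    [0, 1, 1, 0, 2, 2],
    [0, 0, 0, 0, 2, 2],
    [0, 0, 0, 0, 0, 0],
])

# Count foreground classes and individuals (background id 0 is excluded).
num_classes_present = len(np.unique(semantic)) - 1
num_instances = len(np.unique(instance)) - 1
person_pixels = int((semantic == 1).sum())
pixels_per_instance = {
    int(i): int((instance == i).sum()) for i in np.unique(instance) if i != 0
}

print(num_classes_present, num_instances, person_pixels, pixels_per_instance)
```

Both masks cover exactly the same foreground pixels; the instance mask simply carries the extra information of which person each pixel belongs to.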

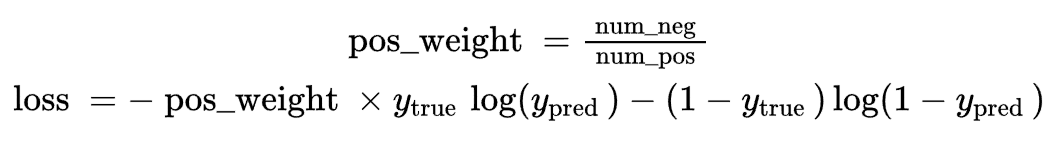

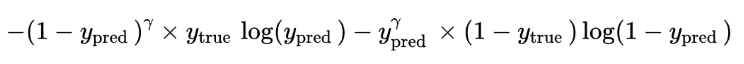

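Loss functions:

Pixel-wise cross-entropy: the class imbalance between samples often has to be taken into account as well. The cross-entropy loss is given by the following formula: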
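The formula itself does not survive in the text here, so the following is only a sketch of one commonly used form, the binary case with a weight on positive pixels: $L = -\frac{1}{N}\sum_i \left[ w\, y_i \log p_i + (1 - y_i)\log(1 - p_i) \right]$. The function name `weighted_pixel_bce` and the choice `w = num_negative / num_positive` are assumptions for illustration, not necessarily what the original figure shows.

```python
import numpy as np

def weighted_pixel_bce(prob, target, pos_weight=1.0, eps=1e-7):
    """Mean pixel-wise binary cross-entropy with a weight on positive pixels.

    prob       : predicted foreground probability per pixel, values in (0, 1)
    target     : ground-truth mask of 0/1 with the same shape
    pos_weight : assumed balancing factor, e.g. num_negative / num_positive
    """
    prob = np.clip(prob, eps, 1.0 - eps)  # keep log() finite
    loss = -(pos_weight * target * np.log(prob)
             + (1.0 - target) * np.log(1.0 - prob))
    return float(loss.mean())

# Toy 2x4 mask with a single foreground pixel (1 positive, 7 negatives).
target = np.array([[1, 0, 0, 0],
                   [0, 0, 0, 0]], dtype=np.float64)
prob = np.array([[0.3, 0.1, 0.1, 0.1],
                 [0.1, 0.1, 0.1, 0.1]])

pos_weight = (target == 0).sum() / (target == 1).sum()
plain = weighted_pixel_bce(prob, target)                  # every pixel counts equally
balanced = weighted_pixel_bce(prob, target, pos_weight)   # the rare positive counts more
print(pos_weight, plain, balanced)
```

With the weight applied, the single poorly predicted foreground pixel contributes far more to the loss, which is the point of balancing.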

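Focal loss: samples also differ in difficulty. It is like playing a game: the harder the boss, the bigger the reward.

Gamma is usually set to 2. For example, if the predicted probability for a positive sample is 0.95, the factor is (1 - 0.95)^2 = 0.0025; if the predicted probability is 0.4, the factor is (1 - 0.4)^2 = 0.36. (This factor acts as a difficulty weight for the sample.)

(Combining it with the weight for the number of samples per class gives Focal Loss.)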
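A minimal NumPy sketch of the idea: the difficulty weight is the factor $(1 - p_t)^{\gamma}$, and the class-count weight is a per-class factor `alpha`. The default `alpha=0.25` is an assumption (a commonly quoted value), and the function name is hypothetical.

```python
import numpy as np

def focal_loss(prob, target, gamma=2.0, alpha=0.25, eps=1e-7):
    """Per-pixel binary focal loss.

    prob   : predicted probability of the positive class
    target : ground-truth labels, 0 or 1
    gamma  : focusing parameter (difficulty weight exponent)
    alpha  : assumed class-balance weight for the positive class
    """
    prob = np.clip(prob, eps, 1.0 - eps)
    p_t = np.where(target == 1, prob, 1.0 - prob)          # probability given to the true class
    alpha_t = np.where(target == 1, alpha, 1.0 - alpha)    # class-count weight
    return -alpha_t * (1.0 - p_t) ** gamma * np.log(p_t)

# Difficulty weights for an easy and a hard positive sample with gamma = 2.
easy_factor = (1 - 0.95) ** 2   # about 0.0025
hard_factor = (1 - 0.4) ** 2    # about 0.36

probs = np.array([0.95, 0.4])
targets = np.array([1, 1])
losses = focal_loss(probs, targets)
print(easy_factor, hard_factor, losses)
```

The easy sample is scaled down by a factor more than a hundred times smaller than the hard one, so training focuses on the hard pixels.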

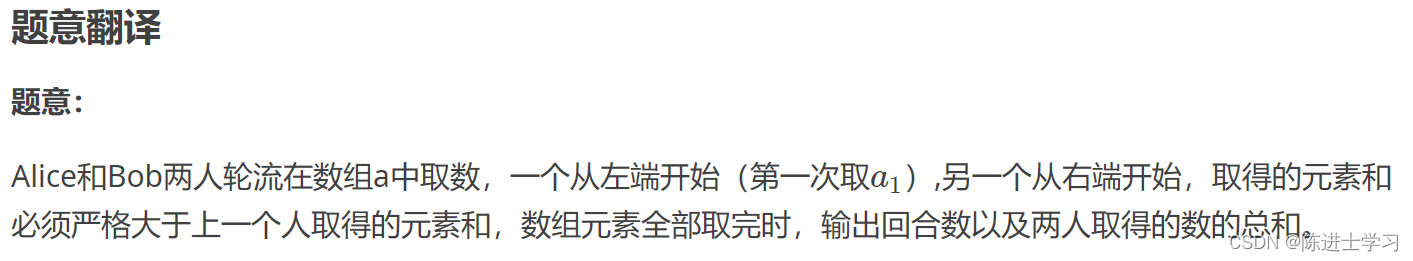

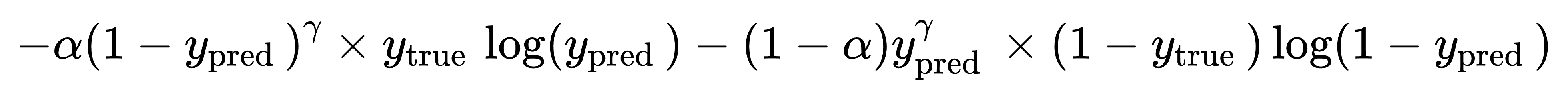

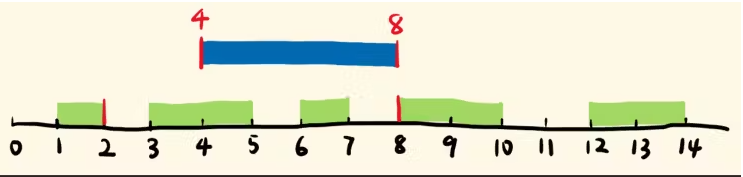

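IoU calculation

For a multi-class task: iou_dog = 801 / (true_dog + predict_dog - 801), where 801 is the number of pixels correctly predicted as dog (the intersection), true_dog is the number of pixels labeled dog, and predict_dog is the number of pixels predicted as dog.

mIoU metric:

mIoU is the mean of the IoU over all classes; it is generally used as the evaluation metric for segmentation tasks.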
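The calculation can be sketched from a confusion matrix as follows. The 3x3 matrix is hypothetical; only the value 801 for correctly predicted dog pixels is taken from the text above, and the class order (cat, dog, bird) is made up.

```python
import numpy as np

def iou_per_class(conf):
    """conf[i, j] = number of pixels whose true class is i and predicted class is j."""
    conf = np.asarray(conf, dtype=np.float64)
    intersection = np.diag(conf)        # correctly predicted pixels per class
    true_total = conf.sum(axis=1)       # pixels labeled as each class
    pred_total = conf.sum(axis=0)       # pixels predicted as each class
    union = true_total + pred_total - intersection
    # Classes that never appear (union == 0) get an IoU of 0.
    return intersection / np.maximum(union, 1)

# Hypothetical confusion matrix; rows are true classes, columns are predictions.
conf = np.array([
    [900,  50,  50],   # cat
    [ 60, 801,  39],   # dog
    [ 40,  49, 911],   # bird
])

ious = iou_per_class(conf)
iou_dog = ious[1]        # 801 / (900 + 900 - 801)
miou = ious.mean()
print(ious, miou)
```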

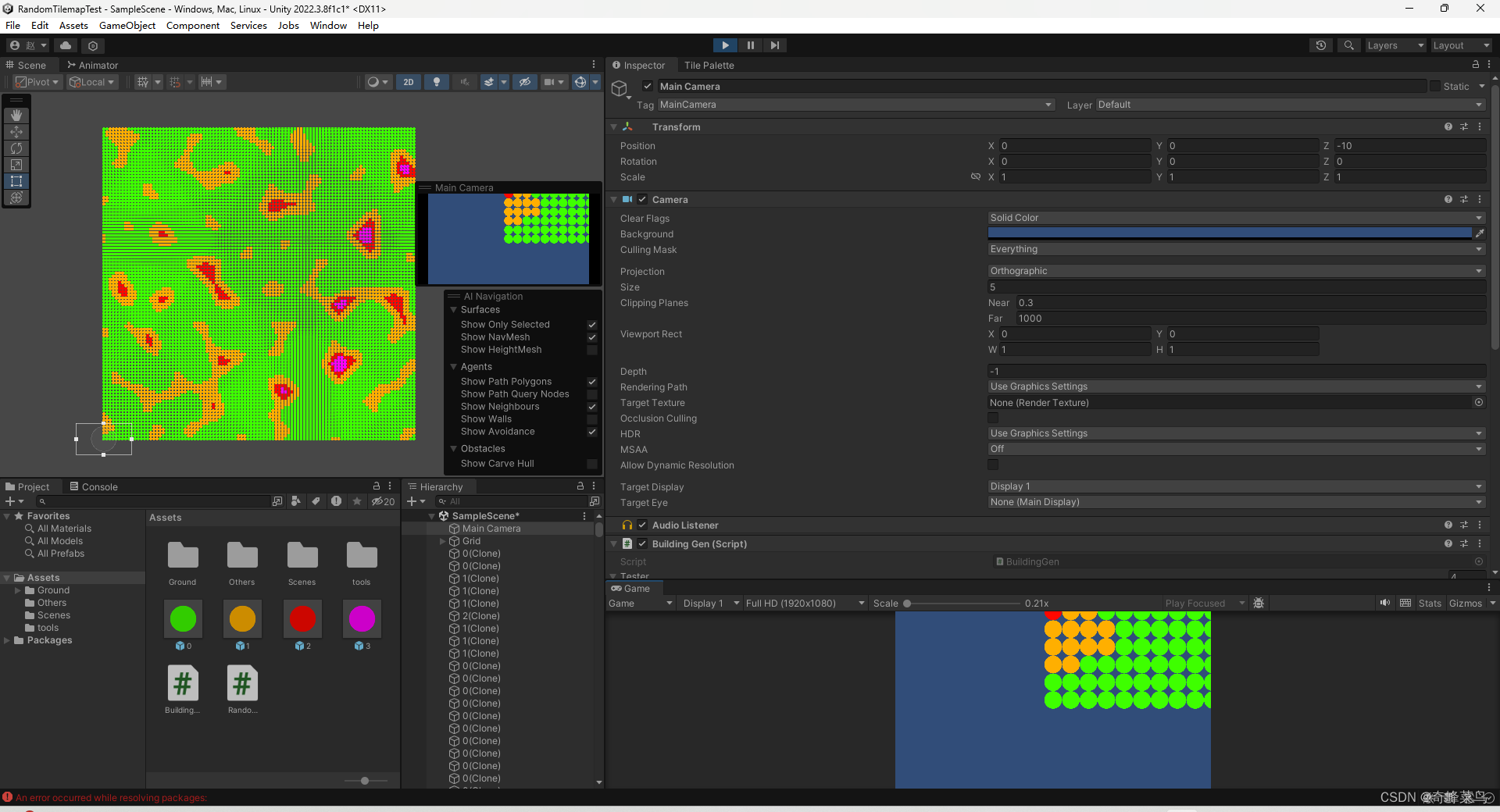

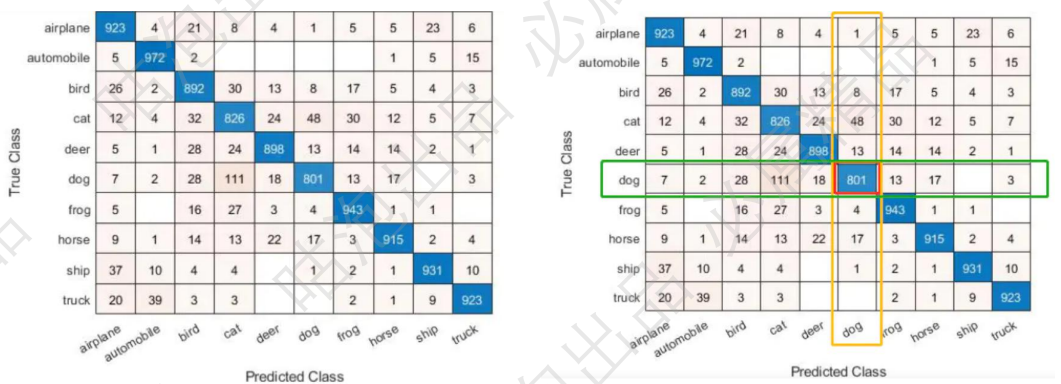

Unet

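Overall structure: in short, it is an encoder-decoder process. It is simple but very practical and widely used. It was originally built for medical imaging and is still used there today.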
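The encoder-decoder idea with skip connections can be sketched in a few lines of PyTorch. This is a deliberately small two-level toy, not the original Unet: the class name `TinyUNet`, the channel widths, and the use of padded convolutions (so that sizes match without cropping) are all assumptions made to keep the example short.

```python
import torch
import torch.nn as nn

def conv_block(in_ch, out_ch):
    # Two 3x3 convolutions with ReLU; padding keeps the spatial size unchanged.
    return nn.Sequential(
        nn.Conv2d(in_ch, out_ch, 3, padding=1),
        nn.ReLU(inplace=True),
        nn.Conv2d(out_ch, out_ch, 3, padding=1),
        nn.ReLU(inplace=True),
    )

class TinyUNet(nn.Module):
    def __init__(self, in_ch=3, num_classes=2, base=8):
        super().__init__()
        # Encoder: features get deeper while resolution halves.
        self.enc1 = conv_block(in_ch, base)
        self.enc2 = conv_block(base, base * 2)
        self.pool = nn.MaxPool2d(2)
        self.bottleneck = conv_block(base * 2, base * 4)
        # Decoder: upsample, concatenate the matching encoder feature, convolve.
        self.up2 = nn.ConvTranspose2d(base * 4, base * 2, 2, stride=2)
        self.dec2 = conv_block(base * 4, base * 2)
        self.up1 = nn.ConvTranspose2d(base * 2, base, 2, stride=2)
        self.dec1 = conv_block(base * 2, base)
        self.head = nn.Conv2d(base, num_classes, 1)  # per-pixel class scores

    def forward(self, x):
        e1 = self.enc1(x)
        e2 = self.enc2(self.pool(e1))
        b = self.bottleneck(self.pool(e2))
        d2 = self.dec2(torch.cat([self.up2(b), e2], dim=1))   # skip connection
        d1 = self.dec1(torch.cat([self.up1(d2), e1], dim=1))  # skip connection
        return self.head(d1)

model = TinyUNet()
logits = model(torch.randn(1, 3, 64, 64))
print(logits.shape)
```

The output has one score map per class at the input resolution, which is what the pixel-wise losses above expect.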

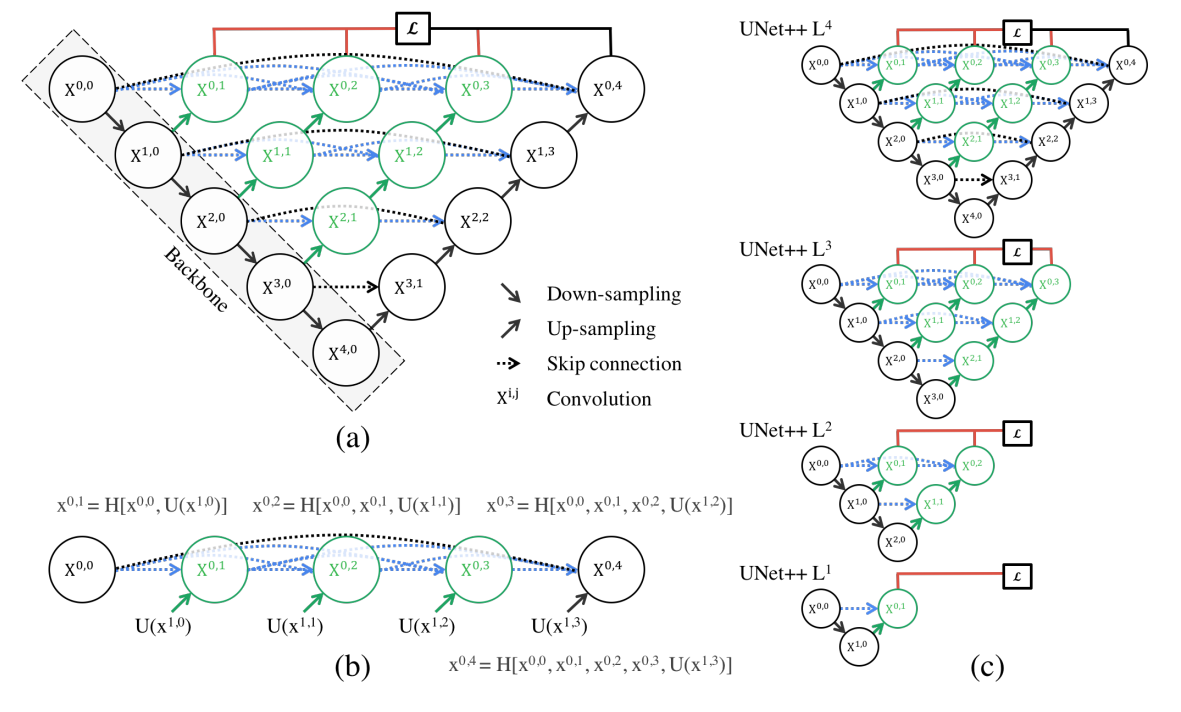

Unet++

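Overall network structure: feature fusion with more comprehensive concatenation. The idea is essentially the same as DenseNet: use every feature that can be concatenated.

Deep supervision: a multi-output loss. The loss is computed at several positions and then used for the update.

Easy to prune: pruning can be done quickly according to the speed requirement. L4 is still used during training, and the results are quite good.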
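The two ideas, a loss summed over several outputs and inference that stops at a shallower output, can be sketched as below. This is not Unet++ itself: `ToyDeepSupervisionNet` is a hypothetical plain chain of convolutions with one head per depth (standing in for L1 to L4), and averaging the per-head losses is an assumed choice.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

class ToyDeepSupervisionNet(nn.Module):
    """Hypothetical stand-in: a chain of blocks with one output head per depth."""

    def __init__(self, in_ch=3, ch=8, depth=4):
        super().__init__()
        self.blocks = nn.ModuleList(
            [nn.Conv2d(in_ch if i == 0 else ch, ch, 3, padding=1) for i in range(depth)]
        )
        self.heads = nn.ModuleList([nn.Conv2d(ch, 1, 1) for _ in range(depth)])

    def forward(self, x, use_depth=None):
        # use_depth < depth emulates pruning: deeper blocks are simply skipped.
        depth = len(self.blocks) if use_depth is None else use_depth
        outputs = []
        for block, head in zip(self.blocks[:depth], self.heads[:depth]):
            x = F.relu(block(x))
            outputs.append(head(x))
        return outputs

def deep_supervision_loss(outputs, target):
    # One loss per output position, averaged into a single training loss.
    losses = [F.binary_cross_entropy_with_logits(o, target) for o in outputs]
    return sum(losses) / len(losses)

torch.manual_seed(0)
net = ToyDeepSupervisionNet()
x = torch.randn(2, 3, 32, 32)
target = torch.randint(0, 2, (2, 1, 32, 32)).float()

train_outputs = net(x)                       # training uses all four heads
loss = deep_supervision_loss(train_outputs, target)
loss.backward()

pruned_outputs = net(x, use_depth=2)         # faster inference with two levels
print(len(train_outputs), len(pruned_outputs), loss.item())
```

Because every head is trained, a shallower head can be used on its own at inference time, while the deepest level still shapes the shared features during training.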

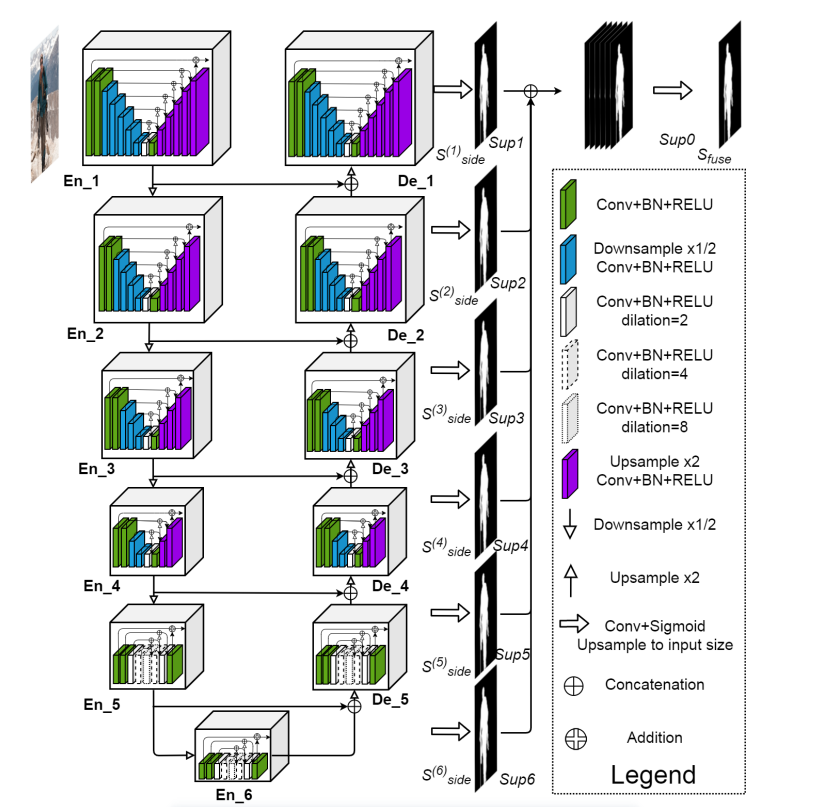

U²net

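Code | Paper

As the name suggests, every stage of Unet is itself turned into a Unet, so a Unet is nested inside a Unet, which gives U²net.

The final output is obtained by concatenating the outputs of the decoder stages and passing them through one convolution.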
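The fusion step can be sketched in isolation with dummy tensors. The six map sizes and the helper name `fuse_side_outputs` are assumptions for illustration; bilinear upsampling to the size of the first map followed by a 1x1 convolution is one straightforward way to do it.

```python
import torch
import torch.nn as nn
import torch.nn.functional as F

def fuse_side_outputs(side_maps, fuse_conv):
    """Upsample every side map to the size of the first one, concatenate, convolve."""
    target_size = side_maps[0].shape[2:]
    upsampled = [
        F.interpolate(m, size=target_size, mode="bilinear", align_corners=False)
        for m in side_maps
    ]
    return fuse_conv(torch.cat(upsampled, dim=1))

# Dummy single-channel side outputs at decreasing resolutions (hypothetical sizes).
sizes = [64, 32, 16, 8, 4, 2]
side_maps = [torch.randn(1, 1, s, s) for s in sizes]

# A 1x1 convolution mixes the six maps into one fused map.
fuse_conv = nn.Conv2d(len(side_maps), 1, kernel_size=1)
fused = torch.sigmoid(fuse_side_outputs(side_maps, fuse_conv))
print(fused.shape)
```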

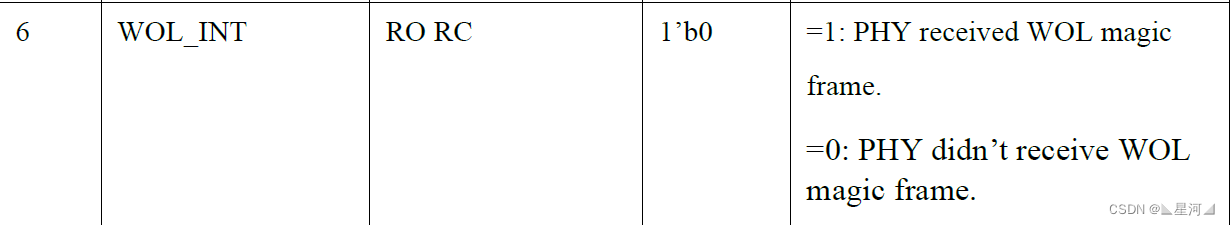

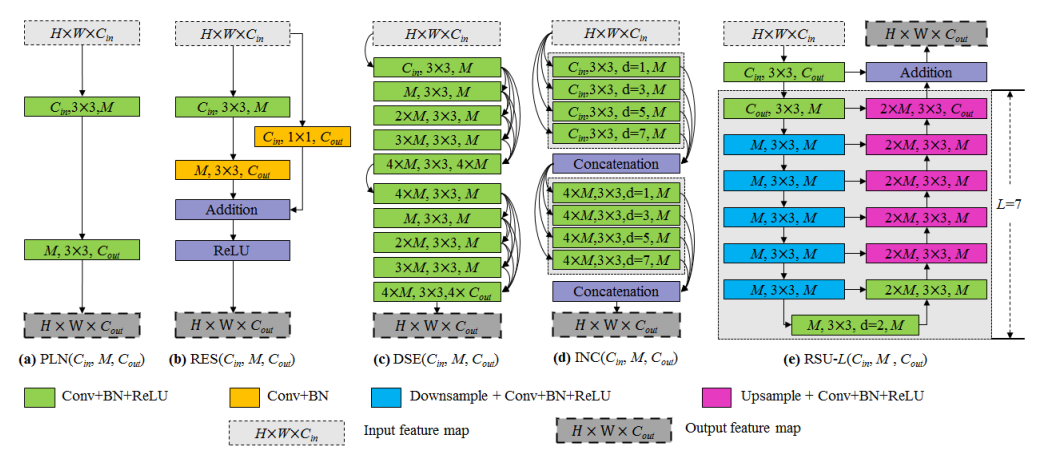

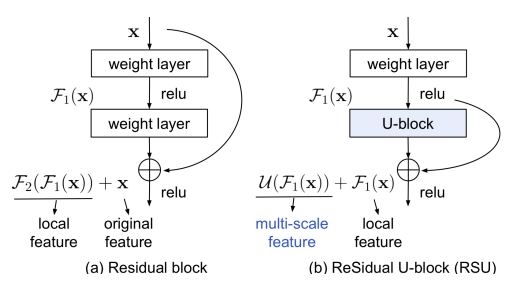

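Illustration of existing convolution blocks and our proposed residual U-block RSU: (a) plain convolution block PLN, (b) residual-like block RES, (c) dense-like block DSE, (d) inception-like block INC, and (e) our residual U-block RSU

Comparison of the residual block and our RSU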

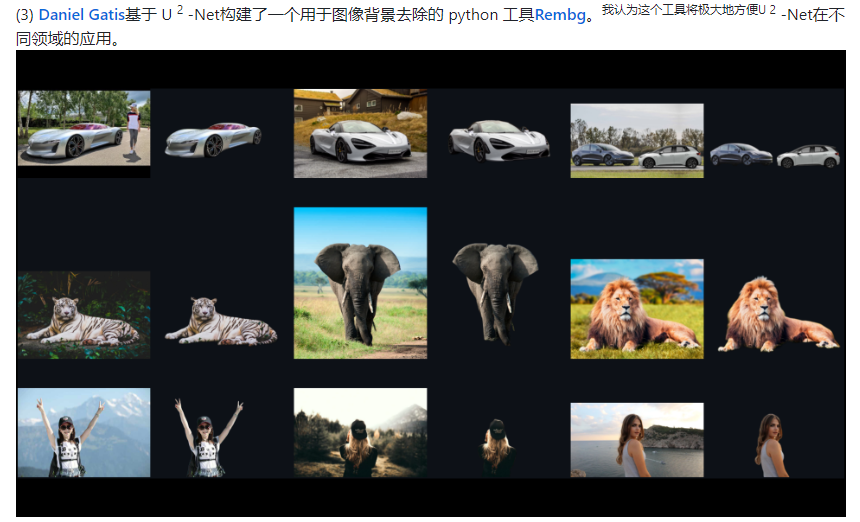

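Judging from the results the authors show, it works surprisingly well. If you are interested, take a look at the code page; it is also very easy to use. Some results are shown below.

The code structure is placed at the end for anyone interested.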

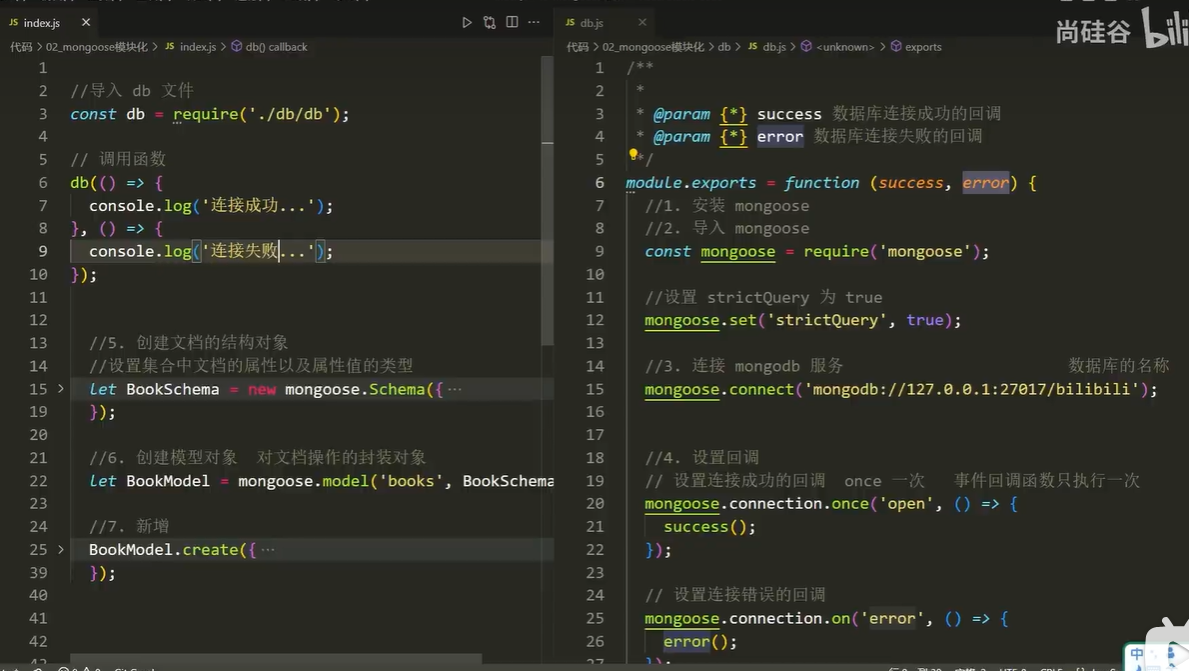

# U²net structure; per the author, forward starts at line 387 of the original file

import torch
import torch.nn as nn
from torchvision import models  # not used below
import torch.nn.functional as F


class REBNCONV(nn.Module):
    # 3x3 convolution (optionally dilated) + BatchNorm + ReLU

    def __init__(self, in_ch=3, out_ch=3, dirate=1):
        super(REBNCONV, self).__init__()

        self.conv_s1 = nn.Conv2d(in_ch, out_ch, 3, padding=1 * dirate, dilation=1 * dirate)
        self.bn_s1 = nn.BatchNorm2d(out_ch)
        self.relu_s1 = nn.ReLU(inplace=True)

    def forward(self, x):
        hx = x
        xout = self.relu_s1(self.bn_s1(self.conv_s1(hx)))

        return xout


## upsample tensor 'src' to have the same spatial size with tensor 'tar'
def _upsample_like(src, tar):
    # F.upsample is deprecated; F.interpolate is the current equivalent
    src = F.interpolate(src, size=tar.shape[2:], mode='bilinear', align_corners=False)

    return src


### RSU-7 ###

class RSU7(nn.Module):  # UNet07DRES(nn.Module):

    def __init__(self, in_ch=3, mid_ch=12, out_ch=3):
        super(RSU7, self).__init__()

        self.rebnconvin = REBNCONV(in_ch, out_ch, dirate=1)

        self.rebnconv1 = REBNCONV(out_ch, mid_ch, dirate=1)
        self.pool1 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv2 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool2 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv3 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool3 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv4 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool4 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv5 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool5 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv6 = REBNCONV(mid_ch, mid_ch, dirate=1)

        self.rebnconv7 = REBNCONV(mid_ch, mid_ch, dirate=2)

        self.rebnconv6d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv5d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv4d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv3d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv2d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv1d = REBNCONV(mid_ch * 2, out_ch, dirate=1)

    def forward(self, x):
        print(x.shape)
        hx = x
        hxin = self.rebnconvin(hx)
        print(hxin.shape)

        hx1 = self.rebnconv1(hxin)
        print(hx1.shape)
        hx = self.pool1(hx1)
        print(hx.shape)

        hx2 = self.rebnconv2(hx)
        print(hx2.shape)
        hx = self.pool2(hx2)
        print(hx.shape)

        hx3 = self.rebnconv3(hx)
        print(hx3.shape)
        hx = self.pool3(hx3)
        print(hx.shape)

        hx4 = self.rebnconv4(hx)
        print(hx4.shape)
        hx = self.pool4(hx4)
        print(hx.shape)

        hx5 = self.rebnconv5(hx)
        print(hx5.shape)
        hx = self.pool5(hx5)
        print(hx.shape)

        hx6 = self.rebnconv6(hx)
        print(hx6.shape)

        hx7 = self.rebnconv7(hx6)
        print(hx7.shape)

        hx6d = self.rebnconv6d(torch.cat((hx7, hx6), 1))
        print(hx6d.shape)
        hx6dup = _upsample_like(hx6d, hx5)
        print(hx6dup.shape)

        hx5d = self.rebnconv5d(torch.cat((hx6dup, hx5), 1))
        print(hx5d.shape)
        hx5dup = _upsample_like(hx5d, hx4)
        print(hx5dup.shape)

        hx4d = self.rebnconv4d(torch.cat((hx5dup, hx4), 1))
        print(hx4d.shape)
        hx4dup = _upsample_like(hx4d, hx3)
        print(hx4dup.shape)

        hx3d = self.rebnconv3d(torch.cat((hx4dup, hx3), 1))
        print(hx3d.shape)
        hx3dup = _upsample_like(hx3d, hx2)
        print(hx3dup.shape)

        hx2d = self.rebnconv2d(torch.cat((hx3dup, hx2), 1))
        print(hx2d.shape)
        hx2dup = _upsample_like(hx2d, hx1)
        print(hx2dup.shape)

        hx1d = self.rebnconv1d(torch.cat((hx2dup, hx1), 1))
        print(hx1d.shape)

        return hx1d + hxin


### RSU-6 ###

class RSU6(nn.Module):  # UNet06DRES(nn.Module):

    def __init__(self, in_ch=3, mid_ch=12, out_ch=3):
        super(RSU6, self).__init__()

        self.rebnconvin = REBNCONV(in_ch, out_ch, dirate=1)

        self.rebnconv1 = REBNCONV(out_ch, mid_ch, dirate=1)
        self.pool1 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv2 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool2 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv3 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool3 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv4 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool4 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv5 = REBNCONV(mid_ch, mid_ch, dirate=1)

        self.rebnconv6 = REBNCONV(mid_ch, mid_ch, dirate=2)

        self.rebnconv5d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv4d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv3d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv2d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv1d = REBNCONV(mid_ch * 2, out_ch, dirate=1)

    def forward(self, x):
        hx = x
        hxin = self.rebnconvin(hx)

        hx1 = self.rebnconv1(hxin)
        hx = self.pool1(hx1)

        hx2 = self.rebnconv2(hx)
        hx = self.pool2(hx2)

        hx3 = self.rebnconv3(hx)
        hx = self.pool3(hx3)

        hx4 = self.rebnconv4(hx)
        hx = self.pool4(hx4)

        hx5 = self.rebnconv5(hx)

        hx6 = self.rebnconv6(hx5)

        hx5d = self.rebnconv5d(torch.cat((hx6, hx5), 1))
        hx5dup = _upsample_like(hx5d, hx4)

        hx4d = self.rebnconv4d(torch.cat((hx5dup, hx4), 1))
        hx4dup = _upsample_like(hx4d, hx3)

        hx3d = self.rebnconv3d(torch.cat((hx4dup, hx3), 1))
        hx3dup = _upsample_like(hx3d, hx2)

        hx2d = self.rebnconv2d(torch.cat((hx3dup, hx2), 1))
        hx2dup = _upsample_like(hx2d, hx1)

        hx1d = self.rebnconv1d(torch.cat((hx2dup, hx1), 1))

        return hx1d + hxin


### RSU-5 ###

class RSU5(nn.Module):  # UNet05DRES(nn.Module):

    def __init__(self, in_ch=3, mid_ch=12, out_ch=3):
        super(RSU5, self).__init__()

        self.rebnconvin = REBNCONV(in_ch, out_ch, dirate=1)

        self.rebnconv1 = REBNCONV(out_ch, mid_ch, dirate=1)
        self.pool1 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv2 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool2 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv3 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool3 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv4 = REBNCONV(mid_ch, mid_ch, dirate=1)

        self.rebnconv5 = REBNCONV(mid_ch, mid_ch, dirate=2)

        self.rebnconv4d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv3d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv2d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv1d = REBNCONV(mid_ch * 2, out_ch, dirate=1)

    def forward(self, x):
        hx = x
        hxin = self.rebnconvin(hx)

        hx1 = self.rebnconv1(hxin)
        hx = self.pool1(hx1)

        hx2 = self.rebnconv2(hx)
        hx = self.pool2(hx2)

        hx3 = self.rebnconv3(hx)
        hx = self.pool3(hx3)

        hx4 = self.rebnconv4(hx)

        hx5 = self.rebnconv5(hx4)

        hx4d = self.rebnconv4d(torch.cat((hx5, hx4), 1))
        hx4dup = _upsample_like(hx4d, hx3)

        hx3d = self.rebnconv3d(torch.cat((hx4dup, hx3), 1))
        hx3dup = _upsample_like(hx3d, hx2)

        hx2d = self.rebnconv2d(torch.cat((hx3dup, hx2), 1))
        hx2dup = _upsample_like(hx2d, hx1)

        hx1d = self.rebnconv1d(torch.cat((hx2dup, hx1), 1))

        return hx1d + hxin


### RSU-4 ###

class RSU4(nn.Module):  # UNet04DRES(nn.Module):

    def __init__(self, in_ch=3, mid_ch=12, out_ch=3):
        super(RSU4, self).__init__()

        self.rebnconvin = REBNCONV(in_ch, out_ch, dirate=1)

        self.rebnconv1 = REBNCONV(out_ch, mid_ch, dirate=1)
        self.pool1 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv2 = REBNCONV(mid_ch, mid_ch, dirate=1)
        self.pool2 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.rebnconv3 = REBNCONV(mid_ch, mid_ch, dirate=1)

        self.rebnconv4 = REBNCONV(mid_ch, mid_ch, dirate=2)

        self.rebnconv3d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv2d = REBNCONV(mid_ch * 2, mid_ch, dirate=1)
        self.rebnconv1d = REBNCONV(mid_ch * 2, out_ch, dirate=1)

    def forward(self, x):
        hx = x
        hxin = self.rebnconvin(hx)

        hx1 = self.rebnconv1(hxin)
        hx = self.pool1(hx1)

        hx2 = self.rebnconv2(hx)
        hx = self.pool2(hx2)

        hx3 = self.rebnconv3(hx)

        hx4 = self.rebnconv4(hx3)

        hx3d = self.rebnconv3d(torch.cat((hx4, hx3), 1))
        hx3dup = _upsample_like(hx3d, hx2)

        hx2d = self.rebnconv2d(torch.cat((hx3dup, hx2), 1))
        hx2dup = _upsample_like(hx2d, hx1)

        hx1d = self.rebnconv1d(torch.cat((hx2dup, hx1), 1))

        return hx1d + hxin


### RSU-4F ###

class RSU4F(nn.Module):  # UNet04FRES(nn.Module):

    def __init__(self, in_ch=3, mid_ch=12, out_ch=3):
        super(RSU4F, self).__init__()

        self.rebnconvin = REBNCONV(in_ch, out_ch, dirate=1)

        self.rebnconv1 = REBNCONV(out_ch, mid_ch, dirate=1)
        self.rebnconv2 = REBNCONV(mid_ch, mid_ch, dirate=2)
        self.rebnconv3 = REBNCONV(mid_ch, mid_ch, dirate=4)

        self.rebnconv4 = REBNCONV(mid_ch, mid_ch, dirate=8)

        self.rebnconv3d = REBNCONV(mid_ch * 2, mid_ch, dirate=4)
        self.rebnconv2d = REBNCONV(mid_ch * 2, mid_ch, dirate=2)
        self.rebnconv1d = REBNCONV(mid_ch * 2, out_ch, dirate=1)

    def forward(self, x):
        hx = x
        hxin = self.rebnconvin(hx)
        print(hxin.shape)

        hx1 = self.rebnconv1(hxin)
        print(hx1.shape)
        hx2 = self.rebnconv2(hx1)
        print(hx2.shape)
        hx3 = self.rebnconv3(hx2)
        print(hx3.shape)

        hx4 = self.rebnconv4(hx3)
        print(hx4.shape)

        hx3d = self.rebnconv3d(torch.cat((hx4, hx3), 1))
        print(hx3d.shape)
        hx2d = self.rebnconv2d(torch.cat((hx3d, hx2), 1))
        print(hx2d.shape)
        hx1d = self.rebnconv1d(torch.cat((hx2d, hx1), 1))
        print(hx1d.shape)

        return hx1d + hxin


##### U^2-Net ####

class U2NET(nn.Module):

    def __init__(self, in_ch=3, out_ch=1):
        super(U2NET, self).__init__()

        self.stage1 = RSU7(in_ch, 32, 64)
        self.pool12 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.stage2 = RSU6(64, 32, 128)
        self.pool23 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.stage3 = RSU5(128, 64, 256)
        self.pool34 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.stage4 = RSU4(256, 128, 512)
        self.pool45 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.stage5 = RSU4F(512, 256, 512)
        self.pool56 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.stage6 = RSU4F(512, 256, 512)

        # decoder
        self.stage5d = RSU4F(1024, 256, 512)
        self.stage4d = RSU4(1024, 128, 256)
        self.stage3d = RSU5(512, 64, 128)
        self.stage2d = RSU6(256, 32, 64)
        self.stage1d = RSU7(128, 16, 64)

        self.side1 = nn.Conv2d(64, out_ch, 3, padding=1)
        self.side2 = nn.Conv2d(64, out_ch, 3, padding=1)
        self.side3 = nn.Conv2d(128, out_ch, 3, padding=1)
        self.side4 = nn.Conv2d(256, out_ch, 3, padding=1)
        self.side5 = nn.Conv2d(512, out_ch, 3, padding=1)
        self.side6 = nn.Conv2d(512, out_ch, 3, padding=1)

        self.outconv = nn.Conv2d(6, out_ch, 1)

    def forward(self, x):
        print(x.shape)
        hx = x

        # stage 1
        hx1 = self.stage1(hx)
        print(hx1.shape)
        hx = self.pool12(hx1)
        print(hx.shape)

        # stage 2
        hx2 = self.stage2(hx)
        print(hx2.shape)
        hx = self.pool23(hx2)
        print(hx.shape)

        # stage 3
        hx3 = self.stage3(hx)
        print(hx3.shape)
        hx = self.pool34(hx3)
        print(hx.shape)

        # stage 4
        hx4 = self.stage4(hx)
        print(hx4.shape)
        hx = self.pool45(hx4)
        print(hx.shape)

        # stage 5
        hx5 = self.stage5(hx)
        print(hx5.shape)
        hx = self.pool56(hx5)
        print(hx.shape)

        # stage 6
        hx6 = self.stage6(hx)
        print(hx6.shape)
        hx6up = _upsample_like(hx6, hx5)
        print(hx6up.shape)

        # -------------------- decoder --------------------
        hx5d = self.stage5d(torch.cat((hx6up, hx5), 1))
        print(hx5d.shape)
        hx5dup = _upsample_like(hx5d, hx4)
        print(hx5dup.shape)

        hx4d = self.stage4d(torch.cat((hx5dup, hx4), 1))
        print(hx4d.shape)
        hx4dup = _upsample_like(hx4d, hx3)
        print(hx4dup.shape)

        hx3d = self.stage3d(torch.cat((hx4dup, hx3), 1))
        print(hx3d.shape)
        hx3dup = _upsample_like(hx3d, hx2)
        print(hx3dup.shape)

        hx2d = self.stage2d(torch.cat((hx3dup, hx2), 1))
        print(hx2d.shape)
        hx2dup = _upsample_like(hx2d, hx1)
        print(hx2dup.shape)

        hx1d = self.stage1d(torch.cat((hx2dup, hx1), 1))
        print(hx1d.shape)

        # side output
        d1 = self.side1(hx1d)
        print(d1.shape)

        d2 = self.side2(hx2d)
        print(d2.shape)
        d2 = _upsample_like(d2, d1)
        print(d2.shape)

        d3 = self.side3(hx3d)
        print(d3.shape)
        d3 = _upsample_like(d3, d1)
        print(d3.shape)

        d4 = self.side4(hx4d)
        print(d4.shape)
        d4 = _upsample_like(d4, d1)
        print(d4.shape)

        d5 = self.side5(hx5d)
        print(d5.shape)
        d5 = _upsample_like(d5, d1)
        print(d5.shape)

        d6 = self.side6(hx6)
        print(d6.shape)
        d6 = _upsample_like(d6, d1)
        print(d6.shape)

        d0 = self.outconv(torch.cat((d1, d2, d3, d4, d5, d6), 1))
        print(d0.shape)

        # torch.sigmoid replaces the deprecated F.sigmoid
        return torch.sigmoid(d0), torch.sigmoid(d1), torch.sigmoid(d2), torch.sigmoid(d3), torch.sigmoid(d4), torch.sigmoid(d5), torch.sigmoid(d6)


### U^2-Net small ###

class U2NETP(nn.Module):

    def __init__(self, in_ch=3, out_ch=1):
        super(U2NETP, self).__init__()

        self.stage1 = RSU7(in_ch, 16, 64)
        self.pool12 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.stage2 = RSU6(64, 16, 64)
        self.pool23 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.stage3 = RSU5(64, 16, 64)
        self.pool34 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.stage4 = RSU4(64, 16, 64)
        self.pool45 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.stage5 = RSU4F(64, 16, 64)
        self.pool56 = nn.MaxPool2d(2, stride=2, ceil_mode=True)

        self.stage6 = RSU4F(64, 16, 64)

        # decoder
        self.stage5d = RSU4F(128, 16, 64)
        self.stage4d = RSU4(128, 16, 64)
        self.stage3d = RSU5(128, 16, 64)
        self.stage2d = RSU6(128, 16, 64)
        self.stage1d = RSU7(128, 16, 64)

        self.side1 = nn.Conv2d(64, out_ch, 3, padding=1)
        self.side2 = nn.Conv2d(64, out_ch, 3, padding=1)
        self.side3 = nn.Conv2d(64, out_ch, 3, padding=1)
        self.side4 = nn.Conv2d(64, out_ch, 3, padding=1)
        self.side5 = nn.Conv2d(64, out_ch, 3, padding=1)
        self.side6 = nn.Conv2d(64, out_ch, 3, padding=1)

        self.outconv = nn.Conv2d(6, out_ch, 1)

    def forward(self, x):
        hx = x

        # stage 1
        hx1 = self.stage1(hx)
        hx = self.pool12(hx1)

        # stage 2
        hx2 = self.stage2(hx)
        hx = self.pool23(hx2)

        # stage 3
        hx3 = self.stage3(hx)
        hx = self.pool34(hx3)

        # stage 4
        hx4 = self.stage4(hx)
        hx = self.pool45(hx4)

        # stage 5
        hx5 = self.stage5(hx)
        hx = self.pool56(hx5)

        # stage 6
        hx6 = self.stage6(hx)
        hx6up = _upsample_like(hx6, hx5)

        # decoder
        hx5d = self.stage5d(torch.cat((hx6up, hx5), 1))
        hx5dup = _upsample_like(hx5d, hx4)

        hx4d = self.stage4d(torch.cat((hx5dup, hx4), 1))
        hx4dup = _upsample_like(hx4d, hx3)

        hx3d = self.stage3d(torch.cat((hx4dup, hx3), 1))
        hx3dup = _upsample_like(hx3d, hx2)

        hx2d = self.stage2d(torch.cat((hx3dup, hx2), 1))
        hx2dup = _upsample_like(hx2d, hx1)

        hx1d = self.stage1d(torch.cat((hx2dup, hx1), 1))

        # side output
        d1 = self.side1(hx1d)

        d2 = self.side2(hx2d)
        d2 = _upsample_like(d2, d1)

        d3 = self.side3(hx3d)
        d3 = _upsample_like(d3, d1)

        d4 = self.side4(hx4d)
        d4 = _upsample_like(d4, d1)

        d5 = self.side5(hx5d)
        d5 = _upsample_like(d5, d1)

        d6 = self.side6(hx6)
        d6 = _upsample_like(d6, d1)

        d0 = self.outconv(torch.cat((d1, d2, d3, d4, d5, d6), 1))

        # torch.sigmoid replaces the deprecated F.sigmoid
        return torch.sigmoid(d0), torch.sigmoid(d1), torch.sigmoid(d2), torch.sigmoid(d3), torch.sigmoid(d4), torch.sigmoid(d5), torch.sigmoid(d6)
