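Crawler Core

1. The HTTP Protocol and Web Development

1. What are request headers and bodies, and response headers and bodies? 2. What does a URL consist of? 3. What exactly are GET and POST requests? 4. What is Content-Type?

(1) Introduction

HTTP stands for Hyper Text Transfer Protocol. It is the protocol used to transfer hypertext between World Wide Web (WWW) servers and local browsers. HTTP is an application-layer protocol; because it is simple and fast, it suits distributed hypermedia information systems. It was proposed in 1990 and has been refined and extended over years of use. HTTP works on a client-server architecture: the browser, acting as the HTTP client, sends every request to the HTTP server (the web server) through a URL, and the web server sends a response back to the client based on the request it received.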

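(2) Sockets

The simplest web application:

import socket

sock = socket.socket()
sock.bind(("127.0.0.1", 8890))
sock.listen(3)
print("Server started...")

while True:
    # Block until a client connects, then read its request bytes
    conn, addr = sock.accept()
    data = conn.recv(1024)
    print("data:", data)
    # A minimal HTTP response: status line, blank line, then the HTML body
    conn.send('HTTP/1.1 200 OK\r\n\r\n<h1 onClick="alert(\'alex is greened\')" style="color:green">Alex</h1>'.encode())
    conn.close()

Test it with Postman!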
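The server above can also be exercised without Postman. The sketch below is a minimal illustration, not part of the original notes: it starts a one-shot copy of the server on a free local port (the helper names serve_once, fetch, and demo are made up for this example) and talks to it with a plain socket client, which shows that an HTTP exchange is just bytes sent over a TCP connection.

```python
import socket
import threading


def serve_once(server_sock):
    """Accept a single connection and answer it with a fixed HTTP response."""
    conn, _addr = server_sock.accept()
    with conn:
        conn.recv(1024)  # read (and ignore) the request bytes
        conn.sendall(b"HTTP/1.1 200 OK\r\n\r\n<h1>Alex</h1>")


def fetch(host, port, path="/"):
    """Send a bare GET request over a socket and return the raw response bytes."""
    request = f"GET {path} HTTP/1.1\r\nHost: {host}:{port}\r\nConnection: close\r\n\r\n"
    with socket.create_connection((host, port), timeout=5) as client:
        client.sendall(request.encode("ascii"))
        chunks = []
        while True:
            chunk = client.recv(1024)
            if not chunk:  # the server closed the connection
                break
            chunks.append(chunk)
    return b"".join(chunks)


def demo():
    """Run the one-shot server in a thread and fetch one page from it."""
    server_sock = socket.socket()
    server_sock.settimeout(5)
    server_sock.bind(("127.0.0.1", 0))  # port 0 lets the OS pick a free port
    server_sock.listen(1)
    port = server_sock.getsockname()[1]
    worker = threading.Thread(target=serve_once, args=(server_sock,))
    worker.start()
    try:
        raw = fetch("127.0.0.1", port)
    finally:
        worker.join()
        server_sock.close()
    # The blank line (\r\n\r\n) separates the response head from the body
    head, _, body = raw.partition(b"\r\n\r\n")
    return head.decode(), body.decode()


print(demo())
```

The client reads until the server closes the connection, which is how it knows the response is complete here, since this toy server sends no Content-Length header.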

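(3) Request Protocol and Response Protocol

The HTTP protocol consists of a request protocol, which the browser must follow when sending data to the server, and a response protocol, which the server must follow when sending data back to the browser. The information exchanged over HTTP is called an HTTP message. The message sent by the requesting side (the client) is called a request message, and the one sent by the responding side (the server) is called a response message. An HTTP message itself is string text made up of multiple lines of data.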
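To make the "multiple lines of text" structure concrete, here is a small sketch that assembles a request message by hand and splits it back into its parts. The function names build_request and parse_message, the host example.com, and the /login path are all illustrative assumptions; real clients and servers handle many more cases (repeated headers, chunked bodies, and so on).

```python
def build_request(method, target, headers, body=b""):
    """Assemble a raw HTTP/1.1 request: start line, header lines, blank line, body."""
    all_headers = dict(headers)
    if body:
        all_headers["Content-Length"] = str(len(body))
    lines = [f"{method} {target} HTTP/1.1"]
    lines += [f"{name}: {value}" for name, value in all_headers.items()]
    head = "\r\n".join(lines) + "\r\n\r\n"
    return head.encode("ascii") + body


def parse_message(raw):
    """Split a raw HTTP message into (start_line, headers, body)."""
    head, _, body = raw.partition(b"\r\n\r\n")
    start_line, *header_lines = head.decode("iso-8859-1").split("\r\n")
    headers = {}
    for line in header_lines:
        name, _, value = line.partition(":")
        headers[name.strip().lower()] = value.strip()
    return start_line, headers, body


raw = build_request(
    "POST",
    "/login",
    {"Host": "example.com", "Content-Type": "application/x-www-form-urlencoded"},
    b"name=test1&id=123456",
)
print(raw.decode())
print(parse_message(raw))
```

A response message has the same layout; only the first line differs (a status line instead of a request line).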

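A complete URL consists of a protocol (scheme), a host (domain name or IP address), a port, a path, and parameters.

For example, in https://www.baidu.com/s?wd=yuan, https is the protocol, www.baidu.com is the host (a domain name that resolves to an IP address), the port is omitted and so defaults to 443 for https (80 for http), /s is the path, and the parameter is wd=yuan.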
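The same breakdown can be done in code. A minimal sketch using the standard library's urllib.parse follows; the helper describe_url and the DEFAULT_PORTS table are names introduced only for this illustration.

```python
from urllib.parse import parse_qs, urlsplit

# Ports assumed when the URL does not spell one out
DEFAULT_PORTS = {"http": 80, "https": 443}


def describe_url(url):
    """Break a URL into scheme, host, port, path, and query parameters."""
    parts = urlsplit(url)
    return {
        "scheme": parts.scheme,
        "host": parts.hostname,
        # parts.port is None when the URL has no explicit port
        "port": parts.port or DEFAULT_PORTS.get(parts.scheme),
        "path": parts.path,
        # parse_qs maps each parameter name to a list of values
        "params": parse_qs(parts.query),
    }


print(describe_url("https://www.baidu.com/s?wd=yuan"))
print(describe_url("http://127.0.0.1:8890/"))
```

Each parameter maps to a list because a query string may repeat a name, as in ?tag=a&tag=b.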

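Request methods: GET and POST

GET puts the submitted data after the URL: a ? separates the URL from the data, and parameters are joined with &, as in EditBook?name=test1&id=123456. POST puts the submitted data in the request body of the HTTP message.

The size of data submitted with GET is limited in practice, because browsers and servers cap the length of a URL. The HTTP protocol itself sets no limit on the size of a POST body, although servers may impose their own.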
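The difference is easy to see by building both kinds of request without sending them. This sketch uses the standard library's urllib.request.Request; the base URL http://example.com/EditBook and the helpers build_get and build_post are assumptions made for the illustration.

```python
from urllib.parse import urlencode
from urllib.request import Request


def build_get(base_url, data):
    """GET: the encoded data goes into the URL after a '?'."""
    return Request(f"{base_url}?{urlencode(data)}", method="GET")


def build_post(base_url, data):
    """POST: the same encoded data goes into the request body instead."""
    body = urlencode(data).encode("ascii")
    return Request(
        base_url,
        data=body,
        method="POST",
        headers={"Content-Type": "application/x-www-form-urlencoded"},
    )


data = {"name": "test1", "id": 123456}
get_req = build_get("http://example.com/EditBook", data)
post_req = build_post("http://example.com/EditBook", data)

print(get_req.get_method(), get_req.full_url, get_req.data)
print(post_req.get_method(), post_req.full_url, post_req.data)
```

Nothing is sent over the network here; the Request objects only describe what would be sent.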

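Response status codes: the job of a status code is to describe the result of a request when a client sends it to the server. From the status code, the user can tell whether the server processed the request normally or an error occurred. A status line such as 200 OK is made up of a three-digit number and a reason phrase.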
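As a small illustration, the sketch below splits a status line into its parts and groups codes by their first digit. The helper names parse_status_line and status_class are made up for this example; http.HTTPStatus is the standard library's table of registered codes.

```python
from http import HTTPStatus


def parse_status_line(line):
    """Split 'HTTP/1.1 200 OK' into (version, numeric code, reason phrase)."""
    version, code, reason = line.split(" ", 2)
    return version, int(code), reason


def status_class(code):
    """Classify a status code by its first digit."""
    classes = {
        1: "informational",
        2: "success",
        3: "redirection",
        4: "client error",
        5: "server error",
    }
    return classes.get(code // 100, "unknown")


for line in ("HTTP/1.1 200 OK", "HTTP/1.1 404 Not Found"):
    version, code, reason = parse_status_line(line)
    print(code, reason, "->", status_class(code), "| standard phrase:", HTTPStatus(code).phrase)
```

A crawler usually checks the numeric code only; the reason phrase is informational and servers may word it differently.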

2. requests & Defeating Anti-Crawling Measures

(1) User-Agent checks

import requests

headers = {
    "User-Agent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/110.0.0.0 Safari/537.36",
}

res = requests.get(
    "https://www.baidu.com/",
    # headers=headers,  # uncomment to send a browser-like User-Agent
)

# Parse the data
with open("baidu.html", "w", encoding="utf-8") as f:
    f.write(res.text)

(2) Referer checks

import requests

headers = {
    "User-Agent": "Mozilla/5.0 (Macintosh; Intel Mac OS X 10_15_7) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/110.0.0.0 Safari/537.36",
    "Referer": "https://movie.douban.com/explore",
}

res = requests.get(
    "https://m.douban.com/rexxar/api/v2/movie/recommend?refresh=0&start=0&count=20&selected_categories=%7B%7D&uncollect=false&tags=",
    headers=headers,
)

# Parse the data
print(res.text)

(3) Cookie checks
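The cookie value used in this subsection is a single header string of name=value pairs separated by semicolons. When individual values need to be inspected or changed, it can help to split that string into a dict first. The sketch below does this with the standard library's http.cookies.SimpleCookie; the helper name cookie_header_to_dict and the sample values are made up for illustration, and SimpleCookie may skip pairs that contain characters it considers illegal.

```python
from http.cookies import SimpleCookie


def cookie_header_to_dict(header_value):
    """Parse a 'name=value; name2=value2' cookie string into a plain dict."""
    jar = SimpleCookie()
    jar.load(header_value)
    return {name: morsel.value for name, morsel in jar.items()}


sample = "device_id=abc123; s=bv11kb1wna; u=42"
print(cookie_header_to_dict(sample))
```

A dict in this shape can be passed to requests through its cookies= argument instead of placing the raw string in the headers.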

import requests

url = "https://stock.xueqiu.com/v5/stock/screener/quote/list.json?page=1&size=30&order=desc&orderby=percent&order_by=percent&market=CN&type=sh_sz"

cookie = 'xq_a_token=a0f5e0d91bc0846f43452e89ae79e08167c42068; xqat=a0f5e0d91bc0846f43452e89ae79e08167c42068; xq_r_token=76ed99965d5bffa08531a6a47501f096f61108e8; xq_id_token=eyJ0eXAiOiJKV1QiLCJhbGciOiJSUzI1NiJ9.eyJ1aWQiOi0xLCJpc3MiOiJ1YyIsImV4cCI6MTY5NTUxNTc5NCwiY3RtIjoxNjkzMjAzODIzMzAwLCJjaWQiOiJkOWQwbjRBWnVwIn0.MCIGGTGaSPe9nVuXkyrXQTlCthdURSnDtqm8dGttO2XYHeaMPSKmHQvsJmbw3OJTRnkf0KHZvgF0W3Rv-9uYe4P2Wizt0g2QzQonONjUmExABmZX0e3ara8BzBQ3b96H7dm0LV4pdBlnOW0A9PUmGRouWM7kVUOGPvd3X7GkB7M_th8pV8SZo9Iz4nzjrwQzxPBa0DlS7whbeNeXMnbnmAPp7z-eG75vdE2Pb3OyZ5Gv-FINhpQtAWo95lTxZVw5C5VHSzbR_-z8uqH6DD0xop4_wvKw5LIVwu6ZZ6TUnNFr3zGU9jWqAGgdzcKgO38dlL6uXNixa9mrKOd1OZnDig; cookiesu=431693203848858; u=431693203848858; Hm_lvt_1db88642e346389874251b5a1eded6e3=1693203851; device_id=7971eba10048692a91d87e3dad9eb9ca; s=bv11kb1wna; Hm_lpvt_1db88642e346389874251b5a1eded6e3=1693203857'

headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/109.0.0.0 Safari/537.36",
    "referer": "https://xueqiu.com/",
    "cookie": cookie,
}

res = requests.get(url, headers=headers)

print(res.text)

3. Request Parameters

(1) GET requests and query parameters
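Query parameters are the name=value pairs after the ? in a URL. For crawling it is often convenient to separate them from the base URL so that one value, such as a page number, can be changed per request. The sketch below does this with urllib.parse; the helper names split_query and with_params and the example.com URL are assumptions made for this illustration.

```python
from urllib.parse import parse_qsl, urlencode, urlsplit, urlunsplit


def split_query(url):
    """Return (base_url, params_dict) for a URL with a query string."""
    parts = urlsplit(url)
    base = urlunsplit((parts.scheme, parts.netloc, parts.path, "", ""))
    # keep_blank_values keeps parameters such as 'tags=' that have no value
    params = dict(parse_qsl(parts.query, keep_blank_values=True))
    return base, params


def with_params(url, **updates):
    """Rebuild the URL with some query parameters replaced or added."""
    base, params = split_query(url)
    params.update({name: str(value) for name, value in updates.items()})
    return f"{base}?{urlencode(params)}"


url = "https://example.com/list.json?page=1&size=30&tags="
print(split_query(url))
for page in (1, 2, 3):
    print(with_params(url, page=page))
```

With requests, the base URL and the dict can be passed separately, as in requests.get(base, params=params), and the library builds the query string itself.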

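(2) POST requests and request-body parameters

import requests

while True:
    wd = input("Enter text to translate: ")
    # data= sends the dict as a URL-encoded form in the request body
    res = requests.post(
        "https://aidemo.youdao.com/trans?",
        params={},
        headers={},
        data={"q": wd, "from": "Auto", "to": "Auto"},
    )
    print(res.json().get("translation")[0])

4. Crawling Images and Videos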

(1) Downloading media streams directly

import requests

# (1) Download an image
url = "https://pic.netbian.com/uploads/allimg/230812/202108-16918428684ab5.jpg"
res = requests.get(url)

# Write the raw bytes to disk
with open("a.jpg", "wb") as f:
    f.write(res.content)

# (2) Download a video
url = "https://vd3.bdstatic.com/mda-nadbjpk0hnxwyndu/720p/h264_delogo/1642148105214867253/mda-nadbjpk0hnxwyndu.mp4?v_from_s=hkapp-haokan-hbe&auth_key=1693223039-0-0-e2da819f15bfb93409ce23540f3b10fa&bcevod_channel=searchbox_feed&pd=1&cr=2&cd=0&pt=3&logid=2639522172&vid=5423681428712102654&klogid=2639522172&abtest=112162_5"
res = requests.get(url)

# Write the raw bytes to disk
with open("video.mp4", "wb") as f:
    f.write(res.content)

(2) Crawling data in batches

import os
import re

import requests

# (1) Collect every image URL on the current page
start_url = "https://pic.netbian.com/4kmeinv/"
res = requests.get(start_url)

# Escape the dot so that only a literal ".jpg" matches
img_url_list = re.findall(r"uploads/allimg/.*?\.jpg", res.text)
print(img_url_list)

# (2) Download every image in a loop
for img_url in img_url_list:
    res = requests.get("https://pic.netbian.com/" + img_url)
    img_name = os.path.basename(img_url)
    with open(img_name, "wb") as f:
        f.write(res.content)

5. Captcha-Solving Platform

Getting the captcha

Captcha-solving platform: Tujian (图鉴)

import base64
import json

import requests


def base64_api(uname, pwd, img, typeid):
    # Read the captcha image and base64-encode it for the JSON payload
    with open(img, "rb") as f:
        base64_data = base64.b64encode(f.read())
    b64 = base64_data.decode()
    data = {"username": uname, "password": pwd, "typeid": typeid, "image": b64}
    result = json.loads(requests.post("http://api.ttshitu.com/predict", json=data).text)
    if result["success"]:
        return result["data"]["result"]
    else:
        # Note: for errors such as "not enough human workers", add handling here
        # so the script does not hang, then retry the recognition
        return result["message"]


if __name__ == "__main__":
    img_path = "./v_code.jpg"
    result = base64_api(uname="yuan0316", pwd="yuan0316", img=img_path, typeid=3)
    print(result)

6. Today's Homework

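Hands-on practice: simulated login

- Gushiwen: https://so.gushiwen.cn

JS Reverse Engineering, Practical Case 1

URL: https://user.wangxiao.cn/login?url=http%3A%2F%2Fks.wangxiao.cn%2F

1. Capturing the login request shows that the "password" field in the request body is encrypted.

2. You can search for the request-body content to find the corresponding source code; here we search by the requested URL instead.

3. The search finds three places, and it is not clear which one is the relevant source. Set breakpoints on all of them, click login again, and see where execution stops.

4. In the source code located this way, password is built from "password + 10-digit timestamp" and then processed by the encryptFn function.

5. Clicking through to the JS function's source shows a long string that is not the password value captured earlier. Judging from the code, the password is encrypted with RSA and the ciphertext is then base64-encoded, which is the order the Python code later in this section follows.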

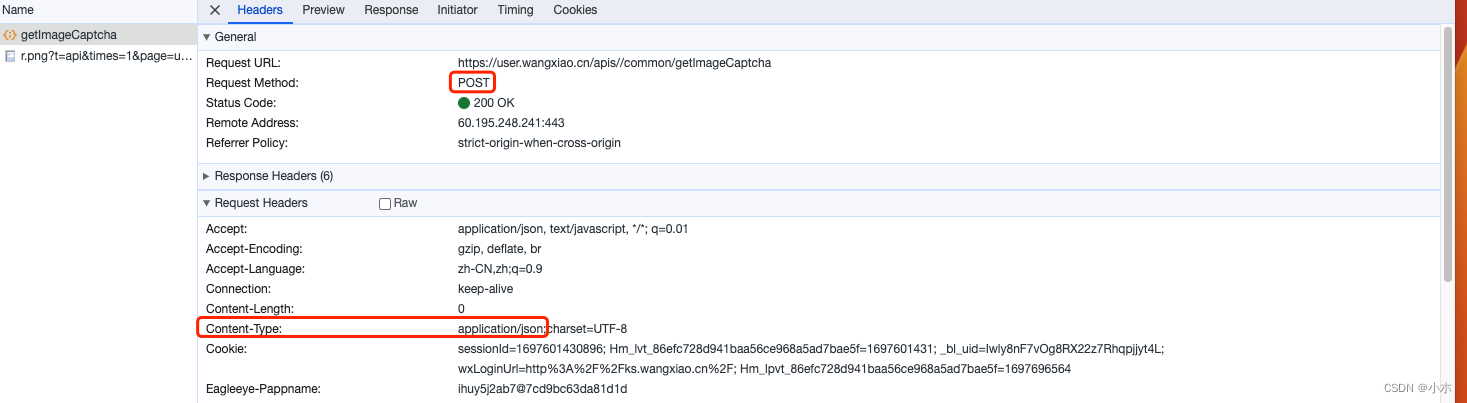

(1) Getting the captcha

import base64
import json

import requests


def base64_api(b64):
    data = {"username": "bb328410948", "password": "bb328410948", "typeid": 3, "image": b64}
    result = json.loads(requests.post("http://api.ttshitu.com/predict", json=data).text)
    if result["success"]:
        return result["data"]["result"]
    else:
        return result["message"]


session = requests.session()
session.headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/113.0.0.0 Safari/537.36"
}

# Visit the login page first so the session picks up its cookies
login_url = "https://user.wangxiao.cn/login?url=http%3A%2F%2Fks.wangxiao.cn%2F"
session.get(login_url)

session.headers["Content-Type"] = "application/json;charset=UTF-8"

# Download the captcha image
verify_img_url = "https://user.wangxiao.cn/apis//common/getImageCaptcha"
img_resp = session.post(verify_img_url).json().get("data")
# The data field looks like "<prefix>,<base64>"; keep only the base64 part
img_b64 = img_resp.split(",")[-1]
print(img_b64)

with open("code.png", mode="wb") as f:
    f.write(base64.b64decode(img_b64))

(2) Reverse-engineering the JS password encryption

RSA asymmetric encryption:

import base64

from Crypto.Cipher import PKCS1_v1_5
from Crypto.PublicKey import RSA

# (1) Create the public and private keys
# rsakey = RSA.generate(1024)
#
# with open("rsa.public.pem", mode="wb") as f:
#     f.write(rsakey.publickey().exportKey())
#
# with open("rsa.private.pem", mode="wb") as f:
#     f.write(rsakey.exportKey())

# (2) Encrypt with the public key
data = "我喜欢好多女孩"  # sample plaintext; non-ASCII, so it is UTF-8 encoded before encryption

with open("rsa.public.pem", mode="r") as f:
    pk = f.read()

rsa_pk = RSA.importKey(pk)
rsa = PKCS1_v1_5.new(rsa_pk)
result = rsa.encrypt(data.encode("utf-8"))
print("Raw ciphertext:", result)

# Base64-encode the ciphertext so it is easy to transmit
b64_result = base64.b64encode(result).decode("utf-8")
print("RSA-encrypted data:", b64_result)

# (3) Decrypt with the private key

data = "JRI0YcnIVQ6elt6lKnNGxmBOaFRb4vkcj5vO6z5/bEvEB8WgHvjmHag6kaDQNXLDsISWR8bEjBhy7m78RGaDmEchVam7Bl1UXFhMq3YeQ6bqsGf+lKHtC8eYN5MJAeJ8vYUOVY3gShKhMT+WVfmIdEWFIrRM1Z6p3AGH3Qrq+0U="

ret = base64.b64decode(data.encode())

with open("rsa.private.pem", mode="r") as f:
    prikey = f.read()

rsa_pk = RSA.importKey(prikey)
rsa = PKCS1_v1_5.new(rsa_pk)
# The second argument is the sentinel value returned if decryption fails
result = rsa.decrypt(ret, None)
print("RSA-decrypted data:", result.decode("utf-8"))
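To see why the public key can only encrypt and the private key is needed to decrypt, it can help to look at the arithmetic with deliberately tiny numbers. The sketch below is a toy illustration only: it uses the textbook primes 61 and 53, has no padding, and is not secure. The function names are made up, and real code should keep using a library such as the one above.

```python
def make_keys(p, q, e):
    """Derive a toy RSA key pair from two small primes and a public exponent."""
    n = p * q
    phi = (p - 1) * (q - 1)
    d = pow(e, -1, phi)  # modular inverse of e (requires Python 3.8+)
    return (n, e), (n, d)


def encrypt(message, public_key):
    """Ciphertext = message^e mod n."""
    n, e = public_key
    return pow(message, e, n)


def decrypt(ciphertext, private_key):
    """Message = ciphertext^d mod n."""
    n, d = private_key
    return pow(ciphertext, d, n)


public_key, private_key = make_keys(61, 53, 17)
ciphertext = encrypt(65, public_key)
print("public:", public_key, "private:", private_key)
print("ciphertext:", ciphertext, "decrypted:", decrypt(ciphertext, private_key))
```

Anyone holding (n, e) can compute the ciphertext, but recovering d requires knowing the prime factors of n, which is infeasible at real key sizes.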

import base64
import json

import requests
from Crypto.Cipher import PKCS1_v1_5
from Crypto.PublicKey import RSA


def base64_api(b64):
    data = {"username": "yuan0316", "password": "yuan0316", "typeid": 3, "image": b64}
    result = json.loads(requests.post("http://api.ttshitu.com/predict", json=data).text)
    if result["success"]:
        return result["data"]["result"]
    else:
        return result["message"]


# Use a session to keep cookie state:
# every Set-Cookie returned by the server is saved and updated automatically,
# but cookies added dynamically by JS are not captured.
# A cookie you add by hand is kept on all later requests.
session = requests.session()

# If the site sets a cookie through JS, it can be kept by hand like this:
# session.cookies['abc'] = "123456"

session.headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/113.0.0.0 Safari/537.36"
}

# Visit the login page first so the session picks up its cookies
login_url = "https://user.wangxiao.cn/login?url=http%3A%2F%2Fks.wangxiao.cn%2F"
session.get(login_url)

# Whether this works depends on the actual site.
# Set once for all later requests (none of which may be HTML page requests)
session.headers["Content-Type"] = "application/json;charset=UTF-8"

# Download the captcha image
verify_img_url = "https://user.wangxiao.cn/apis//common/getImageCaptcha"
img_resp = session.post(verify_img_url)
img_resp_json = img_resp.json()
img_base64 = img_resp_json.get("data").split(",")[-1]

with open("tu.png", mode="wb") as f:
    f.write(base64.b64decode(img_base64))

# Recognize the captcha
verify_code = base64_api(img_base64)
print(verify_code)

# Before encrypting, call getTime to obtain a timestamp
getTime_url = "https://user.wangxiao.cn/apis//common/getTime"
getTime_resp = session.post(getTime_url)
getTime_json = getTime_resp.json()
getTime = getTime_json.get("data")

login_name = "13121758648"
password_ming = "13121758648yuan"

# Encrypt the password:
# RSA-encrypt (password + time)

# The RSA public key is the base64 string below; first decode it into bytes

rsa_key_bs = base64.b64decode("MIGfMA0GCSqGSIb3DQEBAQUAA4GNADCBiQKBgQDA5Zq6ZdH/RMSvC8WKhp5gj6Ue4Lqjo0Q2PnyGbSkTlYku0HtVzbh3S9F9oHbxeO55E8tEEQ5wj/+52VMLavcuwkDypG66N6c1z0Fo2HgxV3e0tqt1wyNtmbwg7ruIYmFM+dErIpTiLRDvOy+0vgPcBVDfSUHwUSgUtIkyC47UNQIDAQAB")

# Load the public key
pub_key = RSA.importKey(rsa_key_bs)

# Create the cipher
rsa = PKCS1_v1_5.new(pub_key)

# RSA-encrypt; the plaintext is password + time
password_mi_bs = rsa.encrypt((password_ming + getTime).encode("utf-8"))

# Base64-encode the encrypted bytes
password_mi = base64.b64encode(password_mi_bs).decode()

# All login parameters are ready, so log in
login_data = {
    "imageCaptchaCode": verify_code,
    "password": password_mi,
    "userName": login_name,
}
password_login_url = "https://user.wangxiao.cn/apis//login/passwordLogin"
login_resp = session.post(password_login_url, data=json.dumps(login_data))
login_json = login_resp.json()
login_success_data = login_json.get("data")

Notes:

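1. POST request-body type: requests.post(url, data=some_dict) sends the body as a URL-encoded form by default. When the server expects a JSON body, either serialize it yourself with json.dumps(data) and set a JSON Content-Type header, or pass json=data and let requests do both.

2. When a page redirects or leads to further requests, the server usually checks whether cookies are sent. A session adds them automatically:

# Save cookies automatically
session = requests.session()

# Add request headers
session.headers = {
    "User-Agent": "Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/113.0.0.0 Safari/537.36"
}

# Visit the home page to load the cookies
session.get(login_url)

# Later session.get/post calls to second-level pages send the cookies automatically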
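For the first note, the two body encodings can be compared without sending anything. The sketch below builds both from the same dict using only the standard library; the helper names form_body and json_body and the sample payload values are made up for this illustration.

```python
import json
from urllib.parse import urlencode


def form_body(payload):
    """Body and header for a URL-encoded form (what data=dict produces)."""
    body = urlencode(payload).encode("utf-8")
    return {"Content-Type": "application/x-www-form-urlencoded"}, body


def json_body(payload):
    """Body and header for a JSON request (what json.dumps plus a JSON header produces)."""
    body = json.dumps(payload).encode("utf-8")
    return {"Content-Type": "application/json;charset=UTF-8"}, body


payload = {"userName": "demo_user", "imageCaptchaCode": "ab12"}
print(form_body(payload))
print(json_body(payload))
```

A server that parses the body as JSON will reject the first form, which is why the login request above serializes the dict with json.dumps and sets the Content-Type header on the session.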