Contents

Forward pass

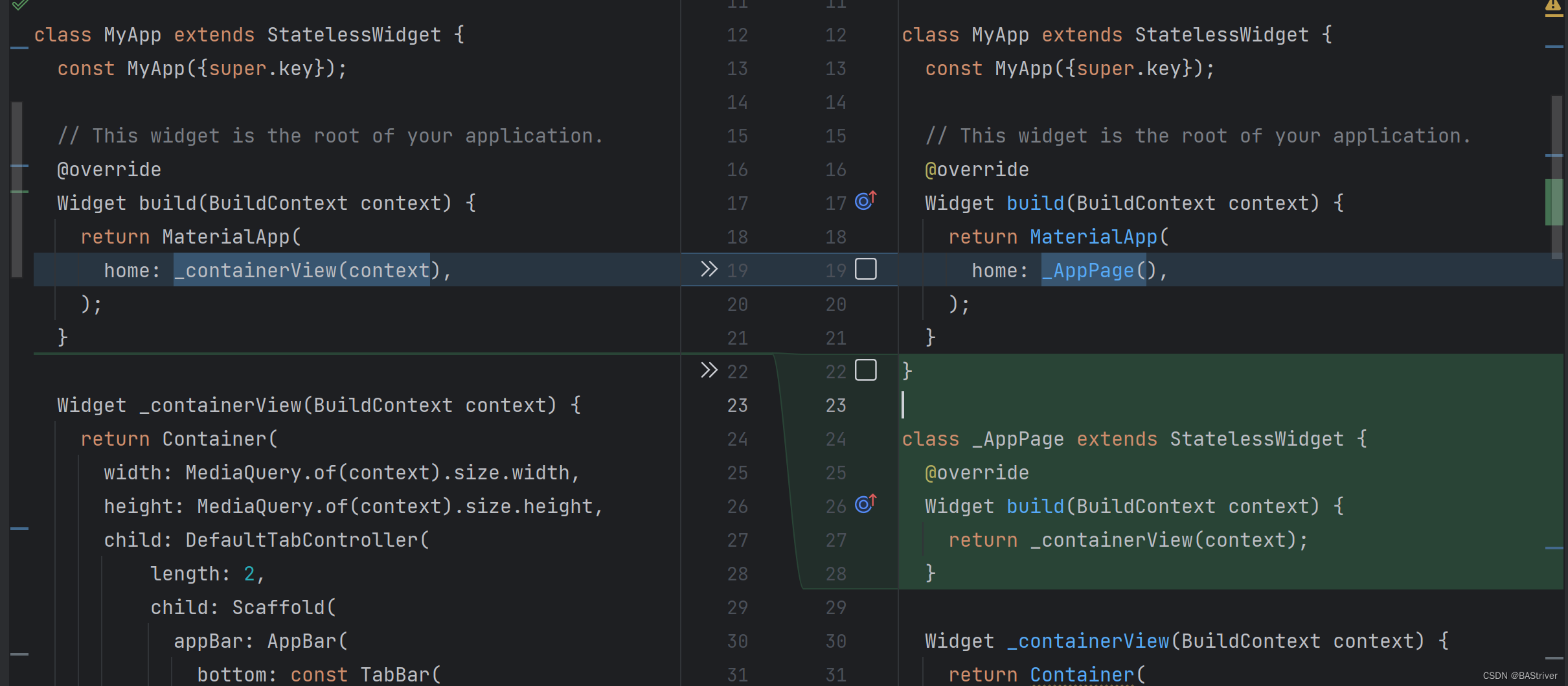

Training process

Network architecture

Forward pass

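batch_preds --> tgt --> tgt = cat(tgt, padding) --> tgt_embedding --> tgt_mask, tgt_padding_mask. From an NLP point of view, tgt is the token sequence (the "sentence"), and each of its entries is an index into the vocabulary. The encoder works directly on the image and outputs a sequence of patches; with 16× downsampling, the final encoder sequence length is 576.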
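The 576 figure follows from the patch embedding in the printed architecture (a Conv2d with kernel_size=16, stride=16). The sketch below traces shapes through only the patch embedding and the AdaptiveAvgPool1d(256) bottleneck. It assumes a 384×384 input (the size consistent with 576 = 24 × 24) and skips the transformer blocks, which keep the shape, as well as any class token.

```python
import torch
import torch.nn as nn

# IMG_SIZE = 384 is an assumption consistent with 576 output tokens
IMG_SIZE, PATCH_SIZE, EMBED_DIM, D_MODEL = 384, 16, 384, 256

patch_embed = nn.Conv2d(3, EMBED_DIM, kernel_size=PATCH_SIZE, stride=PATCH_SIZE)
bottleneck = nn.AdaptiveAvgPool1d(D_MODEL)


def encode_shapes(images: torch.Tensor) -> torch.Tensor:
    feats = patch_embed(images)                # (B, 384, 24, 24): 16x downsampling
    tokens = feats.flatten(2).transpose(1, 2)  # (B, 576, 384): one token per patch
    return bottleneck(tokens)                  # (B, 576, 256): pools the feature dim


print((IMG_SIZE // PATCH_SIZE) ** 2)  # 24 * 24 patches
```

Note that the bottleneck pools over the last (feature) dimension, so it changes the width from 384 to the decoder's d_model of 256 without changing the sequence length.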

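The token vocabulary (how it is set up is described here) is embedded with nn.Embedding. In the decoder, a causal mask tgt_mask is generated from the maximum sequence length: a square matrix whose lower triangle, diagonal included, is all 0 (positions that may be attended to), with -inf above the diagonal. There is also a padding mask tgt_padding_mask: at the first step only its first entry is False, because position 0 holds the start token and every other position is padding (True).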

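The decoder's input sequence is initialized entirely with the padding token (406), except for one start token at the front, and the input token embeddings are computed from this sequence.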
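The initial decoder input and its two masks can be sketched in PyTorch as below. BOS = 404 and PAD = 406 come from the text; MAX_LEN is a hypothetical maximum length, and the padded sequence is assumed to be MAX_LEN - 1 tokens long. The float causal mask follows the same convention as PyTorch's nn.Transformer.generate_square_subsequent_mask.

```python
import torch

BOS, PAD = 404, 406
MAX_LEN = 8  # hypothetical maximum sequence length


def init_tgt(batch_size: int, max_len: int = MAX_LEN) -> torch.Tensor:
    # Start token followed by padding tokens
    tgt = torch.full((batch_size, max_len - 1), PAD, dtype=torch.long)
    tgt[:, 0] = BOS
    return tgt


def generate_square_subsequent_mask(sz: int) -> torch.Tensor:
    # 0.0 on and below the diagonal (visible), -inf above it (future tokens hidden)
    return torch.triu(torch.full((sz, sz), float("-inf")), diagonal=1)


def create_mask(tgt: torch.Tensor):
    tgt_mask = generate_square_subsequent_mask(tgt.size(1))
    tgt_padding_mask = tgt == PAD  # True marks padding positions to ignore
    return tgt_mask, tgt_padding_mask


tgt = init_tgt(batch_size=2)
tgt_mask, tgt_padding_mask = create_mask(tgt)
```

For the initial sequence, tgt_padding_mask[0] is [False, True, True, ...], i.e. only the start token is a real token.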

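The decoder therefore takes four inputs: the encoder's output sequence plus positional encoding, the embeddings of the initialized tokens, the causal mask, and the padding mask.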
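The four decoder inputs can be wired into PyTorch's nn.TransformerDecoder roughly as follows. This is a minimal sketch, not the repository's code: nhead=8 is an assumption (the printed architecture does not show the head count), the learnable decoder positional embedding is inferred from the decoder_pos_drop layer, and MAX_LEN = 8 is hypothetical. The other sizes (256, 2048, 6 layers, 407 tokens) follow the printed architecture.

```python
import torch
import torch.nn as nn

D_MODEL, VOCAB_SIZE, PAD = 256, 407, 406
NUM_PATCHES = 576
MAX_LEN = 8  # hypothetical; tgt is MAX_LEN - 1 tokens long

embedding = nn.Embedding(VOCAB_SIZE, D_MODEL)
# nhead=8 is an assumption; batch_first defaults to False, so inputs are (seq, batch, dim)
layer = nn.TransformerDecoderLayer(d_model=D_MODEL, nhead=8, dim_feedforward=2048, dropout=0.1)
decoder = nn.TransformerDecoder(layer, num_layers=6)
output = nn.Linear(D_MODEL, VOCAB_SIZE)

# Learnable positional embeddings (assumed shapes and initialization)
encoder_pos_embed = nn.Parameter(torch.randn(1, NUM_PATCHES, D_MODEL) * 0.02)
decoder_pos_embed = nn.Parameter(torch.randn(1, MAX_LEN - 1, D_MODEL) * 0.02)


def decode(encoder_out: torch.Tensor, tgt: torch.Tensor) -> torch.Tensor:
    """encoder_out: (B, 576, 256), tgt: (B, MAX_LEN - 1) -> logits (B, MAX_LEN - 1, 407)."""
    tgt_len = tgt.size(1)
    # Input 3: causal mask, 0 on/below the diagonal and -inf above it
    tgt_mask = torch.triu(torch.full((tgt_len, tgt_len), float("-inf")), diagonal=1)
    # Input 4: padding mask, True where the token is PAD
    tgt_padding_mask = tgt == PAD
    # Input 1: encoder output + positional encoding, transposed to (S, B, E)
    memory = (encoder_out + encoder_pos_embed).transpose(0, 1)
    # Input 2: token embeddings (+ assumed decoder positional embedding), as (T, B, E)
    tgt_emb = (embedding(tgt) + decoder_pos_embed).transpose(0, 1)
    preds = decoder(tgt=tgt_emb, memory=memory,
                    tgt_mask=tgt_mask, tgt_key_padding_mask=tgt_padding_mask)
    return output(preds.transpose(0, 1))
```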

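Generation is autoregressive, so each forward call only needs to produce the next token. The decoder output at position length-1 (the last real, non-padding token) is the one that predicts the following token, so the prediction keeps only that position:

return self.output(preds)[:, length-1, :]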
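A minimal sketch of the autoregressive loop around this slice. logits_fn is a hypothetical stand-in for the model's forward pass that returns logits for every position of the padded sequence, max_len is hypothetical, and the next token is picked greedily with argmax.

```python
import torch

BOS, PAD = 404, 406


def greedy_generate(logits_fn, batch_size: int, max_len: int, steps: int) -> torch.Tensor:
    assert steps <= max_len - 1, "the padded sequence is only max_len - 1 tokens long"
    seq = torch.full((batch_size, 1), BOS, dtype=torch.long)
    for _ in range(steps):
        length = seq.size(1)
        # Pad the current prefix so the decoder always sees max_len - 1 tokens
        padding = torch.full((batch_size, max_len - length - 1), PAD, dtype=torch.long)
        tgt = torch.cat([seq, padding], dim=1)
        logits = logits_fn(tgt)                 # (B, max_len - 1, vocab)
        next_logits = logits[:, length - 1, :]  # output of the last real token
        next_tok = next_logits.argmax(dim=-1, keepdim=True)
        seq = torch.cat([seq, next_tok], dim=1)
    return seq
```

Each iteration re-runs the decoder on the whole padded sequence and appends one token, which is why only one output position is read per call.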

Note

For background on sentence generation in NLP, greedy search, and top_k_top_p_filtering, see here.
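The two decoding strategies can be sketched as below. top_k_top_p_filtering here is an illustrative reimplementation in the style of the commonly used helper, not necessarily the exact function the code imports, and pick_next is a hypothetical name. Greedy search takes the argmax; with top-k or top-p set, unlikely tokens are masked to -inf and the next token is sampled.

```python
import torch
import torch.nn.functional as F


def top_k_top_p_filtering(logits, top_k=0, top_p=1.0, filter_value=float("-inf")):
    """logits: (batch, vocab). Returns a filtered copy."""
    if top_k > 0:
        top_k = min(top_k, logits.size(-1))
        kth = torch.topk(logits, top_k, dim=-1).values[..., -1, None]
        logits = logits.masked_fill(logits < kth, filter_value)  # keep the k largest
    if top_p < 1.0:
        sorted_logits, sorted_idx = torch.sort(logits, descending=True, dim=-1)
        cum_probs = torch.cumsum(F.softmax(sorted_logits, dim=-1), dim=-1)
        sorted_remove = cum_probs > top_p
        # Shift right so the token that crosses the threshold is kept
        sorted_remove[..., 1:] = sorted_remove[..., :-1].clone()
        sorted_remove[..., 0] = False
        remove = sorted_remove.scatter(-1, sorted_idx, sorted_remove)  # back to vocab order
        logits = logits.masked_fill(remove, filter_value)
    return logits


def pick_next(logits, top_k=0, top_p=1.0):
    if top_k == 0 and top_p == 1.0:
        return logits.argmax(dim=-1, keepdim=True)  # greedy search
    probs = F.softmax(top_k_top_p_filtering(logits, top_k, top_p), dim=-1)
    return torch.multinomial(probs, num_samples=1)  # sampling
```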

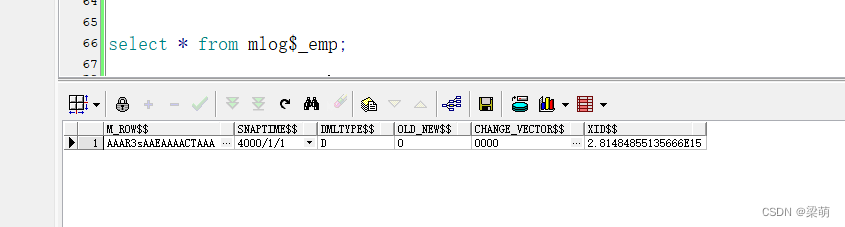

Predictions are generated autoregressively. The predictions produced by the forward pass are visualized as follows.

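Here 404 = num_bins + num_classes. After discretization there are 406 tokens (indices 0 to 405), because the start (404) and end (405) tokens are added. The padding token takes index 406, which is why the Embedding and output layers in the network architecture have 407 entries.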
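A small sketch of the resulting token layout. It assumes num_bins = 384 and 20 classes, one split consistent with 404 = num_bins + num_classes; the text does not state the individual values. quantize and encode_objects are hypothetical helpers, with quantize chosen as the inverse of the dequantization step box / (num_bins - 1).

```python
NUM_BINS = 384     # assumption
NUM_CLASSES = 20   # assumption
BOS = NUM_BINS + NUM_CLASSES  # 404
EOS = BOS + 1                 # 405
PAD = EOS + 1                 # 406
VOCAB_SIZE = PAD + 1          # 407, matching Embedding(407, 256)


def quantize(coord: float) -> int:
    # Normalized coordinate in [0, 1] -> coordinate token in [0, NUM_BINS - 1]
    return int(round(coord * (NUM_BINS - 1)))


def encode_objects(objects):
    """objects: list of ((xmin, ymin, xmax, ymax) normalized, class_id)."""
    seq = [BOS]
    for box, class_id in objects:
        seq += [quantize(c) for c in box]  # 4 coordinate tokens
        seq.append(NUM_BINS + class_id)    # class token lives after the bins
    return seq + [EOS]


print(encode_objects([((0.1, 0.2, 0.4, 0.6), 3)]))
```

Coordinates occupy tokens 0 to 383, classes 384 to 403, and the three special tokens follow.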

Once the network's output predictions are obtained, they are post-processed in the following steps.
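The eight steps listed below can be sketched in plain Python for a single image. NUM_BINS = 384 is an assumption (the text only gives num_bins + num_classes = 404), EOS = 405 comes from the text, and postprocess is a hypothetical helper, not the repository's function.

```python
NUM_BINS = 384  # assumption
EOS = 405


def postprocess(tokens, img_w, img_h, num_bins=NUM_BINS, eos=EOS):
    """tokens: generated ids starting with BOS. Returns [(label, [xmin, ymin, xmax, ymax])]."""
    # 1. index of the first EOS (no EOS means nothing detected)
    if eos not in tokens:
        return []
    eos_idx = tokens.index(eos)
    # 2. BOS + 5 tokens per object means eos_idx - 1 must be a multiple of 5
    if (eos_idx - 1) % 5 != 0:
        return []
    # 3. drop BOS and everything from EOS onward (padding / noise)
    body = tokens[1:eos_idx]
    objects = []
    # 4. take 5 tokens at a time
    for i in range(0, len(body), 5):
        # 5. first 4 tokens are the box, the 5th is the class
        *box_tokens, class_token = body[i:i + 5]
        # 6. class token minus num_bins gives the class index
        label = class_token - num_bins
        # 7. dequantize to normalized [0, 1] coordinates
        xmin, ymin, xmax, ymax = (t / (num_bins - 1) for t in box_tokens)
        # 8. scale back to the input image size
        objects.append((label, [xmin * img_w, ymin * img_h, xmax * img_w, ymax * img_h]))
    return objects
```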

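1. Find the index of the first end token (EOS).

2. Check whether index - 1 is a multiple of 5 (a valid sequence is the start token followed by 5 tokens per object). If it is not, the prediction is not processed and the image is treated as having no detected objects.

3. Discard the start token and the extra padding (noise) tokens.

4. Iterate over the remaining tokens, taking 5 at a time.

5. The first 4 tokens carry the box information; the 5th is the class.

6. Subtract num_bins from the discretized class token to get the final class index.

7. Dequantize the box: box / (num_bins - 1) gives normalized box coordinates in the [0, 1] range.

8. Scale the box back to the input image size (x coordinates by the width, y coordinates by the height); the box format is (Xmin, Ymin, Xmax, Ymax).

Training process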

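As for the various training settings in the paper, including the sequence augmentation technique, I did not find them in the code and did not read that part closely.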

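Loss function: the paper describes the objective as maximum likelihood estimation. Maximizing the log-likelihood of each target token is equivalent to minimizing its negative log-likelihood, so the final form is the cross-entropy loss.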
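A minimal PyTorch sketch of this loss. It assumes teacher forcing (the decoder sees the sequence without its last token and must predict the sequence shifted by one) and that padding positions are excluded through ignore_index; neither detail is stated in the text. sequence_loss is a hypothetical helper name.

```python
import torch
import torch.nn as nn

PAD = 406  # padding token id

criterion = nn.CrossEntropyLoss(ignore_index=PAD)  # padding positions add no loss


def sequence_loss(logits: torch.Tensor, target_seq: torch.Tensor) -> torch.Tensor:
    """logits: (B, L - 1, V), predicted from target_seq[:, :-1]; target_seq: (B, L)."""
    labels = target_seq[:, 1:]  # each position must predict the next token
    return criterion(logits.reshape(-1, logits.size(-1)), labels.reshape(-1))
```

Averaging the per-token negative log-likelihood over the non-padding positions is exactly the maximum likelihood objective written as a cross-entropy.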

Network architecture

EncoderDecoder(
  (encoder): Encoder(
    (model): VisionTransformer(
      (patch_embed): PatchEmbed(
        (proj): Conv2d(3, 384, kernel_size=(16, 16), stride=(16, 16))
        (norm): Identity()
      )
      (pos_drop): Dropout(p=0.0, inplace=False)
      (blocks): Sequential(
        (0-11): 12 x Block(
          (norm1): LayerNorm((384,), eps=1e-06, elementwise_affine=True)
          (attn): Attention(
            (qkv): Linear(in_features=384, out_features=1152, bias=True)
            (attn_drop): Dropout(p=0.0, inplace=False)
            (proj): Linear(in_features=384, out_features=384, bias=True)
            (proj_drop): Dropout(p=0.0, inplace=False)
          )
          (ls1): LayerScale()
          (drop_path1): Identity()
          (norm2): LayerNorm((384,), eps=1e-06, elementwise_affine=True)
          (mlp): Mlp(
            (fc1): Linear(in_features=384, out_features=1536, bias=True)
            (act): GELU()
            (drop1): Dropout(p=0.0, inplace=False)
            (fc2): Linear(in_features=1536, out_features=384, bias=True)
            (drop2): Dropout(p=0.0, inplace=False)
          )
          (ls2): LayerScale()
          (drop_path2): Identity()
        )
      )
      (norm): LayerNorm((384,), eps=1e-06, elementwise_affine=True)
      (fc_norm): Identity()
      (head): Identity()
    )
    (bottleneck): AdaptiveAvgPool1d(output_size=256)
  )
  (decoder): Decoder(
    (embedding): Embedding(407, 256)
    (decoder_pos_drop): Dropout(p=0.05, inplace=False)
    (decoder): TransformerDecoder(
      (layers): ModuleList(
        (0-5): 6 x TransformerDecoderLayer(
          (self_attn): MultiheadAttention(
            (out_proj): NonDynamicallyQuantizableLinear(in_features=256, out_features=256, bias=True)
          )
          (multihead_attn): MultiheadAttention(
            (out_proj): NonDynamicallyQuantizableLinear(in_features=256, out_features=256, bias=True)
          )
          (linear1): Linear(in_features=256, out_features=2048, bias=True)
          (dropout): Dropout(p=0.1, inplace=False)
          (linear2): Linear(in_features=2048, out_features=256, bias=True)
          (norm1): LayerNorm((256,), eps=1e-05, elementwise_affine=True)
          (norm2): LayerNorm((256,), eps=1e-05, elementwise_affine=True)
          (norm3): LayerNorm((256,), eps=1e-05, elementwise_affine=True)
          (dropout1): Dropout(p=0.1, inplace=False)
          (dropout2): Dropout(p=0.1, inplace=False)
          (dropout3): Dropout(p=0.1, inplace=False)
        )
      )
    )
    (output): Linear(in_features=256, out_features=407, bias=True)
    (encoder_pos_drop): Dropout(p=0.05, inplace=False)
  )
)
