Building GPT-2 from Scratch with a Transformer

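Follow {晓理紫|小李子} on WeChat (VX) for technical updates. If you find this interesting, please share it with others who might need it. Thanks for your support!

If you found this helpful, please follow me.

To get the source code, follow 晓理紫 on WeChat (VX) and reply "chatgpt-0".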

- Imports and hyperparameters

import torch

import torch.nn as nn

from torch.nn import functional as F

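# Changes made to scale up the network: multiple heads, dropout in the attention and feed-forward layers, and a configurable number of layers

# Hyperparameters
batch_size = 64
block_size = 34  # block size: 34 characters of context are used to predict the next one
max_iters = 5000
eval_interval = 500
learning_rate = 3e-4
device = 'cuda' if torch.cuda.is_available() else 'cpu'
eval_iters = 200
n_embd = 384  # embedding dimension
n_head = 6  # 6 heads, each of dimension 384/6 = 64
n_layer = 6  # 6 layers
dropout = 0.2

torch.manual_seed(1337)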
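As a quick sanity check on these hyperparameters, the per-head width is `n_embd // n_head`, and the concatenated heads have to add back up to `n_embd`. The sketch below uses plain Python rather than PyTorch; `head_size` mirrors the name used later in `Block`.

```python
# Values copied from the hyperparameter list above.
n_embd = 384
n_head = 6

# Each head works in a subspace of n_embd // n_head dimensions.
head_size = n_embd // n_head

# The heads are concatenated along the channel dimension, so the
# division must be exact for the output to have n_embd channels again.
assert n_embd % n_head == 0, "n_embd must be divisible by n_head"

print(head_size)           # 64
print(head_size * n_head)  # 384
```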

- Data processing

with open('input.txt', 'r', encoding='utf-8') as f:
    text = f.read()

chars = sorted(list(set(text)))
vocab_size = len(chars)
stoi = {ch: i for i, ch in enumerate(chars)}
itos = {i: ch for i, ch in enumerate(chars)}
encode = lambda s: [stoi[c] for c in s]  # string -> list of token ids
decode = lambda l: ''.join([itos[i] for i in l])  # list of token ids -> string

data = torch.tensor(encode(text), dtype=torch.long)
n = int(0.9 * len(data))  # first 90% for training, the rest for validation
train_data = data[:n]
val_data = data[n:]

def get_batch(split):
    data = train_data if split == "train" else val_data
    # sample start offsets so that every window of block_size + 1 tokens fits in the data
    ix = torch.randint(len(data) - block_size, (batch_size,))
    x = torch.stack([data[i:i+block_size] for i in ix])
    y = torch.stack([data[i+1:i+block_size+1] for i in ix])  # targets: inputs shifted by one
    x, y = x.to(device), y.to(device)
    return x, y
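To make the data pipeline concrete, here is a minimal pure-Python sketch of the same character-level tokenizer and the input/target shift. The sample string, the small `block_size`, and the helper `sample_window` are made up for illustration; the real code reads `input.txt` and works on tensors.

```python
import random

# Hypothetical stand-in for the contents of input.txt.
text = "hello world, hello transformer"

chars = sorted(set(text))
stoi = {ch: i for i, ch in enumerate(chars)}
itos = {i: ch for i, ch in enumerate(chars)}

def encode(s):
    return [stoi[c] for c in s]

def decode(ids):
    return "".join(itos[i] for i in ids)

data = encode(text)
block_size = 8  # small value for illustration only

def sample_window(data, block_size, rng):
    """Return one (x, y) pair; y is x shifted one position to the right."""
    # The largest start offset still leaves room for the shifted target.
    i = rng.randrange(len(data) - block_size)
    x = data[i:i + block_size]
    y = data[i + 1:i + block_size + 1]
    return x, y

rng = random.Random(1337)
x, y = sample_window(data, block_size, rng)
print(repr(decode(x)))
print(repr(decode(y)))
```

At every position `t`, the model sees `x[:t+1]` and is trained to predict `y[t]`, so one window of `block_size` tokens yields `block_size` training examples.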

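- Estimating the loss

@torch.no_grad()
def estimate_loos(model):
    out = {}
    model.eval()  # disable dropout while evaluating
    for split in ['train', 'val']:
        losses = torch.zeros(eval_iters)
        for k in range(eval_iters):
            x, y = get_batch(split)
            logits, loss = model(x, y)
            losses[k] = loss.mean()
        out[split] = losses.mean()  # average over eval_iters batches to reduce noise
    model.train()  # back to training mode
    return out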

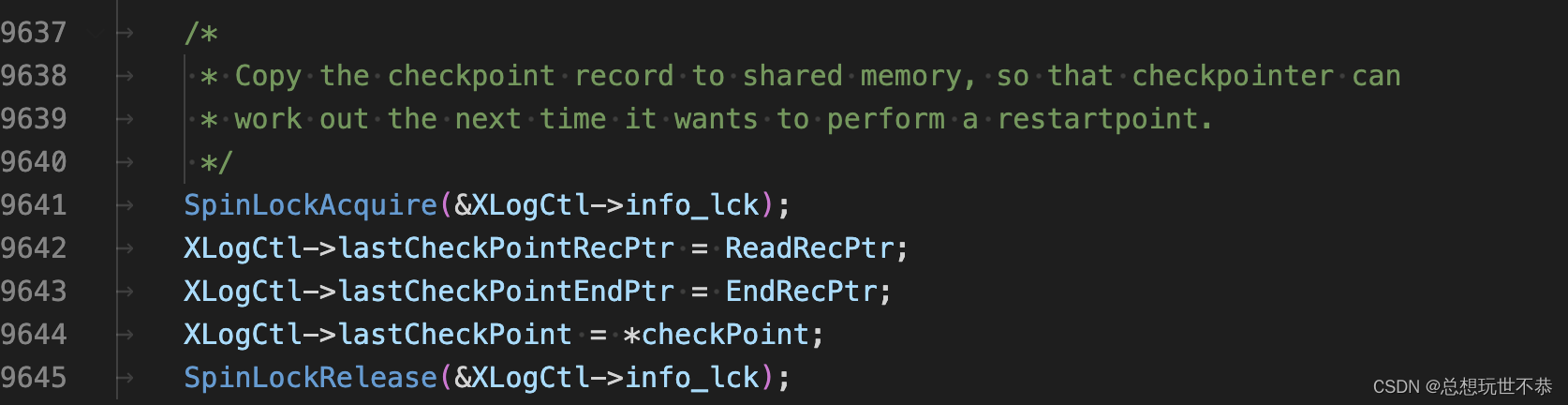

- Single-head attention

class Head(nn.Module):
    """one head of self-attention"""

    def __init__(self, head_size):
        super(Head, self).__init__()
        self.key = nn.Linear(n_embd, head_size, bias=False)
        self.query = nn.Linear(n_embd, head_size, bias=False)
        self.value = nn.Linear(n_embd, head_size, bias=False)
        # lower-triangular mask; a buffer, not a trainable parameter
        self.register_buffer('tril', torch.tril(torch.ones(block_size, block_size)))
        self.dropout = nn.Dropout(dropout)

    def forward(self, x):
        B, T, C = x.shape
        k = self.key(x)  # (B,T,head_size)
        q = self.query(x)  # (B,T,head_size)
        # attention scores scaled by 1/sqrt(head_size): (B,T,head_size) @ (B,head_size,T) --> (B,T,T)
        wei = q @ k.transpose(-2, -1) * k.shape[-1] ** -0.5
        wei = wei.masked_fill(self.tril[:T, :T] == 0, float('-inf'))  # (B,T,T), hide future positions
        wei = F.softmax(wei, dim=-1)  # (B,T,T)
        wei = self.dropout(wei)
        v = self.value(x)  # (B,T,head_size)
        out = wei @ v  # (B,T,head_size)
        return out
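The effect of the triangular mask followed by the softmax can be seen on a tiny example. This is a pure-Python sketch for a single `T x T` score matrix (no batch dimension, no dropout); the function name `causal_softmax` is made up for illustration.

```python
import math

def causal_softmax(scores):
    """Row-wise softmax over a T x T score matrix with future positions masked."""
    T = len(scores)
    weights = []
    for t in range(T):
        # Position t may only look at positions 0..t (the lower triangle).
        visible = scores[t][: t + 1]
        m = max(visible)  # subtract the max for numerical stability
        exps = [math.exp(s - m) for s in visible]
        total = sum(exps)
        # Masked (future) positions get weight exactly 0, as exp(-inf) would.
        weights.append([e / total for e in exps] + [0.0] * (T - t - 1))
    return weights

# With equal scores, attention reduces to a running average over the past.
scores = [[0.0] * 4 for _ in range(4)]
weights = causal_softmax(scores)
for row in weights:
    print([round(w, 3) for w in row])
```

Each row sums to 1 and puts zero weight on later positions, which is what keeps a token from seeing the characters it is supposed to predict.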

- Multi-head attention

class MultiHeadAttention(nn.Module):
    """multiple heads of self-attention in parallel"""

    def __init__(self, num_heads, head_size) -> None:
        super(MultiHeadAttention, self).__init__()
        self.heads = nn.ModuleList([Head(head_size) for _ in range(num_heads)])
        self.proj = nn.Linear(n_embd, n_embd)  # projection back into the residual stream, for the skip connection
        self.dropout = nn.Dropout(dropout)

    def forward(self, x):
        # run every head on the same input and concatenate along the channel dimension
        out = torch.cat([h(x) for h in self.heads], dim=-1)  # (B,T,num_heads*head_size)
        out = self.dropout(self.proj(out))
        return out

- Feed-forward network

class FeedFoward(nn.Module):
    """a simple linear layer followed by a non-linearity"""

    def __init__(self, n_embd):
        super().__init__()
        self.net = nn.Sequential(
            nn.Linear(n_embd, 4 * n_embd),  # expand: 384 -> 1536 with the settings above
            nn.ReLU(),
            nn.Linear(4 * n_embd, n_embd),  # project back: 1536 -> 384
            nn.Dropout(dropout),  # dropout, applied right before the residual connection
        )

    def forward(self, x):
        out = self.net(x)
        return out

- Block

class Block(nn.Module):
    """Transformer block: communication followed by computation"""

    def __init__(self, n_embd, n_head) -> None:
        # n_embd: embedding dimension
        # n_head: number of attention heads
        super().__init__()
        head_size = n_embd // n_head
        self.sa = MultiHeadAttention(n_head, head_size)  # communication: multi-head self-attention
        self.ffwd = FeedFoward(n_embd)  # computation: per-token processing of the attention output
        self.ln1 = nn.LayerNorm(n_embd)  # layer norm, important for optimizing deep networks (paper: Layer Normalization)
        self.ln2 = nn.LayerNorm(n_embd)  # layer norm

    def forward(self, x):
        # residual (skip) connections around both sub-layers
        x = x + self.sa(self.ln1(x))
        x = x + self.ffwd(self.ln2(x))
        return x
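The block normalizes first and then adds the sub-layer output back onto its input. The following is a rough pure-Python sketch of that pattern on a single feature vector; `layer_norm` mirrors `nn.LayerNorm` at initialization (scale 1, shift 0), and `pre_norm_residual` is a made-up helper name.

```python
import math

def layer_norm(x, eps=1e-5):
    """Normalize one feature vector to zero mean and (almost) unit variance."""
    mean = sum(x) / len(x)
    var = sum((v - mean) ** 2 for v in x) / len(x)  # biased variance, as in LayerNorm
    return [(v - mean) / math.sqrt(var + eps) for v in x]

def pre_norm_residual(x, sublayer):
    """x + sublayer(layer_norm(x)): the pattern used twice in Block.forward."""
    return [a + b for a, b in zip(x, sublayer(layer_norm(x)))]

x = [1.0, 2.0, 3.0, 4.0]
normed = layer_norm(x)
print([round(v, 3) for v in normed])

# A hypothetical sub-layer that outputs zeros leaves x untouched:
# the skip connection gives gradients a direct path through the block.
same = pre_norm_residual(x, lambda h: [0.0] * len(h))
print(same)
```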

- The full language model

class BigramLangeNodel(nn.Module):

    def __init__(self):
        super(BigramLangeNodel, self).__init__()
        # token embedding table: encodes the identity of each token
        self.token_embedding_table = nn.Embedding(vocab_size, n_embd)
        # position embedding table: each position from 0 to block_size - 1 gets its own embedding vector
        self.position_embedding_table = nn.Embedding(block_size, n_embd)
        # n_layer controls how many blocks are stacked
        self.blocks = nn.Sequential(*[Block(n_embd, n_head=n_head) for _ in range(n_layer)])
        self.ln_f = nn.LayerNorm(n_embd)
        self.lm_head = nn.Linear(n_embd, vocab_size)  # language-model head: maps embeddings to logits

    def forward(self, idx, targets=None):
        B, T = idx.shape
        tok_emb = self.token_embedding_table(idx)  # (B,T,C), C is the embedding size
        pos_emb = self.position_embedding_table(torch.arange(T, device=device))  # (T,C), one row per position 0..T-1
        x = tok_emb + pos_emb  # (B,T,C), x now carries both what each token is and where it occurs
        x = self.blocks(x)
        x = self.ln_f(x)
        logits = self.lm_head(x)  # (B,T,vocab_size)

        if targets is None:
            loss = None
        else:
            B, T, C = logits.shape
            logits = logits.view(B * T, C)
            targets = targets.view(B * T)
            loss = F.cross_entropy(logits, targets)
        return logits, loss

    def generate(self, idx, max_new_tokens):
        for _ in range(max_new_tokens):
            # crop the context to the last block_size tokens; the position table has no entries beyond that
            idx_cond = idx[:, -block_size:]
            logits, loss = self(idx_cond)
            logits = logits[:, -1, :]  # keep only the last time step: (B,C)
            probs = F.softmax(logits, dim=-1)
            idx_next = torch.multinomial(probs, num_samples=1)  # (B,1)
            idx = torch.cat((idx, idx_next), dim=1)  # (B,T+1)
        return idx
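To get a feel for the size of this model, the parameters can be tallied by hand from the layer shapes. The sketch below is plain arithmetic in Python; `count_parameters` is a made-up helper, and `vocab_size=65` is an assumed value for illustration only, since the real value depends on `input.txt`.

```python
def count_parameters(vocab_size, block_size=34, n_embd=384, n_head=6, n_layer=6):
    """Tally trainable parameters from the layer shapes (buffers such as tril excluded)."""
    head_size = n_embd // n_head
    # Attention: key, query and value projections per head, all without bias.
    heads = n_head * 3 * n_embd * head_size
    proj = n_embd * n_embd + n_embd
    # Feed-forward: n_embd -> 4*n_embd -> n_embd, both layers with bias.
    ffwd = (n_embd * 4 * n_embd + 4 * n_embd) + (4 * n_embd * n_embd + n_embd)
    layer_norms = 2 * (2 * n_embd)  # weight and bias for ln1 and ln2
    per_block = heads + proj + ffwd + layer_norms

    embeddings = vocab_size * n_embd + block_size * n_embd
    final_ln = 2 * n_embd
    lm_head = n_embd * vocab_size + vocab_size
    return n_layer * per_block + embeddings + final_ln + lm_head

# vocab_size=65 is an assumption, not a value taken from the article.
total = count_parameters(vocab_size=65)
print(f"{total / 1e6:.2f}M parameters")
```

With the model instantiated, the same number can be cross-checked against `sum(p.numel() for p in model.parameters())`. Almost all of the parameters sit in the six blocks, so the vocabulary size only shifts the total slightly.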

- Training

model = BigramLangeNodel()
m = model.to(device)

optimizer = torch.optim.AdamW(model.parameters(), lr=learning_rate)

for iter in range(max_iters):
    # every eval_interval steps, report the averaged train and val loss
    if iter % eval_interval == 0:
        losses = estimate_loos(model)
        print(f"step {iter}:train loss {losses['train']:.4f},val loss{losses['val']:.4f}")

    xb, yb = get_batch('train')
    logits, loss = model(xb, yb)
    optimizer.zero_grad(set_to_none=True)
    loss.backward()
    optimizer.step()

# generate 500 new tokens, starting from a single token with id 0
context = torch.zeros((1, 1), dtype=torch.long, device=device)
print(decode(m.generate(context, max_new_tokens=500)[0].tolist()))
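The generation loop boils down to three steps: crop the context to the last `block_size` tokens, get a probability distribution for the next token, and sample from it. Here is a minimal pure-Python sketch of that loop. The uniform "model" and the helper names are invented for illustration, and `random.choices` stands in for `torch.multinomial`.

```python
import random

def crop_context(ids, block_size):
    """Keep at most the last block_size tokens (note the trailing colon in the slice)."""
    return ids[-block_size:]

def sample_next(probs, rng):
    """Draw one token id according to the given probabilities."""
    return rng.choices(range(len(probs)), weights=probs, k=1)[0]

def generate(ids, max_new_tokens, block_size, next_token_probs, rng):
    ids = list(ids)
    for _ in range(max_new_tokens):
        context = crop_context(ids, block_size)
        probs = next_token_probs(context)  # stands in for the forward pass plus softmax
        ids.append(sample_next(probs, rng))
    return ids

# Hypothetical "model": uniform over a 5-token vocabulary, whatever the context.
def uniform(context):
    return [0.2] * 5

out = generate([0], max_new_tokens=10, block_size=4,
               next_token_probs=uniform, rng=random.Random(0))
print(out)
```

The slice matters: `ids[-block_size]` would pick out a single token, while `ids[-block_size:]` keeps the whole trailing window, and it also works when fewer than `block_size` tokens exist yet.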

- Training loss

step 0:train loss 4.3975,val loss4.3983

step 500:train loss 1.8497,val loss1.9600

step 1000:train loss 1.6500,val loss1.8210

step 1500:train loss 1.5530,val loss1.7376

step 2000:train loss 1.5034,val loss1.6891

step 2500:train loss 1.4665,val loss1.6638

step 3000:train loss 1.4431,val loss1.6457

step 3500:train loss 1.4156,val loss1.6209

step 4000:train loss 1.3958,val loss1.6025

step 4500:train loss 1.3855,val loss1.5988
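One way to read these numbers: `F.cross_entropy` reports the mean loss in nats, so `exp(loss)` is the perplexity, roughly the number of equally likely characters the model is still choosing between. The sketch below converts the first and last validation losses from the log; the function names are made up for illustration.

```python
import math

def perplexity(cross_entropy_loss):
    """Convert a mean cross-entropy loss (in nats) to perplexity."""
    return math.exp(cross_entropy_loss)

def uniform_baseline_loss(vocab_size):
    """Loss of a model that assigns equal probability to every token."""
    return math.log(vocab_size)

# Validation losses copied from the first and last lines of the log above.
for step, val_loss in [(0, 4.3983), (4500, 1.5988)]:
    print(f"step {step}: val perplexity {perplexity(val_loss):.2f}")
```

`uniform_baseline_loss` gives a reference point for step 0: an untrained model should start somewhere near `ln(vocab_size)`, and a much higher initial loss usually points to a problem with initialization or the data pipeline.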

