-

引言

-

简介

-

编译Android可用的模型

-

转换权重

-

生成配置文件

-

模型编译

-

-

编译apk

-

修改配置文件

-

绑定android library

-

配置gradle

-

编译apk

-

-

手机上运行

-

安装 APK

-

植入模型

-

效果实测

-

0. 引言

清明时节雨纷纷,路上行人欲断魂。

小伙伴们好,我是《小窗幽记机器学习》的小编:卖青团的小女孩,紧接前文LLM系列。今天这篇小作文主要介绍如何将阿里巴巴的千问大模型Qwen 1.8B部署到手机端,实现离线、断网条件下使用大模型。主要包括以下几个步骤:

-

编译Android手机可以使用的Qwen模型

-

编译打包APK,为Qwen在Android手机上运行提供用户交互界面

-

安装APK和效果实测

如需与小编进一步交流,可以在《小窗幽记机器学习》上添加小编好友。

1. 简介

为将Qwen大模型部署到手机,实现断网下Qwen模型正常使用,本文选择MLC-LLM框架。

MLC LLM(机器学习编译大型语言模型,Machine Learning Compilation for Large Language Models) 是一种高性能的通用部署解决方案,将任何语言模型本地化部署在各种硬件后端和本机应用程序上,并为每个人提供一个高效的框架,以进一步优化自己模型性能。该项目的使命是使每个人都能够使用ML编译技术在各种设备上本机开发、优化和部署AI模型。

以下将以Qwen1.5-1.8B-Chat为例,详细说明如何利用mlc-llm将该模型部署到Android手机上,最终实现每秒约20个token的生成速度。以下命令执行都在mlc-llm的目类下执行。囿于篇幅,将在后文,以上篇名义补充介绍对应的环境安装和配置等工作。

2. 编译Android可用模型

MODEL_NAME=Qwen1.5-1.8B-Chat

QUANTIZATION=q4f16_1

2.1 权重转换

# convert weights

mlc_llm convert_weight /share_model_zoo/LLM/Qwen/$MODEL_NAME/ --quantization $QUANTIZATION -o dist/$MODEL_NAME-$QUANTIZATION-MLC/

通过上述命令,将hf格式的Qwen模型转为mlc-llm支持的模型格式,结果文件存于:dist/Qwen1.5-1.8B-Chat-q4f16_1-MLC

2.2 生成配置文件

# 生成配置文件mlc_llm gen_config /share_model_zoo/LLM/Qwen/$MODEL_NAME/ --quantization $QUANTIZATION --model-type qwen2 --conv-template chatml --context-window-size 4096 -o dist/${MODEL_NAME}-${QUANTIZATION}-MLC/

此时生成的配置文件dist/Qwen1.5-1.8B-Chat-q4f16_1-MLC/mlc-chat-config.json信息:

{"model_type": "qwen2","quantization": "q4f16_1","model_config": {"hidden_act": "silu","hidden_size": 2048,"intermediate_size": 5504,"num_attention_heads": 16,"num_hidden_layers": 24,"num_key_value_heads": 16,"rms_norm_eps": 1e-06,"rope_theta": 1000000.0,"vocab_size": 151936,"context_window_size": 4096,"prefill_chunk_size": 4096,"tensor_parallel_shards": 1,"head_dim": 128,"dtype": "float32"},"vocab_size": 151936,"context_window_size": 4096,"sliding_window_size": -1,"prefill_chunk_size": 4096,"attention_sink_size": -1,"tensor_parallel_shards": 1,"mean_gen_len": 128,"max_gen_len": 512,"shift_fill_factor": 0.3,"temperature": 0.7,"presence_penalty": 0.0,"frequency_penalty": 0.0,"repetition_penalty": 1.1,"top_p": 0.8,"conv_template": {"name": "chatml","system_template": "<|im_start|>system\n{system_message}","system_message": "A conversation between a user and an LLM-based AI assistant. The assistant gives helpful and honest answers.","add_role_after_system_message": true,"roles": {"user": "<|im_start|>user","assistant": "<|im_start|>assistant"},"role_templates": {"user": "{user_message}","assistant": "{assistant_message}","tool": "{tool_message}"},"messages": [],"seps": ["<|im_end|>\n"],"role_content_sep": "\n","role_empty_sep": "\n","stop_str": ["<|im_end|>"],"stop_token_ids": [2],"function_string": "","use_function_calling": false},"pad_token_id": 151643,"bos_token_id": 151643,"eos_token_id": [151645,151643],"tokenizer_files": ["tokenizer.json","vocab.json","merges.txt","tokenizer_config.json"],"version": "0.1.0"

}

2.3 模型编译

# 进行模型编译:# 2. compile: compile model library with specification in mlc-chat-config.jsonmkdir dist/libsmlc_llm compile ./dist/${MODEL_NAME}-${QUANTIZATION}-MLC/mlc-chat-config.json --device android -o ./dist/libs/${MODEL_NAME}-${QUANTIZATION}-android.tar

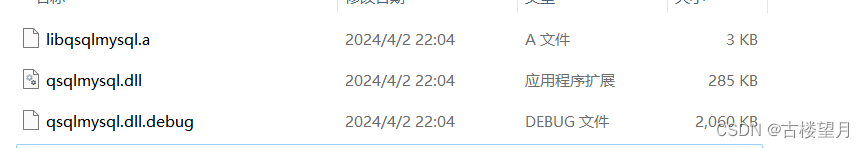

生成dist/libs/Qwen1.5-1.8B-Chat-q4f16_1-android.tar文件。

3. 编译apk

3.1 修改配置文件

# Configure list of models

vim ./android/library/src/main/assets/app-config.json

将./android/library/src/main/assets/app-config.json改为:

{"model_list": [{"model_url": "https://huggingface.co/Qwen/Qwen1.5-1.8B-Chat","model_lib": "qwen2_q4f16_1","estimated_vram_bytes": 4348727787,"model_id": "Qwen1.5-1.8B-Chat-q4f16_1" # 手机上模型目录要跟这个一致,不然无法加载}],"model_lib_path_for_prepare_libs": {"qwen2_q4f16_1": "libs/Qwen1.5-1.8B-Chat-q4f16_1-android.tar"}

}

3.2 绑定android library

需要查看以下系统变量:

echo $ANDROID_NDK # Android NDK toolchain

echo $TVM_NDK_CC # Android NDK clang

echo $JAVA_HOME # Java

export TVM_HOME=/share/Repository/mlc-llm/3rdparty/tvm # mlc-llm 中的 tvm 目类

echo $TVM_HOME # TVM Unity runtime

是否符合预期。

# Bundle model library

cd ./android/library

./prepare_libs.sh

上述脚本会基于rustup安装aarch64-linux-android,如果比较慢,可以进行如下配置:

export RUSTUP_DIST_SERVER=https://mirrors.tuna.tsinghua.edu.cn/rustup

export RUSTUP_UPDATE_ROOT=https://mirrors.tuna.tsinghua.edu.cn/rustup/rustup

再执行上述脚本。

3.3 配置gradle

修改android/gradle/wrapper/gradle-wrapper.properties, 将原始的内容:

#Thu Jan 25 10:19:50 EST 2024

distributionBase=GRADLE_USER_HOME

distributionPath=wrapper/dists

distributionUrl=https\://services.gradle.org/distributions/gradle-8.5-bin.zip

zipStoreBase=GRADLE_USER_HOME

zipStorePath=wrapper/dists

可以看出,gradle-8.5-bin.zip的路径是:android/gradle/wrapper/dist/gradle-8.5-bin.zip

这里需要注意,wrapper/dists的完整路径其实是/root/.gradle/wrapper/dists修改为:

distributionBase=GRADLE_USER_HOME

distributionPath=wrapper/dists

distributionUrl=dist/gradle-8.5-bin.zip

zipStoreBase=GRADLE_USER_HOME

zipStorePath=wrapper/dists

需要注意,distributionUrl 这个的base目录其实是mlc-llm目录下的android/gradle/wrapper。

3.4 编译apk

# Build android app

cd .. && ./gradlew assembleDebug

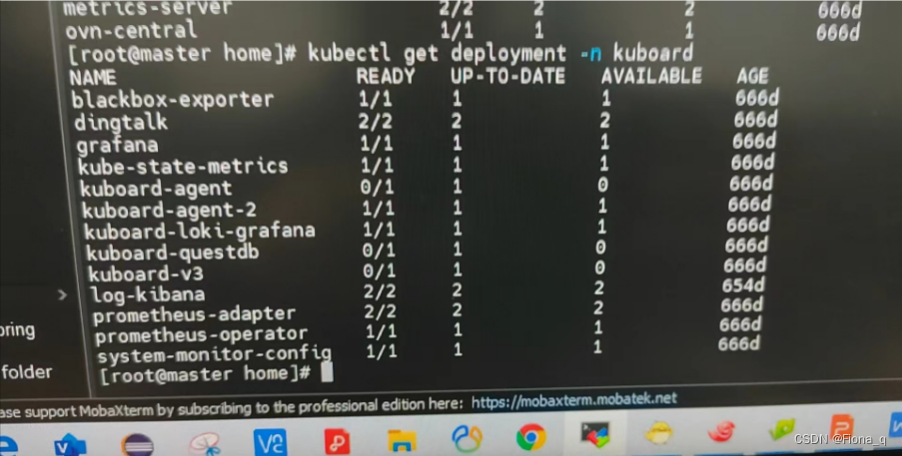

编译生成的Android apk 文件位于:app/build/outputs/apk/debug/app-debug.apk

4. 手机实测

4.1 安装 APK

将手机设置成debug模式,数据线连接手机,正常连接之后在电脑执行以下命令,将上面编译出的apk安装到Android手机上:

adb install app-debug.apk

PS: 需要预先在本机电脑上安装 adb 命令。

4.2 植入模型

# 改名,从而适配之前的配置信息

mv Qwen1.5-1.8B-Chat-q4f16_1-MLC Qwen1.5-1.8B-Chat-q4f16_1# 将模型文件推送到手机的 /data/local/tmp/ 目类

adb push Qwen1.5-1.8B-Chat-q4f16_1 /data/local/tmp/adb shell "mkdir -p /storage/emulated/0/Android/data/ai.mlc.mlcchat/files/"adb shell "mv /data/local/tmp/Qwen1.5-1.8B-Chat-q4f16_1 /storage/emulated/0/Android/data/ai.mlc.mlcchat/files/"

4.3 聊天实测

实测大约1s可以生成20个token。