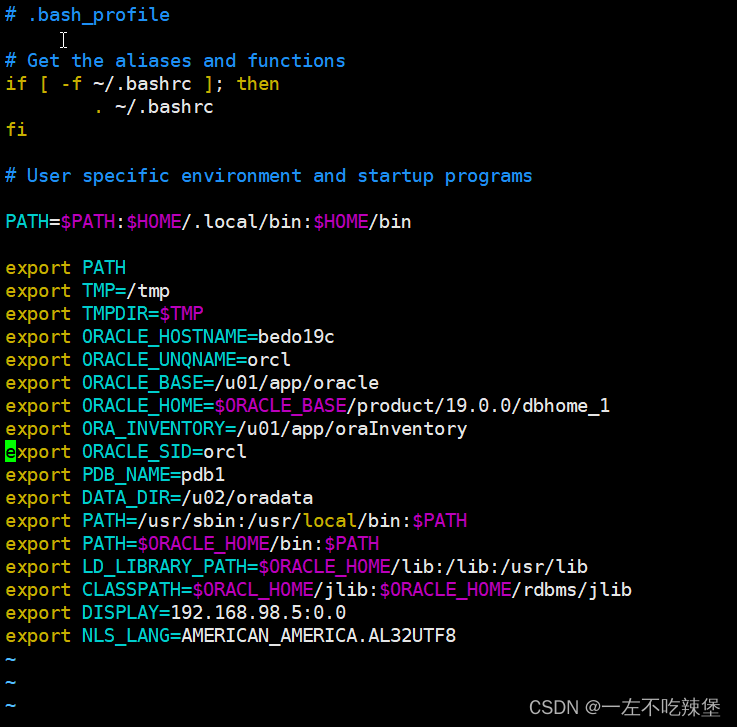

I. Install the Kubernetes zone with kubeadm:

| Name | Host | Deployed services |
|---|---|---|
| master | 192.168.91.10 | docker, kubeadm, kubelet, kubectl, flannel |
| node01 | 192.168.91.11 | docker, kubeadm, kubelet, kubectl, flannel |
| node02 | 192.168.91.20 | docker, kubeadm, kubelet, kubectl, flannel |

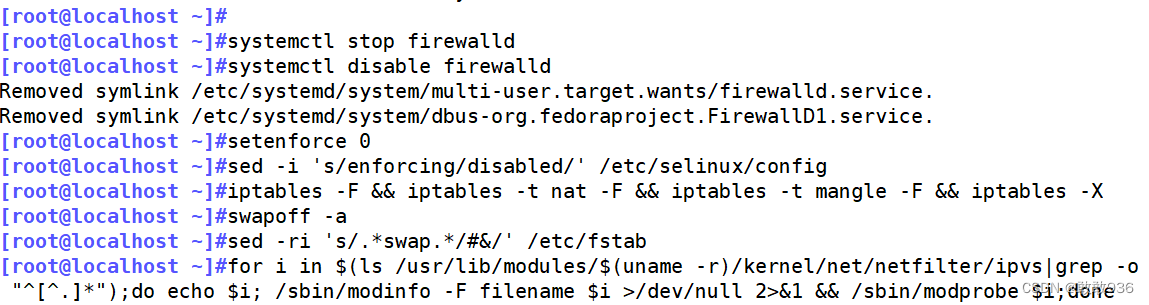

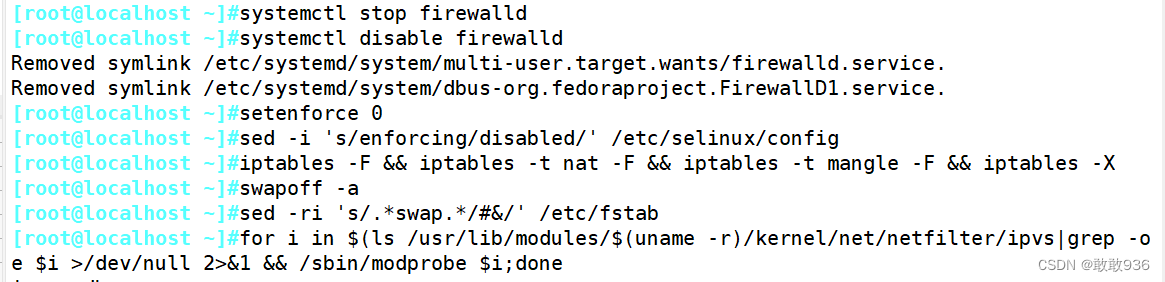

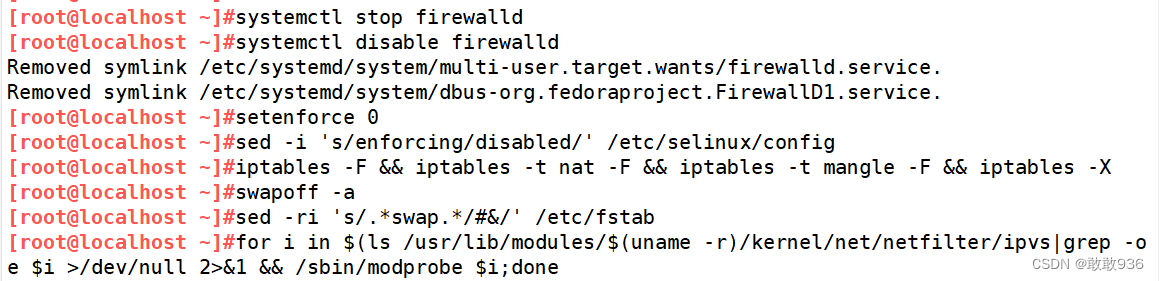

1. System initialization:

systemctl stop firewalld

systemctl disable firewalld

setenforce 0

sed -i 's/enforcing/disabled/' /etc/selinux/config

iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

swapoff -a #swap must be turned off

sed -ri 's/.*swap.*/#&/' /etc/fstab #disable swap permanently; in sed, & stands for the text matched by the pattern

#load the ip_vs modules

for i in $(ls /usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs|grep -o "^[^.]*");do echo $i; /sbin/modinfo -F filename $i >/dev/null 2>&1 && /sbin/modprobe $i;done
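As a cautious illustration of what the `sed` expression for /etc/fstab does, the sketch below applies the same substitution to a file given as an argument and prints the result instead of editing in place. The function name `comment_out_swap` is hypothetical, and unlike the one-liner above it skips lines that are already commented.

```shell
#!/usr/bin/env bash
# Hypothetical helper: preview the swap edit without modifying the file.
# $1 = path to an fstab-style file.
comment_out_swap() {
  local fstab="$1"
  # For every line that is not already a comment, prefix lines mentioning
  # "swap" with '#'. '&' is the whole text matched by the pattern.
  sed -E '/^[[:space:]]*#/!s/.*swap.*/#&/' "$fstab"
}
```

Running `comment_out_swap /etc/fstab` shows what the file would look like after the edit; after `swapoff -a`, `swapon --show` should print nothing.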

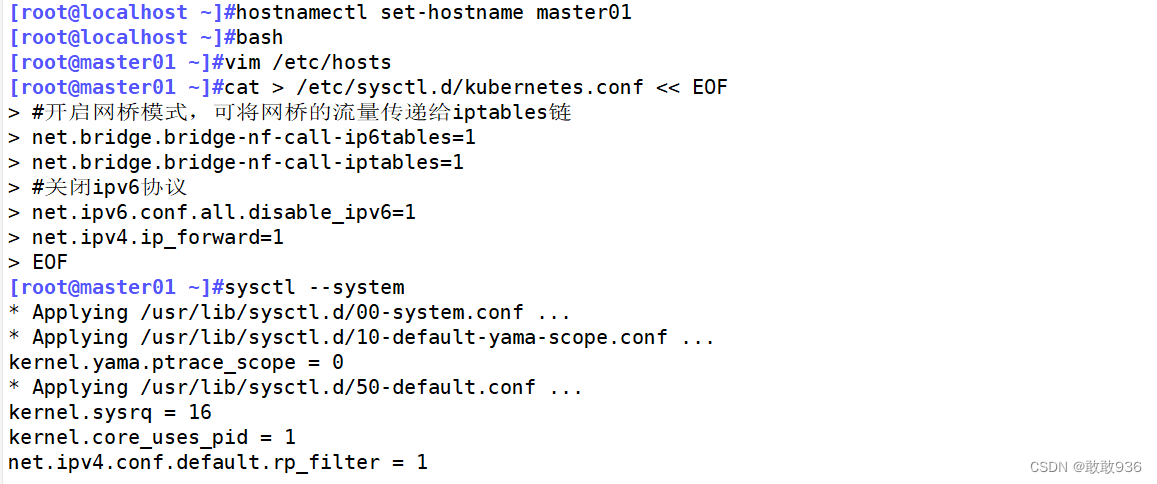

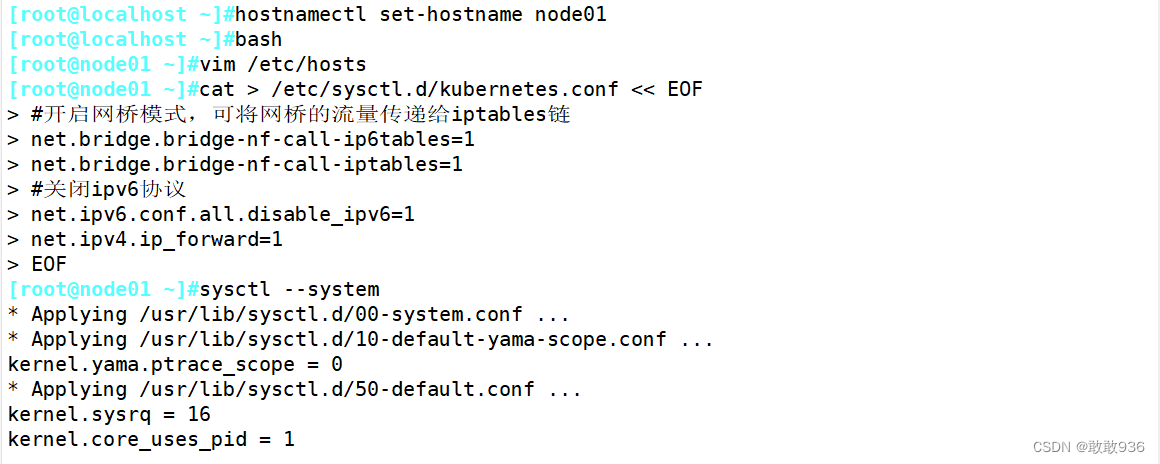

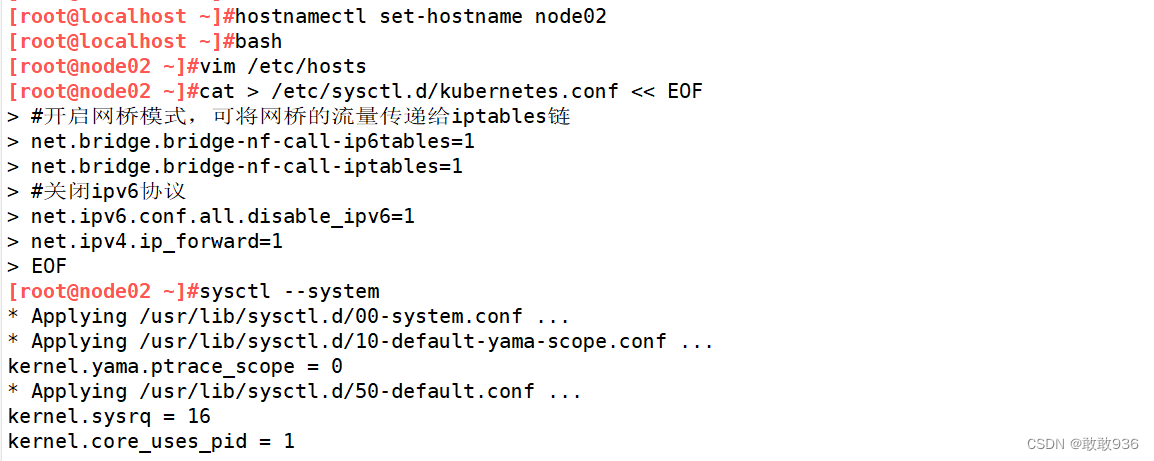

#set the hostname (run the matching command on each node)

hostnamectl set-hostname master01

hostnamectl set-hostname node01

hostnamectl set-hostname node02

#name resolution

vim /etc/hosts

192.168.91.10 master01

192.168.91.11 node01

192.168.91.20 node02

#tune kernel parameters

cat > /etc/sysctl.d/kubernetes.conf << EOF

#enable bridge mode so that bridged traffic is passed to the iptables chains

net.bridge.bridge-nf-call-ip6tables=1

net.bridge.bridge-nf-call-iptables=1

#disable IPv6

net.ipv6.conf.all.disable_ipv6=1

net.ipv4.ip_forward=1

EOF

#apply the parameters

sysctl --system
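To double-check the file before relying on `sysctl --system`, a small sketch like the following reads a key back from the conf file. `sysctl_conf_value` is a hypothetical helper name; it only parses the file and does not query the running kernel. Note that the `net.bridge.*` keys exist only once the `br_netfilter` kernel module is loaded.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the value assigned to a key in a sysctl conf file.
# $1 = conf file, $2 = key. Returns non-zero if the key is not set.
sysctl_conf_value() {
  awk -F= -v key="$2" '
    /^[ \t]*#/ { next }                     # skip comment lines
    {
      k = $1; gsub(/[ \t]/, "", k)          # key without surrounding blanks
      if (k == key) { v = $2; gsub(/[ \t]/, "", v); val = v }
    }
    END { if (val == "") exit 1; print val }
  ' "$1"
}
```

For example, `sysctl_conf_value /etc/sysctl.d/kubernetes.conf net.ipv4.ip_forward` should print `1` for the file written above.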

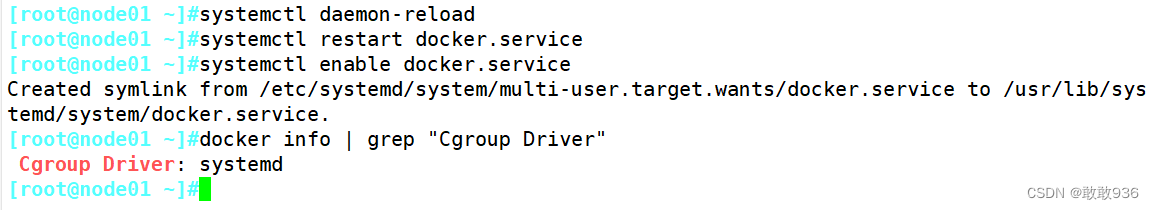

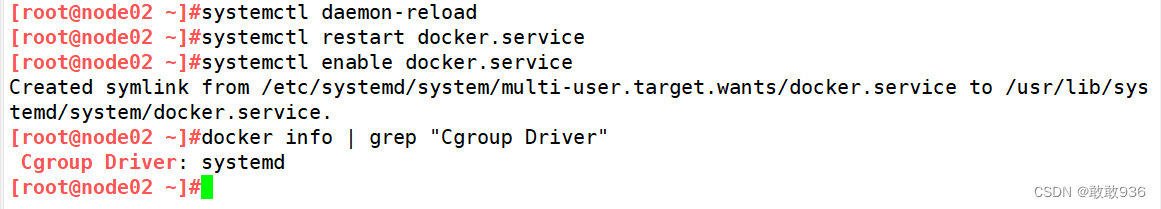

2. Install Docker on all nodes:

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum install -y docker-ce docker-ce-cli containerd.io

mkdir -p /etc/docker

cat > /etc/docker/daemon.json <<EOF

{"registry-mirrors": ["https://6ijb8ubo.mirror.aliyuncs.com"],"exec-opts": ["native.cgroupdriver=systemd"],"log-driver": "json-file","log-opts": {"max-size": "100m"}

}

EOF

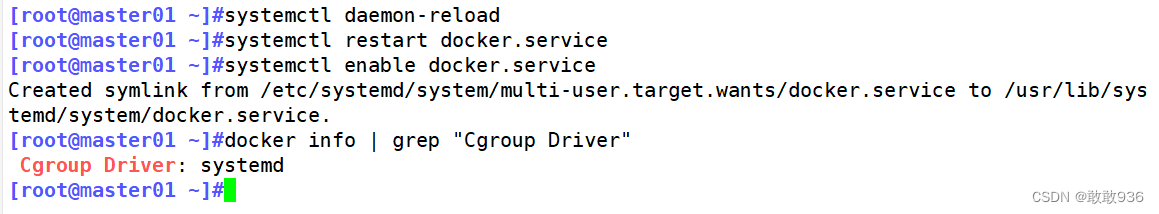

systemctl daemon-reload

systemctl restart docker.service

systemctl enable docker.service

docker info | grep "Cgroup Driver"

Cgroup Driver: systemd
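Because kubelet and Docker should use the same cgroup driver, it can help to check the value in a script rather than by eye. The following is a minimal sketch; `cgroup_driver` is a hypothetical helper that parses `docker info` text from standard input.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `docker info` output on stdin and print the
# cgroup driver name (for example "systemd" or "cgroupfs").
cgroup_driver() {
  awk -F': *' '/Cgroup Driver/ { print $2; exit }'
}
```

A possible use is `[ "$(docker info 2>/dev/null | cgroup_driver)" = "systemd" ] || echo "unexpected cgroup driver"`.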

3. Install kubeadm, kubelet, and kubectl on all nodes:

//define the Kubernetes yum repository

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

#install the components with yum

yum install -y kubelet-1.20.11 kubeadm-1.20.11 kubectl-1.20.11

#enable kubelet at boot

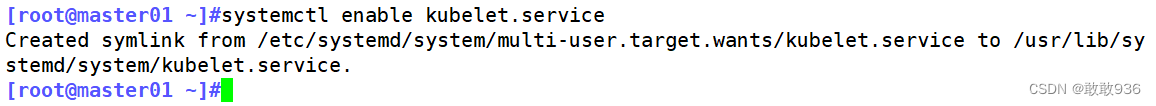

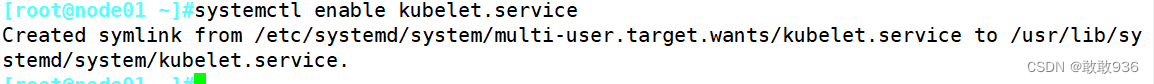

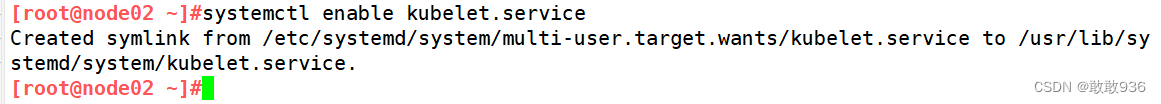

systemctl enable kubelet.service

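#After a kubeadm install, the Kubernetes components all run as Pods, that is, as containers underneath, so kubelet must be enabled at boot

4. Deploy the Kubernetes cluster: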

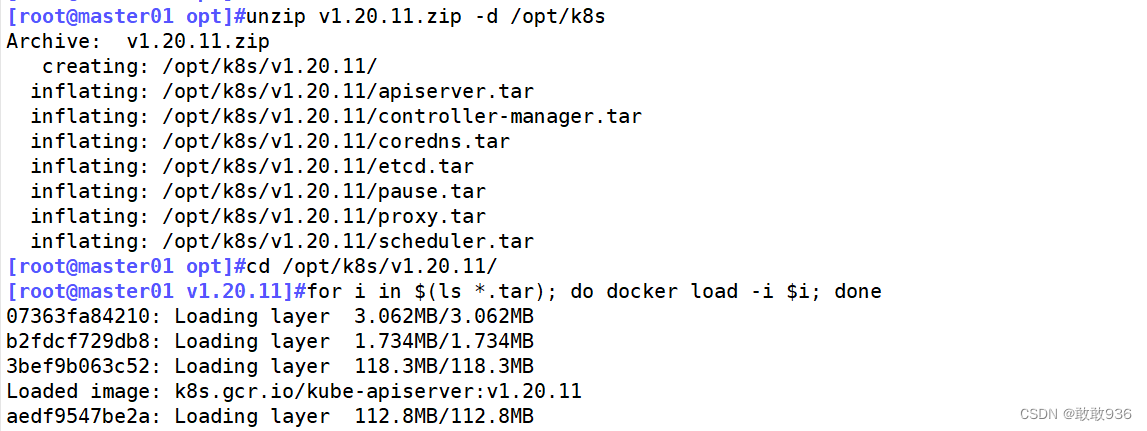

//on the master node, upload the v1.20.11.zip archive to /opt

unzip v1.20.11.zip -d /opt/k8s

cd /opt/k8s/v1.20.11

for i in $(ls *.tar); do docker load -i $i; done

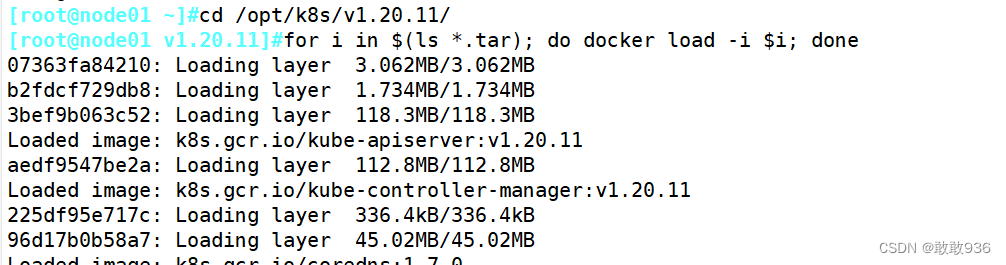

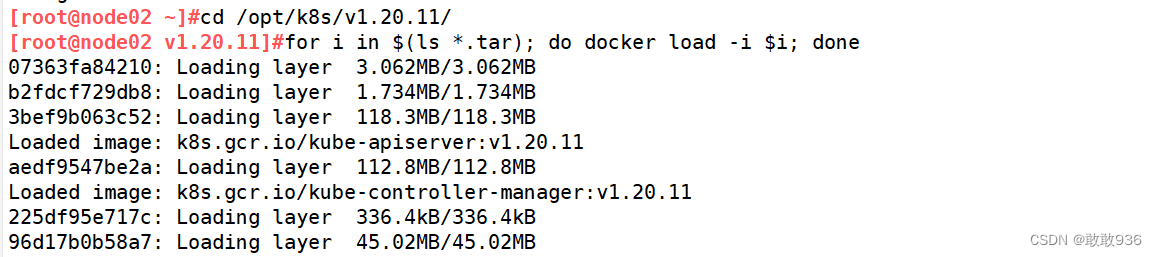

//copy the images and scripts to the node machines, then load the image files on each node

scp -r /opt/k8s root@node01:/opt

scp -r /opt/k8s root@node02:/opt

#on each node, load the image files

cd /opt/k8s/v1.20.11

for i in $(ls *.tar); do docker load -i $i; done

kubeadm config print init-defaults > /opt/kubeadm-config.yaml

cd /opt/

vim kubeadm-config.yaml

......

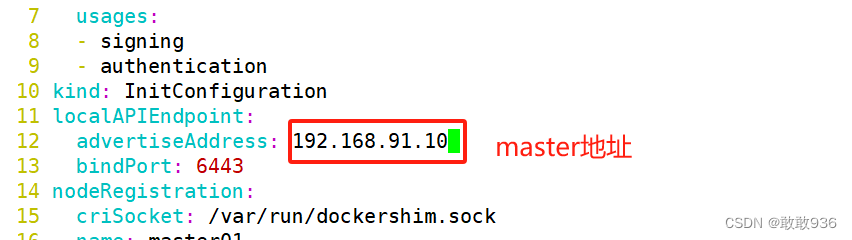

    localAPIEndpoint:
      advertiseAddress: 192.168.91.10 #IP address of the master node
      bindPort: 6443

......

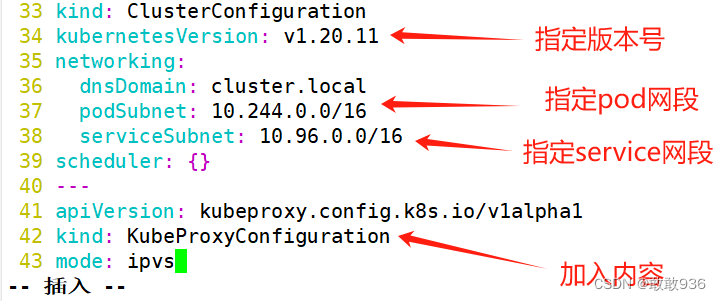

    kubernetesVersion: v1.20.11 #Kubernetes version
    networking:
      dnsDomain: cluster.local
      podSubnet: "10.244.0.0/16" #Pod subnet; 10.244.0.0/16 matches flannel's default network
      serviceSubnet: 10.96.0.0/16 #Service subnet
    scheduler: {}

#append the following at the end of the file

---

apiVersion: kubeproxy.config.k8s.io/v1alpha1

kind: KubeProxyConfiguration

mode: ipvs #switch kube-proxy from its default mode to ipvs

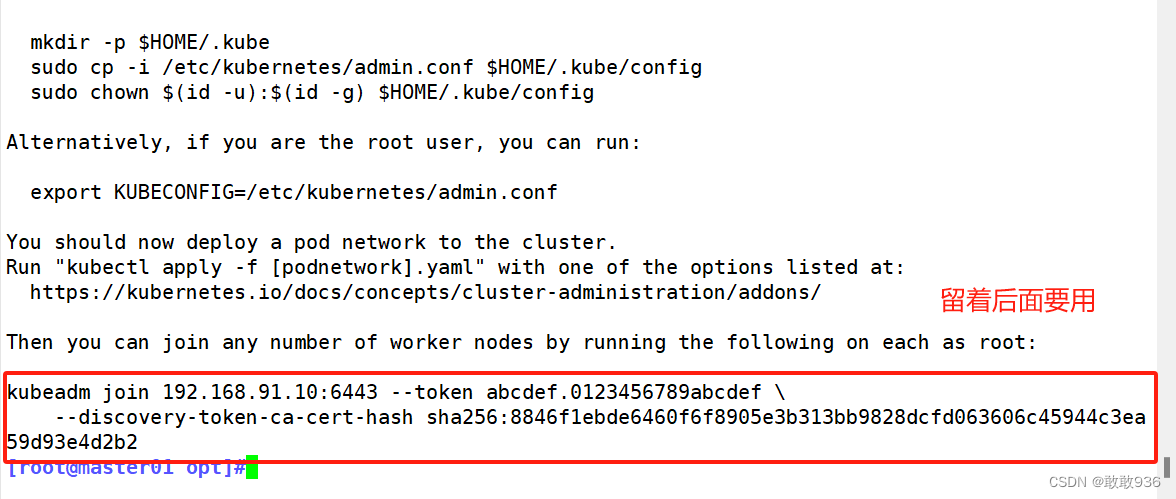

kubeadm init --config=kubeadm-config.yaml --upload-certs | tee kubeadm-init.log

#--experimental-upload-certs 参数可以在后续执行加入节点时自动分发证书文件,K8S V1.16版本开始替换为 --upload-certs

#tee kubeadm-init.log 用以输出日志

//查看 kubeadm-init 日志

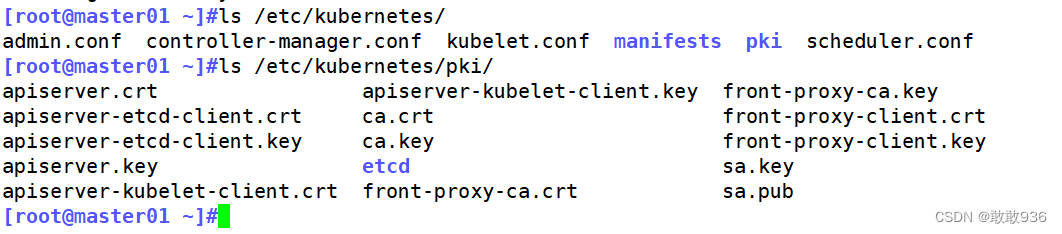

less kubeadm-init.log//kubernetes配置文件目录

ls /etc/kubernetes/

//directory holding the CA and other certificates and keys

ls /etc/kubernetes/pki

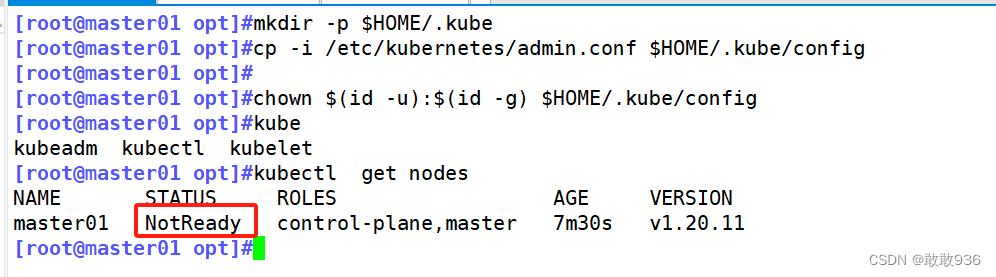

5. Configure kubectl:

mkdir -p $HOME/.kube

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

chown $(id -u):$(id -g) $HOME/.kube/config

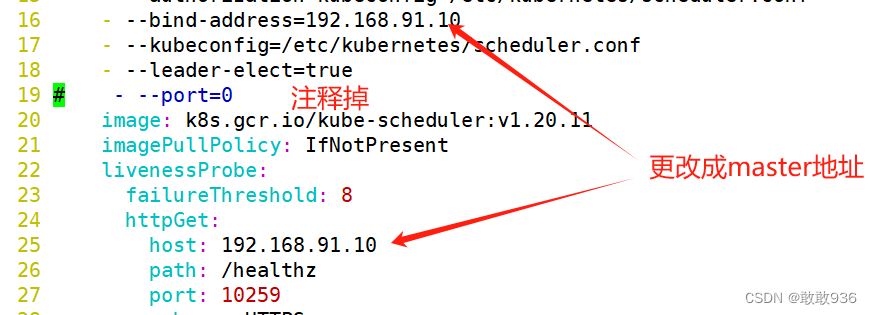

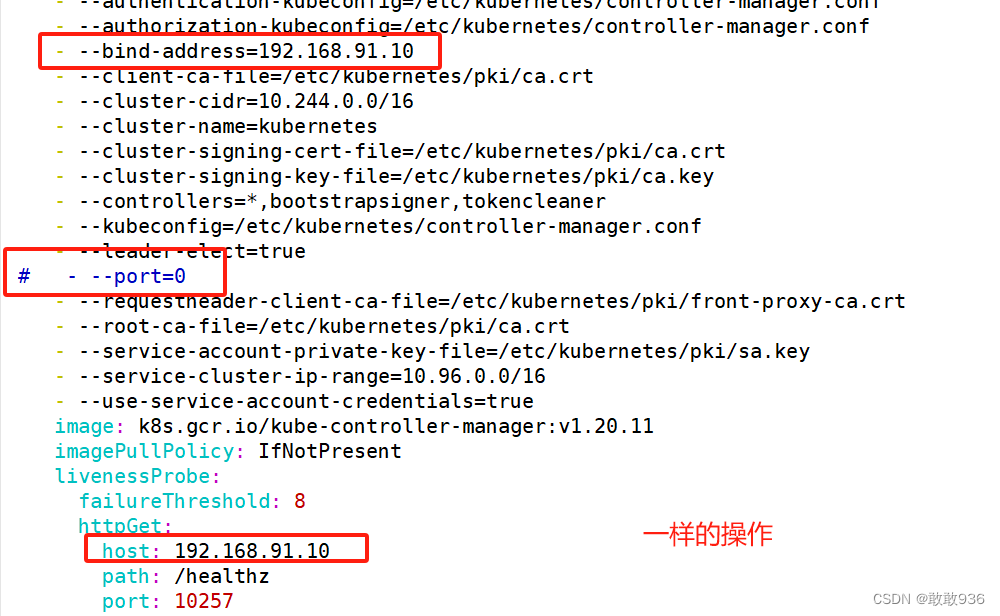

//if kubectl get cs reports the cluster as unhealthy, edit the following two files

vim /etc/kubernetes/manifests/kube-scheduler.yaml

vim /etc/kubernetes/manifests/kube-controller-manager.yaml

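# make the following changes

Change --bind-address=127.0.0.1 to --bind-address=192.168.91.10 #the IP of the control-plane node master01

Under each httpGet: field, change host from 127.0.0.1 to 192.168.91.10 (two places)

#- --port=0 # search for port=0 and comment this line out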

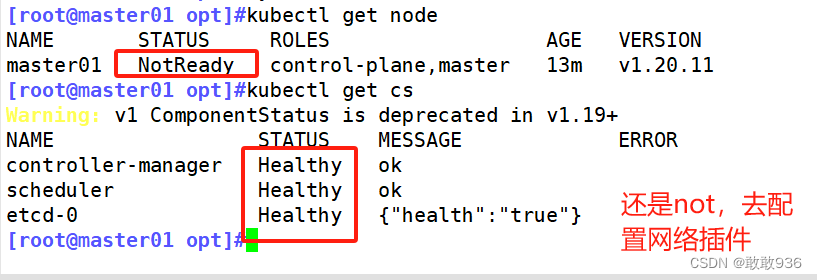

systemctl restart kubelet

Check the node status again:

6. Deploy the flannel network plugin on all nodes:

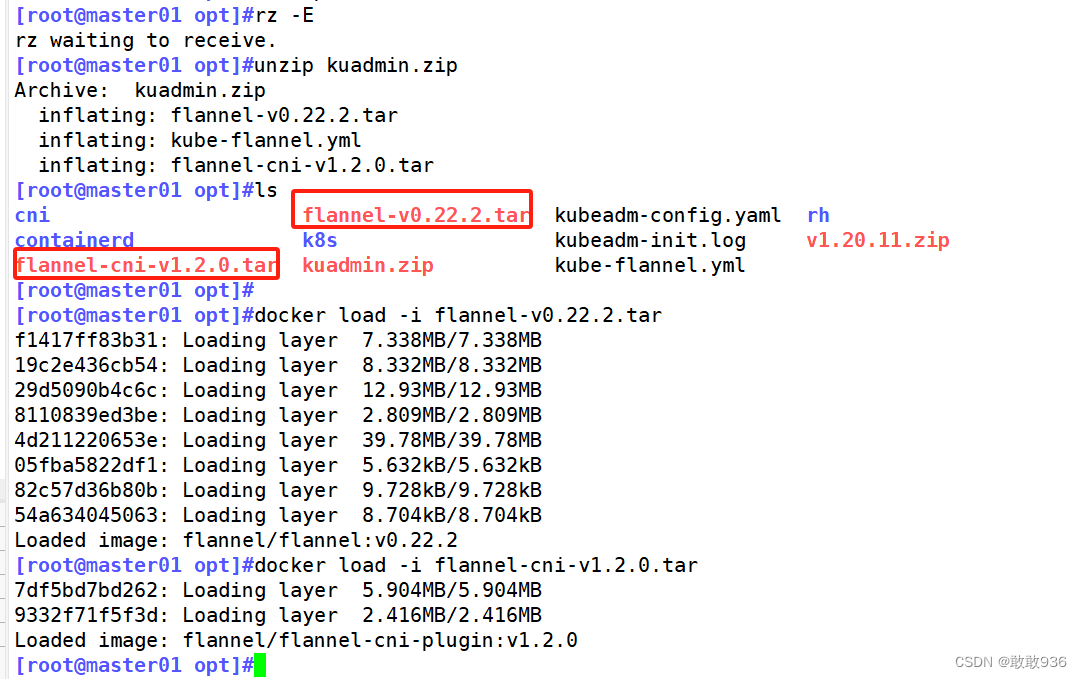

//Upload the flannel-cni-v1.2.0.tar and flannel-v0.22.2.tar images to /opt on all nodes; on the master node also upload cni-plugins-linux-amd64-v1.2.0.tgz (no longer used after the version update) along with flannel-cni-v1.2.0.tar and flannel-v0.22.2.tar

cd /opt

unzip kuadmin.zip

docker load -i flannel-v0.22.2.tar

docker load -i flannel-cni-v1.2.0.tar

#copy flannel-cni-v1.2.0.tar and flannel-v0.22.2.tar to node01 and node02

scp flannel-cni-v1.2.0.tar flannel-v0.22.2.tar node01:/opt/

scp flannel-cni-v1.2.0.tar flannel-v0.22.2.tar node02:/opt/

#on node01 and node02, load the images from /opt

docker load -i flannel-v0.22.2.tar

docker load -i flannel-cni-v1.2.0.tar

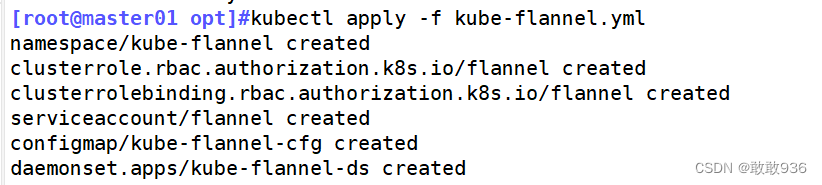

//on the master node, create the flannel resources (upload kube-flannel.yml to /opt first)

kubectl apply -f kube-flannel.yml

namespace/kube-flannel created

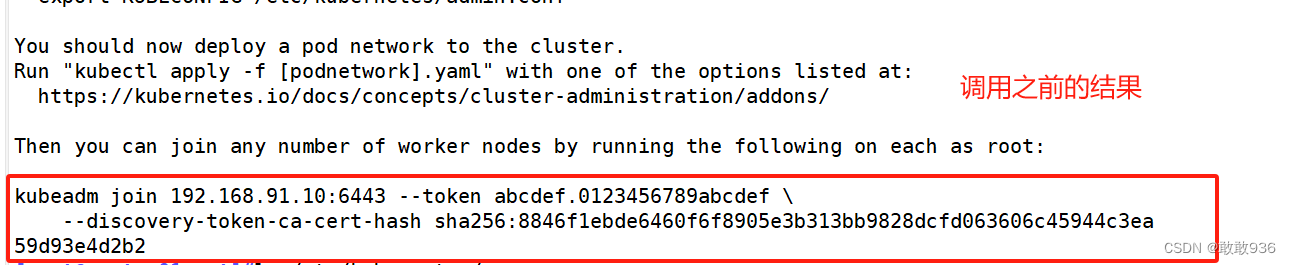

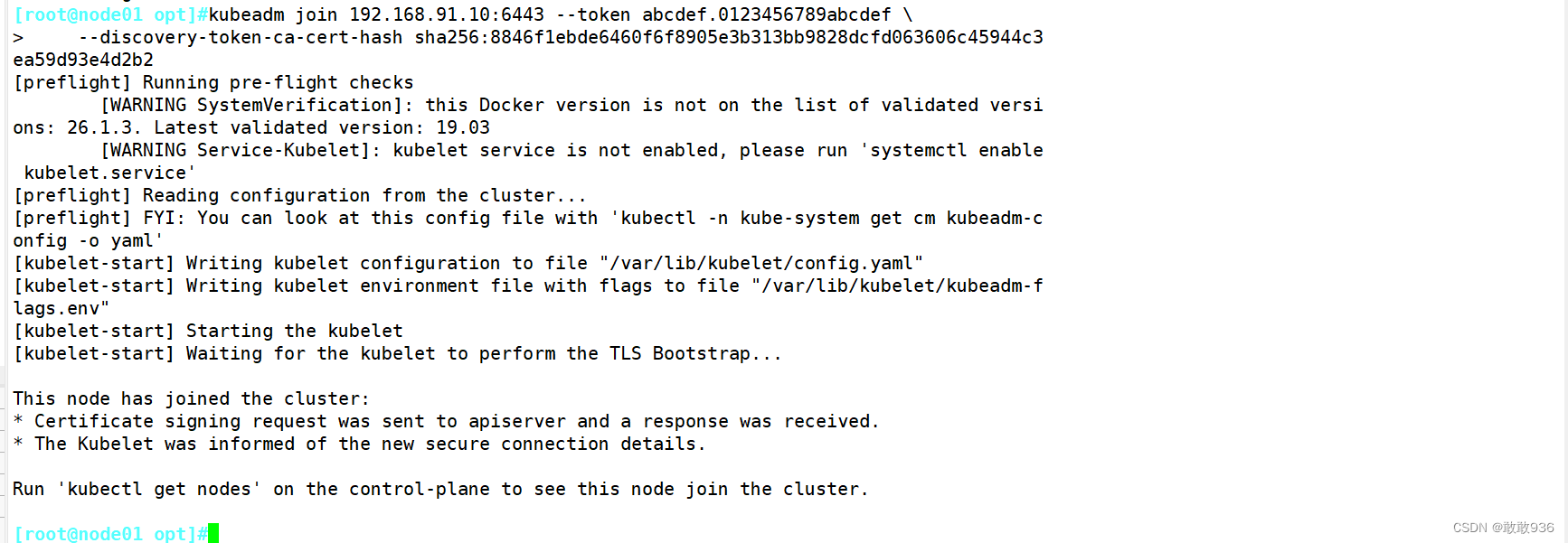

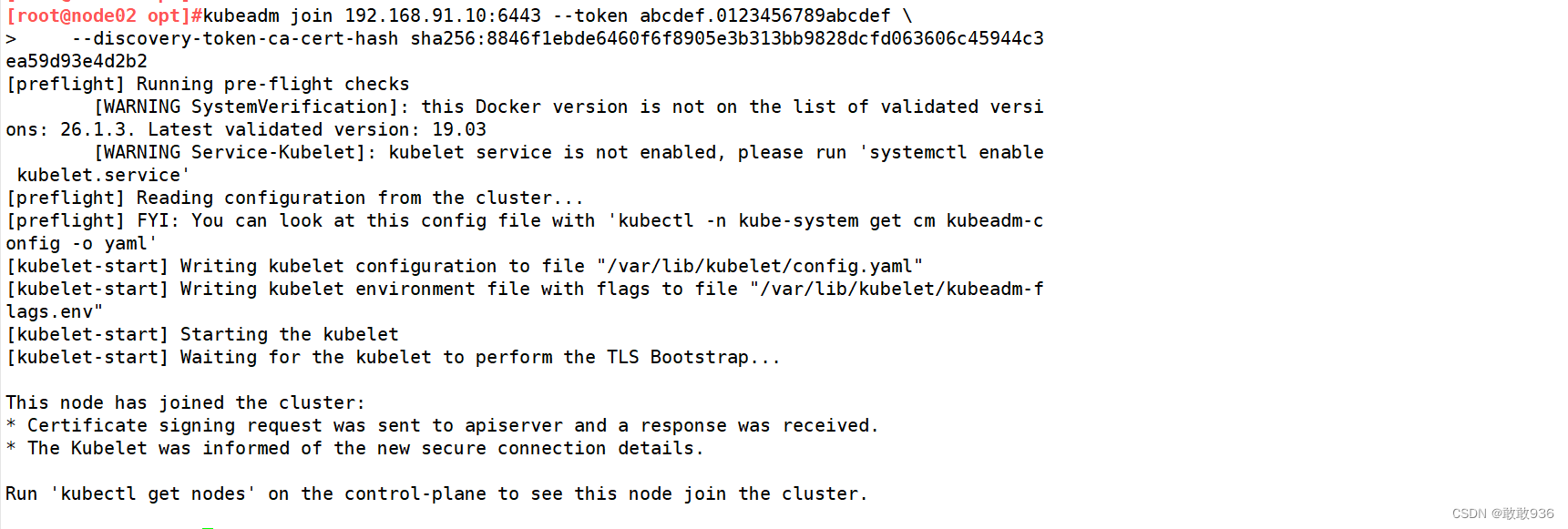

#run the kubeadm join command on each node to join the cluster

kubeadm join 192.168.91.10:6443 --token abcdef.0123456789abcdef --discovery-token-ca-cert-hash sha256:8846f1ebde6460f6f8905e3b313bb9828dcfd063606c45944c3ea59d93e4d2b2
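The join command is printed at the end of the `kubeadm init` output, which was saved to kubeadm-init.log earlier. As a hedged sketch, the hypothetical helper `extract_join_command` below pulls that command out of the log and folds the backslash-continued lines into one, assuming the log contains an unindented line starting with `kubeadm join`.

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the worker "kubeadm join ..." command from a
# kubeadm init log as a single line. $1 = path to the log file.
extract_join_command() {
  awk '
    /^kubeadm join/ { grab = 1 }
    grab {
      line = $0
      cont = sub(/\\[ \t]*$/, "", line)      # drop a trailing backslash; cont=1 if there was one
      gsub(/^[ \t]+|[ \t]+$/, "", line)      # trim surrounding blanks
      cmd = (cmd == "" ? line : cmd " " line)
      if (!cont) { print cmd; exit }         # last line of the command reached
    }
  ' "$1"
}
```

For example, `extract_join_command /opt/kubeadm-init.log` prints the command to paste on each node. If the token has expired (the default lifetime is 24 hours), `kubeadm token create --print-join-command` on the master prints a fresh one.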

#check the node status on the master node

kubectl get nodes

kubectl get pods -n kube-system

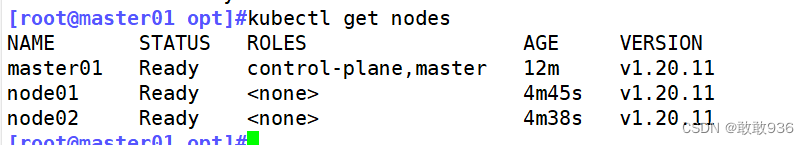

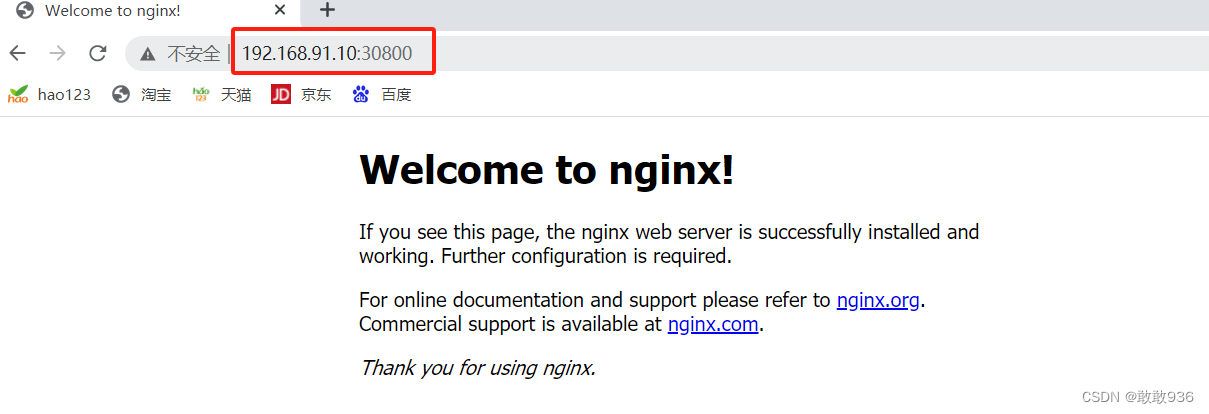

//test creating a Pod

kubectl create deployment nginx --image=nginx

kubectl get pods -o wide

#test access with curl:

kubectl get pod -owide

curl 10.244.2.2

//expose a port to provide the service

kubectl expose deployment nginx --port=80 --type=NodePort

kubectl get svc

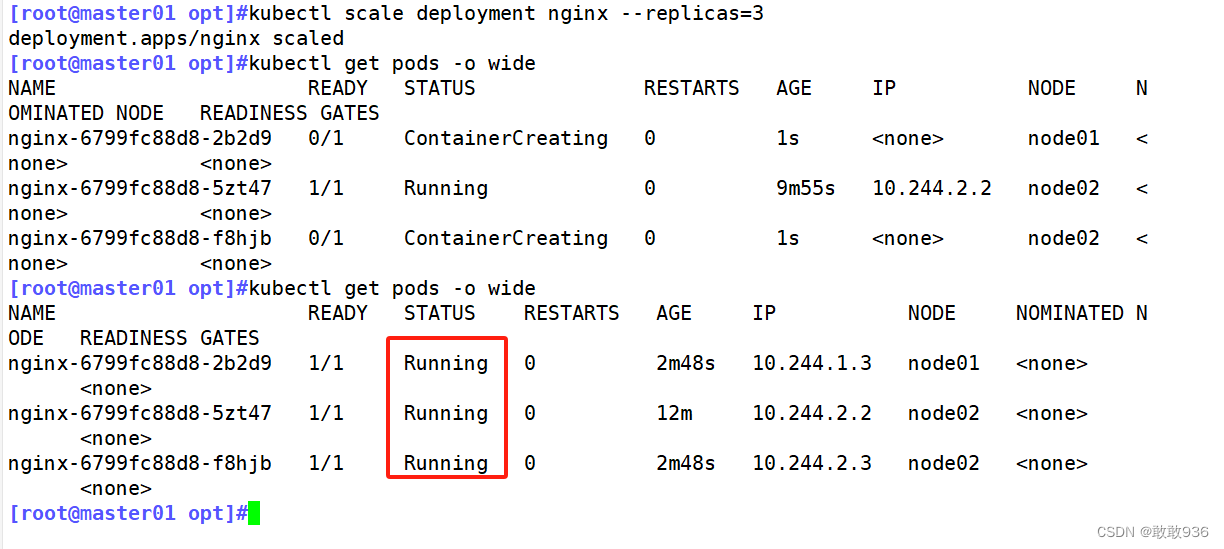

//scale to 3 replicas

kubectl scale deployment nginx --replicas=3

kubectl get pods -o wide


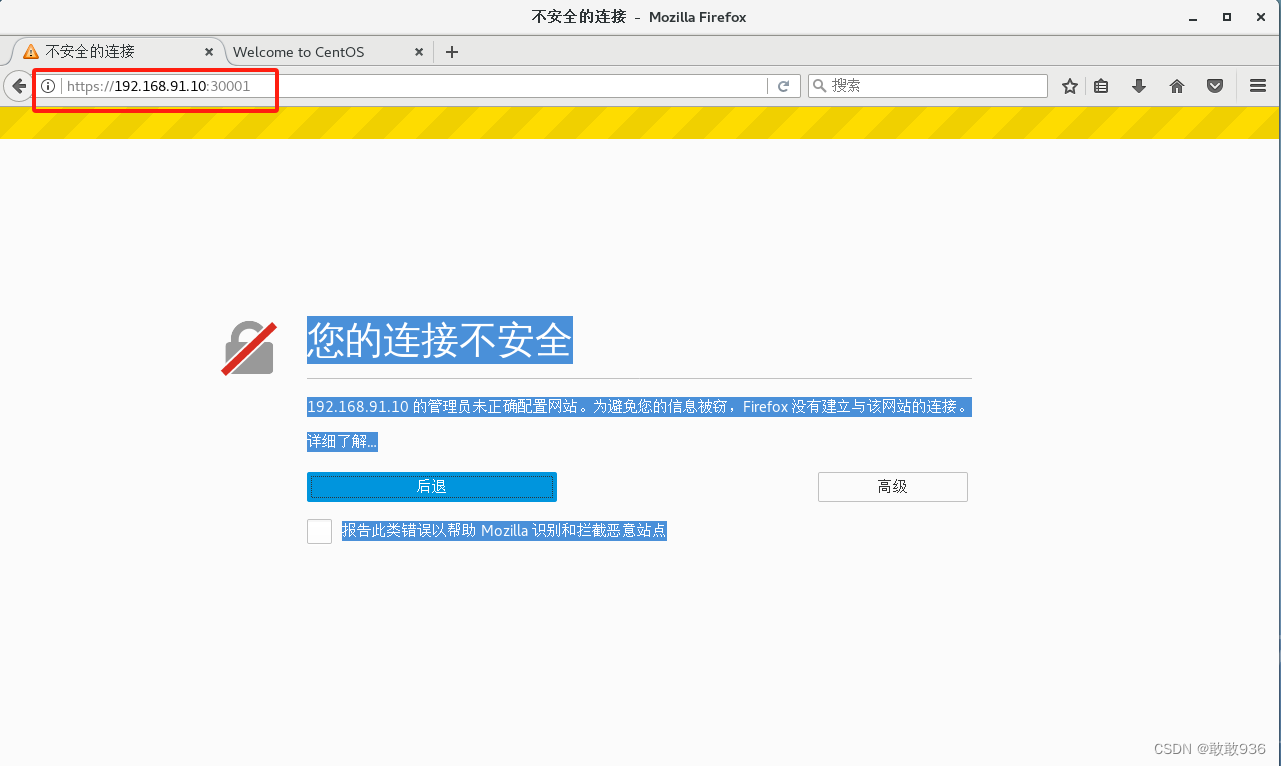

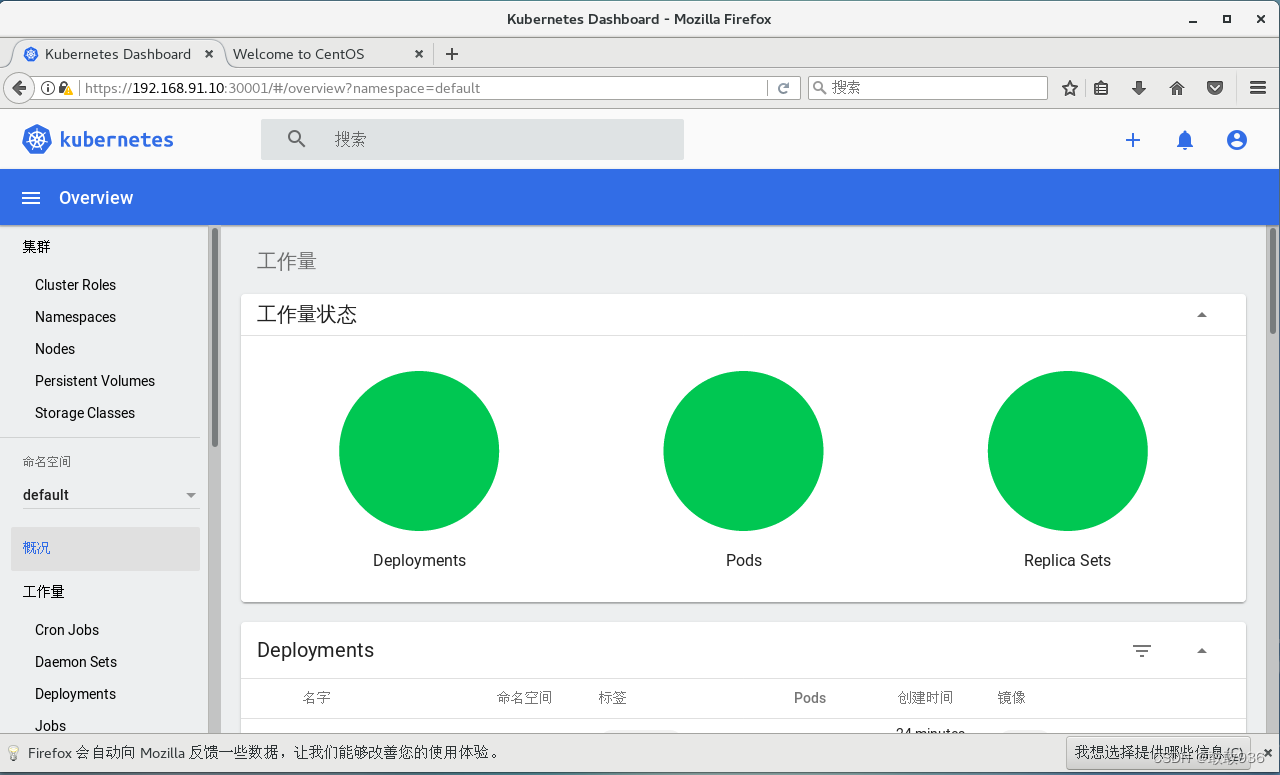

7. Deploy the Dashboard:

#upload recommended.yaml to /opt/k8s

cd /opt/k8s

vim recommended.yaml

#By default the Dashboard is reachable only from inside the cluster; change the Service to type NodePort to expose it externally:

    kind: Service
    apiVersion: v1
    metadata:
      labels:
        k8s-app: kubernetes-dashboard
      name: kubernetes-dashboard
      namespace: kubernetes-dashboard
    spec:
      ports:
        - port: 443
          targetPort: 8443
          nodePort: 30001 #added
      type: NodePort #added
      selector:
        k8s-app: kubernetes-dashboard

kubectl apply -f recommended.yaml

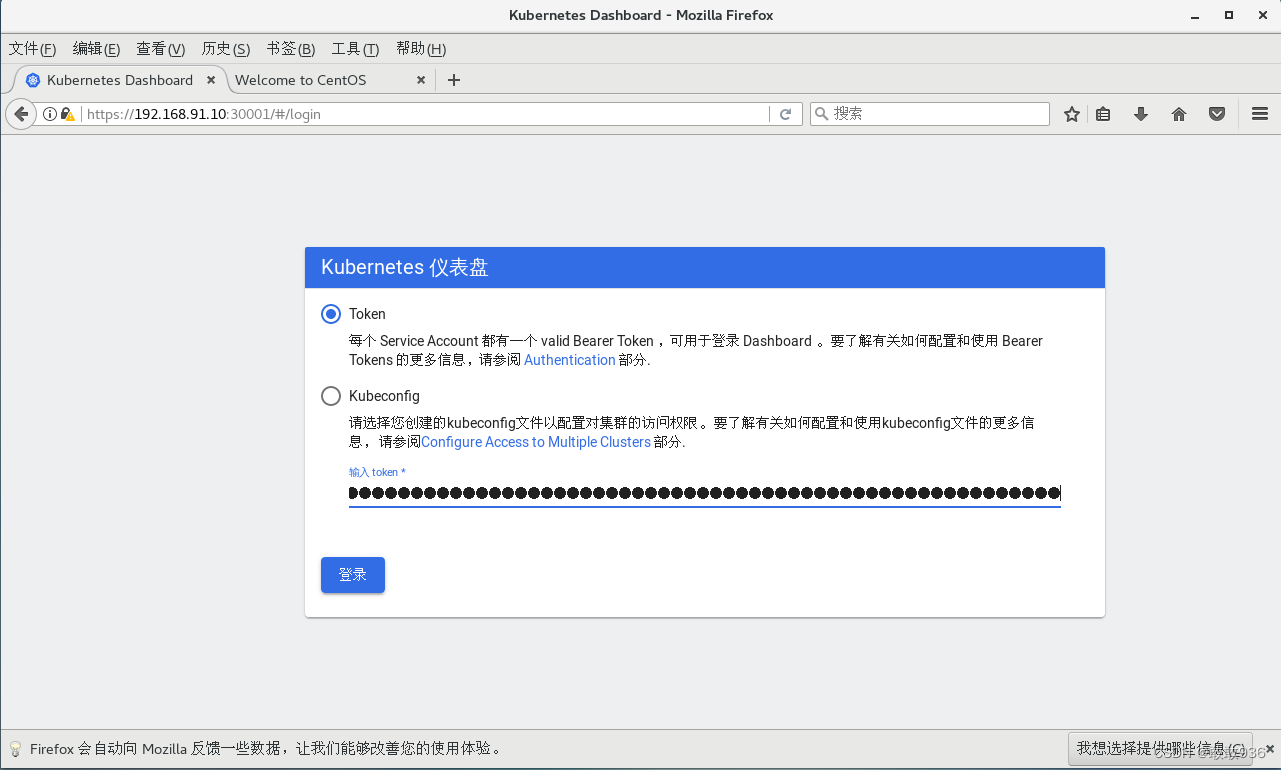

#create a service account and bind it to the built-in cluster-admin cluster role

kubectl create serviceaccount dashboard-admin -n kube-system

kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')
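The Dashboard login page asks for the bearer token that appears in the `describe` output above. A minimal sketch for extracting just that value is shown here; `token_from_describe` is a hypothetical helper that reads the `kubectl describe secret` text from standard input.

```shell
#!/usr/bin/env bash
# Hypothetical helper: read `kubectl describe secret` output on stdin and
# print only the value of the "token:" field.
token_from_describe() {
  awk '$1 == "token:" { print $2; exit }'
}
```

Piping the `kubectl describe secrets ...` command above into `token_from_describe` prints the token, which can then be pasted into the login form at https://NodeIP:30001.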


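II. Requirement: in the Kubernetes environment, use a YAML file to create 2 Nginx Pods placed on two different nodes. The Pods mount a hostPath volume backed by the node-local directory /data, and the two Pods must serve different test pages (content of your choice) so they can be told apart.

1. Generate an nginx Pod template file:

kubectl run mynginx --image=nginx:1.14 --port=80 --dry-run=client -o yaml > nginx-pod.yaml

2. Edit the template file: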

    ---
    apiVersion: v1
    kind: Pod
    metadata:
      labels:
        run: nginx
      name: nginx01
    spec:
      nodeName: node01
      containers:
        - image: nginx
          name: nginx
          ports:
            - containerPort: 80
          volumeMounts:
            - name: node1-html
              mountPath: /usr/share/nginx/html/
      volumes:
        - name: node1-html
          hostPath:
            path: /data/
            type: DirectoryOrCreate
    ---
    apiVersion: v1
    kind: Pod
    metadata:
      labels:
        run: nginx
      name: nginx02
    spec:
      nodeName: node02
      containers:
        - image: nginx
          name: nginx
          ports:
            - containerPort: 80
          volumeMounts:
            - name: node2-html
              mountPath: /usr/share/nginx/html/
      volumes:
        - name: node2-html
          hostPath:
            path: /data/
            type: DirectoryOrCreate

3. Create the standalone Pods from the YAML file:

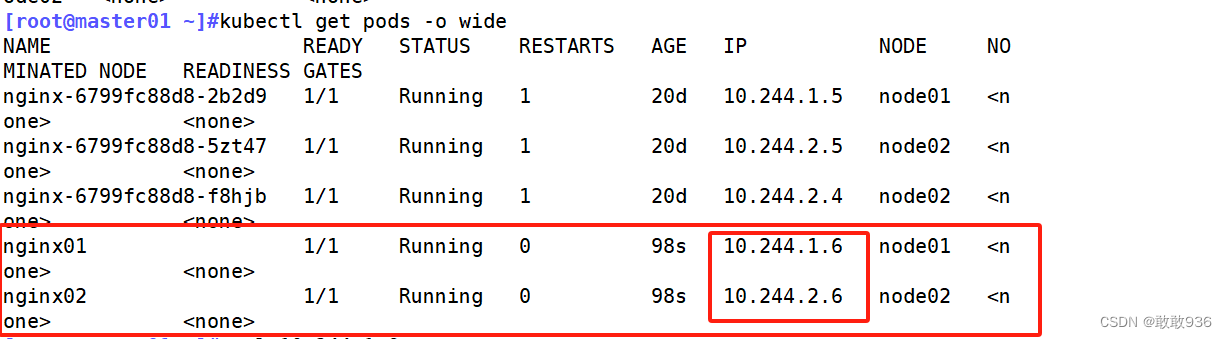

kubectl apply -f nginx-pod.yaml

pod/nginx01 created

pod/nginx02 created

4. Check the two Pods; they run on different nodes:

kubectl get pods -o wide
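The placement can also be checked in a script instead of reading the NODE column by eye. This is only a sketch: `pods_on_distinct_nodes` is a hypothetical helper that expects one "POD NODE" pair per line on standard input and succeeds only when no node name repeats.

```shell
#!/usr/bin/env bash
# Hypothetical helper: stdin holds one "POD NODE" pair per line.
# Succeeds (exit 0) only if every Pod is on a different node.
pods_on_distinct_nodes() {
  local dup
  # Collect the node column and list any node that appears more than once.
  dup=$(awk 'NF >= 2 { print $2 }' | sort | uniq -d)
  [ -z "$dup" ]
}
```

One way to feed it is `kubectl get pods -l run=nginx --no-headers -o custom-columns=NAME:.metadata.name,NODE:.spec.nodeName | pods_on_distinct_nodes && echo "pods are on different nodes"`, which relies on the `run: nginx` label from the manifest above.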

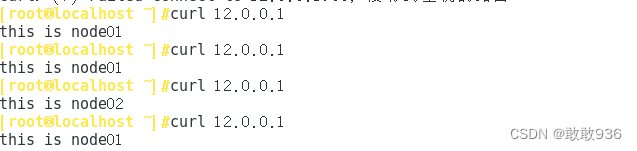

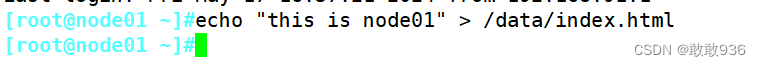

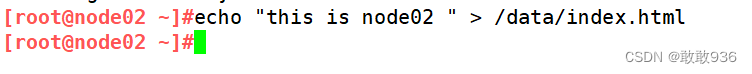

5. Write different HTML content into the volume directory on each node, then verify by requesting the pages:

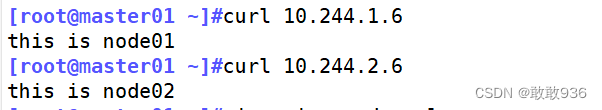

#on node01

echo "this is node01" > /data/index.html

#on node02

echo "this is node02" > /data/index.html

curl 10.244.1.6 #IP of the Pod on node01

curl 10.244.2.6 #IP of the Pod on node02

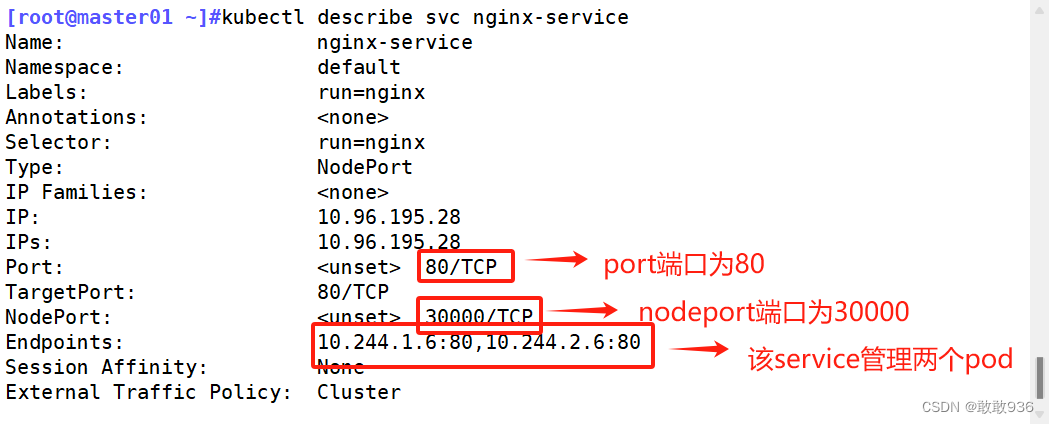

III. Write a Service YAML file that publishes the Nginx service using type NodePort on TCP port 30000.

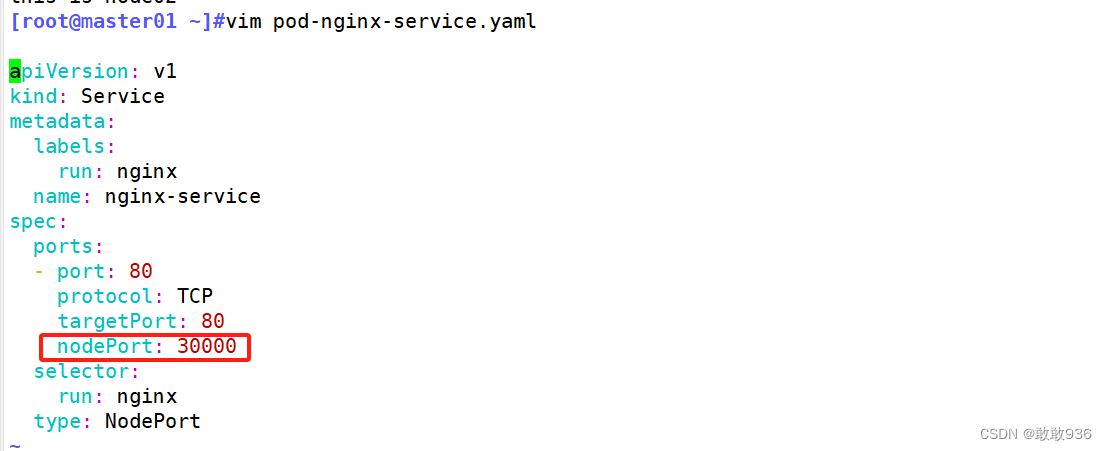

1. Write the Service YAML file:

    apiVersion: v1
    kind: Service
    metadata:
      labels:
        run: nginx
      name: nginx-service
    spec:
      ports:
        - port: 80
          protocol: TCP
          targetPort: 80
          nodePort: 30000
      selector:
        run: nginx
      type: NodePort

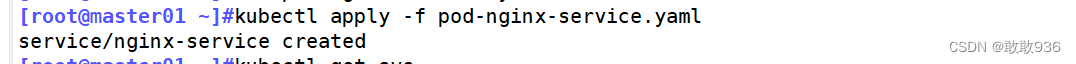

2. Apply the YAML file:

[root@master01 ~]#kubectl apply -f pod-nginx-service.yaml

service/nginx-service created

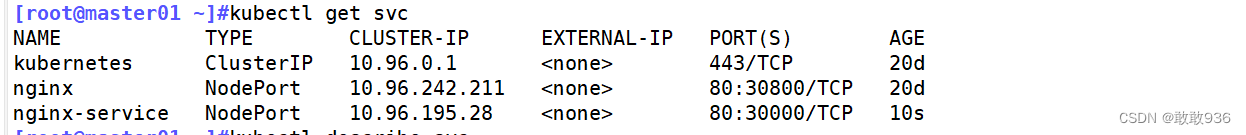

3. Inspect the Service:

kubectl get svc

kubectl describe svc nginx-service

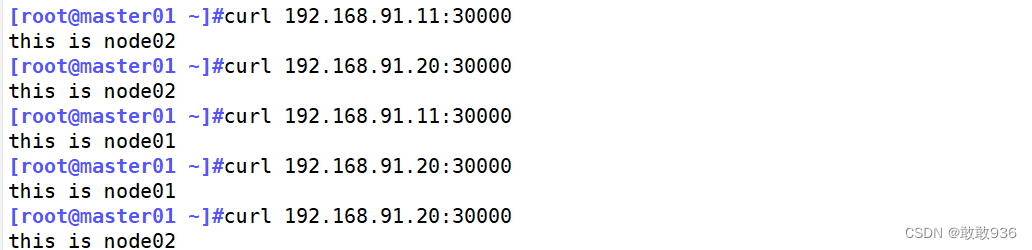

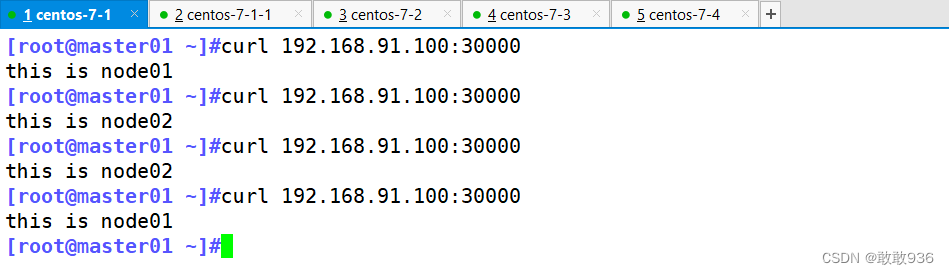

4. Access the page:

[root@master01 ~]#curl 192.168.91.11:30000

[root@master01 ~]#curl 192.168.91.20:30000

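IV. Configure Keepalived+Nginx in the load-balancer zone for highly available load balancing, so that the service published by Kubernetes is reachable through VIP 192.168.91.100 and a custom port.

| Role | IP |
|---|---|
| lb01 | 192.168.91.30 |
| lb02 | 192.168.91.40 |
| VIP | 192.168.91.100 |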

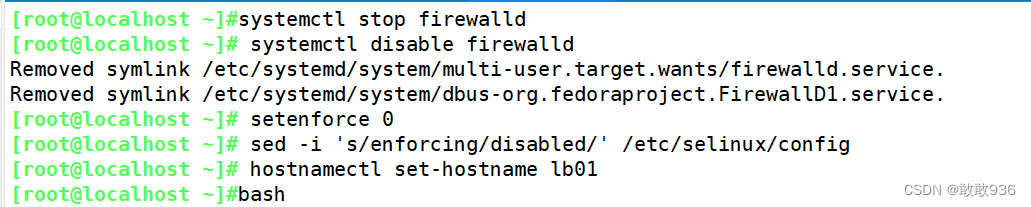

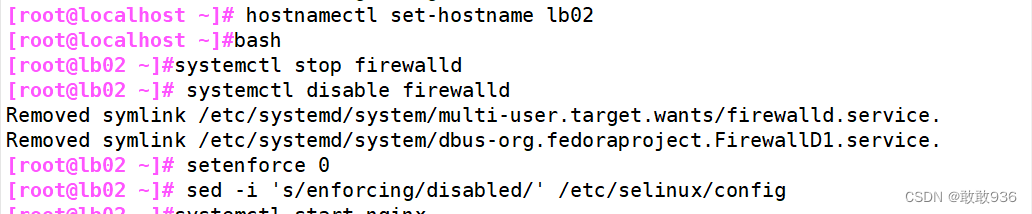

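1. Initial setup:

#disable the firewall and SELinux

systemctl stop firewalld

systemctl disable firewalld

setenforce 0

sed -i 's/enforcing/disabled/' /etc/selinux/config

#set the hostname (lb01 on the first machine, lb02 on the second)

hostnamectl set-hostname lb01

bash

hostnamectl set-hostname lb02

bash

2. Configure the official nginx online yum repository: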

vim /etc/yum.repos.d/nginx.repo

[nginx]

name=nginx repo

baseurl=https://nginx.org/packages/centos/7/$basearch/

gpgcheck=0

yum install nginx -y

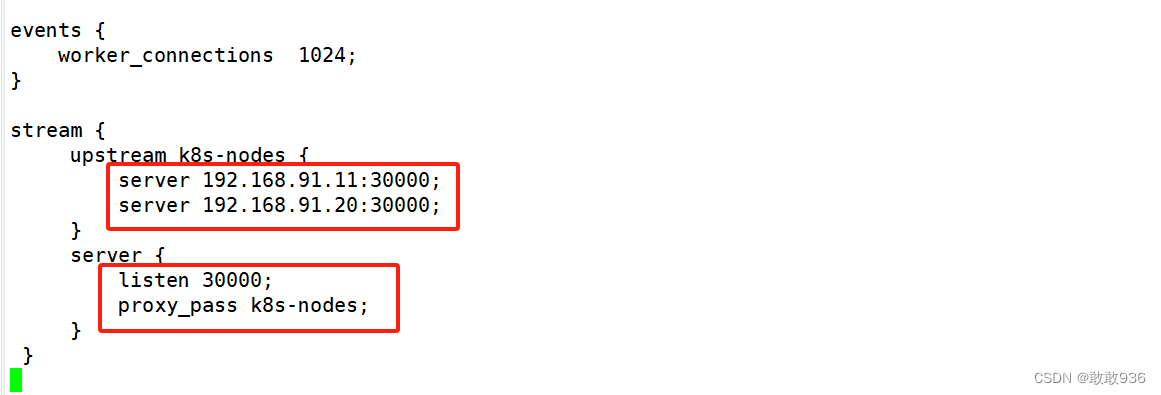

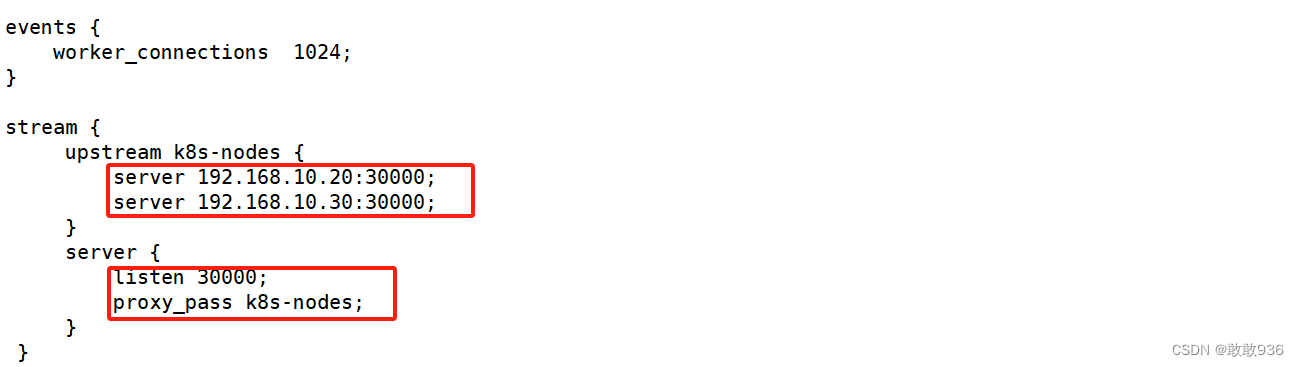

3. Edit the nginx configuration file to set up layer-4 reverse-proxy load balancing, pointing at the IPs of the two Kubernetes nodes and port 30000:

vim /etc/nginx/nginx.conf

    events {
        worker_connections 1024;
    }

    #add a stream block above the http block
    stream {
        upstream k8s-nodes {
            server 192.168.91.11:30000; #node01 IP:nodePort
            server 192.168.91.20:30000; #node02 IP:nodePort
        }
        server {
            listen 30000; #custom listen port
            proxy_pass k8s-nodes;
        }
    }

    http {
        ......
        #include /etc/nginx/conf.d/*.conf; #consider commenting this line out; otherwise /etc/nginx/conf.d/default.conf is loaded as well and nginx also listens on port 80
    }

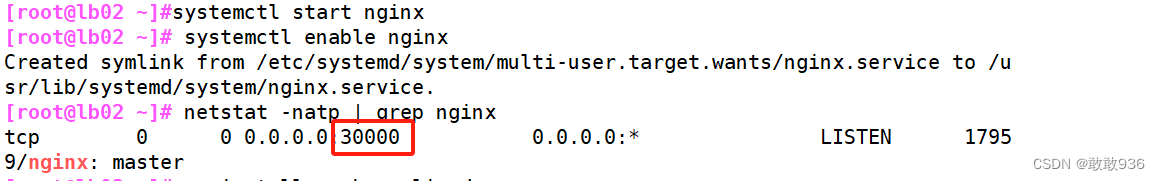

#check the configuration syntax

nginx -t

#start nginx and confirm it is listening on port 30000

systemctl start nginx

systemctl enable nginx

netstat -natp | grep nginx

4. Configure keepalived on both load balancers:

#install keepalived

yum install -y keepalived

#create the nginx check script in /etc/keepalived

cd /etc/keepalived/

vim check_nginx.sh

    #!/bin/bash
    #check whether nginx is running
    A=$(ps -C nginx --no-header | wc -l)
    if [ "$A" -eq 0 ]; then
        #nginx is not running, so try to start it
        systemctl start nginx
        if [ "$(ps -C nginx --no-header | wc -l)" -eq 0 ]; then
            #nginx failed to start, so stop keepalived to move the VIP
            killall keepalived
        fi
    fi

#make the script executable

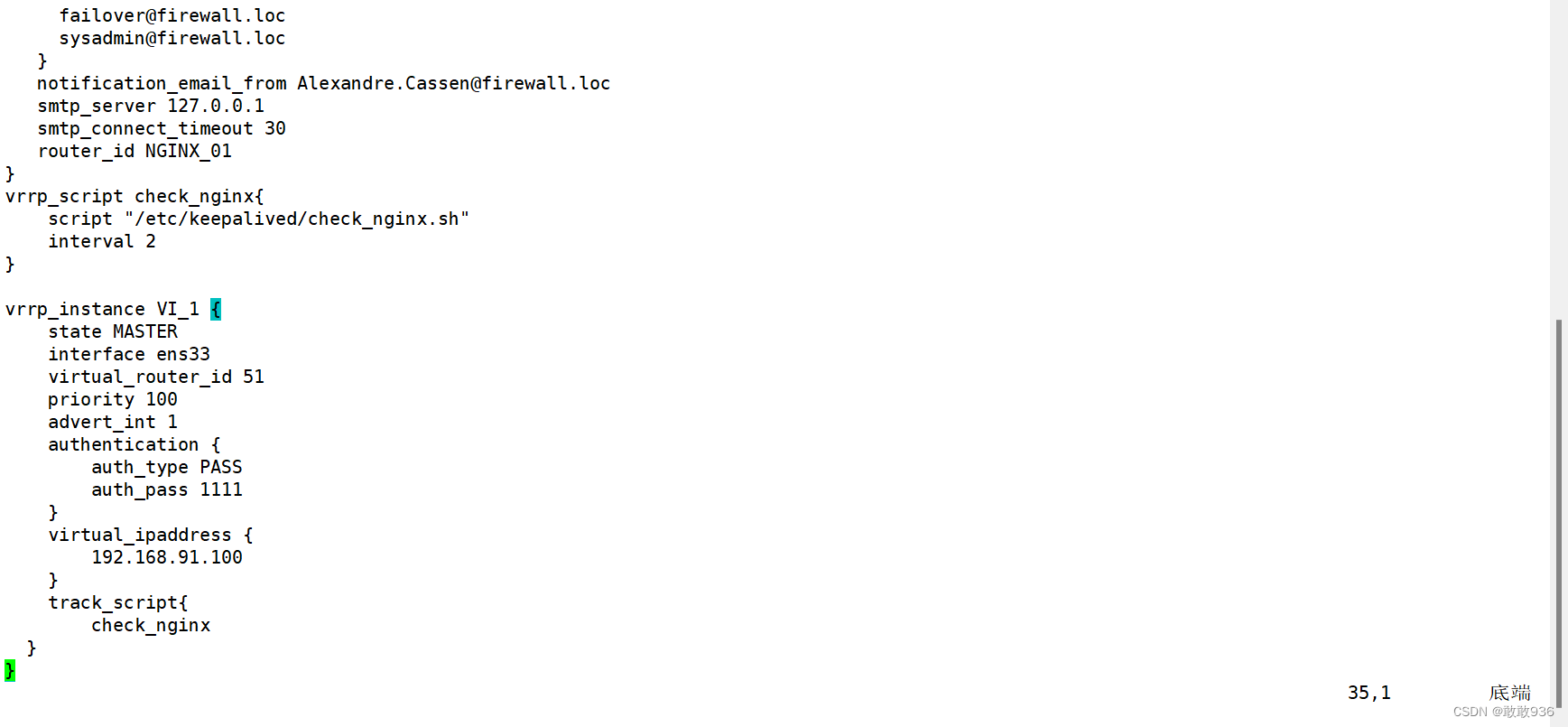

chmod +x check_nginx.sh

5. Edit the keepalived configuration file:

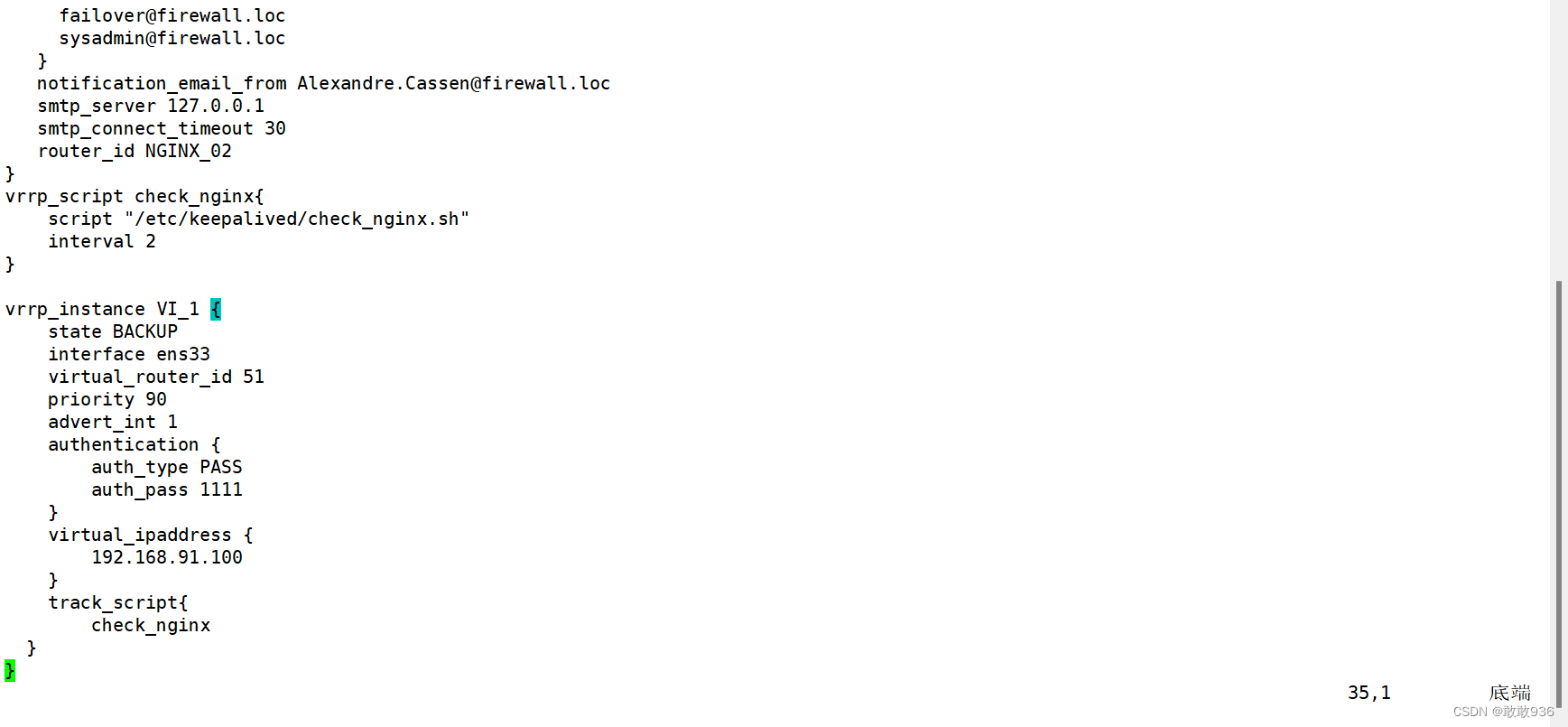

vim keepalived.conf

    ! Configuration File for keepalived

    global_defs {
        notification_email {
            acassen@firewall.loc
            failover@firewall.loc
            sysadmin@firewall.loc
        }
        notification_email_from Alexandre.Cassen@firewall.loc
        smtp_server 127.0.0.1 #change the mail server address
        smtp_connect_timeout 30
        router_id NGINX_01 #change the ID; master and backup use different IDs
        #delete the four vrrp lines here
    }

    #periodic check of the nginx service
    vrrp_script check_nginx {
        script "/etc/keepalived/check_nginx.sh" #script run on each check; verifies that nginx is running
        interval 2 #interval between script runs, in seconds
    }

    vrrp_instance VI_1 {
        state MASTER
        interface ens33 #change to the NIC name
        virtual_router_id 51
        priority 100 #priority; keep on the master, set below 100 on the backup
        advert_int 1
        authentication {
            auth_type PASS
            auth_pass 1111
        }
        virtual_ipaddress {
            192.168.91.100 #change to the VIP
        }
        #track the check script
        track_script {
            check_nginx
        }
    }

#restart the service

systemctl restart keepalived.service

systemctl enable keepalived.service

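#after installing keepalived on the backup server, copy the script and the keepalived configuration over from the master server

[root@lb01 keepalived]# scp * 192.168.91.40:`pwd`

#after copying, change three settings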

router_id NGINX_02

state BACKUP

priority 90

#then restart the service

systemctl restart keepalived.service

systemctl enable keepalived.service

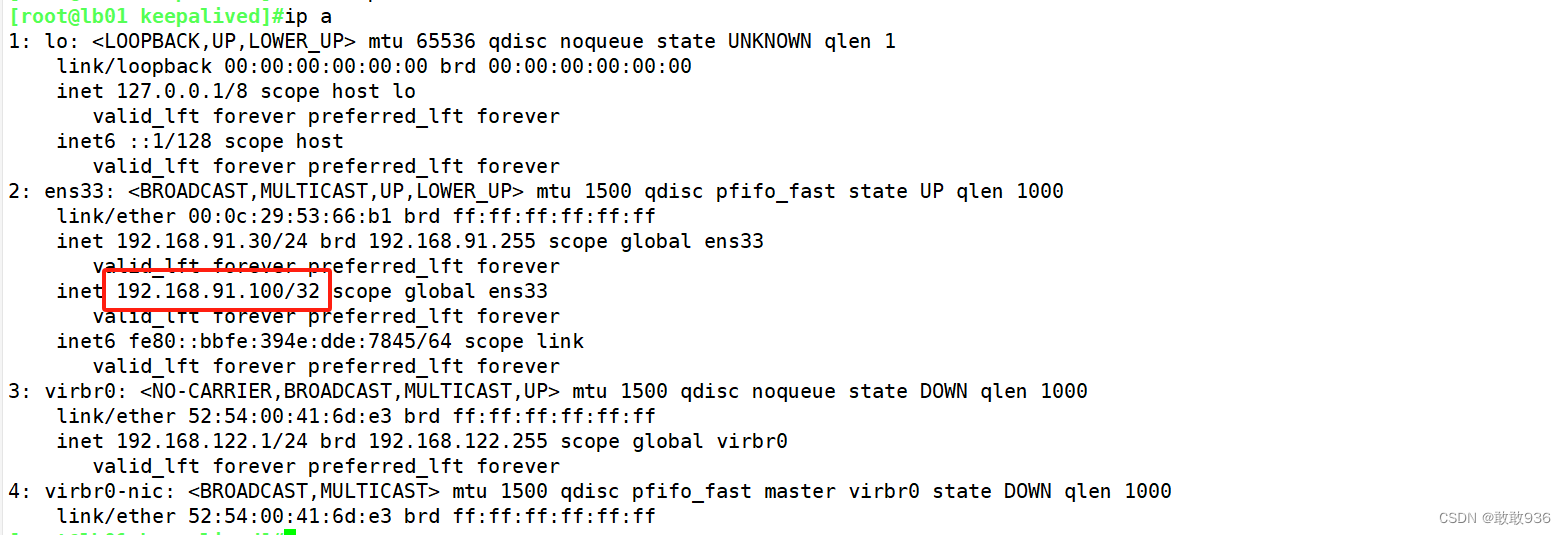

6. On the nginx+keepalived hosts, check that the VIP has been assigned and test the page:
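No commands are listed for this step, so the following is only a sketch of one way to check it. It assumes the VIP is 192.168.91.100 (as in the table above) and the interface is ens33 (as in keepalived.conf); `has_vip` is a hypothetical helper that scans `ip -o -4 addr show` output from standard input.

```shell
#!/usr/bin/env bash
# Hypothetical helper: $1 = VIP, stdin = output of `ip -o -4 addr show`.
# Succeeds (exit 0) if one of the listed addresses equals the VIP.
has_vip() {
  awk -v vip="$1" '
    { split($4, a, "/"); if (a[1] == vip) found = 1 }   # field 4 is "address/prefix"
    END { exit !found }
  '
}
```

On the current master, `ip -o -4 addr show dev ens33 | has_vip 192.168.91.100 && echo "VIP present"` should report the address, and `curl 192.168.91.100:30000` should return one of the two test pages. Stopping keepalived on lb01 and repeating the check on lb02 exercises the failover.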

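V. Set up an iptables firewall server with two network interfaces, and configure SNAT and DNAT so that external clients can reach the internal web service through 12.0.0.1.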

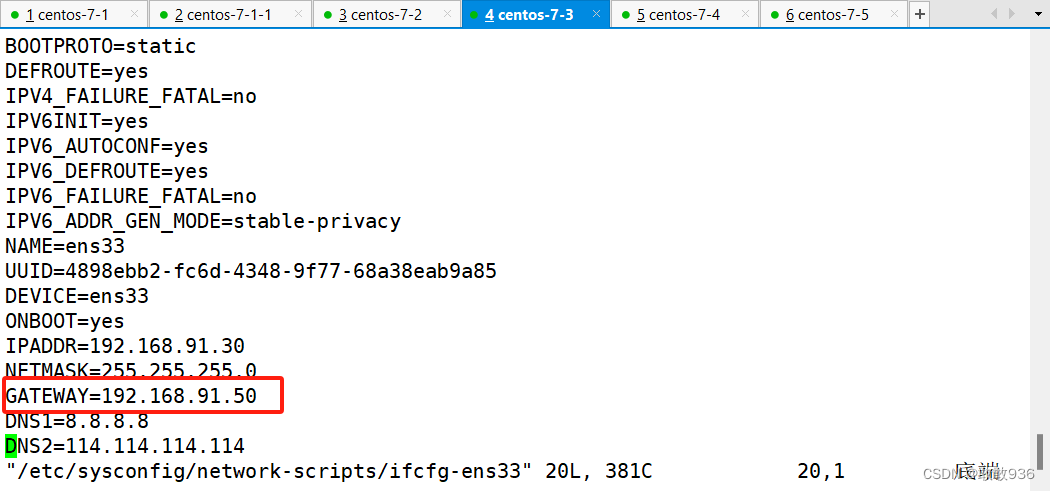

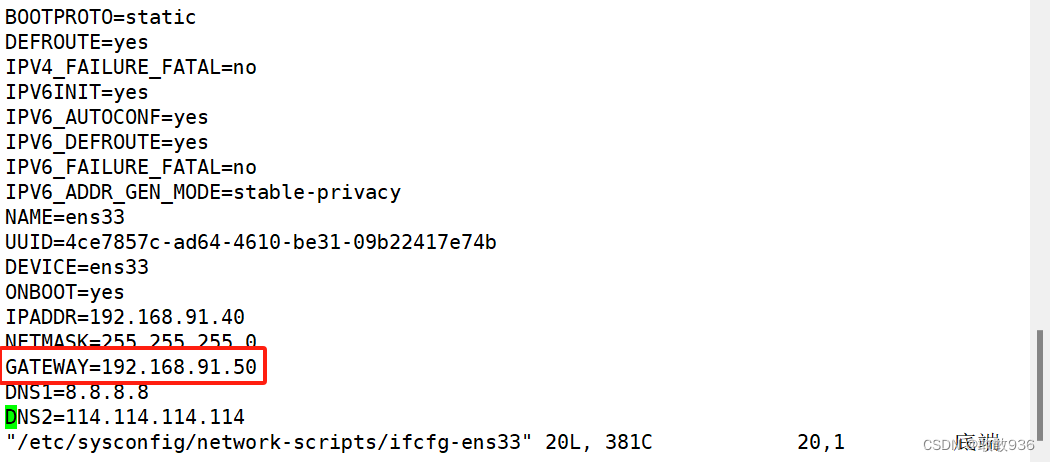

1. On both load balancers, set the gateway to the firewall server's internal IP address:

vim /etc/sysconfig/network-scripts/ifcfg-ens33

GATEWAY="192.168.10.1"

systemctl restart network #restart networking

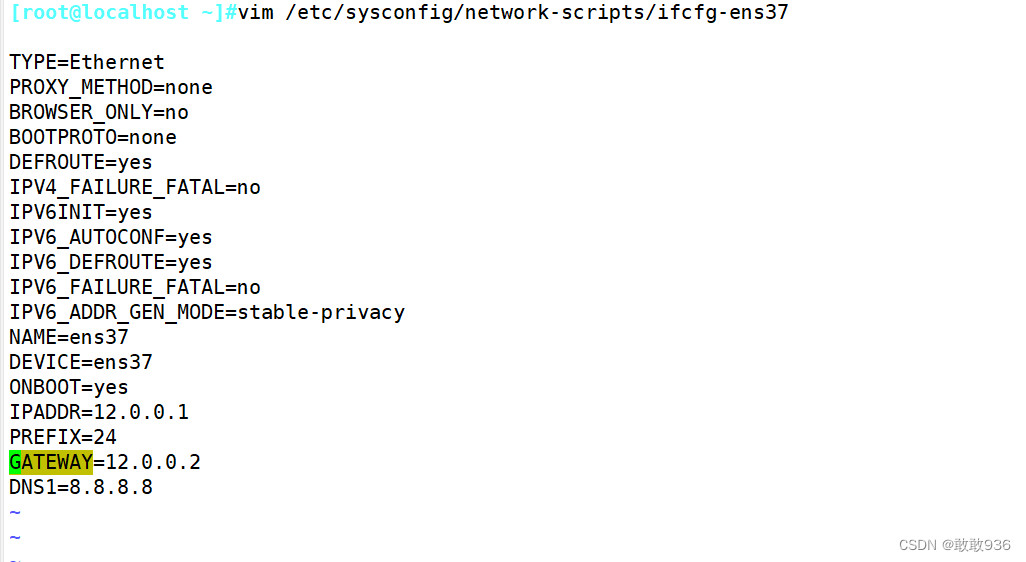

2. Configure the firewall server:

Configure the two network interfaces

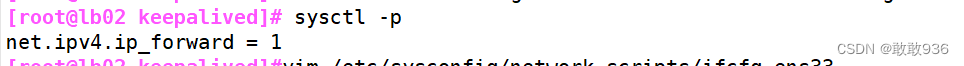

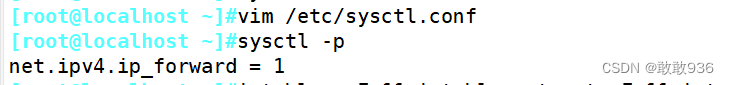

#------------enable IP forwarding----------------

vim /etc/sysctl.conf

#add this line to the file to enable IP forwarding

net.ipv4.ip_forward = 1

sysctl -p #load the modified configuration

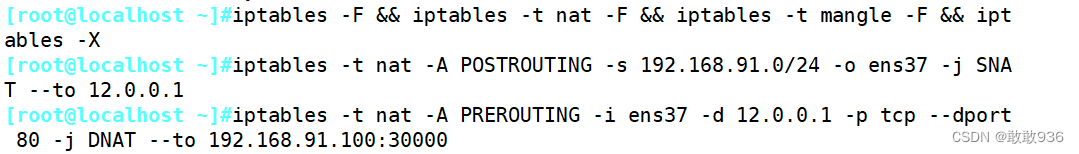

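#------------configure the iptables rules---------------

#first flush the existing rules

iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

#SNAT rewrites the source address; it goes in the POSTROUTING chain of the nat table

#translate source addresses in 192.168.91.0/24 to 12.0.0.1

iptables -t nat -A POSTROUTING -s 192.168.91.0/24 -o ens37 -j SNAT --to 12.0.0.1

#-t nat //use the nat table

#-A POSTROUTING //append the rule to the POSTROUTING chain

#-s 192.168.91.0/24 //source address of the packets

#-o ens37 //outbound interface

#-j SNAT --to 12.0.0.1 //apply SNAT, translating the source address to the public IP address

#DNAT rewrites the destination address; it goes in the PREROUTING chain of the nat table

#translate destination 12.0.0.1:80 to 192.168.91.100:30000

iptables -t nat -A PREROUTING -i ens37 -d 12.0.0.1 -p tcp --dport 80 -j DNAT --to 192.168.91.100:30000

#-A PREROUTING //append the rule to the PREROUTING chain

#-i ens37 //inbound interface

#-d 12.0.0.1 //destination address of the packets

#-p tcp --dport 80 //protocol and destination port of the packets

#-j DNAT --to 192.168.91.100:30000 //apply DNAT, translating the destination address and port to 192.168.91.100:30000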
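To make the relationship between the two rules easier to see, the sketch below prints the SNAT and DNAT commands from their parameters without touching the firewall. `print_nat_rules` is a hypothetical helper, and it spells the targets with the long option names `--to-source` and `--to-destination`, for which `--to` is an abbreviation.

```shell
#!/usr/bin/env bash
# Hypothetical dry-run helper: print (do not run) the SNAT and DNAT rules.
# $1 internal subnet, $2 external interface, $3 public IP,
# $4 public port, $5 internal target as ip:port
print_nat_rules() {
  local lan="$1" wan_if="$2" public_ip="$3" public_port="$4" target="$5"
  # Outbound: packets leaving via the external interface get the public source IP.
  printf 'iptables -t nat -A POSTROUTING -s %s -o %s -j SNAT --to-source %s\n' \
    "$lan" "$wan_if" "$public_ip"
  # Inbound: packets to the public IP and port are redirected to the internal target.
  printf 'iptables -t nat -A PREROUTING -i %s -d %s -p tcp --dport %s -j DNAT --to-destination %s\n' \
    "$wan_if" "$public_ip" "$public_port" "$target"
}
```

With the values used in this section, the call would be `print_nat_rules 192.168.91.0/24 ens37 12.0.0.1 80 192.168.91.100:30000`. After applying the real rules, `iptables -t nat -S` lists what is installed.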

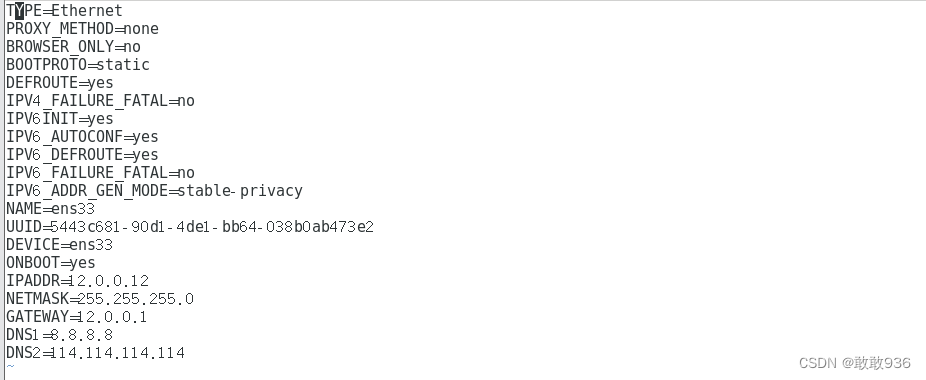

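3. On the client, set the gateway and test access to the internal web service:

Client IP address: 12.0.0.12. Set the gateway to the firewall server's external interface address, 12.0.0.1.

Open 12.0.0.1 in a browser, or run curl 12.0.0.1, to test access.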