p54-56 k8s核心实战 service服务发现

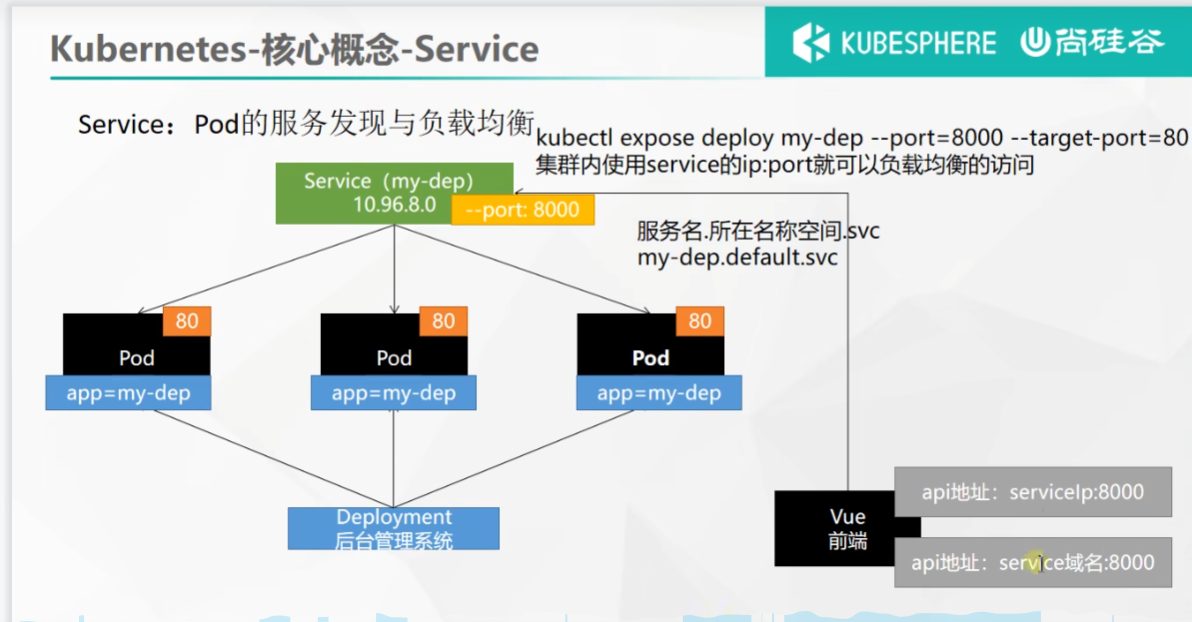

Service:将一组 Pods 公开为网络服务的抽象方法。

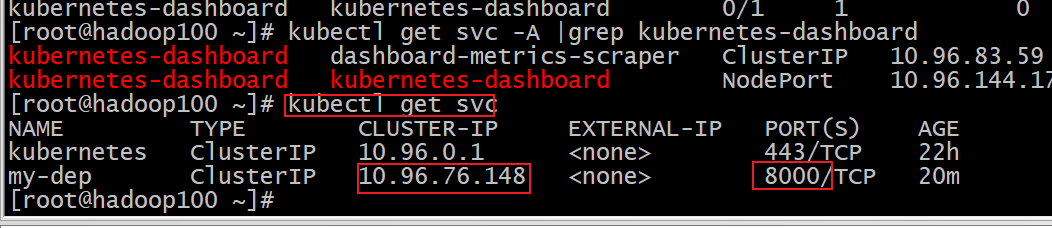

#暴露Deploy,暴露deploy会出现在svc

kubectl expose deployment my-dep --port=8000 --target-port=80#使用标签检索Pod

kubectl get pod -l app=my-dep

apiVersion: v1

kind: Service

metadata:labels:app: my-depname: my-dep

spec:selector:app: my-depports:- port: 8000protocol: TCPtargetPort: 80

ClusterIP

拿到service的ip和port

# 等同于没有--type的

kubectl expose deployment my-dep --port=8000 --target-port=80 --type=ClusterIP

apiVersion: v1

kind: Service

metadata:labels:app: my-depname: my-dep

spec:ports:- port: 8000protocol: TCPtargetPort: 80selector:app: my-deptype: ClusterIP

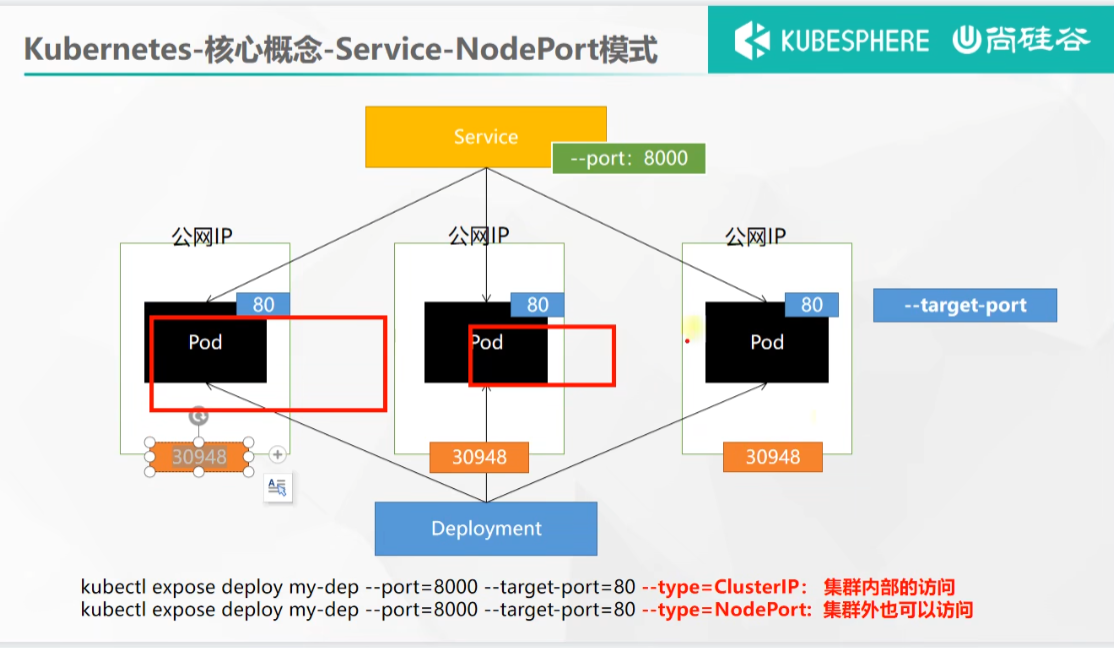

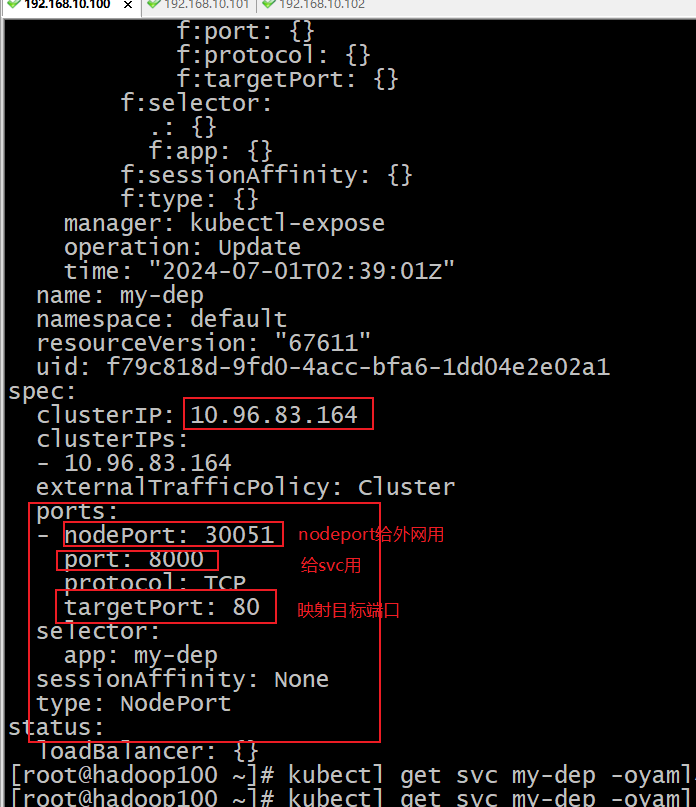

NodePort

kubectl expose deployment my-dep --port=8000 --target-port=80 --type=NodePort

apiVersion: v1

kind: Service

metadata:labels:app: my-depname: my-dep

spec:ports:- port: 8000protocol: TCPtargetPort: 80selector:app: my-deptype: NodePort区别

区别:nodeport模式在可以访问公有网络也可以通过service网络访问 ,cluster只能够通过service网络访问

kubectl get svc my-dep -oyaml

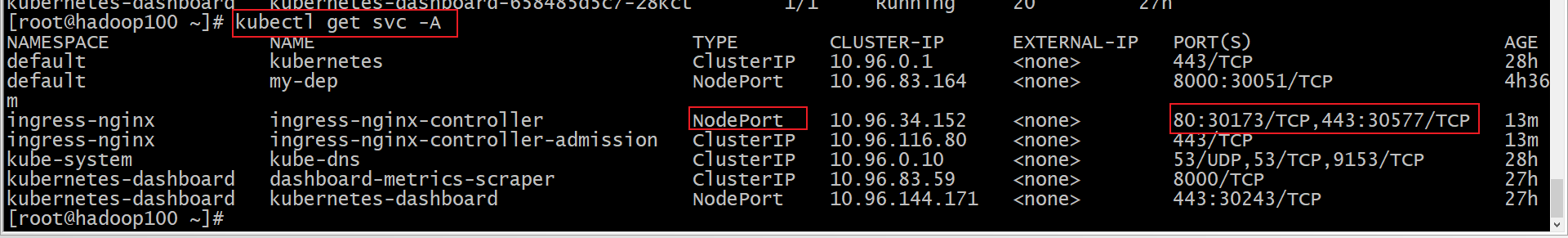

p57-61 ingress网络模型

1、安装

wget https://raw.githubusercontent.com/kubernetes/ingress-nginx/controller-v0.47.0/deploy/static/provider/baremetal/deploy.yaml#修改镜像

vi deploy.yaml

#将image的值改为如下值:

registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/ingress-nginx-controller:v0.46.0# 检查安装的结果

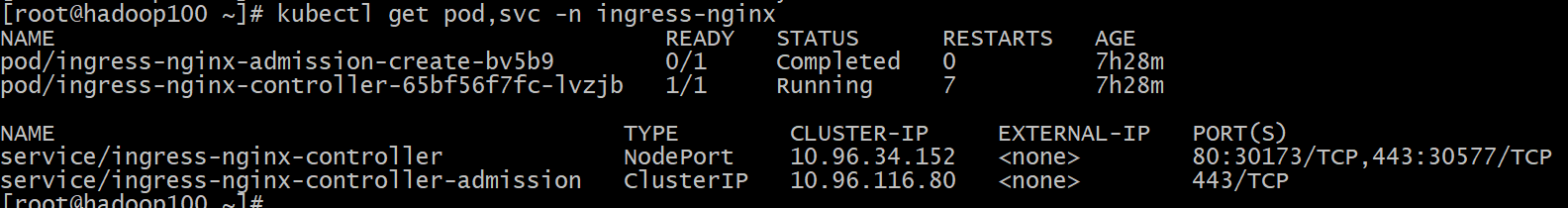

kubectl get pod,svc -n ingress-nginx# 最后别忘记把svc暴露的端口要放行

如果下载不到,用以下文件

apiVersion: v1

kind: Namespace

metadata:name: ingress-nginxlabels:app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginx---

# Source: ingress-nginx/templates/controller-serviceaccount.yaml

apiVersion: v1

kind: ServiceAccount

metadata:labels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: controllername: ingress-nginxnamespace: ingress-nginx

automountServiceAccountToken: true

---

# Source: ingress-nginx/templates/controller-configmap.yaml

apiVersion: v1

kind: ConfigMap

metadata:labels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: controllername: ingress-nginx-controllernamespace: ingress-nginx

data:

---

# Source: ingress-nginx/templates/clusterrole.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:labels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmname: ingress-nginx

rules:- apiGroups:- ''resources:- configmaps- endpoints- nodes- pods- secretsverbs:- list- watch- apiGroups:- ''resources:- nodesverbs:- get- apiGroups:- ''resources:- servicesverbs:- get- list- watch- apiGroups:- extensions- networking.k8s.io # k8s 1.14+resources:- ingressesverbs:- get- list- watch- apiGroups:- ''resources:- eventsverbs:- create- patch- apiGroups:- extensions- networking.k8s.io # k8s 1.14+resources:- ingresses/statusverbs:- update- apiGroups:- networking.k8s.io # k8s 1.14+resources:- ingressclassesverbs:- get- list- watch

---

# Source: ingress-nginx/templates/clusterrolebinding.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:labels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmname: ingress-nginx

roleRef:apiGroup: rbac.authorization.k8s.iokind: ClusterRolename: ingress-nginx

subjects:- kind: ServiceAccountname: ingress-nginxnamespace: ingress-nginx

---

# Source: ingress-nginx/templates/controller-role.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: Role

metadata:labels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: controllername: ingress-nginxnamespace: ingress-nginx

rules:- apiGroups:- ''resources:- namespacesverbs:- get- apiGroups:- ''resources:- configmaps- pods- secrets- endpointsverbs:- get- list- watch- apiGroups:- ''resources:- servicesverbs:- get- list- watch- apiGroups:- extensions- networking.k8s.io # k8s 1.14+resources:- ingressesverbs:- get- list- watch- apiGroups:- extensions- networking.k8s.io # k8s 1.14+resources:- ingresses/statusverbs:- update- apiGroups:- networking.k8s.io # k8s 1.14+resources:- ingressclassesverbs:- get- list- watch- apiGroups:- ''resources:- configmapsresourceNames:- ingress-controller-leader-nginxverbs:- get- update- apiGroups:- ''resources:- configmapsverbs:- create- apiGroups:- ''resources:- eventsverbs:- create- patch

---

# Source: ingress-nginx/templates/controller-rolebinding.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:labels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: controllername: ingress-nginxnamespace: ingress-nginx

roleRef:apiGroup: rbac.authorization.k8s.iokind: Rolename: ingress-nginx

subjects:- kind: ServiceAccountname: ingress-nginxnamespace: ingress-nginx

---

# Source: ingress-nginx/templates/controller-service-webhook.yaml

apiVersion: v1

kind: Service

metadata:labels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: controllername: ingress-nginx-controller-admissionnamespace: ingress-nginx

spec:type: ClusterIPports:- name: https-webhookport: 443targetPort: webhookselector:app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/component: controller

---

# Source: ingress-nginx/templates/controller-service.yaml

apiVersion: v1

kind: Service

metadata:annotations:labels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: controllername: ingress-nginx-controllernamespace: ingress-nginx

spec:type: NodePortports:- name: httpport: 80protocol: TCPtargetPort: http- name: httpsport: 443protocol: TCPtargetPort: httpsselector:app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/component: controller

---

# Source: ingress-nginx/templates/controller-deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:labels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: controllername: ingress-nginx-controllernamespace: ingress-nginx

spec:selector:matchLabels:app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/component: controllerrevisionHistoryLimit: 10minReadySeconds: 0template:metadata:labels:app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/component: controllerspec:dnsPolicy: ClusterFirstcontainers:- name: controllerimage: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/ingress-nginx-controller:v0.46.0imagePullPolicy: IfNotPresentlifecycle:preStop:exec:command:- /wait-shutdownargs:- /nginx-ingress-controller- --election-id=ingress-controller-leader- --ingress-class=nginx- --configmap=$(POD_NAMESPACE)/ingress-nginx-controller- --validating-webhook=:8443- --validating-webhook-certificate=/usr/local/certificates/cert- --validating-webhook-key=/usr/local/certificates/keysecurityContext:capabilities:drop:- ALLadd:- NET_BIND_SERVICErunAsUser: 101allowPrivilegeEscalation: trueenv:- name: POD_NAMEvalueFrom:fieldRef:fieldPath: metadata.name- name: POD_NAMESPACEvalueFrom:fieldRef:fieldPath: metadata.namespace- name: LD_PRELOADvalue: /usr/local/lib/libmimalloc.solivenessProbe:failureThreshold: 5httpGet:path: /healthzport: 10254scheme: HTTPinitialDelaySeconds: 10periodSeconds: 10successThreshold: 1timeoutSeconds: 1readinessProbe:failureThreshold: 3httpGet:path: /healthzport: 10254scheme: HTTPinitialDelaySeconds: 10periodSeconds: 10successThreshold: 1timeoutSeconds: 1ports:- name: httpcontainerPort: 80protocol: TCP- name: httpscontainerPort: 443protocol: TCP- name: webhookcontainerPort: 8443protocol: TCPvolumeMounts:- name: webhook-certmountPath: /usr/local/certificates/readOnly: trueresources:requests:cpu: 100mmemory: 90MinodeSelector:kubernetes.io/os: linuxserviceAccountName: ingress-nginxterminationGracePeriodSeconds: 300volumes:- name: webhook-certsecret:secretName: ingress-nginx-admission

---

# Source: ingress-nginx/templates/admission-webhooks/validating-webhook.yaml

# before changing this value, check the required kubernetes version

# https://kubernetes.io/docs/reference/access-authn-authz/extensible-admission-controllers/#prerequisites

apiVersion: admissionregistration.k8s.io/v1

kind: ValidatingWebhookConfiguration

metadata:labels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: admission-webhookname: ingress-nginx-admission

webhooks:- name: validate.nginx.ingress.kubernetes.iomatchPolicy: Equivalentrules:- apiGroups:- networking.k8s.ioapiVersions:- v1beta1operations:- CREATE- UPDATEresources:- ingressesfailurePolicy: FailsideEffects: NoneadmissionReviewVersions:- v1- v1beta1clientConfig:service:namespace: ingress-nginxname: ingress-nginx-controller-admissionpath: /networking/v1beta1/ingresses

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/serviceaccount.yaml

apiVersion: v1

kind: ServiceAccount

metadata:name: ingress-nginx-admissionannotations:helm.sh/hook: pre-install,pre-upgrade,post-install,post-upgradehelm.sh/hook-delete-policy: before-hook-creation,hook-succeededlabels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: admission-webhooknamespace: ingress-nginx

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/clusterrole.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:name: ingress-nginx-admissionannotations:helm.sh/hook: pre-install,pre-upgrade,post-install,post-upgradehelm.sh/hook-delete-policy: before-hook-creation,hook-succeededlabels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: admission-webhook

rules:- apiGroups:- admissionregistration.k8s.ioresources:- validatingwebhookconfigurationsverbs:- get- update

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/clusterrolebinding.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:name: ingress-nginx-admissionannotations:helm.sh/hook: pre-install,pre-upgrade,post-install,post-upgradehelm.sh/hook-delete-policy: before-hook-creation,hook-succeededlabels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: admission-webhook

roleRef:apiGroup: rbac.authorization.k8s.iokind: ClusterRolename: ingress-nginx-admission

subjects:- kind: ServiceAccountname: ingress-nginx-admissionnamespace: ingress-nginx

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/role.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: Role

metadata:name: ingress-nginx-admissionannotations:helm.sh/hook: pre-install,pre-upgrade,post-install,post-upgradehelm.sh/hook-delete-policy: before-hook-creation,hook-succeededlabels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: admission-webhooknamespace: ingress-nginx

rules:- apiGroups:- ''resources:- secretsverbs:- get- create

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/rolebinding.yaml

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:name: ingress-nginx-admissionannotations:helm.sh/hook: pre-install,pre-upgrade,post-install,post-upgradehelm.sh/hook-delete-policy: before-hook-creation,hook-succeededlabels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: admission-webhooknamespace: ingress-nginx

roleRef:apiGroup: rbac.authorization.k8s.iokind: Rolename: ingress-nginx-admission

subjects:- kind: ServiceAccountname: ingress-nginx-admissionnamespace: ingress-nginx

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/job-createSecret.yaml

apiVersion: batch/v1

kind: Job

metadata:name: ingress-nginx-admission-createannotations:helm.sh/hook: pre-install,pre-upgradehelm.sh/hook-delete-policy: before-hook-creation,hook-succeededlabels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: admission-webhooknamespace: ingress-nginx

spec:template:metadata:name: ingress-nginx-admission-createlabels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: admission-webhookspec:containers:- name: createimage: docker.io/jettech/kube-webhook-certgen:v1.5.1imagePullPolicy: IfNotPresentargs:- create- --host=ingress-nginx-controller-admission,ingress-nginx-controller-admission.$(POD_NAMESPACE).svc- --namespace=$(POD_NAMESPACE)- --secret-name=ingress-nginx-admissionenv:- name: POD_NAMESPACEvalueFrom:fieldRef:fieldPath: metadata.namespacerestartPolicy: OnFailureserviceAccountName: ingress-nginx-admissionsecurityContext:runAsNonRoot: truerunAsUser: 2000

---

# Source: ingress-nginx/templates/admission-webhooks/job-patch/job-patchWebhook.yaml

apiVersion: batch/v1

kind: Job

metadata:name: ingress-nginx-admission-patchannotations:helm.sh/hook: post-install,post-upgradehelm.sh/hook-delete-policy: before-hook-creation,hook-succeededlabels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: admission-webhooknamespace: ingress-nginx

spec:template:metadata:name: ingress-nginx-admission-patchlabels:helm.sh/chart: ingress-nginx-3.33.0app.kubernetes.io/name: ingress-nginxapp.kubernetes.io/instance: ingress-nginxapp.kubernetes.io/version: 0.47.0app.kubernetes.io/managed-by: Helmapp.kubernetes.io/component: admission-webhookspec:containers:- name: patchimage: docker.io/jettech/kube-webhook-certgen:v1.5.1imagePullPolicy: IfNotPresentargs:- patch- --webhook-name=ingress-nginx-admission- --namespace=$(POD_NAMESPACE)- --patch-mutating=false- --secret-name=ingress-nginx-admission- --patch-failure-policy=Failenv:- name: POD_NAMESPACEvalueFrom:fieldRef:fieldPath: metadata.namespacerestartPolicy: OnFailureserviceAccountName: ingress-nginx-admissionsecurityContext:runAsNonRoot: truerunAsUser: 2000

2、使用

官网地址:https://kubernetes.github.io/ingress-nginx/

就是nginx做的

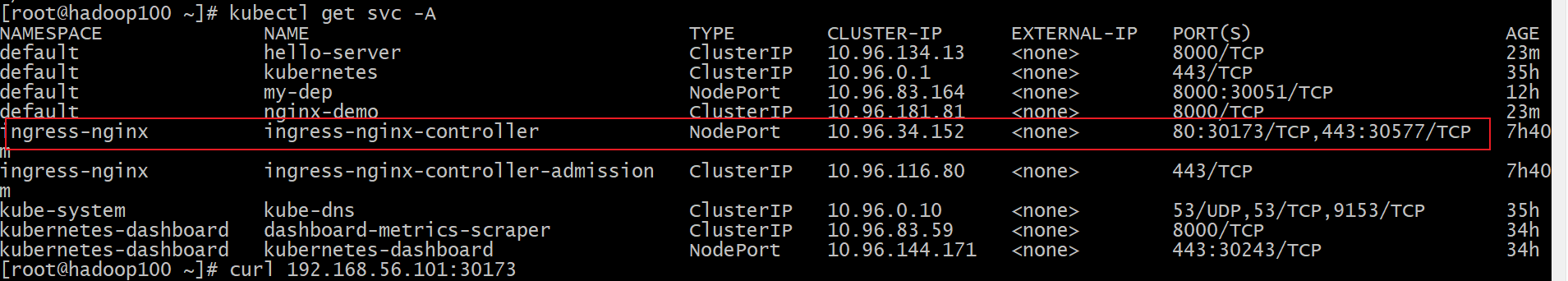

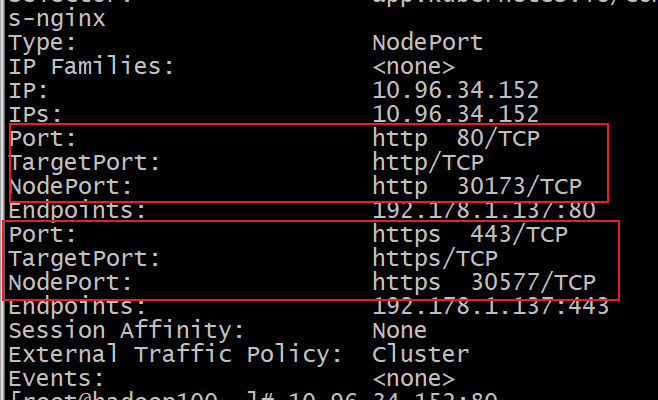

port私网用,nodeport公网用.targetport是目标端口

集群私网访问

http://10.96.34.152:80

https://10.96.34.152:443

NodePort模式下公网访问

http://192.168.10.101:30173/

https://192.168.10.101:30577/

测试环境

应用如下test.yaml,准备好测试环境 kubectl apply -f test.yaml,

部署pod和service

apiVersion: apps/v1

kind: Deployment

metadata:name: hello-server

spec:replicas: 2selector:matchLabels:app: hello-servertemplate:metadata:labels:app: hello-serverspec:containers:- name: hello-serverimage: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/hello-serverports:- containerPort: 9000

---

apiVersion: apps/v1

kind: Deployment

metadata:labels:app: nginx-demoname: nginx-demo

spec:replicas: 2selector:matchLabels:app: nginx-demotemplate:metadata:labels:app: nginx-demospec:containers:- image: nginxname: nginx

---

apiVersion: v1

kind: Service

metadata:labels:app: nginx-demoname: nginx-demo

spec:selector:app: nginx-demoports:- port: 8000protocol: TCPtargetPort: 80

---

apiVersion: v1

kind: Service

metadata:labels:app: hello-servername: hello-server

spec:selector:app: hello-serverports:- port: 8000protocol: TCPtargetPort: 9000

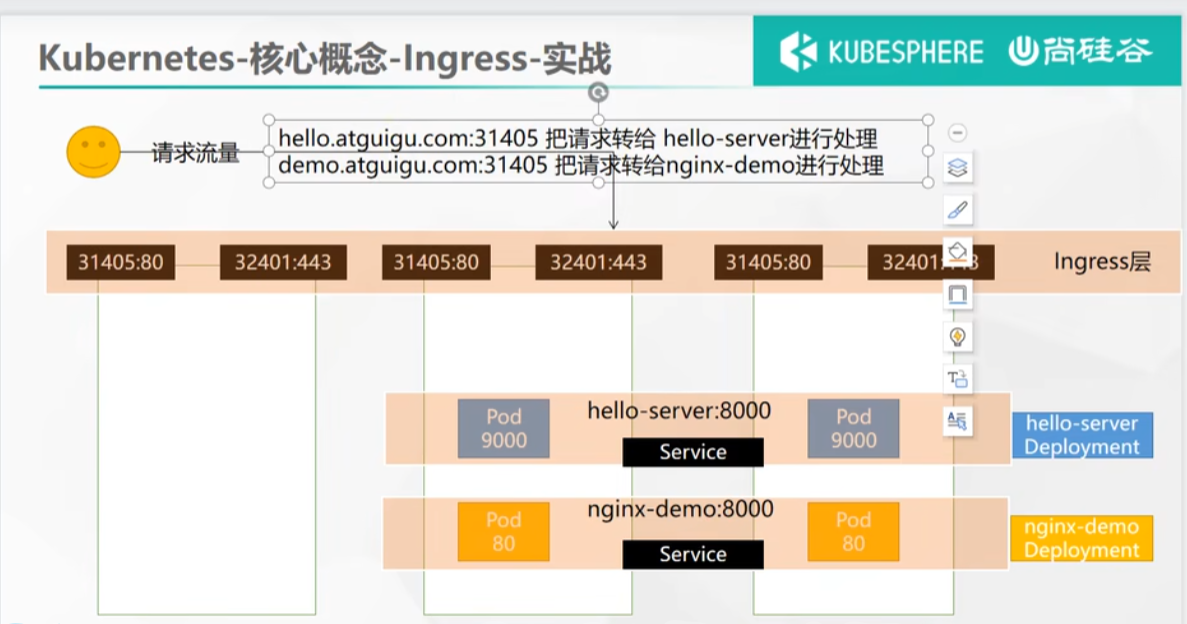

现在想要根据不同的域名访问不同的service

1、域名访问

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:name: ingress-host-bar

spec:ingressClassName: nginxrules:- host: "hello.atguigu.com"http:paths:- pathType: Prefixpath: "/"backend:service:name: hello-serverport:number: 8000- host: "demo.atguigu.com"http:paths:- pathType: Prefixpath: "/nginx" # 把请求会转给下面的服务,下面的服务一定要能处理这个路径,不能处理就是404backend:service:name: nginx-demo ## java,比如使用路径重写,去掉前缀nginxport:number: 8000

2、路径重写

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:annotations:nginx.ingress.kubernetes.io/rewrite-target: /$2name: ingress-host-bar

spec:ingressClassName: nginxrules:- host: "hello.atguigu.com"http:paths:- pathType: Prefixpath: "/"backend:service:name: hello-serverport:number: 8000- host: "demo.atguigu.com"http:paths:- pathType: Prefixpath: "/nginx(/|$)(.*)" # 把请求会转给下面的服务,下面的服务一定要能处理这个路径,不能处理就是404backend:service:name: nginx-demo ## java,比如使用路径重写,去掉前缀nginxport:number: 8000

3、流量限制

apiVersion: networking.k8s.io/v1

kind: Ingress

metadata:name: ingress-limit-rateannotations:nginx.ingress.kubernetes.io/limit-rps: "1"

spec:ingressClassName: nginxrules:- host: "haha.atguigu.com"http:paths:- pathType: Exactpath: "/"backend:service:name: nginx-demoport:number: 8000

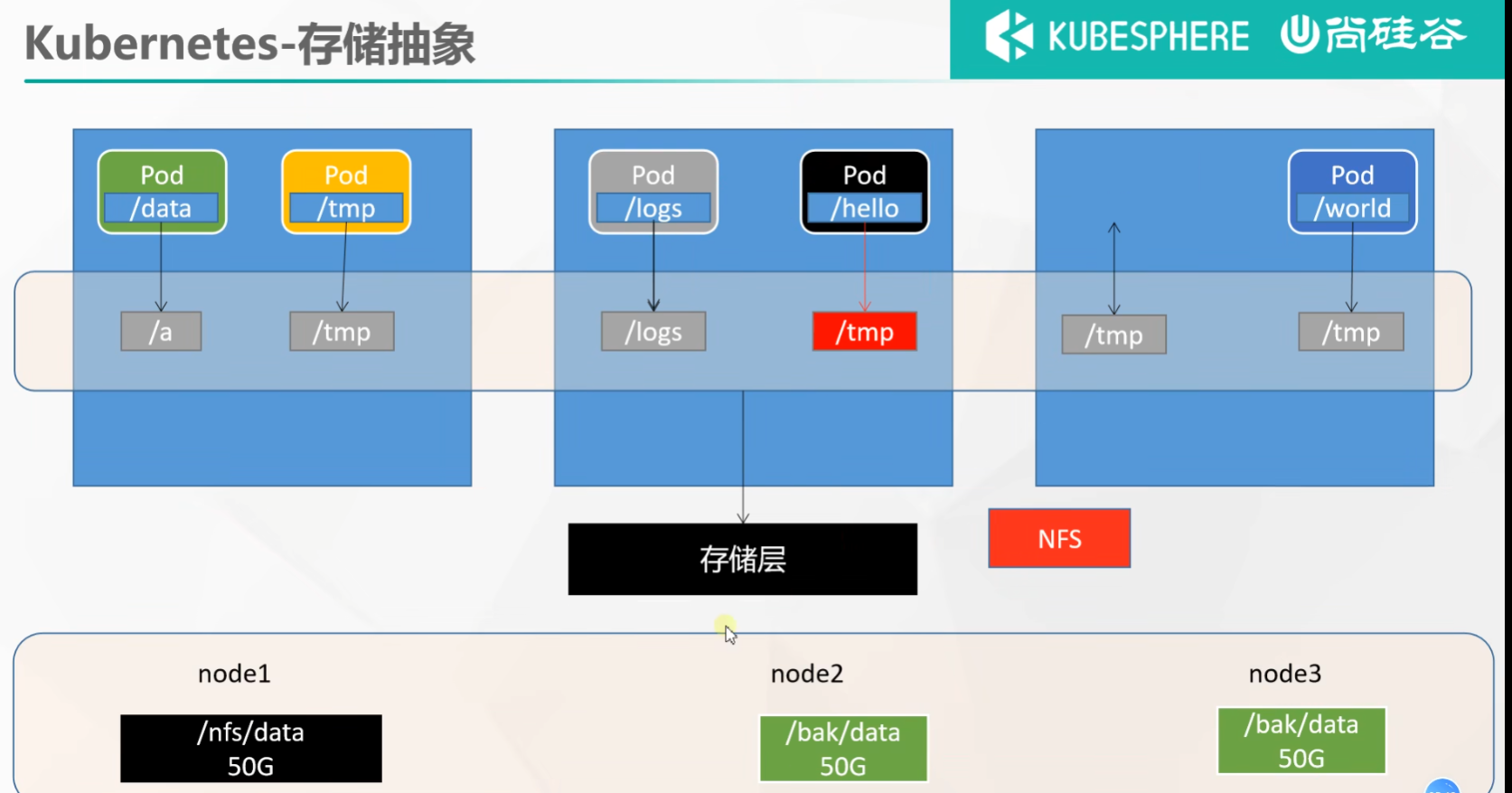

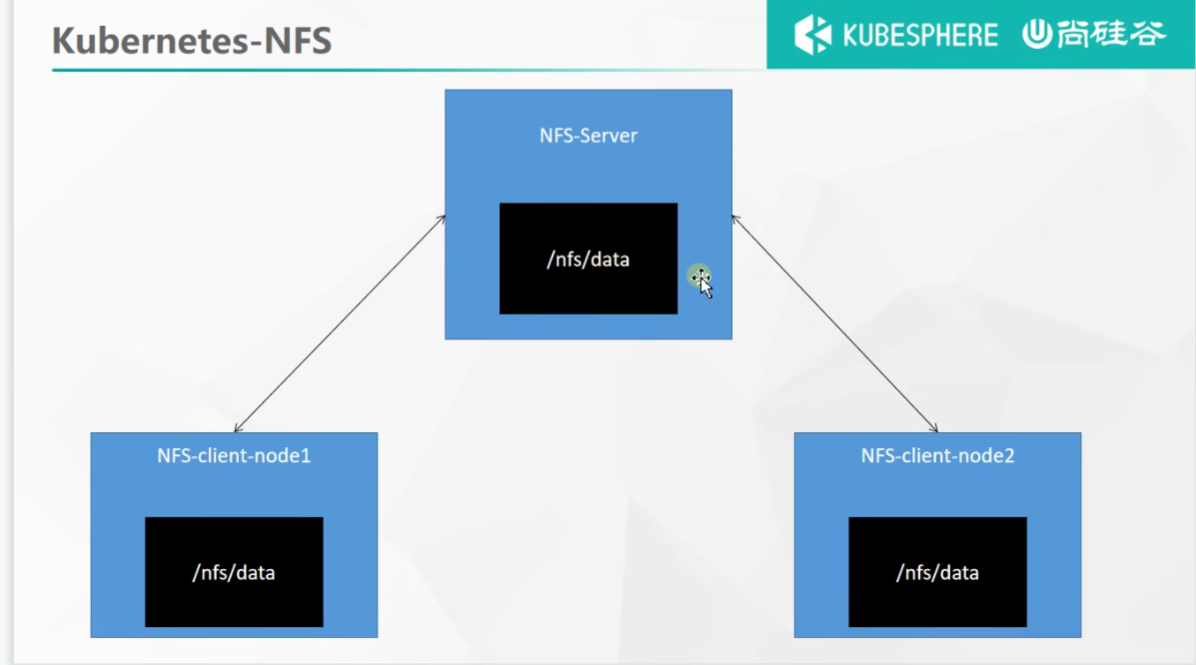

62 存储抽象基本概念 NFS搭建

传统的docker挂载不能解决分布式下问题,使用NFS

环境准备

1、所有节点

#所有机器安装

yum install -y nfs-utils

2、主节点

#nfs主节点

echo "/nfs/data/ *(insecure,rw,sync,no_root_squash)" > /etc/exportsmkdir -p /nfs/data

systemctl enable rpcbind --now

systemctl enable nfs-server --now

#配置生效

exportfs -r

3、从节点

showmount -e 172.31.0.4#执行以下命令挂载 nfs 服务器上的共享目录到本机路径 /root/nfsmount

mkdir -p /nfs/datamount -t nfs 172.31.0.4:/nfs/data /nfs/data

# 写入一个测试文件

echo "hello nfs server" > /nfs/data/test.txt

4、原生方式数据挂载

apiVersion: apps/v1

kind: Deployment

metadata:labels:app: nginx-pv-demoname: nginx-pv-demo

spec:replicas: 2selector:matchLabels:app: nginx-pv-demotemplate:metadata:labels:app: nginx-pv-demospec:containers:- image: nginxname: nginxvolumeMounts:- name: htmlmountPath: /usr/share/nginx/htmlvolumes:- name: htmlnfs:server: 192.168.10.100path: /nfs/data/nginx-pv

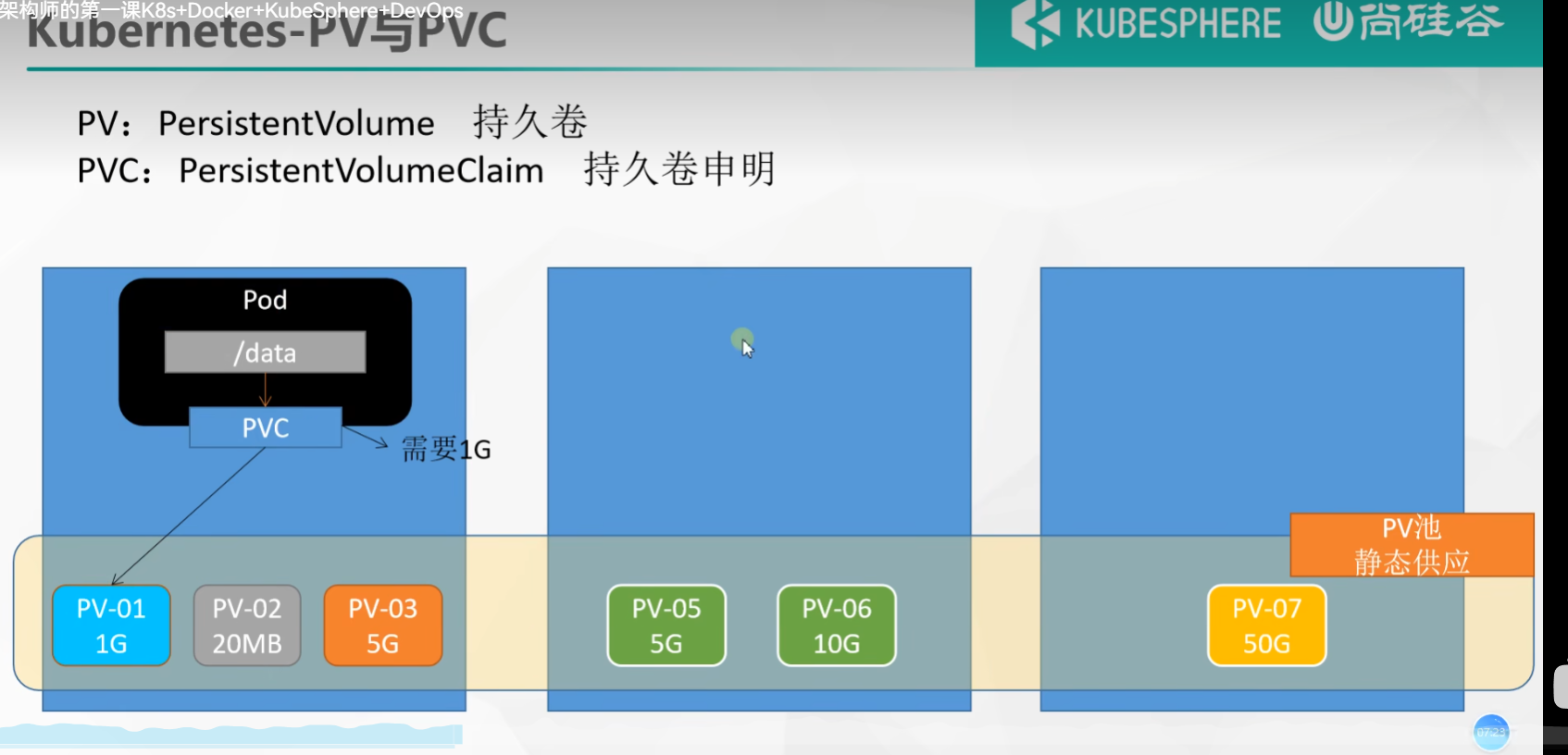

p64 pv 与pvc

1、PV&PVC

PV:持久卷(Persistent Volume),将应用需要持久化的数据保存到指定位置

PVC:持久卷申明(Persistent Volume Claim),申明需要使用的持久卷规格

1、创建pv池

静态供应

#nfs主节点

mkdir -p /nfs/data/01

mkdir -p /nfs/data/02

mkdir -p /nfs/data/03

创建PV

apiVersion: v1

kind: PersistentVolume

metadata:name: pv01-10m

spec:capacity:storage: 10MaccessModes:- ReadWriteManystorageClassName: nfsnfs:path: /nfs/data/01server: 192.168.10.100

---

apiVersion: v1

kind: PersistentVolume

metadata:name: pv02-1gi

spec:capacity:storage: 1GiaccessModes:- ReadWriteManystorageClassName: nfsnfs:path: /nfs/data/02server: 192.168.10.100

---

apiVersion: v1

kind: PersistentVolume

metadata:name: pv03-3gi

spec:capacity:storage: 3GiaccessModes:- ReadWriteManystorageClassName: nfsnfs:path: /nfs/data/03server: 192.168.10.100

2、PVC创建与绑定

创建PVC

kind: PersistentVolumeClaim

apiVersion: v1

metadata:name: nginx-pvc

spec:accessModes:- ReadWriteManyresources:requests:storage: 200MistorageClassName: nfs

创建Pod绑定PVC

apiVersion: apps/v1

kind: Deployment

metadata:labels:app: nginx-deploy-pvcname: nginx-deploy-pvc

spec:replicas: 2selector:matchLabels:app: nginx-deploy-pvctemplate:metadata:labels:app: nginx-deploy-pvcspec:containers:- image: nginxname: nginxvolumeMounts:- name: htmlmountPath: /usr/share/nginx/htmlvolumes:- name: htmlpersistentVolumeClaim:claimName: nginx-pvc

p65 66 ConfigMap重抽取配置

之前使用docker 挂载配置

现在使用pod挂载configmap配置

1、redis示例

1、把之前的配置文件创建为配置集

#创建配置,redis保存到k8s的etcd;

kubectl create cm redis-conf --from-file=redis.conf

ConfigMap详情

apiVersion: v1

data: #data是所有真正的数据,key:默认是文件名 value:配置文件的内容redis.conf: |appendonly yes

kind: ConfigMap

metadata:name: redis-confnamespace: default

2、创建Pod

apiVersion: v1

kind: Pod

metadata:name: redis

spec:containers:- name: redisimage: rediscommand:- redis-server- "/redis-master/redis.conf" #指的是redis容器内部的位置ports:- containerPort: 6379volumeMounts:- mountPath: /dataname: data- mountPath: /redis-mastername: configvolumes:- name: dataemptyDir: {}- name: configconfigMap:name: redis-confitems:- key: redis.confpath: redis.conf

3、Secret

Secret 对象类型用来保存敏感信息,例如密码、OAuth 令牌和 SSH 密钥。 将这些信息放在 secret 中比放在 Pod 的定义或者 容器镜像 中来说更加安全和灵活。

kubectl create secret docker-registry leifengyang-docker \

--docker-username=leifengyang \

--docker-password=Lfy123456 \

--docker-email=534096094@qq.com##命令格式

kubectl create secret docker-registry regcred \--docker-server=<你的镜像仓库服务器> \--docker-username=<你的用户名> \--docker-password=<你的密码> \--docker-email=<你的邮箱地址>

apiVersion: v1

kind: Pod

metadata:name: private-nginx

spec:containers:- name: private-nginximage: leifengyang/guignginx:v1.0imagePullSecrets:- name: leifengyang-docker

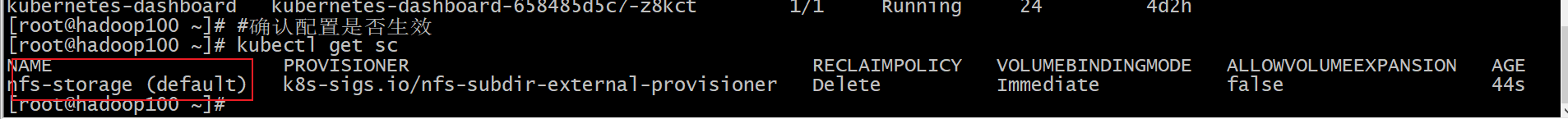

p 71 kubesphere 平台安装 安装前置环境 配置默认存储

前面已经安装的就不安装了,只安装要加的

1、nfs文件系统

配置默认存储

## 创建了一个存储类

apiVersion: storage.k8s.io/v1

kind: StorageClass

metadata:name: nfs-storageannotations:storageclass.kubernetes.io/is-default-class: "true"

provisioner: k8s-sigs.io/nfs-subdir-external-provisioner

parameters:archiveOnDelete: "true" ## 删除pv的时候,pv的内容是否要备份---

apiVersion: apps/v1

kind: Deployment

metadata:name: nfs-client-provisionerlabels:app: nfs-client-provisioner# replace with namespace where provisioner is deployednamespace: default

spec:replicas: 1strategy:type: Recreateselector:matchLabels:app: nfs-client-provisionertemplate:metadata:labels:app: nfs-client-provisionerspec:serviceAccountName: nfs-client-provisionercontainers:- name: nfs-client-provisionerimage: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/nfs-subdir-external-provisioner:v4.0.2# resources:# limits:# cpu: 10m# requests:# cpu: 10mvolumeMounts:- name: nfs-client-rootmountPath: /persistentvolumesenv:- name: PROVISIONER_NAMEvalue: k8s-sigs.io/nfs-subdir-external-provisioner- name: NFS_SERVERvalue: 192.168.10.100 ## 指定自己nfs服务器地址- name: NFS_PATH value: /nfs/data ## nfs服务器共享的目录volumes:- name: nfs-client-rootnfs:server: 192.168.10.100path: /nfs/data

---

apiVersion: v1

kind: ServiceAccount

metadata:name: nfs-client-provisioner# replace with namespace where provisioner is deployednamespace: default

---

kind: ClusterRole

apiVersion: rbac.authorization.k8s.io/v1

metadata:name: nfs-client-provisioner-runner

rules:- apiGroups: [""]resources: ["nodes"]verbs: ["get", "list", "watch"]- apiGroups: [""]resources: ["persistentvolumes"]verbs: ["get", "list", "watch", "create", "delete"]- apiGroups: [""]resources: ["persistentvolumeclaims"]verbs: ["get", "list", "watch", "update"]- apiGroups: ["storage.k8s.io"]resources: ["storageclasses"]verbs: ["get", "list", "watch"]- apiGroups: [""]resources: ["events"]verbs: ["create", "update", "patch"]

---

kind: ClusterRoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:name: run-nfs-client-provisioner

subjects:- kind: ServiceAccountname: nfs-client-provisioner# replace with namespace where provisioner is deployednamespace: default

roleRef:kind: ClusterRolename: nfs-client-provisioner-runnerapiGroup: rbac.authorization.k8s.io

---

kind: Role

apiVersion: rbac.authorization.k8s.io/v1

metadata:name: leader-locking-nfs-client-provisioner# replace with namespace where provisioner is deployednamespace: default

rules:- apiGroups: [""]resources: ["endpoints"]verbs: ["get", "list", "watch", "create", "update", "patch"]

---

kind: RoleBinding

apiVersion: rbac.authorization.k8s.io/v1

metadata:name: leader-locking-nfs-client-provisioner# replace with namespace where provisioner is deployednamespace: default

subjects:- kind: ServiceAccountname: nfs-client-provisioner# replace with namespace where provisioner is deployednamespace: default

roleRef:kind: Rolename: leader-locking-nfs-client-provisionerapiGroup: rbac.authorization.k8s.io

#确认配置是否生效

kubectl get sc

2、metrics-server

集群指标监控组件

apiVersion: v1

kind: ServiceAccount

metadata:labels:k8s-app: metrics-servername: metrics-servernamespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:labels:k8s-app: metrics-serverrbac.authorization.k8s.io/aggregate-to-admin: "true"rbac.authorization.k8s.io/aggregate-to-edit: "true"rbac.authorization.k8s.io/aggregate-to-view: "true"name: system:aggregated-metrics-reader

rules:

- apiGroups:- metrics.k8s.ioresources:- pods- nodesverbs:- get- list- watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRole

metadata:labels:k8s-app: metrics-servername: system:metrics-server

rules:

- apiGroups:- ""resources:- pods- nodes- nodes/stats- namespaces- configmapsverbs:- get- list- watch

---

apiVersion: rbac.authorization.k8s.io/v1

kind: RoleBinding

metadata:labels:k8s-app: metrics-servername: metrics-server-auth-readernamespace: kube-system

roleRef:apiGroup: rbac.authorization.k8s.iokind: Rolename: extension-apiserver-authentication-reader

subjects:

- kind: ServiceAccountname: metrics-servernamespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:labels:k8s-app: metrics-servername: metrics-server:system:auth-delegator

roleRef:apiGroup: rbac.authorization.k8s.iokind: ClusterRolename: system:auth-delegator

subjects:

- kind: ServiceAccountname: metrics-servernamespace: kube-system

---

apiVersion: rbac.authorization.k8s.io/v1

kind: ClusterRoleBinding

metadata:labels:k8s-app: metrics-servername: system:metrics-server

roleRef:apiGroup: rbac.authorization.k8s.iokind: ClusterRolename: system:metrics-server

subjects:

- kind: ServiceAccountname: metrics-servernamespace: kube-system

---

apiVersion: v1

kind: Service

metadata:labels:k8s-app: metrics-servername: metrics-servernamespace: kube-system

spec:ports:- name: httpsport: 443protocol: TCPtargetPort: httpsselector:k8s-app: metrics-server

---

apiVersion: apps/v1

kind: Deployment

metadata:labels:k8s-app: metrics-servername: metrics-servernamespace: kube-system

spec:selector:matchLabels:k8s-app: metrics-serverstrategy:rollingUpdate:maxUnavailable: 0template:metadata:labels:k8s-app: metrics-serverspec:containers:- args:- --cert-dir=/tmp- --kubelet-insecure-tls- --secure-port=4443- --kubelet-preferred-address-types=InternalIP,ExternalIP,Hostname- --kubelet-use-node-status-portimage: registry.cn-hangzhou.aliyuncs.com/lfy_k8s_images/metrics-server:v0.4.3imagePullPolicy: IfNotPresentlivenessProbe:failureThreshold: 3httpGet:path: /livezport: httpsscheme: HTTPSperiodSeconds: 10name: metrics-serverports:- containerPort: 4443name: httpsprotocol: TCPreadinessProbe:failureThreshold: 3httpGet:path: /readyzport: httpsscheme: HTTPSperiodSeconds: 10securityContext:readOnlyRootFilesystem: truerunAsNonRoot: truerunAsUser: 1000volumeMounts:- mountPath: /tmpname: tmp-dirnodeSelector:kubernetes.io/os: linuxpriorityClassName: system-cluster-criticalserviceAccountName: metrics-servervolumes:- emptyDir: {}name: tmp-dir

---

apiVersion: apiregistration.k8s.io/v1

kind: APIService

metadata:labels:k8s-app: metrics-servername: v1beta1.metrics.k8s.io

spec:group: metrics.k8s.iogroupPriorityMinimum: 100insecureSkipTLSVerify: trueservice:name: metrics-servernamespace: kube-systemversion: v1beta1versionPriority: 100

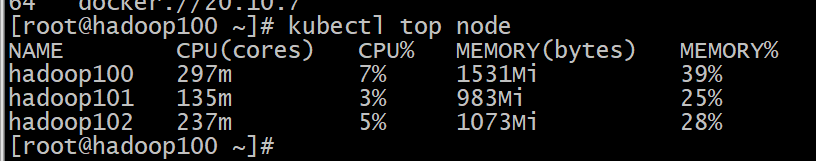

# 查看资源使用情况kubectl top nodekubectl top pod -A

p73全功能安装完成

安装KubeSphere

1、下载核心文件

wget https://github.com/kubesphere/ks-installer/releases/download/v3.1.1/kubesphere-installer.yamlwget https://github.com/kubesphere/ks-installer/releases/download/v3.1.1/cluster-configuration.yaml

2、修改cluster-configuration

在 cluster-configuration.yaml中指定我们需要开启的功能

参照官网“启用可插拔组件”

https://kubesphere.com.cn/docs/pluggable-components/overview/

3、执行安装

kubectl apply -f kubesphere-installer.yamlkubectl apply -f cluster-configuration.yaml4、查看安装进度

kubectl logs -n kubesphere-system $(kubectl get pod -n kubesphere-system -l app=ks-install -o jsonpath='{.items[0].metadata.name}') -f访问任意机器的 30880端口

账号 : admin

密码 : P@88w0rd

解决etcd监控证书找不到问题

kubectl -n kubesphere-monitoring-system create secret generic kube-etcd-client-certs --from-file=etcd-client-ca.crt=/etc/kubernetes/pki/etcd/ca.crt --from-file=etcd-client.crt=/etc/kubernetes/pki/apiserver-etcd-client.crt --from-file=etcd-client.key=/etc/kubernetes/pki/apiserver-etcd-client.key

附录 略