The starter code asks for PyTorch 1.0.0, but you do not actually need that exact version.

[Just delete the assert(torch.__version__ == "1.0.0") line in run.py.]
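If you would rather keep some sanity check than delete the assert outright, one option is to relax it to a minimum version. The following is only a minimal sketch: `version_tuple` and `check_min_version` are hypothetical helpers, not part of the assignment code, and they assume the version string starts with the usual major.minor[.patch] digits.

```python
import re


def version_tuple(version):
    """Turn a version string such as '1.13.1+cu117' into (1, 13, 1)."""
    match = re.match(r"(\d+)\.(\d+)(?:\.(\d+))?", version)
    if match is None:
        raise ValueError("unrecognized version string: {}".format(version))
    # A missing patch component (e.g. '1.0') is treated as 0.
    return tuple(int(part) if part is not None else 0 for part in match.groups())


def check_min_version(installed, minimum="1.0.0"):
    """Return True when `installed` is at least `minimum`."""
    return version_tuple(installed) >= version_tuple(minimum)
```

In run.py this could then be used as `assert check_min_version(torch.__version__)`, which accepts any newer release instead of exactly 1.0.0.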

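The code also contains hints telling you what to implement; once you understand what the code is doing, you are basically done. After reading enough of these assignments, the overall structure starts to look much the same. Read a lot, practice a lot, and take it step by step. You can do it!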

Reference: cs224n assignment3 2021

Problems (c) and (d):

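Find the file parser_transitions.py, open it, and fill in the blanks following the hints. (I added an import copy, because running run.py failed with an error saying copy was not defined.)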
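Before filling in the file, it may help to see the three transitions in isolation. The sketch below is a stripped-down illustration, not the assignment's PartialParse class: `apply_transition` and `trace` are hypothetical names, and the transition strings "S", "LA", "RA" follow the convention used in parser_transitions.py.

```python
def apply_transition(stack, buffer, dependencies, transition):
    """Apply one transition in place to the (stack, buffer, dependencies) state."""
    if transition == "S":
        # Shift: move the first word of the buffer onto the stack.
        stack.append(buffer.pop(0))
    elif transition == "LA":
        # Left-arc: the top of the stack becomes the head of the second item,
        # and the second item is removed from the stack.
        dependent = stack.pop(-2)
        dependencies.append((stack[-1], dependent))
    elif transition == "RA":
        # Right-arc: the second item becomes the head of the top item,
        # and the top item is removed from the stack.
        dependent = stack.pop()
        dependencies.append((stack[-1], dependent))
    else:
        raise ValueError("unknown transition: {}".format(transition))


def trace(sentence, transitions):
    """Run the transitions on a copy of the sentence, printing each state."""
    stack, buffer, dependencies = ["ROOT"], list(sentence), []
    for transition in transitions:
        apply_transition(stack, buffer, dependencies, transition)
        print(transition, stack, buffer, dependencies)
    return stack, buffer, dependencies


final_stack, final_buffer, final_deps = trace(
    ["parse", "this", "sentence"], ["S", "S", "S", "LA", "RA", "RA"])
```

The parse is finished when the buffer is empty and only ROOT remains on the stack, which is the same stopping condition the minibatch code checks.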

#!/usr/bin/env python3
# -*- coding: utf-8 -*-
"""
CS224N 2018-19: Homework 3
parser_transitions.py: Algorithms for completing partial parses.
Sahil Chopra <schopra8@stanford.edu>
"""

import sys
import copy


class PartialParse(object):
    def __init__(self, sentence):
        """Initializes this partial parse.

        @param sentence (list of str): The sentence to be parsed as a list of words.
                                        Your code should not modify the sentence.
        """
        # The sentence being parsed is kept for bookkeeping purposes. Do not alter it in your code.
        self.sentence = sentence

        ### YOUR CODE HERE (3 Lines)
        ### Your code should initialize the following fields:
        ###     self.stack: The current stack represented as a list with the top of the stack as the
        ###                 last element of the list.
        ###     self.buffer: The current buffer represented as a list with the first item on the
        ###                  buffer as the first item of the list
        ###     self.dependencies: The list of dependencies produced so far. Represented as a list of
        ###             tuples where each tuple is of the form (head, dependent).
        ###             Order for this list doesn't matter.
        ###
        ### Note: The root token should be represented with the string "ROOT"
        ###
        self.stack = ['ROOT']
        self.buffer = copy.deepcopy(sentence)  # copy so the input sentence is never modified
        self.dependencies = []
        ### END YOUR CODE

    def parse_step(self, transition):
        """Performs a single parse step by applying the given transition to this partial parse

        @param transition (str): A string that equals "S", "LA", or "RA" representing the shift,
                                 left-arc, and right-arc transitions. You can assume the provided
                                 transition is a legal transition.
        """
        ### YOUR CODE HERE (~7-10 Lines)
        ### TODO:
        ###     Implement a single parsing step, i.e. the logic for the following as
        ###     described in the pdf handout:
        ###         1. Shift
        ###         2. Left Arc
        ###         3. Right Arc
        if transition == 'S':
            # Shift: move the first buffer word onto the stack.
            self.stack.append(self.buffer.pop(0))
        elif transition == 'LA':
            # Left arc: top of the stack is the head, second item is the dependent.
            a = self.stack[-1]
            b = self.stack.pop(-2)
            self.dependencies.append((a, b))
        elif transition == 'RA':
            # Right arc: second item is the head, top of the stack is the dependent.
            a = self.stack.pop()
            b = self.stack[-1]
            self.dependencies.append((b, a))
        ### END YOUR CODE

    def parse(self, transitions):
        """Applies the provided transitions to this PartialParse

        @param transitions (list of str): The list of transitions in the order they should be applied

        @return dependencies (list of string tuples): The list of dependencies produced when
                                                      parsing the sentence. Represented as a list of
                                                      tuples where each tuple is of the form (head, dependent).
        """
        for transition in transitions:
            self.parse_step(transition)
        return self.dependencies


def minibatch_parse(sentences, model, batch_size):
    """Parses a list of sentences in minibatches using a model.

    @param sentences (list of list of str): A list of sentences to be parsed
                                            (each sentence is a list of words and each word is of type string)
    @param model (ParserModel): The model that makes parsing decisions. It is assumed to have a function
                                model.predict(partial_parses) that takes in a list of PartialParses as input and
                                returns a list of transitions predicted for each parse. That is, after calling
                                    transitions = model.predict(partial_parses)
                                transitions[i] will be the next transition to apply to partial_parses[i].
    @param batch_size (int): The number of PartialParses to include in each minibatch

    @return dependencies (list of dependency lists): A list where each element is the dependencies
                                                     list for a parsed sentence. Ordering should be the
                                                     same as in sentences (i.e., dependencies[i] should
                                                     contain the parse for sentences[i]).
    """
    dependencies = []

    ### YOUR CODE HERE (~8-10 Lines)
    ### TODO:
    ###     Implement the minibatch parse algorithm as described in the pdf handout
    ###
    ###     Note: A shallow copy (as denoted in the PDF) can be made with the "=" sign in python, e.g.
    ###                 unfinished_parses = partial_parses[:].
    ###             Here `unfinished_parses` is a shallow copy of `partial_parses`.
    ###             In Python, a shallow copied list like `unfinished_parses` does not contain new instances
    ###             of the object stored in `partial_parses`. Rather both lists refer to the same objects.
    ###             In our case, `partial_parses` contains a list of partial parses. `unfinished_parses`
    ###             contains references to the same objects. Thus, you should NOT use the `del` operator
    ###             to remove objects from the `unfinished_parses` list. This will free the underlying memory that
    ###             is being accessed by `partial_parses` and may cause your code to crash.
    partial_parses = []
    for i in sentences:
        partial_parses.append(PartialParse(i))
    dependencies = [[] for i in sentences]
    # Keep the original sentence index with each parse so results land in the right slot.
    unfinished_parses = [(i, parser) for i, parser in enumerate(partial_parses)]
    while (len(unfinished_parses) > 0):
        size = min(batch_size, len(unfinished_parses))
        now_parsers = unfinished_parses[:size]
        transitions = model.predict([parser for idx, parser in now_parsers])
        for i, data in enumerate(now_parsers):
            idx, parser = data
            a = parser.parse([transitions[i]])
            dependencies[idx] = a
            # A parse is complete when the buffer is empty and only ROOT is left on the stack.
            if len(parser.buffer) == 0 and len(parser.stack) == 1:
                unfinished_parses.remove(data)
    print(dependencies)  # debug output
    ### END YOUR CODE

    return dependencies


def test_step(name, transition, stack, buf, deps,
              ex_stack, ex_buf, ex_deps):
    """Tests that a single parse step returns the expected output"""
    pp = PartialParse([])
    pp.stack, pp.buffer, pp.dependencies = stack, buf, deps

    pp.parse_step(transition)
    stack, buf, deps = (tuple(pp.stack), tuple(pp.buffer), tuple(sorted(pp.dependencies)))
    assert stack == ex_stack, \
        "{:} test resulted in stack {:}, expected {:}".format(name, stack, ex_stack)
    assert buf == ex_buf, \
        "{:} test resulted in buffer {:}, expected {:}".format(name, buf, ex_buf)
    assert deps == ex_deps, \
        "{:} test resulted in dependency list {:}, expected {:}".format(name, deps, ex_deps)
    print("{:} test passed!".format(name))


def test_parse_step():
    """Simple tests for the PartialParse.parse_step function
    Warning: these are not exhaustive
    """
    test_step("SHIFT", "S", ["ROOT", "the"], ["cat", "sat"], [],
              ("ROOT", "the", "cat"), ("sat",), ())
    test_step("LEFT-ARC", "LA", ["ROOT", "the", "cat"], ["sat"], [],
              ("ROOT", "cat",), ("sat",), (("cat", "the"),))
    test_step("RIGHT-ARC", "RA", ["ROOT", "run", "fast"], [], [],
              ("ROOT", "run",), (), (("run", "fast"),))


def test_parse():
    """Simple tests for the PartialParse.parse function
    Warning: these are not exhaustive
    """
    sentence = ["parse", "this", "sentence"]
    dependencies = PartialParse(sentence).parse(["S", "S", "S", "LA", "RA", "RA"])
    dependencies = tuple(sorted(dependencies))
    expected = (('ROOT', 'parse'), ('parse', 'sentence'), ('sentence', 'this'))
    assert dependencies == expected, \
        "parse test resulted in dependencies {:}, expected {:}".format(dependencies, expected)
    assert tuple(sentence) == ("parse", "this", "sentence"), \
        "parse test failed: the input sentence should not be modified"
    print("parse test passed!")


class DummyModel(object):
    """Dummy model for testing the minibatch_parse function
    First shifts everything onto the stack and then does exclusively right arcs if the first word of
    the sentence is "right", "left" if otherwise.
    """
    def predict(self, partial_parses):
        # Compare strings with == rather than `is`; identity comparison on
        # string literals is unreliable and triggers a SyntaxWarning on newer Pythons.
        return [("RA" if pp.stack[1] == "right" else "LA") if len(pp.buffer) == 0 else "S"
                for pp in partial_parses]


def test_dependencies(name, deps, ex_deps):
    """Tests the provided dependencies match the expected dependencies"""
    deps = tuple(sorted(deps))
    assert deps == ex_deps, \
        "{:} test resulted in dependency list {:}, expected {:}".format(name, deps, ex_deps)


def test_minibatch_parse():
    """Simple tests for the minibatch_parse function
    Warning: these are not exhaustive
    """
    sentences = [["right", "arcs", "only"],
                 ["right", "arcs", "only", "again"],
                 ["left", "arcs", "only"],
                 ["left", "arcs", "only", "again"]]
    deps = minibatch_parse(sentences, DummyModel(), 2)
    test_dependencies("minibatch_parse", deps[0],
                      (('ROOT', 'right'), ('arcs', 'only'), ('right', 'arcs')))
    test_dependencies("minibatch_parse", deps[1],
                      (('ROOT', 'right'), ('arcs', 'only'), ('only', 'again'), ('right', 'arcs')))
    test_dependencies("minibatch_parse", deps[2],
                      (('only', 'ROOT'), ('only', 'arcs'), ('only', 'left')))
    test_dependencies("minibatch_parse", deps[3],
                      (('again', 'ROOT'), ('again', 'arcs'), ('again', 'left'), ('again', 'only')))
    print("minibatch_parse test passed!")


if __name__ == '__main__':
    args = sys.argv
    if len(args) != 2:
        raise Exception("You did not provide a valid keyword. Either provide 'part_c' or 'part_d', when executing this script")
    elif args[1] == "part_c":
        test_parse_step()
        test_parse()
    elif args[1] == "part_d":
        test_minibatch_parse()
    else:
        raise Exception("You did not provide a valid keyword. Either provide 'part_c' or 'part_d', when executing this script")

Problem (e):

parser_model.py
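The embedding_lookup method in this file boils down to two steps: pick one embedding row per word index, then concatenate the rows of each example into a single flat vector. A framework-free sketch with plain lists is shown here; `lookup_and_flatten` and the toy data are hypothetical and only illustrate the shapes involved, they are not the PyTorch implementation.

```python
def lookup_and_flatten(embeddings, w):
    """Select one embedding row per index and concatenate them per example.

    embeddings: list of rows, shape (num_words, embed_size)
    w:          list of index lists, shape (batch_size, n_features)
    returns:    list of flat lists, shape (batch_size, n_features * embed_size)
    """
    batch = []
    for indices in w:
        flat = []
        for i in indices:
            flat.extend(embeddings[i])  # append the i-th embedding vector
        batch.append(flat)
    return batch


toy_embeddings = [[0.0, 0.1], [1.0, 1.1], [2.0, 2.1]]  # 3 words, embed_size = 2
toy_w = [[2, 0], [1, 1]]                               # batch_size = 2, n_features = 2
toy_x = lookup_and_flatten(toy_embeddings, toy_w)
print(toy_x)
```

With tensors, the same effect comes from indexing the embedding matrix with the index tensor and reshaping the result to (batch_size, n_features * embed_size).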

import argparse
import numpy as np

import torch
import torch.nn as nn

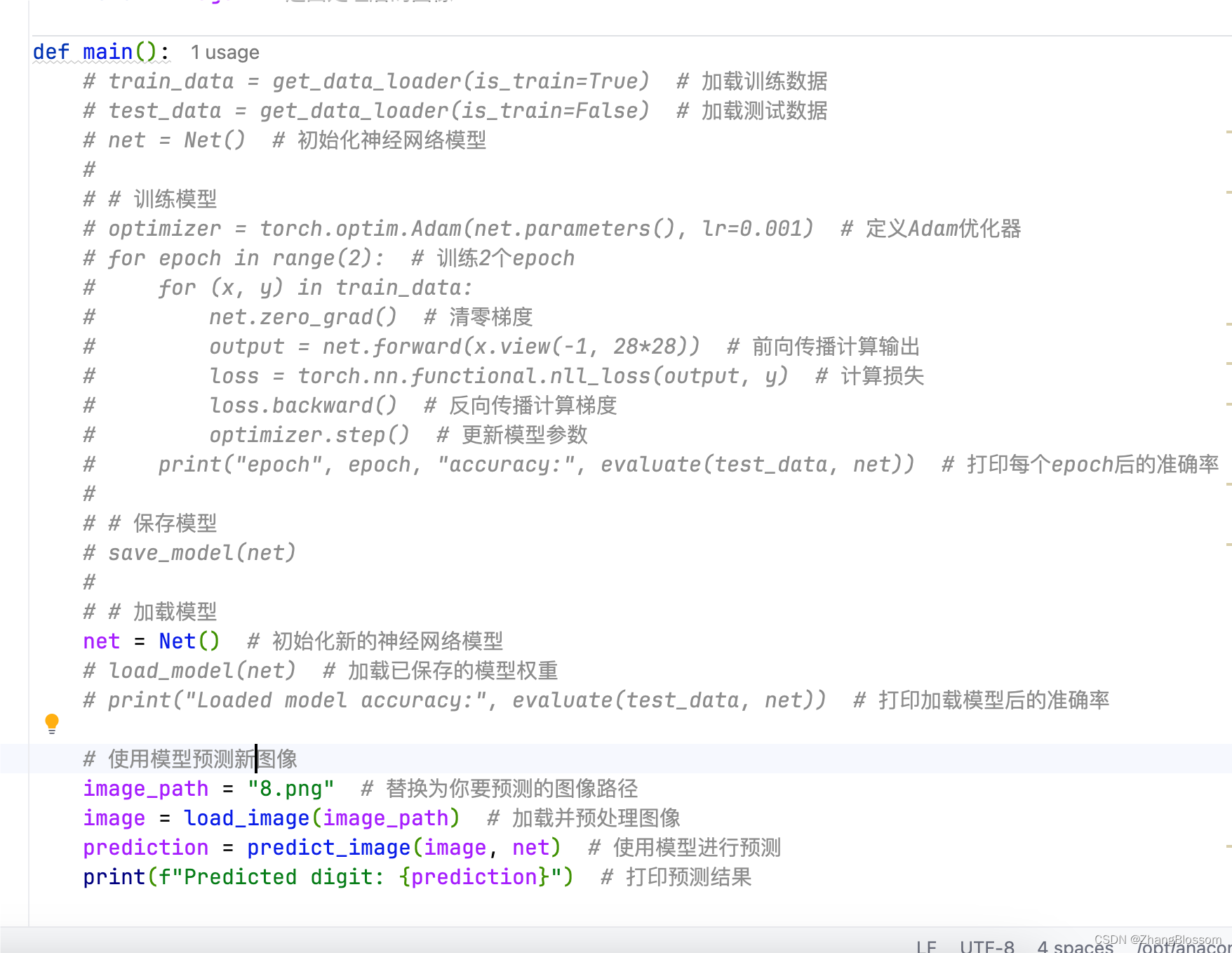

import torch.nn.functional as F


class ParserModel(nn.Module):
    """ Feedforward neural network with an embedding layer and two hidden layers.
    The ParserModel will predict which transition should be applied to a
    given partial parse configuration.

    PyTorch Notes:
        - Note that "ParserModel" is a subclass of the "nn.Module" class. In PyTorch all neural networks
            are a subclass of this "nn.Module".
        - The "__init__" method is where you define all the layers and parameters
            (embedding layers, linear layers, dropout layers, etc.).
        - "__init__" gets automatically called when you create a new instance of your class, e.g.
            when you write "m = ParserModel()".
        - Other methods of ParserModel can access variables that have "self." prefix. Thus,
            you should add the "self." prefix layers, values, etc. that you want to utilize
            in other ParserModel methods.
        - For further documentation on "nn.Module" please see https://pytorch.org/docs/stable/nn.html.
    """
    def __init__(self, embeddings, n_features=36,
                 hidden_size=200, n_classes=3, dropout_prob=0.5):
        """ Initialize the parser model.

        @param embeddings (ndarray): word embeddings (num_words, embedding_size)
        @param n_features (int): number of input features
        @param hidden_size (int): number of hidden units
        @param n_classes (int): number of output classes
        @param dropout_prob (float): dropout probability
        """
        super(ParserModel, self).__init__()
        self.n_features = n_features
        self.n_classes = n_classes
        self.dropout_prob = dropout_prob
        self.embed_size = embeddings.shape[1]
        self.hidden_size = hidden_size
        self.embeddings = nn.Parameter(torch.tensor(embeddings))

        ### YOUR CODE HERE (~9-10 Lines)
        ### TODO:
        ###     1) Declare `self.embed_to_hidden_weight` and `self.embed_to_hidden_bias` as `nn.Parameter`.
        ###        Initialize weight with the `nn.init.xavier_uniform_` function and bias with `nn.init.uniform_`
        ###        with default parameters.
        ###     2) Construct `self.dropout` layer.
        ###     3) Declare `self.hidden_to_logits_weight` and `self.hidden_to_logits_bias` as `nn.Parameter`.
        ###        Initialize weight with the `nn.init.xavier_uniform_` function and bias with `nn.init.uniform_`
        ###        with default parameters.
        ###
        ### Note: Trainable variables are declared as `nn.Parameter` which is a commonly used API
        ###       to include a tensor into a computational graph to support updating w.r.t its gradient.
        ###       Here, we use Xavier Uniform Initialization for our Weight initialization.
        ###       It has been shown empirically, that this provides better initial weights
        ###       for training networks than random uniform initialization.
        ###       For more details checkout this great blogpost:
        ###             http://andyljones.tumblr.com/post/110998971763/an-explanation-of-xavier-initialization
        ###
        ### Please see the following docs for support:
        ###     nn.Parameter: https://pytorch.org/docs/stable/nn.html#parameters
        ###     Initialization: https://pytorch.org/docs/stable/nn.init.html
        ###     Dropout: https://pytorch.org/docs/stable/nn.html#dropout-layers
        ###
        ### See the PDF for hints.
        # nn.Linear layers are used here in place of the separate weight/bias
        # nn.Parameter tensors that the TODO describes; each holds a weight and a bias.
        self.embed_to_hidden = nn.Linear(self.n_features * self.embed_size, self.hidden_size)
        self.dropout = nn.Dropout(self.dropout_prob)
        self.hidden_to_logits = nn.Linear(self.hidden_size, self.n_classes)
        nn.init.xavier_uniform_(self.embed_to_hidden.weight)
        nn.init.xavier_uniform_(self.hidden_to_logits.weight)
        ### END YOUR CODE

    def embedding_lookup(self, w):
        """ Utilize `w` to select embeddings from embedding matrix `self.embeddings`
            @param w (Tensor): input tensor of word indices (batch_size, n_features)

            @return x (Tensor): tensor of embeddings for words represented in w
                                (batch_size, n_features * embed_size)
        """
        ### YOUR CODE HERE (~1-4 Lines)
        ### TODO:
        ###     1) For each index `i` in `w`, select `i`th vector from self.embeddings
        ###     2) Reshape the tensor using `view` function if necessary
        ###
        ### Note: All embedding vectors are stacked and stored as a matrix. The model receives
        ###       a list of indices representing a sequence of words, then it calls this lookup
        ###       function to map indices to sequence of embeddings.
        ###
        ###       This problem aims to test your understanding of embedding lookup,
        ###       so DO NOT use any high level API like nn.Embedding
        ###       (we are asking you to implement that!). Pay attention to tensor shapes
        ###       and reshape if necessary. Make sure you know each tensor's shape before you run the code!
        ###
        ### Pytorch has some useful APIs for you, and you can use either one
        ### in this problem (except nn.Embedding). These docs might be helpful:
        ###     Index select: https://pytorch.org/docs/stable/torch.html#torch.index_select
        ###     Gather: https://pytorch.org/docs/stable/torch.html#torch.gather
        ###     View: https://pytorch.org/docs/stable/tensors.html#torch.Tensor.view
        ###     Flatten: https://pytorch.org/docs/stable/generated/torch.flatten.html
        # Index the Parameter directly rather than through `.data`, so that
        # gradients can flow back into the embeddings during training.
        x = self.embeddings[w, :].reshape(-1, self.n_features * self.embed_size)
        ### END YOUR CODE
        return x

    def forward(self, w):
        """ Run the model forward.

            Note that we will not apply the softmax function here because it is included in the loss function nn.CrossEntropyLoss

            PyTorch Notes:
                - Every nn.Module object (PyTorch model) has a `forward` function.
                - When you apply your nn.Module to an input tensor `w` this function is applied to the tensor.
                    For example, if you created an instance of your ParserModel and applied it to some `w` as follows,
                    the `forward` function would called on `w` and the result would be stored in the `output` variable:
                        model = ParserModel()
                        output = model(w) # this calls the forward function
                - For more details checkout: https://pytorch.org/docs/stable/nn.html#torch.nn.Module.forward

        @param w (Tensor): input tensor of tokens (batch_size, n_features)

        @return logits (Tensor): tensor of predictions (output after applying the layers of the network)
                                 without applying softmax (batch_size, n_classes)
        """
        ### YOUR CODE HERE (~3-5 lines)
        ### TODO:
        ###     Complete the forward computation as described in write-up. In addition, include a dropout layer
        ###     as declared in `__init__` after ReLU function.
        ###
        ### Note: We do not apply the softmax to the logits here, because
        ### the loss function (torch.nn.CrossEntropyLoss) applies it more efficiently.
        ###
        ### Please see the following docs for support:
        ###     Matrix product: https://pytorch.org/docs/stable/torch.html#torch.matmul
        ###     ReLU: https://pytorch.org/docs/stable/nn.html?highlight=relu#torch.nn.functional.relu
        embeddings = self.embedding_lookup(w)
        h = self.embed_to_hidden(embeddings)
        h = F.relu(h)
        h = self.dropout(h)
        logits = self.hidden_to_logits(h)
        ### END YOUR CODE
        return logits

run.py:

#!/usr/bin/env python3
# -*- coding: utf-8 -*-
"""
CS224N 2018-19: Homework 3
run.py: Run the dependency parser.
Sahil Chopra <schopra8@stanford.edu>
"""
from datetime import datetime
import os
import torch.nn.functional as F
import pickle
import math
import time

from torch import nn, optim
import torch
from tqdm import tqdm

from parser_model import ParserModel
from utils.parser_utils import minibatches, load_and_preprocess_data, AverageMeter

# -----------------

# Primary Functions

# -----------------

def train(parser, train_data, dev_data, output_path, batch_size=1024, n_epochs=10, lr=0.0005):
    """ Train the neural dependency parser.

    @param parser (Parser): Neural Dependency Parser
    @param train_data ():
    @param dev_data ():
    @param output_path (str): Path to which model weights and results are written.
    @param batch_size (int): Number of examples in a single batch
    @param n_epochs (int): Number of training epochs
    @param lr (float): Learning rate
    """
    best_dev_UAS = 0

    ### YOUR CODE HERE (~2-7 lines)
    ### TODO:
    ###      1) Construct Adam Optimizer in variable `optimizer`
    ###      2) Construct the Cross Entropy Loss Function in variable `loss_func`
    ###
    ### Hint: Use `parser.model.parameters()` to pass optimizer
    ###       necessary parameters to tune.
    ### Please see the following docs for support:
    ###     Adam Optimizer: https://pytorch.org/docs/stable/optim.html
    ###     Cross Entropy Loss: https://pytorch.org/docs/stable/nn.html#crossentropyloss
    # Use the parser's own model and the `lr` argument instead of a global
    # `model` and a hard-coded learning rate.
    optimizer = optim.Adam(parser.model.parameters(), lr=lr)
    loss_func = nn.CrossEntropyLoss()
    ### END YOUR CODE

    for epoch in range(n_epochs):
        print("Epoch {:} out of {:}".format(epoch + 1, n_epochs))
        dev_UAS = train_for_epoch(parser, train_data, dev_data, optimizer, loss_func, batch_size)
        if dev_UAS > best_dev_UAS:
            best_dev_UAS = dev_UAS
            print("New best dev UAS! Saving model.")
            torch.save(parser.model.state_dict(), output_path)
        print("")


def train_for_epoch(parser, train_data, dev_data, optimizer, loss_func, batch_size):
    """ Train the neural dependency parser for single epoch.

    Note: In PyTorch we can signify train versus test and automatically have
    the Dropout Layer applied and removed, accordingly, by specifying
    whether we are training, `model.train()`, or evaluating, `model.eval()`

    @param parser (Parser): Neural Dependency Parser
    @param train_data ():
    @param dev_data ():
    @param optimizer (nn.Optimizer): Adam Optimizer
    @param loss_func (nn.CrossEntropyLoss): Cross Entropy Loss Function
    @param batch_size (int): batch size
    @param lr (float): learning rate

    @return dev_UAS (float): Unlabeled Attachment Score (UAS) for dev data
    """
    parser.model.train()  # Places model in "train" mode, i.e. apply dropout layer
    n_minibatches = math.ceil(len(train_data) / batch_size)
    loss_meter = AverageMeter()

    with tqdm(total=(n_minibatches)) as prog:
        for i, (train_x, train_y) in enumerate(minibatches(train_data, batch_size)):
            optimizer.zero_grad()  # remove any baggage in the optimizer
            loss = 0.  # store loss for this batch here
            train_x = torch.from_numpy(train_x).long()
            train_y = torch.from_numpy(train_y.nonzero()[1]).long()

            ### YOUR CODE HERE (~5-10 lines)
            ### TODO:
            ###      1) Run train_x forward through model to produce `logits`
            ###      2) Use the `loss_func` parameter to apply the PyTorch CrossEntropyLoss function.
            ###         This will take `logits` and `train_y` as inputs. It will output the CrossEntropyLoss
            ###         between softmax(`logits`) and `train_y`. Remember that softmax(`logits`)
            ###         are the predictions (y^ from the PDF).
            ###      3) Backprop losses
            ###      4) Take step with the optimizer
            ### Please see the following docs for support:
            ###     Optimizer Step: https://pytorch.org/docs/stable/optim.html#optimizer-step
            logits = parser.model(train_x)
            # print(logits.shape, train_y.shape, max(train_y), min(train_y))
            loss = loss_func(logits, train_y)
            loss.backward()   # backpropagate to compute the current gradients
            optimizer.step()  # update the network parameters using the gradients
            ### END YOUR CODE

            prog.update(1)
            loss_meter.update(loss.item())

    print("Average Train Loss: {}".format(loss_meter.avg))

    print("Evaluating on dev set",)
    parser.model.eval()  # Places model in "eval" mode, i.e. don't apply dropout layer
    dev_UAS, _ = parser.parse(dev_data)
    print("- dev UAS: {:.2f}".format(dev_UAS * 100.0))
    return dev_UAS


# Note: Set debug to False, when training on entire corpus

debug = True
# debug = False

# assert(torch.__version__ == "1.0.0"), "Please install torch version 1.0.0"

print(80 * "=")
print("INITIALIZING")
print(80 * "=")
parser, embeddings, train_data, dev_data, test_data = load_and_preprocess_data(debug)

start = time.time()
model = ParserModel(embeddings)
parser.model = model
print("took {:.2f} seconds\n".format(time.time() - start))

print(80 * "=")
print("TRAINING")
print(80 * "=")
output_dir = "results/{:%Y%m%d_%H%M%S}/".format(datetime.now())
output_path = output_dir + "model.weights"

if not os.path.exists(output_dir):
    os.makedirs(output_dir)

train(parser, train_data, dev_data, output_path, batch_size=1024, n_epochs=10, lr=0.0005)

if not debug:
    print(80 * "=")
    print("TESTING")
    print(80 * "=")
    print("Restoring the best model weights found on the dev set")
    parser.model.load_state_dict(torch.load(output_path))
    print("Final evaluation on test set",)
    parser.model.eval()
    UAS, dependencies = parser.parse(test_data)
    print("- test UAS: {:.2f}".format(UAS * 100.0))
    print("Done!")
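The dev and test numbers that run.py prints are UAS (unlabeled attachment score): the fraction of words whose predicted head matches the gold head. As a rough illustration of that metric only, here is a minimal sketch; `unlabeled_attachment_score` and the toy head lists are hypothetical and are not the implementation in utils/parser_utils.py.

```python
def unlabeled_attachment_score(gold_heads, predicted_heads):
    """Fraction of words whose predicted head index equals the gold head index.

    Both arguments are lists of sentences; each sentence is a list holding
    the head index of every word (0 standing for ROOT in this sketch).
    """
    total = 0
    correct = 0
    for gold, pred in zip(gold_heads, predicted_heads):
        if len(gold) != len(pred):
            raise ValueError("gold and predicted sentences differ in length")
        total += len(gold)
        correct += sum(1 for g, p in zip(gold, pred) if g == p)
    return correct / total if total else 0.0


toy_gold = [[2, 0, 2], [0, 1]]
toy_pred = [[2, 0, 1], [0, 1]]  # one wrong head out of five words
toy_uas = unlabeled_attachment_score(toy_gold, toy_pred)
print("UAS: {:.2f}".format(toy_uas * 100.0))
```

Because the score ignores dependency labels, it only measures whether each word is attached to the right head.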