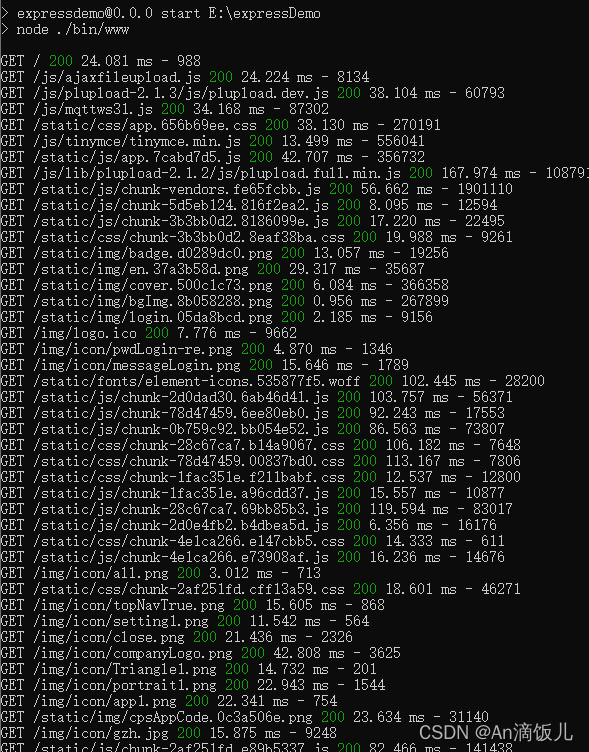

1. Startup

2. Configuration

2.1 data.yaml

path: D:/yolo-tool/yaunshen-yolov8/YOLOv8ys/YOLOv8-CUDA10.2/1/datasets/ceshi001

train: images

val: images

names: ['蔡徐坤','篮球']

2.2 cfg.yaml

# Ultralytics YOLOv8, GPL-3.0 license

# Default training settings and hyperparameters for medium-augmentation COCO training

task: detect # inference task, i.e. detect, segment, classify

mode: train # YOLO mode, i.e. train, val, predict, export

# Train settings -------------------------------------------------------------------------------------------------------

model: C:\Users\AF5\Desktop\YOLOv8ql\YOLOv8-CPU\1\datasets\qh\pt\train2\weights\best.pt # path to model file, i.e. yolov8n.pt, yolov8n.yaml

data: C:\Users\AF5\Desktop\YOLOv8ql\YOLOv8-CPU\1\datasets\qh\data.yaml # path to the dataset's data file, i.e. coco128.yaml

epochs: 100000 # number of epochs to train for; training stops once this count is reached, and the model cannot continue training afterwards

patience: 0 # epochs to wait for no observable improvement for early stopping of training; training finishes automatically once this many epochs pass without improvement

batch: 1 # number of images per batch (-1 for AutoBatch); larger values use more GPU memory

imgsz: 640 # size of input images as integer or w,h; the image width/height used for training, usually left at the default 640

save: True # save train checkpoints and predict results

save_period: -1 # Save checkpoint every x epochs (disabled if < 1)

cache: False # True/ram, disk or False. Use cache for data loading

device: # device to run on, i.e. cuda device=0 or device=0,1,2,3 or device=cpu

workers: 0 # number of worker threads for data loading (per RANK if DDP); do not change, must be 0

project: C:/Users/AF5/Desktop/YOLOv8ql/YOLOv8-CPU/1/datasets/qh/val # project name; do not change

name: train # experiment name; name of the folder the finished training run is saved to

exist_ok: False # whether to overwrite existing experiment

pretrained: False # whether to use a pretrained model

optimizer: SGD # optimizer to use, choices=['SGD', 'Adam', 'AdamW', 'RMSProp']

verbose: True # whether to print verbose output

seed: 0 # random seed for reproducibility

deterministic: True # whether to enable deterministic mode

single_cls: False # train multi-class data as single-class

image_weights: False # use weighted image selection for training

rect: False # support rectangular training if mode='train', support rectangular evaluation if mode='val'

cos_lr: False # use cosine learning rate scheduler

close_mosaic: 10 # disable mosaic augmentation for final 10 epochs

resume: False # resume training from last checkpoint; set to True to continue training the model

min_memory: False # minimize memory footprint loss function, choices=[False, True, <roll_out_thr>]

# Segmentation

overlap_mask: True # masks should overlap during training (segment train only)

mask_ratio: 4 # mask downsample ratio (segment train only)

# Classification

dropout: 0.0 # use dropout regularization (classify train only)

# Val/Test settings ----------------------------------------------------------------------------------------------------

val: True # validate/test during training; when True, mAP is computed during training

split: val # dataset split to use for validation, i.e. 'val', 'test' or 'train'

save_json: False # save results to JSON file

save_hybrid: False # save hybrid version of labels (labels + additional predictions)

conf: # object confidence threshold for detection (default 0.25 predict, 0.001 val)

iou: 0.7 # intersection over union (IoU) threshold for NMS

max_det: 300 # maximum number of detections per image

half: False # use half precision (FP16)

dnn: False # use OpenCV DNN for ONNX inference

plots: True # save plots during train/val

# Prediction settings --------------------------------------------------------------------------------------------------

source: C:\Users\AF5\Desktop\YOLOv8ql\YOLOv8-CPU\1\datasets\qh\images\qh174.png # source directory for images or videos; path of the video or image to run prediction on

show: False # show results if possible

save_txt: True # save results as .txt file

save_conf: False # save results with confidence scores

save_crop: False # save cropped images with results

hide_labels: False # hide labels

hide_conf: False # hide confidence scores

vid_stride: 1 # video frame-rate stride

line_thickness: 3 # bounding box thickness (pixels)

visualize: False # visualize model features

augment: False # apply image augmentation to prediction sources

agnostic_nms: False # class-agnostic NMS

classes: # filter results by class, i.e. class=0, or class=[0,2,3]

retina_masks: False # use high-resolution segmentation masks

boxes: True # Show boxes in segmentation predictions

# Export settings ------------------------------------------------------------------------------------------------------

format: torchscript # format to export to

keras: False # use Keras

optimize: False # TorchScript: optimize for mobile

int8: False # CoreML/TF INT8 quantization

dynamic: False # ONNX/TF/TensorRT: dynamic axes

simplify: False # ONNX: simplify model

opset: 12 # ONNX: opset version (optional)

workspace: 4 # TensorRT: workspace size (GB)

nms: False # CoreML: add NMS

# Hyperparameters ------------------------------------------------------------------------------------------------------

lr0: 0.01 # initial learning rate (i.e. SGD=1E-2, Adam=1E-3)

lrf: 0.01 # final learning rate (lr0 * lrf)

momentum: 0.937 # SGD momentum/Adam beta1

weight_decay: 0.0005 # optimizer weight decay 5e-4

warmup_epochs: 3.0 # warmup epochs (fractions ok)

warmup_momentum: 0.8 # warmup initial momentum

warmup_bias_lr: 0.1 # warmup initial bias lr

box: 7.5 # box loss gain

cls: 0.5 # cls loss gain (scale with pixels)

dfl: 1.5 # dfl loss gain

fl_gamma: 0.0 # focal loss gamma (efficientDet default gamma=1.5)

label_smoothing: 0.0 # label smoothing (fraction)

nbs: 64 # nominal batch size

hsv_h: 0.015 # image HSV-Hue augmentation (fraction)

hsv_s: 0.7 # image HSV-Saturation augmentation (fraction)

hsv_v: 0.4 # image HSV-Value augmentation (fraction)

degrees: 0.0 # image rotation (+/- deg)

translate: 0.1 # image translation (+/- fraction)

scale: 0.5 # image scale (+/- gain)

shear: 0.0 # image shear (+/- deg)

perspective: 0.0 # image perspective (+/- fraction), range 0-0.001

flipud: 0.0 # image flip up-down (probability)

fliplr: 0.5 # image flip left-right (probability)

mosaic: 1.0 # image mosaic (probability)

mixup: 0.0 # image mixup (probability)

copy_paste: 0.0 # segment copy-paste (probability)

# Custom config.yaml ---------------------------------------------------------------------------------------------------

cfg: # for overriding defaults.yaml

# Debug, do not modify -------------------------------------------------------------------------------------------------

v5loader: False # use legacy YOLOv5 dataloader

# Tracker settings ------------------------------------------------------------------------------------------------------

tracker: botsort.yaml # tracker type, ['botsort.yaml', 'bytetrack.yaml']
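
Both files above hard-code absolute Windows paths (`model`, `data`, `source` in cfg.yaml, `path` in data.yaml), so a quick existence check before training can save a failed run. The following is only a sketch: the helper names `load_yaml`, `missing_paths` and `dataset_dirs` are hypothetical, and it assumes PyYAML is installed.

```python
from pathlib import Path


def load_yaml(path):
    """Parse a YAML file into a dict."""
    import yaml  # PyYAML; imported lazily and assumed to be installed
    with open(path, "r", encoding="utf-8") as f:
        return yaml.safe_load(f) or {}


def missing_paths(cfg, keys=("model", "data", "source")):
    """Return {key: path} for every listed key whose path does not exist on disk."""
    missing = {}
    for key in keys:
        value = cfg.get(key)
        # Empty values (and source=0 for a webcam) are skipped rather than checked.
        if value and not Path(str(value)).exists():
            missing[key] = str(value)
    return missing


def dataset_dirs(data_cfg):
    """Resolve the train/val image folders of a data.yaml dict against its `path` root."""
    root = Path(str(data_cfg.get("path", ".")))
    return {
        split: root / str(data_cfg[split])
        for split in ("train", "val")
        if data_cfg.get(split)
    }


# Example usage (file locations are placeholders):
# cfg = load_yaml("cfg.yaml")
# print(missing_paths(cfg))
# print(dataset_dirs(load_yaml(cfg["data"])))
```

With the data.yaml shown in 2.1, `dataset_dirs` would return the same `images` folder for both `train` and `val`, which makes it easy to notice that validation runs on the training images.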

2.3 Main code

    import cv2
    import time
    import json
    from ultralytics import YOLO


    def Yolov10Detector(frame, model, image_size, conf_threshold, cap):
        results = model.predict(source=frame, imgsz=image_size, conf=conf_threshold)
        frame = results[0].plot()
        # Get the timestamp of the current frame
        current_time = cap.get(cv2.CAP_PROP_POS_MSEC) / 1000  # in seconds
        # Print every detected label with its timestamp
        for result in results:
            for box in result.boxes:
                c = int(box.cls)
                name = result.names[c]
                print(f"Detected label: {name}, time: {current_time} s")
        return frame


    def main():
        image_size = 640  # Adjust as needed
        conf_threshold = 0.3  # Adjust as needed
        model = YOLO("D:/yolo-workspace/yoloy8-project/model/oneself/best.pt")
        source = "C:/Users/wangwei/Desktop/2024-09-18/20240925_115452.mp4"  # 0 for webcam
        cap = cv2.VideoCapture(source)
        # Class names of the model as JSON (they do not change between frames)
        names_json = json.dumps(model.names, ensure_ascii=False)
        # print("Pre-detection class names as JSON: " + names_json)
        while True:
            success, frame = cap.read()
            start_time = time.time()
            if not success:
                print("Failed to read frame!")
                break
            print("Frame read successfully!")
            frame = Yolov10Detector(frame, model, image_size, conf_threshold, cap)
            end_time = time.time()
            # Guard against a zero-length interval on very fast frames
            fps = 1 / max(end_time - start_time, 1e-6)
            framefps = "FPS:{:.2f}".format(fps)
            try:
                cv2.rectangle(frame, (10, 1), (120, 20), (0, 0, 0), -1)
                cv2.putText(frame, framefps, (15, 17), cv2.FONT_HERSHEY_SIMPLEX, 0.6, (0, 255, 255), 2)
            except Exception as e:
                print(f"Failed to draw the FPS overlay: {e}")
            cv2.imshow("yolov10 - local camera detection", frame)  # Display the annotated frame
            if cv2.waitKey(1) & 0xFF == ord('q'):  # Exit on 'q' key press
                break
        cap.release()
        cv2.destroyAllWindows()


    if __name__ == "__main__":
        main()

3. Model training
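
As a rough illustration of how the settings from cfg.yaml could drive a training run through the `ultralytics` Python API, the sketch below forwards a hand-picked subset of keys to `YOLO.train`. The helper names `train_kwargs` and `train` and the `TRAIN_KEYS` selection are assumptions made for this example, not the tool's actual launcher.

```python
# Keys from cfg.yaml that this sketch forwards to training (a hand-picked subset).
TRAIN_KEYS = ("data", "epochs", "patience", "batch", "imgsz", "workers", "project", "name")


def train_kwargs(cfg):
    """Pick the training-related entries out of a parsed cfg.yaml dict, skipping unset ones."""
    return {k: cfg[k] for k in TRAIN_KEYS if cfg.get(k) is not None}


def train(cfg):
    """Start a training run from a parsed cfg.yaml dict."""
    from ultralytics import YOLO  # imported lazily so train_kwargs works without it

    model = YOLO(cfg["model"])  # weights (.pt) or architecture (.yaml) to start from
    return model.train(**train_kwargs(cfg))
```

Calling `train(cfg)` with the parsed contents of cfg.yaml would then start from the `model` weights and train on the dataset named by `data`.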

4. Training results:

20240926_104219