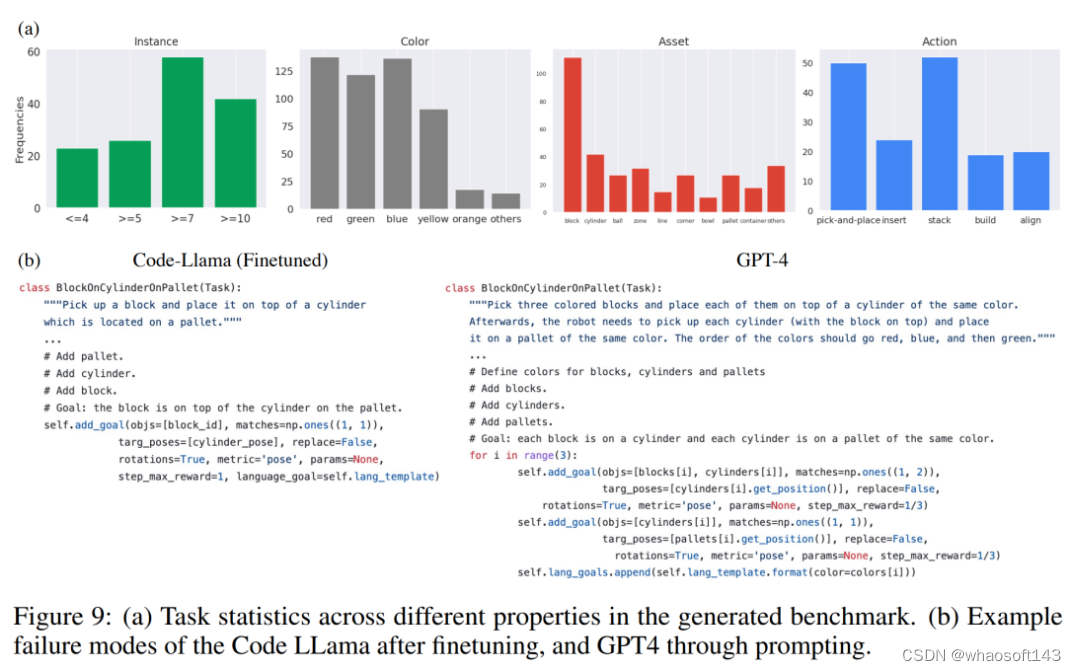

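Object detection is moving quickly, and the YOLO family has become a benchmark in both industry and academia thanks to its end-to-end, efficient detection. How to further improve YOLOv11's feature extraction, reduce redundant information, and make the model more robust in complex scenes is still worth a closer look. This article introduces the Adaptive Sparse Self-Attention (ASSA) mechanism into YOLOv11 to refine the detector's feature extraction. ASSA was first applied to image restoration, where it improves processing efficiency by suppressing noisy interactions while retaining important feature information. The sections below show how to combine ASSA with YOLOv11 for more efficient detection.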

1. Overview of ASSA

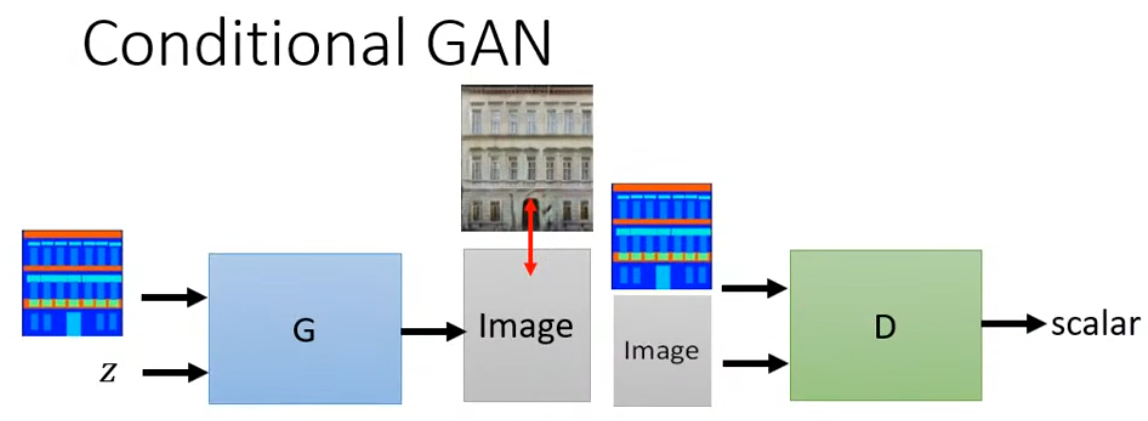

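Adaptive Sparse Self-Attention (ASSA) is the key component of the Adaptive Sparse Transformer (AST), a model designed for image restoration. ASSA aims to reduce the noisy interactions that irrelevant regions introduce in a standard Transformer, and to address feature redundancy at the same time.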

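- Two-branch structure:

  - Sparse self-attention (SSA): uses a ReLU-based sparse attention that filters out irrelevant interactions where the query and key match poorly. This keeps low-value features out of the computation and focuses attention on the most useful interactions.

  - Dense self-attention (DSA): uses standard softmax attention to complement SSA, so that key information is not lost during sparsification.

- Adaptive weighting: ASSA fuses the outputs of SSA and DSA with learnable weights. The model can therefore adjust the balance between sparse and dense attention according to the task and the input, filtering out irrelevant features while keeping the necessary information.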
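The two branches and their fusion can be illustrated on a single row of attention scores. The following is a minimal sketch in plain Python, not the module itself: it assumes the sparse branch is a squared ReLU and that the two fusion weights are normalised exponentials of two scalars initialised to 1, which is how the listing in Section 3 implements them. The function names and the toy scores are made up for illustration.

```python
import math

def softmax(row):
    """Dense branch (DSA): standard softmax over one row of attention scores."""
    m = max(row)
    exps = [math.exp(s - m) for s in row]
    total = sum(exps)
    return [e / total for e in exps]

def squared_relu(row):
    """Sparse branch (SSA): negative scores become exactly zero, positive ones are squared."""
    return [max(s, 0.0) ** 2 for s in row]

def assa_weights(scores, w=(1.0, 1.0)):
    """Fuse both branches for one query row; w holds the two learnable scalars."""
    e1, e2 = math.exp(w[0]), math.exp(w[1])
    w1, w2 = e1 / (e1 + e2), e2 / (e1 + e2)  # normalised so that w1 + w2 == 1
    dense = softmax(scores)
    sparse = squared_relu(scores)
    return [w1 * d + w2 * s for d, s in zip(dense, sparse)]

scores = [2.0, -1.0, 0.5, -3.0]  # toy query-key similarities (hypothetical values)
fused = assa_weights(scores)
print([round(a, 4) for a in fused])
```

Keys with negative scores contribute nothing through the sparse branch and only a small softmax share through the dense branch, which is the filtering behaviour described above.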

2. Combining YOLOv11 with ASSA

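YOLOv11 already introduces several new mechanisms in its convolutional layers and feature pyramid network (FPN), such as the C3k2 and C2PSA modules. Even so, feature redundancy or noise interference can still occur with complex backgrounds or densely packed objects. To further refine YOLOv11's feature extraction, ASSA can be added to its feature extraction modules in two ways: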

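1. Integrating ASSA into the C2PSA module: the attention block inside C2PSA is replaced with the ASSA module, which improves how C2PSA selects feature information.

2. Integrating ASSA into the backbone: ASSA is added immediately before the SPPF module in the YOLOv11 backbone. It filters the features entering SPPF so that the multi-scale features SPPF produces are more expressive both spatially and semantically.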
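Because SPPF sits at the P5 level (stride 32 in the configuration files later in this article), the attention placed before it sees a small feature map. The sketch below estimates how many tokens that is and how large the attention score tensor becomes. It assumes a square input, attention over every position of the feature map, and 4 heads (the default in the listing in Section 3); `attention_cost` is a hypothetical helper, not part of Ultralytics.

```python
def attention_cost(imgsz, stride=32, num_heads=4):
    """Return (tokens, score_entries) for global attention on a square input.

    tokens = (imgsz // stride) ** 2, and the score tensor holds
    num_heads * tokens * tokens entries per image.
    """
    side = imgsz // stride
    tokens = side * side
    return tokens, num_heads * tokens * tokens

for size in (256, 640, 1280):
    tokens, entries = attention_cost(size)
    print(f"imgsz={size}: {tokens} tokens, {entries} attention scores per image")
```

The score tensor grows with the fourth power of the image side, which is one reason to place global attention at the coarsest level rather than at P3 or P4.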

3. ASSA code
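The attention class in this section adds a learnable relative position bias, looked up through a precomputed index table. As a small worked example of that indexing, the sketch below rebuilds the table in plain Python for an arbitrary window size. `relative_position_index` is an illustrative name, and the loop form is an assumption chosen for readability; the listing computes the same values with tensor operations.

```python
def relative_position_index(wh, ww):
    """Map every (query, key) pair in a wh x ww window to a row of the bias table."""
    coords = [(i, j) for i in range(wh) for j in range(ww)]  # flattened token coordinates
    index = []
    for qi, qj in coords:
        row = []
        for ki, kj in coords:
            dh = qi - ki + (wh - 1)  # shift the row offset so it starts from 0
            dw = qj - kj + (ww - 1)  # shift the column offset so it starts from 0
            row.append(dh * (2 * ww - 1) + dw)  # flatten (dh, dw) into one table index
        index.append(row)
    return index

idx = relative_position_index(2, 2)
for row in idx:
    print(row)
# prints:
# [4, 3, 1, 0]
# [5, 4, 2, 1]
# [7, 6, 4, 3]
# [8, 7, 5, 4]
```

A 2 x 2 window has (2*2-1) * (2*2-1) = 9 distinct relative offsets, so the indices run from 0 to 8. Token pairs with the same offset share one learnable bias value.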

```python
import torch
import torch.nn as nn
from timm.models.layers import trunc_normal_
from einops import repeat

from .conv import Conv
from .block import C2f, C3


class LinearProjection(nn.Module):
    """Project tokens to per-head queries, keys and values."""

    def __init__(self, dim, heads=8, dim_head=64, dropout=0., bias=True):
        super().__init__()
        inner_dim = dim_head * heads
        self.heads = heads
        self.to_q = nn.Linear(dim, inner_dim, bias=bias)
        self.to_kv = nn.Linear(dim, inner_dim * 2, bias=bias)
        self.dim = dim
        self.inner_dim = inner_dim

    def forward(self, x, attn_kv=None):
        B_, N, C = x.shape
        if attn_kv is not None:
            attn_kv = attn_kv.unsqueeze(0).repeat(B_, 1, 1)
        else:
            attn_kv = x
        N_kv = attn_kv.size(1)
        q = self.to_q(x).reshape(B_, N, 1, self.heads, C // self.heads).permute(2, 0, 3, 1, 4)
        kv = self.to_kv(attn_kv).reshape(B_, N_kv, 2, self.heads, C // self.heads).permute(2, 0, 3, 1, 4)
        q = q[0]
        k, v = kv[0], kv[1]
        return q, k, v


########### window-based self-attention #############
class WindowAttention_ASSA(nn.Module):
    """Adaptive sparse self-attention: softmax and squared-ReLU branches fused by learned weights."""

    def __init__(self, dim, win_size=(8, 8), num_heads=4, token_projection='linear', qkv_bias=True,
                 qk_scale=None, attn_drop=0., proj_drop=0.):
        super().__init__()
        self.dim = dim
        self.win_size = win_size  # Wh, Ww
        self.num_heads = num_heads
        head_dim = dim // num_heads
        self.scale = qk_scale or head_dim ** -0.5

        # define a parameter table of relative position bias
        self.relative_position_bias_table = nn.Parameter(
            torch.zeros((2 * win_size[0] - 1) * (2 * win_size[1] - 1), num_heads))  # 2*Wh-1 * 2*Ww-1, nH

        # get pair-wise relative position index for each token inside the window
        coords_h = torch.arange(self.win_size[0])  # [0,...,Wh-1]
        coords_w = torch.arange(self.win_size[1])  # [0,...,Ww-1]
        coords = torch.stack(torch.meshgrid(coords_h, coords_w, indexing="ij"))  # 2, Wh, Ww
        coords_flatten = torch.flatten(coords, 1)  # 2, Wh*Ww
        relative_coords = coords_flatten[:, :, None] - coords_flatten[:, None, :]  # 2, Wh*Ww, Wh*Ww
        relative_coords = relative_coords.permute(1, 2, 0).contiguous()  # Wh*Ww, Wh*Ww, 2
        relative_coords[:, :, 0] += self.win_size[0] - 1  # shift to start from 0
        relative_coords[:, :, 1] += self.win_size[1] - 1
        relative_coords[:, :, 0] *= 2 * self.win_size[1] - 1
        relative_position_index = relative_coords.sum(-1)  # Wh*Ww, Wh*Ww
        self.register_buffer("relative_position_index", relative_position_index)
        trunc_normal_(self.relative_position_bias_table, std=.02)

        if token_projection == 'linear':
            self.qkv = LinearProjection(dim, num_heads, dim // num_heads, bias=qkv_bias)
        else:
            raise Exception("Projection error!")

        self.token_projection = token_projection
        self.attn_drop = nn.Dropout(attn_drop)
        self.proj = nn.Linear(dim, dim)
        self.proj_drop = nn.Dropout(proj_drop)
        self.softmax = nn.Softmax(dim=-1)
        self.relu = nn.ReLU()
        self.w = nn.Parameter(torch.ones(2))  # learnable fusion weights for the two branches

    def forward(self, x, attn_kv=None, mask=None):
        # Flatten the feature map into tokens: (B, C, H, W) -> (B, H*W, C)
        B, C, H, W = x.shape
        x = x.permute(0, 2, 3, 1).reshape(B, H * W, C)
        B_, N, C = x.shape
        q, k, v = self.qkv(x, attn_kv)
        q = q * self.scale
        attn = (q @ k.transpose(-2, -1))

        relative_position_bias = self.relative_position_bias_table[self.relative_position_index.view(-1)].view(
            self.win_size[0] * self.win_size[1], self.win_size[0] * self.win_size[1], -1)  # Wh*Ww,Wh*Ww,nH
        relative_position_bias = relative_position_bias.permute(2, 0, 1).contiguous()  # nH, Wh*Ww, Wh*Ww
        ratio = attn.size(-1) // relative_position_bias.size(-1)
        relative_position_bias = repeat(relative_position_bias, 'nH l c -> nH l (c d)', d=ratio)
        # NOTE: the bias has Wh*Ww rows, so this addition only broadcasts when N == Wh*Ww
        # (64 tokens for the default 8 x 8 window).
        attn = attn + relative_position_bias.unsqueeze(0)

        if mask is not None:
            nW = mask.shape[0]
            mask = repeat(mask, 'nW m n -> nW m (n d)', d=ratio)
            attn = attn.view(B_ // nW, nW, self.num_heads, N, N * ratio) + mask.unsqueeze(1).unsqueeze(0)
            attn = attn.view(-1, self.num_heads, N, N * ratio)

        attn0 = self.softmax(attn)  # dense branch (DSA)
        attn1 = self.relu(attn) ** 2  # sparse branch (SSA)

        # Normalise the two learnable weights so that w1 + w2 == 1
        w1 = torch.exp(self.w[0]) / torch.sum(torch.exp(self.w))
        w2 = torch.exp(self.w[1]) / torch.sum(torch.exp(self.w))
        attn = attn0 * w1 + attn1 * w2
        attn = self.attn_drop(attn)

        x = (attn @ v).transpose(1, 2).reshape(B_, N, C)
        x = self.proj(x)
        x = self.proj_drop(x)
        # Restore the spatial layout: (B, H*W, C) -> (B, C, H, W)
        x = x.reshape(B, H, W, C).permute(0, 3, 1, 2)
        return x

    def extra_repr(self) -> str:
        return f'dim={self.dim}, win_size={self.win_size}, num_heads={self.num_heads}'


class Bottleneck_WAtt_ASSA(nn.Module):
    """Bottleneck whose two convolutions are followed by ASSA attention."""

    def __init__(self, c1, c2, shortcut=True, g=1, k=(3, 3), e=0.5):
        """Initializes the bottleneck with optional shortcut connection and configurable parameters."""
        super().__init__()
        c_ = int(c2 * e)  # hidden channels
        self.cv1 = Conv(c1, c_, k[0], 1)
        self.cv2 = Conv(c_, c2, k[1], 1, g=g)
        self.cv3 = WindowAttention_ASSA(dim=c2)
        self.add = shortcut and c1 == c2

    def forward(self, x):
        """Applies conv -> conv -> ASSA, with a residual connection when shapes allow."""
        return x + self.cv3(self.cv2(self.cv1(x))) if self.add else self.cv3(self.cv2(self.cv1(x)))


class C3k(C3):
    """C3k is a CSP bottleneck module with customizable kernel sizes for feature extraction in neural networks."""

    def __init__(self, c1, c2, n=1, shortcut=True, g=1, e=0.5, k=3):
        """Initializes the C3k module with specified channels, number of layers, and configurations."""
        super().__init__(c1, c2, n, shortcut, g, e)
        c_ = int(c2 * e)  # hidden channels
        # self.m = nn.Sequential(*(RepBottleneck(c_, c_, shortcut, g, k=(k, k), e=1.0) for _ in range(n)))
        self.m = nn.Sequential(*(Bottleneck_WAtt_ASSA(c_, c_, shortcut, g, k=(k, k), e=1.0) for _ in range(n)))


class C3k2_WAtt_ASSA(C2f):
    """Faster Implementation of CSP Bottleneck with 2 convolutions."""

    def __init__(self, c1, c2, n=1, c3k=False, e=0.5, g=1, shortcut=True):
        """Initializes the C3k2 module, a faster CSP Bottleneck with 2 convolutions and optional C3k blocks."""
        super().__init__(c1, c2, n, shortcut, g, e)
        self.m = nn.ModuleList(
            C3k(self.c, self.c, 2, shortcut, g) if c3k else Bottleneck_WAtt_ASSA(self.c, self.c, shortcut, g)
            for _ in range(n)
        )


if __name__ == '__main__':
    # Because of the relative imports above, run this file as a module inside the package.
    ASSA_Attention = WindowAttention_ASSA(dim=256)
    # Create an input tensor. 8 x 8 = 64 tokens matches the default win_size=(8, 8);
    # the original 64 x 64 input yields 4096 tokens, which the position bias cannot broadcast to.
    batch_size = 1
    input_tensor = torch.randn(batch_size, 256, 8, 8)
    # Run the module and print the input and output shapes
    output_tensor = ASSA_Attention(input_tensor)
    print("Input shape:", input_tensor.shape)
    print("Output shape:", output_tensor.shape)
```

4. Introducing ASSA into YOLOv11

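First: copy the core code from Section 3 into D:\bilibili\model\YOLO11\ultralytics-main\ultralytics\nn, as shown in the figure below.

Second: import the ASSA module in tasks.py.

Third: add the following code to the model-parsing section of tasks.py.

1. First improvement: add WindowAttention_ASSA directly to the backbone.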

```python
elif m is WindowAttention_ASSA:
    args = [ch[f]]
```
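To see what this branch does, it helps to recall that the parser keeps a list `ch` with the output channels of every layer built so far, and that `f` is the layer's `from` index. The sketch below is a deliberately simplified stand-in for that logic, not the Ultralytics function: `resolve_args` is a hypothetical name, other modules are passed through unchanged here (the real parser does more), and the channel list assumes the `n` scale of the configuration shown later.

```python
def resolve_args(module_name, f, yaml_args, ch):
    """Return the constructor args a layer would receive in this simplified model.

    ch[i] is the output channel count of layer i; f is the layer's 'from' index.
    """
    if module_name == "WindowAttention_ASSA":
        return [ch[f]]  # dim = channels of the layer feeding the attention block
    return list(yaml_args)  # simplification: real parsing rewrites these as well

# Assumed yolo11n backbone widths for layers 0-8 (YAML channels * 0.25)
ch = [16, 32, 64, 64, 128, 128, 128, 256, 256]
print(resolve_args("WindowAttention_ASSA", -1, [], ch))  # [256]
```

This is why the YAML entry for WindowAttention_ASSA can leave its argument list empty: the only required argument, `dim`, is taken from the preceding layer.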

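2. Second improvement: combine ASSA with the C2PSA module to form C2PSA_ASSA.

Fourth: copy the model configuration into a YOLOv11 .yaml file.

Configuration file for the first improvement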

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2, [1024, True]]
  - [-1, 2, WindowAttention_ASSA, []] # 9 (added)
  - [-1, 1, SPPF, [1024, 5]] # 10
  - [-1, 2, C2PSA, [1024]] # 11

# YOLO11n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2, [512, False]] # 14

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2, [256, False]] # 17 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 14], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2, [512, False]] # 20 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 11], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2, [1024, True]] # 23 (P5/32-large)

  - [[17, 20, 23], 1, Detect, [nc]] # Detect(P3, P4, P5)
```
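Inserting one layer into the backbone shifts every absolute layer index that comes after it, which is why the head above refers to layers 14, 11 and (17, 20, 23). The short sketch below reproduces that renumbering. `shift_index` is a hypothetical helper, and the starting indices are the absolute `from` references of the unmodified head (the same ones that appear in the second configuration file).

```python
def shift_index(idx, inserted_at):
    """Absolute layer index after one extra layer is inserted at position inserted_at."""
    return idx + 1 if idx >= inserted_at else idx

# Absolute 'from' references in the unmodified head: two backbone skips,
# two head skips, and the three Detect inputs.
stock_refs = [6, 4, 13, 10, 16, 19, 22]

# WindowAttention_ASSA becomes layer 9, so everything from 9 onwards moves up by one.
print([shift_index(i, 9) for i in stock_refs])  # [6, 4, 14, 11, 17, 20, 23]
```

References to layers 6 and 4 stay the same because they sit before the insertion point. Relative references such as -1 need no change at all.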

Configuration file for the second improvement

```yaml
# Ultralytics YOLO 🚀, AGPL-3.0 license
# YOLO11 object detection model with P3-P5 outputs. For Usage examples see https://docs.ultralytics.com/tasks/detect

# Parameters
nc: 80 # number of classes
scales: # model compound scaling constants, i.e. 'model=yolo11n.yaml' will call yolo11.yaml with scale 'n'
  # [depth, width, max_channels]
  n: [0.50, 0.25, 1024] # summary: 319 layers, 2624080 parameters, 2624064 gradients, 6.6 GFLOPs
  s: [0.50, 0.50, 1024] # summary: 319 layers, 9458752 parameters, 9458736 gradients, 21.7 GFLOPs
  m: [0.50, 1.00, 512] # summary: 409 layers, 20114688 parameters, 20114672 gradients, 68.5 GFLOPs
  l: [1.00, 1.00, 512] # summary: 631 layers, 25372160 parameters, 25372144 gradients, 87.6 GFLOPs
  x: [1.00, 1.50, 512] # summary: 631 layers, 56966176 parameters, 56966160 gradients, 196.0 GFLOPs

# YOLO11n backbone
backbone:
  # [from, repeats, module, args]
  - [-1, 1, Conv, [64, 3, 2]] # 0-P1/2
  - [-1, 1, Conv, [128, 3, 2]] # 1-P2/4
  - [-1, 2, C3k2, [256, False, 0.25]]
  - [-1, 1, Conv, [256, 3, 2]] # 3-P3/8
  - [-1, 2, C3k2, [512, False, 0.25]]
  - [-1, 1, Conv, [512, 3, 2]] # 5-P4/16
  - [-1, 2, C3k2, [512, True]]
  - [-1, 1, Conv, [1024, 3, 2]] # 7-P5/32
  - [-1, 2, C3k2, [1024, True]]
  - [-1, 1, SPPF, [1024, 5]] # 9
  - [-1, 2, C2PSA_ASSA, [1024]] # 10

# YOLO11n head
head:
  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 6], 1, Concat, [1]] # cat backbone P4
  - [-1, 2, C3k2, [512, False]] # 13

  - [-1, 1, nn.Upsample, [None, 2, "nearest"]]
  - [[-1, 4], 1, Concat, [1]] # cat backbone P3
  - [-1, 2, C3k2, [256, False]] # 16 (P3/8-small)

  - [-1, 1, Conv, [256, 3, 2]]
  - [[-1, 13], 1, Concat, [1]] # cat head P4
  - [-1, 2, C3k2, [512, False]] # 19 (P4/16-medium)

  - [-1, 1, Conv, [512, 3, 2]]
  - [[-1, 10], 1, Concat, [1]] # cat head P5
  - [-1, 2, C3k2, [1024, True]] # 22 (P5/32-large)

  - [[16, 19, 22], 1, Detect, [nc]] # Detect(P3, P4, P5)
```
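The `scales` table determines how many channels and repeats each layer actually gets, and therefore the `dim` the attention block receives. The following sketch works through that arithmetic for the P5 layers (1024 channels, 2 repeats in the YAML). It reflects my understanding of how Ultralytics scales a configuration, so treat the helper functions as assumptions rather than the library's code.

```python
import math

def make_divisible(x, divisor=8):
    """Round x up to the nearest multiple of divisor."""
    return math.ceil(x / divisor) * divisor

def scaled_channels(c, width, max_channels):
    """Channels after applying the width multiplier and the max_channels cap."""
    return make_divisible(min(c, max_channels) * width, 8)

def scaled_repeats(n, depth):
    """Repeat count after applying the depth multiplier (never below 1)."""
    return max(round(n * depth), 1) if n > 1 else n

# [depth, width, max_channels], copied from the configuration files above
scales = {
    "n": (0.50, 0.25, 1024),
    "s": (0.50, 0.50, 1024),
    "m": (0.50, 1.00, 512),
    "l": (1.00, 1.00, 512),
    "x": (1.00, 1.50, 512),
}

for name, (depth, width, max_ch) in scales.items():
    print(name, scaled_channels(1024, width, max_ch), scaled_repeats(2, depth))
```

Under these assumptions the P5 width is 256, 512, 512, 512 and 768 for scales n, s, m, l and x, all divisible by the 4 attention heads the listing uses by default.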

Fifth: run training to confirm that the model builds and runs.

```python
from ultralytics.models import NAS, RTDETR, SAM, YOLO, FastSAM, YOLOWorld

if __name__ == "__main__":
    # Build the model from your own YOLOv11 .yaml file, load pretrained weights, then train
    model = YOLO(r"D:\bilibili\model\YOLO11\ultralytics-main\ultralytics\cfg\models\11\yolo11_ASSA.yaml") \
        .load(r'D:\bilibili\model\YOLO11\ultralytics-main\yolo11n.pt')  # build from YAML and transfer weights

    results = model.train(data=r'D:\bilibili\model\ultralytics-main\ultralytics\cfg\datasets\VOC_my.yaml',
                          epochs=100, imgsz=640, batch=8)
```