Download the package from the Apache Spark Downloads page.

Drag the installation package into the virtual machine to upload it.

# Extract the archive; once it is extracted, the environment is installed and ready to use

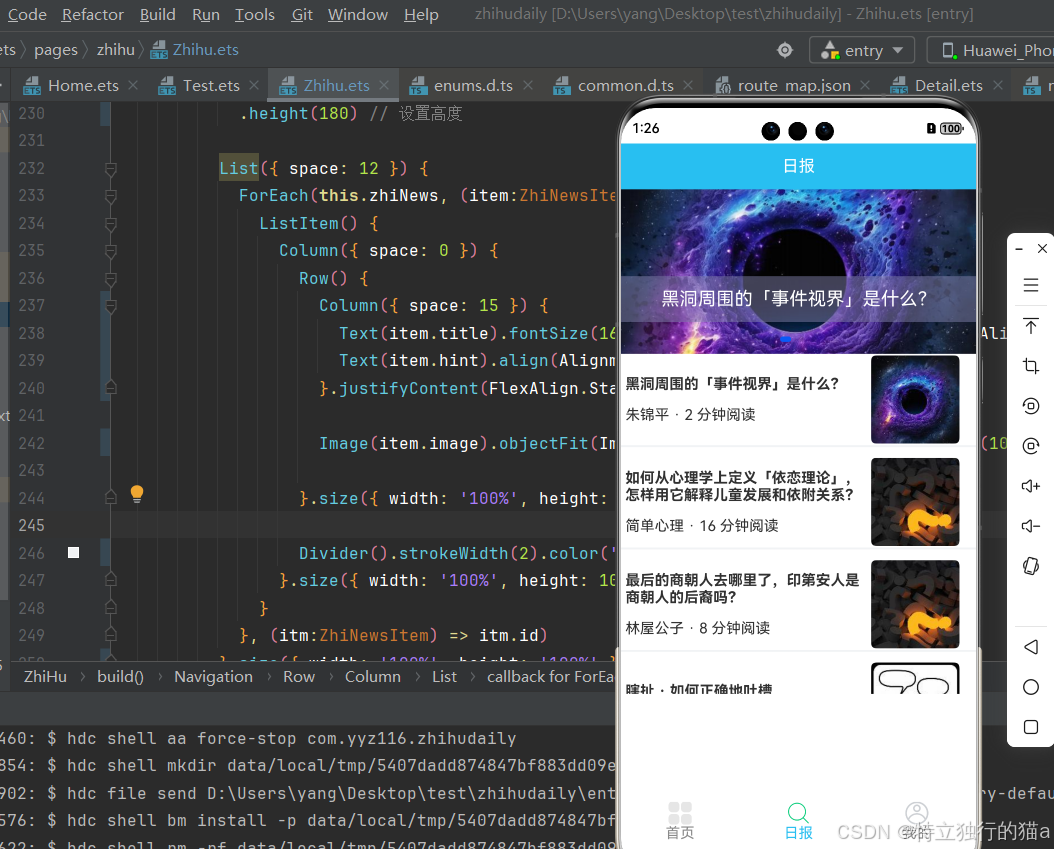

tar -zxvf spark-3.3.1-bin-hadoop3.tgz

# Rename the directory

mv spark-3.3.1-bin-hadoop3 spark-local

1. Local mode

Test case: computing π (SparkPi)

# Usage: spark-submit [options] <app jar | python file | R file> [app arguments]

# local[4] runs the job locally with 4 worker threads; 10 is the number of slices (partitions) SparkPi splits the sampling into

bin/spark-submit \
--class org.apache.spark.examples.SparkPi \
--master local[4] \
./examples/jars/spark-examples_2.12-3.3.1.jar \
10
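SparkPi estimates π by Monte Carlo sampling: it scatters random points over the square [-1, 1] × [-1, 1] and counts how many land inside the unit circle, whose share of all points approaches π/4. Below is a single-machine sketch of the same idea in shell/awk. It assumes, as far as I can tell from the bundled example's source, 100000 points per slice; `estimate_pi` is a hypothetical helper, not part of Spark, which instead spreads these samples across the `slices` partitions.

```shell
#!/usr/bin/env bash
# Hypothetical helper: single-machine Monte Carlo estimate of pi,
# mimicking what SparkPi distributes across its partitions.
# $1 = number of slices (same meaning as the "10" passed to SparkPi; defaults to 2)
estimate_pi() {
  local slices="${1:-2}"
  awk -v slices="$slices" 'BEGIN {
    srand(42)                       # fixed seed so runs are repeatable
    n = 100000 * slices             # assumed: 100000 samples per slice
    inside = 0
    for (i = 0; i < n; i++) {
      x = rand() * 2 - 1            # x in [-1, 1)
      y = rand() * 2 - 1            # y in [-1, 1)
      if (x * x + y * y <= 1) inside++
    }
    # fraction of points inside the unit circle approximates pi/4
    printf "Pi is roughly %.5f\n", 4 * inside / n
  }'
}

estimate_pi 10
```

Raising the slice count only adds samples (and, in Spark, tasks); the estimate sharpens slowly, since the error shrinks roughly with the square root of the number of samples.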

2. YARN mode

Edit the configuration file conf/spark-env.sh (copied from the template conf/spark-env.sh.template) and add: YARN_CONF_DIR=/usr/local/hadoop-3.1.3/etc/hadoop/

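This tells Spark where to find the YARN configuration, so that the resources Spark runs on are scheduled by YARN. Hadoop must already be installed.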
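The configuration step can also be scripted. A minimal sketch, assuming the Spark directory is passed in as the first argument; `set_yarn_conf_dir` is a hypothetical helper that only touches `conf/spark-env.sh`:

```shell
#!/usr/bin/env bash
# Hypothetical helper: create conf/spark-env.sh from its template (if needed)
# and add the YARN_CONF_DIR line exactly once.
# $1 = Spark home directory, $2 = Hadoop configuration directory
set_yarn_conf_dir() {
  local spark_home="$1" hadoop_conf="$2"
  local env_file="$spark_home/conf/spark-env.sh"
  # Copy the template only if spark-env.sh does not exist yet
  if [[ ! -f "$env_file" ]]; then
    cp "$spark_home/conf/spark-env.sh.template" "$env_file"
  fi
  # Append the setting only if no YARN_CONF_DIR line is present
  if ! grep -q '^YARN_CONF_DIR=' "$env_file"; then
    echo "YARN_CONF_DIR=$hadoop_conf" >> "$env_file"
  fi
}

# Usage sketch (paths are assumptions; adjust to your layout):
#   set_yarn_conf_dir "$SPARK_HOME" /usr/local/hadoop-3.1.3/etc/hadoop/
```

Checking before copying and before appending keeps the helper safe to rerun: it never overwrites an existing spark-env.sh and never duplicates the line.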

The YARN services must be configured in Hadoop's yarn-site.xml (pay attention to hostname resolution).

Start the Hadoop cluster.

bin/spark-submit \
--class org.apache.spark.examples.SparkPi \
--master yarn \
./examples/jars/spark-examples_2.12-3.3.1.jar \
10

Pitfall!!! At first I looked at the error output in the console, but it did not reveal the cause.

The detailed error has to be looked up in the Hadoop (YARN) application logs:

Application application_1729393561143_0001 failed 2 times due to AM Container for appattempt_1729393561143_0001_000002 exited with exitCode: -103

Failing this attempt.Diagnostics: [2024-10-19 20:07:40.080]Container [pid=5207,containerID=container_1729393561143_0001_02_000001] is running 110868992B beyond the 'VIRTUAL' memory limit. Current usage: 370.4 MB of 1 GB physical memory used; 2.2 GB of 2.1 GB virtual memory used. Killing container.
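The diagnostics above come from the YARN application logs rather than the console. A minimal sketch for fetching them from the command line, assuming log aggregation is enabled on the cluster; `extract_app_id` is a hypothetical helper, while `yarn logs -applicationId` is the standard YARN CLI command:

```shell
#!/usr/bin/env bash
# Hypothetical helper: print the first YARN application ID found in the
# text read from stdin (e.g. saved spark-submit console output).
extract_app_id() {
  grep -oE 'application_[0-9]+_[0-9]+' | head -n 1
}

# Usage sketch (needs a running YARN cluster with log aggregation enabled):
#   app_id="$(extract_app_id < submit.log)"
#   yarn logs -applicationId "$app_id" | less
```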

Solution:

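Edit yarn-site.xml on every Hadoop node (these are YARN NodeManager properties, so they belong in yarn-site.xml rather than mapred-site.xml), then restart YARN: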

<property>
    <!-- Whether to enforce virtual memory limits on containers -->
    <name>yarn.nodemanager.vmem-check-enabled</name>
    <value>false</value>
</property>
<property>
    <!-- Ratio of virtual memory to physical memory when setting container memory limits -->
    <name>yarn.nodemanager.vmem-pmem-ratio</name>
    <value>4</value>
</property>
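Plugging the numbers from the diagnostics into the limit YARN enforces shows why the container was killed and why either setting helps. This assumes the default vmem-pmem-ratio of 2.1 (which matches the "2.1 GB" in the message) and 1 GB = 1024 MB:

```latex
\begin{aligned}
\text{vmem limit} &= \text{pmem limit} \times \text{vmem-pmem-ratio} = 1024\ \text{MB} \times 2.1 = 2150.4\ \text{MB} \\
\text{vmem used} &\approx 2150.4\ \text{MB} + \frac{110868992\ \text{B}}{1048576\ \text{B/MB}} \approx 2150.4 + 105.7 \approx 2256\ \text{MB} \approx 2.2\ \text{GB} \\
\text{limit with ratio } 4 &= 1024\ \text{MB} \times 4 = 4096\ \text{MB} > 2256\ \text{MB}
\end{aligned}
```

Setting vmem-check-enabled to false removes the virtual memory check entirely, so the ratio only matters if the check stays enabled.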

Reference: "Hadoop beyond the 'VIRTUAL' memory limit.问题解决" (fixing the Hadoop "beyond the 'VIRTUAL' memory limit" error), CSDN blog