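Introduction

Aquila-VL-2B-llava-qwen is a vision-language model (VLM) developed by the Beijing Academy of Artificial Intelligence (BAAI). Built on the LLaVA-OneVision framework, it demonstrates the potential of VLMs to understand and process visual and textual data together.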

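Model Architecture

Aquila-VL-2B-llava-qwen uses Qwen2.5-1.5B-instruct as its large language model (LLM) component. The LLM is responsible for understanding and generating text, which lets the model handle complex language structures. The vision tower, siglip-so400m-patch14-384, handles image understanding: it encodes each input image into features that the LLM can analyze and interpret alongside the text.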
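To get a rough sense of how much visual input the LLM receives, the following minimal sketch estimates the number of patch embeddings the vision tower produces for one image. It assumes that the numbers in the checkpoint name `siglip-so400m-patch14-384` are the patch size (14) and the input resolution (384), and that patches form a non-overlapping grid. The helper `patch_grid` is hypothetical and written only for this illustration. LLaVA-OneVision-style pipelines may also split an image into several crops, so the real token count per image can be higher.

```python
def patch_grid(image_size: int, patch_size: int) -> tuple[int, int]:
    """Return (patches per side, total patches) for a square ViT-style input.

    Hypothetical helper for illustration; assumes non-overlapping patches,
    so any partial patch at the image edge is dropped.
    """
    per_side = image_size // patch_size
    return per_side, per_side * per_side


# Assumption: 384 is the input resolution and 14 the patch size,
# as suggested by the checkpoint name.
per_side, total = patch_grid(image_size=384, patch_size=14)
print(f"{per_side} x {per_side} = {total} patch embeddings per image")
# prints "27 x 27 = 729 patch embeddings per image"
```

Under these assumptions a single 384-pixel image contributes several hundred visual tokens to the prompt, which is worth keeping in mind when budgeting context length.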

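Training Data

Much of the model's strength comes from its training dataset, Infinity-MM, which contains roughly 40 million image-text pairs. The dataset combines open-source data collected from the internet with synthetic instruction data generated by open-source VLMs. Training on a dataset this large and varied gives Aquila-VL-2B-llava-qwen broad coverage of visual and linguistic concepts.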

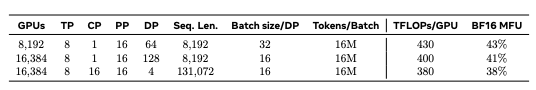

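Open Source and Evaluation

BAAI has open-sourced the Infinity-MM dataset, so researchers and developers can explore and build on it. An Aquila-VL-2B-CG model, trained on different GPUs, is also due to be released soon. The model's performance was evaluated with the VLMEvalKit toolkit across the benchmarks listed below.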

| Benchmark | MiniCPM-V-2 | InternVL2-2B | XinYuan-VL-2B | Qwen2-VL-2B-Instruct | Aquila-VL-2B |
|---|---|---|---|---|---|
| MMBench-EN (test) | 69.4 | 73.4 | 78.9 | 74.9 | 78.8 |
| MMBench-CN (test) | 65.9 | 70.9 | 76.1 | 73.9 | 76.4 |
| MMBench_V1.1 (test) | 65.2 | 69.7 | 75.4 | 72.7 | 75.2 |
| MMT-Bench (test) | 54.5 | 53.3 | 57.2 | 54.8 | 58.2 |
| RealWorldQA | 55.4 | 57.3 | 63.9 | 62.6 | 63.9 |
| HallusionBench | 36.8 | 38.1 | 36.0 | 41.5 | 43.0 |
| SEEDBench2_Plus | 51.8 | 60.0 | 63.0 | 62.4 | 63.0 |
| LLaVABench | 66.1 | 64.8 | 42.4 | 52.5 | 68.4 |
| MMStar | 41.6 | 50.2 | 51.9 | 47.8 | 54.9 |
| POPE | 86.6 | 85.3 | 89.4 | 88.0 | 83.6 |
| MMVet | 44.0 | 41.1 | 42.7 | 50.7 | 44.3 |
| MMMU (val) | 39.6 | 34.9 | 43.6 | 41.7 | 47.4 |
| ScienceQA (test) | 80.4 | 94.1 | 86.6 | 78.1 | 95.2 |
| AI2D (test) | 64.8 | 74.4 | 74.2 | 74.6 | 75.0 |
| MathVista (testmini) | 39.0 | 45.0 | 47.1 | 47.9 | 59.0 |
| MathVerse (testmini) | 19.8 | 24.7 | 22.2 | 21.0 | 26.2 |
| MathVision | 15.4 | 12.6 | 16.3 | 17.5 | 18.4 |
| DocVQA (test) | 71.0 | 86.9 | 87.6 | 89.9 | 85.0 |
| InfoVQA (test) | 40.0 | 59.5 | 59.1 | 65.4 | 58.3 |
| ChartQA (test) | 59.6 | 71.4 | 57.1 | 73.5 | 76.5 |
| TextVQA (val) | 74.3 | 73.5 | 77.6 | 79.9 | 76.4 |
| OCRVQA (testcore) | 54.4 | 40.2 | 67.6 | 68.7 | 64.0 |
| VCR (en easy) | 27.6 | 51.6 | 67.7 | 68.3 | 70.0 |
| OCRBench | 613 | 784 | 782 | 810 | 772 |
| Average | 53.5 | 58.8 | 60.9 | 62.1 | 64.1 |

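The comparison models were evaluated in a local environment, so their scores may differ slightly from those reported in the respective papers or on the official VLMEvalKit leaderboard.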
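The table does not say how the Average row is computed, and OCRBench is reported on a different scale from the other benchmarks. One reading that is consistent with the published numbers is a plain mean over all 24 benchmarks with the OCRBench score divided by 10. The sketch below checks that reading against two columns; the helper `table_average` and the scale factor are assumptions made for this illustration, not a documented procedure.

```python
# Scores copied from the table above, in row order; the last entry is OCRBench.
aquila = [78.8, 76.4, 75.2, 58.2, 63.9, 43.0, 63.0, 68.4, 54.9, 83.6, 44.3, 47.4,
          95.2, 75.0, 59.0, 26.2, 18.4, 85.0, 58.3, 76.5, 76.4, 64.0, 70.0, 772]
qwen2_vl = [74.9, 73.9, 72.7, 54.8, 62.6, 41.5, 62.4, 52.5, 47.8, 88.0, 50.7, 41.7,
            78.1, 74.6, 47.9, 21.0, 17.5, 89.9, 65.4, 73.5, 79.9, 68.7, 68.3, 810]


def table_average(scores, ocrbench_scale=10.0):
    """Mean over all benchmarks, with the final (OCRBench) score rescaled.

    Assumption: OCRBench is divided by `ocrbench_scale` so that it is
    comparable to the percentage-style scores of the other benchmarks.
    """
    rescaled = scores[:-1] + [scores[-1] / ocrbench_scale]
    return sum(rescaled) / len(rescaled)


print(round(table_average(aquila), 1))    # 64.1, matches the Average row
print(round(table_average(qwen2_vl), 1))  # 62.1, matches the Average row
```

Both results agree with the Average row, which suggests (but does not prove) that this is how the row was derived.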

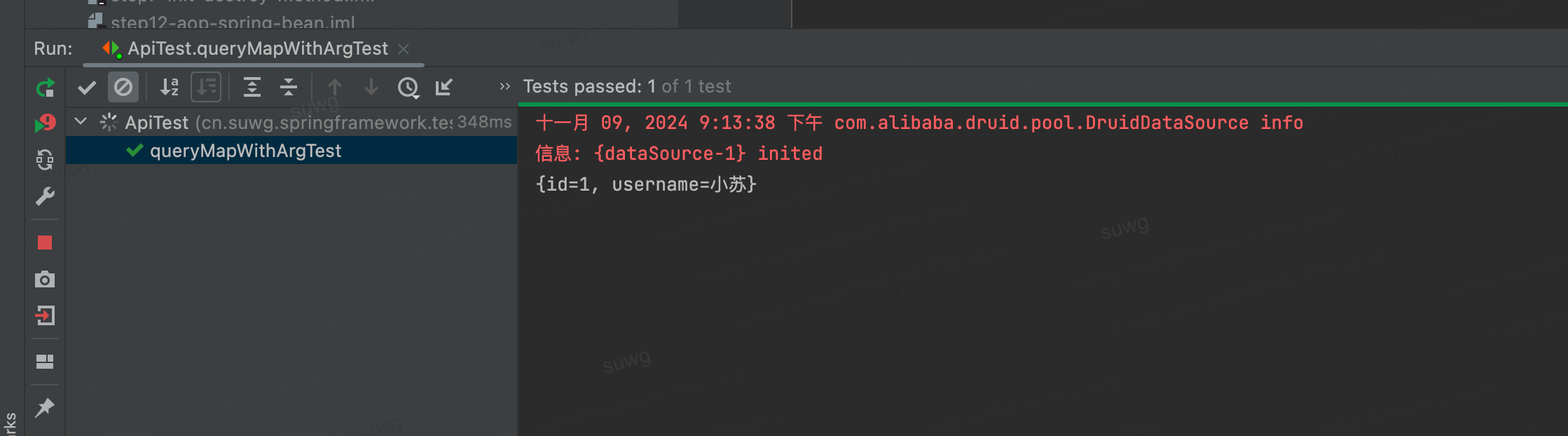

Code

```python
# pip install git+https://github.com/LLaVA-VL/LLaVA-NeXT.git
from llava.model.builder import load_pretrained_model
from llava.mm_utils import process_images, tokenizer_image_token
from llava.constants import IMAGE_TOKEN_INDEX, DEFAULT_IMAGE_TOKEN
from llava.conversation import conv_templates
from PIL import Image
import requests
import copy
import torch
import warnings

warnings.filterwarnings("ignore")

pretrained = "BAAI/Aquila-VL-2B-llava-qwen"
model_name = "llava_qwen"
device = "cuda"
device_map = "auto"

# Add any other arguments you want to pass in llava_model_args
tokenizer, model, image_processor, max_length = load_pretrained_model(
    pretrained, None, model_name, device_map=device_map
)
model.eval()

# Load an image from a URL
url = "https://github.com/haotian-liu/LLaVA/blob/1a91fc274d7c35a9b50b3cb29c4247ae5837ce39/images/llava_v1_5_radar.jpg?raw=true"
image = Image.open(requests.get(url, stream=True).raw)

# Or load an image from the local environment
# url = "./local_image.jpg"
# image = Image.open(url)

# Preprocess the image and move it to the GPU in half precision
image_tensor = process_images([image], image_processor, model.config)
image_tensor = [_image.to(dtype=torch.float16, device=device) for _image in image_tensor]

# Build the prompt; make sure you use the correct chat template for the model
conv_template = "qwen_1_5"
question = DEFAULT_IMAGE_TOKEN + "\nWhat is shown in this image?"
conv = copy.deepcopy(conv_templates[conv_template])
conv.append_message(conv.roles[0], question)
conv.append_message(conv.roles[1], None)
prompt_question = conv.get_prompt()

# Tokenize the prompt, replacing the image placeholder with IMAGE_TOKEN_INDEX
input_ids = tokenizer_image_token(
    prompt_question, tokenizer, IMAGE_TOKEN_INDEX, return_tensors="pt"
).unsqueeze(0).to(device)
image_sizes = [image.size]

# Greedy decoding (sampling disabled)
cont = model.generate(
    input_ids,
    images=image_tensor,
    image_sizes=image_sizes,
    do_sample=False,
    temperature=0,
    max_new_tokens=4096,
)

text_outputs = tokenizer.batch_decode(cont, skip_special_tokens=True)
print(text_outputs)
```

Conclusion

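BAAI's Aquila-VL-2B-llava-qwen marks a significant advance in vision-language understanding. By combining a capable LLM and vision tower with a large, diverse training dataset, the model shows how AI systems can be improved across applications such as image recognition and natural language processing. The open-sourcing of the Infinity-MM dataset further encourages collaboration and innovation in the AI community.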