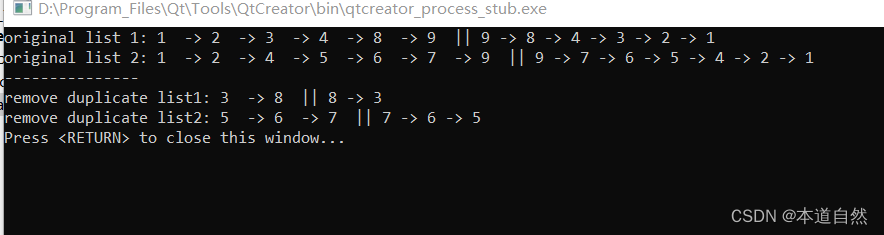

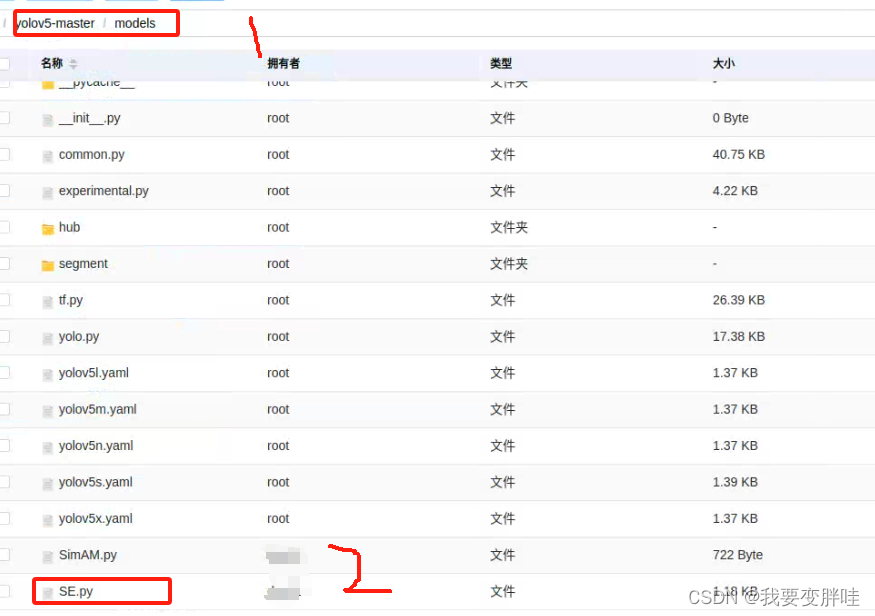

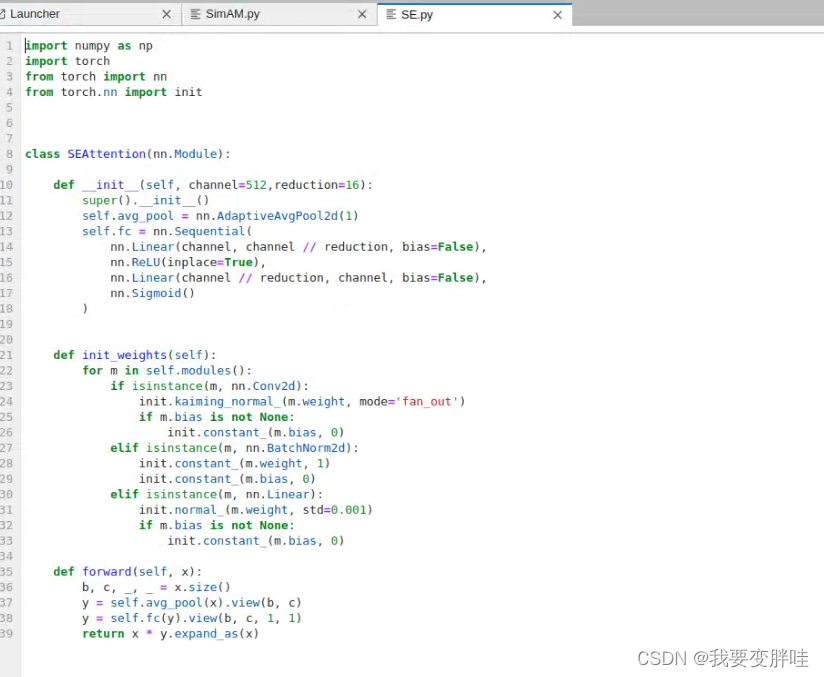

1. Create a new file named SE.py under yolov5/models and put the following code in it.

The code is as follows:

```python
import torch
from torch import nn
from torch.nn import init


class SEAttention(nn.Module):
    """Squeeze-and-Excitation block: reweights each channel of the input."""

    def __init__(self, channel=512, reduction=16):
        super().__init__()
        # Squeeze: global average pooling down to one value per channel
        self.avg_pool = nn.AdaptiveAvgPool2d(1)
        # Excitation: bottleneck MLP that outputs per-channel weights in (0, 1)
        self.fc = nn.Sequential(
            nn.Linear(channel, channel // reduction, bias=False),
            nn.ReLU(inplace=True),
            nn.Linear(channel // reduction, channel, bias=False),
            nn.Sigmoid(),
        )

    def init_weights(self):
        # Optional custom initialization; it is not called from __init__
        for m in self.modules():
            if isinstance(m, nn.Conv2d):
                init.kaiming_normal_(m.weight, mode='fan_out')
                if m.bias is not None:
                    init.constant_(m.bias, 0)
            elif isinstance(m, nn.BatchNorm2d):
                init.constant_(m.weight, 1)
                init.constant_(m.bias, 0)
            elif isinstance(m, nn.Linear):
                init.normal_(m.weight, std=0.001)
                if m.bias is not None:
                    init.constant_(m.bias, 0)

    def forward(self, x):
        b, c, _, _ = x.size()
        y = self.avg_pool(x).view(b, c)   # (b, c)
        y = self.fc(y).view(b, c, 1, 1)   # (b, c, 1, 1)
        return x * y.expand_as(x)         # scale every channel of x
```
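To see what the module computes, here is a minimal NumPy re-implementation of the same forward pass (a sketch, not the PyTorch code itself): squeeze by global average pooling, excite through two bias-free fully connected layers (ReLU, then Sigmoid), and scale the input channel by channel. `se_forward` and the random weights are illustrative assumptions; `w1` and `w2` play the role of the two `nn.Linear` weight matrices, which PyTorch stores as (out_features, in_features).

```python
import numpy as np


def se_forward(x, w1, w2):
    """NumPy sketch of the SE forward pass for x of shape (b, c, h, w).

    w1: (c // r, c) weight of the first Linear (squeeze to c/r, no bias)
    w2: (c, c // r) weight of the second Linear (expand back to c, no bias)
    """
    y = x.mean(axis=(2, 3))                # squeeze: global average pool -> (b, c)
    y = np.maximum(y @ w1.T, 0.0)          # FC1 + ReLU -> (b, c // r)
    y = 1.0 / (1.0 + np.exp(-(y @ w2.T)))  # FC2 + Sigmoid -> (b, c), values in (0, 1)
    return x * y[:, :, None, None]         # broadcast the weights over h and w


rng = np.random.default_rng(0)
b, c, h, w, r = 2, 32, 8, 8, 16
x = rng.standard_normal((b, c, h, w))
w1 = rng.standard_normal((c // r, c)) * 0.1
w2 = rng.standard_normal((c, c // r)) * 0.1
out = se_forward(x, w1, w2)
print(out.shape)  # (2, 32, 8, 8)
```

The output has the same shape as the input, so an SE block can be placed after a layer without changing the number of channels that the following layers receive.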

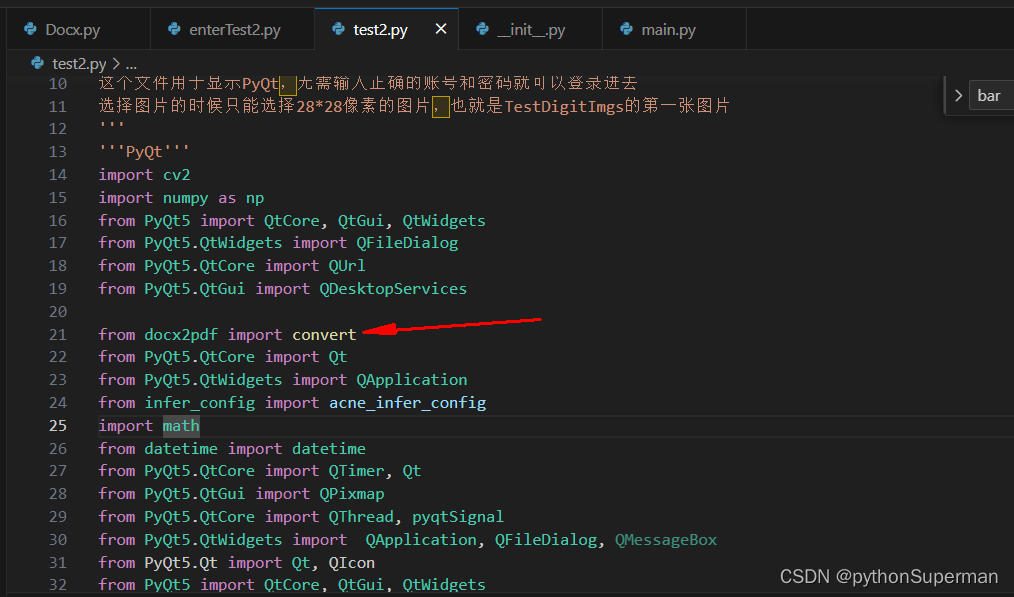

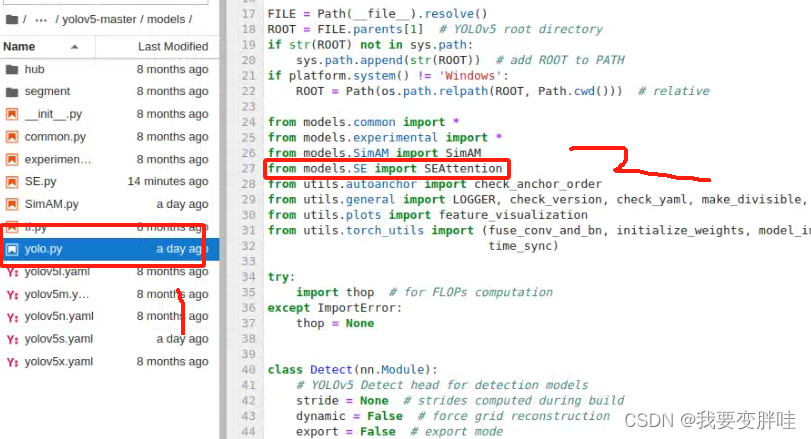

2. Open yolo.py and make the following change.

At line 27, add `from models.SE import SEAttention`, then save.

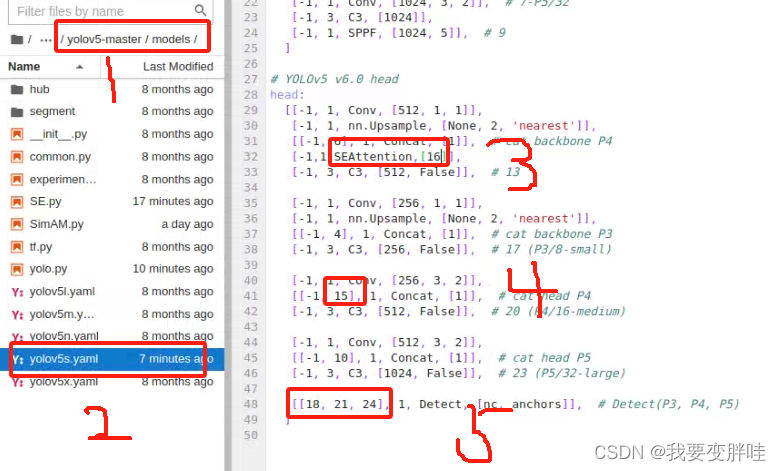

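3. Find the YAML file you want to modify. I chose yolov5s.yaml (pick whichever suits your needs). Add the SEAttention module we just wrote to yolov5s.yaml and make a few corresponding changes, as shown below: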
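The exact placement is only visible in the screenshot. As an assumption, suppose SEAttention is inserted as a new layer 10 right after SPPF in the v6.x `yolov5s.yaml`, e.g. `[-1, 1, SEAttention, [1024]]`. Every later layer then moves down by one, so absolute indices in the head's `from` column that are 10 or larger must be bumped: the Concat sources 14 and 10 become 15 and 11, and Detect's `[17, 20, 23]` becomes `[18, 21, 24]`. Relative indices such as `-1` are unaffected. The hypothetical helper `shift_from` below performs this renumbering.

```python
def shift_from(frm, insert_at):
    """Return a YAML 'from' field with absolute indices >= insert_at bumped by one.

    Negative (relative) indices such as -1 are left alone: they still point
    at the previous layer after the insertion.
    """
    if isinstance(frm, list):
        return [shift_from(v, insert_at) for v in frm]
    return frm + 1 if frm >= insert_at else frm


# 'from' fields of the stock yolov5s.yaml head (v6.x layout), in order
head_from = [-1, -1, [-1, 6], -1, -1, -1, [-1, 4], -1,
             -1, [-1, 14], -1, -1, [-1, 10], -1, [17, 20, 23]]
new_from = [shift_from(f, 10) for f in head_from]
print(new_from[-1])  # [18, 21, 24]
```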

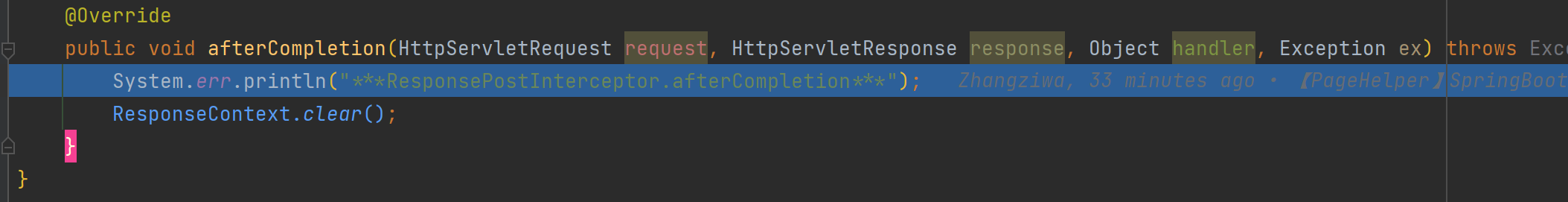

4. Add two lines of code to yolo.py (lines 332-333), then save!
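The author's two lines are only visible in the screenshot, so the following is one possible version, not necessarily identical. `parse_model()` in yolo.py has to know each module's channels; because SE leaves the channel count unchanged, an `elif m is SEAttention:` branch can take the input width `ch[f]` as both the output width `c2` and the `channel` argument. Setting `c2` matters because `parse_model()` appends it to `ch` as this layer's output width. The hypothetical helper `se_branch` reproduces only that bookkeeping so it runs standalone; the channel list assumes the stock yolov5s backbone with `width_multiple: 0.5`.

```python
def se_branch(ch, f, args):
    """Hypothetical helper: channel bookkeeping an SEAttention branch in
    parse_model() (models/yolo.py) could perform. Inside parse_model() it
    would read roughly:

        elif m is SEAttention:
            c2 = ch[f]
            args = [c2, *args[1:]]
    """
    c2 = ch[f]              # SE keeps the channel count: output == input
    args = [c2, *args[1:]]  # replace the YAML channel with the real, width-scaled one
    return c2, args


# Output channels of layers 0-9 of yolov5s (width_multiple: 0.5); SPPF outputs 512
ch = [32, 64, 64, 128, 128, 256, 256, 512, 512, 512]
print(se_branch(ch, -1, [1024]))  # (512, [512])
```

Without such a branch, the YAML value 1024 would be passed as `channel` unscaled, and the first `nn.Linear` would not match the 512 channels actually produced by SPPF in yolov5s.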

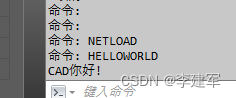

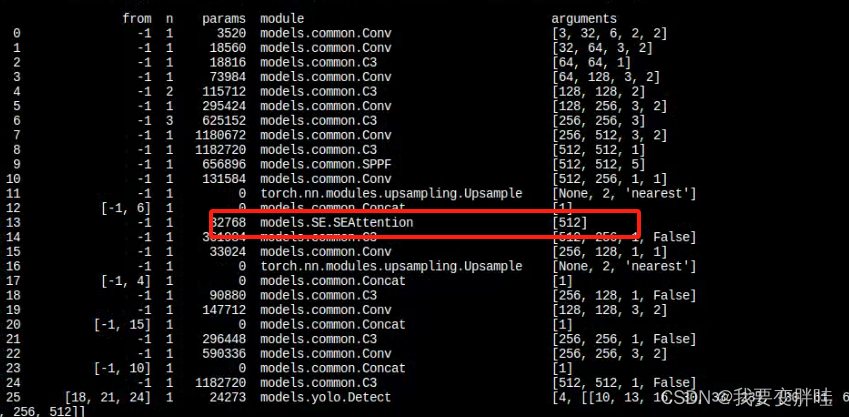

Run it, and SEAttention now shows up in the printed model structure.

That's it! Now it is training for 100 epochs, and I have no idea when it will finish!