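Table of Contents

- (14) Loading a TorchScript Model in C++

- Step 1: Convert the PyTorch model to TorchScript

- Step 2: Serialize the TorchScript module to a file

- Step 3: Load the TorchScript model in a C++ program

- Step 4: Run the TorchScript model in a C++ program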

[PyTorch] (13) PyTorch Model Deployment: TorchScript

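(14) Loading a TorchScript Model in C++

This article shows how to load and run a TorchScript model in a C++ environment.

Step 1: Convert the PyTorch model to TorchScript

Pass an instance of the resnet18 model together with an example input to torch.jit.trace to convert the model to TorchScript: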

import torch
import torchvision

# An instance of your model.
model = torchvision.models.resnet18()

# An example input you would normally provide to your model's forward() method.
example = torch.rand(1, 3, 224, 224)

# Use torch.jit.trace to generate a torch.jit.ScriptModule via tracing.
traced_script_module = torch.jit.trace(model, example)
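Before exporting, it can be worth confirming that the traced module reproduces the eager model on the example input. The sketch below is illustrative only: `TinyNet` is a hypothetical stand-in for resnet18 (chosen so the check runs instantly and needs no torchvision), and calling `eval()` before tracing is an assumption about the intended inference use, so that BatchNorm uses its running statistics.

```python
import torch
import torch.nn as nn


class TinyNet(nn.Module):
    """Hypothetical stand-in for resnet18, small enough to run instantly."""

    def __init__(self):
        super().__init__()
        self.conv = nn.Conv2d(3, 4, kernel_size=3, padding=1)
        self.bn = nn.BatchNorm2d(4)
        self.pool = nn.AdaptiveAvgPool2d(1)
        self.fc = nn.Linear(4, 10)

    def forward(self, x):
        x = torch.relu(self.bn(self.conv(x)))
        x = torch.flatten(self.pool(x), 1)
        return self.fc(x)


torch.manual_seed(0)
model = TinyNet().eval()  # inference mode: BatchNorm uses running statistics
example = torch.rand(1, 3, 32, 32)

with torch.no_grad():
    traced = torch.jit.trace(model, example)
    eager_out = model(example)
    traced_out = traced(example)

# The traced graph should reproduce the eager result on the traced input.
outputs_match = torch.allclose(eager_out, traced_out, atol=1e-6)
print("traced output matches eager output:", outputs_match)
```

Tracing records only the operations executed for the example input, so a check like this covers that one code path; models with data-dependent control flow may need `torch.jit.script` instead.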

Step 2: Serialize the TorchScript module to a file

Serialize the TorchScript module and save it:

traced_script_module.save("traced_resnet_model.pt")

This creates the file traced_resnet_model.pt in the working directory.
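A quick way to sanity-check the saved file before moving to C++ is to load it back in Python with `torch.jit.load`, the Python counterpart of `torch::jit::load`. This is a minimal sketch under stated assumptions: the small `nn.Sequential` model and the file name `traced_model.pt` are hypothetical stand-ins for resnet18 and traced_resnet_model.pt.

```python
import os
import tempfile

import torch
import torch.nn as nn

torch.manual_seed(0)
# Hypothetical small model standing in for resnet18.
model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 8 * 8, 5)).eval()
example = torch.rand(1, 3, 8, 8)
traced = torch.jit.trace(model, example)

with tempfile.TemporaryDirectory() as tmp_dir:
    path = os.path.join(tmp_dir, "traced_model.pt")
    traced.save(path)
    file_size = os.path.getsize(path)

    # Load the serialized module back and compare it with the original.
    reloaded = torch.jit.load(path)
    with torch.no_grad():
        roundtrip_ok = torch.allclose(traced(example), reloaded(example))

print(f"wrote {file_size} bytes, round trip consistent: {roundtrip_ok}")
```

If the round trip succeeds in Python, a later loading failure in C++ is more likely to come from the build or the file path than from the serialized model itself.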

Step 3: Load the TorchScript model in a C++ program

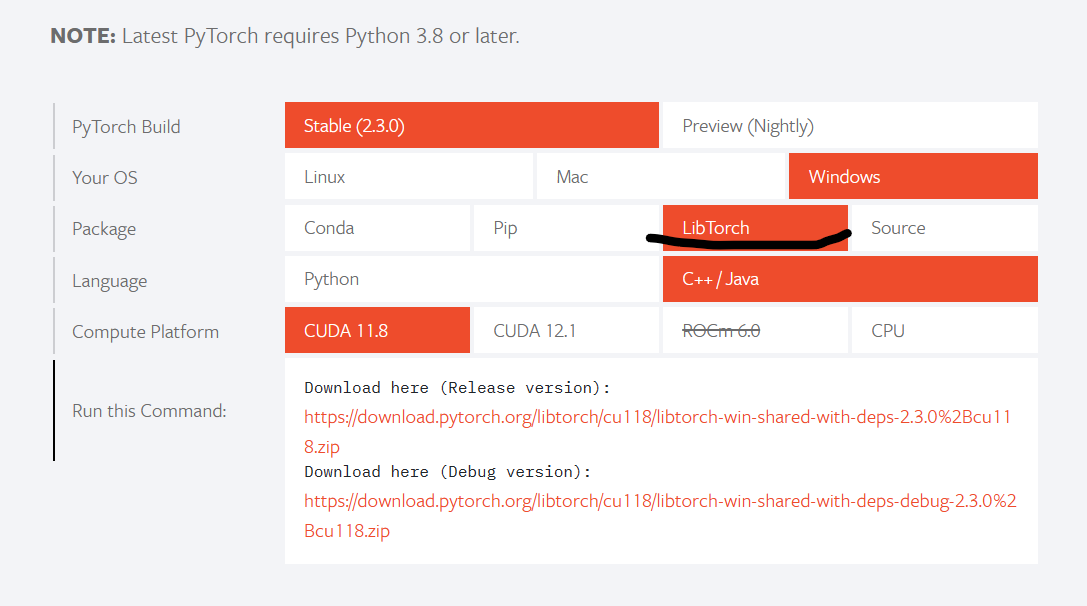

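To load a serialized TorchScript model in C++, the application must depend on the PyTorch C++ API (also known as LibTorch). The latest stable LibTorch release can be downloaded from the PyTorch website.

The following commands download a CPU build (this particular URL points to the nightly build, not a stable release):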

wget https://download.pytorch.org/libtorch/nightly/cpu/libtorch-shared-with-deps-latest.zip

unzip libtorch-shared-with-deps-latest.zip

After downloading and unzipping, you get a folder with the following directory structure:

libtorch/
  bin/
  include/
  lib/
  share/

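The lib/ folder contains the shared libraries you must link against,

the include/ folder contains the header files your program needs to include,

and the share/ folder contains the necessary CMake configuration.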
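Since a wrong `CMAKE_PREFIX_PATH` is a common cause of `find_package(Torch REQUIRED)` failing, a small check of the unzipped folder can save a build attempt. The following is only a sketch: the helper name `missing_libtorch_dirs` is hypothetical, and it checks just the three folders described above, using a temporary fake directory for the demonstration.

```python
import tempfile
from pathlib import Path

# The three folders the build relies on, as described above.
REQUIRED_DIRS = ("include", "lib", "share")


def missing_libtorch_dirs(root):
    """Return the required subdirectories that are absent under `root`."""
    root = Path(root)
    return [name for name in REQUIRED_DIRS if not (root / name).is_dir()]


# Demonstration on a fake layout that deliberately lacks share/.
with tempfile.TemporaryDirectory() as tmp:
    fake_root = Path(tmp) / "libtorch"
    for name in ("include", "lib"):
        (fake_root / name).mkdir(parents=True)
    demo_missing = missing_libtorch_dirs(fake_root)

print(demo_missing)  # ['share']
```

In practice you would call `missing_libtorch_dirs("/path/to/libtorch")` with the same path you intend to pass to CMake and expect an empty list.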

Next, we use CMake and LibTorch to build a C++ application that loads and executes a serialized TorchScript model.

example-app.cpp

#include <torch/script.h>

#include <iostream>
#include <memory>

int main(int argc, const char* argv[]) {
  if (argc != 2) {
    std::cerr << "usage: example-app <path-to-exported-script-module>\n";
    return -1;
  }

  torch::jit::script::Module module;
  try {
    // Deserialize the TorchScript module; torch::jit::load takes the file path as input.
    module = torch::jit::load(argv[1]);
  }
  catch (const c10::Error& e) {
    std::cerr << "error loading the model\n";
    return -1;
  }

  std::cout << "ok\n";
}

CMakeLists.txt

cmake_minimum_required(VERSION 3.0 FATAL_ERROR)
project(custom_ops)

find_package(Torch REQUIRED)

add_executable(example-app example-app.cpp)
target_link_libraries(example-app "${TORCH_LIBRARIES}")
# Recent LibTorch releases require C++17; raise this to 17 if the build fails on the language standard.
set_property(TARGET example-app PROPERTY CXX_STANDARD 14)

Assume the example directory is laid out as follows:

example-app/
  CMakeLists.txt
  example-app.cpp

Run the following commands to build the application:

mkdir build

cd build

cmake -DCMAKE_PREFIX_PATH=/path/to/libtorch ..

cmake --build . --config Release

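Note: GCC must be version 9 or later, otherwise compilation fails. /path/to/libtorch should be the full path to the unzipped LibTorch directory.

If everything goes well, the output looks like this (compiler versions and paths will differ on your machine):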

root@4b5a67132e81:/example-app# mkdir build

root@4b5a67132e81:/example-app# cd build

root@4b5a67132e81:/example-app/build# cmake -DCMAKE_PREFIX_PATH=/path/to/libtorch ..

-- The C compiler identification is GNU 5.4.0

-- The CXX compiler identification is GNU 5.4.0

-- Check for working C compiler: /usr/bin/cc

-- Check for working C compiler: /usr/bin/cc -- works

-- Detecting C compiler ABI info

-- Detecting C compiler ABI info - done

-- Detecting C compile features

-- Detecting C compile features - done

-- Check for working CXX compiler: /usr/bin/c++

-- Check for working CXX compiler: /usr/bin/c++ -- works

-- Detecting CXX compiler ABI info

-- Detecting CXX compiler ABI info - done

-- Detecting CXX compile features

-- Detecting CXX compile features - done

-- Looking for pthread.h

-- Looking for pthread.h - found

-- Looking for pthread_create

-- Looking for pthread_create - not found

-- Looking for pthread_create in pthreads

-- Looking for pthread_create in pthreads - not found

-- Looking for pthread_create in pthread

-- Looking for pthread_create in pthread - found

-- Found Threads: TRUE

-- Configuring done

-- Generating done

-- Build files have been written to: /example-app/build

root@4b5a67132e81:/example-app/build# make

Scanning dependencies of target example-app

[ 50%] Building CXX object CMakeFiles/example-app.dir/example-app.cpp.o

[100%] Linking CXX executable example-app

[100%] Built target example-app

Run the program:

root@4b5a67132e81:/example-app/build# ./example-app <path_to_model>/traced_resnet_model.pt

ok

Printing ok means the model was loaded successfully.

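Step 4: Run the TorchScript model in a C++ program

Feed an input with the same shape as the example in Step 1 into the model loaded in C++ (the code below uses an all-ones tensor, torch::ones({1, 3, 224, 224})):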

#include <torch/script.h>

#include <iostream>
#include <memory>
#include <vector>

int main(int argc, const char* argv[]) {
  if (argc != 2) {
    std::cerr << "usage: example-app <path-to-exported-script-module>\n";
    return -1;
  }

  torch::jit::script::Module module;
  try {
    // Deserialize the ScriptModule from a file using torch::jit::load().
    module = torch::jit::load(argv[1]);
  }
  catch (const c10::Error& e) {
    std::cerr << "error loading the model\n";
    return -1;
  }

  std::cout << "ok\n";

  // Create a vector of inputs.
  std::vector<torch::jit::IValue> inputs;
  inputs.push_back(torch::ones({1, 3, 224, 224}));

  // Execute the model and turn its output into a tensor.
  at::Tensor output = module.forward(inputs).toTensor();
  std::cout << output.slice(/*dim=*/1, /*start=*/0, /*end=*/5) << '\n';
}

root@4b5a67132e81:/example-app/build# make

Scanning dependencies of target example-app

[ 50%] Building CXX object CMakeFiles/example-app.dir/example-app.cpp.o

[100%] Linking CXX executable example-app

[100%] Built target example-app

root@4b5a67132e81:/example-app/build# ./example-app traced_resnet_model.pt

-0.2698 -0.0381 0.4023 -0.3010 -0.0448

[ Variable[CPUFloatType]{1,5} ]

As shown, the model's output in C++ matches its output in Python for the same all-ones input:

tensor([-0.2698, -0.0381, 0.4023, -0.3010, -0.0448], grad_fn=<SliceBackward>)
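The Python-side value for this comparison can be produced by feeding an all-ones tensor to the traced module and taking the first five entries along dimension 1, which mirrors `output.slice(/*dim=*/1, /*start=*/0, /*end=*/5)` in the C++ program. The sketch below assumes a hypothetical small model in place of resnet18. Because `torchvision.models.resnet18()` starts from randomly initialized weights, the numbers only agree when both sides use the same saved .pt file.

```python
import torch
import torch.nn as nn

torch.manual_seed(0)
# Hypothetical stand-in for the traced resnet18; any module with >= 5 outputs works.
model = nn.Sequential(nn.Flatten(), nn.Linear(3 * 8 * 8, 10)).eval()
traced = torch.jit.trace(model, torch.rand(1, 3, 8, 8))

# Same construction as torch::ones({1, 3, 8, 8}) on the C++ side.
ones_input = torch.ones(1, 3, 8, 8)
with torch.no_grad():
    output = traced(ones_input)

# Python equivalent of output.slice(/*dim=*/1, /*start=*/0, /*end=*/5) in C++.
first_five = output[:, 0:5]
print(first_five)
```

Using a deterministic input such as all ones, rather than `torch.rand`, is what makes the two printed results directly comparable.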

For more features, see:

PyTorch C++ API documentation:

PyTorch Python API documentation:

Official PyTorch documentation for TorchScript

Reference:

https://pytorch.org/tutorials/advanced/cpp_export.html
