Fire Temperature Prediction

- 🍨 This post is a learning log from the 🔗365天深度学习训练营 (365-Day Deep Learning Training Camp)

- 🍖 Original author: K同学啊

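Time Series Prediction with LSTM

This week I learned how to use a long short-term memory (LSTM) network for time series prediction. The model is built and trained with PyTorch on a dataset that records temperature, CO concentration, and soot concentration.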

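1. Data Preprocessing

The data must be preprocessed first. The dataset's features are temperature (Tem1), CO concentration (CO 1), and soot concentration (Soot 1). Each feature is scaled to the range [0, 1] with min-max normalization, and the input sequences X and target values y are then built with a sliding window.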

import pandas as pd
import numpy as np
import matplotlib.pyplot as plt
import seaborn as sns
from sklearn.preprocessing import MinMaxScaler

data = pd.read_csv("woodpine2.csv")

plt.rcParams['savefig.dpi'] = 500  # resolution of saved figures
plt.rcParams['figure.dpi'] = 500   # resolution of displayed figures

# Plot the three raw signals side by side
fig, ax = plt.subplots(1, 3, constrained_layout=True, figsize=(14, 3))
sns.lineplot(data=data["Tem1"], ax=ax[0])
sns.lineplot(data=data["CO 1"], ax=ax[1])
sns.lineplot(data=data["Soot 1"], ax=ax[2])
plt.show()

# Normalize the data (the first column is dropped)
dataFrame = data.iloc[:, 1:].copy()
sc = MinMaxScaler(feature_range=(0, 1))

# Tem1 is scaled last, so `sc` stays fitted on Tem1 and can invert predictions later
for i in ['CO 1', 'Soot 1', 'Tem1']:
    dataFrame[i] = sc.fit_transform(dataFrame[i].values.reshape(-1, 1))

width_X = 8  # length of the input window (time steps)
width_y = 1  # number of time steps to predict

# Build the input sequences X and the target values y
X = []
y = []
in_start = 0
for _, _ in data.iterrows():
    in_end = in_start + width_X
    out_end = in_end + width_y
    if out_end < len(dataFrame):
        X_ = np.array(dataFrame.iloc[in_start:in_end, ])
        y_ = np.array(dataFrame.iloc[in_end:out_end, 0])  # column 0 of dataFrame is the target
        X.append(X_)
        y.append(y_)
    in_start += 1

X = np.array(X)
y = np.array(y).reshape(-1, 1, 1)
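
To make the windowing step easier to check, here is a minimal sketch of the same sliding-window logic on a toy array. The helper name `make_windows` and the toy data are assumptions made for illustration; they are not part of the original post.

```python
import numpy as np

def make_windows(values, width_X=8, width_y=1, target_col=0):
    """Slice a (time, features) array into overlapping input/target windows."""
    X, y = [], []
    # Same bound as the loop above: a window is kept only if out_end < len(values)
    for in_start in range(len(values) - width_X - width_y):
        in_end = in_start + width_X
        out_end = in_end + width_y
        X.append(values[in_start:in_end, :])
        y.append(values[in_end:out_end, target_col])
    return np.array(X), np.array(y).reshape(-1, 1, 1)

# Toy data: 20 time steps, 3 features (stand-ins for Tem1, CO 1, Soot 1)
toy = np.arange(60, dtype=float).reshape(20, 3)
X_toy, y_toy = make_windows(toy)
print(X_toy.shape, y_toy.shape)
```

With 20 time steps this yields 11 windows. Because the bound is a strict `<`, the last window that would still fit inside the data is skipped.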

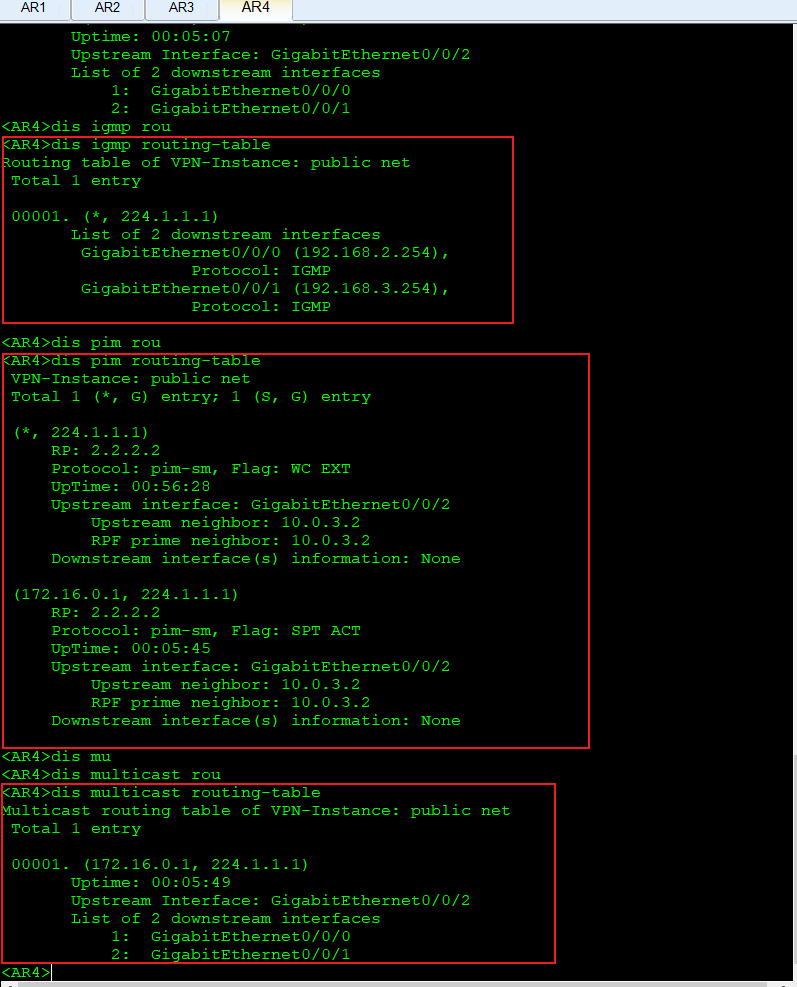
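
The later sections use `X_train`, `X_test`, `y_test`, `train_dl`, and `test_dl`, but this post does not show how they are created. The following is only a sketch of one plausible way to do it: the 80/20 chronological split, the batch size, and the random placeholder arrays are all assumptions, not the original author's values.

```python
import numpy as np
import torch
from torch.utils.data import TensorDataset, DataLoader

# Placeholder arrays with the same layout as X and y above:
# (samples, 8 time steps, 3 features) and (samples, 1, 1)
X = np.random.rand(100, 8, 3)
y = np.random.rand(100, 1, 1)

split = int(len(X) * 0.8)  # assumption: first 80% for training, kept in time order
batch_size = 16            # assumption: illustrative value only

X_train = torch.tensor(X[:split], dtype=torch.float32)
y_train = torch.tensor(y[:split], dtype=torch.float32)
X_test = torch.tensor(X[split:], dtype=torch.float32)
y_test = torch.tensor(y[split:], dtype=torch.float32)

train_dl = DataLoader(TensorDataset(X_train, y_train), batch_size=batch_size, shuffle=False)
test_dl = DataLoader(TensorDataset(X_test, y_test), batch_size=batch_size, shuffle=False)

# Inspect one batch to confirm the shapes the model will receive
xb, yb = next(iter(train_dl))
print(xb.shape, yb.shape)
```

Splitting by position rather than at random keeps the test set strictly later in time than the training set, which is usually what a forecasting task needs.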

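2. Building the LSTM Model

Next, a two-layer LSTM model is built with PyTorch by stacking two single-layer LSTM modules. The model takes the Tem1, CO 1, and Soot 1 values from the previous 8 time steps as input and outputs the Tem1 value at the 9th time step.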

import torch
import torch.nn as nn
import torch.nn.functional as F
from torch.utils.data import TensorDataset, DataLoader

class model_lstm(nn.Module):
    def __init__(self):
        super(model_lstm, self).__init__()
        self.lstm0 = nn.LSTM(input_size=3, hidden_size=320,
                             num_layers=1, batch_first=True)
        self.lstm1 = nn.LSTM(input_size=320, hidden_size=320,
                             num_layers=1, batch_first=True)
        self.fc0 = nn.Linear(320, 1)

    def forward(self, x):
        out, hidden1 = self.lstm0(x)
        out, _ = self.lstm1(out, hidden1)
        out = self.fc0(out)
        return out[:, -1:, :]
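
To see how the tensor shapes change through the forward pass, the sketch below runs the same three layers on a random batch. The hidden size of 16 and the batch size of 4 are assumptions chosen only to keep the example light; the model above uses a hidden size of 320.

```python
import torch
import torch.nn as nn

# Small hidden size so the sketch runs quickly; the post uses 320
lstm0 = nn.LSTM(input_size=3, hidden_size=16, num_layers=1, batch_first=True)
lstm1 = nn.LSTM(input_size=16, hidden_size=16, num_layers=1, batch_first=True)
fc0 = nn.Linear(16, 1)

x = torch.randn(4, 8, 3)      # (batch, time steps, features)
out, hidden = lstm0(x)        # out: (4, 8, 16); hidden = (h_n, c_n), each (1, 4, 16)
out, _ = lstm1(out, hidden)   # second LSTM starts from the first one's final state
out = fc0(out)                # (4, 8, 1): one value per time step
last = out[:, -1:, :]         # keep only the last time step: (4, 1, 1)
print(last.shape)
```

Slicing with `-1:` rather than `-1` keeps the time dimension, so the output shape matches the `(samples, 1, 1)` layout of `y`.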

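3. Model Training

Mean squared error (MSE) is used as the loss function, together with a stochastic gradient descent (SGD) optimizer. The training and test losses are recorded for every epoch and then plotted.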

device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
model = model_lstm().to(device)
loss_fn = nn.MSELoss()
opt = torch.optim.SGD(model.parameters(), lr=1e-1, weight_decay=1e-4)
epochs = 50
train_loss = []
test_loss = []
lr_scheduler = torch.optim.lr_scheduler.CosineAnnealingLR(opt, epochs)

def train(train_dl, model, loss_fn, opt, lr_scheduler=None):
    num_batches = len(train_dl)
    train_loss = 0
    for x, y in train_dl:
        x, y = x.to(device), y.to(device)
        pred = model(x)
        loss = loss_fn(pred, y)
        opt.zero_grad()
        loss.backward()
        opt.step()
        train_loss += loss.item()
    # Step the scheduler once per epoch, matching T_max=epochs
    if lr_scheduler is not None:
        lr_scheduler.step()
    train_loss /= num_batches
    return train_loss

def test(dataloader, model, loss_fn):
    num_batches = len(dataloader)
    test_loss = 0
    with torch.no_grad():
        for x, y in dataloader:
            x, y = x.to(device), y.to(device)
            y_pred = model(x)
            loss = loss_fn(y_pred, y)
            test_loss += loss.item()
    test_loss /= num_batches
    return test_loss

# train_dl and test_dl are DataLoaders over the training and test sets (created beforehand)
for epoch in range(epochs):
    model.train()
    epoch_train_loss = train(train_dl, model, loss_fn, opt, lr_scheduler)
    model.eval()
    epoch_test_loss = test(test_dl, model, loss_fn)
    train_loss.append(epoch_train_loss)
    test_loss.append(epoch_test_loss)
    template = ('Epoch:{:2d}, Train_loss:{:.5f}, Test_loss:{:.5f}')
    print(template.format(epoch + 1, epoch_train_loss, epoch_test_loss))

# Plot the loss curves
plt.figure(figsize=(5, 3), dpi=120)
plt.plot(train_loss, label='LSTM Training Loss')
plt.plot(test_loss, label='LSTM Validation Loss')
plt.title('Training and Validation Loss')
plt.legend()
plt.show()
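
The training code uses `CosineAnnealingLR` with `T_max` set to the number of epochs. As a rough illustration of what that schedule does to the learning rate, the sketch below evaluates the closed-form cosine annealing formula in plain Python. The function name `cosine_annealing_lr` is hypothetical, and `eta_min=0.0` is assumed because the post does not set it.

```python
import math

def cosine_annealing_lr(epoch, base_lr=0.1, t_max=50, eta_min=0.0):
    """Closed-form cosine annealing: decays from base_lr to eta_min over t_max epochs."""
    return eta_min + 0.5 * (base_lr - eta_min) * (1 + math.cos(math.pi * epoch / t_max))

# Learning rate at the start, the midpoint, and the end of a 50-epoch run
schedule = [cosine_annealing_lr(e) for e in range(51)]
print(schedule[0], schedule[25], schedule[50])
```

The rate starts at the base value of 0.1, passes through roughly half of it at the midpoint, and approaches zero by the final epoch, so late updates become very small.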

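4. Prediction and Evaluation

Finally, the trained model predicts on the test set, and the root mean squared error (RMSE) and the coefficient of determination (R²) are computed.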

# Predict, then move the result back to the CPU for the inverse transform
model.eval()
with torch.no_grad():
    predicted_y_lstm = sc.inverse_transform(model(X_test.to(device)).cpu().numpy().reshape(-1, 1))
y_test_1 = sc.inverse_transform(np.asarray(y_test).reshape(-1, 1))

# Convert to flat lists
y_test_one = [i[0] for i in y_test_1]
predicted_y_lstm_one = [i[0] for i in predicted_y_lstm]

# Plot the actual and predicted values
plt.figure(figsize=(5, 3), dpi=120)
plt.plot(y_test_one[:2000], color='red', label='Actual')
plt.plot(predicted_y_lstm_one[:2000], color='blue', label='Predicted')
plt.title('Predicted vs. Actual')
plt.xlabel('X')
plt.ylabel('Y')
plt.legend()
plt.show()

from sklearn import metrics

# scikit-learn metrics take y_true first, then y_pred; R2 is not symmetric in its arguments
RMSE_lstm = metrics.mean_squared_error(y_test_one, predicted_y_lstm_one) ** 0.5
R2_lstm = metrics.r2_score(y_test_one, predicted_y_lstm_one)

print('RMSE: %.5f' % RMSE_lstm)
print('R2: %.5f' % R2_lstm)
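
For reference, the two metrics can be written out by hand. The sketch below uses plain Python and made-up numbers (both the helper functions and the data are assumptions for illustration). It also shows why argument order matters: scikit-learn's `r2_score` expects the true values first and the predictions second, and R² changes when the two are swapped, while RMSE does not.

```python
import math

def rmse(y_true, y_pred):
    """Root mean squared error."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))

def r2(y_true, y_pred):
    """Coefficient of determination: 1 - SS_res / SS_tot, with SS_tot taken around mean(y_true)."""
    mean_true = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_true) ** 2 for t in y_true)
    return 1 - ss_res / ss_tot

# Made-up values: only the last prediction is off
y_true = [1.0, 2.0, 3.0, 4.0]
y_pred = [1.0, 2.0, 3.0, 6.0]

print(rmse(y_true, y_pred), rmse(y_pred, y_true))  # identical in both orders
print(r2(y_true, y_pred), r2(y_pred, y_true))      # differs when the order is swapped
```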

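5. Results

Summary

This week I learned how to use an LSTM model for time series prediction and worked through the full pipeline of data preprocessing, model building, training, prediction, and evaluation, which gave me a first working understanding of LSTM models.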