前言

1.真人视频三维重建数字人源码是基于NeRF改进的RAD-NeRF,NeRF(Neural Radiance Fields)是最早在2020年ECCV会议上的Best Paper,其将隐式表达推上了一个新的高度,仅用 2D 的 posed images 作为监督,即可表示复杂的三维场景。

NeRF其输入稀疏的多角度带pose的图像训练得到一个神经辐射场模型,根据这个模型可以渲染出任意视角下的清晰的照片。也可以简要概括为用一个MLP神经网络去隐式地学习一个三维场景。

NeRF最先是应用在新视点合成方向,由于其超强的隐式表达三维信息的能力后续在三维重建方向迅速发展起来。

2.NeRF使用的场景有几个主流应用方向:

新视点合成:

物体精细重建:

城市重建:

人体重建:

3.真人视频合成

通过音频空间分解的实时神经辐射谈话肖像合成

一、训练环境

1.系统要求

我是在win下训练,训练的环境为win 10,GPU RTX 3080 12G,CUDA 11.7,cudnn 8.5,Anaconda 3,Vs2019。

2.环境依赖

使用conda环境进行安装,python 3.10

#下载源码

git clone https://github.com/ashawkey/RAD-NeRF.git

cd RAD-NeRF

#创建虚拟环境

conda create --name vrh python=3.10

#pytorch 要单独对应cuda进行安装,要不然训练时使用不了GPU

conda install pytorch==2.0.0 torchvision==0.15.0 torchaudio==2.0.0 pytorch-cuda=11.7 -c pytorch -c nvidia

conda install -c fvcore -c iopath -c conda-forge fvcore iopath

#安装所需要的依赖

pip install -r requirements.txt3.windows下安装pytorch3d,这个依赖还是要在刚刚创建的conda环境里面进行安装。

git clone https://github.com/facebookresearch/pytorch3d.git

cd pytorch3d

python setup.py install安装pytorch3d很慢,也有可能中间报错退出,这里建议安装vs 生成工具。Microsoft C++ 生成工具 - Visual Studio![]() https://visualstudio.microsoft.com/zh-hans/visual-cpp-build-tools/

https://visualstudio.microsoft.com/zh-hans/visual-cpp-build-tools/

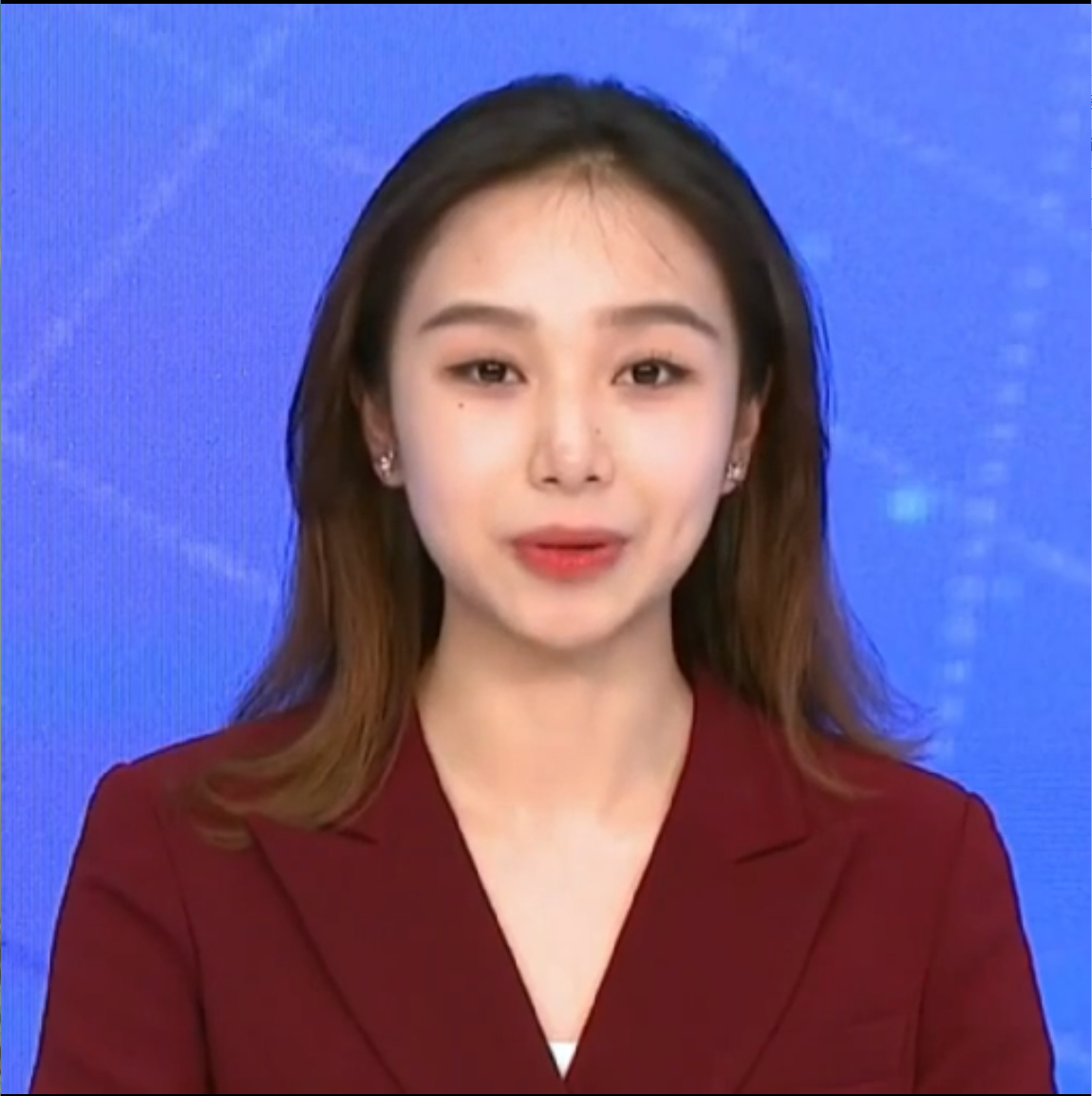

二、数据准备

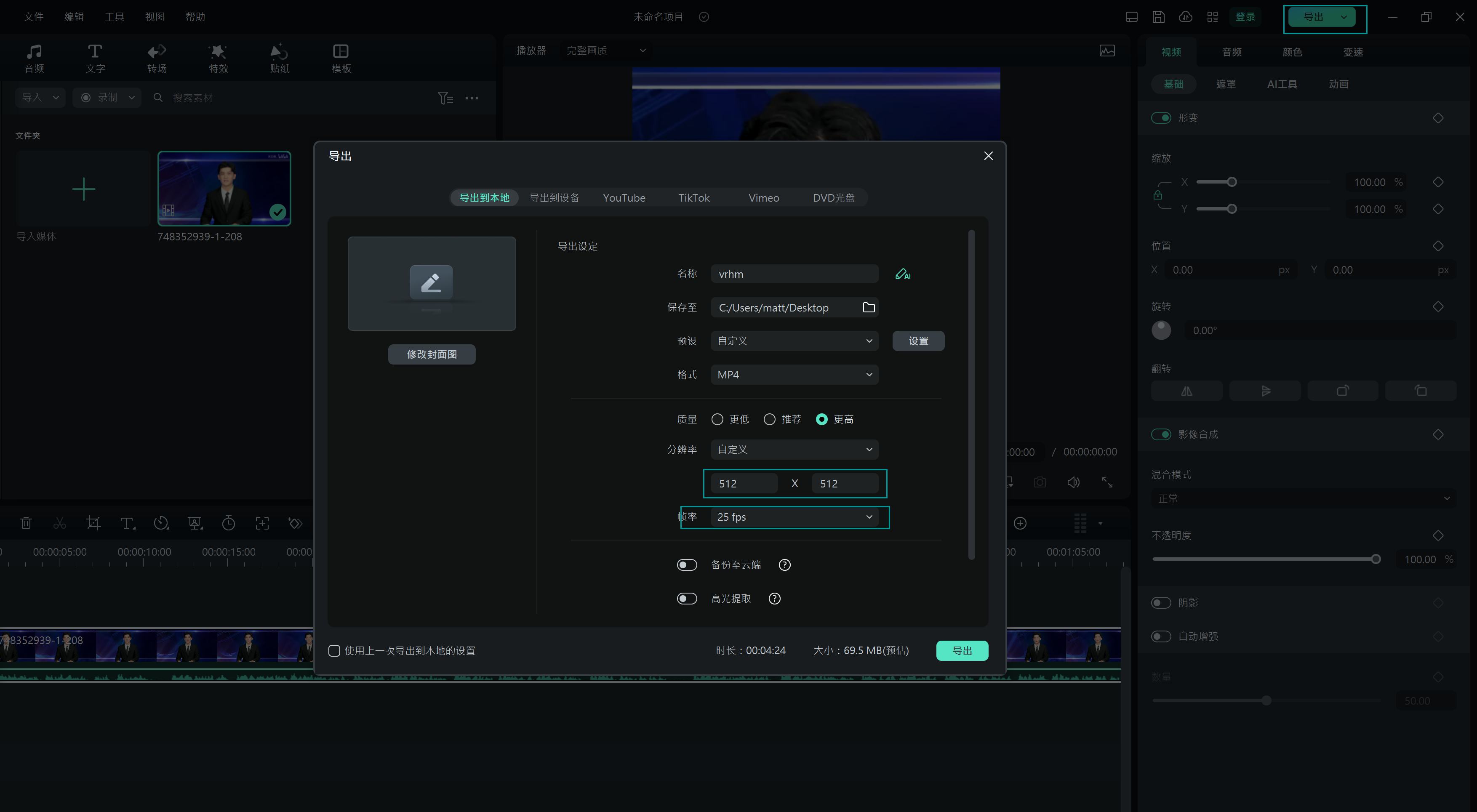

1.从网上上下载或者自己拍摄一段不大于5分钟的视频,视频人像单一,面对镜头,背景尽量简单,这是方便等下进行抠人像与分割人脸用的。我这里从网上下载了一段5分钟左右的视频,然后视频编辑软件,只切取一部分上半身和头部的画面。按1比1切取。这里的剪切尺寸不做要求,只是1比1就可以了。

2.把视频剪切项目参数设置成1比1,分辨率设成512*512。

3.数据长宽按512*512,25fps,mp4格式导出视频。

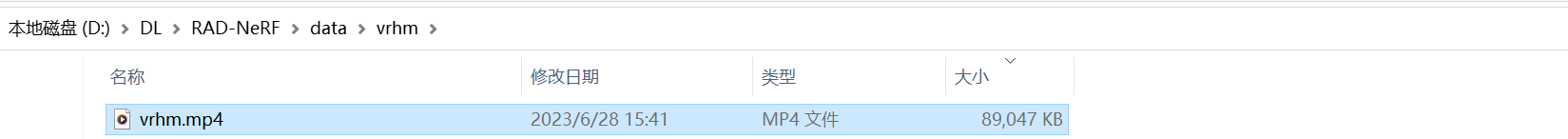

4.把导出的数据放到项目目录下,如下图所示, 我这里面在data下载创建了一个与文件名一样的目录,然后把刚刚剪切的视频放进目录里面。

视频数据如下:

三、人脸模型准备

1.人脸解析模型

模型是从AD-NeRF这个项目获取。下载AD-NeRF这个项目。

git clone https://github.com/YudongGuo/AD-NeRF.git把AD-NeRF项目下的data_utils/face_parsing/79999_iter.pth复制到RAD-NeRF/data_utils/face_parsing/79999_iter.pth 。

或者在RAD-NeRF目录直接下载,这种方式可能会出现下载不了。

wget https://github.com/YudongGuo/AD-NeRF/blob/master/data_util/face_parsing/79999_iter.pth?raw=true -O data_utils/face_parsing/79999_iter.pth2.basel脸部模型处理

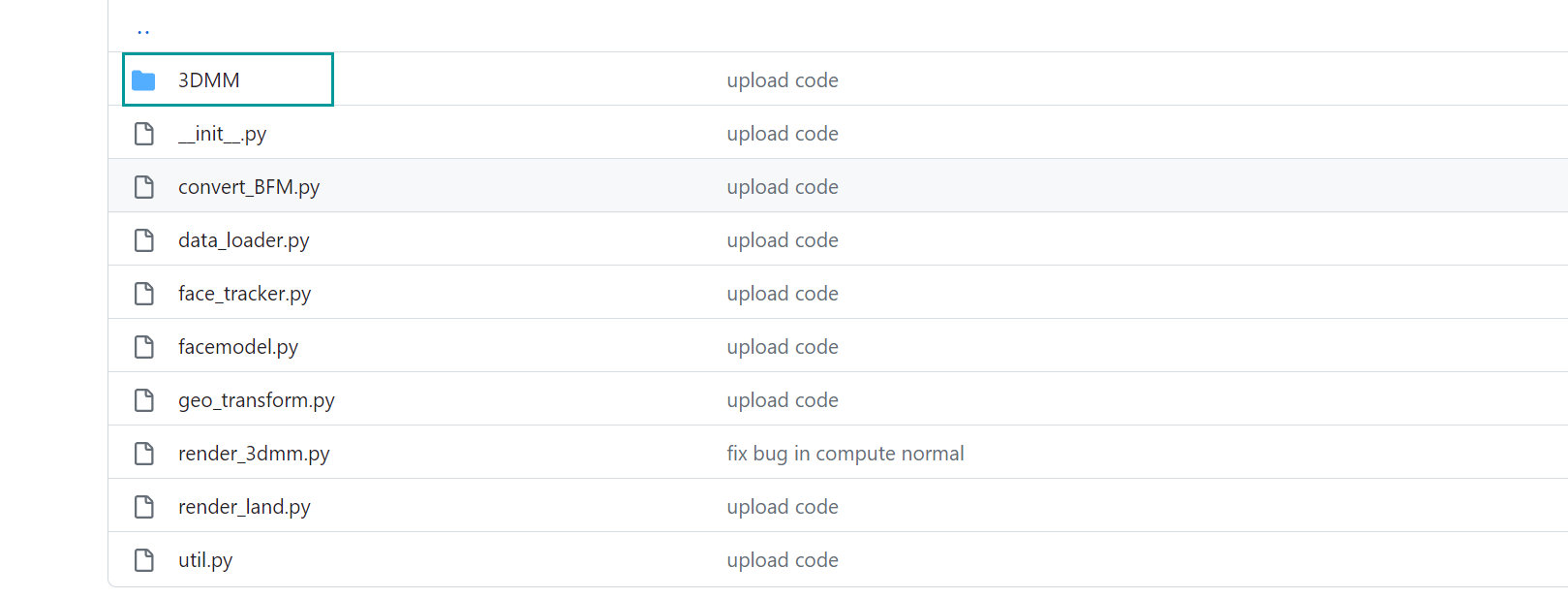

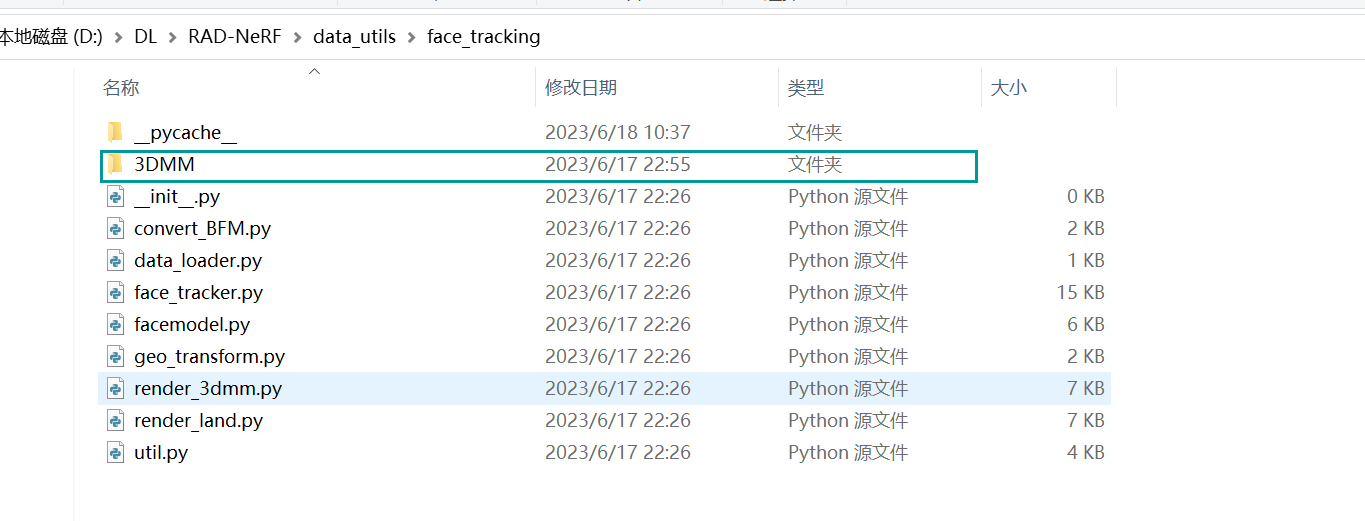

从AD-NeRF/data_utils/face_trackong项目里面的3DMM这个目录复制到Rad-NeRF/data_utils/face_trackong里面

移动到的位置:

或者是在Rad_NeRF项目下,直接下载,命令如下:

wget https://github.com/YudongGuo/AD-NeRF/blob/master/data_util/face_parsing/79999_iter.pth?raw=true -O data_utils/face_parsing/79999_iter.pth## prepare basel face model

# 1. download `01_MorphableModel.mat` from https://faces.dmi.unibas.ch/bfm/main.php?nav=1-2&id=downloads and put it under `data_utils/face_tracking/3DMM/`

# 2. download other necessary files from AD-NeRF's repository:

wget https://github.com/YudongGuo/AD-NeRF/blob/master/data_util/face_tracking/3DMM/exp_info.npy?raw=true -O data_utils/face_tracking/3DMM/exp_info.npy

wget https://github.com/YudongGuo/AD-NeRF/blob/master/data_util/face_tracking/3DMM/keys_info.npy?raw=true -O data_utils/face_tracking/3DMM/keys_info.npy

wget https://github.com/YudongGuo/AD-NeRF/blob/master/data_util/face_tracking/3DMM/sub_mesh.obj?raw=true -O data_utils/face_tracking/3DMM/sub_mesh.obj

wget https://github.com/YudongGuo/AD-NeRF/blob/master/data_util/face_tracking/3DMM/topology_info.npy?raw=true -O data_utils/face_tracking/3DMM/topology_info.npy从https://faces.dmi.unibas.ch/bfm/main.php?nav=1-2&id=downloads下载01_MorphableModel.mat放到Rad-NeRF/data_utils/face_trackong/3DMM里面。

运行

cd xx/xx/Rad-NeRF/data_utils/face_tracking

python convert_BFM.py

四、数据处理

1.处理数据

#按自己的数据与目录来运行对应的路径

python data_utils/process.py data/vrhm/vrhm.mp42.分步处理

python data_utils/process.py data/woman/woman.mp4 --task 1

分离音频

--task 2

aud_eo.npy

--task 3

把视频变成图像

--task 4

分割人像

--task 5

extracted background image

--task 6

extract torso and gt images for data/woman

--task 7

extracted face landmarks 生成lms文件

--task 7

perform face tracking

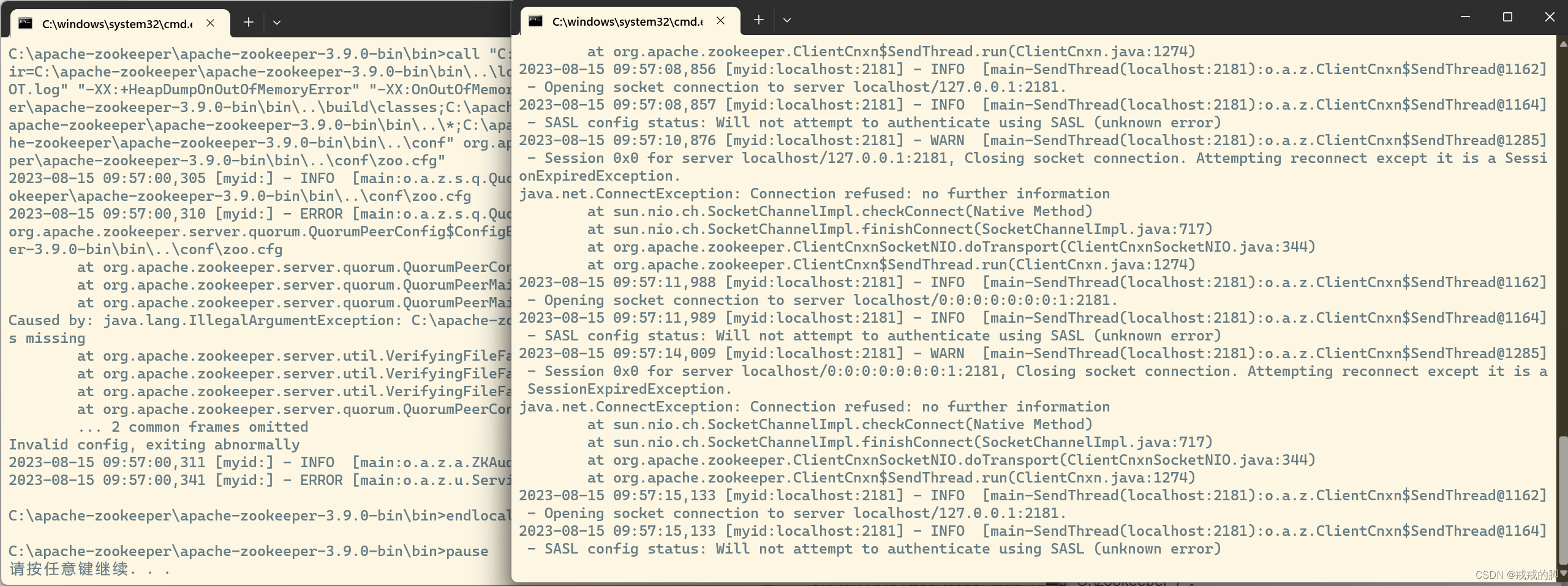

在这一步会下载四个模型,如果没有魔法上网,这四个模型下载很慢,或者直接下到一半就崩掉了。

也可以先把这个模型下载好之后放到指定的目录,在处理的过程中就不会再次下载,模型下载路径:

https://download.pytorch.org/models/resnet18-5c106cde.pth

https://www.adrianbulat.com/downloads/python-fan/s3fd-619a316812.pth

https://www.adrianbulat.com/downloads/python-fan/2DFAN4-cd938726ad.zip

https://download.pytorch.org/models/alexnet-owt-7be5be79.pth下载完成之后,把四个模型放到指定目录,如果目录则创建目录之后再放入。目录如下:

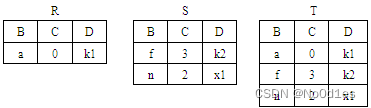

2.处理数据时,会在data所放的视频目录下生成以下几个目录:

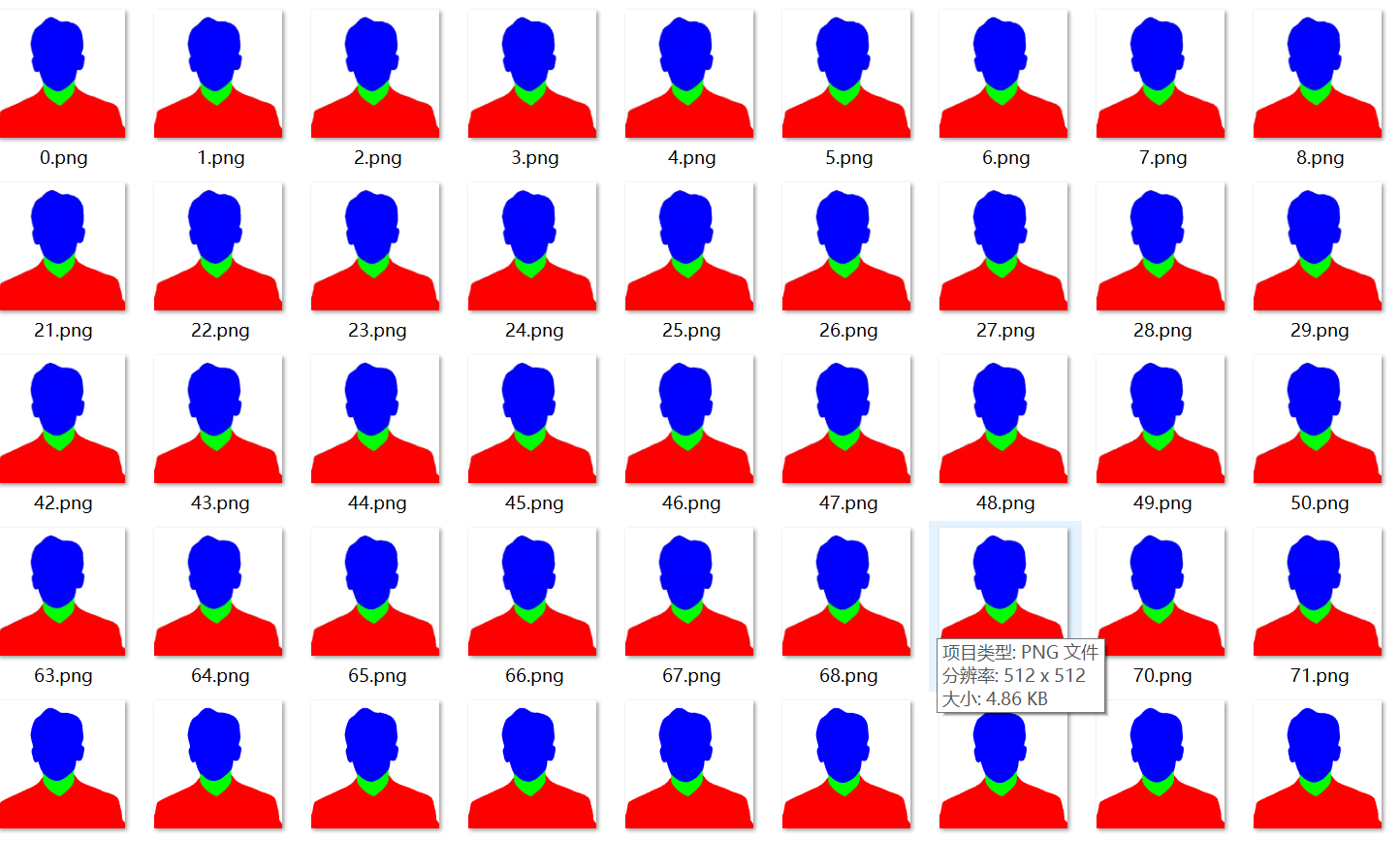

这里主要注意的是parsing这个目录,目录下的数据是分割后的数据。

这里要注意分割的质量,如果分割质量不好,就要借助别的工具先做人像分割,要不然训练出来的人物会出现透背景或者断开的现象。比如我之后处理的数据:

这里人的脖子下面有一块白的色块,训练完成之后,生成数字人才发现,这块区域是分割模型把它当背景了,合成视频时,这块是绿色的背景,直接废了。

在数据准备中,也尽量不要这种头发披下来的,很容易出现拼接错落的现象。

我在使用这个数据训练时,刚刚开始不清楚其中的关键因素,第一次训练效果如下,能感觉到头部与身体的连接并不和协。

五、模型训练

先看看训练代码的给的参数,训练时只要关注几个主要参数就可以了。

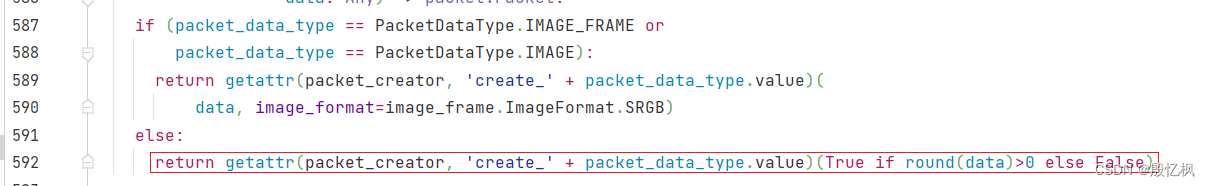

import torch

import argparsefrom nerf.provider import NeRFDataset

from nerf.gui import NeRFGUI

from nerf.utils import *# torch.autograd.set_detect_anomaly(True)if __name__ == '__main__':parser = argparse.ArgumentParser()parser.add_argument('path', type=str)parser.add_argument('-O', action='store_true', help="equals --fp16 --cuda_ray --exp_eye")parser.add_argument('--test', action='store_true', help="test mode (load model and test dataset)")parser.add_argument('--test_train', action='store_true', help="test mode (load model and train dataset)")parser.add_argument('--data_range', type=int, nargs='*', default=[0, -1], help="data range to use")parser.add_argument('--workspace', type=str, default='workspace')parser.add_argument('--seed', type=int, default=0)### training optionsparser.add_argument('--iters', type=int, default=200000, help="training iters")parser.add_argument('--lr', type=float, default=5e-3, help="initial learning rate")parser.add_argument('--lr_net', type=float, default=5e-4, help="initial learning rate")parser.add_argument('--ckpt', type=str, default='latest')parser.add_argument('--num_rays', type=int, default=4096 * 16, help="num rays sampled per image for each training step")parser.add_argument('--cuda_ray', action='store_true', help="use CUDA raymarching instead of pytorch")parser.add_argument('--max_steps', type=int, default=16, help="max num steps sampled per ray (only valid when using --cuda_ray)")parser.add_argument('--num_steps', type=int, default=16, help="num steps sampled per ray (only valid when NOT using --cuda_ray)")parser.add_argument('--upsample_steps', type=int, default=0, help="num steps up-sampled per ray (only valid when NOT using --cuda_ray)")parser.add_argument('--update_extra_interval', type=int, default=16, help="iter interval to update extra status (only valid when using --cuda_ray)")parser.add_argument('--max_ray_batch', type=int, default=4096, help="batch size of rays at inference to avoid OOM (only valid when NOT using --cuda_ray)")### network backbone optionsparser.add_argument('--fp16', action='store_true', help="use amp mixed precision training")parser.add_argument('--lambda_amb', type=float, default=0.1, help="lambda for ambient loss")parser.add_argument('--bg_img', type=str, default='', help="background image")parser.add_argument('--fbg', action='store_true', help="frame-wise bg")parser.add_argument('--exp_eye', action='store_true', help="explicitly control the eyes")parser.add_argument('--fix_eye', type=float, default=-1, help="fixed eye area, negative to disable, set to 0-0.3 for a reasonable eye")parser.add_argument('--smooth_eye', action='store_true', help="smooth the eye area sequence")parser.add_argument('--torso_shrink', type=float, default=0.8, help="shrink bg coords to allow more flexibility in deform")### dataset optionsparser.add_argument('--color_space', type=str, default='srgb', help="Color space, supports (linear, srgb)")parser.add_argument('--preload', type=int, default=0, help="0 means load data from disk on-the-fly, 1 means preload to CPU, 2 means GPU.")# (the default value is for the fox dataset)parser.add_argument('--bound', type=float, default=1, help="assume the scene is bounded in box[-bound, bound]^3, if > 1, will invoke adaptive ray marching.")parser.add_argument('--scale', type=float, default=4, help="scale camera location into box[-bound, bound]^3")parser.add_argument('--offset', type=float, nargs='*', default=[0, 0, 0], help="offset of camera location")parser.add_argument('--dt_gamma', type=float, default=1/256, help="dt_gamma (>=0) for adaptive ray marching. set to 0 to disable, >0 to accelerate rendering (but usually with worse quality)")parser.add_argument('--min_near', type=float, default=0.05, help="minimum near distance for camera")parser.add_argument('--density_thresh', type=float, default=10, help="threshold for density grid to be occupied (sigma)")parser.add_argument('--density_thresh_torso', type=float, default=0.01, help="threshold for density grid to be occupied (alpha)")parser.add_argument('--patch_size', type=int, default=1, help="[experimental] render patches in training, so as to apply LPIPS loss. 1 means disabled, use [64, 32, 16] to enable")parser.add_argument('--finetune_lips', action='store_true', help="use LPIPS and landmarks to fine tune lips region")parser.add_argument('--smooth_lips', action='store_true', help="smooth the enc_a in a exponential decay way...")parser.add_argument('--torso', action='store_true', help="fix head and train torso")parser.add_argument('--head_ckpt', type=str, default='', help="head model")### GUI optionsparser.add_argument('--gui', action='store_true', help="start a GUI")parser.add_argument('--W', type=int, default=450, help="GUI width")parser.add_argument('--H', type=int, default=450, help="GUI height")parser.add_argument('--radius', type=float, default=3.35, help="default GUI camera radius from center")parser.add_argument('--fovy', type=float, default=21.24, help="default GUI camera fovy")parser.add_argument('--max_spp', type=int, default=1, help="GUI rendering max sample per pixel")### elseparser.add_argument('--att', type=int, default=2, help="audio attention mode (0 = turn off, 1 = left-direction, 2 = bi-direction)")parser.add_argument('--aud', type=str, default='', help="audio source (empty will load the default, else should be a path to a npy file)")parser.add_argument('--emb', action='store_true', help="use audio class + embedding instead of logits")parser.add_argument('--ind_dim', type=int, default=4, help="individual code dim, 0 to turn off")parser.add_argument('--ind_num', type=int, default=10000, help="number of individual codes, should be larger than training dataset size")parser.add_argument('--ind_dim_torso', type=int, default=8, help="individual code dim, 0 to turn off")parser.add_argument('--amb_dim', type=int, default=2, help="ambient dimension")parser.add_argument('--part', action='store_true', help="use partial training data (1/10)")parser.add_argument('--part2', action='store_true', help="use partial training data (first 15s)")parser.add_argument('--train_camera', action='store_true', help="optimize camera pose")parser.add_argument('--smooth_path', action='store_true', help="brute-force smooth camera pose trajectory with a window size")parser.add_argument('--smooth_path_window', type=int, default=7, help="smoothing window size")# asrparser.add_argument('--asr', action='store_true', help="load asr for real-time app")parser.add_argument('--asr_wav', type=str, default='', help="load the wav and use as input")parser.add_argument('--asr_play', action='store_true', help="play out the audio")parser.add_argument('--asr_model', type=str, default='cpierse/wav2vec2-large-xlsr-53-esperanto')# parser.add_argument('--asr_model', type=str, default='facebook/wav2vec2-large-960h-lv60-self')parser.add_argument('--asr_save_feats', action='store_true')# audio FPSparser.add_argument('--fps', type=int, default=50)# sliding window left-middle-right length (unit: 20ms)parser.add_argument('-l', type=int, default=10)parser.add_argument('-m', type=int, default=50)parser.add_argument('-r', type=int, default=10)opt = parser.parse_args()if opt.O:opt.fp16 = Trueopt.exp_eye = Trueif opt.test:opt.smooth_path = Trueopt.smooth_eye = Trueopt.smooth_lips = Trueopt.cuda_ray = True# assert opt.cuda_ray, "Only support CUDA ray mode."if opt.patch_size > 1:# assert opt.patch_size > 16, "patch_size should > 16 to run LPIPS loss."assert opt.num_rays % (opt.patch_size ** 2) == 0, "patch_size ** 2 should be dividable by num_rays."if opt.finetune_lips:# do not update density grid in finetune stageopt.update_extra_interval = 1e9from nerf.network import NeRFNetworkprint(opt)seed_everything(opt.seed)device = torch.device('cuda' if torch.cuda.is_available() else 'cpu')model = NeRFNetwork(opt)# manually load state dict for headif opt.torso and opt.head_ckpt != '':model_dict = torch.load(opt.head_ckpt, map_location='cpu')['model']missing_keys, unexpected_keys = model.load_state_dict(model_dict, strict=False)if len(missing_keys) > 0:print(f"[WARN] missing keys: {missing_keys}")if len(unexpected_keys) > 0:print(f"[WARN] unexpected keys: {unexpected_keys}") # freeze these keysfor k, v in model.named_parameters():if k in model_dict:# print(f'[INFO] freeze {k}, {v.shape}')v.requires_grad = False# print(model)criterion = torch.nn.MSELoss(reduction='none')if opt.test:if opt.gui:metrics = [] # use no metric in GUI for faster initialization...else:# metrics = [PSNRMeter(), LPIPSMeter(device=device)]metrics = [PSNRMeter(), LPIPSMeter(device=device), LMDMeter(backend='fan')]trainer = Trainer('ngp', opt, model, device=device, workspace=opt.workspace, criterion=criterion, fp16=opt.fp16, metrics=metrics, use_checkpoint=opt.ckpt)if opt.test_train:test_set = NeRFDataset(opt, device=device, type='train')# a manual fix to test on the training datasettest_set.training = False test_set.num_rays = -1test_loader = test_set.dataloader()else:test_loader = NeRFDataset(opt, device=device, type='test').dataloader()# temp fix: for update_extra_statesmodel.aud_features = test_loader._data.audsmodel.eye_areas = test_loader._data.eye_areaif opt.gui:# we still need test_loader to provide audio features for testing.with NeRFGUI(opt, trainer, test_loader) as gui:gui.render()else:### evaluate metrics (slow)if test_loader.has_gt:trainer.evaluate(test_loader)### test and save video (fast) trainer.test(test_loader)else:optimizer = lambda model: torch.optim.Adam(model.get_params(opt.lr, opt.lr_net), betas=(0.9, 0.99), eps=1e-15)train_loader = NeRFDataset(opt, device=device, type='train').dataloader()assert len(train_loader) < opt.ind_num, f"[ERROR] dataset too many frames: {len(train_loader)}, please increase --ind_num to this number!"# temp fix: for update_extra_statesmodel.aud_features = train_loader._data.audsmodel.eye_area = train_loader._data.eye_areamodel.poses = train_loader._data.poses# decay to 0.1 * init_lr at last iter stepif opt.finetune_lips:scheduler = lambda optimizer: optim.lr_scheduler.LambdaLR(optimizer, lambda iter: 0.05 ** (iter / opt.iters))else:scheduler = lambda optimizer: optim.lr_scheduler.LambdaLR(optimizer, lambda iter: 0.1 ** (iter / opt.iters))metrics = [PSNRMeter(), LPIPSMeter(device=device)]eval_interval = max(1, int(5000 / len(train_loader)))trainer = Trainer('ngp', opt, model, device=device, workspace=opt.workspace, optimizer=optimizer, criterion=criterion, ema_decay=0.95, fp16=opt.fp16, lr_scheduler=scheduler, scheduler_update_every_step=True, metrics=metrics, use_checkpoint=opt.ckpt, eval_interval=eval_interval)if opt.gui:with NeRFGUI(opt, trainer, train_loader) as gui:gui.render()else:valid_loader = NeRFDataset(opt, device=device, type='val', downscale=1).dataloader()max_epoch = np.ceil(opt.iters / len(train_loader)).astype(np.int32)print(f'[INFO] max_epoch = {max_epoch}')trainer.train(train_loader, valid_loader, max_epoch)# free some memdel train_loader, valid_loadertorch.cuda.empty_cache()# also testtest_loader = NeRFDataset(opt, device=device, type='test').dataloader()if test_loader.has_gt:trainer.evaluate(test_loader) # blender has gt, so evaluate it.trainer.test(test_loader)参数:

--preload 0:从硬盘加载数据

--preload 1: 指定CPU,约70G内存

--preload 2: 指定GPU,约24G显存

1.头部训练

python main.py data/vrhm/ --workspace trial_vrhm/ -O --iters 2000002.唇部微调

python main.py data/vrhm/ --workspace trial_vrhm/ -O --iters 500000 --finetune_lips3.身体部分训练

python main.py data/vrhm/ --workspace trial_vrhm_torso/ -O --torso --head_ckpt <trial_ID>/checkpoints/npg_xxx.pth> --iters 200000 --preload 2

六、报错解决

报错No module named 'sklearn'

pip install -U scikit-learn