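Before the article begins: thank you for subscribing to the series, liking, commenting, and bookmarking! Follow IT贫道 for high-quality blog content!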

Contents

1. Java API for Reading and Writing ClickHouse

2. Spark API for Writing to ClickHouse

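1. Java API for Reading and Writing ClickHouse

This section shows how to read data from ClickHouse, and write data to it, from Java through the JDBC driver.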

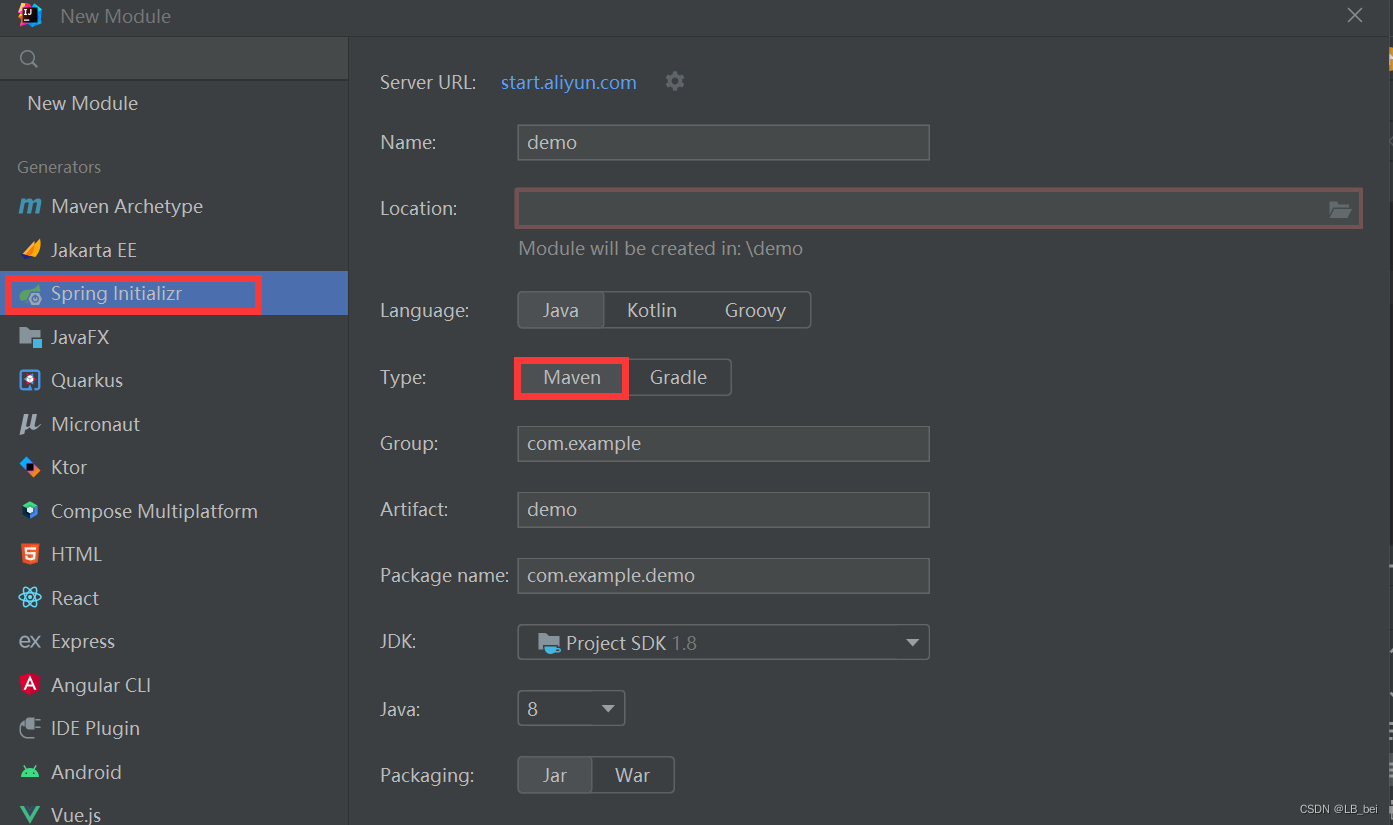

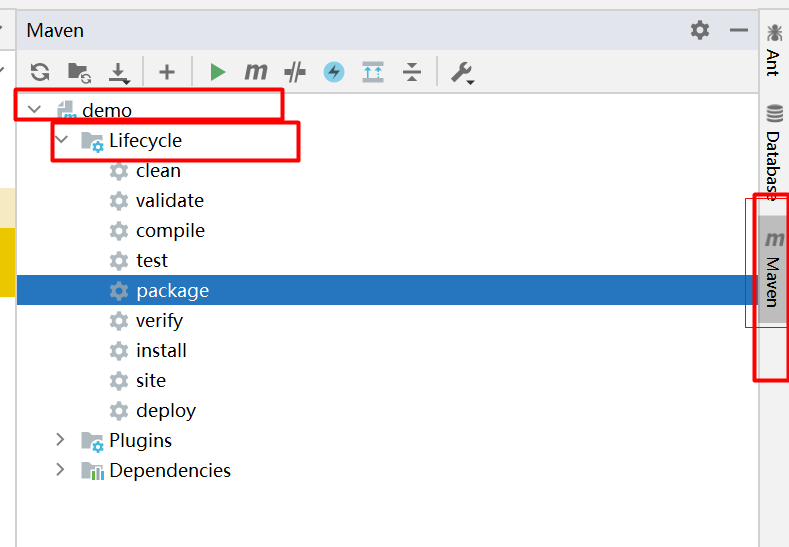

1) First, add the Maven dependency

<!-- Driver package required to connect to ClickHouse -->

<dependency>
    <groupId>ru.yandex.clickhouse</groupId>
    <artifactId>clickhouse-jdbc</artifactId>
    <version>0.2.4</version>
</dependency>

2) Read data from a single-node ClickHouse table in Java

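// Imports needed by the Java snippets in this section
import java.sql.ResultSet;
import ru.yandex.clickhouse.BalancedClickhouseDataSource;
import ru.yandex.clickhouse.ClickHouseConnection;
import ru.yandex.clickhouse.ClickHouseStatement;
import ru.yandex.clickhouse.settings.ClickHouseProperties;

ClickHouseProperties props = new ClickHouseProperties();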

props.setUser("default");

props.setPassword("");

BalancedClickhouseDataSource dataSource = new BalancedClickhouseDataSource("jdbc:clickhouse://node1:8123/default", props);

ClickHouseConnection conn = dataSource.getConnection();

ClickHouseStatement statement = conn.createStatement();

ResultSet rs = statement.executeQuery("select id,name,age from test");

while (rs.next()) {
    int id = rs.getInt("id");
    String name = rs.getString("name");
    int age = rs.getInt("age");
    System.out.println("id = " + id + ",name = " + name + ",age = " + age);
}

3) Read data from a ClickHouse cluster table in Java
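For a cluster, the data source takes several host:port pairs, separated by commas, in a single JDBC URL, as the snippet in this step does. As a rough sketch, that URL could be assembled from a host list with a small helper. `ClickHouseUrlBuilder` and `buildUrl` are hypothetical names used only for illustration; they are not part of clickhouse-jdbc, and the block relies only on the Java standard library.

```java
import java.util.Arrays;
import java.util.List;
import java.util.stream.Collectors;

class ClickHouseUrlBuilder {

    // Hypothetical helper (not part of clickhouse-jdbc): joins the hosts into
    // the comma-separated URL form used by the cluster example in this step.
    static String buildUrl(List<String> hosts, int port, String database) {
        if (hosts == null || hosts.isEmpty()) {
            throw new IllegalArgumentException("at least one host is required");
        }
        String hostList = hosts.stream()
                .map(host -> host + ":" + port)
                .collect(Collectors.joining(","));
        return "jdbc:clickhouse://" + hostList + "/" + database;
    }

    public static void main(String[] args) {
        // Host names, port and database are the ones used in this article.
        String url = buildUrl(Arrays.asList("node1", "node2", "node3"), 8123, "default");
        System.out.println(url);
    }
}
```

The resulting string can be passed as the first argument to `BalancedClickhouseDataSource` in place of a hand-written URL.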

ClickHouseProperties props = new ClickHouseProperties();

props.setUser("default");

props.setPassword("");

BalancedClickhouseDataSource dataSource = new BalancedClickhouseDataSource("jdbc:clickhouse://node1:8123,node2:8123,node3:8123/default", props);

ClickHouseConnection conn = dataSource.getConnection();

ClickHouseStatement statement = conn.createStatement();

ResultSet rs = statement.executeQuery("select id,name from t_cluster");

while (rs.next()) {
    int id = rs.getInt("id");
    String name = rs.getString("name");
    System.out.println("id = " + id + ",name = " + name);
}

4) Write data to a ClickHouse table in Java
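The write example in this step notes that several rows can be concatenated into one INSERT statement. A minimal sketch of that concatenation follows. `BatchInsertBuilder`, `Person`, `quote` and `buildInsert` are hypothetical names, the sample rows are made up, and the table name is assumed to come from trusted code rather than user input. The escaping covers only backslashes and single quotes.

```java
import java.util.Arrays;
import java.util.List;
import java.util.stream.Collectors;

class BatchInsertBuilder {

    // One row of the `test` table used in this article (id, name, age).
    static final class Person {
        final int id;
        final String name;
        final int age;

        Person(int id, String name, int age) {
            this.id = id;
            this.name = name;
            this.age = age;
        }
    }

    // Escapes backslashes first, then single quotes, so a value cannot
    // terminate the SQL string literal early.
    static String quote(String value) {
        return "'" + value.replace("\\", "\\\\").replace("'", "\\'") + "'";
    }

    // Builds a single multi-row INSERT: insert into t values (..),(..)
    static String buildInsert(String table, List<Person> rows) {
        if (rows.isEmpty()) {
            throw new IllegalArgumentException("no rows to insert");
        }
        String values = rows.stream()
                .map(p -> "(" + p.id + "," + quote(p.name) + "," + p.age + ")")
                .collect(Collectors.joining(","));
        return "insert into " + table + " values " + values;
    }

    public static void main(String[] args) {
        List<Person> rows = Arrays.asList(
                new Person(101, "Tom", 25),
                new Person(102, "O'Neil", 31));
        System.out.println(buildInsert("test", rows));
    }
}
```

The generated string can be handed to `statement.execute(...)` in the same way as the single-row statement. For untrusted input, a standard JDBC `PreparedStatement` with `addBatch()` and `executeBatch()` is usually the safer choice where the driver supports it.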

# API usage:

ClickHouseProperties props = new ClickHouseProperties();

props.setUser("default");

props.setPassword("");

BalancedClickhouseDataSource dataSource = new BalancedClickhouseDataSource("jdbc:clickhouse://node1:8123/default", props);

ClickHouseConnection conn = dataSource.getConnection();

ClickHouseStatement statement = conn.createStatement();

statement.execute("insert into test values (100,'王五',30)"); // Multiple rows can be concatenated into one statement for a batch insert

# Query the data in the test table of the default database:

node1 :) select * from test;

┌──id─┬─name─┬─age─┐
│ 100 │ 王五 │  30 │
└─────┴──────┴─────┘
┌─id─┬─name─┬─age─┐
│  1 │ 张三 │  18 │
│  2 │ 李四 │  19 │
└────┴──────┴─────┘

2. Spark API for Writing to ClickHouse

Spark Core can write to ClickHouse directly. The example below uses Spark SQL to store its result in a ClickHouse table; that result table must be created in ClickHouse beforehand.
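Because the result table has to exist before Spark writes to it, here is one possible way to render its DDL in Java. The column types (`Int64`, chosen to match the `LongType` that Spark infers for JSON integers), the `MergeTree` engine and the `ORDER BY id` key are all assumptions, since the article does not show the schema of `test`. `ResultTableDdl` and `createTableSql` are hypothetical names.

```java
import java.util.LinkedHashMap;
import java.util.Map;
import java.util.stream.Collectors;

class ResultTableDdl {

    // Renders a CREATE TABLE statement from an ordered column -> type map.
    // Engine and sort key are assumptions, not taken from the article.
    static String createTableSql(String table, Map<String, String> columns, String orderBy) {
        String cols = columns.entrySet().stream()
                .map(e -> e.getKey() + " " + e.getValue())
                .collect(Collectors.joining(", "));
        return "CREATE TABLE IF NOT EXISTS " + table + " (" + cols + ")"
                + " ENGINE = MergeTree() ORDER BY " + orderBy;
    }

    public static void main(String[] args) {
        // LinkedHashMap keeps the columns in insertion order.
        Map<String, String> columns = new LinkedHashMap<>();
        columns.put("id", "Int64");     // assumed type
        columns.put("name", "String");  // assumed type
        columns.put("age", "Int64");    // assumed type
        System.out.println(createTableSql("default.test", columns, "id"));
    }
}
```

The printed statement could be run once in `clickhouse-client`, or through the `statement.execute(...)` call from the Java section, before the Spark job starts.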

1) Add the dependencies

<!-- Driver package required to connect to ClickHouse -->

<dependency>
    <groupId>ru.yandex.clickhouse</groupId>
    <artifactId>clickhouse-jdbc</artifactId>
    <version>0.2.4</version>
    <!-- Exclude packages that conflict with Spark -->
    <exclusions>
        <exclusion>
            <groupId>com.fasterxml.jackson.core</groupId>
            <artifactId>jackson-databind</artifactId>
        </exclusion>
        <exclusion>
            <groupId>net.jpountz.lz4</groupId>
            <artifactId>lz4</artifactId>
        </exclusion>
    </exclusions>
</dependency>

<!-- Spark Core -->

<dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-core_2.11</artifactId>
    <version>2.3.1</version>
</dependency>

<!-- Spark SQL -->

<dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-sql_2.11</artifactId>
    <version>2.3.1</version>
</dependency>

<!-- Spark SQL on Hive -->

<dependency>
    <groupId>org.apache.spark</groupId>
    <artifactId>spark-hive_2.11</artifactId>
    <version>2.3.1</version>
</dependency>

2) Write the code:

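// Imports needed by the Scala snippet in this step
import java.util.Properties
import org.apache.spark.sql.{DataFrame, Dataset, SaveMode, SparkSession}
import org.apache.spark.sql.execution.datasources.jdbc.JDBCOptions

val session: SparkSession = SparkSession.builder().master("local").appName("test").getOrCreate()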

val jsonList = List[String](
    "{\"id\":1,\"name\":\"张三\",\"age\":18}",
    "{\"id\":2,\"name\":\"李四\",\"age\":19}",
    "{\"id\":3,\"name\":\"王五\",\"age\":20}"
)

// Convert the jsonList data into a Dataset

import session.implicits._

val ds: Dataset[String] = jsonList.toDS()

val df: DataFrame = session.read.json(ds)

df.show()

// Write the result to ClickHouse

val url = "jdbc:clickhouse://node1:8123/default"

val table = "test"

val properties = new Properties()

properties.put("driver", "ru.yandex.clickhouse.ClickHouseDriver")

properties.put("user", "default")

properties.put("password", "")

properties.put("socket_timeout", "300000")

df.write
    .mode(SaveMode.Append)
    .option(JDBCOptions.JDBC_BATCH_INSERT_SIZE, 100000)
    .jdbc(url, table, properties)
