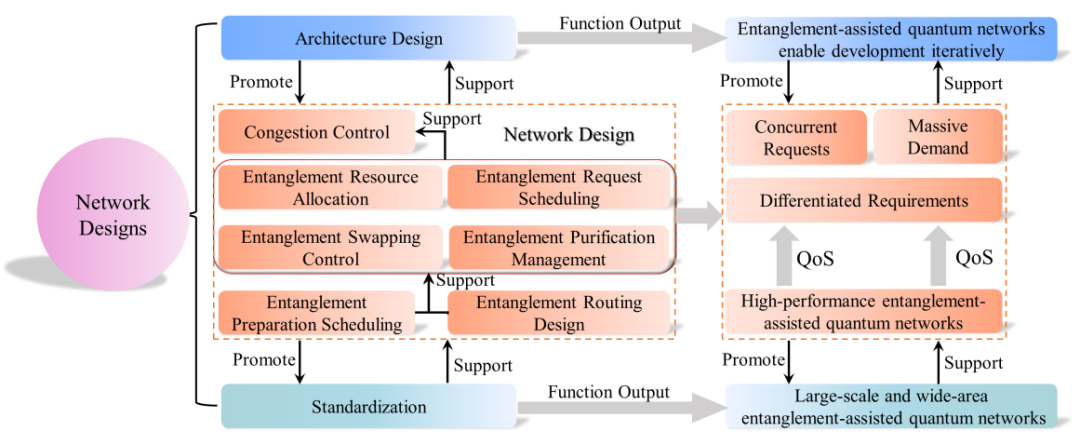

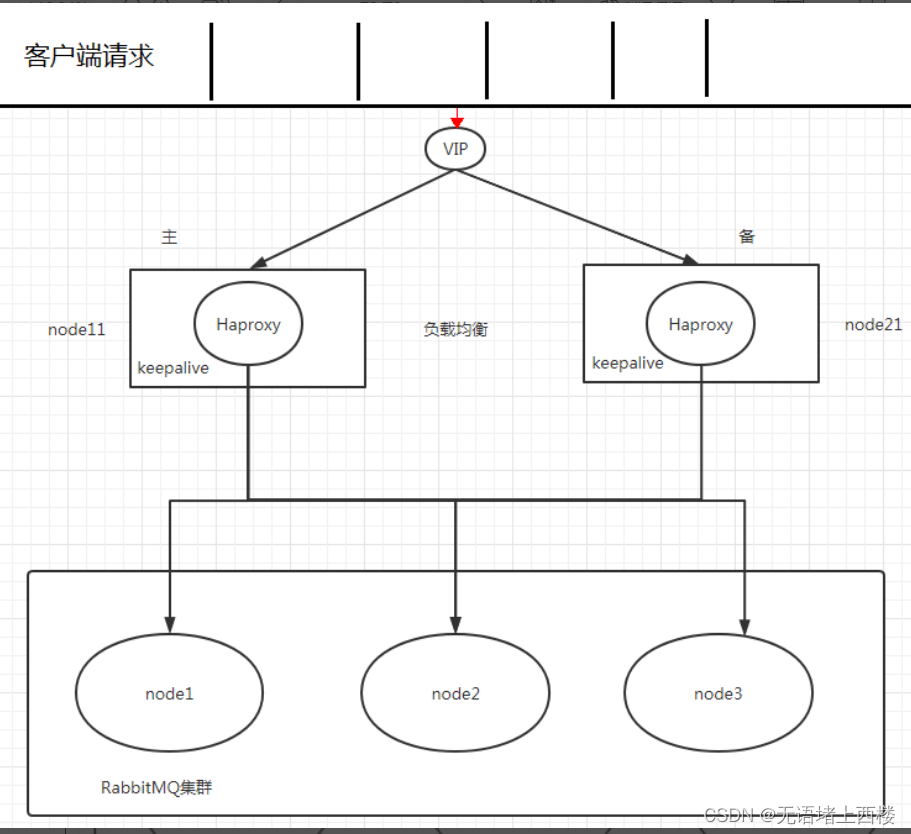

Load balancing with HAProxy

Architecture diagram

Install dependencies

yum -y install libnl libnl-devel

yum install gcc gcc-c++ openssl openssl-devel -y

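yum update glib* -y

Download HAProxy

Download the HAProxy source tarball from the "Index of /repo/pkgs/haproxy" page (fedoraproject.org) and extract it:

tar -zxvf haproxy-2.0.3.tar.gz

Change into the extracted directory and run the following build commands: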

make TARGET=linux-glibc PREFIX=/usr/app/haproxy-2.0.3

make install PREFIX=/usr/app/haproxy-2.0.3

Configure the environment variables: open the profile with vim /etc/profile and add the following:

export HAPROXY_HOME=/usr/app/haproxy-2.0.3

export PATH=$PATH:$HAPROXY_HOME/sbin

Apply the configuration

source /etc/profile

Edit haproxy.cfg

Edit haproxy.cfg on both node1 and node2 with vim /etc/haproxy/haproxy.cfg

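# Global configuration
global
    # Log output: all logs are recorded on the local machine and emitted through local0
    log 127.0.0.1 local0 info
    # Maximum number of connections
    maxconn 4096
    # Change the working directory
    chroot /usr/app/haproxy-2.0.3
    # Run the haproxy process under the specified UID
    uid 99
    # Run the haproxy process under the specified GID
    gid 99
    # Run as a daemon
    daemon
    # Location of the pid file for this process
    pidfile /usr/app/haproxy-2.0.3/haproxy.pid

# Default configuration
defaults
    # Apply the global log configuration
    log global
    # Use layer 4 proxy mode; for layer 7 proxying use "http"
    mode tcp
    # Log format
    option tcplog
    # Do not log health-check connections
    option dontlognull
    # Treat the service as unavailable after 3 failed attempts
    retries 3
    # Maximum number of connections available per process
    maxconn 2000
    # Connection timeout
    timeout connect 5s
    # Client timeout
    timeout client 120s
    # Server timeout
    timeout server 120s

# Binding configuration
listen rabbitmq_admin
    bind :15673
    mode tcp
    balance roundrobin
    # rabbit-node1 to rabbit-node3 are the hostnames of the RabbitMQ nodes
    server node1 rabbit-node1:15672
    server node2 rabbit-node2:15672
    server node3 rabbit-node3:15672

# Binding configuration
listen rabbitmq_cluster
    bind :5673
    # Use TCP mode
    mode tcp
    # Load-balance with weighted round-robin
    balance roundrobin
    # RabbitMQ cluster nodes; rabbit-node1 to rabbit-node3 are the hostnames
    server node1 rabbit-node1:5672 check inter 5000 rise 2 fall 3 weight 1
    server node2 rabbit-node2:5672 check inter 5000 rise 2 fall 3 weight 1
    server node3 rabbit-node3:5672 check inter 5000 rise 2 fall 3 weight 1

# Monitoring page
listen monitor
    bind :8100
    mode http
    option httplog
    stats enable
    stats uri /stats
    stats refresh 5s

Start HAProxy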
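Before starting the proxy, it can help to confirm that the edited file actually parses. The sketch below wraps HAProxy's check mode (`haproxy -c -f <file>`). The helper name `check_haproxy_cfg` and its exit codes 2 and 127 are assumptions made for illustration, not part of HAProxy.

```shell
#!/bin/sh
# check_haproxy_cfg FILE: validate an HAProxy config without starting the proxy.
# The function name and the exit codes 2 / 127 are illustrative choices.
check_haproxy_cfg() {
    cfg="$1"
    if [ ! -r "$cfg" ]; then
        echo "config not readable: $cfg" >&2
        return 2
    fi
    if ! command -v haproxy >/dev/null 2>&1; then
        echo "haproxy not found on PATH" >&2
        return 127
    fi
    # -c runs in check mode and exits; -f names the configuration file
    haproxy -c -f "$cfg"
}
```

Running `check_haproxy_cfg /etc/haproxy/haproxy.cfg` on each node should surface syntax errors before they turn into a failed start.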

Start HAProxy on both nodes:

haproxy -f /etc/haproxy/haproxy.cfg

ps -ef | grep haproxy

Open the port

firewall-cmd --add-port=8100/tcp --permanent

# Reload the firewall

firewall-cmd --reload
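The command above opens only the stats port. If clients on other machines also need the `:5673` and `:15673` listeners from haproxy.cfg, those ports would presumably need opening as well. A minimal sketch, assuming firewalld is the firewall in use; `run`, `open_tcp_ports` and the `DRY_RUN` switch are hypothetical names, and by default the script only prints the commands it would execute.

```shell
#!/bin/sh
# run CMD...: print the command (DRY_RUN=1, the default) or execute it (DRY_RUN=0).
run() {
    if [ "${DRY_RUN:-1}" = "1" ]; then
        echo "$*"
    else
        "$@"
    fi
}

# open_tcp_ports PORT...: open each TCP port permanently, then reload once.
open_tcp_ports() {
    for port in "$@"; do
        run firewall-cmd --add-port="${port}/tcp" --permanent
    done
    run firewall-cmd --reload
}

# Ports taken from the listen sections of haproxy.cfg.
open_tcp_ports 5673 15673
```

Setting `DRY_RUN=0` and running as root would apply the changes instead of printing them.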

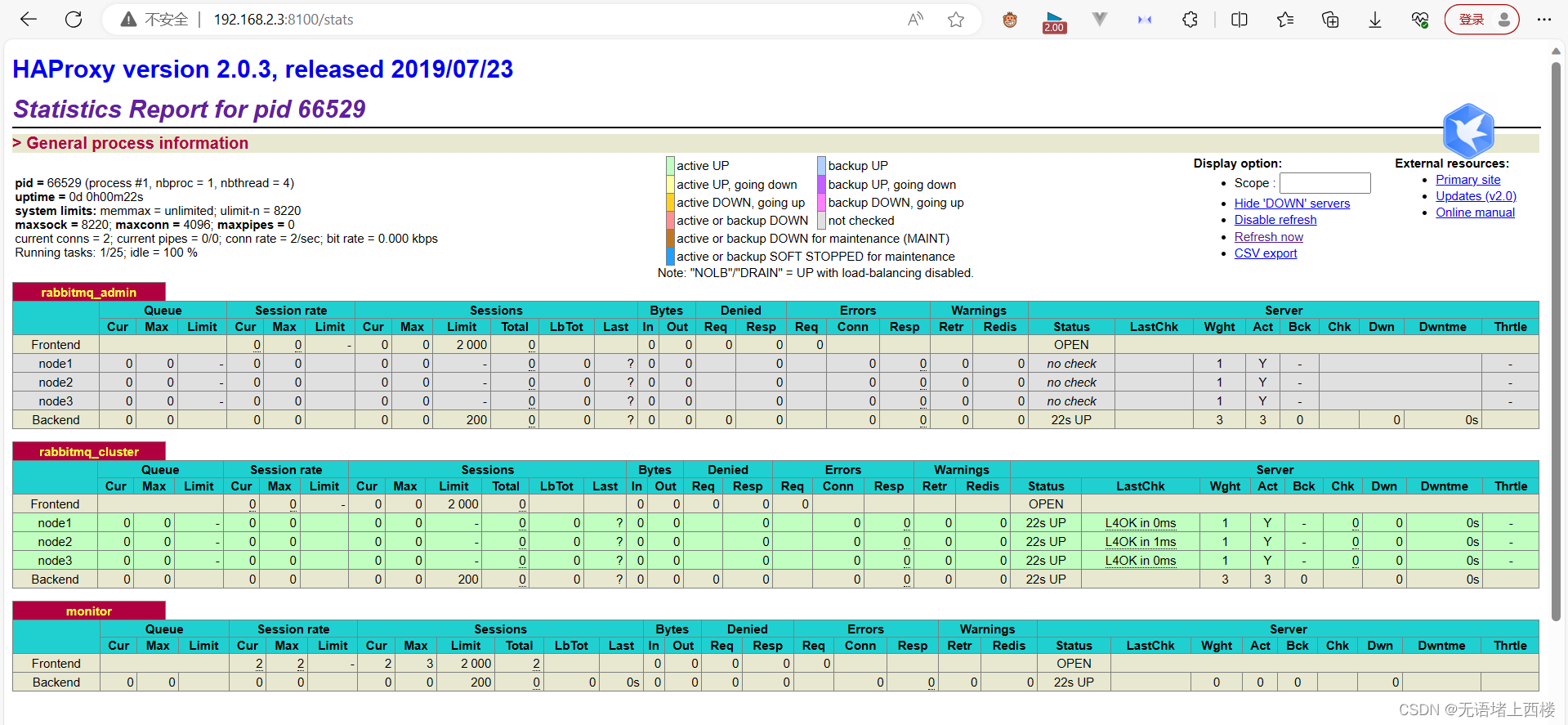

Access the stats page

http://192.168.2.3:8100/stats

RabbitMQ can now be reached through either 192.168.2.3:5673 or 192.168.2.130:5673.

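True high availability with Keepalived

What if HAProxy itself fails? We can set up Keepalived to handle failover between the HAProxy nodes.

Download Keepalived

Fetch the tarball with wget:

wget https://www.keepalived.org/software/keepalived-2.2.2.tar.gz

Extract and build

# Extract

tar -xvf keepalived-2.2.2.tar.gz

# Build and install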

mkdir keepalived-2.2.2/build

cd keepalived-2.2.2/build

../configure --prefix=/usr/local/keepalived-2.2.2

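make && make install

Environment setup: link the configuration file

# Create the directory

mkdir /etc/keepalived

# Back up

cp /usr/local/keepalived-2.2.2/etc/keepalived/keepalived.conf /usr/local/keepalived-2.2.2/etc/keepalived/keepalived.conf_bak

# Link

ln -s /usr/local/keepalived-2.2.2/etc/keepalived/keepalived.conf /etc/keepalived/

Copy all Keepalived scripts to the /etc/init.d/ directory

# Script from the source directory (the path is relative to the directory that contains keepalived-2.2.2)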

cp keepalived-2.2.2/keepalived/etc/init.d/keepalived /etc/init.d/

# Files from the installation directory

cp /usr/local/keepalived-2.2.2/etc/sysconfig/keepalived /etc/sysconfig/

cp /usr/local/keepalived-2.2.2/sbin/keepalived /usr/sbin/

Enable start on boot

chmod +x /etc/init.d/keepalived

chkconfig --add keepalived

systemctl enable keepalived.service

Configure Keepalived

On node1, the master node, edit the keepalived.conf configuration file with vi /etc/keepalived/keepalived.conf

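global_defs {
    # Router id; must differ between the master and backup nodes
    router_id node1
}

# Custom monitoring script
vrrp_script chk_haproxy {
    # Script location
    script "/etc/keepalived/haproxy_check.sh"
    # Interval between script runs, in seconds
    interval 5
    weight 10
}

vrrp_instance VI_1 {
    # Keepalived role: MASTER for the master node, BACKUP for the backup node
    state MASTER
    # Network interface to monitor; use ifconfig to find it
    interface ens33
    # Virtual router id; must be the same on the master and backup nodes
    virtual_router_id 1
    # Priority; the master node must be set higher than the backup node
    priority 100
    # Advertisement interval between master and backup, in seconds
    advert_int 1
    # Authentication type and password
    authentication {
        auth_type PASS
        auth_pass 123456
    }
    # Invoke the custom monitoring script defined above
    track_script {
        chk_haproxy
    }
    virtual_ipaddress {
        # Virtual IP address; more than one can be set
        192.168.2.200
    }
}

node2 is configured in much the same way as node1. On node2, the backup node, edit the file with vi /etc/keepalived/keepalived.conf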

global_defs {
    # Router id; must differ between the master and backup nodes
    router_id node2
}

vrrp_script chk_haproxy {
    script "/etc/keepalived/haproxy_check.sh"
    interval 5
    weight 10
}

vrrp_instance VI_1 {
    # BACKUP marks this as the backup node
    state BACKUP
    interface ens33
    virtual_router_id 1
    # Priority; the backup node must be lower than the master node
    priority 50
    advert_int 1
    authentication {
        auth_type PASS
        auth_pass 123456
    }
    track_script {
        chk_haproxy
    }
    virtual_ipaddress {
        192.168.2.200
    }
}

Write the HAProxy status check script
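It is worth working through how `weight 10` interacts with the two priorities before relying on it for failover. As far as I understand Keepalived's tracking rules, a positive weight is added to the base priority while the tracked script exits 0, and a negative weight is applied only while the script fails. The helper below only does that arithmetic; `effective_priority` is a made-up name for illustration and is not a Keepalived command.

```shell
#!/bin/sh
# effective_priority BASE WEIGHT SCRIPT_OK
# SCRIPT_OK is 1 when the tracked script exits 0, otherwise 0.
effective_priority() {
    base="$1"; weight="$2"; script_ok="$3"
    if [ "$weight" -gt 0 ] && [ "$script_ok" -eq 1 ]; then
        echo $((base + weight))      # positive weight: bonus while healthy
    elif [ "$weight" -lt 0 ] && [ "$script_ok" -eq 0 ]; then
        echo $((base + weight))      # negative weight: penalty while failing
    else
        echo "$base"
    fi
}

# Priorities and weight from the node1 / node2 configurations.
echo "node1 healthy: $(effective_priority 100 10 1)"
echo "node1 failing: $(effective_priority 100 10 0)"
echo "node2 healthy: $(effective_priority 50 10 1)"
```

With these numbers a failing node1 (100) would still outrank a healthy node2 (60), so the weight alone would not move the virtual IP. That is presumably why the check script stops keepalived outright when haproxy cannot be restarted.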

# Create the directory for the check script's logs

mkdir -p /usr/local/keepalived-2.2.2/log

# Create the check script

vim /etc/keepalived/haproxy_check.sh

The script is as follows:

#!/bin/bash
LOGFILE="/usr/local/keepalived-2.2.2/log/haproxy-check.log"
echo "[$(date)]:check_haproxy status" >> "$LOGFILE"
# Check whether haproxy is already running
HAProxyStatusA=$(ps -C haproxy --no-header | wc -l)
if [ "$HAProxyStatusA" -eq 0 ]; then
    echo "[$(date)]: starting the haproxy service......" >> "$LOGFILE"
    # Not running, so start it
    /usr/app/haproxy-2.0.3/sbin/haproxy -f /etc/haproxy/haproxy.cfg >> "$LOGFILE" 2>&1
fi
# Sleep 5 seconds so that haproxy can finish starting
sleep 5
# If haproxy is still not running, stop keepalived on this machine so that the VIP fails over to the other haproxy node
HAProxyStatusB=$(ps -C haproxy --no-header | wc -l)
if [ "$HAProxyStatusB" -eq 0 ]; then
    echo "[$(date)]: haproxy failed to start and is still not running after sleeping 5 seconds; stopping keepalived so that the VIP fails over to the other haproxy node" >> "$LOGFILE"
    systemctl stop keepalived
fi

Grant execute permission

chmod +x /etc/keepalived/haproxy_check.sh

Start the service

Start the Keepalived service on both node1 and node2 with the following command:

systemctl start keepalived

Check the virtual IP

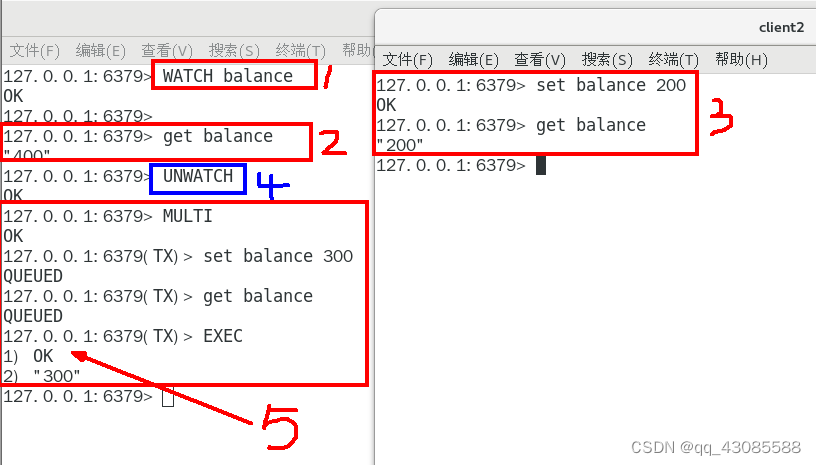

Use the ip a command to see where the virtual IP is bound:

ip a
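To check for the virtual IP from a script rather than by eye, the output of `ip` can be filtered. A small sketch, assuming the VIP 192.168.2.200 and the interface ens33 from the configuration above; `holds_vip` is a hypothetical helper that reads `ip -o -4 addr show` output on standard input.

```shell
#!/bin/sh
# holds_vip VIP: succeed if the address listing on stdin contains VIP.
# Matching "inet VIP/" as a fixed string avoids partial matches, for
# example 192.168.2.20 matching 192.168.2.200.
holds_vip() {
    grep -qF "inet $1/"
}

# Typical use on a node (requires the iproute2 "ip" tool):
#   ip -o -4 addr show dev ens33 | holds_vip 192.168.2.200 && echo "VIP is here"
```

Run on both nodes, this should succeed only on whichever node currently holds the virtual IP.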

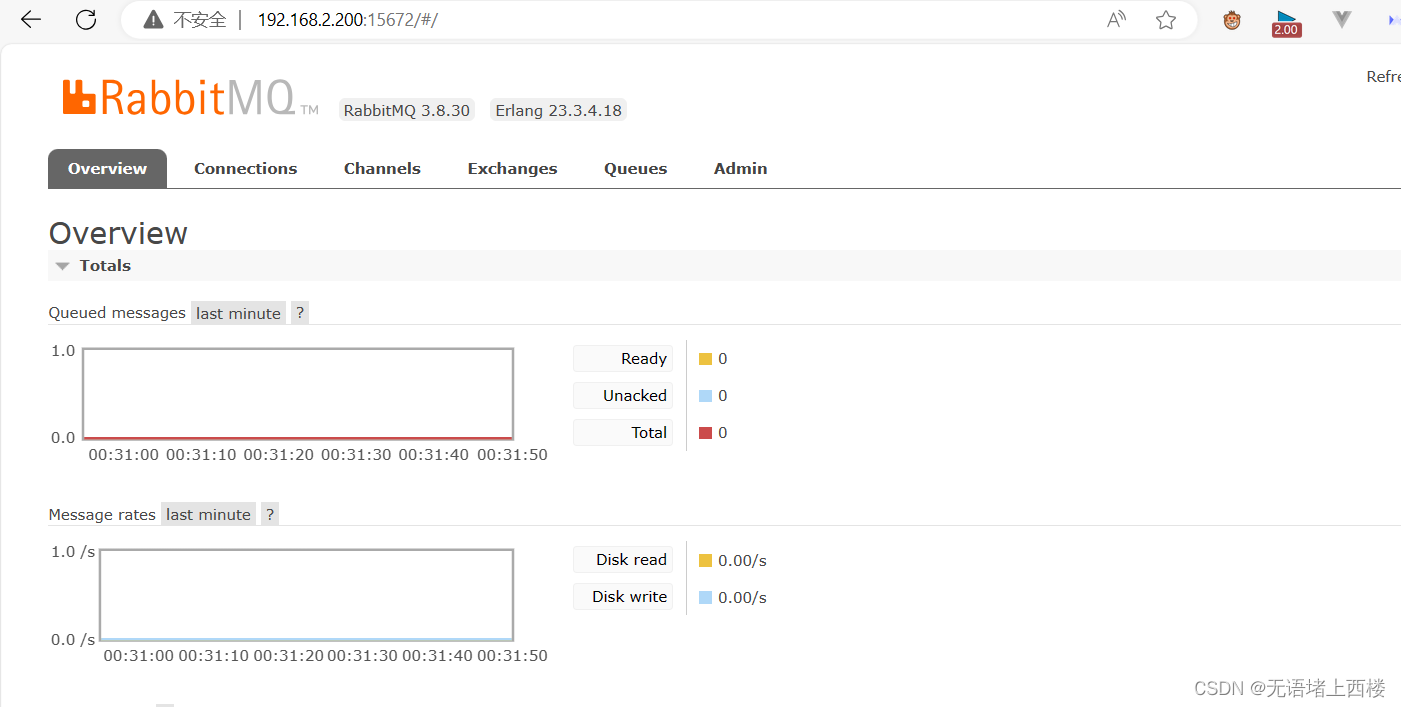

Access RabbitMQ

RabbitMQ can now be reached through the virtual IP at 192.168.2.200:5673.

Now stop Keepalived on node1:

systemctl stop keepalived

The service remains available through the backup server.
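To watch the failover happen, one option is to probe the virtual IP in a loop from a third machine while Keepalived is stopped on node1. A rough sketch, assuming bash and the coreutils `timeout` command are available where it runs; `probe` and `watch_endpoint` are hypothetical helper names, and port 5673 is the `rabbitmq_cluster` bind port from haproxy.cfg.

```shell
#!/bin/sh
# probe HOST PORT: succeed if a TCP connection opens within 2 seconds.
# Relies on bash's /dev/tcp pseudo-device, so bash must be installed.
probe() {
    timeout 2 bash -c 'exec 3<>"/dev/tcp/$0/$1"' "$1" "$2" 2>/dev/null
}

# watch_endpoint HOST PORT COUNT: probe COUNT times, one second apart,
# printing a timestamped "up" or "down" line for each attempt.
watch_endpoint() {
    i=0
    while [ "$i" -lt "$3" ]; do
        if probe "$1" "$2"; then state=up; else state=down; fi
        echo "$(date +%T) $1:$2 $state"
        i=$((i + 1))
        if [ "$i" -lt "$3" ]; then sleep 1; fi
    done
}

# Example: watch_endpoint 192.168.2.200 5673 30
```

If failover works as intended, the output should show at most a brief run of "down" lines around the moment node1 gives up the virtual IP.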