Minimum hardware requirement: a GPU with at least 13 GB of video memory.

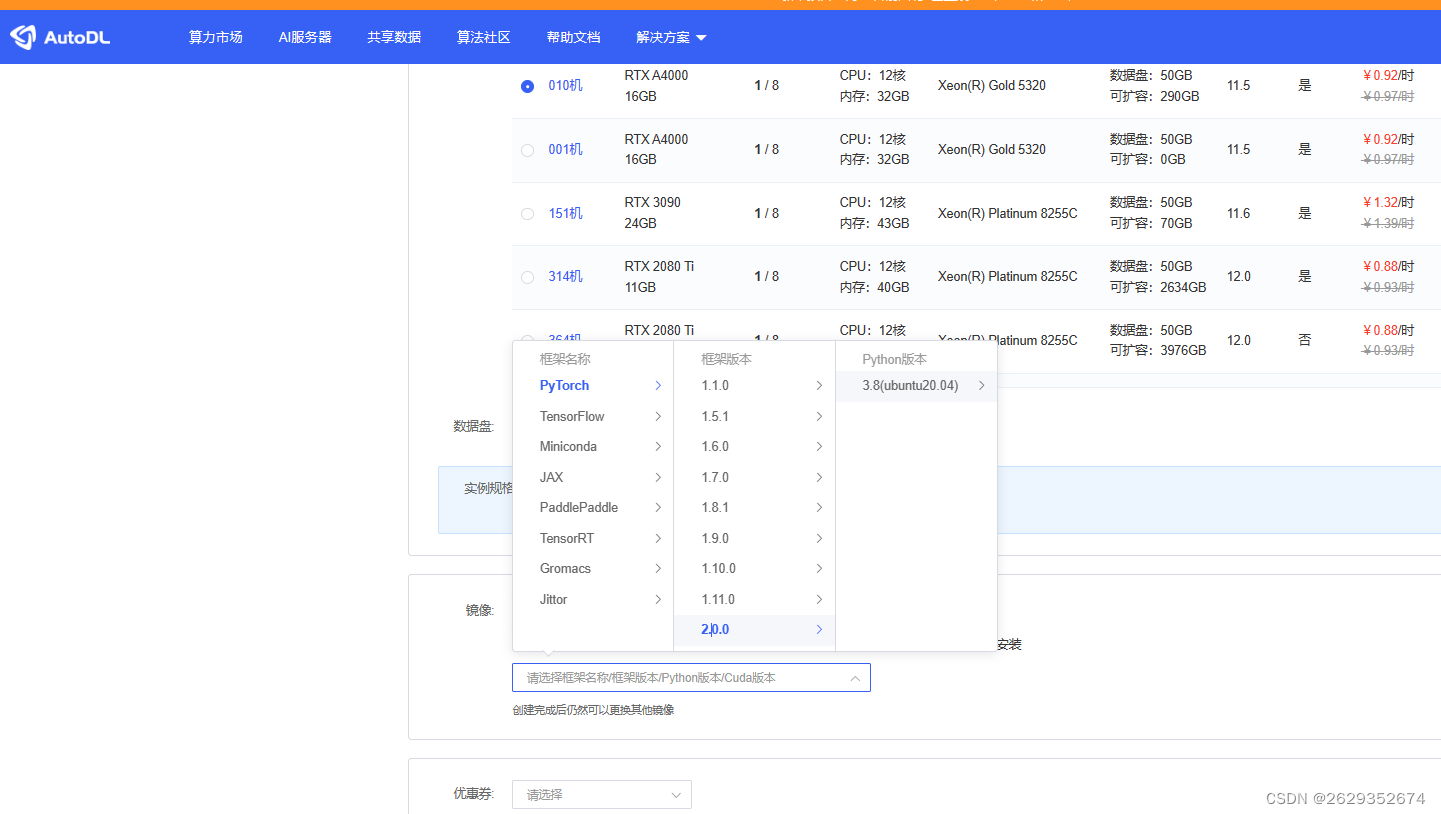
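The memory requirement above can be checked before downloading anything. The following is a minimal sketch, assuming PyTorch is installed on the instance. The helper names `has_enough_vram` and `gpu_total_bytes` are hypothetical, and reading "13G" as 13 GiB is an assumption.

```python
REQUIRED_GIB = 13  # from the text above; reading "13G" as 13 GiB is an assumption


def has_enough_vram(total_bytes, required_gib=REQUIRED_GIB):
    """Return True if total_bytes is at least required_gib GiB."""
    return total_bytes >= required_gib * 1024 ** 3


def gpu_total_bytes(device=0):
    """Return the total memory of a CUDA device in bytes, or None if unavailable."""
    try:
        import torch  # imported lazily so the helper above works without PyTorch
    except ImportError:
        return None
    if not torch.cuda.is_available():
        return None
    return torch.cuda.get_device_properties(device).total_memory
```

A typical check would be `total = gpu_total_bytes()` followed by `has_enough_vram(total)` when `total` is not `None`.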

Basic environment:

1. In the autodl-tmp directory, clone the code repository:

git clone https://github.com/THUDM/ChatGLM2-6B.git

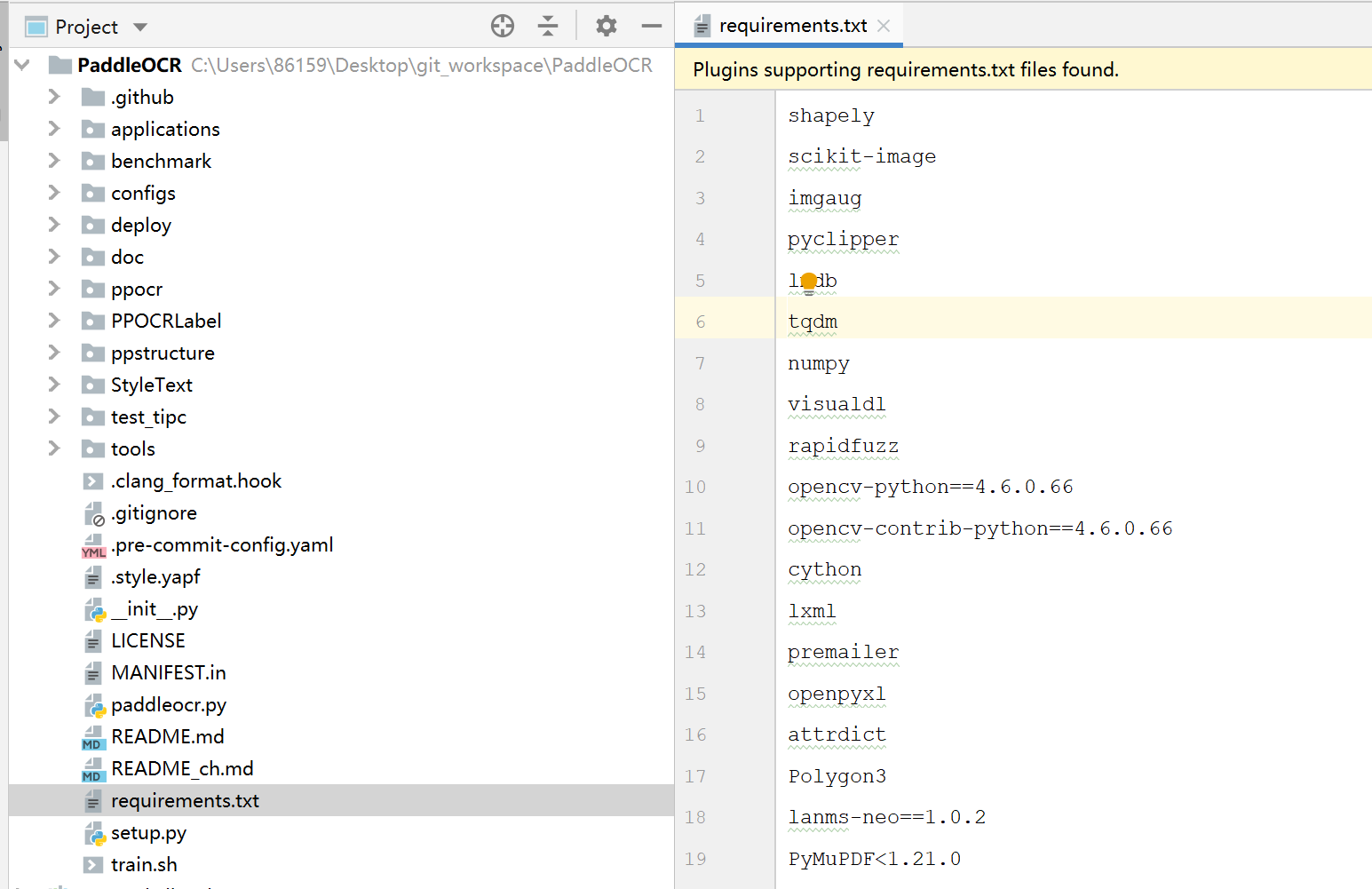

Then, from inside the cloned ChatGLM2-6B directory, install the dependencies with pip:

pip install -r requirements.txt

Use Alibaba Cloud's (Aliyun) pip mirror for the installation.
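The note above does not show how the mirror is selected. One possible way is to pass an index URL to pip, sketched below. The URL `https://mirrors.aliyun.com/pypi/simple/` is the commonly used Aliyun PyPI index and is an assumption here, since the original does not state it. The helper names are hypothetical.

```python
import subprocess
import sys

# Assumed mirror URL; the original text only says "use Alibaba's pip".
ALIYUN_INDEX = "https://mirrors.aliyun.com/pypi/simple/"


def pip_install_command(requirements="requirements.txt", index_url=ALIYUN_INDEX):
    """Build the pip command that installs a requirements file from a given index."""
    return [sys.executable, "-m", "pip", "install", "-r", requirements, "-i", index_url]


def install(requirements="requirements.txt"):
    """Run the install; raises CalledProcessError if pip exits with an error."""
    subprocess.run(pip_install_command(requirements), check=True)
```

Calling `install()` from inside the ChatGLM2-6B directory would be equivalent to running `pip install -r requirements.txt -i <mirror URL>` in the shell.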

Before running git clone, first enable AutoDL's academic acceleration from the command line; otherwise the download is very slow.

source /etc/network_turbo

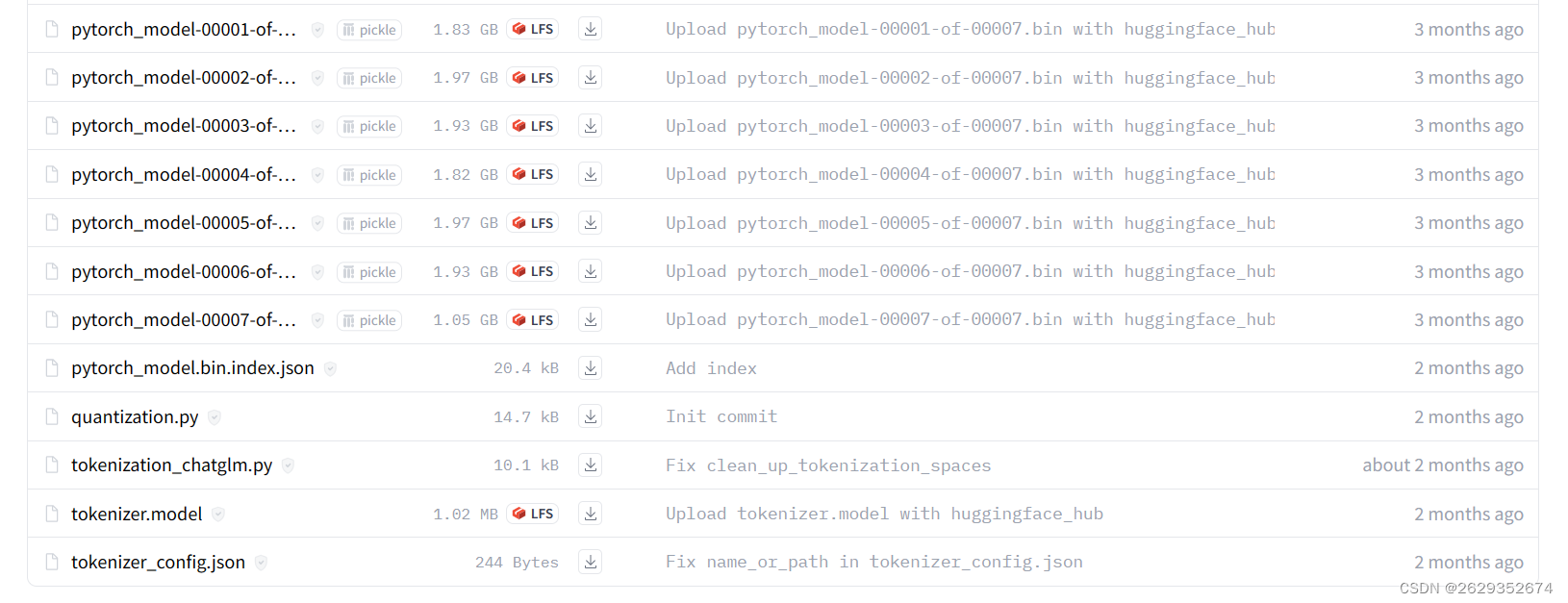

Next, download the model from Hugging Face into the same autodl-tmp directory:

git clone https://huggingface.co/THUDM/chatglm2-6b/

Model page: https://huggingface.co/THUDM/chatglm2-6b/

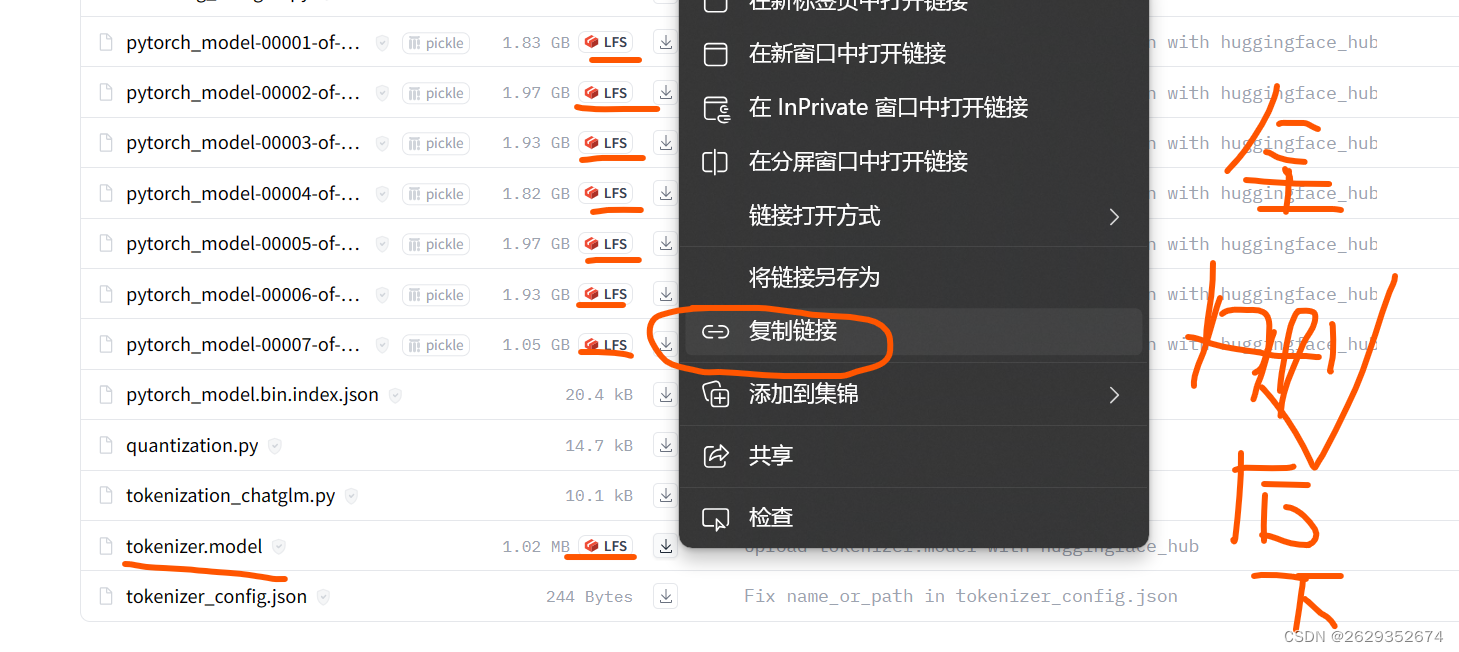

The tokenizer file and the seven weight shards can also be downloaded individually with wget:

wget https://huggingface.co/THUDM/chatglm2-6b/resolve/main/tokenizer.model

wget https://huggingface.co/THUDM/chatglm2-6b/resolve/main/pytorch_model-00007-of-00007.bin

wget https://huggingface.co/THUDM/chatglm2-6b/resolve/main/pytorch_model-00006-of-00007.bin

wget https://huggingface.co/THUDM/chatglm2-6b/resolve/main/pytorch_model-00005-of-00007.bin

wget https://huggingface.co/THUDM/chatglm2-6b/resolve/main/pytorch_model-00004-of-00007.bin

wget https://huggingface.co/THUDM/chatglm2-6b/resolve/main/pytorch_model-00003-of-00007.bin

wget https://huggingface.co/THUDM/chatglm2-6b/resolve/main/pytorch_model-00002-of-00007.bin

wget https://huggingface.co/THUDM/chatglm2-6b/resolve/main/pytorch_model-00001-of-00007.bin
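With eight separate downloads it is easy to miss one. The sketch below lists the files named in the wget commands above and reports which are absent from a local directory. The function names are hypothetical. It only checks that each file exists, not that it was downloaded completely.

```python
from pathlib import Path
from typing import List

BASE_URL = "https://huggingface.co/THUDM/chatglm2-6b/resolve/main/"
NUM_SHARDS = 7  # the weights are split into seven shards, as in the commands above


def expected_files(num_shards: int = NUM_SHARDS) -> List[str]:
    """Return the tokenizer file name followed by the weight shard names."""
    shards = [
        f"pytorch_model-{i:05d}-of-{num_shards:05d}.bin"
        for i in range(1, num_shards + 1)
    ]
    return ["tokenizer.model"] + shards


def missing_files(model_dir: str) -> List[str]:
    """Return the expected files that are not present in model_dir."""
    root = Path(model_dir)
    return [name for name in expected_files() if not (root / name).is_file()]


def download_urls(names: List[str]) -> List[str]:
    """Map file names to their download URLs."""
    return [BASE_URL + name for name in names]
```

For example, `download_urls(missing_files("/root/autodl-tmp/chatglm2-6b"))` gives the URLs that still need to be fetched with wget.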

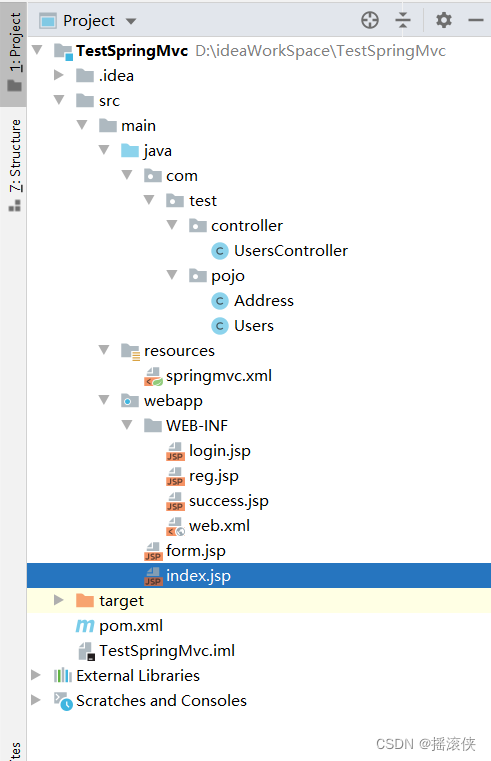

- In autodl-tmp/ChatGLM2-6B/web_demo.py, change the launch line and the model path:

demo.queue().launch(server_port=6006,share=True, inbrowser=True)

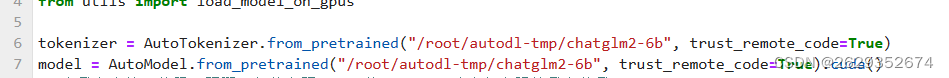

tokenizer = AutoTokenizer.from_pretrained("/root/autodl-tmp/chatglm2-6b", trust_remote_code=True)

model = AutoModel.from_pretrained("/root/autodl-tmp/chatglm2-6b", trust_remote_code=True).cuda()
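Before starting the web demo, the same two loading calls can be tried from a plain Python session to confirm that the local path works. This is a minimal sketch, assuming the `transformers` package from requirements.txt is installed and a CUDA GPU is available. The wrapper name `load_chatglm2` is hypothetical.

```python
from pathlib import Path

MODEL_DIR = "/root/autodl-tmp/chatglm2-6b"  # local model path used in web_demo.py above


def load_chatglm2(model_dir=MODEL_DIR):
    """Load the tokenizer and model from a local directory, as web_demo.py does."""
    if not Path(model_dir).is_dir():
        raise FileNotFoundError(f"model directory not found: {model_dir}")
    # Imported lazily so the path check above works without transformers installed.
    from transformers import AutoModel, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_dir, trust_remote_code=True)
    model = AutoModel.from_pretrained(model_dir, trust_remote_code=True).cuda()
    return tokenizer, model.eval()
```

After `tokenizer, model = load_chatglm2()`, the ChatGLM2-6B README tests the model with `response, history = model.chat(tokenizer, "你好", history=[])`.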