Original post on my blog: https://blog.gcc.ac.cn/post/2023/%E8%BF%B7%E4%BD%A0ceph%E9%9B%86%E7%BE%A4%E6%90%AD%E5%BB%BA/

Environment

Machine list:

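| IP | Role | Notes |
|---|---|---|
| 10.0.0.15 | osd | ARMv7, 512 MB RAM, 32 GB storage, 100 Mbps Ethernet |
| 10.0.0.16 | client | ARM64, 2 GB RAM, used to benchmark the cluster, Gigabit Ethernet |
| 10.0.0.17 | osd | AMD64, 2 GB RAM, 32 GB storage, 100 Mbps Ethernet |
| 10.0.0.18 | mon,mgr,mds,osd | AMD64, 2 GB RAM, 32 GB storage, 100 Mbps Ethernet |
| 10.0.0.19 | osd | ARM64, 1 GB RAM, 32 GB storage, 100 Mbps Ethernet |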

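This cluster mixes x86 and ARM, and the ARM boards are further split into ARMv7 and ARM64. On purpose or by accident? On purpose: I deliberately used CPUs of three different architectures.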

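Note: the older release (14.2.21) cannot mix architectures in one cluster; if you do, the OSDs have trouble communicating. When I later tried 17.2.5, this was fixed, but the newer release has bugs of its own: RAW USED is reported inaccurately (possibly because ARMv7 is 32-bit and can only address 4 GB), and OSDs cannot be removed.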

The OS is Ubuntu or Debian. Reference documentation: https://docs.ceph.com/en/quincy/install/manual-deployment

The official recommendation is at least 4 GB of RAM. My smallest board has only 512 MB, so this is purely for fun.

Deployment steps

[Optional] Add the package source /etc/apt/sources.list.d/ceph.list:

deb https://mirrors.tuna.tsinghua.edu.cn/ceph/debian-quincy/ bullseye main

[Optional] Add the public key:

apt-key adv --keyserver keyserver.ubuntu.com --recv-keys E84AC2C0460F3994
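Newer Debian and Ubuntu releases deprecate `apt-key`. Below is a minimal sketch of the same two optional steps using a dedicated keyring and `signed-by`. It assumes the key is fetched from `https://download.ceph.com/keys/release.asc` and stored at `/usr/share/keyrings/ceph.gpg`; both are my assumptions, not part of the original setup. `fetch_ceph_key` and `write_ceph_source` are hypothetical helper names:

```bash
# Keyring location is an assumption; override with CEPH_KEYRING=...
CEPH_KEYRING=${CEPH_KEYRING:-/usr/share/keyrings/ceph.gpg}

# Fetch the Ceph release key and store it dearmored (binary) for apt.
# Compare its fingerprint with E84AC2C0460F3994 before trusting it.
fetch_ceph_key() {
  curl -fsSL https://download.ceph.com/keys/release.asc | gpg --dearmor > "$CEPH_KEYRING"
}

# Write an apt source entry that trusts only that keyring.
# $1: output file, $2: Debian codename (the post uses bullseye)
write_ceph_source() {
  printf 'deb [signed-by=%s] https://mirrors.tuna.tsinghua.edu.cn/ceph/debian-quincy/ %s main\n' \
    "$CEPH_KEYRING" "$2" > "$1"
}
```

Usage, as root: `fetch_ceph_key && write_ceph_source /etc/apt/sources.list.d/ceph.list bullseye && apt-get update`.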

Install the packages on all Ceph nodes and on the client:

apt-get update

apt-get install -y ceph ceph-mds ceph-volume

Create the configuration file /etc/ceph/ceph.conf on all Ceph nodes and on the client:

[global]

fsid = 4a0137cc-1081-41e4-bacd-5ecddc63ae2d

mon initial members = intel-compute-stick

mon host = 10.0.0.17

public network = 10.0.0.0/24

cluster network = 10.0.0.0/24

osd journal size = 64

osd pool default size = 2

osd pool default min size = 1

osd pool default pg num = 64

osd pool default pgp num = 64

osd crush chooseleaf type = 1
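Every cluster needs its own `fsid`, and every node must see the same file. Below is a minimal sketch that writes the configuration above with a freshly generated UUID. It assumes a Linux kernel that exposes `/proc/sys/kernel/random/uuid` (`uuidgen` works too). `new_fsid` and `write_ceph_conf` are hypothetical helpers:

```bash
# Print a random UUID from the kernel's generator (Linux only).
new_fsid() {
  cat /proc/sys/kernel/random/uuid
}

# $1: output path, $2: fsid, $3: mon name, $4: mon IP, $5: network CIDR
write_ceph_conf() {
  local out=$1 fsid=$2 mon_name=$3 mon_ip=$4 net=$5
  cat > "$out" <<EOF
[global]
fsid = $fsid
mon initial members = $mon_name
mon host = $mon_ip
public network = $net
cluster network = $net
osd journal size = 64
osd pool default size = 2
osd pool default min size = 1
osd pool default pg num = 64
osd pool default pgp num = 64
osd crush chooseleaf type = 1
EOF
}
```

Generate the file once, e.g. `write_ceph_conf /etc/ceph/ceph.conf "$(new_fsid)" intel-compute-stick 10.0.0.17 10.0.0.0/24`. Then copy that file to the other nodes instead of regenerating it on each, because every call to `new_fsid` yields a different fsid.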

Run the initialization commands on the mon node:

ceph-authtool --create-keyring /tmp/ceph.mon.keyring --gen-key -n mon. --cap mon 'allow *'

ceph-authtool --create-keyring /etc/ceph/ceph.client.admin.keyring --gen-key -n client.admin --cap mon 'allow *' --cap osd 'allow *' --cap mds 'allow *' --cap mgr 'allow *'

ceph-authtool --create-keyring /var/lib/ceph/bootstrap-osd/ceph.keyring --gen-key -n client.bootstrap-osd --cap mon 'profile bootstrap-osd' --cap mgr 'allow r'

ceph-authtool /tmp/ceph.mon.keyring --import-keyring /etc/ceph/ceph.client.admin.keyring

ceph-authtool /tmp/ceph.mon.keyring --import-keyring /var/lib/ceph/bootstrap-osd/ceph.keyring

chown ceph:ceph /tmp/ceph.mon.keyring

monmaptool --create --add intel-compute-stick 10.0.0.17 --fsid 4a0137cc-1081-41e4-bacd-5ecddc63ae2d /tmp/monmap

sudo -u ceph mkdir /var/lib/ceph/mon/ceph-intel-compute-stick

sudo -u ceph ceph-mon --mkfs -i intel-compute-stick --monmap /tmp/monmap --keyring /tmp/ceph.mon.keyring

systemctl start ceph-mon@intel-compute-stick
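The monitor bootstrap above can be wrapped in one parameterized function. Below is a sketch that assumes the same paths as above. `bootstrap_mon` and the `DRY_RUN` switch (print the commands instead of executing them) are my own additions. It also uses `systemctl enable --now` instead of `start`, so the monitor comes back after a reboot:

```bash
# Print commands instead of running them when DRY_RUN is set.
run() { if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi; }

# $1: mon name (must match "mon initial members"), $2: mon IP, $3: fsid from ceph.conf
bootstrap_mon() {
  local name=$1 ip=$2 fsid=$3
  run ceph-authtool --create-keyring /tmp/ceph.mon.keyring --gen-key -n mon. --cap mon 'allow *'
  run ceph-authtool --create-keyring /etc/ceph/ceph.client.admin.keyring --gen-key -n client.admin \
    --cap mon 'allow *' --cap osd 'allow *' --cap mds 'allow *' --cap mgr 'allow *'
  run ceph-authtool --create-keyring /var/lib/ceph/bootstrap-osd/ceph.keyring --gen-key \
    -n client.bootstrap-osd --cap mon 'profile bootstrap-osd' --cap mgr 'allow r'
  run ceph-authtool /tmp/ceph.mon.keyring --import-keyring /etc/ceph/ceph.client.admin.keyring
  run ceph-authtool /tmp/ceph.mon.keyring --import-keyring /var/lib/ceph/bootstrap-osd/ceph.keyring
  run chown ceph:ceph /tmp/ceph.mon.keyring
  run monmaptool --create --add "$name" "$ip" --fsid "$fsid" /tmp/monmap
  run sudo -u ceph mkdir -p "/var/lib/ceph/mon/ceph-$name"
  run sudo -u ceph ceph-mon --mkfs -i "$name" --monmap /tmp/monmap --keyring /tmp/ceph.mon.keyring
  # enable as well as start, so the monitor survives a reboot
  run systemctl enable --now "ceph-mon@$name"
}
```

Preview the commands with `DRY_RUN=1 bootstrap_mon intel-compute-stick 10.0.0.17 4a0137cc-1081-41e4-bacd-5ecddc63ae2d`, then run the same call without `DRY_RUN`.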

Deploy the MGR:

name=intel-compute-stick

mkdir -p /var/lib/ceph/mgr/ceph-$name

ceph auth get-or-create mgr.$name mon 'allow profile mgr' osd 'allow *' mds 'allow *' > /var/lib/ceph/mgr/ceph-$name/keyring

ceph-mgr -i $name
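`ceph-mgr -i $name` starts the manager by hand, so it will not come back after a reboot. Below is a sketch of the same step managed by systemd. It assumes the `ceph-mgr@.service` unit shipped with the Debian packages. It uses the ceph CLI's `-o` output-file option instead of a shell redirect, and it chowns the directory because the unit runs the daemon as the `ceph` user. `deploy_mgr` and `DRY_RUN` are hypothetical:

```bash
# Print commands instead of running them when DRY_RUN is set.
run() { if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi; }

# $1: mgr id (the post uses the host name)
deploy_mgr() {
  local name=$1 dir=/var/lib/ceph/mgr/ceph-$1
  run mkdir -p "$dir"
  # -o writes the keyring to a file, so no shell redirection is needed
  run ceph auth get-or-create "mgr.$name" mon 'allow profile mgr' osd 'allow *' mds 'allow *' -o "$dir/keyring"
  # the systemd unit runs ceph-mgr as the ceph user, which must own its directory
  run chown -R ceph:ceph "$dir"
  run systemctl enable --now "ceph-mgr@$name"
}
```

Usage: `deploy_mgr intel-compute-stick`.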

[Only needed for the old release] On the ARMv7 OSD node, edit /usr/lib/python3/dist-packages/ceph_argparse.py and change `timeout = kwargs.pop('timeout', 0)` to `timeout = 24 * 60 * 60`.
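The same edit can be made without opening an editor. This sketch assumes the line appears exactly as quoted above. `patch_ceph_argparse` is a hypothetical helper, and `sed -i.bak` (GNU sed) keeps a backup copy:

```bash
# $1: path to ceph_argparse.py (defaults to the Debian location used in the post)
patch_ceph_argparse() {
  local f=${1:-/usr/lib/python3/dist-packages/ceph_argparse.py}
  # replace the default timeout with 24 hours; the original is saved as $f.bak
  sed -i.bak "s/timeout = kwargs.pop('timeout', 0)/timeout = 24 * 60 * 60/" "$f"
}
```

Run `patch_ceph_argparse` as root, then check the result with `grep -n 'timeout = 24' /usr/lib/python3/dist-packages/ceph_argparse.py`. A package upgrade replaces the file, so the edit has to be reapplied afterwards.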

Copy /var/lib/ceph/bootstrap-osd/ceph.keyring to all OSD nodes.
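Below is a sketch of the copy step. It assumes root SSH access from the mon node to every OSD node. The host list is an argument, so pass your own OSD hosts. `sync_bootstrap_keyring` and `DRY_RUN` are hypothetical:

```bash
# Print commands instead of running them when DRY_RUN is set.
run() { if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi; }

# $@: OSD host names or IPs (your own list)
sync_bootstrap_keyring() {
  local host
  for host in "$@"; do
    run scp /var/lib/ceph/bootstrap-osd/ceph.keyring \
      "root@$host:/var/lib/ceph/bootstrap-osd/ceph.keyring"
  done
}
```

Example: `sync_bootstrap_keyring 10.0.0.15 10.0.0.19`.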

Deploy the OSDs:

truncate --size 16G /root/osd.img

losetup /dev/loop5 /root/osd.img

ceph-volume raw prepare --data /dev/loop5 --bluestore

systemctl start ceph-osd@{osd-num} # {osd-num} must match the OSD id printed by ceph-volume above
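The OSD steps can be packaged for reuse. This sketch assumes one loop-backed image per node. `deploy_loop_osd`, `start_osd` and `DRY_RUN` are hypothetical names. `truncate` creates a sparse file, so the 16G is only consumed as data is actually written:

```bash
# Print commands instead of running them when DRY_RUN is set.
run() { if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi; }

# $1: image path, $2: size in truncate syntax (e.g. 16G), $3: loop device
deploy_loop_osd() {
  local img=$1 size=$2 dev=$3
  run truncate --size "$size" "$img"      # sparse file: no blocks allocated yet
  run losetup "$dev" "$img"               # expose the file as a block device
  run ceph-volume raw prepare --data "$dev" --bluestore
}

# $1: OSD id printed by the prepare step
start_osd() {
  run systemctl start "ceph-osd@$1"
}
```

Usage: `deploy_loop_osd /root/osd.img 16G /dev/loop5`. Then read the OSD id from the `ceph-volume` output and run `start_osd` with it.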

Deploy the MDS:

mkdir -p /var/lib/ceph/mds/ceph-intel-compute-stick

ceph-authtool --create-keyring /var/lib/ceph/mds/ceph-intel-compute-stick/keyring --gen-key -n mds.intel-compute-stick

ceph auth add mds.intel-compute-stick osd "allow rwx" mds "allow *" mon "allow profile mds" -i /var/lib/ceph/mds/ceph-intel-compute-stick/keyring

Edit /etc/ceph/ceph.conf and add the following:

[mds.intel-compute-stick]

host = intel-compute-stick
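If the setup is scripted, appending this section blindly would duplicate it on every run. This sketch adds it only once and assumes the section header sits on its own line. `add_mds_section` is a hypothetical helper:

```bash
# $1: ceph.conf path, $2: mds name, $3: host name
add_mds_section() {
  local conf=$1 name=$2 host=$3
  # -x: whole-line match; -F: fixed string, so [ and ] are not regex syntax
  if ! grep -qxF "[mds.$name]" "$conf"; then
    printf '\n[mds.%s]\nhost = %s\n' "$name" "$host" >> "$conf"
  fi
}
```

Usage: `add_mds_section /etc/ceph/ceph.conf intel-compute-stick intel-compute-stick`.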

Run:

ceph-mds --cluster ceph -i intel-compute-stick -m 10.0.0.17:6789

systemctl start ceph.target

Create the pools and CephFS (the perf_test pool is only used by the rados benchmarks below):

ceph osd pool create perf_test 64

ceph osd pool create cephfs_data 64

ceph osd pool create cephfs_metadata 16

ceph fs new cephfs cephfs_metadata cephfs_data

ceph fs authorize cephfs client.cephfs_user / rw

# Copy the output above into `/etc/ceph/ceph.client.cephfs_user.keyring` on the client
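Instead of copying the keyring text by hand, you can use the ceph CLI's global `-o` (`--out-file`) option to write it to a file, then copy that file to the client. This sketch assumes root SSH access to the client. `issue_cephfs_key` and `DRY_RUN` are hypothetical:

```bash
# Print commands instead of running them when DRY_RUN is set.
run() { if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi; }

# $1: client host, $2: client name without the "client." prefix
issue_cephfs_key() {
  local host=$1 user=$2 file=/etc/ceph/ceph.client.$2.keyring
  # -o writes the generated keyring to a file instead of stdout
  run ceph fs authorize cephfs "client.$user" / rw -o "$file"
  run scp "$file" "root@$host:$file"
}
```

Run it on the mon node, e.g. `issue_cephfs_key 10.0.0.16 cephfs_user`.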

Mount CephFS on the client:

mkdir /mnt/cephfs

mount -t ceph cephfs_user@.cephfs=/ /mnt/cephfs
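To mount CephFS automatically at boot, the same device string can go into `/etc/fstab`. This sketch assumes `ceph.conf` and the keyring are in `/etc/ceph` on the client, as set up above, so no `mon_addr` option is given. `_netdev` delays the mount until the network is up. `add_cephfs_fstab` is hypothetical:

```bash
# $1: fstab path, $2: ceph user without "client.", $3: mount point
add_cephfs_fstab() {
  local fstab=$1 user=$2 mnt=$3
  local line="$user@.cephfs=/ $mnt ceph _netdev,noatime 0 0"
  # append only if the exact line is not already present
  grep -qxF "$line" "$fstab" || printf '%s\n' "$line" >> "$fstab"
}
```

Usage: `add_cephfs_fstab /etc/fstab cephfs_user /mnt/cephfs`, then test the entry with `mount -a`.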

Other commands

Show the current BlueStore cache size: ceph daemon /var/run/ceph/ceph-osd.1.asok config show | grep bluestore_cache_size

Set the OSD cache size (128 MiB here): ceph daemon /var/run/ceph/ceph-osd.1.asok config set bluestore_cache_size_ssd 134217728
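The value is in bytes: 128 MiB is 128 × 1024 × 1024 = 134217728. A `config set` issued through the admin socket only lasts until the OSD restarts. This sketch computes the value and stores it in the monitors' configuration database with `ceph config set` instead. `mib_to_bytes`, `set_osd_cache` and `DRY_RUN` are hypothetical. If BlueStore treats the device as rotational, `bluestore_cache_size_hdd` is the option that applies:

```bash
# Print commands instead of running them when DRY_RUN is set.
run() { if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi; }

# Convert MiB to bytes with shell integer arithmetic.
mib_to_bytes() { echo $(( $1 * 1024 * 1024 )); }

# $1: OSD id, $2: cache size in MiB; stored centrally, so it survives restarts
set_osd_cache() {
  run ceph config set "osd.$1" bluestore_cache_size_ssd "$(mib_to_bytes "$2")"
}
```

Usage: `set_osd_cache 1 128`.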

List the OSDs prepared with ceph-volume raw: ceph-volume raw list

Re-activate the OSD after a reboot (re-attach the loop device with losetup first): ceph-volume raw activate --device /dev/loop5 --no-systemd
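Loop devices are not re-created after a reboot, so activation needs the `losetup` step first. With `--no-systemd`, `ceph-volume` also does not start the OSD service, so that is a separate step. This sketch covers the whole sequence, e.g. for a boot script, and assumes the image path and loop device used above. `reactivate_loop_osd` and `DRY_RUN` are hypothetical:

```bash
# Print commands instead of running them when DRY_RUN is set.
run() { if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi; }

# $1: image path, $2: loop device, $3: OSD id
reactivate_loop_osd() {
  local img=$1 dev=$2 id=$3
  run losetup "$dev" "$img"                                  # loop attachments do not persist
  run ceph-volume raw activate --device "$dev" --no-systemd
  run systemctl start "ceph-osd@$id"                         # --no-systemd leaves this to us
}
```

Usage: `reactivate_loop_osd /root/osd.img /dev/loop5 1`.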

Remove an OSD manually: ceph osd out {osd-num}

ceph osd safe-to-destroy osd.{osd-num}

ceph osd destroy {osd-num} --yes-i-really-mean-it
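`safe-to-destroy` only reports the current state, so in practice you poll it until the data has migrated away. This sketch follows that pattern. It stops the daemon and then uses `ceph osd purge`, which, unlike `destroy`, also removes the OSD from the CRUSH map and deletes its auth key. It assumes it runs on the host that owns the OSD, with the admin keyring present. `remove_osd` and `DRY_RUN` are hypothetical:

```bash
# Print commands instead of running them when DRY_RUN is set.
run() { if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi; }

# $1: OSD id
remove_osd() {
  local id=$1
  run ceph osd out "$id"
  # poll until Ceph reports that removing the OSD would not lose data
  until run ceph osd safe-to-destroy "osd.$id"; do sleep 60; done
  run systemctl stop "ceph-osd@$id"
  run ceph osd purge "$id" --yes-i-really-mean-it
}
```

Usage: `remove_osd 2`.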

Performance tests

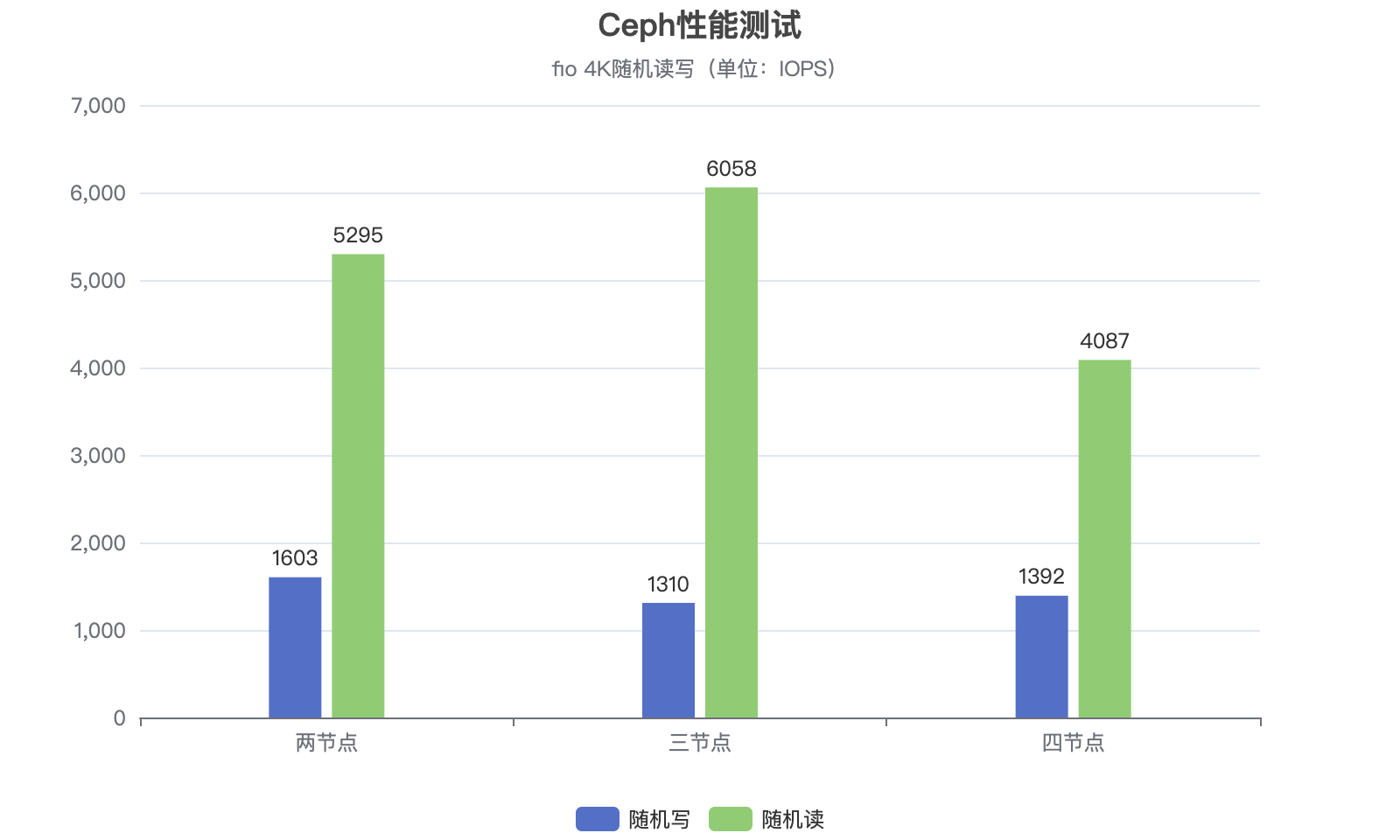

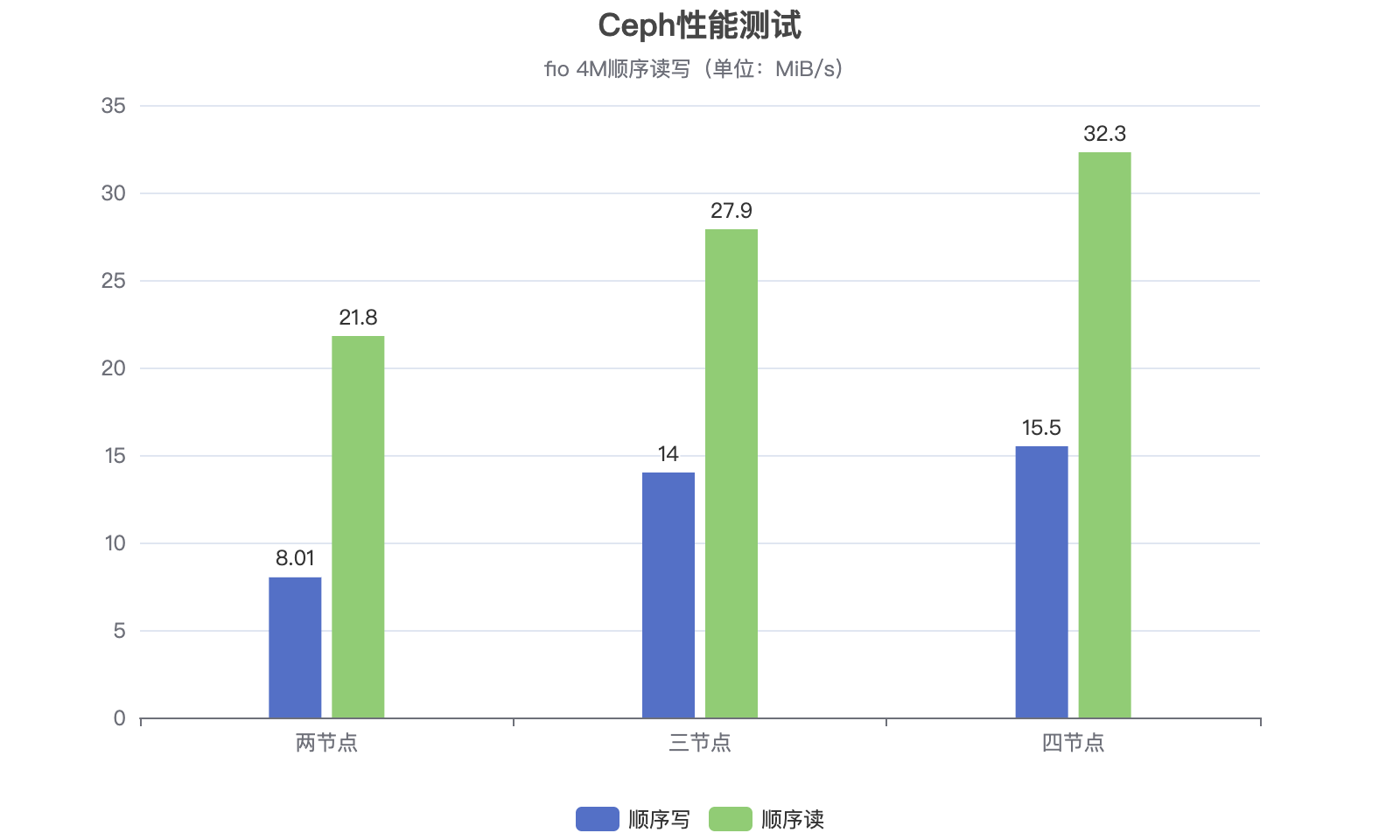

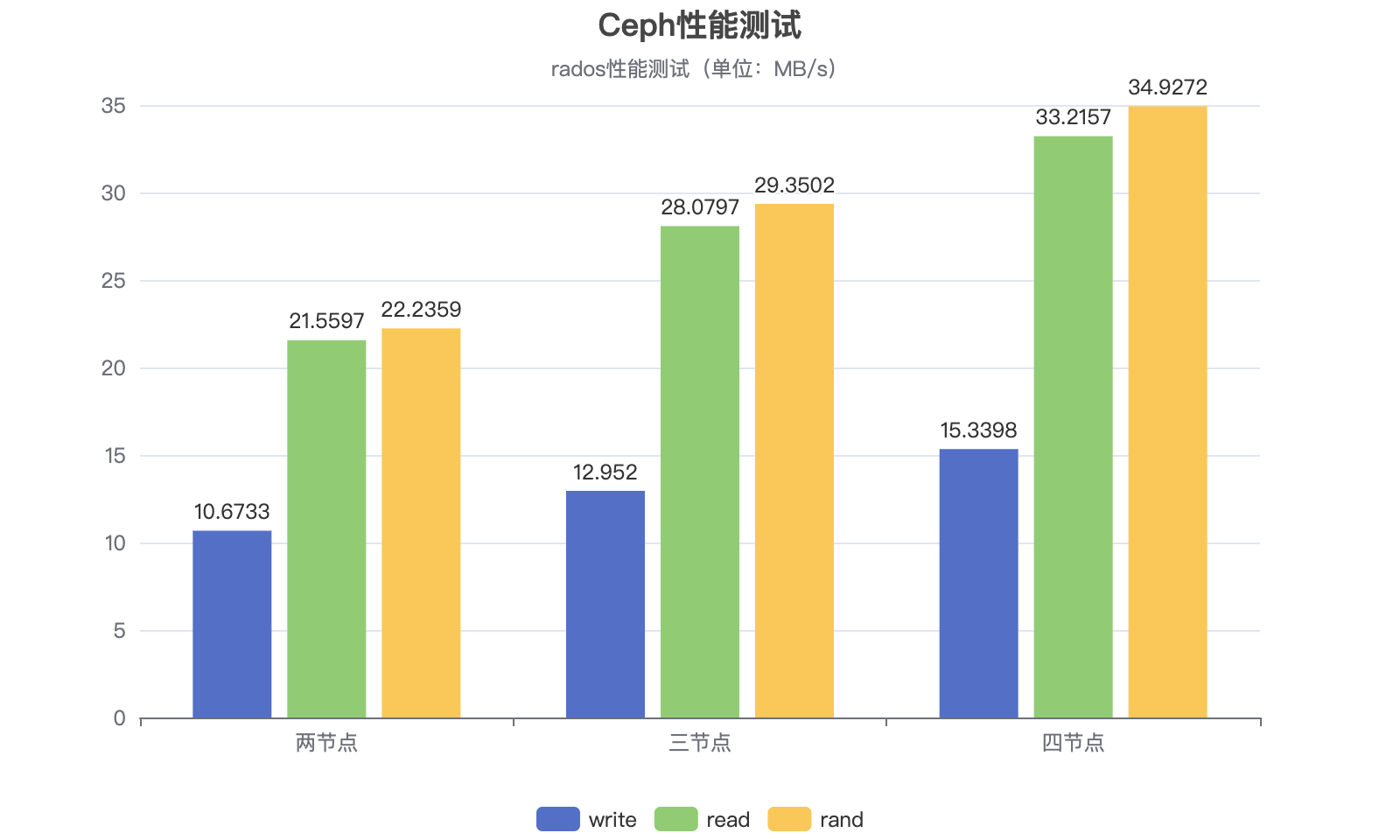

| Nodes | fio random write (IOPS) | fio random read (IOPS) | fio sequential write (MiB/s) | fio sequential read (MiB/s) | rados write (MB/s) | rados seq read (MB/s) | rados rand read (MB/s) |
|---|---|---|---|---|---|---|---|
| 2 | 1603 | 5295 | 8.01 | 21.8 | 10.6733 | 21.5597 | 22.2359 |
| 3 | 1310 | 6058 | 14.0 | 27.9 | 12.952 | 28.0797 | 29.3502 |
| 4 | 1392 | 4087 | 15.5 | 32.3 | 15.3398 | 33.2157 | 34.9272 |
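One rough way to read the sequential numbers is to compare them with the combined line rate of the storage nodes. This sketch assumes 100 Mbit/s per node, reads spread evenly across the nodes, and no protocol overhead, so the real ceiling is somewhat lower. `link_ceiling_mib` and `link_utilization_pct` are hypothetical helpers:

```bash
# Aggregate line rate of N nodes in MiB/s.
# $1: node count, $2: per-node link speed in Mbit/s
link_ceiling_mib() {
  awk -v n="$1" -v mbps="$2" 'BEGIN { printf "%.2f\n", n * mbps * 1e6 / 8 / 1048576 }'
}

# Measured throughput as a percentage of that ceiling.
# $1: measured MiB/s, $2: node count, $3: per-node Mbit/s
link_utilization_pct() {
  awk -v m="$1" -v n="$2" -v mbps="$3" \
    'BEGIN { printf "%.1f\n", 100 * m / (n * mbps * 1e6 / 8 / 1048576) }'
}
```

The 100 Mbit/s ceilings come out at 23.84, 35.76 and 47.68 MiB/s for two, three and four nodes. Applied to the fio sequential-read column, `link_utilization_pct 21.8 2 100`, `link_utilization_pct 27.9 3 100` and `link_utilization_pct 32.3 4 100` print 91.4, 78.0 and 67.7. The client's Gigabit link (about 119 MiB/s) is far above all of these.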

Two nodes

cephfs:

- dd write: 16.3 MB/s (dd if=/dev/zero of=test.img bs=1M count=1024)

- dd read: 17.9 MB/s (echo 3 > /proc/sys/vm/drop_caches; dd if=test.img of=/dev/null bs=1M)

- fio random write: 1603 IOPS

- fio random read: 5295 IOPS

- fio sequential write: 8201 KiB/s

- fio sequential read: 21.8 MiB/s
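The post records the fio results but not the fio job parameters. Below is a sketch of how such runs could look on the CephFS mount. The block sizes, file size, I/O engine, queue depth and runtime are all my assumptions, so numbers from it are not directly comparable with the table above. `fio_cephfs` and `DRY_RUN` are hypothetical:

```bash
# Print commands instead of running them when DRY_RUN is set.
run() { if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi; }

# $1: fio --rw mode (randwrite, randread, write, read), $2: block size.
# Directory, file size, engine, queue depth and runtime are assumptions.
fio_cephfs() {
  local mode=$1 bs=$2
  run fio --name="cephfs-$mode" --directory=/mnt/cephfs --rw="$mode" --bs="$bs" \
    --size=1G --ioengine=libaio --direct=1 --iodepth=16 \
    --runtime=60 --time_based --group_reporting
}
```

Examples: `fio_cephfs randwrite 4k`, `fio_cephfs randread 4k`, `fio_cephfs write 1M`, `fio_cephfs read 1M`.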

rados bench -p perf_test 30 write --no-cleanup

hints = 1

Maintaining 16 concurrent writes of 4194304 bytes to objects of size 4194304 for up to 30 seconds or 0 objects

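Object prefix: benchmark_data_orangepi3-lts_2101

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 | - | 0 |
| 1 | 16 | 16 | 0 | 0 | 0 | - | 0 |
| 2 | 16 | 16 | 0 | 0 | 0 | - | 0 |
| 3 | 16 | 16 | 0 | 0 | 0 | - | 0 |
| 4 | 16 | 19 | 3 | 2.99955 | 3 | 3.64087 | 3.38759 |
| 5 | 16 | 22 | 6 | 4.79925 | 12 | 4.34316 | 3.83332 |
| 6 | 16 | 25 | 9 | 5.99904 | 12 | 5.38901 | 4.34105 |
| 7 | 16 | 28 | 12 | 6.85602 | 12 | 6.50295 | 4.87251 |
| 8 | 16 | 31 | 15 | 7.49875 | 12 | 4.71442 | 4.9794 |
| 9 | 16 | 36 | 20 | 8.88738 | 20 | 4.61761 | 5.24509 |
| 10 | 16 | 38 | 22 | 8.79849 | 8 | 9.75918 | 5.40151 |
| 11 | 16 | 41 | 25 | 9.08934 | 12 | 5.56684 | 5.44177 |
| 12 | 16 | 46 | 30 | 9.99826 | 20 | 3.34787 | 5.21296 |
| 13 | 16 | 49 | 33 | 10.1521 | 12 | 4.10847 | 5.17286 |
| 14 | 16 | 51 | 35 | 9.99824 | 8 | 3.49611 | 5.16674 |
| 15 | 16 | 55 | 39 | 10.3982 | 16 | 6.06545 | 5.098 |
| 16 | 16 | 58 | 42 | 10.4981 | 12 | 6.23518 | 5.07308 |
| 17 | 16 | 59 | 43 | 10.1158 | 4 | 6.45517 | 5.10522 |
| 18 | 16 | 62 | 46 | 10.2204 | 12 | 6.48019 | 5.08282 |
| 19 | 16 | 63 | 47 | 9.89297 | 4 | 6.57521 | 5.11457 |

2023-04-18T15:12:33.881436+0800 min lat: 1.95623 max lat: 9.75918 avg lat: 5.12979

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 20 | 16 | 68 | 52 | 10.3981 | 20 | 6.95187 | 5.12979 |
| 21 | 16 | 68 | 52 | 9.90296 | 0 | - | 5.12979 |
| 22 | 16 | 70 | 54 | 9.8164 | 4 | 7.85105 | 5.23762 |
| 23 | 16 | 72 | 56 | 9.73736 | 8 | 8.1019 | 5.33872 |
| 24 | 16 | 76 | 60 | 9.99818 | 16 | 8.86371 | 5.40133 |
| 25 | 16 | 77 | 61 | 9.75822 | 4 | 3.14892 | 5.3644 |
| 26 | 16 | 80 | 64 | 9.84436 | 12 | 9.63972 | 5.45864 |
| 27 | 16 | 82 | 66 | 9.776 | 8 | 3.18262 | 5.48564 |
| 28 | 16 | 84 | 68 | 9.71252 | 8 | 3.89847 | 5.52669 |
| 29 | 16 | 87 | 71 | 9.79132 | 12 | 9.72659 | 5.63168 |
| 30 | 16 | 91 | 75 | 9.99818 | 16 | 9.93409 | 5.63449 |
| 31 | 13 | 91 | 78 | 10.0627 | 12 | 4.09479 | 5.65952 |
| 32 | 11 | 91 | 80 | 9.99817 | 8 | 4.0216 | 5.68887 |
| 33 | 6 | 91 | 85 | 10.3012 | 20 | 3.65814 | 5.7294 |
| 34 | 1 | 91 | 90 | 10.5863 | 20 | 4.55552 | 5.71818 |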

Total time run: 34.1039

Total writes made: 91

Write size: 4194304

Object size: 4194304

Bandwidth (MB/sec): 10.6733

Stddev Bandwidth: 6.19765

Max bandwidth (MB/sec): 20

Min bandwidth (MB/sec): 0

Average IOPS: 2

Stddev IOPS: 1.58086

Max IOPS: 5

Min IOPS: 0

Average Latency(s): 5.70886

Stddev Latency(s): 2.2914

Max latency(s): 11.0174

Min latency(s): 1.95623

rados bench -p perf_test 30 seq

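hints = 1

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 | - | 0 |
| 1 | 16 | 18 | 2 | 7.99563 | 8 | 0.749535 | 0.640048 |
| 2 | 16 | 24 | 8 | 15.9934 | 24 | 1.82768 | 1.2209 |
| 3 | 16 | 30 | 14 | 18.6602 | 24 | 2.90052 | 1.76318 |
| 4 | 16 | 36 | 20 | 19.9939 | 24 | 3.22248 | 2.10121 |
| 5 | 16 | 41 | 25 | 19.9941 | 20 | 2.38014 | 2.20456 |
| 6 | 16 | 46 | 30 | 19.9943 | 20 | 3.69409 | 2.33743 |
| 7 | 16 | 52 | 36 | 20.5655 | 24 | 3.58809 | 2.4047 |
| 8 | 16 | 58 | 42 | 20.9942 | 24 | 3.94671 | 2.48664 |
| 9 | 15 | 63 | 48 | 21.3151 | 24 | 2.99373 | 2.55527 |
| 10 | 16 | 69 | 53 | 21.1832 | 20 | 3.06825 | 2.56988 |
| 11 | 16 | 75 | 59 | 21.4387 | 24 | 3.56289 | 2.56456 |
| 12 | 16 | 80 | 64 | 21.3185 | 20 | 1.57452 | 2.55362 |
| 13 | 16 | 86 | 70 | 21.5243 | 24 | 1.79171 | 2.56933 |
| 14 | 16 | 91 | 75 | 21.4152 | 20 | 4.4904 | 2.6246 |
| 15 | 10 | 91 | 81 | 21.5871 | 24 | 4.2782 | 2.67077 |
| 16 | 4 | 91 | 87 | 21.7375 | 24 | 2.84972 | 2.68923 |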

Total time run: 16.8834

Total reads made: 91

Read size: 4194304

Object size: 4194304

Bandwidth (MB/sec): 21.5597

Average IOPS: 5

Stddev IOPS: 1.03078

Max IOPS: 6

Min IOPS: 2

Average Latency(s): 2.69827

Max latency(s): 4.4904

Min latency(s): 0.530561

rados bench -p perf_test 30 rand

hints = 1

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 | - | 0 |
| 1 | 16 | 20 | 4 | 15.9921 | 16 | 0.756867 | 0.562019 |
| 2 | 16 | 26 | 10 | 19.9929 | 24 | 1.82705 | 1.09684 |
| 3 | 16 | 32 | 16 | 21.3269 | 24 | 2.89898 | 1.63216 |
| 4 | 16 | 38 | 22 | 21.9939 | 24 | 2.8592 | 1.96528 |
| 5 | 16 | 42 | 26 | 20.7945 | 16 | 2.89643 | 2.08897 |
| 6 | 16 | 48 | 32 | 21.3279 | 24 | 3.25362 | 2.24424 |
| 7 | 16 | 54 | 38 | 21.7089 | 24 | 2.53504 | 2.33103 |
| 8 | 16 | 60 | 44 | 21.9947 | 24 | 2.49214 | 2.39352 |
| 9 | 16 | 66 | 50 | 22.2169 | 24 | 2.8506 | 2.46248 |
| 10 | 16 | 70 | 54 | 21.5948 | 16 | 2.89337 | 2.49112 |
| 11 | 16 | 76 | 60 | 21.8128 | 24 | 2.13852 | 2.49727 |
| 12 | 16 | 82 | 66 | 21.9946 | 24 | 2.53781 | 2.54012 |
| 13 | 16 | 88 | 72 | 22.1485 | 24 | 2.53625 | 2.57632 |
| 14 | 16 | 94 | 78 | 22.2804 | 24 | 2.89682 | 2.59727 |
| 15 | 15 | 98 | 83 | 22.1192 | 20 | 2.80375 | 2.61577 |
| 16 | 16 | 104 | 88 | 21.9865 | 20 | 2.54292 | 2.62566 |
| 17 | 16 | 110 | 94 | 22.1046 | 24 | 2.84867 | 2.64381 |
| 18 | 16 | 116 | 100 | 22.2097 | 24 | 3.20428 | 2.65252 |
| 19 | 16 | 122 | 106 | 22.3036 | 24 | 2.5454 | 2.6641 |

2023-04-18T15:13:26.426279+0800 min lat: 0.367019 max lat: 3.92008 avg lat: 2.6753

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 20 | 16 | 127 | 111 | 22.1883 | 20 | 2.84974 | 2.6753 |
| 21 | 16 | 132 | 116 | 22.0838 | 20 | 2.90241 | 2.68289 |
| 22 | 16 | 138 | 122 | 22.1707 | 24 | 3.20846 | 2.68523 |
| 23 | 16 | 144 | 128 | 22.25 | 24 | 3.25698 | 2.69284 |
| 24 | 16 | 150 | 134 | 22.3228 | 24 | 3.26158 | 2.69223 |
| 25 | 16 | 155 | 139 | 22.2297 | 20 | 1.06362 | 2.67481 |
| 26 | 16 | 160 | 144 | 22.1438 | 20 | 3.9225 | 2.67843 |
| 27 | 16 | 166 | 150 | 22.2124 | 24 | 4.27967 | 2.68765 |
| 28 | 16 | 172 | 156 | 22.276 | 24 | 4.33003 | 2.69881 |
| 29 | 16 | 178 | 162 | 22.3353 | 24 | 3.9836 | 2.71073 |
| 30 | 16 | 183 | 167 | 22.2573 | 20 | 2.13414 | 2.71708 |
| 31 | 11 | 183 | 172 | 22.1844 | 20 | 3.57702 | 2.72915 |
| 32 | 5 | 183 | 178 | 22.241 | 24 | 3.63257 | 2.73706 |

Total time run: 32.9197

Total reads made: 183

Read size: 4194304

Object size: 4194304

Bandwidth (MB/sec): 22.2359

Average IOPS: 5

Stddev IOPS: 0.669015

Max IOPS: 6

Min IOPS: 4

Average Latency(s): 2.74483

Max latency(s): 4.63637

Min latency(s): 0.367019
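The three rados runs above follow a fixed pattern. The write phase uses `--no-cleanup` so the read phases have objects to read, and then `seq` and `rand` run against those objects. This sketch runs the phases in order, removes the benchmark objects afterwards with `rados -p <pool> cleanup`, and extracts the `Bandwidth (MB/sec)` figures from the saved output. `rados_bench_all`, `bench_bandwidth` and `DRY_RUN` are hypothetical:

```bash
# Print commands instead of running them when DRY_RUN is set.
run() { if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi; }

# $1: pool, $2: seconds per phase
rados_bench_all() {
  local pool=$1 secs=$2
  run rados bench -p "$pool" "$secs" write --no-cleanup   # keep objects for the read phases
  run rados bench -p "$pool" "$secs" seq
  run rados bench -p "$pool" "$secs" rand
  run rados -p "$pool" cleanup                            # delete the benchmark objects
}

# Print each "Bandwidth (MB/sec)" value found in rados bench output on stdin.
bench_bandwidth() {
  awk -F': *' '/^Bandwidth \(MB\/sec\)/ { print $2 }'
}
```

Usage: `rados_bench_all perf_test 30 2>&1 | tee bench.log`, then `bench_bandwidth < bench.log` prints the write, seq and rand bandwidth in that order.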

Three nodes

cephfs:

- dd write: 21.3 MB/s (dd if=/dev/zero of=test.img bs=1M count=1024)

- dd read: 19.5 MB/s (echo 3 > /proc/sys/vm/drop_caches; dd if=test.img of=/dev/null bs=1M)

- fio random write: 1310 IOPS

- fio random read: 6058 IOPS

- fio sequential write: 14.0 MiB/s

- fio sequential read: 27.9 MiB/s

rados bench -p perf_test 30 write --no-cleanup

hints = 1

Maintaining 16 concurrent writes of 4194304 bytes to objects of size 4194304 for up to 30 seconds or 0 objects

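Object prefix: benchmark_data_orangepi3-lts_2188

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 | - | 0 |
| 1 | 16 | 16 | 0 | 0 | 0 | - | 0 |
| 2 | 16 | 17 | 1 | 1.99967 | 2 | 1.99725 | 1.99725 |
| 3 | 16 | 19 | 3 | 3.99915 | 8 | 2.92674 | 2.50932 |
| 4 | 16 | 21 | 5 | 4.99899 | 8 | 3.68936 | 2.91102 |
| 5 | 16 | 25 | 9 | 7.19858 | 16 | 4.44208 | 3.50803 |
| 6 | 16 | 29 | 13 | 8.66497 | 16 | 3.32056 | 3.77577 |
| 7 | 16 | 31 | 15 | 8.56977 | 8 | 6.59867 | 3.95511 |
| 8 | 16 | 33 | 17 | 8.49837 | 8 | 2.95588 | 3.89244 |
| 9 | 16 | 39 | 23 | 10.2203 | 24 | 8.91377 | 4.5287 |
| 10 | 16 | 42 | 26 | 10.398 | 12 | 4.00285 | 4.61726 |
| 11 | 16 | 46 | 30 | 10.907 | 16 | 1.88708 | 4.32788 |
| 12 | 16 | 48 | 32 | 10.6647 | 8 | 3.22648 | 4.22892 |
| 13 | 16 | 52 | 36 | 11.0748 | 16 | 1.51764 | 4.17041 |
| 14 | 16 | 53 | 37 | 10.5695 | 4 | 2.53073 | 4.1261 |
| 15 | 16 | 55 | 39 | 10.3981 | 8 | 8.91467 | 4.39938 |
| 16 | 16 | 60 | 44 | 10.9979 | 20 | 7.31564 | 4.7521 |
| 17 | 16 | 61 | 45 | 10.5862 | 4 | 2.00639 | 4.69108 |
| 18 | 16 | 66 | 50 | 11.109 | 20 | 2.34879 | 4.76501 |
| 19 | 16 | 69 | 53 | 11.1558 | 12 | 2.87372 | 4.81177 |

2023-04-18T15:18:28.656929+0800 min lat: 1.51764 max lat: 10.6957 avg lat: 4.81906

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 20 | 16 | 74 | 58 | 11.5978 | 20 | 2.18304 | 4.81906 |
| 21 | 16 | 75 | 59 | 11.2359 | 4 | 7.06397 | 4.85711 |
| 22 | 16 | 80 | 64 | 11.6341 | 20 | 3.49498 | 4.85191 |
| 23 | 16 | 80 | 64 | 11.1283 | 0 | - | 4.85191 |
| 24 | 16 | 82 | 66 | 10.9979 | 4 | 6.3591 | 4.83667 |
| 25 | 16 | 84 | 68 | 10.8779 | 8 | 6.34421 | 4.91693 |
| 26 | 16 | 89 | 73 | 11.2286 | 20 | 8.52854 | 5.06126 |
| 27 | 16 | 92 | 76 | 11.2571 | 12 | 5.63676 | 5.07197 |
| 28 | 16 | 97 | 81 | 11.5692 | 20 | 6.39309 | 5.04017 |
| 29 | 16 | 100 | 84 | 11.584 | 12 | 7.29099 | 5.01084 |
| 30 | 16 | 105 | 89 | 11.8644 | 20 | 2.50054 | 4.96138 |
| 31 | 10 | 105 | 95 | 12.2558 | 24 | 9.50269 | 4.94482 |
| 32 | 4 | 105 | 101 | 12.6226 | 24 | 4.52251 | 4.83104 |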

Total time run: 32.4273

Total writes made: 105

Write size: 4194304

Object size: 4194304

Bandwidth (MB/sec): 12.952

Stddev Bandwidth: 7.39196

Max bandwidth (MB/sec): 24

Min bandwidth (MB/sec): 0

Average IOPS: 3

Stddev IOPS: 1.87271

Max IOPS: 6

Min IOPS: 0

Average Latency(s): 4.774

Stddev Latency(s): 2.42686

Max latency(s): 10.6957

Min latency(s): 1.51764

rados bench -p perf_test 30 seq

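hints = 1

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 | - | 0 |
| 1 | 16 | 19 | 3 | 11.9944 | 12 | 0.935954 | 0.768977 |
| 2 | 16 | 26 | 10 | 19.9933 | 28 | 1.96017 | 1.31348 |
| 3 | 16 | 33 | 17 | 22.6602 | 28 | 2.77006 | 1.68533 |
| 4 | 16 | 42 | 26 | 25.9932 | 36 | 2.27413 | 1.75351 |
| 5 | 16 | 51 | 35 | 27.9931 | 36 | 2.27554 | 1.77164 |
| 6 | 16 | 59 | 43 | 28.6598 | 32 | 2.90927 | 1.80862 |
| 7 | 16 | 65 | 49 | 27.9935 | 24 | 1.89015 | 1.83058 |
| 8 | 16 | 72 | 56 | 27.9936 | 28 | 0.468285 | 1.88307 |
| 9 | 16 | 81 | 65 | 28.8824 | 36 | 0.785162 | 1.90263 |
| 10 | 15 | 88 | 73 | 29.1822 | 32 | 2.5208 | 1.94424 |
| 11 | 16 | 95 | 79 | 28.7108 | 24 | 2.0044 | 1.96069 |
| 12 | 16 | 103 | 87 | 28.9843 | 32 | 3.24909 | 1.95645 |
| 13 | 9 | 105 | 96 | 29.5231 | 36 | 2.53005 | 1.97092 |
| 14 | 3 | 105 | 102 | 29.1284 | 24 | 1.87098 | 2.00023 |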

Total time run: 14.9574

Total reads made: 105

Read size: 4194304

Object size: 4194304

Bandwidth (MB/sec): 28.0797

Average IOPS: 7

Stddev IOPS: 1.68379

Max IOPS: 9

Min IOPS: 3

Average Latency(s): 2.02704

Max latency(s): 3.42015

Min latency(s): 0.417385

rados bench -p perf_test 30 rand

hints = 1

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 | - | 0 |
| 1 | 16 | 22 | 6 | 23.986 | 24 | 0.824307 | 0.603606 |
| 2 | 16 | 31 | 15 | 29.9884 | 36 | 1.90078 | 1.13916 |
| 3 | 16 | 40 | 24 | 31.9898 | 36 | 2.15175 | 1.41768 |
| 4 | 16 | 47 | 31 | 30.9908 | 28 | 1.42468 | 1.5251 |
| 5 | 16 | 55 | 39 | 31.191 | 32 | 2.52356 | 1.5776 |
| 6 | 16 | 63 | 47 | 31.3248 | 32 | 2.89946 | 1.61864 |
| 7 | 16 | 71 | 55 | 31.4203 | 32 | 0.368204 | 1.66398 |
| 8 | 16 | 78 | 62 | 30.9915 | 28 | 2.37269 | 1.70559 |
| 9 | 16 | 87 | 71 | 31.5467 | 36 | 2.84709 | 1.77106 |
| 10 | 16 | 95 | 79 | 31.5911 | 32 | 1.07912 | 1.79016 |
| 11 | 16 | 104 | 88 | 31.9911 | 36 | 1.44303 | 1.80956 |
| 12 | 16 | 112 | 96 | 31.9913 | 32 | 1.42344 | 1.8181 |
| 13 | 16 | 119 | 103 | 31.6838 | 28 | 2.69186 | 1.80644 |
| 14 | 16 | 127 | 111 | 31.7059 | 32 | 3.20146 | 1.82418 |
| 15 | 16 | 133 | 117 | 31.1918 | 24 | 0.423103 | 1.84334 |
| 16 | 16 | 141 | 125 | 31.2418 | 32 | 0.992599 | 1.85535 |
| 17 | 16 | 148 | 132 | 31.0508 | 28 | 0.97594 | 1.84928 |
| 18 | 16 | 155 | 139 | 30.8809 | 28 | 1.49825 | 1.8682 |
| 19 | 16 | 161 | 145 | 30.5185 | 24 | 4.27347 | 1.90106 |

2023-04-18T15:19:17.492678+0800 min lat: 0.366624 max lat: 4.73499 avg lat: 1.92024

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 20 | 15 | 167 | 152 | 30.3887 | 28 | 4.73499 | 1.92024 |
| 21 | 16 | 175 | 159 | 30.2746 | 28 | 0.367838 | 1.90697 |
| 22 | 16 | 184 | 168 | 30.5345 | 36 | 0.745395 | 1.90833 |
| 23 | 16 | 193 | 177 | 30.7718 | 36 | 1.09359 | 1.92217 |
| 24 | 16 | 199 | 183 | 30.4895 | 24 | 4.54686 | 1.95203 |
| 25 | 16 | 207 | 191 | 30.5496 | 32 | 4.18695 | 1.97411 |
| 26 | 16 | 215 | 199 | 30.6052 | 32 | 1.11538 | 1.96496 |
| 27 | 16 | 223 | 207 | 30.6566 | 32 | 1.41712 | 1.96474 |
| 28 | 16 | 230 | 214 | 30.5616 | 28 | 2.08073 | 1.98234 |
| 29 | 16 | 236 | 220 | 30.3352 | 24 | 2.4372 | 2.00951 |
| 30 | 16 | 241 | 225 | 29.9906 | 20 | 3.20498 | 2.026 |
| 31 | 9 | 241 | 232 | 29.9262 | 28 | 2.49127 | 2.02668 |
| 32 | 3 | 241 | 238 | 29.7409 | 24 | 2.5638 | 2.05061 |

Total time run: 32.8447

Total reads made: 241

Read size: 4194304

Object size: 4194304

Bandwidth (MB/sec): 29.3502

Average IOPS: 7

Stddev IOPS: 1.10534

Max IOPS: 9

Min IOPS: 5

Average Latency(s): 2.06437

Max latency(s): 4.91188

Min latency(s): 0.366624

Four nodes

cephfs:

- dd write: 22.0 MB/s (dd if=/dev/zero of=test.img bs=1M count=1024)

- dd read: 19.7 MB/s (echo 3 > /proc/sys/vm/drop_caches; dd if=test.img of=/dev/null bs=1M)

- fio random write: 1392 IOPS

- fio random read: 4087 IOPS

- fio sequential write: 15.5 MiB/s

- fio sequential read: 32.3 MiB/s

rados bench -p perf_test 30 write --no-cleanup

hints = 1

Maintaining 16 concurrent writes of 4194304 bytes to objects of size 4194304 for up to 30 seconds or 0 objects

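Object prefix: benchmark_data_orangepi3-lts_35619

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 | - | 0 |
| 1 | 16 | 16 | 0 | 0 | 0 | - | 0 |
| 2 | 16 | 17 | 1 | 1.99975 | 2 | 1.78202 | 1.78202 |
| 3 | 16 | 20 | 4 | 5.33256 | 12 | 2.84877 | 2.43044 |
| 4 | 16 | 21 | 5 | 4.99923 | 4 | 3.74263 | 2.69287 |
| 5 | 16 | 23 | 7 | 5.5991 | 8 | 4.76583 | 2.85451 |
| 6 | 16 | 32 | 16 | 10.6649 | 36 | 5.86804 | 3.70616 |
| 7 | 16 | 34 | 18 | 10.284 | 8 | 1.92668 | 3.4596 |
| 8 | 16 | 39 | 23 | 11.498 | 20 | 7.93078 | 3.6863 |
| 9 | 16 | 42 | 26 | 11.5535 | 12 | 8.75803 | 3.93689 |
| 10 | 16 | 47 | 31 | 12.3978 | 20 | 9.69823 | 3.95612 |
| 11 | 16 | 50 | 34 | 12.3614 | 12 | 3.04304 | 3.9946 |
| 12 | 16 | 54 | 38 | 12.6644 | 16 | 3.45564 | 3.99375 |
| 13 | 16 | 60 | 44 | 13.536 | 24 | 3.44875 | 3.95588 |
| 14 | 16 | 63 | 47 | 13.4261 | 12 | 1.81607 | 3.85018 |
| 15 | 16 | 67 | 51 | 13.5975 | 16 | 2.73712 | 3.76863 |
| 16 | 16 | 71 | 55 | 13.7475 | 16 | 4.15855 | 3.72367 |
| 17 | 16 | 76 | 60 | 14.115 | 20 | 10.2432 | 3.75291 |
| 18 | 16 | 79 | 63 | 13.9973 | 12 | 2.17743 | 3.81827 |
| 19 | 16 | 83 | 67 | 14.1024 | 16 | 8.20002 | 3.90448 |

2023-04-30T16:14:03.140857+0800 min lat: 1.0234 max lat: 10.2441 avg lat: 3.90471

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 20 | 16 | 84 | 68 | 13.5973 | 4 | 3.91999 | 3.90471 |
| 21 | 16 | 87 | 71 | 13.5211 | 12 | 3.44596 | 3.99304 |
| 22 | 16 | 93 | 77 | 13.9972 | 24 | 3.23659 | 3.97209 |
| 23 | 16 | 96 | 80 | 13.9102 | 12 | 1.23555 | 4.00768 |
| 24 | 16 | 102 | 86 | 14.3305 | 24 | 1.35805 | 4.04373 |
| 25 | 16 | 103 | 87 | 13.9172 | 4 | 6.09656 | 4.06732 |
| 26 | 16 | 107 | 91 | 13.9972 | 16 | 3.5407 | 4.08549 |
| 27 | 16 | 109 | 93 | 13.775 | 8 | 6.03469 | 4.08524 |
| 28 | 16 | 118 | 102 | 14.5686 | 36 | 2.17569 | 4.06772 |
| 29 | 16 | 121 | 105 | 14.4799 | 12 | 4.49289 | 4.11014 |
| 30 | 16 | 125 | 109 | 14.5305 | 16 | 2.87309 | 4.08652 |
| 31 | 9 | 125 | 116 | 14.9648 | 28 | 2.25205 | 4.0526 |
| 32 | 6 | 125 | 119 | 14.8721 | 12 | 1.79392 | 4.00744 |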

Total time run: 32.5949

Total writes made: 125

Write size: 4194304

Object size: 4194304

Bandwidth (MB/sec): 15.3398

Stddev Bandwidth: 8.72312

Max bandwidth (MB/sec): 36

Min bandwidth (MB/sec): 0

Average IOPS: 3

Stddev IOPS: 2.20611

Max IOPS: 9

Min IOPS: 0

Average Latency(s): 4.0305

Stddev Latency(s): 2.20517

Max latency(s): 10.2441

Min latency(s): 1.0234

rados bench -p perf_test 30 seq

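hints = 1

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 | - | 0 |
| 1 | 16 | 20 | 4 | 15.9905 | 16 | 0.853527 | 0.650566 |
| 2 | 16 | 32 | 16 | 31.987 | 48 | 1.92335 | 1.1849 |
| 3 | 15 | 42 | 27 | 35.9872 | 44 | 0.728561 | 1.28407 |
| 4 | 16 | 54 | 38 | 37.9873 | 44 | 1.45691 | 1.36427 |
| 5 | 16 | 63 | 47 | 37.5885 | 36 | 0.867527 | 1.32744 |
| 6 | 16 | 71 | 55 | 36.6558 | 32 | 0.388871 | 1.33514 |
| 7 | 16 | 81 | 65 | 37.1323 | 40 | 0.402616 | 1.36986 |
| 8 | 16 | 90 | 74 | 36.99 | 36 | 1.0629 | 1.41874 |
| 9 | 16 | 99 | 83 | 36.8793 | 36 | 1.75055 | 1.48116 |
| 10 | 16 | 106 | 90 | 35.9908 | 28 | 0.407852 | 1.52166 |
| 11 | 16 | 117 | 101 | 36.7179 | 44 | 1.74839 | 1.55673 |
| 12 | 13 | 125 | 112 | 37.324 | 44 | 1.12977 | 1.56548 |
| 13 | 6 | 125 | 119 | 36.6064 | 28 | 2.87119 | 1.5828 |
| 14 | 3 | 125 | 122 | 34.8488 | 12 | 3.12215 | 1.61803 |
| 15 | 1 | 125 | 124 | 33.0589 | 8 | 3.1234 | 1.64145 |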

Total time run: 15.0531

Total reads made: 125

Read size: 4194304

Object size: 4194304

Bandwidth (MB/sec): 33.2157

Average IOPS: 8

Stddev IOPS: 3.12745

Max IOPS: 12

Min IOPS: 2

Average Latency(s): 1.65394

Max latency(s): 4.13081

Min latency(s): 0.388871

rados bench -p perf_test 30 rand

hints = 1

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 0 | 0 | 0 | 0 | 0 | 0 | - | 0 |
| 1 | 16 | 22 | 6 | 23.9897 | 24 | 0.745057 | 0.560803 |
| 2 | 16 | 34 | 18 | 35.9886 | 48 | 0.372282 | 0.938734 |
| 3 | 16 | 45 | 29 | 38.6558 | 44 | 2.19349 | 1.17867 |
| 4 | 16 | 54 | 38 | 37.9898 | 36 | 1.37898 | 1.21186 |
| 5 | 16 | 63 | 47 | 37.5905 | 36 | 0.368202 | 1.26999 |
| 6 | 16 | 73 | 57 | 37.9907 | 40 | 0.369987 | 1.34372 |
| 7 | 16 | 84 | 68 | 38.8479 | 44 | 0.379556 | 1.35436 |
| 8 | 15 | 92 | 77 | 38.4844 | 36 | 0.366431 | 1.35522 |
| 9 | 16 | 98 | 82 | 36.4306 | 20 | 0.369742 | 1.38116 |
| 10 | 16 | 108 | 92 | 36.7866 | 40 | 1.07875 | 1.40909 |
| 11 | 16 | 119 | 103 | 37.4414 | 44 | 0.411923 | 1.42015 |
| 12 | 16 | 128 | 112 | 37.3207 | 36 | 0.721847 | 1.46517 |
| 13 | 15 | 136 | 121 | 37.2149 | 36 | 3.88744 | 1.51544 |
| 14 | 16 | 145 | 129 | 36.8421 | 32 | 1.48207 | 1.53257 |
| 15 | 16 | 153 | 137 | 36.519 | 32 | 0.374107 | 1.56824 |
| 16 | 16 | 162 | 146 | 36.4861 | 36 | 0.369296 | 1.56676 |
| 17 | 16 | 174 | 158 | 37.1627 | 48 | 0.73181 | 1.55813 |
| 18 | 16 | 179 | 163 | 36.2091 | 20 | 0.402144 | 1.57222 |
| 19 | 16 | 190 | 174 | 36.6186 | 44 | 0.37141 | 1.58182 |

2023-04-30T16:16:14.252493+0800 min lat: 0.366431 max lat: 5.00059 avg lat: 1.59628

| sec | Cur ops | started | finished | avg MB/s | cur MB/s | last lat(s) | avg lat(s) |
|---|---|---|---|---|---|---|---|
| 20 | 16 | 201 | 185 | 36.9871 | 44 | 0.707558 | 1.59628 |
| 21 | 16 | 212 | 196 | 37.3204 | 44 | 0.72207 | 1.59639 |
| 22 | 16 | 222 | 206 | 37.4418 | 40 | 4.26157 | 1.60271 |
| 23 | 16 | 231 | 215 | 37.3786 | 36 | 0.863178 | 1.59685 |
| 24 | 16 | 240 | 224 | 37.3208 | 36 | 0.703603 | 1.59669 |
| 25 | 16 | 248 | 232 | 37.1078 | 32 | 3.21437 | 1.601 |
| 26 | 16 | 260 | 244 | 37.5262 | 48 | 0.545021 | 1.58514 |
| 27 | 16 | 269 | 253 | 37.4695 | 36 | 4.48831 | 1.59588 |
| 28 | 16 | 277 | 261 | 37.2739 | 32 | 0.369147 | 1.59645 |
| 29 | 16 | 287 | 271 | 37.3677 | 40 | 0.732066 | 1.60558 |
| 30 | 16 | 295 | 279 | 37.1885 | 32 | 0.421207 | 1.61968 |
| 31 | 8 | 295 | 287 | 37.021 | 32 | 4.43585 | 1.63341 |
| 32 | 5 | 295 | 290 | 36.2391 | 12 | 4.24594 | 1.66179 |
| 33 | 3 | 295 | 292 | 35.3834 | 8 | 3.91917 | 1.67774 |

Total time run: 33.7845

Total reads made: 295

Read size: 4194304

Object size: 4194304

Bandwidth (MB/sec): 34.9272

Average IOPS: 8

Stddev IOPS: 2.41248

Max IOPS: 12

Min IOPS: 2

Average Latency(s): 1.70153

Max latency(s): 5.00059

Min latency(s): 0.366431

Uninstall commands

apt remove ceph-* --purge -y

rm osd.img

rm -rf /var/lib/ceph/

rm -rf /usr/share/ceph

reboot
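Below is a slightly more careful version of the uninstall, as a sketch. It stops the local daemons first and detaches the loop device before deleting the image file behind it. The package pattern is quoted so the shell does not expand it against files in the current directory. `uninstall_ceph_node` and `DRY_RUN` are hypothetical:

```bash
# Print commands instead of running them when DRY_RUN is set.
run() { if [ -n "${DRY_RUN:-}" ]; then printf '%s\n' "$*"; else "$@"; fi; }

# $1: OSD image file, $2: loop device backing the OSD
uninstall_ceph_node() {
  local img=$1 dev=$2
  run systemctl stop ceph.target            # stop every local Ceph daemon first
  run losetup -d "$dev"                     # detach the loop device before deleting its image
  run apt-get remove --purge -y 'ceph-*'    # quoted: no shell glob expansion
  run rm -f "$img"
  run rm -rf /var/lib/ceph /usr/share/ceph
}
```

Usage: `uninstall_ceph_node /root/osd.img /dev/loop5`, then reboot.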

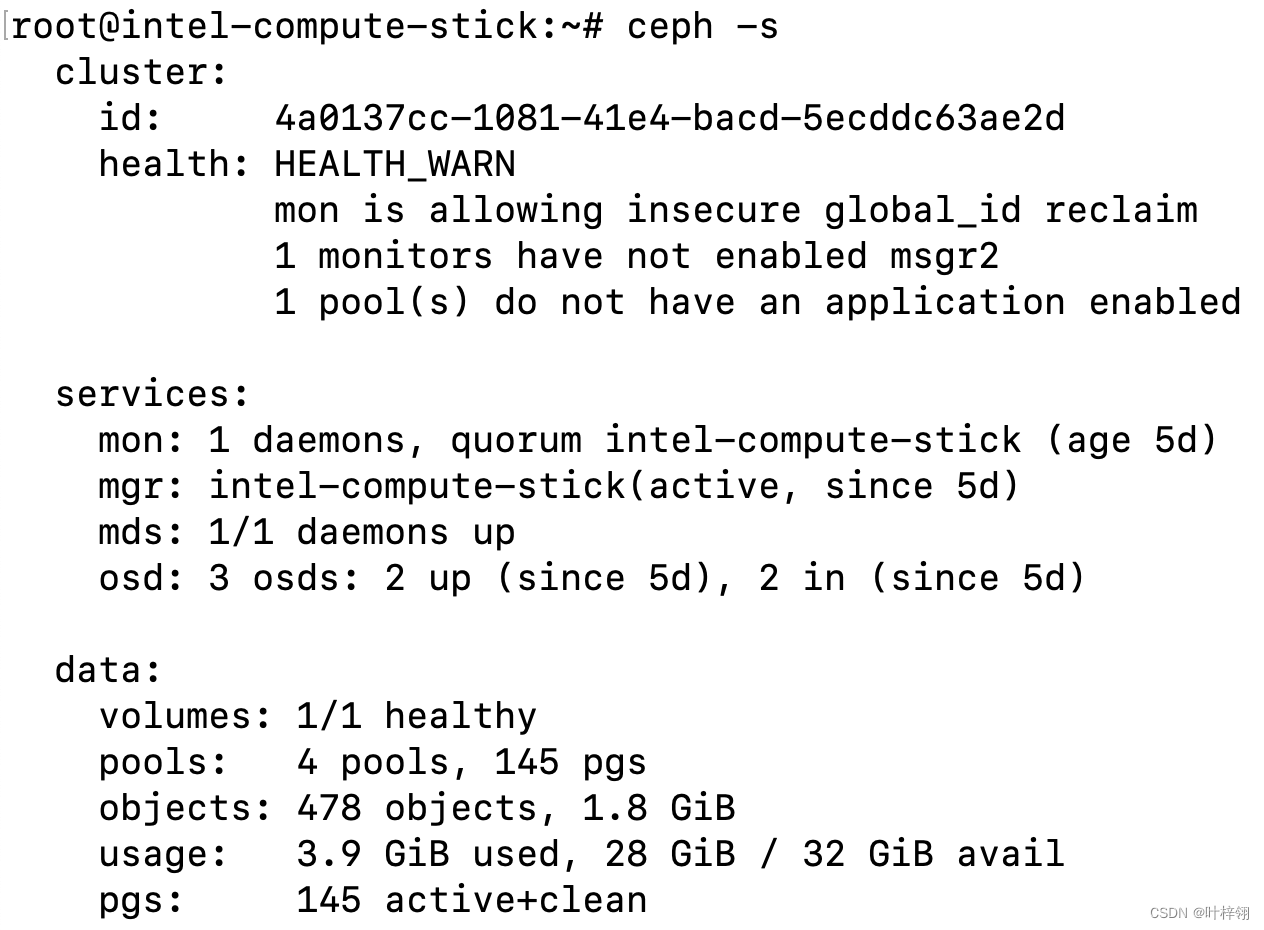

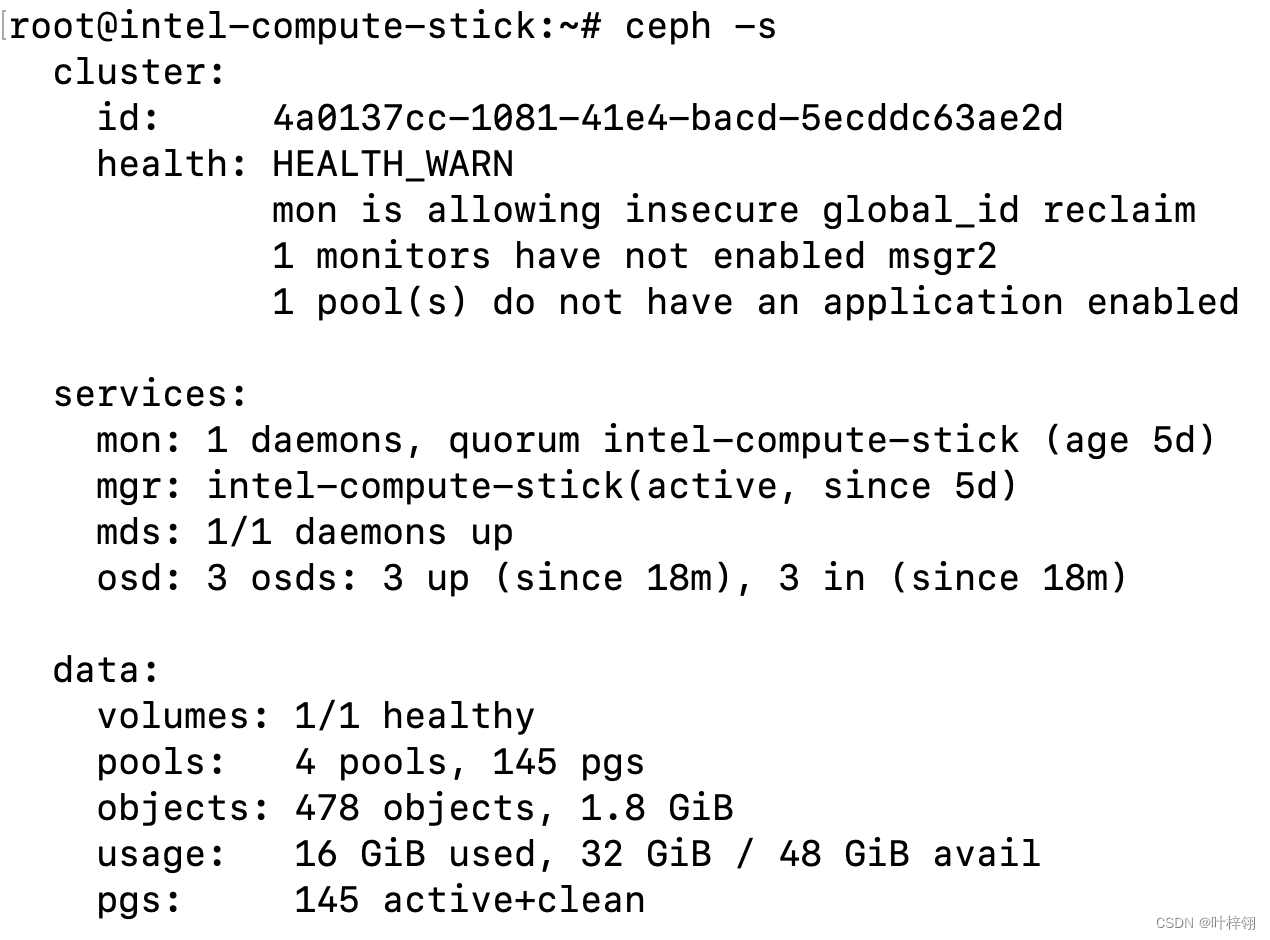

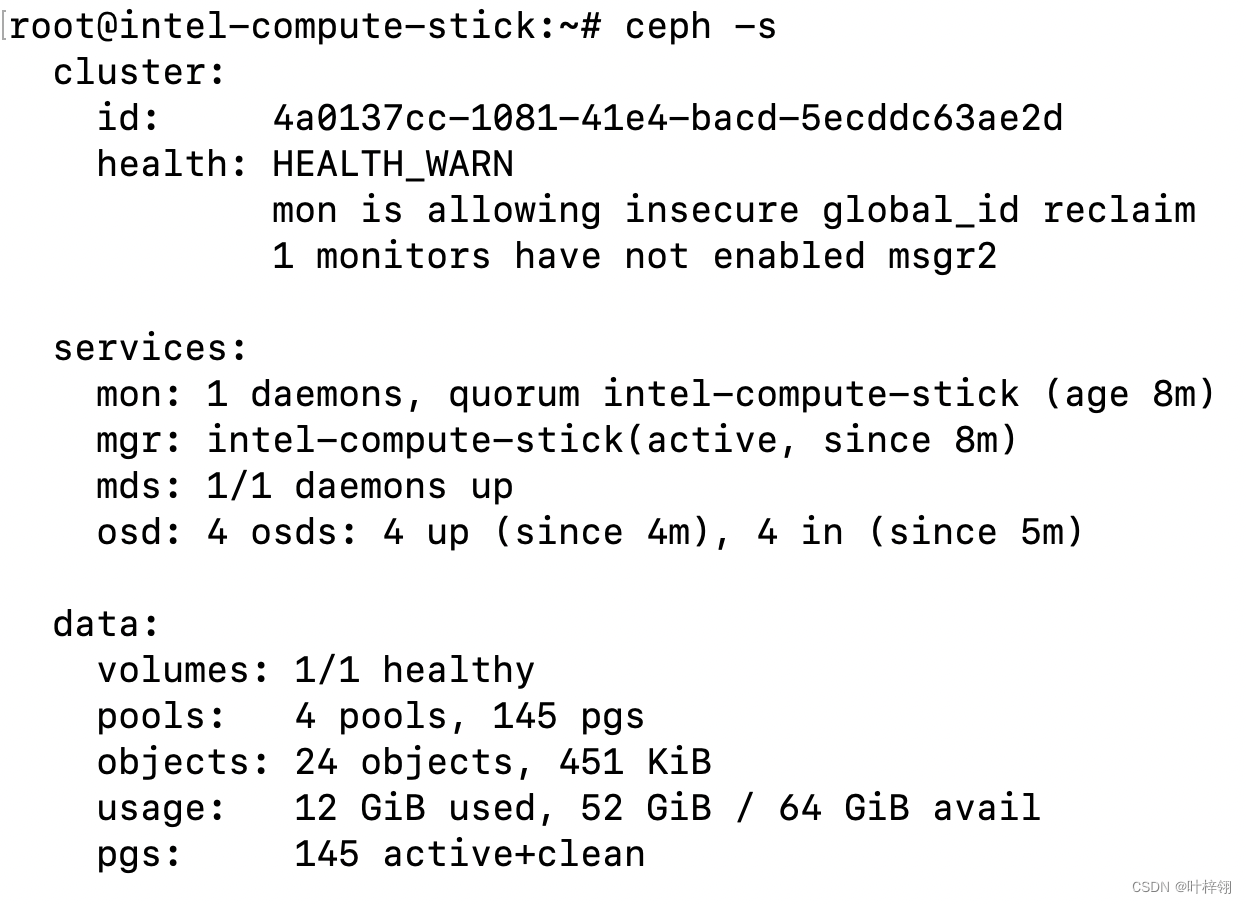

Bilibili video

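At home I built a Ceph cluster from four devices: an ARMv7 board with 512 MB of RAM, an ARM64 board with 1 GB, an AMD64 HTPC with 2 GB, and an Intel Compute Stick with 2 GB. The HTPC runs mon, mgr, mds and osd, and the other three devices run osd only. All of them are on 100 Mbps networking. My goal was to learn how to set up a Ceph cluster, and I was also curious about its performance. So I used an ARM64 board with Gigabit networking as the client, mounted CephFS on it and benchmarked it. Besides CephFS, I also benchmarked the cluster with rados. I measured performance with two, three and four nodes and compared the results. The cluster keeps two replicas. Overall, sequential reads and writes get faster as nodes are added, but with so few nodes there is no visible trend yet for random reads and writes.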

Video transcript

Building a Ceph cluster on low-end hardware: how does it perform? (distributed storage)

Last time I built a k8s cluster out of the e-waste lying around my home; this time I'm building a Ceph cluster.

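Ceph is an open-source distributed storage system. This time I used an ARMv7 board with only 512 MB of RAM and an ARM64 board with 1 GB (a Raspberry Pi 3B). I also used an Intel Compute Stick and an HTPC, both with 2 GB. The official documentation recommends at least 4 GB of RAM, but I built this cluster just for fun.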

I also wanted to use a board with 256 MB of RAM. Even after tuning, that was too little memory to run Ceph, so I had to give up on it.

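I deployed everything manually, following the official documentation. I started with an older release and hit a pile of pitfalls; after switching to the new release, things worked. The new release has plenty of bugs too, but none of them got in the way of my tests.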

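I used a two-replica configuration, and all storage nodes are on a 100 Mbps LAN. A separate ARM64 board on Gigabit LAN served as the client for benchmarking the cluster. First I mounted CephFS, the distributed file system built on Ceph. Reads and writes on the mount are distributed across all storage nodes, so in theory the more storage nodes there are, the faster reads and writes get.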

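I tested the cluster with two, three and four nodes. First I mounted CephFS and benchmarked it with fio. As the charts show, random read and write performance is fairly poor and barely depends on the node count, probably because the flash storage on the nodes is low quality. Sequential reads and writes, however, both improve as nodes are added, and with two and three nodes, sequential reads come close to saturating the storage nodes' bandwidth. I also benchmarked with rados. As the charts show, all of those results also increase with the node count, though not linearly.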

If you enjoyed this video, please like it, give it coins, favorite it and share it, and feel free to leave a comment.