Ceph Operations Notes

I. Basic Operations

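ceph osd tree //show all OSDs; the WEIGHT column is the weight used by the CRUSH algorithm: the larger it is, the more likely PGs are to be placed on that OSD

ceph -s //show overall cluster status; only HEALTH_OK means the cluster is healthy

ceph osd out osd.1 //mark osd.1 out of the cluster

ceph osd in osd.1 //bring the OSD that was marked out back into the cluster

ceph osd df tree //show more detailed per-OSD information (utilization and so on)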
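To make the effect of the CRUSH weight concrete, here is a rough sketch that turns a list of weights into expected shares. The function name weight_share and the sample weights are hypothetical. CRUSH placement is pseudo-random and follows the bucket hierarchy, so real PG counts only approximate these numbers.

```shell
# Hypothetical helper: reads "name weight" pairs on stdin and prints
# each name with weight / sum(weights) as a percentage.
weight_share() {
    awk '{ name[NR] = $1; w[NR] = $2; total += $2 }
         END {
             if (total <= 0) exit 1   # nothing sensible to print
             for (i = 1; i <= NR; i++)
                 printf "%s %.1f%%\n", name[i], 100 * w[i] / total
         }'
}

# Made-up weights: osd.2 has twice the weight of the others.
printf 'osd.0 1.0\nosd.1 1.0\nosd.2 2.0\n' | weight_share
```

With these sample weights, osd.2 would be expected to receive about half of the PGs.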

II. Troubleshooting

1. ceph-deploy mon create-initial fails

echo "deb [arch=$(dpkg --print-architecture) signed-by=/etc/apt/keyrings/docker.gpg] https://download.docker.com/linux/ubuntu $(lsb_release -cs) stable" | tee /etc/apt/sources.list.d/docker.list > /dev/null

Solution

2. OSD down (try restarting it first)
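For scripting the lookup of down OSDs, a minimal sketch that filters the plain-text output of ceph osd tree. The function name down_osds is hypothetical, and the column layout (an osd.N name followed directly by its status) is an assumption that may differ between releases; for real scripts, ceph osd tree -f json is likely more robust.

```shell
# Hypothetical helper: reads `ceph osd tree` text on stdin and prints the
# names of OSDs whose status column is "down".
# Assumes the status field directly follows the osd.N name field.
down_osds() {
    awk '{
        for (i = 1; i < NF; i++)
            if ($i ~ /^osd\.[0-9]+$/ && $(i + 1) == "down")
                print $i
    }'
}

# Made-up sample output, for illustration only.
sample='ID CLASS WEIGHT  TYPE NAME      STATUS REWEIGHT PRI-AFF
-1       0.02939 root default
-3       0.00980     host ceph0
 0   hdd 0.00980         osd.0      up  1.00000 1.00000
-5       0.00980     host ceph1
 1   hdd 0.00980         osd.1    down  1.00000 1.00000'

printf '%s\n' "$sample" | down_osds
```

On a live cluster this would be used as: ceph osd tree | down_osds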

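ceph osd tree //first find the id of the OSD that is down; suppose it is osd.1

ceph osd out osd.1 //run on the deploy node; first mark osd.1 out of the cluster

systemctl stop ceph-osd@1.service

ceph-osd -i 1 //run on the node that hosts the OSD

3. "Resource temporarily unavailable" and "is another process using it?"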

[ceph1][WARNIN] E: Could not get lock /var/lib/dpkg/lock-frontend - open (11: Resource temporarily unavailable)

[ceph1][WARNIN] E: Unable to acquire the dpkg frontend lock (/var/lib/dpkg/lock-frontend), is another process using it?

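sudo rm /var/lib/dpkg/lock //delete the lock file directly; first make sure no apt or dpkg process is still running. The errors above name /var/lib/dpkg/lock-frontend, so that file may be the one that needs removing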
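Before deleting anything, it can help to confirm which lock file the log actually complains about. A small sketch that extracts the dpkg lock paths from ceph-deploy output; the function name lock_paths is hypothetical, and the pattern only covers paths under /var/lib/dpkg.

```shell
# Hypothetical helper: reads log text on stdin and prints each distinct
# dpkg lock path mentioned in it (grep -o prints only the matched part).
lock_paths() {
    grep -o '/var/lib/dpkg/lock[-a-z]*' | sort -u
}

# The two warning lines recorded in these notes.
log='[ceph1][WARNIN] E: Could not get lock /var/lib/dpkg/lock-frontend - open (11: Resource temporarily unavailable)
[ceph1][WARNIN] E: Unable to acquire the dpkg frontend lock (/var/lib/dpkg/lock-frontend), is another process using it?'

printf '%s\n' "$log" | lock_paths
```

If fuser is available on the node, running sudo fuser -v on the printed path should show whether a process still holds the lock; waiting for that process to finish is usually safer than removing the file.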

4. Restarting the OSD does not help: delete the OSD and recreate it

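ceph osd out 1 //mark osd.1 out of the cluster

Run ceph auth del osd.1 and ceph osd rm 1. The OSD is now removed from the cluster, but its original data and journal directories are still there, so the data still exists.

Run umount /dev/sdb, then run ceph-disk zap /dev/sdb to wipe the data as well.

When creating the new OSD afterwards, it must be created on an empty disk.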
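The removal steps above can be collected into a dry-run helper that only prints the commands for review. This is a sketch: the function name osd_removal_steps is hypothetical, and it prints exactly the commands recorded in these notes without executing them. ceph-disk is not available on newer Ceph releases, where ceph-volume lvm zap is the usual replacement.

```shell
# Hypothetical dry-run helper: prints (does not execute) the removal
# commands from these notes for a given OSD id and device.
osd_removal_steps() {
    id=$1
    dev=$2
    # The OSD id must be a plain non-negative integer.
    case $id in
        ''|*[!0-9]*) echo "usage: osd_removal_steps <osd-id> <device>" >&2; return 1 ;;
    esac
    [ -n "$dev" ] || { echo "missing device" >&2; return 1; }
    cat <<EOF
ceph osd out $id
ceph auth del osd.$id
ceph osd rm $id
umount $dev
ceph-disk zap $dev
EOF
}

# Values taken from the example in these notes.
osd_removal_steps 1 /dev/sdb
```

Printing first makes it easier to double-check the OSD id and the device before anything destructive is run.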

ceph-deploy osd create --data /dev/vdc ceph1

5. application not enabled on 1 pool(s)

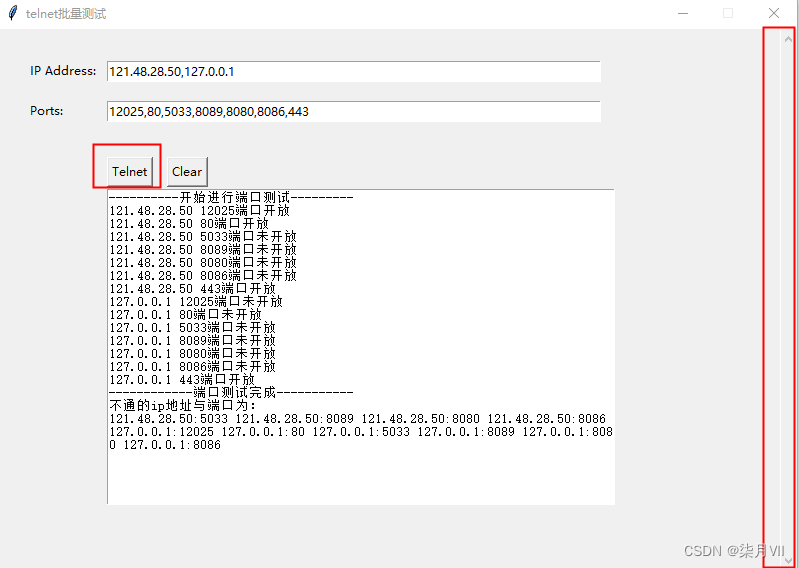

root@ceph0:~/ceph-deploy# ceph -s
  cluster:
    id:     e34e62c3-d8a7-484e-8d46-4707b03b8f71
    health: HEALTH_WARN
            application not enabled on 1 pool(s)
            clock skew detected on mon.ceph2
  services:
    mon: 3 daemons, quorum ceph0,ceph1,ceph2
    mgr: ceph2(active), standbys: ceph1, ceph0
    osd: 3 osds: 3 up, 3 in
    rgw: 1 daemon active
  data:
    pools:   5 pools, 160 pgs
    objects: 188 objects, 1.2 KiB
    usage:   3.0 GiB used, 27 GiB / 30 GiB avail
    pgs:     160 active+clean

root@ceph0:~/ceph-deploy# ceph health detail

HEALTH_WARN application not enabled on 1 pool(s)

POOL_APP_NOT_ENABLED application not enabled on 1 pool(s)

application not enabled on pool 'testPool'

use 'ceph osd pool application enable <pool-name> <app-name>', where <app-name> is 'cephfs', 'rbd', 'rgw', or freeform for custom applications.

ceph health detail //shows that the newly added pool testPool has not been tagged with an application; because an RGW instance was added earlier, follow the hint and tag testPool with rgw:

root@ceph0:~/ceph-deploy# ceph osd pool application enable testPool rgw

enabled application 'rgw' on pool 'testPool'
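When several pools are affected, the fix can be derived from the health output instead of being typed by hand. A minimal sketch, assuming the message format shown above; the function name suggest_app_enable is hypothetical, and it only prints the commands so they can be reviewed first.

```shell
# Hypothetical helper: reads `ceph health detail` text on stdin and prints
# one "ceph osd pool application enable" command per untagged pool.
# $1 is the application name (for example rgw, rbd or cephfs); it is
# assumed to contain no "/" characters, since it is spliced into sed.
suggest_app_enable() {
    app=$1
    sed -n "s/.*application not enabled on pool '\([^']*\)'.*/ceph osd pool application enable \1 $app/p"
}

# The health detail text recorded in these notes.
detail="HEALTH_WARN application not enabled on 1 pool(s)
POOL_APP_NOT_ENABLED application not enabled on 1 pool(s)
    application not enabled on pool 'testPool'
    use 'ceph osd pool application enable <pool-name> <app-name>', where <app-name> is 'cephfs', 'rbd', 'rgw', or freeform for custom applications."

printf '%s\n' "$detail" | suggest_app_enable rgw
```

On a live cluster the input would come from: ceph health detail | suggest_app_enable rgw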