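Contents

Installing a k8s cluster with kubeadm:

Initialization:

On every master and worker node:

Upgrading the kernel:

Installing docker on all nodes:

Installing kubeadm, kubelet and kubectl on all nodes:

After editing kubeadm-config.yaml, copy it to the other master nodes and pull the images on all master nodes first:

Editing the controller-manager and scheduler manifests:

Deploying the dashboard: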

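Installing a k8s cluster with kubeadm:

Environment: 2 masters and 2 worker nodes

master01, master02: 192.168.233.10, 192.168.233.20

node01, node02: 192.168.233.30, 192.168.233.40

Load balancers: 192.168.233.50, 192.168.233.60

Notes:

Each master node needs at least 2 CPU cores.

The newest release is not necessarily the best choice: compared with older releases its core features are stable, but newly added features and APIs are relatively unstable.

Once you have learned the high-availability deployment for one version, the steps for other versions are much the same.

Upgrade the hosts to CentOS 7.9 where possible.

Upgrade the kernel to a stable release, 4.19 or later.

When choosing a k8s version, prefer a patch release of 5 or higher, i.e. 1.xx.5 and up (these are generally the more stable releases).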

Initialization:

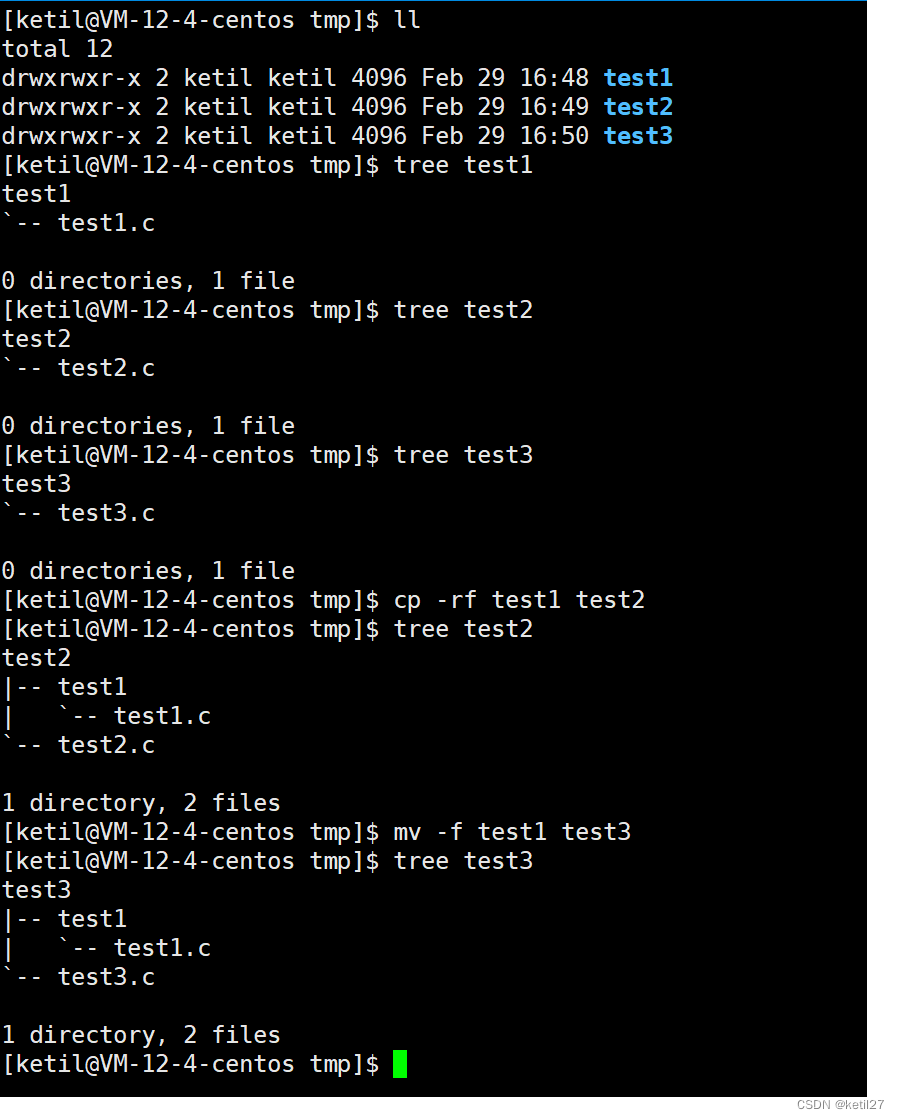

On every master and worker node:

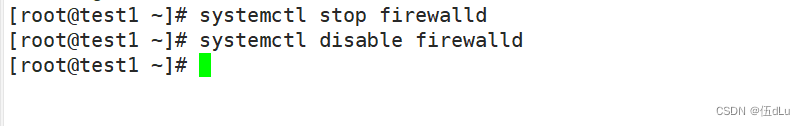

systemctl stop firewalld

systemctl disable firewalld

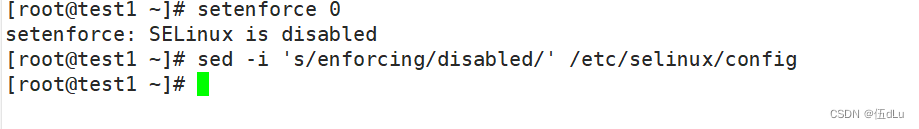

setenforce 0

sed -i 's/enforcing/disabled/' /etc/selinux/config

iptables -F && iptables -t nat -F && iptables -t mangle -F && iptables -X

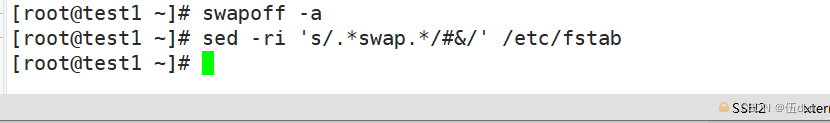

swapoff -a

sed -ri 's/.*swap.*/#&/' /etc/fstab
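To confirm that the two swap commands above took effect, one option is to check that no swap device is active and that no uncommented swap line is left in the fstab. This is a minimal sketch: the function names `active_swap_count` and `uncommented_swap_lines` are made up for illustration, and the file arguments default to /proc/swaps and /etc/fstab.

```shell
# Count active swap devices.
# /proc/swaps has one header line, then one line per active device.
active_swap_count() {
    local swaps_file="${1:-/proc/swaps}"
    awk 'NR > 1 { n++ } END { print n + 0 }' "$swaps_file"
}

# Print fstab lines that mention swap and are NOT commented out.
# Empty output means swap will stay off after a reboot.
uncommented_swap_lines() {
    local fstab_file="${1:-/etc/fstab}"
    grep -E '^[^#]*swap' "$fstab_file" || true
}

# Usage on a node:
#   active_swap_count          # expected to print 0 after swapoff -a
#   uncommented_swap_lines     # expected to print nothing after the sed
```

kubelet refuses to start with swap enabled by default, so both checks are worth running before continuing.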

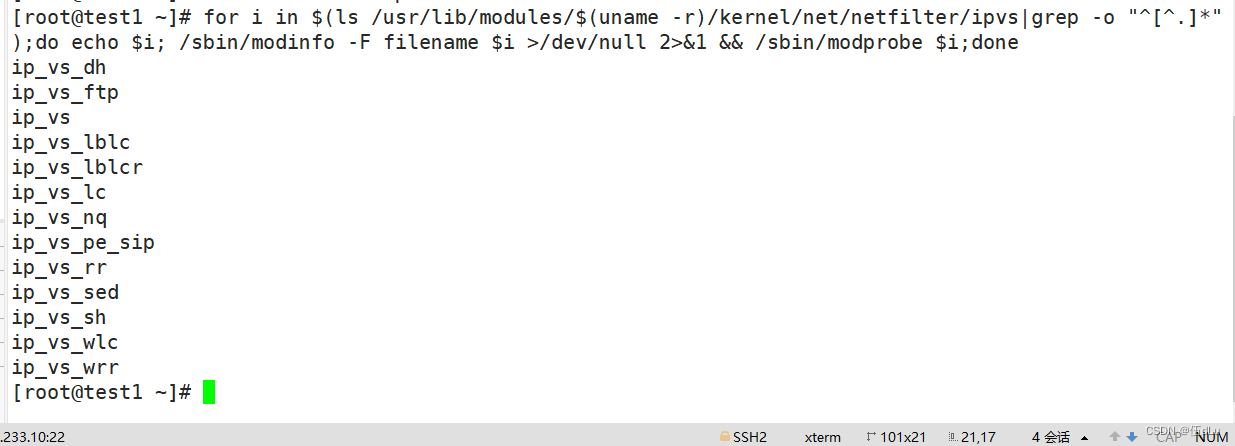

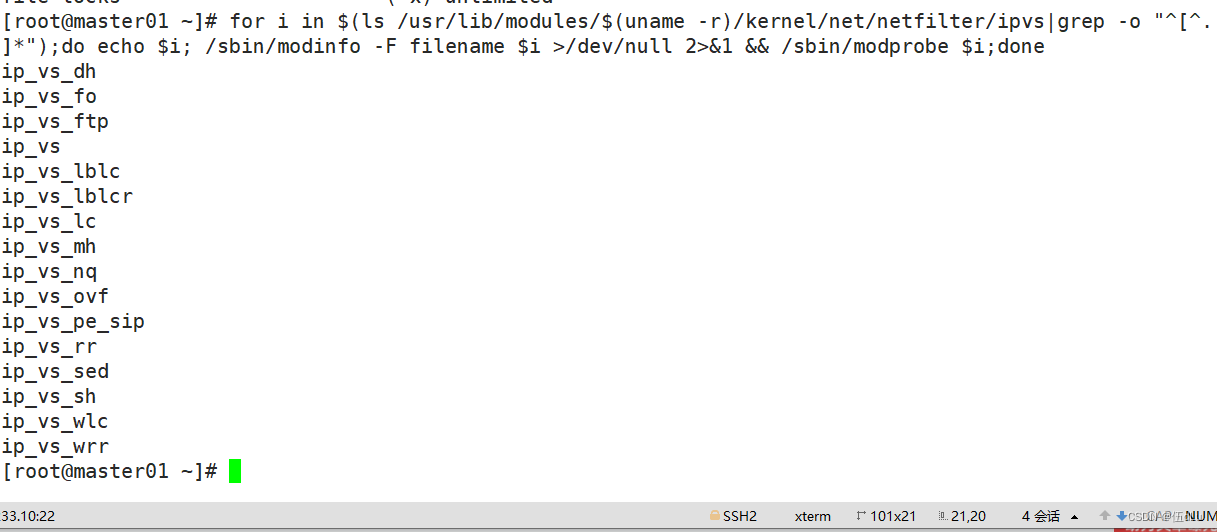

for i in $(ls /usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs|grep -o "^[^.]*");do echo $i; /sbin/modinfo -F filename $i >/dev/null 2>&1 && /sbin/modprobe $i;done

Set the hostname (on each node, run only the command that matches that node):

hostnamectl set-hostname master01

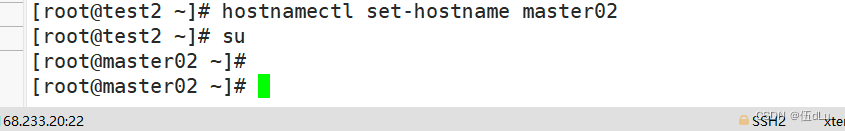

hostnamectl set-hostname master02

hostnamectl set-hostname node01

hostnamectl set-hostname node02

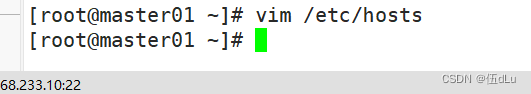

vim /etc/hosts

192.168.233.10 master01

192.168.233.20 master02

192.168.233.30 node01

192.168.233.40 node02
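Instead of editing the file by hand on four machines, the same entries could be appended by a small script that is safe to run more than once. A sketch only; `add_host_entry` is a hypothetical helper, not part of any tool used in this guide.

```shell
# Append "ip name" to a hosts file only if that hostname is not already listed.
add_host_entry() {
    local hosts_file="$1" ip="$2" name="$3"
    if ! grep -qE "[[:space:]]${name}([[:space:]]|\$)" "$hosts_file"; then
        printf '%s %s\n' "$ip" "$name" >> "$hosts_file"
    fi
}

# Usage on a real node (run as root):
#   add_host_entry /etc/hosts 192.168.233.10 master01
#   add_host_entry /etc/hosts 192.168.233.20 master02
#   add_host_entry /etc/hosts 192.168.233.30 node01
#   add_host_entry /etc/hosts 192.168.233.40 node02
```

Running it twice leaves a single line per host, which avoids the duplicate entries that a plain `cat >> /etc/hosts` would create.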

cat > /etc/sysctl.d/kubernetes.conf << EOF

#Enable bridge mode so that bridged traffic is passed to the iptables chains

net.bridge.bridge-nf-call-ip6tables=1

net.bridge.bridge-nf-call-iptables=1

#Disable the IPv6 protocol

net.ipv6.conf.all.disable_ipv6=1

net.ipv4.ip_forward=1

EOF

sysctl --system
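After `sysctl --system`, it can help to verify that the kernel really holds the values from the file. The sketch below parses a sysctl conf file and compares each key with the live value; `sysctl_conf_pairs` and `check_sysctl_conf` are illustrative names, not existing commands.

```shell
# Print "key value" pairs from a sysctl conf file,
# skipping "#" comments and blank lines.
sysctl_conf_pairs() {
    local conf_file="$1"
    sed -e 's/[[:space:]]*#.*$//' -e '/^[[:space:]]*$/d' "$conf_file" |
        awk -F'=' '{ gsub(/[ \t]/, "", $1); gsub(/[ \t]/, "", $2); print $1, $2 }'
}

# Compare each expected value with the live kernel value and report mismatches.
# Prints nothing when every key matches.
check_sysctl_conf() {
    local conf_file="$1" key want have
    sysctl_conf_pairs "$conf_file" | while read -r key want; do
        have=$(sysctl -n "$key" 2>/dev/null)
        [ "$have" = "$want" ] || echo "MISMATCH $key: want=$want have=${have:-unset}"
    done
}

# Usage on a node:
#   check_sysctl_conf /etc/sysctl.d/kubernetes.conf
```

If the two net.bridge keys are reported as unset, the br_netfilter kernel module is most likely not loaded; `modprobe br_netfilter` followed by another `sysctl --system` should make them appear.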

yum -y install ntpdate

ntpdate ntp.aliyun.com

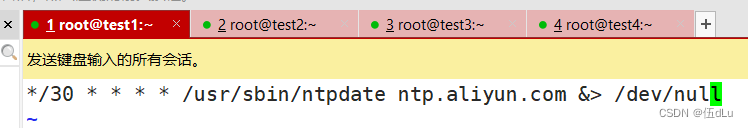

crontab -e

*/30 * * * * /usr/sbin/ntpdate ntp.aliyun.com &> /dev/null


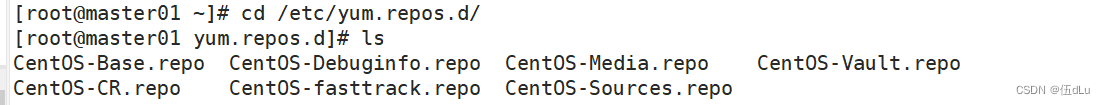

Upgrading the kernel:

Create a repo file for elrepo under /etc/yum.repos.d/ with the following content:

[elrepo]

name=elrepo

baseurl=https://mirrors.aliyun.com/elrepo/archive/kernel/el7/x86_64

gpgcheck=0

enabled=1

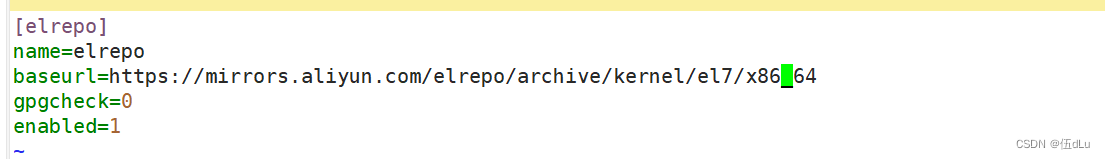

Upgrade the kernel on all nodes:

yum install -y kernel-lt-devel kernel-lt

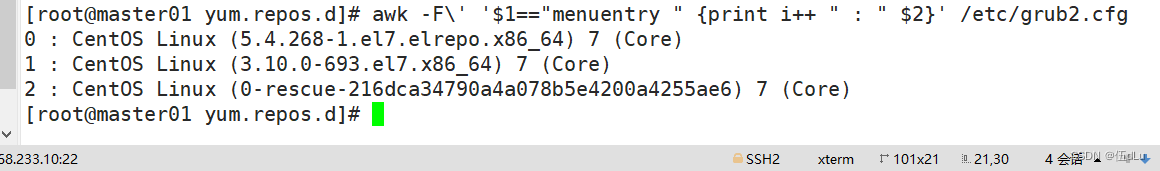

awk -F\' '$1=="menuentry " {print i++ " : " $2}' /etc/grub2.cfg

Set the default boot kernel (index 0 is the first entry printed by the awk command above):

grub2-set-default 0
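The awk one-liner above numbers the GRUB menu entries from 0, and that number is what `grub2-set-default` expects. A small wrapper makes the behaviour easy to try on any grub.cfg; `list_menuentries` is a hypothetical name, and the kernel version strings in the comments are examples only.

```shell
# List GRUB menu entries with their zero-based index.
# Splits each line on single quotes, so $2 is the entry title.
list_menuentries() {
    local grub_cfg="${1:-/etc/grub2.cfg}"
    awk -F\' '$1 == "menuentry " { printf "%d : %s\n", i++, $2 }' "$grub_cfg"
}

# Usage on a node (run as root):
#   list_menuentries
#   # Example output shape (versions will differ):
#   #   0 : CentOS Linux (<new kernel-lt version>) 7 (Core)
#   #   1 : CentOS Linux (<old 3.10 kernel>) 7 (Core)
#   grub2-set-default 0
```

A freshly installed kernel-lt package usually lands at index 0, but it is worth reading the list before setting the default rather than assuming so.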

Raise the resource limits (open files, processes, locked memory):

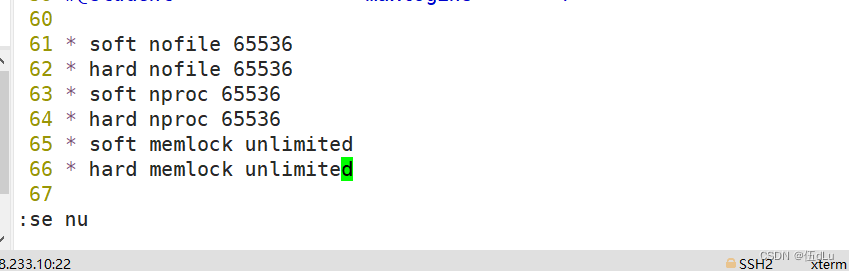

vim /etc/security/limits.conf

* soft nofile 65536

* hard nofile 65536

* soft nproc 65536

* hard nproc 65536

* soft memlock unlimited

* hard memlock unlimited

Reboot:

reboot

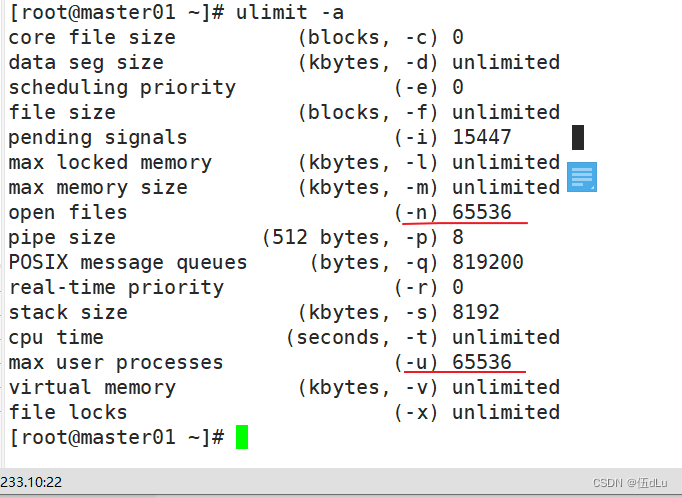

ulimit -a
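`ulimit -a` prints every limit; to check just the open-file limit against the value written to limits.conf, a one-function sketch such as the following could be used (`nofile_at_least` is an illustrative name).

```shell
# Succeed if the current shell's open-file limit is at least the given value.
nofile_at_least() {
    local want="$1" have
    have=$(ulimit -n)
    [ "$have" = "unlimited" ] || [ "$have" -ge "$want" ]
}

# Usage after logging in again following the reboot:
#   nofile_at_least 65536 && echo "nofile limit OK" || echo "nofile limit too low"
```

Limits from limits.conf are applied at login, so the check only reflects the new values in a session started after the change.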

for i in $(ls /usr/lib/modules/$(uname -r)/kernel/net/netfilter/ipvs|grep -o "^[^.]*");do echo $i; /sbin/modinfo -F filename $i >/dev/null 2>&1 && /sbin/modprobe $i;done

Installing docker on all nodes:

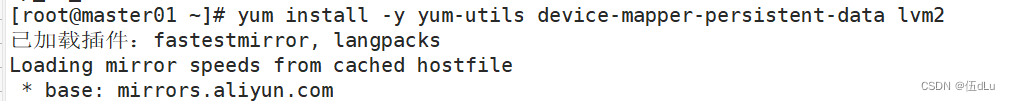

yum install -y yum-utils device-mapper-persistent-data lvm2

yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

yum install -y docker-ce docker-ce-cli containerd.io

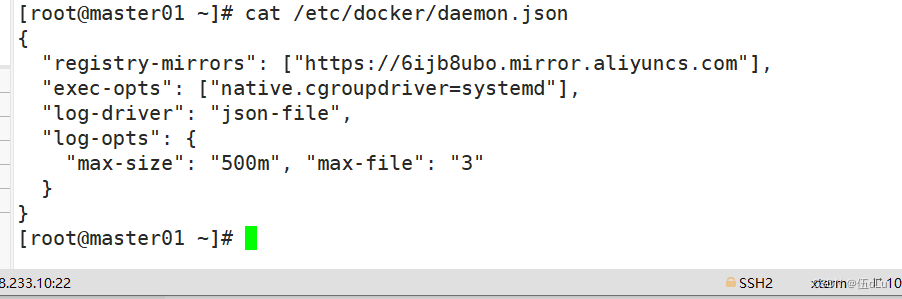

cat > /etc/docker/daemon.json <<EOF

{

"registry-mirrors": ["https://6ijb8ubo.mirror.aliyuncs.com"],

"exec-opts": ["native.cgroupdriver=systemd"],

"log-driver": "json-file",

"log-opts": {

"max-size": "500m", "max-file": "3"

}

}

EOF

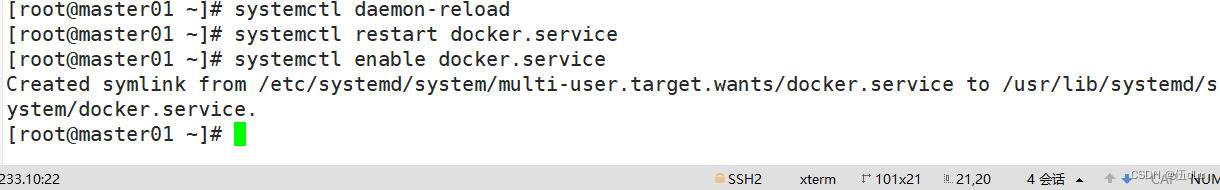

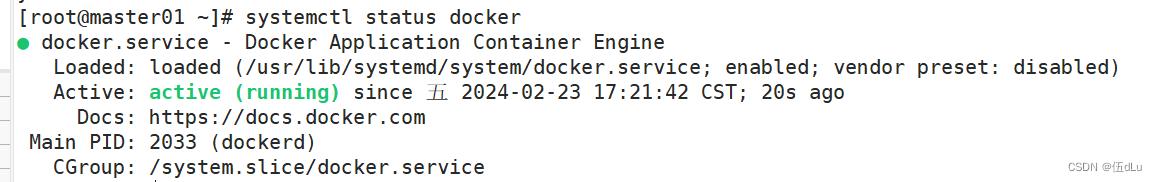

systemctl daemon-reload

systemctl restart docker.service

systemctl enable docker.service

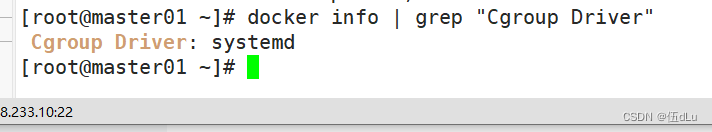

docker info | grep "Cgroup Driver"
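The grep above should report systemd, matching the `native.cgroupdriver=systemd` setting in daemon.json; kubelet has to use the same driver. For use in a script, the value can be extracted and compared rather than read by eye. A minimal sketch, with `cgroup_driver_from_info` as a made-up helper name:

```shell
# Read `docker info` output on stdin and print the cgroup driver name.
cgroup_driver_from_info() {
    awk -F': *' '/Cgroup Driver/ { print $2 }'
}

# Usage on a node with docker running:
#   driver=$(docker info 2>/dev/null | cgroup_driver_from_info)
#   [ "$driver" = "systemd" ] && echo "cgroup driver OK" || echo "unexpected driver: $driver"
```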

Installing kubeadm, kubelet and kubectl on all nodes:

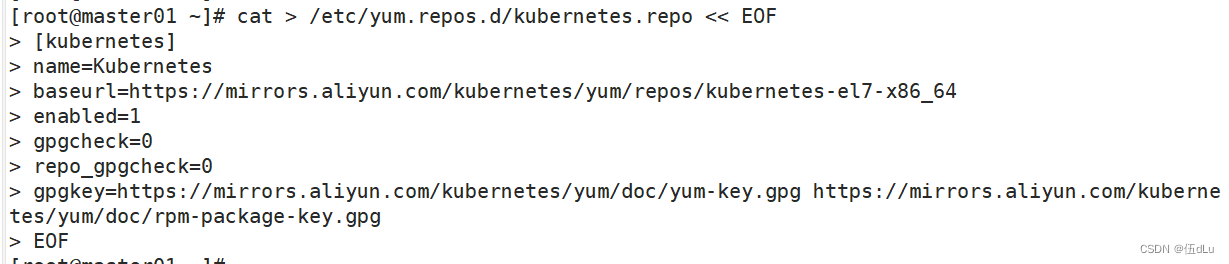

Define the Kubernetes yum repository:

cat > /etc/yum.repos.d/kubernetes.repo << EOF

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

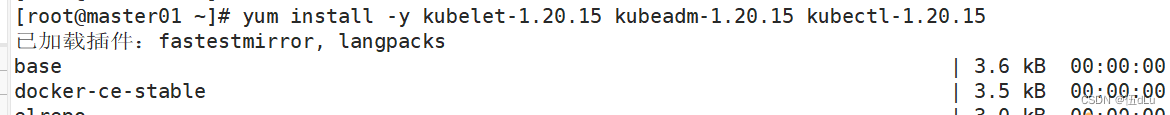

yum install -y kubelet-1.20.15 kubeadm-1.20.15 kubectl-1.20.15

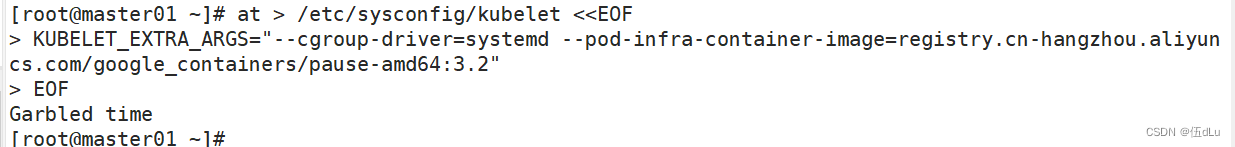

Configure kubelet to use the pause image from Alibaba Cloud:

cat > /etc/sysconfig/kubelet <<EOF

KUBELET_EXTRA_ARGS="--cgroup-driver=systemd --pod-infra-container-image=registry.cn-hangzhou.aliyuncs.com/google_containers/pause-amd64:3.2"

EOF

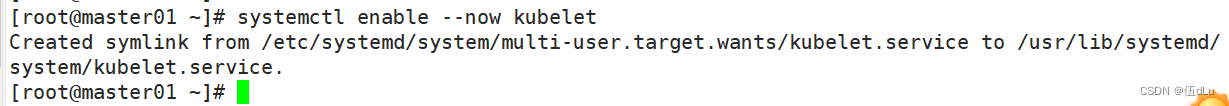

Enable kubelet at boot:

systemctl enable --now kubelet

Deploy nginx and keepalived on the load balancers (same as for the binary installation):

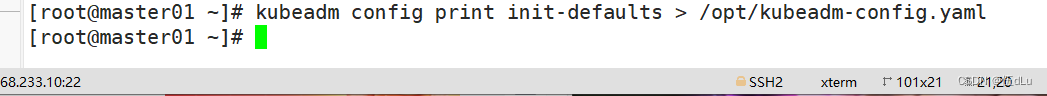

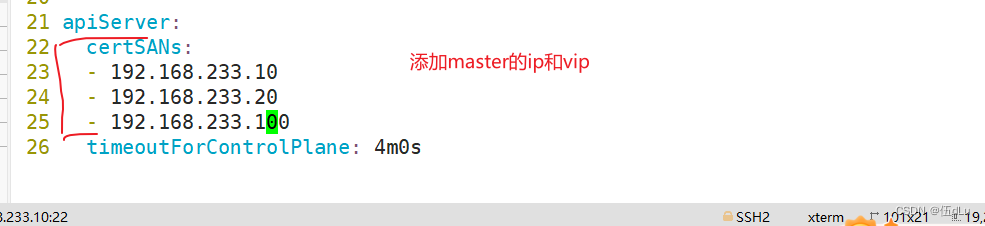

On master01, generate the cluster initialization configuration file:

kubeadm config print init-defaults > /opt/kubeadm-config.yaml

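Changes to make:

Optional: if you have an external etcd cluster, add the block below under the etcd: key; otherwise keep the built-in local section:

external:

  endpoints:

  - https://192.168.233.10:2379

  - https://192.168.233.20:2379

  caFile: /opt/etcd/ssl/ca.pem

  certFile: /opt/etcd/ssl/server.pem

  keyFile: /opt/etcd/ssl/server-key.pem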

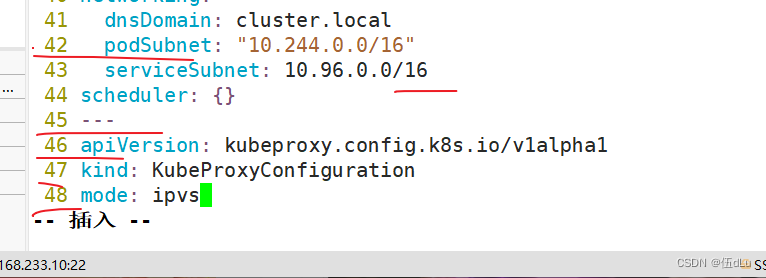

podSubnet: "10.244.0.0/16"

---

apiVersion: kubeproxy.config.k8s.io/v1alpha1

kind: KubeProxyConfiguration

mode: ipvs
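Because the fragments above are shown without their surrounding keys, the sketch below writes out one possible complete file so that the nesting is visible. It is an illustration under stated assumptions, not the author's exact file: the `controlPlaneEndpoint` uses the 192.168.233.100:6443 address that appears in the join commands later in this guide, the `imageRepository` is assumed from the Alibaba Cloud pause image used for kubelet, and the built-in local etcd is kept. `write_kubeadm_config_sketch` is a hypothetical helper name.

```shell
# Write an illustrative kubeadm v1.20 config to the given path.
# ASSUMPTIONS (not confirmed by the guide): controlPlaneEndpoint is the
# load-balancer VIP, and imageRepository points at the Alibaba Cloud mirror.
write_kubeadm_config_sketch() {
    local out_file="$1"
    cat > "$out_file" <<'EOF'
apiVersion: kubeadm.k8s.io/v1beta2
kind: InitConfiguration
localAPIEndpoint:
  advertiseAddress: 192.168.233.10
  bindPort: 6443
---
apiVersion: kubeadm.k8s.io/v1beta2
kind: ClusterConfiguration
kubernetesVersion: v1.20.15
controlPlaneEndpoint: "192.168.233.100:6443"
imageRepository: registry.cn-hangzhou.aliyuncs.com/google_containers
networking:
  dnsDomain: cluster.local
  serviceSubnet: 10.96.0.0/12
  podSubnet: "10.244.0.0/16"
etcd:
  local:
    dataDir: /var/lib/etcd
---
apiVersion: kubeproxy.config.k8s.io/v1alpha1
kind: KubeProxyConfiguration
mode: ipvs
EOF
}

# Usage: write to a scratch path and diff against the real file before copying anything over.
#   write_kubeadm_config_sketch /tmp/kubeadm-config-sketch.yaml
#   diff /tmp/kubeadm-config-sketch.yaml /opt/kubeadm-config.yaml
```

The podSubnet of 10.244.0.0/16 is the range flannel uses by default, so it should match whatever the network plugin manifest expects.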

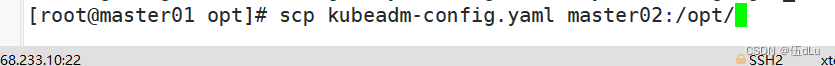

After editing kubeadm-config.yaml, copy it to the other master nodes and pull the images on all master nodes first:

scp /opt/kubeadm-config.yaml master02:/opt/

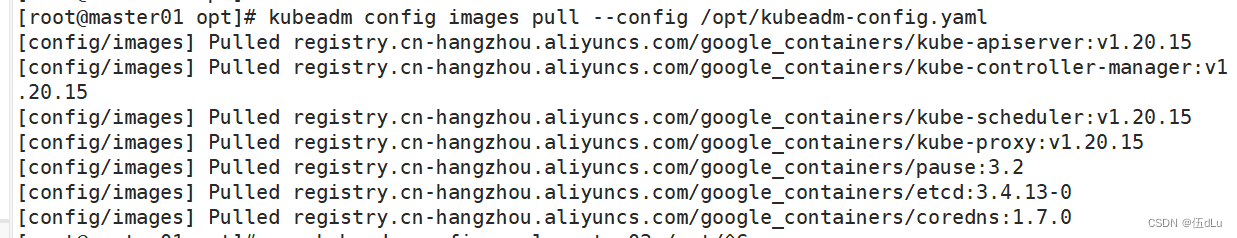

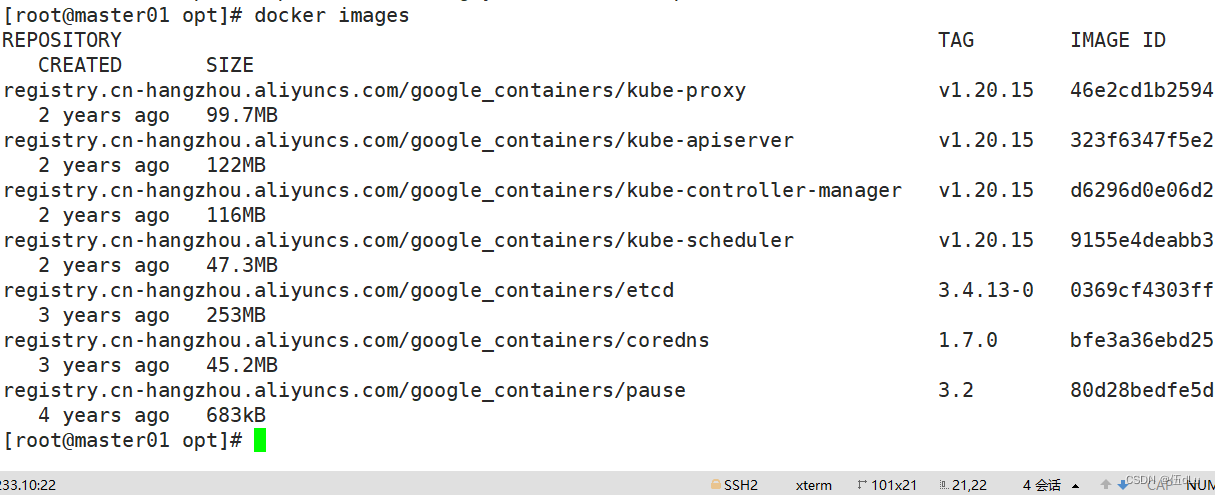

kubeadm config images pull --config /opt/kubeadm-config.yaml

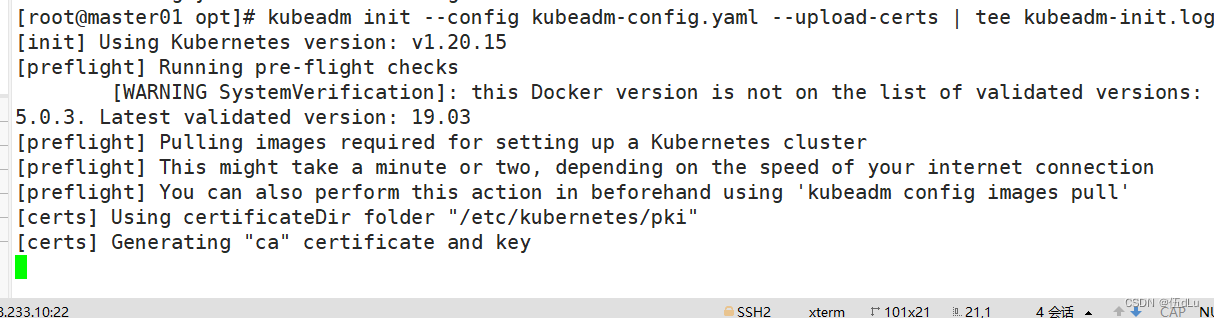

Initialize the cluster on master01:

kubeadm init --config /opt/kubeadm-config.yaml --upload-certs | tee kubeadm-init.log

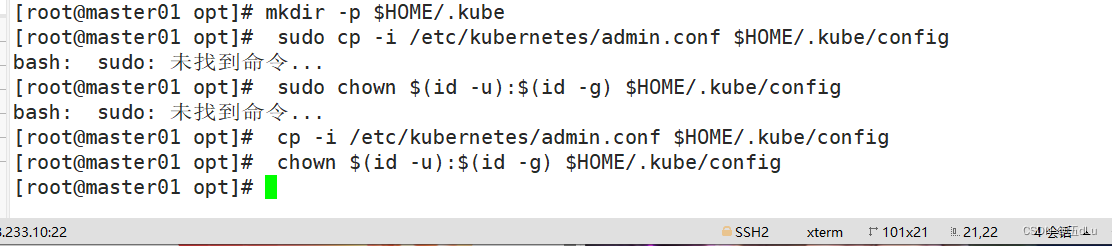

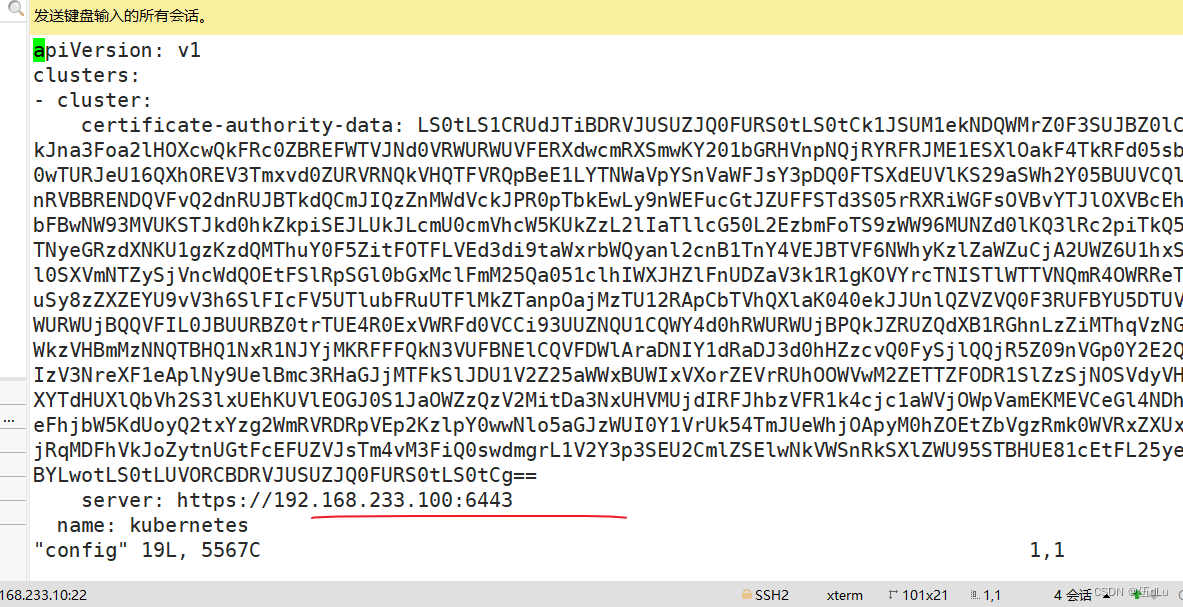

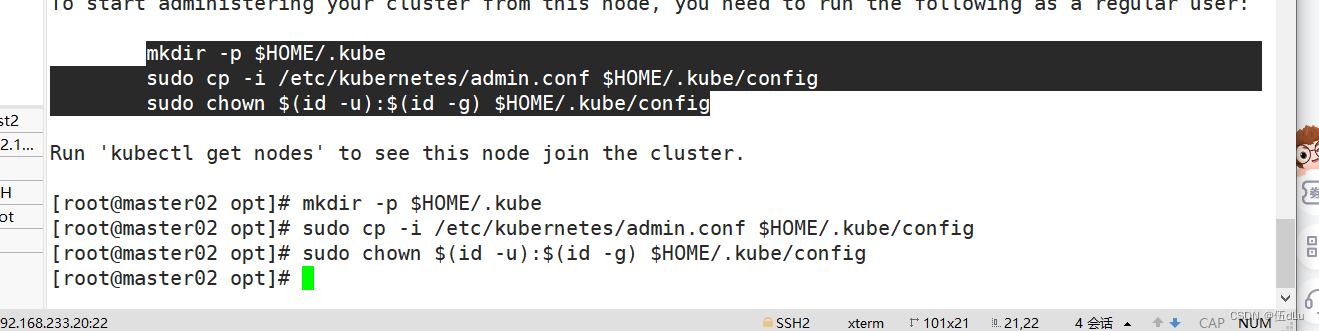

Configure kubectl:

mkdir -p $HOME/.kube

cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

chown $(id -u):$(id -g) $HOME/.kube/config

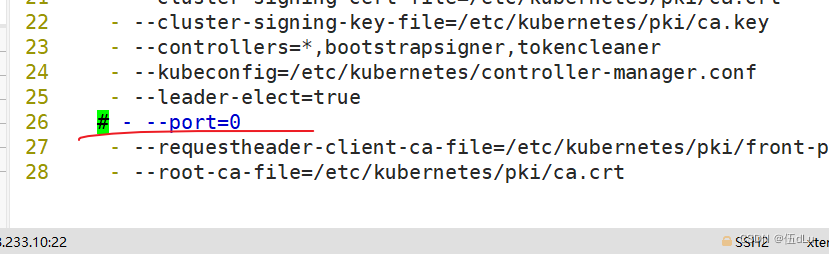

Editing the controller-manager and scheduler manifests:

vim /etc/kubernetes/manifests/kube-scheduler.yaml

vim /etc/kubernetes/manifests/kube-controller-manager.yaml

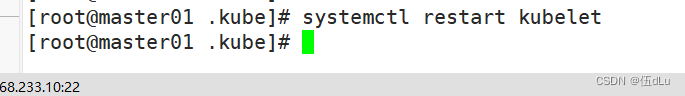

systemctl restart kubelet
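The exact edit is only shown in the screenshot. On kubeadm 1.20 the usual reason to touch these two manifests is the `- --port=0` argument, which makes `kubectl get cs` report the scheduler and controller-manager as unhealthy; the sketch below assumes that this is the change intended here. `comment_out_port_zero` is a hypothetical helper, and it relies on GNU sed's `-i` option.

```shell
# Comment out the "- --port=0" argument in a static pod manifest,
# keeping the original indentation. ASSUMPTION: this is the intended edit.
comment_out_port_zero() {
    local manifest="$1"
    sed -i 's/^\([[:space:]]*\)\(- --port=0\)[[:space:]]*$/\1# \2/' "$manifest"
}

# Usage on each master node (run as root):
#   comment_out_port_zero /etc/kubernetes/manifests/kube-scheduler.yaml
#   comment_out_port_zero /etc/kubernetes/manifests/kube-controller-manager.yaml
#   systemctl restart kubelet
#   kubectl get cs
```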

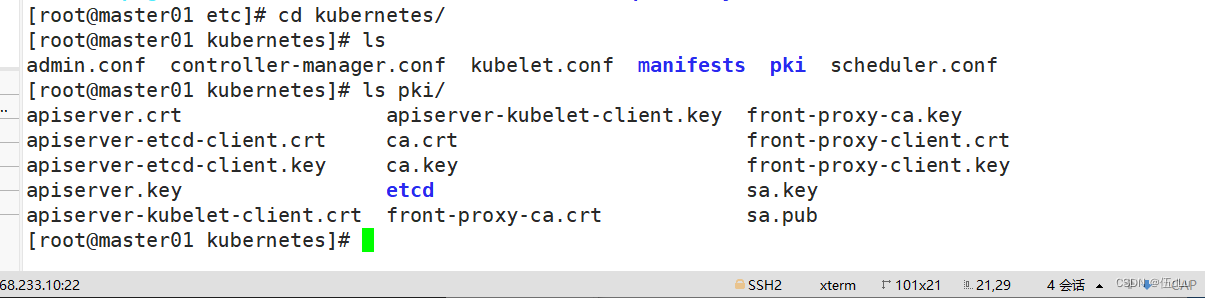

After initialization, files are generated under /etc/kubernetes/.

Repeat the steps above on the other nodes.

If initialization fails, run the following:

kubeadm reset -f

ipvsadm --clear

rm -rf ~/.kube

Then run the initialization again.

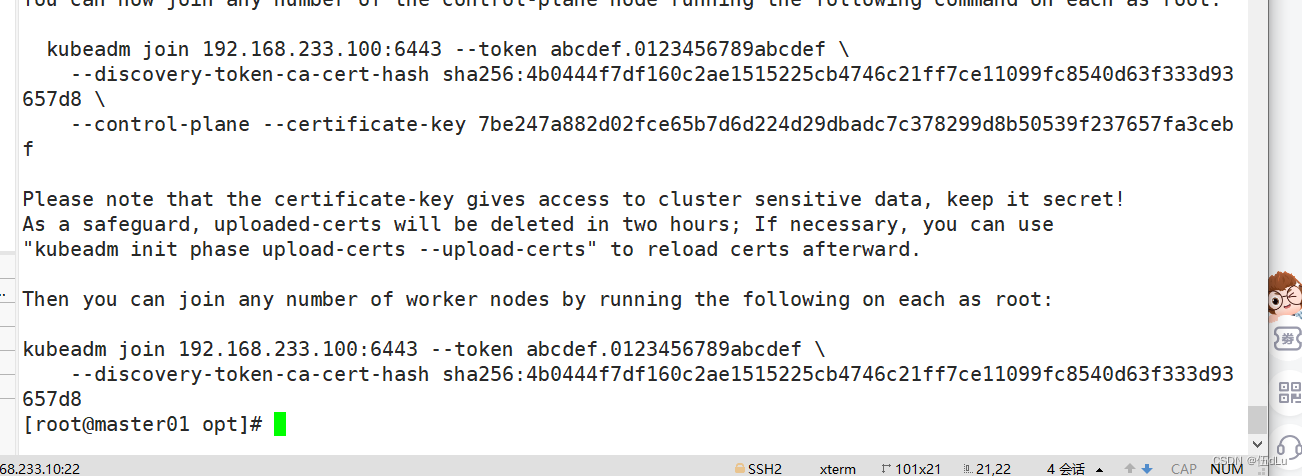

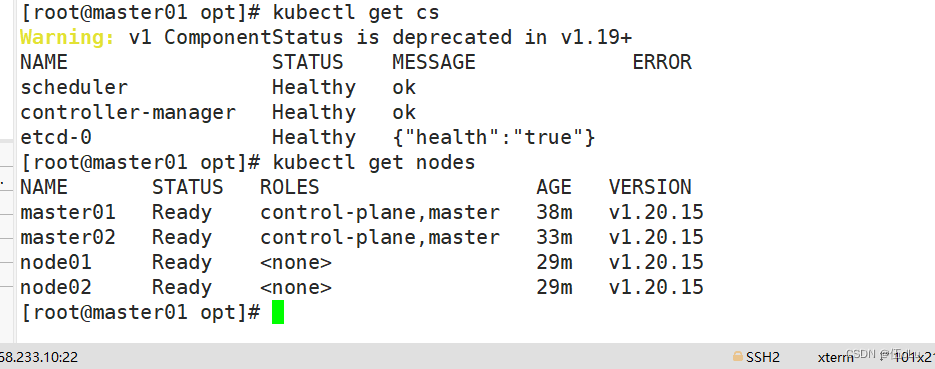

Join the other master node to the control plane:

kubeadm join 192.168.233.100:6443 --token abcdef.0123456789abcdef \
--discovery-token-ca-cert-hash sha256:4b0444f7df160c2ae1515225cb4746c21ff7ce11099fc8540d63f333d93657d8 \
--control-plane --certificate-key 7be247a882d02fce65b7d6d224d29dbadc7c378299d8b50539f237657fa3cebf

Join the worker nodes to the cluster:

kubeadm join 192.168.233.100:6443 --token abcdef.0123456789abcdef \
--discovery-token-ca-cert-hash sha256:4b0444f7df160c2ae1515225cb4746c21ff7ce11099fc8540d63f333d93657d8
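The token, hash and certificate key are specific to each cluster, so the values to use are the ones printed by `kubeadm init`. Since that output was saved with `tee kubeadm-init.log`, the join commands can be pulled back out of the log. A minimal sketch; `extract_join_commands` is an illustrative name.

```shell
# Print every "kubeadm join ..." command found in a kubeadm init log,
# including the lines continued with a trailing backslash.
extract_join_commands() {
    local log_file="$1"
    awk '
        /kubeadm join/ { in_cmd = 1 }
        in_cmd {
            print
            # A line that does not end in a backslash closes the command.
            if ($0 !~ /\\[ \t]*$/) in_cmd = 0
        }
    ' "$log_file"
}

# Usage on master01, in the directory where kubeadm init was run:
#   extract_join_commands kubeadm-init.log
```

By default a bootstrap token expires after 24 hours and the uploaded certificate key after 2 hours. If the log is older than that, `kubeadm token create --print-join-command` prints a fresh worker join command, and `kubeadm init phase upload-certs --upload-certs` prints a new certificate key for joining a control-plane node.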

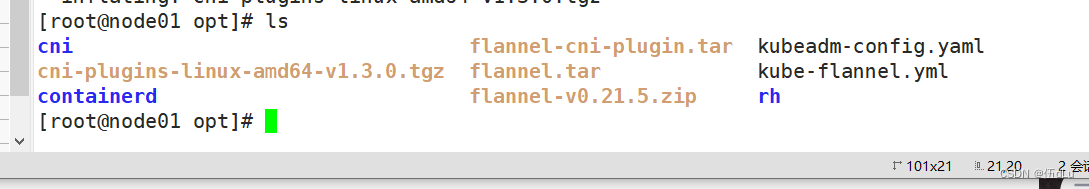

Deploy the network plugin:

Copy the files to the master:

Deploying the dashboard:

On master01, upload the recommended.yaml file to the /opt/ directory:

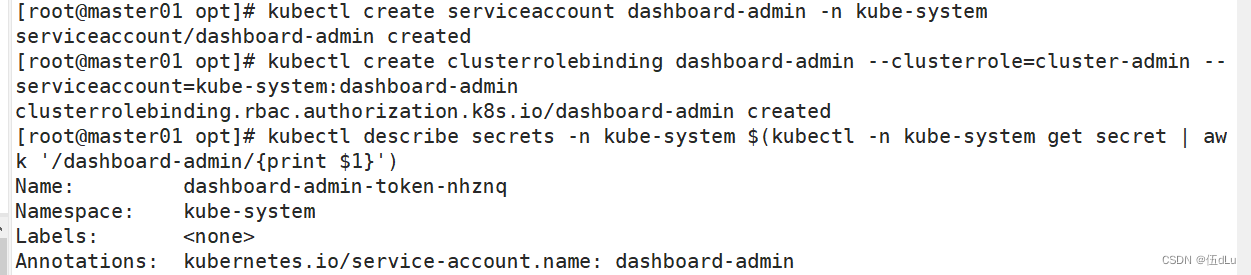

Create a service account and bind it to the default cluster-admin cluster role:

kubectl create serviceaccount dashboard-admin -n kube-system

kubectl create clusterrolebinding dashboard-admin --clusterrole=cluster-admin --serviceaccount=kube-system:dashboard-admin

kubectl describe secrets -n kube-system $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}')
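The describe output contains the certificate and namespace as well as the token. To copy only the token for the dashboard login page, the output can be filtered. A small sketch, with `token_from_describe` as a hypothetical helper name:

```shell
# Read `kubectl describe secret` output on stdin and print only the token value.
token_from_describe() {
    awk '$1 == "token:" { print $2 }'
}

# Usage on master01:
#   kubectl describe secrets -n kube-system \
#     $(kubectl -n kube-system get secret | awk '/dashboard-admin/{print $1}') \
#     | token_from_describe
```

This relies on the token secret that 1.20 creates automatically for each service account.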

The dashboard can now be accessed; log in with the token printed above.
