Building a K8S Cluster (1 master, 2 workers)

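- 1. Preparing the environment

- 1.1 Pinning versions

- 1.2 System requirements for k8s

- 1.3 Preparing three CentOS 7 virtual machines

- 2. Building the cluster

- 2.1 Update yum and install dependencies

- 2.2 Install Docker

- 2.3 Set the hostname and edit the hosts file

- 2.4 Apply the system settings k8s requires

- 2.5 Install kubeadm, kubelet and kubectl

- 2.6 Install the core k8s components (proxy, pause, scheduler)

- 2.7 Initialize the master with kubeadm init

- 3. Testing (trying out) Pods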

Kubernetes official documentation: https://kubernetes.io/docs/setup/production-environment/tools/kubeadm/install-kubeadm/#installing-kubeadm-kubelet-and-kubectl

GitHub: https://github.com/kubernetes/kubeadm

This article uses kubeadm to build a k8s cluster of three machines: one master node and two worker nodes.

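1. Preparing the environment

1.1 Pinning versions

Installing k8s is fiddly, so the software versions are pinned up front to avoid version-related failures.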

Docker 18.09.0

---

kubeadm-1.14.0-0

kubelet-1.14.0-0

kubectl-1.14.0-0

---

k8s.gcr.io/kube-apiserver:v1.14.0

k8s.gcr.io/kube-controller-manager:v1.14.0

k8s.gcr.io/kube-scheduler:v1.14.0

k8s.gcr.io/kube-proxy:v1.14.0

k8s.gcr.io/pause:3.1

k8s.gcr.io/etcd:3.3.10

k8s.gcr.io/coredns:1.3.1

---

calico:v3.9

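1.2 System requirements for k8s

Requirements:

- One or more machines running one of the following:

- Ubuntu 16.04+

- Debian 9+

- CentOS 7 [used in this article]

- Red Hat Enterprise Linux (RHEL) 7

- Fedora 25+

- HypriotOS v1.0.1+

- Container Linux (tested with 1800.6.0)

- At least 2 GB of RAM per machine (any less leaves little room for your applications)

- 2 or more CPUs

- Full network connectivity between all nodes (every node can ping every other node)

- A unique hostname, MAC address and product_uuid on every node

- The firewall turned off, or the required ports opened between nodes

- Swap disabled; the kubelet does not work properly with swap on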

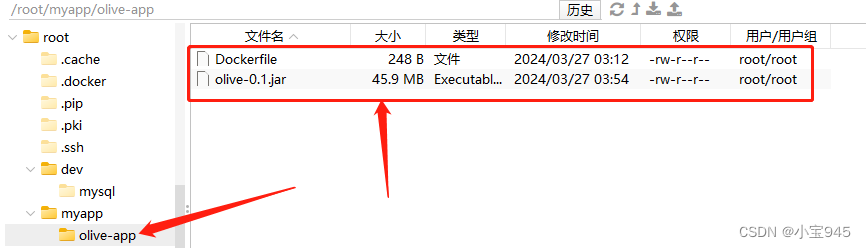
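The memory, CPU and swap requirements above can be checked with a few lines of shell before going further. This is a minimal sketch that is not part of the original procedure: the function names are hypothetical, the 1700000 KiB threshold is my own allowance for the memory the kernel reserves on a 2 GB VM, and `preflight` assumes a Linux host with `/proc` and `nproc`.

```shell
#!/bin/sh
# Hypothetical pre-flight helpers; the names are illustrative, not kubeadm's.

# mem_ok KIB: succeed if KIB (kibibytes, as in /proc/meminfo) is roughly 2 GB
# or more. The threshold is an assumption, chosen below 2097152 because
# MemTotal on a 2 GB VM is always a little under the configured size.
mem_ok() {
    [ "$1" -ge 1700000 ]
}

# cpu_ok N: succeed if there are at least 2 CPUs.
cpu_ok() {
    [ "$1" -ge 2 ]
}

# swap_off FILE: succeed if FILE (in /proc/swaps format) lists no active swap
# device, i.e. it holds nothing beyond the header line.
swap_off() {
    [ "$(awk 'END {print NR}' "$1")" -le 1 ]
}

# preflight: run the three checks against the current machine.
preflight() {
    mem_kib=$(awk '/^MemTotal:/ {print $2}' /proc/meminfo)
    cpus=$(nproc)
    if mem_ok "$mem_kib"; then echo "memory: ok"; else echo "memory: below 2 GB"; fi
    if cpu_ok "$cpus"; then echo "cpu: ok"; else echo "cpu: fewer than 2"; fi
    if swap_off /proc/swaps; then echo "swap: off"; else echo "swap: still on"; fi
}
```

Calling `preflight` on each of the three machines prints one line per check. Swap will still be reported as on until it is disabled in section 2.4.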

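1.3 Preparing three CentOS 7 virtual machines

(1) Make sure the three VMs have unique MAC addresses

All three VMs run at the same time, so each one must have its own MAC address.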

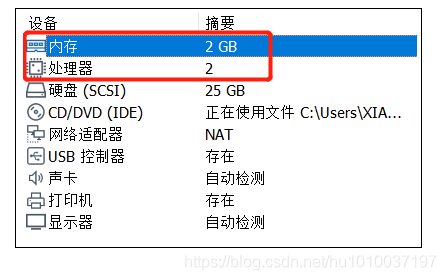

(2)虚拟机配置

| 节点角色 | IP | 处理器内核总数 | 内存(GB) |

|---|---|---|---|

| K8S_master(Centos7) | 192.168.116.170 | 2 | 2 |

| K8S_worker01(Centos7) | 192.168.116.171 | 2 | 2 |

| K8S_worker02(Centos7) | 192.168.116.172 | 2 | 2 |

可参考下图:

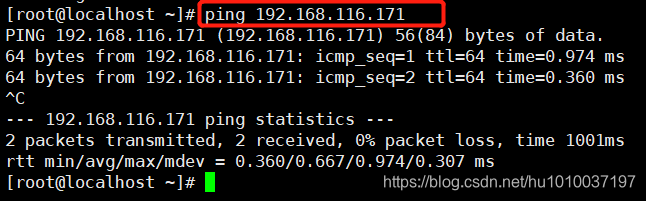

(3)保证各节点互相Ping通

二、集群搭建

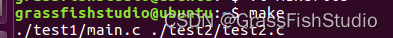

2.1、更新yum,并安装依赖包

注意:(3台机器均要执行)

注: 需联网,时间较长。

(1)更新yum

[root@localhost ~]# yum -y update

(2)安装依赖包

[root@localhost ~]# yum install -y conntrack ipvsadm ipset jq sysstat curl iptables libseccomp

2.2 Install Docker

Note: run on all three machines.

Install Docker 18.09.0 on every machine.

(0) Remove any previously installed Docker

[root@localhost /]# sudo yum remove docker docker-client docker-client-latest docker-common docker-latest docker-latest-logrotate docker-logrotate docker-engine

(1) Install the required dependencies

[root@localhost ~]# sudo yum install -y yum-utils device-mapper-persistent-data lvm2

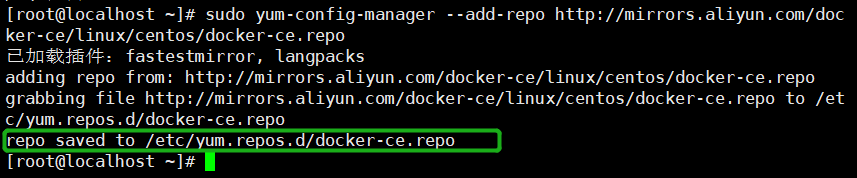

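(2) Set up the Docker repository by adding a package source (Aliyun mirror)

[root@localhost ~]# sudo yum-config-manager --add-repo http://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

(3) Configure the USTC registry mirror (strongly recommended)

Note: if /etc/docker/daemon.json does not exist, create it by hand.

① Create the directory /etc/docker

[root@localhost ~]# mkdir -p /etc/docker

② Create and edit daemon.json with the content below

[root@localhost ~]# vi /etc/docker/daemon.json

{"registry-mirrors": ["https://docker.mirrors.ustc.edu.cn"]}

③ Reload so the change takes effect

[root@localhost ~]# sudo systemctl daemon-reload

Docker reads daemon.json when the daemon starts, so the mirror applies once Docker is started in step (5). If Docker is already running, restart it with systemctl restart docker.

See also the blog post "Setting the USTC registry mirror for Docker (registry accelerator: editing /etc/docker/daemon.json)".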

(4) Install a specific Docker version (v18.09.0)

① List the available Docker versions (sorted from newest to oldest)

[root@localhost /]# yum list docker-ce --showduplicates | sort -r

② Install the chosen version (v18.09.0 here); this needs network access

[root@localhost /]# sudo yum -y install docker-ce-18.09.0 docker-ce-cli-18.09.0 containerd.io

(5) Start Docker

Tip: the installation creates the docker user group, but the group has no members yet.

Start command:

[root@localhost /]# sudo systemctl start docker

[root@localhost ~]# ps -ef | grep docker

Or start Docker and enable it at boot (recommended):

[root@localhost /]# sudo systemctl start docker && sudo systemctl enable docker

[root@localhost ~]# ps -ef | grep docker

Appendix: command to stop Docker

systemctl stop docker

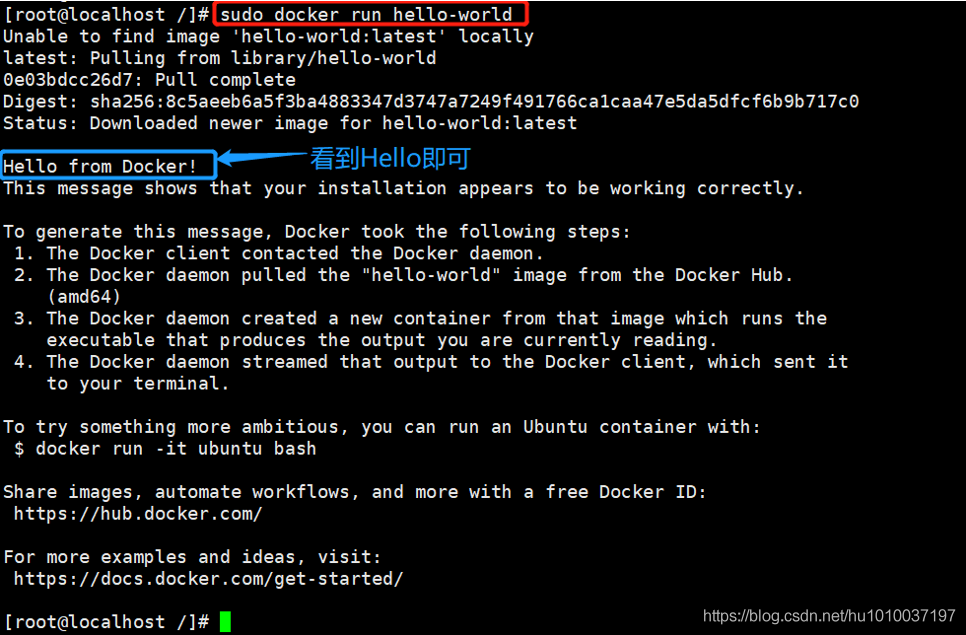

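(6) Check that Docker was installed correctly

Tip: running the hello-world image verifies that Docker Engine - Community is installed correctly.

Run the "hello-world" container as a test:

[root@localhost /]# sudo docker run hello-world

If the output matches the figure above, the installation succeeded.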

2.3 Set the hostname and edit the hosts file

Note: run on all three machines.

The hosts file maps host names to IP addresses, just like c:\windows\system32\drivers\etc\hosts on Windows. To reach another machine by name, either add its name and IP address to the local hosts file or configure DNS.

(1) On the master node

① Set the hostname of the master VM to m

[root@localhost /]# sudo hostnamectl set-hostname m

Note: hostname or uname -n shows the current host name, for example: [root@localhost ~]# hostname

② Edit the hosts file

Append the IP-to-hostname mappings to the end of /etc/hosts.

[root@localhost /]# vi /etc/hosts

Appendix: lines to append to /etc/hosts:

192.168.116.170 m

192.168.116.171 w1

192.168.116.172 w2

(2) On the worker01 node

① Set the hostname of the worker01 VM to w1

[root@localhost /]# sudo hostnamectl set-hostname w1

Note: hostname or uname -n shows the current host name, for example: [root@localhost ~]# hostname

② Edit the hosts file

Append the IP-to-hostname mappings to the end of /etc/hosts.

[root@localhost /]# vi /etc/hosts

Appendix: lines to append to /etc/hosts:

192.168.116.170 m

192.168.116.171 w1

192.168.116.172 w2

(3) On the worker02 node

① Set the hostname of the worker02 VM to w2

[root@localhost /]# sudo hostnamectl set-hostname w2

Note: hostname or uname -n shows the current host name, for example: [root@localhost ~]# hostname

② Edit the hosts file

Append the IP-to-hostname mappings to the end of /etc/hosts.

[root@localhost /]# vi /etc/hosts

Appendix: lines to append to /etc/hosts:

192.168.116.170 m

192.168.116.171 w1

192.168.116.172 w2

(4) Use ping from each of the three VMs to check that they can reach one another

[root@localhost ~]# ping m

[root@localhost ~]# ping w1

[root@localhost ~]# ping w2
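Because the same three lines are appended by hand on every machine, it is easy to add them twice. The sketch below shows one way to make the append repeatable; `add_host_entry` and `HOSTS_FILE` are hypothetical names of mine, and the demonstration writes to a scratch file rather than to /etc/hosts.

```shell
#!/bin/sh
# add_host_entry FILE IP NAME: append "IP NAME" to FILE unless that exact line
# is already present, so re-running the step does not duplicate entries.
add_host_entry() {
    file=$1
    ip=$2
    name=$3
    grep -qxF "$ip $name" "$file" 2>/dev/null || printf '%s %s\n' "$ip" "$name" >> "$file"
}

# Demonstration on a scratch file; set HOSTS_FILE=/etc/hosts (as root) to
# apply the entries for real.
HOSTS_FILE=${HOSTS_FILE:-$(mktemp)}
add_host_entry "$HOSTS_FILE" 192.168.116.170 m
add_host_entry "$HOSTS_FILE" 192.168.116.171 w1
add_host_entry "$HOSTS_FILE" 192.168.116.172 w2
add_host_entry "$HOSTS_FILE" 192.168.116.170 m   # repeated call changes nothing
cat "$HOSTS_FILE"
```

The `-x` and `-F` options make grep compare the whole line literally, so the dots in the IP address are not treated as wildcards.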

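2.4 Apply the system settings k8s requires

Note: run on all three machines.

(1) Turn off the firewall (permanently)

[root@localhost ~]# systemctl stop firewalld && systemctl disable firewalld

(2) Turn off SELinux enforcement

SELinux (Security-Enhanced Linux) was developed by the US National Security Agency (NSA) together with other security organizations (such as SCC). It hardens a traditional Linux system by addressing the permission problems of discretionary access control (DAC), such as an over-powerful root account.

Run the commands in order. The first switches the running system to permissive mode; the second makes that setting persist across reboots:

[root@localhost ~]# setenforce 0

[root@localhost ~]# sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' /etc/selinux/config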
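Since the sed expression edits a system file in place, it can be worth trying on a scratch copy first. The following is a sketch only: `set_selinux_permissive` is a hypothetical helper name, and the sample file merely imitates the layout of /etc/selinux/config.

```shell
#!/bin/sh
# set_selinux_permissive FILE: apply the same sed expression as above to FILE
# and print the resulting SELINUX= line.
set_selinux_permissive() {
    sed -i 's/^SELINUX=enforcing$/SELINUX=permissive/' "$1"
    grep '^SELINUX=' "$1"
}

# Scratch file imitating /etc/selinux/config.
sample=$(mktemp)
printf '# sample config\nSELINUX=enforcing\nSELINUXTYPE=targeted\n' > "$sample"
set_selinux_permissive "$sample"
```

Only the line that is exactly `SELINUX=enforcing` is rewritten; `SELINUXTYPE=targeted` is left alone. On the real host, `getenforce` should report Permissive after `setenforce 0`.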

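(3) Turn off swap

When physical memory runs short, the system frees some of it for the programs that are currently running by moving pages that belong to long-idle programs out to the swap partition on disk. When those programs need to run again, their data is restored from swap into memory.

Run the commands in order. The first turns swap off immediately; the second comments out the swap entries in /etc/fstab so that swap stays off after a reboot:

[root@localhost ~]# swapoff -a

[root@localhost ~]# sed -i '/swap/s/^\(.*\)$/#\1/g' /etc/fstab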
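To see what the fstab edit does before running it against the real file, the same expression can be applied to a scratch file. This is an illustrative sketch: `comment_swap` is a hypothetical name and the two fstab entries are made-up examples.

```shell
#!/bin/sh
# comment_swap FILE: comment out every line of FILE that mentions swap, using
# the same sed expression as above.
comment_swap() {
    sed -i '/swap/s/^\(.*\)$/#\1/g' "$1"
}

# Scratch file with two made-up fstab entries (device names are illustrative).
fstab=$(mktemp)
printf '%s\n' \
    '/dev/mapper/centos-root /    xfs  defaults 0 0' \
    '/dev/mapper/centos-swap swap swap defaults 0 0' > "$fstab"
comment_swap "$fstab"
cat "$fstab"
```

The root entry is untouched and the swap entry gains a leading `#`. Note that the expression matches any line containing the word swap, so running it a second time adds another `#` to lines that are already commented out.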

(4) Flush iptables and set the FORWARD policy to ACCEPT

[root@localhost ~]# iptables -F && iptables -X && iptables -F -t nat && iptables -X -t nat && iptables -P FORWARD ACCEPT

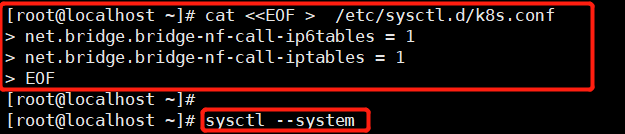

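(5) Set kernel parameters

Note: with cat <<EOF, input is read until a line that contains only EOF.

① Run the following

cat <<EOF > /etc/sysctl.d/k8s.conf

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

② Reload the configuration

[root@localhost ~]# sysctl --system

The result looks like the figure below: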
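Before reloading, it can help to confirm that the drop-in file really contains the intended values. The sketch below is an illustration of mine, not part of the original steps: `conf_value` is a hypothetical helper, and it works on a scratch copy of the file.

```shell
#!/bin/sh
# conf_value FILE KEY: print the value assigned to KEY in a sysctl-style file
# ("key = value"), ignoring spaces and tabs around the key and the value.
conf_value() {
    awk -F= -v key="$2" '
        { k = $1; v = $2; gsub(/[ \t]/, "", k); gsub(/[ \t]/, "", v) }
        k == key { print v }
    ' "$1"
}

# Scratch copy of the drop-in written above.
conf=$(mktemp)
cat > "$conf" <<'EOF'
net.bridge.bridge-nf-call-ip6tables = 1
net.bridge.bridge-nf-call-iptables = 1
EOF
conf_value "$conf" net.bridge.bridge-nf-call-iptables
```

On the node itself, `sysctl net.bridge.bridge-nf-call-iptables` reports the live value. These net.bridge keys only exist once the br_netfilter kernel module is loaded, so if sysctl cannot find them, `modprobe br_netfilter` is the usual first thing to try.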

2.5 Install kubeadm, kubelet and kubectl

Note: run on all three machines.

(1) Configure the yum repository

Note: with cat <<EOF, input is read until a line that contains only EOF.

Run the following:

cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=http://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64

enabled=1

gpgcheck=0

repo_gpgcheck=0

gpgkey=http://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg http://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

(2) Install kubeadm, kubelet and kubectl

Run the commands in order:

# (1) List the kubeadm versions available from the repository

[root@localhost ~]# yum list kubeadm --showduplicates | sort -r

# (2) Install kubelet (this also installs the dependency kubernetes-cni.x86_64 0:0.7.5-0).

# Install kubelet-1.14.0-0 first. In my own test, installing the other packages first

# pulled in kubelet-1.20.0-0 as a dependency, which does not match the pinned version

# and makes the master initialization fail later.

[root@localhost ~]# yum install -y kubelet-1.14.0-0

# (3) Install kubeadm and kubectl

[root@localhost ~]# yum install -y kubeadm-1.14.0-0 kubectl-1.14.0-0

# (4) Check the kubelet version (the installation is complete when it reports v1.14.0)

[root@localhost ~]# kubelet --version

Kubernetes v1.14.0

Note: how to uninstall, or to install a different version

(1) List the packages installed through yum: yum list installed

Look for kubeadm.x86_64, kubectl.x86_64, kubelet.x86_64 and kubernetes-cni.x86_64.

(2) Remove the packages

yum remove kubeadm.x86_64

yum remove kubectl.x86_64

yum remove kubelet.x86_64

yum remove kubernetes-cni.x86_64

(3) Make Docker and k8s use the same cgroup driver

① Edit /etc/docker/daemon.json:

[root@localhost ~]# vi /etc/docker/daemon.json

Add the following entry:

"exec-opts": ["native.cgroupdriver=systemd"]

Appendix: complete content of daemon.json

{"registry-mirrors": ["https://docker.mirrors.ustc.edu.cn"], "exec-opts": ["native.cgroupdriver=systemd"]}
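Editing JSON by hand in vi is an easy place to drop a comma or paste typographic quotes. As an alternative sketch, the whole file can be written from a here-document; `write_daemon_json` is a hypothetical helper name, and the example writes to a scratch path rather than to /etc/docker/daemon.json.

```shell
#!/bin/sh
# write_daemon_json FILE: write the Docker daemon configuration used in this
# article (USTC registry mirror plus the systemd cgroup driver) to FILE.
write_daemon_json() {
    cat > "$1" <<'EOF'
{
  "registry-mirrors": ["https://docker.mirrors.ustc.edu.cn"],
  "exec-opts": ["native.cgroupdriver=systemd"]
}
EOF
}

# Written to a scratch path here; use /etc/docker/daemon.json on the nodes.
target=$(mktemp)
write_daemon_json "$target"
cat "$target"
```

After Docker has been restarted with this file in place, `docker info` should list systemd as the cgroup driver.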

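② Restart Docker

# systemctl daemon-reload

# systemctl restart docker

③ Configure kubelet, start it, and enable it at boot

Ⅰ. Configure kubelet

[root@localhost ~]# sed -i "s/cgroup-driver=systemd/cgroup-driver=cgroupfs/g" /etc/systemd/system/kubelet.service.d/10-kubeadm.conf

This may report: "sed: can't read /etc/systemd/system/kubelet.service.d/10-kubeadm.conf: No such file or directory". That is normal; carry on with the next step.

If the path above does not exist, the file is most likely at /usr/lib/systemd/system/kubelet.service.d/10-kubeadm.conf.

Be aware that this substitution rewrites cgroup-driver=systemd to cgroup-driver=cgroupfs, the opposite of the systemd driver configured for Docker in ①. It only changes anything if the file exists and contains that flag, and the kubelet and Docker must end up on the same driver, so do not apply it to a file that already says systemd.

Ⅱ. Start kubelet and enable it at boot

[root@localhost ~]# systemctl enable kubelet && systemctl start kubelet

Note: at this point ps -ef | grep kubelet shows no kubelet process (ignore this for now). The kubelet cannot stay up until kubeadm init or kubeadm join has given it a configuration.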

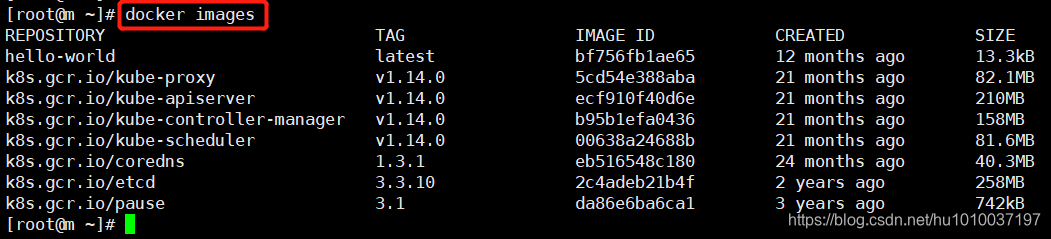

2.6 Install the core k8s components (proxy, pause, scheduler)

Note: run on all three machines.

(1) List the images kubeadm needs; they all come from a foreign registry

[root@localhost ~]# kubeadm config images list

I0106 02:06:13.785323 101920 version.go:96] could not fetch a Kubernetes version from the internet: unable to get URL "https://dl.k8s.io/release/stable-1.txt": Get https://storage.googleapis.com/kubernetes-release/release/stable-1.txt: net/http: request canceled while waiting for connection (Client.Timeout exceeded while awaiting headers)

I0106 02:06:13.785667 101920 version.go:97] falling back to the local client version: v1.14.0

k8s.gcr.io/kube-apiserver:v1.14.0

k8s.gcr.io/kube-controller-manager:v1.14.0

k8s.gcr.io/kube-scheduler:v1.14.0

k8s.gcr.io/kube-proxy:v1.14.0

k8s.gcr.io/pause:3.1

k8s.gcr.io/etcd:3.3.10

k8s.gcr.io/coredns:1.3.1

[root@localhost ~]#

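(2) Work around the unreachable foreign images

Note: network restrictions in mainland China block these foreign registries; a server in Hong Kong can pull from them directly.

Solution: fetching every image from the domestic mirror "registry.cn-hangzhou.aliyuncs.com/google_containers" takes three steps per image (pull the image, tag it, remove the original name). Doing that one image at a time is tedious, so this section provides a script that fetches them all from the mirror:

① Create and edit the kubeadm.sh script

Note: create the script in the current directory; its only purpose is to download the images.

Command:

[root@localhost ~]# vi kubeadm.sh

Script content: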

#!/bin/bash

# Stop at the first command that fails.

set -e

KUBE_VERSION=v1.14.0

KUBE_PAUSE_VERSION=3.1

ETCD_VERSION=3.3.10

CORE_DNS_VERSION=1.3.1

# Registry name that kubeadm expects, and the mirror the images are pulled from.

GCR_URL=k8s.gcr.io

ALIYUN_URL=registry.cn-hangzhou.aliyuncs.com/google_containers

images=(kube-proxy:${KUBE_VERSION}

kube-scheduler:${KUBE_VERSION}

kube-controller-manager:${KUBE_VERSION}

kube-apiserver:${KUBE_VERSION}

pause:${KUBE_PAUSE_VERSION}

etcd:${ETCD_VERSION}

coredns:${CORE_DNS_VERSION})

# Pull each image from the mirror, retag it under k8s.gcr.io, then drop the mirror tag.

for imageName in "${images[@]}" ; do

docker pull $ALIYUN_URL/$imageName

docker tag $ALIYUN_URL/$imageName $GCR_URL/$imageName

docker rmi $ALIYUN_URL/$imageName

done
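Before letting the script touch Docker, it can be useful to print the commands it would run and check that the image names look right. The sketch below is a dry-run variant of my own: `plan_image_commands` is a hypothetical function that only echoes the commands, and it is written in plain sh so it does not depend on bash arrays.

```shell
#!/bin/sh
# Registry name that kubeadm expects, and the mirror the images come from
# (same values as in kubeadm.sh above).
GCR_URL=k8s.gcr.io
ALIYUN_URL=registry.cn-hangzhou.aliyuncs.com/google_containers

# plan_image_commands IMAGE...: print, without executing, the docker commands
# that would fetch each image from the mirror and retag it for kubeadm.
plan_image_commands() {
    for image in "$@"; do
        echo "docker pull $ALIYUN_URL/$image"
        echo "docker tag $ALIYUN_URL/$image $GCR_URL/$image"
        echo "docker rmi $ALIYUN_URL/$image"
    done
}

plan_image_commands kube-proxy:v1.14.0 pause:3.1 etcd:3.3.10 coredns:1.3.1
```

Each image yields three lines. Comparing the `docker tag` targets against the output of `kubeadm config images list` shows quickly whether a version is off.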

② Run kubeadm.sh

[root@localhost ~]# sh ./kubeadm.sh

Notes:

(1) Running "sh ./kubeadm.sh" may fail with:

Error response from daemon: Get https://registry.cn-hangzhou.aliyuncs.com/v2/: dial tcp: lookup registry.cn-hangzhou.aliyuncs.com on 8.8.8.8:53: read udp 192.168.116.170:51212->8.8.8.8:53: i/o timeout

The message shows a DNS lookup timing out, so check the node's network and DNS settings and run the script again.

(2) Running "sh ./kubeadm.sh" may fail with syntax or symbol errors:

[root@m xiao]# sh ./kubeadm.sh

./kubeadm.sh: line 2: $'\r': command not found

: invalid optionne 3: set: -

set: usage: set [-abefhkmnptuvxBCHP] [-o option-name] [--] [arg ...]

[root@m xiao]#

[Fix] Strip the \r characters from the script (they appear when the file was saved with Windows line endings):

[root@m xiao]# sed -i 's/\r//' kubeadm.sh
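Carriage returns are invisible in most editors, so a quick check before and after the fix is handy. This is a small sketch with hypothetical helper names (`has_crlf`, `strip_cr`), run against a scratch file that imitates a script saved on Windows; it assumes GNU sed, as shipped with CentOS 7.

```shell
#!/bin/sh
# has_crlf FILE: succeed if FILE contains at least one carriage return.
has_crlf() {
    grep -q "$(printf '\r')" "$1"
}

# strip_cr FILE: remove carriage returns in place (same sed as above).
strip_cr() {
    sed -i 's/\r//' "$1"
}

# Scratch file imitating a script with Windows (CRLF) line endings.
script=$(mktemp)
printf '#!/bin/bash\r\nset -e\r\n' > "$script"
if has_crlf "$script"; then echo "CRLF found, stripping"; fi
strip_cr "$script"
if has_crlf "$script"; then echo "still has CRLF"; else echo "clean"; fi
```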

My own test:

(1) First pull a single image as a test, for example kube-proxy: docker pull registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.14.0. If that downloads, the mirror is reachable.

(2) Then run the script: sh ./kubeadm.sh

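Actual run (this capture comes from a run with newer image versions such as v1.24.0; with the versions pinned in this article the tags will read v1.14.0, 3.1, 3.3.10 and 1.3.1):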

[root@m xiao]# sh kubeadm.sh

v1.24.0: Pulling from google_containers/kube-proxy

Digest: sha256:c957d602267fa61082ab8847914b2118955d0739d592cc7b01e278513478d6a8

Status: Image is up to date for registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.24.0

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.24.0

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy:v1.24.0

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/kube-proxy@sha256:c957d602267fa61082ab8847914b2118955d0739d592cc7b01e278513478d6a8

v1.24.0: Pulling from google_containers/kube-scheduler

36698cfa5275: Pull complete

fe7d6916a3e1: Pull complete

a47c83d2acf4: Pull complete

Digest: sha256:db842a7c431fd51db7e1911f6d1df27a7b6b6963ceda24852b654d2cd535b776

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.24.0

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.24.0

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler:v1.24.0

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/kube-scheduler@sha256:db842a7c431fd51db7e1911f6d1df27a7b6b6963ceda24852b654d2cd535b776

v1.24.0: Pulling from google_containers/kube-controller-manager

36698cfa5275: Already exists

fe7d6916a3e1: Already exists

45a9a072021e: Pull complete

Digest: sha256:df044a154e79a18f749d3cd9d958c3edde2b6a00c815176472002b7bbf956637

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.24.0

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.24.0

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager:v1.24.0

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/kube-controller-manager@sha256:df044a154e79a18f749d3cd9d958c3edde2b6a00c815176472002b7bbf956637

v1.24.0: Pulling from google_containers/kube-apiserver

36698cfa5275: Already exists

fe7d6916a3e1: Already exists

8ebfef20cea7: Pull complete

Digest: sha256:a04522b882e919de6141b47d72393fb01226c78e7388400f966198222558c955

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.24.0

registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.24.0

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver:v1.24.0

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/kube-apiserver@sha256:a04522b882e919de6141b47d72393fb01226c78e7388400f966198222558c955

3.7: Pulling from google_containers/pause

7582c2cc65ef: Pull complete

Digest: sha256:bb6ed397957e9ca7c65ada0db5c5d1c707c9c8afc80a94acbe69f3ae76988f0c

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.7

registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.7

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/pause:3.7

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/pause@sha256:bb6ed397957e9ca7c65ada0db5c5d1c707c9c8afc80a94acbe69f3ae76988f0c

3.5.3-0: Pulling from google_containers/etcd

36698cfa5275: Already exists

924f6cbb1ab3: Pull complete

11ade7be2717: Pull complete

8c6339f7974a: Pull complete

d846fbeccd2d: Pull complete

Digest: sha256:13f53ed1d91e2e11aac476ee9a0269fdda6cc4874eba903efd40daf50c55eee5

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.3-0

registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.3-0

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/etcd:3.5.3-0

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/etcd@sha256:13f53ed1d91e2e11aac476ee9a0269fdda6cc4874eba903efd40daf50c55eee5

v1.8.6: Pulling from google_containers/coredns

d92bdee79785: Pull complete

6e1b7c06e42d: Pull complete

Digest: sha256:5b6ec0d6de9baaf3e92d0f66cd96a25b9edbce8716f5f15dcd1a616b3abd590e

Status: Downloaded newer image for registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.6

registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.6

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/coredns:v1.8.6

Untagged: registry.cn-hangzhou.aliyuncs.com/google_containers/coredns@sha256:5b6ec0d6de9baaf3e92d0f66cd96a25b9edbce8716f5f15dcd1a616b3abd590e

[root@m xiao]#

③ List the Docker images

[root@localhost ~]# docker images

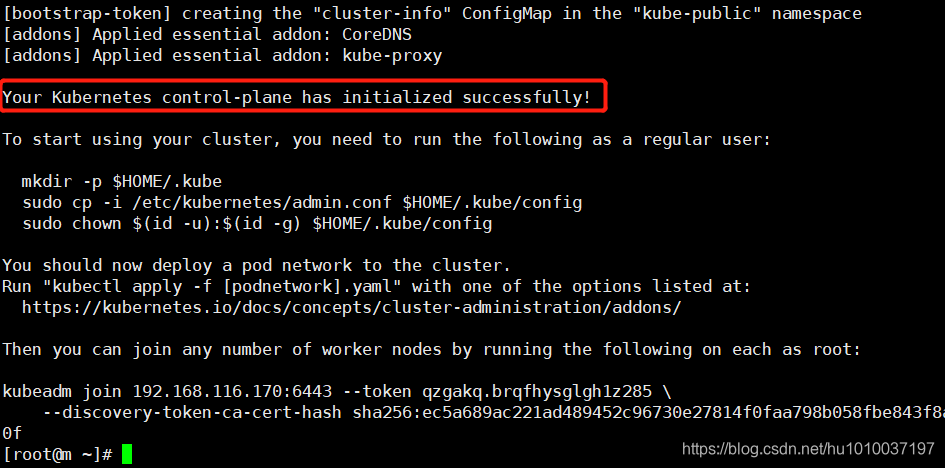

2.7 Initialize the master with kubeadm init

Note: run on the master node only.

Official documentation: https://kubernetes.io/docs/reference/setup-tools/kubeadm/kubeadm/

(1) Reset the cluster state (can be skipped)

Notes:

1> Normally, skip this step.

2> If a later step fails, run this step and then continue again from the next step.

3> Command: [root@m ~]# kubeadm reset

(2) Initialize the master node

When I ran the initialization command, my machine reported this error:

[ERROR KubeletVersion]: the kubelet version is higher than the control plane version. This is not a supported version skew and may lead to a malfunctional cluster. Kubelet version: "1.20.1" Control plane version: "1.14.0"

It means a newer kubelet was installed than the pinned 1.14.0 (see the note in 2.5). If you hit it, see the blog post "CentOS 7 K8S installation error: [ERROR KubeletVersion]: the kubelet version is higher than the control plane version." for the fix, which has to be applied on all three machines.

Run the command (master node only):

[root@m ~]# kubeadm init --v=5 --kubernetes-version=1.14.0 --apiserver-advertise-address=192.168.116.170 --pod-network-cidr=10.244.0.0/16

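Parameters:

--apiserver-advertise-address: IP address of the master node

--v=5: print detailed logs of the checks

--pod-network-cidr=10.244.0.0/16: leave unchanged

Note: change the IP address in the command to that of your own master.

[Note] If init still tries to pull images, check whether the earlier steps already pulled them. If an image is present but under the wrong name, retag it (the example below is from a newer release):

Format: docker tag source-image:version new-image:version

Tag: docker tag k8s.gcr.io/coredns:v1.8.6 k8s.gcr.io/coredns/coredns:v1.8.6

Remove the old name: docker rmi k8s.gcr.io/coredns:v1.8.6

Initialization has succeeded when you see output like the following.

The complete initialization output is shown below (read it carefully; it contains commands that you still need to run):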

[root@m ~]# kubeadm init --v=5 \

> --kubernetes-version=1.14.0 \

> --apiserver-advertise-address=192.168.116.170 \

> --pod-network-cidr=10.244.0.0/16

[init] Using Kubernetes version: v1.14.0

[preflight] Running pre-flight checks

[preflight] Pulling images required for setting up a Kubernetes cluster

[preflight] This might take a minute or two, depending on the speed of your internet connection

[preflight] You can also perform this action in beforehand using 'kubeadm config images pull'

[kubelet-start] Writing kubelet environment file with flags to file "/var/lib/kubelet/kubeadm-flags.env"

[kubelet-start] Writing kubelet configuration to file "/var/lib/kubelet/config.yaml"

[kubelet-start] Activating the kubelet service

[certs] Using certificateDir folder "/etc/kubernetes/pki"

[certs] Generating "ca" certificate and key

[certs] Generating "apiserver" certificate and key

[certs] apiserver serving cert is signed for DNS names [m kubernetes kubernetes.default kubernetes.default.svc kubernetes.default.svc.cluster.local] and IPs [10.96.0.1 192.168.116.170]

[certs] Generating "apiserver-kubelet-client" certificate and key

[certs] Generating "front-proxy-ca" certificate and key

[certs] Generating "front-proxy-client" certificate and key

[certs] Generating "etcd/ca" certificate and key

[certs] Generating "etcd/healthcheck-client" certificate and key

[certs] Generating "etcd/server" certificate and key

[certs] etcd/server serving cert is signed for DNS names [m localhost] and IPs [192.168.116.170 127.0.0.1 ::1]

[certs] Generating "etcd/peer" certificate and key

[certs] etcd/peer serving cert is signed for DNS names [m localhost] and IPs [192.168.116.170 127.0.0.1 ::1]

[certs] Generating "apiserver-etcd-client" certificate and key

[certs] Generating "sa" key and public key

[kubeconfig] Using kubeconfig folder "/etc/kubernetes"

[kubeconfig] Writing "admin.conf" kubeconfig file

[kubeconfig] Writing "kubelet.conf" kubeconfig file

[kubeconfig] Writing "controller-manager.conf" kubeconfig file

[kubeconfig] Writing "scheduler.conf" kubeconfig file

[control-plane] Using manifest folder "/etc/kubernetes/manifests"

[control-plane] Creating static Pod manifest for "kube-apiserver"

[control-plane] Creating static Pod manifest for "kube-controller-manager"

[control-plane] Creating static Pod manifest for "kube-scheduler"

[etcd] Creating static Pod manifest for local etcd in "/etc/kubernetes/manifests"

[wait-control-plane] Waiting for the kubelet to boot up the control plane as static Pods from directory "/etc/kubernetes/manifests". This can take up to 4m0s

[kubelet-check] Initial timeout of 40s passed.

[apiclient] All control plane components are healthy after 108.532279 seconds

[upload-config] storing the configuration used in ConfigMap "kubeadm-config" in the "kube-system" Namespace

[kubelet] Creating a ConfigMap "kubelet-config-1.14" in namespace kube-system with the configuration for the kubelets in the cluster

[upload-certs] Skipping phase. Please see --experimental-upload-certs

[mark-control-plane] Marking the node m as control-plane by adding the label "node-role.kubernetes.io/master=''"

[mark-control-plane] Marking the node m as control-plane by adding the taints [node-role.kubernetes.io/master:NoSchedule]

[bootstrap-token] Using token: qzgakq.brqfhysglgh1z285

[bootstrap-token] Configuring bootstrap tokens, cluster-info ConfigMap, RBAC Roles

[bootstrap-token] configured RBAC rules to allow Node Bootstrap tokens to post CSRs in order for nodes to get long term certificate credentials

[bootstrap-token] configured RBAC rules to allow the csrapprover controller automatically approve CSRs from a Node Bootstrap Token

[bootstrap-token] configured RBAC rules to allow certificate rotation for all node client certificates in the cluster

[bootstrap-token] creating the "cluster-info" ConfigMap in the "kube-public" namespace

[addons] Applied essential addon: CoreDNS

[addons] Applied essential addon: kube-proxy

Your Kubernetes control-plane has initialized successfully!

To start using your cluster, you need to run the following as a regular user:

mkdir -p $HOME/.kube

sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

sudo chown $(id -u):$(id -g) $HOME/.kube/config

You should now deploy a pod network to the cluster.

Run "kubectl apply -f [podnetwork].yaml" with one of the options listed at:

https://kubernetes.io/docs/concepts/cluster-administration/addons/

Then you can join any number of worker nodes by running the following on each as root:

kubeadm join 192.168.116.170:6443 --token qzgakq.brqfhysglgh1z285 \

--discovery-token-ca-cert-hash sha256:ec5a689ac221ad489452c96730e27814f0faa798b058fbe843f8a47388fe910f

[root@m ~]#

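The log above shows that etcd, the controller manager, the scheduler and the other control-plane components were all installed successfully as pods.

Important: take note of the last part of the output (see the figure below); the kubeadm join command it prints is needed later.

Next, run these commands in order (master node only):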

[root@m ~]# mkdir -p $HOME/.kube

[root@m ~]# sudo cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@m ~]# sudo chown $(id -u):$(id -g) $HOME/.kube/config

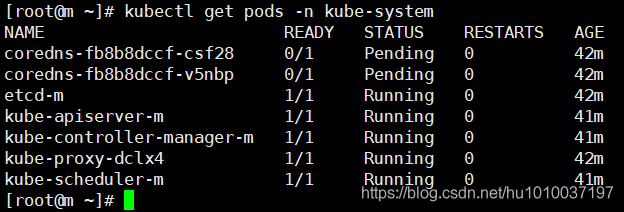

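List the pods (master node):

[root@m ~]# kubectl get pods -n kube-system

Note: as the figure above shows, the coredns pods are stuck in Pending. This is because no pod network plugin connecting the nodes is installed yet; the next subsection installs one.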

(3) Install the calico network plugin (master node only)

Network plugins to choose from: https://kubernetes.io/docs/concepts/cluster-administration/addons/

This article uses calico: https://docs.projectcalico.org/v3.9/getting-started/kubernetes/

Note: the most recent version I have installed is calico v3.21.

# (1) Download calico.yaml into the current directory

[root@m ~]# wget https://docs.projectcalico.org/v3.9/manifests/calico.yaml

# (2) Check which images calico.yaml needs

[root@m ~]# cat calico.yaml | grep image

image: calico/cni:v3.9.6

image: calico/cni:v3.9.6

image: calico/pod2daemon-flexvol:v3.9.6

image: calico/node:v3.9.6

image: calico/kube-controllers:v3.9.6

# (3) To make the later steps smoother, pull all the images by hand first, using the tags printed in step (2)

[root@m ~]# docker pull calico/cni:v3.9.6

[root@m ~]# docker pull calico/pod2daemon-flexvol:v3.9.6

[root@m ~]# docker pull calico/node:v3.9.6

[root@m ~]# docker pull calico/kube-controllers:v3.9.6

# (4) Apply calico.yaml

[root@m ~]# kubectl apply -f calico.yaml

# (5) Keep checking the pods on the master node; after a while every pod reaches Running, which means the installation succeeded (see the figures below)

[root@m ~]# kubectl get pods -n kube-system
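Because the image tags inside calico.yaml change whenever the manifest is updated, it is safer to derive the pull list from the file than to type the tags by hand. The sketch below shows one way to do that; `manifest_images` is a hypothetical helper and the scratch manifest is a trimmed, made-up excerpt rather than the real calico.yaml.

```shell
#!/bin/sh
# manifest_images FILE: print each distinct image referenced by an "image:"
# field in a Kubernetes manifest, one per line.
manifest_images() {
    awk '{ for (i = 1; i < NF; i++) if ($i == "image:") print $(i + 1) }' "$1" | sort -u
}

# Scratch manifest: a trimmed, made-up excerpt in the shape of calico.yaml.
manifest=$(mktemp)
cat > "$manifest" <<'EOF'
      initContainers:
        - name: install-cni
          image: calico/cni:v3.9.6
      containers:
        - name: calico-node
          image: calico/node:v3.9.6
        - image: calico/cni:v3.9.6
EOF
manifest_images "$manifest"
```

On the master node, `manifest_images calico.yaml | xargs -n 1 docker pull` would then pull exactly the images the manifest refers to.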

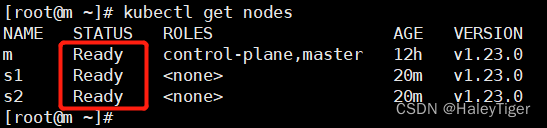

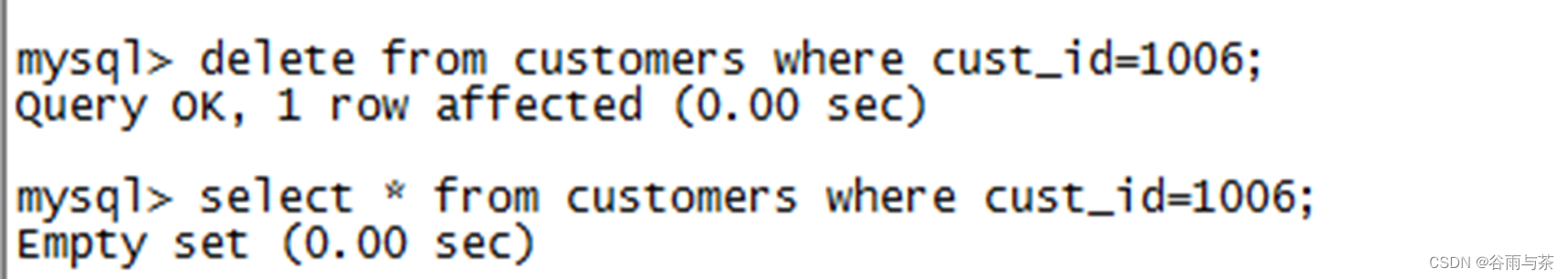

(4)将worker01节点和worker02节点加入master节点

# (1)在master节点查看所有node信息

[root@m ~]# kubectl get nodes# (2)在worker01节点执行(命令来自初始化master节点的过程日志中)

[root@m ~]# kubeadm join 192.168.116.170:6443 --token qzgakq.brqfhysglgh1z285 \--discovery-token-ca-cert-hash sha256:ec5a689ac221ad489452c96730e27814f0faa798b058fbe843f8a47388fe910f# (3)在worker02节点执行(命令来自初始化master节点的过程日志中)

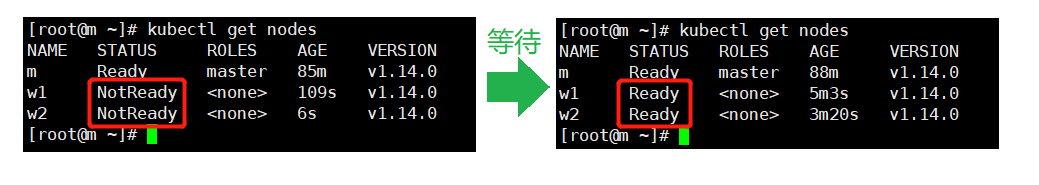

[root@m ~]# kubeadm join 192.168.116.170:6443 --token qzgakq.brqfhysglgh1z285 \--discovery-token-ca-cert-hash sha256:ec5a689ac221ad489452c96730e27814f0faa798b058fbe843f8a47388fe910f# (4)再在master节点查看所有node信息(等待所有状态变为Ready,如下图)-大概需要等待3~5分钟

[root@m ~]# kubectl get nodes

注:如果join报错,请看后续处理异常环节。

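Common commands:

(1) Watch all Pods in real time from the master node (runs in the foreground)

kubectl get pods --all-namespaces -w

(2) List all nodes from the master node

kubectl get nodes

Handling join errors:

Error:

accepts at most 1 arg(s), received 2

Analysis: the command accepts at most one argument but received two. The cause is most likely the formatting of the join command: I had copied it into Windows and then pasted it back to run it, and the line break inside it was parsed as an extra argument.

Fix: remove the line break and run the command again.

Error:

[preflight] Running pre-flight checks error execution phase preflight: couldn't validate the identity of the API Server: invalid discovery token CA certificate hash: invalid hash "sha256:9f89085f6bfbf60a35935b502a57532204c12a3da3d6412", expected a 32 byte SHA-256 hash, found 23 bytes

Analysis: the message says the hash is 23 bytes long instead of the expected 32, so the sha256 value passed to --discovery-token-ca-cert-hash was cut short when it was copied. I also ran the join a day after init, by which time the bootstrap token had expired (tokens are valid for 24 hours by default), so a new token was needed as well.

Fix: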

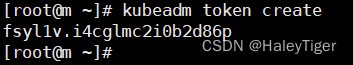

(1) Generate a new token on the master node:

[root@m ~]# kubeadm token create

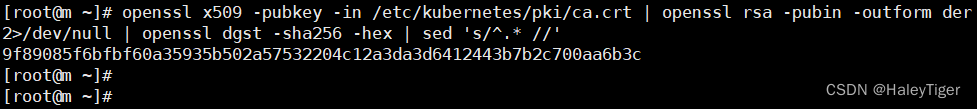

(2) Print the sha256 hash of the CA certificate again on the master node:

[root@m ~]# openssl x509 -pubkey -in /etc/kubernetes/pki/ca.crt | openssl rsa -pubin -outform der 2>/dev/null | openssl dgst -sha256 -hex | sed 's/^.* //'

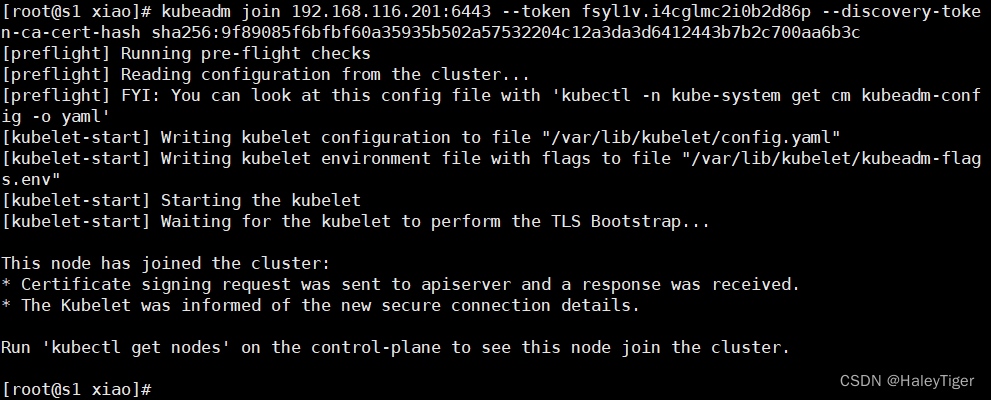

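(3) Re-run the join command on worker01 and worker02 as a single line, substituting your own master IP, the new token and the full hash:

kubeadm join 192.168.116.201:6443 --token fsyl1v.i4cglmc2i0b2d86p --discovery-token-ca-cert-hash sha256:9f89085f6bfbf60a35935b502a57532204c12a3da3d6412443b7b2c700aa6b3c

(4) On the master node, list all nodes again and wait until every node is Ready; this takes roughly 3 to 5 minutes

[root@m ~]# kubectl get nodes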
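Both join errors above come from a mangled command line, so it can help to assemble the command from its parts and check the hash length before running it. The following is a sketch under my own assumptions: `valid_ca_hash` and `join_command` are hypothetical helpers, and port 6443 is taken from the join commands shown in this article.

```shell
#!/bin/sh
# valid_ca_hash HASH: succeed if HASH is "sha256:" followed by exactly 64
# lowercase hexadecimal characters, i.e. a full 32-byte SHA-256 digest.
valid_ca_hash() {
    printf '%s\n' "$1" | grep -Eq '^sha256:[0-9a-f]{64}$'
}

# join_command MASTER_IP TOKEN HASH: print a single-line kubeadm join command,
# or fail with a message when the hash is malformed.
join_command() {
    if ! valid_ca_hash "$3"; then
        echo "bad CA cert hash: $3" >&2
        return 1
    fi
    echo "kubeadm join $1:6443 --token $2 --discovery-token-ca-cert-hash $3"
}

# Values copied from the initialization log earlier in this article.
join_command 192.168.116.170 qzgakq.brqfhysglgh1z285 \
    sha256:ec5a689ac221ad489452c96730e27814f0faa798b058fbe843f8a47388fe910f
```

The truncated hash from the error message fails this check, which is the same condition kubeadm complained about. As an alternative to steps (1) and (2), `kubeadm token create --print-join-command` prints a complete join line with a fresh token.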

Installation complete!

3. Testing (trying out) Pods

(1) Define and create the pod_nginx_rs.yaml file

Run the following command to create pod_nginx_rs.yaml in the current directory:

cat > pod_nginx_rs.yaml <<EOF

apiVersion: apps/v1

kind: ReplicaSet

metadata:

  name: nginx

  labels:

    tier: frontend

spec:

  replicas: 3

  selector:

    matchLabels:

      tier: frontend

  template:

    metadata:

      name: nginx

      labels:

        tier: frontend

    spec:

      containers:

      - name: nginx

        image: nginx

        ports:

        - containerPort: 80

EOF

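(2) Create the pods (from pod_nginx_rs.yaml)

[root@m ~]# kubectl apply -f pod_nginx_rs.yaml

replicaset.apps/nginx created

(3) Check the pods

Note: creating the pods takes some time; wait until every pod is in the Running state.

# Overview of all pods

[root@m ~]# kubectl get pods

# Overview of all pods, including the cluster node each one was scheduled on

[root@m ~]# kubectl get pods -o wide

# Detailed information for specific pods (here, the pods whose names start with nginx)

[root@m ~]# kubectl describe pod nginx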

(4) Scale the pods out through the ReplicaSet

# (1) Scale the pods out through the rs

[root@m ~]# kubectl scale rs nginx --replicas=5

replicaset.extensions/nginx scaled

# (2) Overview of the pods (wait until every pod is in the Running state)

[root@m ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-7b465 1/1 Running 0 16m 192.168.190.67 w1 <none> <none>

nginx-fn8rx 1/1 Running 0 16m 192.168.80.194 w2 <none> <none>

nginx-gp44d 1/1 Running 0 26m 192.168.190.65 w1 <none> <none>

nginx-q2cbz 1/1 Running 0 26m 192.168.80.193 w2 <none> <none>

nginx-xw85v 1/1 Running 0 26m 192.168.190.66 w1 <none> <none>

[root@m ~]#
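Rather than re-reading the listing by eye until every pod is Running, the STATUS column can be counted. This is a small sketch of mine: `count_running` is a hypothetical helper that reads `kubectl get pods` output on standard input, and the sample rows are shortened from the listing above.

```shell
#!/bin/sh
# count_running: read `kubectl get pods` output on stdin and print how many
# pods are in the Running state (STATUS is the third column; the header row
# is skipped).
count_running() {
    awk 'NR > 1 && $3 == "Running" { n++ } END { print n + 0 }'
}

# Sample input shortened from the listing above.
count_running <<'EOF'
NAME          READY   STATUS    RESTARTS   AGE
nginx-7b465   1/1     Running   0          16m
nginx-fn8rx   1/1     Running   0          16m
nginx-gp44d   1/1     Running   0          26m
EOF
```

On the master node this would be used as `kubectl get pods | count_running`; after the scale command the count should reach 5.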

(5) Delete the test pods

[root@m ~]# kubectl delete -f pod_nginx_rs.yaml

replicaset.apps "nginx" deleted

# Check the pods (they are terminating)

[root@m ~]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-7b465 1/1 Terminating 0 21m 192.168.190.67 w1 <none> <none>

nginx-fn8rx 1/1 Terminating 0 21m 192.168.80.194 w2 <none> <none>

nginx-gp44d 1/1 Terminating 0 31m 192.168.190.65 w1 <none> <none>

nginx-q2cbz 1/1 Terminating 0 31m 192.168.80.193 w2 <none> <none>

nginx-xw85v 1/1 Terminating 0 31m 192.168.190.66 w1 <none> <none>

# Check the pods again (none are found)

[root@m ~]# kubectl get pods

No resources found in default namespace.

[root@m ~]#