References:

https://github.com/hiyouga/LLaMA-Factory/blob/main/README_zh.md

https://www.53ai.com/news/qianyanjishu/2015.html

Clone the code:

git clone https://github.com/hiyouga/LLaMA-Factory.git

cd LLaMA-Factory

Build the image and run a container:

cd /ssd/xiedong/glm-4-9b-xd/LLaMA-Factory

docker build -f ./docker/docker-cuda/Dockerfile \
    --build-arg INSTALL_BNB=false \
    --build-arg INSTALL_VLLM=false \
    --build-arg INSTALL_DEEPSPEED=false \
    --build-arg INSTALL_FLASHATTN=false \
    --build-arg PIP_INDEX=https://pypi.org/simple \
    -t llamafactory:latest .

docker run -dit --gpus=all \
    -v ./hf_cache:/root/.cache/huggingface \
    -v ./ms_cache:/root/.cache/modelscope \
    -v ./data:/app/data \
    -v ./output:/app/output \
    -v /ssd/xiedong/glm-4-9b-xd:/ssd/xiedong/glm-4-9b-xd \
    -p 9998:7860 \
    -p 9999:8000 \
    --shm-size 16G \
    llamafactory:latest

docker exec -it a2b34ec1 bash

pip install "bitsandbytes>=0.37.0"

Quick start

The following three commands run LoRA fine-tuning, inference, and merging on the Llama3-8B-Instruct model, respectively.

llamafactory-cli train examples/train_lora/llama3_lora_sft.yaml

llamafactory-cli chat examples/inference/llama3_lora_sft.yaml

llamafactory-cli export examples/merge_lora/llama3_lora_sft.yaml

For advanced usage, including multi-GPU fine-tuning, see examples/README_zh.md.

Tip

Use llamafactory-cli help to display help information.

Visual fine-tuning with LLaMA Board (powered by Gradio)

llamafactory-cli webui

Some background reading: https://www.cnblogs.com/lm970585581/p/18140564

SFT instruction fine-tuning

The official LoRA SFT fine-tuning example:

CUDA_VISIBLE_DEVICES=0 python src/train_bash.py \
    --stage sft \
    --do_train \
    --model_name_or_path path_to_llama_model \
    --dataset alpaca_gpt4_zh \
    --template default \
    --finetuning_type lora \
    --lora_target q_proj,v_proj \
    --output_dir path_to_sft_checkpoint \
    --overwrite_cache \
    --per_device_train_batch_size 4 \
    --gradient_accumulation_steps 4 \
    --lr_scheduler_type cosine \
    --logging_steps 10 \
    --save_steps 1000 \
    --learning_rate 5e-5 \
    --num_train_epochs 3.0 \
    --plot_loss \
    --fp16

Data preparation

The official data preparation guide:

https://github.com/hiyouga/LLaMA-Factory/blob/main/data/README_zh.md

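Preference datasets are used in the reward modeling stage.

This fine-tuning run uses a dataset from an open-source project, available at: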

https://github.com/KMnO4-zx/huanhuan-chat/blob/master/dataset/train/lora/huanhuan.json

After downloading, place the JSON file in the data directory of LLaMA-Factory.
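Before training, it can help to sanity-check the downloaded file. The sketch below assumes the records follow the alpaca-style layout (an "instruction" string, an optional "input" string, and an "output" string), which is what LLaMA-Factory expects by default when a dataset entry only gives a file name. The function name and the sample records are made up for illustration.

```python
import json


def find_invalid_records(records: list) -> list[int]:
    """Return indices of records missing a non-empty 'instruction' or 'output' string."""
    bad = []
    for index, record in enumerate(records):
        ok = (
            isinstance(record, dict)
            and isinstance(record.get("instruction"), str)
            and record["instruction"].strip() != ""
            and isinstance(record.get("output"), str)
            and record["output"].strip() != ""
            # "input" is optional, but must be a string when present.
            and isinstance(record.get("input", ""), str)
        )
        if not ok:
            bad.append(index)
    return bad


# Made-up records, only to show the assumed shape.
sample = json.loads(
    '[{"instruction": "hello", "input": "", "output": "hi there"},'
    ' {"instruction": "missing output"}]'
)
print(find_invalid_records(sample))

# To check the real file from the LLaMA-Factory root:
# with open("data/huanhuan.json", encoding="utf-8") as f:
#     print(find_invalid_records(json.load(f)))
```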

Edit the dataset_info.json file in the data directory.

Simply add the following entry:

"huanhuan": {"file_name": "huanhuan.json"},

As shown in the figures:

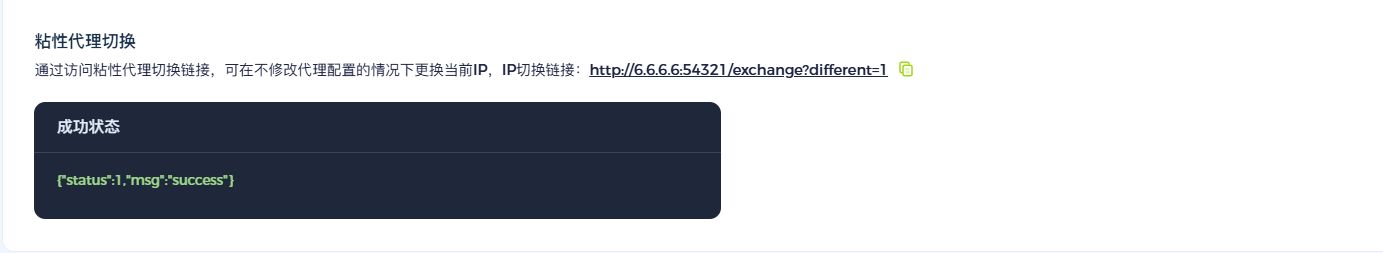

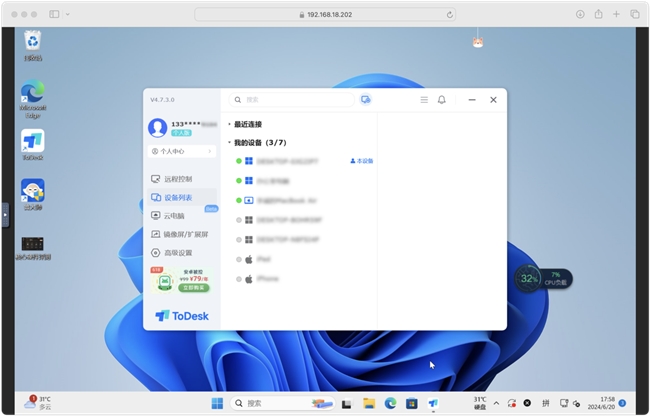
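The dataset_info.json edit described above can also be scripted instead of done by hand. This is a minimal sketch: the helper name `add_dataset_entry` and the stand-in registry are hypothetical, and only the "huanhuan" entry itself comes from the steps above.

```python
import json


def add_dataset_entry(registry_text: str, name: str, file_name: str) -> str:
    """Return the registry JSON text with one dataset entry added or overwritten."""
    registry = json.loads(registry_text)
    registry[name] = {"file_name": file_name}
    # ensure_ascii=False keeps any non-ASCII text in the registry readable.
    return json.dumps(registry, ensure_ascii=False, indent=2) + "\n"


# Tiny stand-in for the real registry (the existing entry is a placeholder).
before = '{"existing_dataset": {"file_name": "existing_dataset.json"}}'
print(add_dataset_entry(before, "huanhuan", "huanhuan.json"))

# To apply it to the real file from the LLaMA-Factory root:
# from pathlib import Path
# path = Path("data/dataset_info.json")
# path.write_text(
#     add_dataset_entry(path.read_text(encoding="utf-8"), "huanhuan", "huanhuan.json"),
#     encoding="utf-8",
# )
```

Going through `json.loads` and `json.dumps` avoids the trailing-comma mistakes that are easy to make when pasting an entry into a large JSON file.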

Enter the container and start the web UI:

llamafactory-cli webui

Open the page in a browser:

http://10.136.19.26:9998/

Training from the web UI kept failing with errors, so instead copy the command it generates and run it inside the container:

llamafactory-cli train \
    --stage sft \
    --do_train True \
    --model_name_or_path /ssd/xiedong/glm-4-9b-xd/glm-4-9b-chat \
    --preprocessing_num_workers 16 \
    --finetuning_type lora \
    --quantization_method bitsandbytes \
    --template glm4 \
    --flash_attn auto \
    --dataset_dir data \
    --dataset huanhuan \
    --cutoff_len 1024 \
    --learning_rate 5e-05 \
    --num_train_epochs 3.0 \
    --max_samples 100000 \
    --per_device_train_batch_size 2 \
    --gradient_accumulation_steps 8 \
    --lr_scheduler_type cosine \
    --max_grad_norm 1.0 \
    --logging_steps 5 \
    --save_steps 100 \
    --warmup_steps 0 \
    --optim adamw_torch \
    --packing False \
    --report_to none \
    --output_dir saves/GLM-4-9B-Chat/lora/train_2024-07-23-04-22-25 \
    --bf16 True \
    --plot_loss True \
    --ddp_timeout 180000000 \
    --include_num_input_tokens_seen True \
    --lora_rank 8 \
    --lora_alpha 32 \
    --lora_dropout 0.1 \
    --lora_target all
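A long flag list like this is easier to keep around as a YAML file, in the style of the quick-start commands. The sketch below converts `--key value` pairs into `key: value` lines, assuming the YAML keys mirror the CLI flag names as they do in the bundled examples. The helper names and the output file name in the closing comment are hypothetical, and the result should be reviewed by hand before use.

```python
import shlex


def format_value(raw: str) -> str:
    """Render one CLI value as a YAML scalar."""
    try:
        int(raw)
        return raw
    except ValueError:
        pass
    try:
        number = float(raw)
    except ValueError:
        return raw  # plain strings and True/False pass through unchanged
    text = repr(number)
    # PyYAML only reads exponent notation as a float when the mantissa has a dot.
    if "e" in text and "." not in text:
        text = text.replace("e", ".0e")
    return text


def flags_to_yaml(command: str) -> str:
    """Turn '--key value' pairs in a shell command into 'key: value' lines."""
    tokens = shlex.split(command.replace("\\\n", " "))
    lines = []
    i = 0
    while i < len(tokens):
        token = tokens[i]
        if token.startswith("--"):
            has_value = i + 1 < len(tokens) and not tokens[i + 1].startswith("--")
            value = format_value(tokens[i + 1]) if has_value else "true"
            lines.append(f"{token[2:]}: {value}")
            i += 2 if has_value else 1
        else:
            i += 1  # skip the program name and subcommand
    return "\n".join(lines) + "\n"


demo = "llamafactory-cli train --stage sft --learning_rate 5e-05 --bf16 True"
print(flags_to_yaml(demo))
```

The output could then be saved to a file such as huanhuan_lora_sft.yaml (a made-up name) and passed to llamafactory-cli train in place of the flags.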

After training finishes:

***** train metrics *****

epoch = 2.9807

num_input_tokens_seen = 741088

total_flos = 36443671GF

train_loss = 2.5584

train_runtime = 0:09:24.59

train_samples_per_second = 19.814

train_steps_per_second = 0.308
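The metrics above can be cross-checked against the training command. This is a rough sketch, assuming (as with the Hugging Face Trainer) that the number of samples per optimizer step equals per-device batch size times gradient accumulation steps times GPU count. The implied GPU count is an inference from rounded numbers, not something the log states.

```python
def parse_runtime(hms: str) -> float:
    """Convert an 'H:MM:SS.ss' runtime string to seconds."""
    hours, minutes, seconds = hms.split(":")
    return int(hours) * 3600 + int(minutes) * 60 + float(seconds)


# Values copied from the metrics and from the training command.
runtime_s = parse_runtime("0:09:24.59")
samples_per_second = 19.814
steps_per_second = 0.308
per_device_batch = 2  # --per_device_train_batch_size
grad_accum = 8        # --gradient_accumulation_steps

samples_per_step = samples_per_second / steps_per_second
# Assumed relation: samples per step = per-device batch * accumulation * GPUs.
estimated_gpus = samples_per_step / (per_device_batch * grad_accum)
estimated_steps = steps_per_second * runtime_s

print(f"runtime: {runtime_s:.2f} s")
print(f"samples per optimizer step: about {samples_per_step:.1f}")
print(f"implied GPU count: about {estimated_gpus:.1f}")
print(f"optimizer steps: about {estimated_steps:.0f}")
```

With these numbers the ratio comes out close to 64 samples per step, which would be consistent with four GPUs at a per-device batch of 2 and 8 accumulation steps.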

chat

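Evaluating the model

This needs about 40 GB of free GPU memory, because the model is large.

As with training, take the command shown by the web UI and run it from the command line: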

CUDA_VISIBLE_DEVICES=1,2,3 llamafactory-cli train \
    --stage sft \
    --model_name_or_path /ssd/xiedong/glm-4-9b-xd/glm-4-9b-chat \
    --preprocessing_num_workers 16 \
    --finetuning_type lora \
    --quantization_method bitsandbytes \
    --template glm4 \
    --flash_attn auto \
    --dataset_dir data \
    --eval_dataset huanhuan \
    --cutoff_len 1024 \
    --max_samples 100000 \
    --per_device_eval_batch_size 2 \
    --predict_with_generate True \
    --max_new_tokens 512 \
    --top_p 0.7 \
    --temperature 0.95 \
    --output_dir saves/GLM-4-9B-Chat/lora/eval_2024-07-23-04-22-25 \
    --do_predict True \
    --adapter_name_or_path saves/GLM-4-9B-Chat/lora/train_2024-07-23-04-22-25

The dataset is fairly large, so I stopped the run before it finished. The results are probably saved here: /app/saves/GLM-4-9B-Chat/lora/eval_2024-07-23-04-22-25
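If a prediction run does complete, the outputs can be paired up for manual inspection. The sketch below rests on an assumption that was not verified in this run: that the output directory contains a generated_predictions.jsonl file with one JSON object per line carrying "label" and "predict" fields. Check the actual directory contents before relying on it. The helper name and sample records are made up.

```python
import json


def load_predictions(jsonl_text: str) -> list[tuple[str, str]]:
    """Parse JSONL text into (label, predict) pairs, skipping blank lines."""
    pairs = []
    for line in jsonl_text.splitlines():
        if line.strip():
            record = json.loads(line)
            pairs.append((record["label"], record["predict"]))
    return pairs


# Made-up records, only to show the assumed shape of each line.
sample = "\n".join([
    json.dumps({"prompt": "q1", "label": "reference 1", "predict": "output 1"}),
    json.dumps({"prompt": "q2", "label": "reference 2", "predict": "output 2"}),
])
for label, predict in load_predictions(sample):
    print(f"expected: {label} | generated: {predict}")

# Assumed file location, based on the output directory mentioned above:
# path = "/app/saves/GLM-4-9B-Chat/lora/eval_2024-07-23-04-22-25/generated_predictions.jsonl"
# with open(path, encoding="utf-8") as f:
#     pairs = load_predictions(f.read())
```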

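Exporting the model

Enter an export path and run the export, for example /ssd/xiedong/glm-4-9b-xd/export_test0723.

Summary

Having gone through all of this, the examples documentation turns out to be very valuable: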

https://github.com/hiyouga/LLaMA-Factory/tree/main/examples

For convenience, I pushed this image:

docker push kevinchina/deeplearning:llamafactory-0.8.3