一、数据录制

数据录制的部分参考了网上的部分代码,代码本身并不复杂,基本都是简单的CARLA语法,关键的一点在于,CARLA内部本身并没有预设的双目摄像头,需要我们添加两个朝向相同的摄像头来组成双目系统,这一点体现在添加相机时的位置和角度。之后利用回调函数进行保存,为了方便构建真值信息,这里我同时添加了gnss和imu的信息。为了让录制过程车辆不会停下来等红绿灯,交通管理的部分已经注释掉了。代码如下:

#!/usr/bin/env python

import glob

import os

import sys

import time

try:sys.path.append(glob.glob('../carla/dist/carla-*%d.%d-%s.egg' % (sys.version_info.major,sys.version_info.minor,'win-amd64' if os.name == 'nt' else 'linux-x86_64'))[0])

except IndexError:passimport carla

import random

import numpy as np

import cv2

from queue import Queue, Empty

import copy

import random

random.seed(0)

from agents.navigation.basic_agent import BasicAgent

from agents.navigation.behavior_agent import BehaviorAgent # args

import argparse

parser = argparse.ArgumentParser()

parser.add_argument('--host', metavar='H', default='127.0.0.1', help='IP of the host server (default: 127.0.0.1)')

parser.add_argument('--port', '-p', default=2000, type=int, help='TCP port to listen to (default: 2000)')

parser.add_argument('--tm_port', default=8000, type=int, help='Traffic Manager Port (default: 8000)')

parser.add_argument('--ego-spawn', type=list, default=None, help='[x,y] in world coordinate')

parser.add_argument('--top-view', default=True, help='Setting spectator to top view on ego car')

parser.add_argument('--map', default='Town10HD_Opt', help='Town Map')

parser.add_argument('--sync', default=True, help='Synchronous mode execution')

parser.add_argument('--sensor-h', default=2.4, help='Sensor Height')

parser.add_argument('--save-path', default='/home/zhihe/Documents/Dataset/CARLA/Town10/', help='Synchronous mode execution')

parser.add_argument('--behavior', type=str, default='normal', help='Choose one of the possible agent behaviors')

args = parser.parse_args()IM_WIDTH = 1392

IM_HEIGHT = 512actor_list, sensor_list = [], []

sensor_type = ['rgb','lidar','imu','gnss']

def main(args):client = carla.Client(args.host, args.port)client.set_timeout(5.0)traffic_manager = client.get_trafficmanager()# world = client.get_world()world_name = args.mapworld = client.load_world(world_name)# 获取所有的交通灯traffic_lights = world.get_actors().filter('traffic.traffic_light')# 将所有交通灯设置为绿灯并冻结状态for traffic_light in traffic_lights:traffic_light.set_state(carla.TrafficLightState.Green)traffic_light.freeze(True) # 冻结当前状态,保持绿灯不变blueprint_library = world.get_blueprint_library()try:original_settings = world.get_settings()settings = world.get_settings()settings.fixed_delta_seconds = 0.05settings.synchronous_mode = Trueworld.apply_settings(settings)traffic_manager.set_synchronous_mode(True)spectator = world.get_spectator()points_in_map = world.get_map().get_spawn_points()start_position = points_in_map[56]end_position = points_in_map[84]ego_vehicle = world.spawn_actor(random.choice(blueprint_library.filter("model3")), start_position)actor_list.append(ego_vehicle)if args.sync:world.tick()else:world.wait_for_tick()physics_control = ego_vehicle.get_physics_control()physics_control.use_sweep_wheel_collision = Trueego_vehicle.apply_physics_control(physics_control)#-------------------------- 进入传感器部分 --------------------------#sensor_queue = Queue()cam_bp = blueprint_library.find('sensor.camera.rgb')# lidar_bp = blueprint_library.find('sensor.lidar.ray_cast')imu_bp = blueprint_library.find('sensor.other.imu')gnss_bp = blueprint_library.find('sensor.other.gnss')cam_bp.set_attribute("image_size_x", "{}".format(IM_WIDTH))cam_bp.set_attribute("image_size_y", "{}".format(IM_HEIGHT))cam_bp.set_attribute("fov", "60")# cam_bp.set_attribute('sensor_tick', '0.1')cam01 = world.spawn_actor(cam_bp, carla.Transform(carla.Location(y=-1, z=args.sensor_h),carla.Rotation(yaw=0)), attach_to=ego_vehicle)cam01.listen(lambda data: sensor_callback(data, sensor_queue, "rgb_left"))sensor_list.append(cam01)cam02 = world.spawn_actor(cam_bp, carla.Transform(carla.Location(y=1, z=args.sensor_h),carla.Rotation(yaw=0)), attach_to=ego_vehicle)cam02.listen(lambda data: sensor_callback(data, sensor_queue, "rgb_right"))sensor_list.append(cam02)# lidar_bp.set_attribute('channels', '64')# lidar_bp.set_attribute('points_per_second', '200000')# lidar_bp.set_attribute('range', '32')# lidar_bp.set_attribute('rotation_frequency', str(int(1/settings.fixed_delta_seconds))) # lidar01 = world.spawn_actor(lidar_bp, carla.Transform(carla.Location(z=args.sensor_h)), attach_to=ego_vehicle)# lidar01.listen(lambda data: sensor_callback(data, sensor_queue, "lidar"))# sensor_list.append(lidar01)imu01 = world.spawn_actor(imu_bp, carla.Transform(carla.Location(z=args.sensor_h)), attach_to=ego_vehicle)imu01.listen(lambda data: sensor_callback(data, sensor_queue, "imu"))sensor_list.append(imu01)gnss01 = world.spawn_actor(gnss_bp, carla.Transform(carla.Location(z=args.sensor_h)), attach_to=ego_vehicle)gnss01.listen(lambda data: sensor_callback(data, sensor_queue, "gnss"))sensor_list.append(gnss01)#-------------------------- 传感器设置完毕 --------------------------## 清空文档file_path = args.save_path +'imu/'+str(args.map)+'.txt'with open(file_path, 'w') as file:file.write("") file_path = args.save_path +'gnss/'+str(args.map)+'.txt'with open(file_path, 'w') as file:file.write("")# 指定要清空图片文件的路径directory = args.save_path +'rgb/image_left'file_list = os.listdir(directory)# 筛选出所有的图片文件并删除for file in file_list:if file.lower().endswith(('.png', '.jpg', '.jpeg', '.gif', '.bmp')):file_path = os.path.join(directory, file)os.remove(file_path)directory = args.save_path +'rgb/image_right'file_list = os.listdir(directory)# 筛选出所有的图片文件并删除for file in file_list:if file.lower().endswith(('.png', '.jpg', '.jpeg', '.gif', '.bmp')):file_path = os.path.join(directory, file)os.remove(file_path)agent = BehaviorAgent(ego_vehicle, behavior=args.behavior)agent.set_destination(end_position.location)while True:# Tick the server# agent.update_information(ego_vehicle)world.tick()# 将CARLA界面摄像头跟随车动loc = ego_vehicle.get_transform().locationspectator.set_transform(carla.Transform(carla.Location(x=loc.x,y=loc.y,z=35),carla.Rotation(yaw=0,pitch=-90,roll=0)))w_frame = world.get_snapshot().frameprint("\nWorld's frame: %d" % w_frame)try:rgbs_left = []rgbs_right = []rgb_timestamp = 0for i in range (0, len(sensor_list)):s_frame, s_name, s_data = sensor_queue.get(True, 1.0)print(" Frame: %d Sensor: %s" % (s_frame, s_name))# sensor_type = s_name.split('_')[0]if s_name == 'rgb_left':rgb_timestamp = s_data.timestamprgbs_left.append(_parse_image_cb(s_data))elif s_name == 'rgb_right':rgb_timestamp = s_data.timestamprgbs_right.append(_parse_image_cb(s_data))elif s_name == 'lidar':lidar = _parse_lidar_cb(s_data)elif s_name == 'imu':imu = s_dataelif s_name == 'gnss':gnss = s_data# 仅用来可视化 可注释rgb_left = np.concatenate(rgbs_left, axis=1)[...,:3]rgb_right = np.concatenate(rgbs_right, axis=1)# cv2.imshow('vizs', visualize_data(rgb, imu_yaw, gnss))# cv2.imshow('vizs', visualize_data(rgb, lidar, imu_yaw, gnss))# cv2.waitKey(100)mkdir_folder(args.save_path)if rgb_left is None or args.save_path is not None:filename = args.save_path +'rgb/image_left/'+str(rgb_timestamp)+'.png'cv2.imwrite(filename, np.array(rgb_left[...,::-1]))if rgb_right is None or args.save_path is not None:filename = args.save_path +'rgb/image_right/'+str(rgb_timestamp)+'.png'cv2.imwrite(filename, np.array(rgb_right[...,::-1]))# filename = args.save_path +'lidar/'+str(w_frame)+'.npy'# np.save(filename, lidar)if imu is None or args.save_path is not None:file_path = args.save_path +'imu/'+str(args.map)+'.txt'with open(file_path, 'a') as file:file.write(str(imu.timestamp)+' '+str(imu.gyroscope.y)+' '+str(imu.gyroscope.x)+' '+str(imu.gyroscope.z)+'\n')if gnss is None or args.save_path is not None:file_path = args.save_path +'gnss/'+str(args.map)+'.txt'with open(file_path, 'a') as file:file.write(str(gnss.timestamp)+' '+str(gnss.latitude)+' '+str(gnss.longitude)+' '+str(gnss.altitude)+'\n')except Empty:print(" Some of the sensor information is missed")if (agent.done()):breakcontrol = agent.run_step()control.manual_gear_shift = Falseego_vehicle.apply_control(control)finally:world.apply_settings(original_settings)traffic_manager.set_synchronous_mode(False)for sensor in sensor_list:sensor.destroy()for actor in actor_list:actor.destroy()print("All cleaned up!")def mkdir_folder(path):for s_type in sensor_type:if not os.path.isdir(os.path.join(path, s_type)):os.makedirs(os.path.join(path, s_type))return Truedef sensor_callback(sensor_data, sensor_queue, sensor_name):# Do stuff with the sensor_data data like save it to disk# Then you just need to add to the queuesensor_queue.put((sensor_data.frame, sensor_name, sensor_data))# modify from world on rail code

# def visualize_data(rgb, lidar, imu_yaw, gnss, text_args=(cv2.FONT_HERSHEY_SIMPLEX, 0.3, (255,255,255), 1)):# canvas = np.array(rgb[...,::-1])# if lidar is not None:

# lidar_viz = lidar_to_bev(lidar).astype(np.uint8)

# lidar_viz = cv2.cvtColor(lidar_viz,cv2.COLOR_GRAY2RGB)

# canvas = np.concatenate([canvas, cv2.resize(lidar_viz.astype(np.uint8), (canvas.shape[0], canvas.shape[0]))], axis=1)# # cv2.putText(canvas, f'yaw angle: {imu_yaw:.3f}', (4, 10), *text_args)

# # cv2.putText(canvas, f'log: {gnss[0]:.3f} alt: {gnss[1]:.3f} brake: {gnss[2]:.3f}', (4, 20), *text_args)# return canvas

def visualize_data(rgb, imu_yaw, gnss, text_args=(cv2.FONT_HERSHEY_SIMPLEX, 0.3, (255,255,255), 1)):canvas = np.array(rgb[...,::-1])# if lidar is not None:# lidar_viz = lidar_to_bev(lidar).astype(np.uint8)# lidar_viz = cv2.cvtColor(lidar_viz,cv2.COLOR_GRAY2RGB)# canvas = np.concatenate([canvas, cv2.resize(lidar_viz.astype(np.uint8), (canvas.shape[0], canvas.shape[0]))], axis=1)# cv2.putText(canvas, f'yaw angle: {imu_yaw:.3f}', (4, 10), *text_args)# cv2.putText(canvas, f'log: {gnss[0]:.3f} alt: {gnss[1]:.3f} brake: {gnss[2]:.3f}', (4, 20), *text_args)return canvas

# modify from world on rail code

def lidar_to_bev(lidar, min_x=-24,max_x=24,min_y=-16,max_y=16, pixels_per_meter=4, hist_max_per_pixel=10):xbins = np.linspace(min_x, max_x+1,(max_x - min_x) * pixels_per_meter + 1,)ybins = np.linspace(min_y, max_y+1,(max_y - min_y) * pixels_per_meter + 1,)# Compute histogram of x and y coordinates of points.hist = np.histogramdd(lidar[..., :2], bins=(xbins, ybins))[0]# Clip histogramhist[hist > hist_max_per_pixel] = hist_max_per_pixel# Normalize histogram by the maximum number of points in a bin we care about.overhead_splat = hist / hist_max_per_pixel * 255.# Return splat in X x Y orientation, with X parallel to car axis, Y perp, both parallel to ground.return overhead_splat[::-1,:]# modify from manual control

def _parse_image_cb(image):array = np.frombuffer(image.raw_data, dtype=np.dtype("uint8"))array = np.reshape(array, (image.height, image.width, 4))array = array[:, :, :3]array = array[:, :, ::-1]return array

# modify from leaderboard

def _parse_lidar_cb(lidar_data):points = np.frombuffer(lidar_data.raw_data, dtype=np.dtype('f4'))points = copy.deepcopy(points)points = np.reshape(points, (int(points.shape[0] / 4), 4))return pointsif __name__ == "__main__":try:main(args)except KeyboardInterrupt:print(' - Exited by user.')

为了方便重复录制数据,这里我是使用预设的地图点作为起点和终点,为了方便查看当前地图的所有地图点,可以使用下面的代码进行所有地图点的可视化。

#!/usr/bin/env python# Copyright (c) 2018 Intel Labs.

# authors: German Ros (german.ros@intel.com)

#

# This work is licensed under the terms of the MIT license.

# For a copy, see <https://opensource.org/licenses/MIT>."""Example of automatic vehicle control from client side."""from __future__ import print_functionimport argparse

import collections

import datetime

import glob

import logging

import math

import os

import numpy.random as random

import re

import sys

import weakref

import cv2try:import pygamefrom pygame.locals import KMOD_CTRLfrom pygame.locals import K_ESCAPEfrom pygame.locals import K_q

except ImportError:raise RuntimeError('cannot import pygame, make sure pygame package is installed')try:import numpy as np

except ImportError:raise RuntimeError('cannot import numpy, make sure numpy package is installed')# ==============================================================================

# -- Find CARLA module ---------------------------------------------------------

# ==============================================================================

try:sys.path.append(glob.glob('../carla/dist/carla-*%d.%d-%s.egg' % (sys.version_info.major,sys.version_info.minor,'win-amd64' if os.name == 'nt' else 'linux-x86_64'))[0])

except IndexError:pass# ==============================================================================

# -- Add PythonAPI for release mode --------------------------------------------

# ==============================================================================

try:sys.path.append(os.path.dirname(os.path.dirname(os.path.abspath(__file__))) + '/carla')

except IndexError:passfrom carla import ColorConverter as ccfrom agents.navigation.behavior_agent import BehaviorAgent # pylint: disable=import-error

from agents.navigation.basic_agent import BasicAgent # pylint: disable=import-errorimport carlaclient = carla.Client('localhost',2000)

world = client.get_world()

#world.set_weather(world.get_weather().ClearNight)

m = world.get_map()

transform = carla.Transform()

spectator = world.get_spectator()

bv_transform = carla.Transform(transform.location + carla.Location(z=250,x=0), carla.Rotation(yaw=0, pitch=-90))

spectator.set_transform(bv_transform)blueprint_library = world.get_blueprint_library()

spawn_points = m.get_spawn_points()for i, spawn_point in enumerate(spawn_points):world.debug.draw_string(spawn_point.location, str(i), life_time=100)world.debug.draw_arrow(spawn_point.location, spawn_point.location + spawn_point.get_forward_vector(), life_time=100)while True:world.wait_for_tick()

二、真值处理

录制过程记录的imu和gnss信息需要进行处理才能拼接成tum格式的groundtruth。下面代码是用于拼接imu和gnss的代码,这里默认imu输出的是时间戳和rpy角度,gnss输出的是时间戳和xyz坐标、转换为tum格式时,rpy角会被转换为四元数形式,而xyz按道理是可以直接用,但是拼接后容易出现无法和SLAM结果对齐的问题,这里还是建议根据SLAM运行结果,调整xyz的顺序。

import numpy as np

from scipy.spatial.transform import Rotation as R# 定义函数,将 RPY 转换为四元数

def rpy_to_quaternion(roll, pitch, yaw):r = R.from_euler('xyz', [roll, pitch, yaw], degrees=False)q = r.as_quat() # [qx, qy, qz, qw]return q[0], q[1], q[2], q[3]# 读取 IMU 文件和 GNSS 文件

imu_data = np.loadtxt('./Town10/imu/Town10HD_Opt.txt')

gnss_data = np.loadtxt('./Town10/gnss/Town10HD_Opt.txt')# 检查时间戳一致性

assert np.array_equal(imu_data[:, 0], gnss_data[:, 0]), "时间戳不一致"# 初始化保存轨迹真值的数据列表

trajectory = []# 遍历每一行数据

for i in range(len(imu_data)):timestamp = imu_data[i, 0]roll, pitch, yaw = imu_data[i, 1], imu_data[i, 2], imu_data[i, 3]tx, ty, tz = gnss_data[i, 1], gnss_data[i, 2], gnss_data[i, 3]# 转换 RPY 为四元数qx, qy, qz, qw = rpy_to_quaternion(roll, pitch, yaw)# 组合数据并添加到轨迹列表trajectory.append([timestamp, 100000*tx, 0*tz, 100000*ty, qx, qy, qz, qw])# 保存结果到新文件

np.savetxt('groundtruth.txt', trajectory, fmt='%.6f', delimiter=' ', header="timestamp tx ty tz qx qy qz qw")三、使用ORBSLAM2运行自建CARLA数据集

在使用ORBSLAM2运行自建数据集时,首先需要自己构建一个time.txt,不然会出现无法读取图像的问题,我在录制数据集时采用的是将时间戳作为文件名,这会导致直接转换为double时溢出,所以这里我限制了六位的长度。

import osdef save_sorted_and_rename_files(directory_path, output_file):# 获取目录下所有以 .png 结尾的文件名filenames = [f for f in os.listdir(directory_path) if f.endswith('.png') and os.path.isfile(os.path.join(directory_path, f))]# 将文件名去掉 .png 后缀并转换为浮点数,再按浮点数排序filenames = sorted(filenames, key=lambda x: float(x.replace('.png', '')))# 重命名文件并写入排序后的文件名到txt文件with open(output_file, 'w') as file:for original_filename in filenames:# 去掉 .png 后缀并保留小数点后六位try:float_value = float(original_filename.replace('.png', ''))new_filename = f"{float_value:.6f}.png"# 重命名文件original_path = os.path.join(directory_path, original_filename)new_path = os.path.join(directory_path, new_filename)os.rename(original_path, new_path)# 写入新的文件名(不带扩展名)file.write(f"{new_filename.replace('.png', '')}\n")except ValueError:# 跳过无法转换为浮点数的文件名continue# 示例用法

directory_path = './Town10/rgb/image_left/' # 替换为你的目录路径

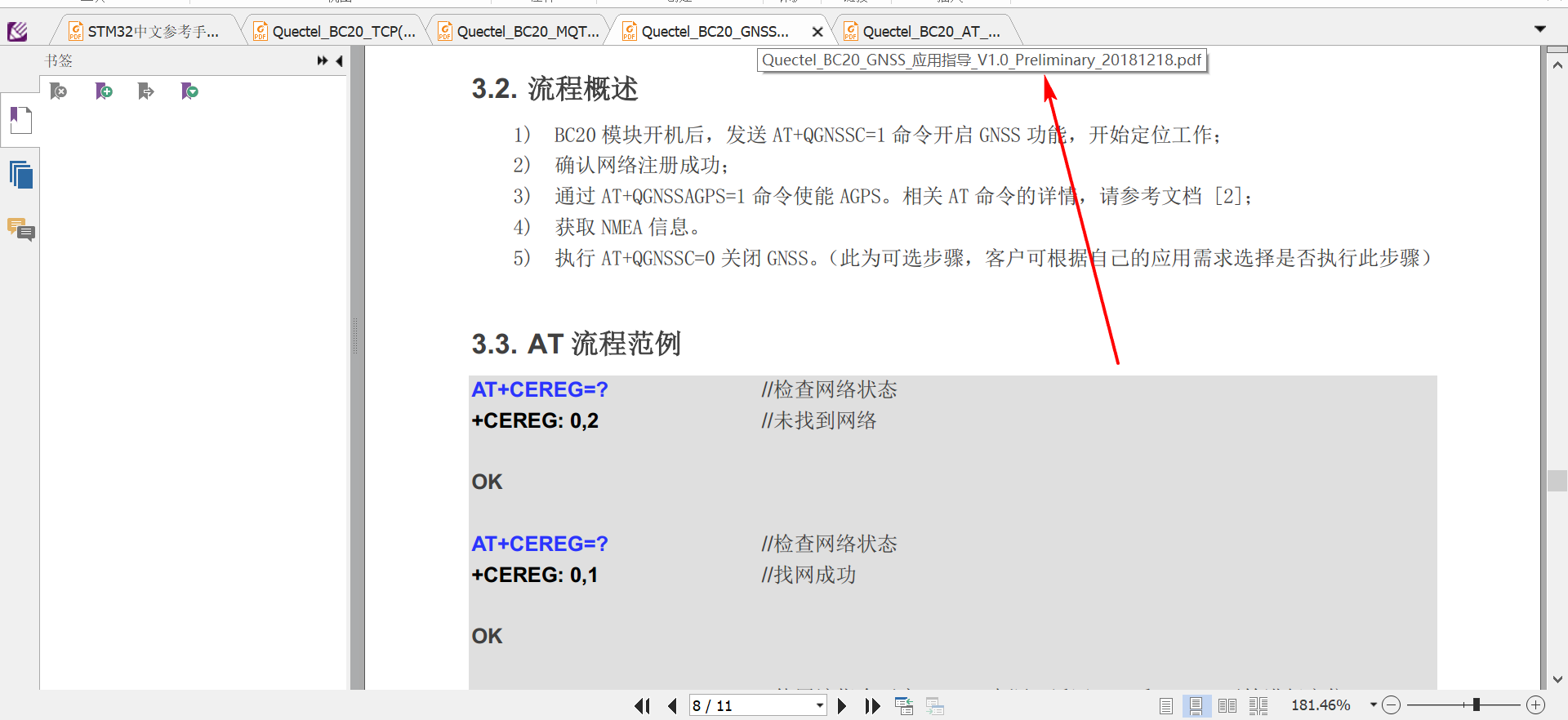

output_file = './Town10/rgb/times.txt' # 输出文件名save_sorted_and_rename_files(directory_path, output_file)除此之外,比较关键的一点是carla中相机的内外参,在仿真环境中添加标定板显然是不现实。在carla的github评论区找到了一个解决方法,这个方法实际上在官方的演示文件里面也用到了。计算内参可以使用下面的公式:

Focus_length = ImageSizeX /(2 * tan(CameraFOV * π / 360))

Center_X = ImageSizeX / 2

Center_Y = ImageSizeY / 2

其中Focus_length为焦距f,Center_X和Center_Y分别为cx和cy,CameraFOV要根据录制数据时的设置进行调整,我前面代码使用的FOV是60。而外参是根据添加相机时位置的设置计算出来的,我前面代码中两个相机朝向相同差别只在y上,根据carla的单位,这里相当于y上差了两米,即基线距离为2m,而orbslam的yaml文件中,Camera.bf字段的单位并不是米,而是基线距离乘以焦距,所以这里我们需要再2m的基础上再乘以前面计算出的焦距。最后修改出来的配置文件为:

%YAML:1.0#--------------------------------------------------------------------------------------------

# Camera Parameters. Adjust them!

#--------------------------------------------------------------------------------------------# Camera calibration and distortion parameters (OpenCV)

Camera.fx: 1206

Camera.fy: 1206

Camera.cx: 696

Camera.cy: 256Camera.k1: 0.0

Camera.k2: 0.0

Camera.p1: 0.0

Camera.p2: 0.0Camera.width: 1392

Camera.height: 512# Camera frames per second

Camera.fps: 20.0# stereo baseline times fx

Camera.bf: 2412# Color order of the images (0: BGR, 1: RGB. It is ignored if images are grayscale)

Camera.RGB: 1# Close/Far threshold. Baseline times.

ThDepth: 40#--------------------------------------------------------------------------------------------

# ORB Parameters

#--------------------------------------------------------------------------------------------# ORB Extractor: Number of features per image

ORBextractor.nFeatures: 2000# ORB Extractor: Scale factor between levels in the scale pyramid

ORBextractor.scaleFactor: 1.2# ORB Extractor: Number of levels in the scale pyramid

ORBextractor.nLevels: 8# ORB Extractor: Fast threshold

# Image is divided in a grid. At each cell FAST are extracted imposing a minimum response.

# Firstly we impose iniThFAST. If no corners are detected we impose a lower value minThFAST

# You can lower these values if your images have low contrast

ORBextractor.iniThFAST: 12

ORBextractor.minThFAST: 7#--------------------------------------------------------------------------------------------

# Viewer Parameters

#--------------------------------------------------------------------------------------------

Viewer.KeyFrameSize: 0.6

Viewer.KeyFrameLineWidth: 2

Viewer.GraphLineWidth: 1

Viewer.PointSize:2

Viewer.CameraSize: 0.7

Viewer.CameraLineWidth: 3

Viewer.ViewpointX: 0

Viewer.ViewpointY: -100

Viewer.ViewpointZ: -0.1

Viewer.ViewpointF: 2000如果配置文件不对,ORBSLAM的运行结果会出现很大差别,尤其是在转弯的时候,如果内外参错了会直接导致转弯偏离巨大。

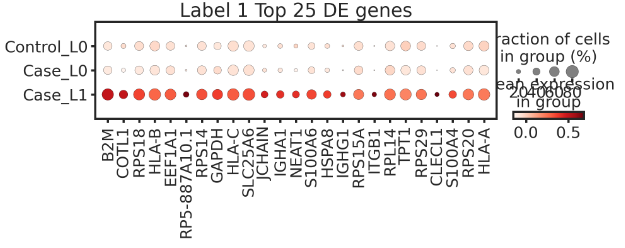

最后放一张成功运行并使用evo进行评价的图: