Table of Contents

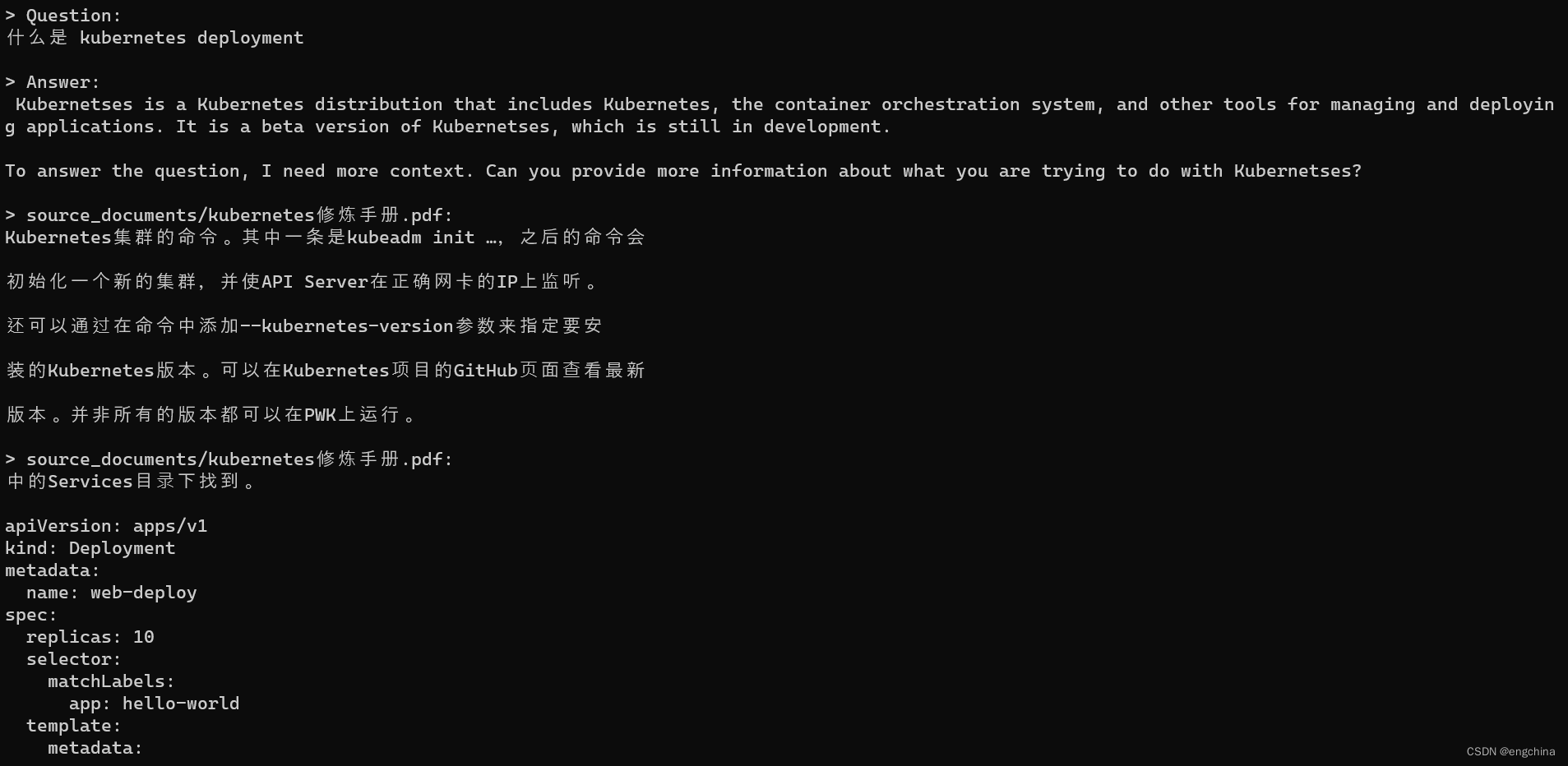

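- 1. A look at the results

- 2. Local deployment

- Deployment environment

- Download

- Create a virtual environment and install dependencies

- Download the model locally

- INT4 inference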

- ```web_demo.py```

- Problems encountered

Original post: http://wangguo.site/posts/9d8c1768.html

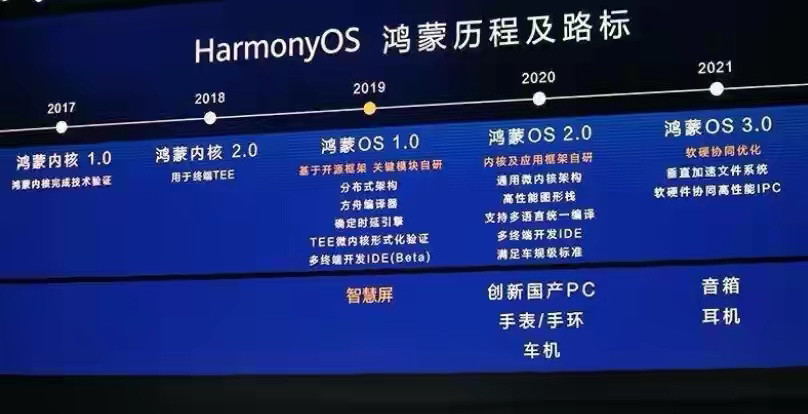

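ChatGLM2-6B is the second-generation version of ChatGLM-6B, an open-source bilingual (Chinese-English) dialogue model.

GitHub repository: https://github.com/THUDM/ChatGLM2-6B

1. A look at the results

2. Local deployment

Deployment environment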

WSL2, Ubuntu 22.04 LTS

    +-----------------------------------------------------------------------------+
    | NVIDIA-SMI 525.104      Driver Version: 528.79       CUDA Version: 12.0     |
    |-------------------------------+----------------------+----------------------+
    | GPU  Name        Persistence-M| Bus-Id        Disp.A | Volatile Uncorr. ECC |
    | Fan  Temp  Perf  Pwr:Usage/Cap|         Memory-Usage | GPU-Util  Compute M. |
    |                               |                      |               MIG M. |
    |===============================+======================+======================|
    |   0  NVIDIA GeForce ...  On   | 00000000:01:00.0  On |                  N/A |
    | N/A   45C    P8     5W /  80W |    928MiB /  6144MiB |      3%      Default |
    |                               |                      |                  N/A |
    +-------------------------------+----------------------+----------------------+

    +-----------------------------------------------------------------------------+
    | Processes:                                                                  |
    |  GPU   GI   CI        PID   Type   Process name                  GPU Memory |
    |        ID   ID                                                   Usage      |
    |=============================================================================|
    |    0   N/A  N/A        23      G   /Xwayland                       N/A      |
    +-----------------------------------------------------------------------------+
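The GPU in this environment reports 6144 MiB of memory, 928 MiB of which is already in use, which is presumably why the INT4 model is chosen further down. As a rough sketch of how to read the free memory out of such a row, the helper below (`parse_memory_usage` is a hypothetical name, not part of any NVIDIA tool) extracts the two numbers from the `Memory-Usage` column:

```python
import re
from typing import Tuple


def parse_memory_usage(line: str) -> Tuple[int, int]:
    """Extract (used_mib, total_mib) from an nvidia-smi row such as '928MiB / 6144MiB'."""
    match = re.search(r"(\d+)MiB\s*/\s*(\d+)MiB", line)
    if match is None:
        raise ValueError(f"no memory usage found in: {line!r}")
    return int(match.group(1)), int(match.group(2))


# The memory row from the environment shown in this post.
row = "| N/A   45C    P8     5W /  80W |    928MiB /  6144MiB |      3%      Default |"
used_mib, total_mib = parse_memory_usage(row)
free_mib = total_mib - used_mib
print(f"{free_mib} MiB free of {total_mib} MiB")  # prints "5216 MiB free of 6144 MiB"
```

The power column (`5W / 80W`) is ignored because the pattern only matches values with a `MiB` suffix.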

Download

git clone https://github.com/THUDM/ChatGLM2-6B

cd ChatGLM2-6B

Create a virtual environment and install dependencies

virtualenv venv

source venv/bin/activate

pip install -r requirements.txt

Download the model locally

git clone https://huggingface.co/THUDM/chatglm2-6b-int4

Then download the corresponding model parameter files from Tsinghua Cloud and copy them into the chatglm2-6b-int4 folder.
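If the repository was cloned without Git LFS fetching the large files, those files exist in the folder only as small text pointer stubs, and the copied parameter files are meant to replace them. The following is a minimal sketch for checking that no stubs remain; `find_lfs_pointer_stubs` is a hypothetical helper, and it relies only on the fact that a Git LFS pointer file begins with the line `version https://git-lfs.github.com/spec/v1`.

```python
from pathlib import Path
from typing import List

# Every Git LFS pointer file starts with this line.
LFS_POINTER_PREFIX = b"version https://git-lfs.github.com/spec/v1"


def find_lfs_pointer_stubs(model_dir: str) -> List[str]:
    """Return the names of files in model_dir that are still Git LFS pointer stubs."""
    stubs = []
    for path in sorted(Path(model_dir).iterdir()):
        if not path.is_file():
            continue  # skip sub-folders such as .git
        with path.open("rb") as handle:
            head = handle.read(len(LFS_POINTER_PREFIX))
        if head == LFS_POINTER_PREFIX:
            stubs.append(path.name)
    return stubs
```

Calling `find_lfs_pointer_stubs("./chatglm2-6b-int4")` after copying the files should return an empty list; any name it does return is a file that still needs to be replaced with the real download.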

INT4 inference

First edit web_demo.py as follows:

    # Line 7: point the model at the local parameter folder
    # model = AutoModel.from_pretrained("THUDM/chatglm2-6b-int4", trust_remote_code=True).cuda()
    model = AutoModel.from_pretrained("./chatglm2-6b-int4", trust_remote_code=True).cuda()

web_demo.py

Run

python web_demo.py
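The edit above changes only the model line. As far as I can tell, the repository's web_demo.py also builds a tokenizer with `AutoTokenizer.from_pretrained` just before it, so it may be worth pointing both at the same local folder. Below is a minimal sketch of loading the two together outside the web UI; `load_chat_model` is a hypothetical helper, and the default folder name assumes the clone location used in this post.

```python
import os


def load_chat_model(model_dir: str = "./chatglm2-6b-int4"):
    """Load tokenizer and model from one local folder (needs a CUDA GPU)."""
    # Fail early with a clear message instead of falling back to a Hub lookup.
    if not os.path.isdir(model_dir):
        raise FileNotFoundError(f"model folder not found: {os.path.abspath(model_dir)}")

    # Imported here so the path check above works even without transformers installed.
    from transformers import AutoModel, AutoTokenizer

    tokenizer = AutoTokenizer.from_pretrained(model_dir, trust_remote_code=True)
    model = AutoModel.from_pretrained(model_dir, trust_remote_code=True).cuda()
    return tokenizer, model.eval()


# Example use, following the chat call shown in the ChatGLM2-6B README:
# tokenizer, model = load_chat_model()
# response, history = model.chat(tokenizer, "你好", history=[])
# print(response)
```

Checking the folder first means a mistyped path raises `FileNotFoundError` right away, rather than being interpreted as a model identifier on the Hugging Face Hub.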

Problems encountered

Problem 1

OSError: model/chatglm2-6b is not a local folder and is not a valid model identifier listed on 'https://huggingface.co/models'

If this is a private repository, make sure to pass a token having permission to this repo with `use_auth_token` or log in with `huggingface-cli login` and pass `use_auth_token=True`.

Solution: run

huggingface-cli login

then open the link it prints, generate a new token, and paste the token into the shell.

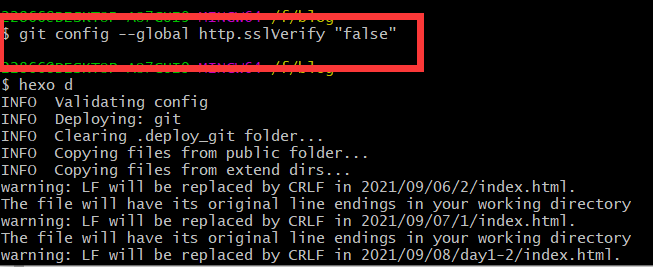
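The same login can also be done from Python with `huggingface_hub.login`, which is convenient when no interactive prompt is available. This is only a sketch: `login_from_env` is a hypothetical helper, and the environment variable name `HF_TOKEN` is an assumption about where the token generated above has been stored.

```python
import os


def login_from_env(var_name: str = "HF_TOKEN") -> bool:
    """Log in to the Hugging Face Hub with a token read from an environment variable.

    Returns False without contacting the Hub when the variable is unset or empty.
    """
    token = os.environ.get(var_name)
    if not token:
        return False

    # Imported lazily so the helper can be defined without huggingface_hub installed.
    from huggingface_hub import login

    login(token=token)
    return True
```

A script could call `login_from_env()` once before `from_pretrained` and fall back to the interactive `huggingface-cli login` when it returns `False`.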

Problem 2

RuntimeError: Internal: src/sentencepiece_processor.cc(1101) [model_proto->ParseFromArray(serialized.data(), serialized.size())]

Solution: run

sudo apt install libcudart11.0 libcublaslt11
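The command above installs the CUDA runtime and cuBLASLt shared libraries. To confirm afterwards that the system's library search can see them, something like the following sketch may help. It uses the standard-library `ctypes.util.find_library`; the short names `cudart` and `cublasLt` are my assumption about how those packages name their `.so` files, and `find_cuda_libraries` is a hypothetical helper.

```python
import ctypes.util
from typing import Dict, Optional, Tuple


def find_cuda_libraries(names: Tuple[str, ...] = ("cudart", "cublasLt")) -> Dict[str, Optional[str]]:
    """Map each short library name to the file the system finds for it, or None."""
    return {name: ctypes.util.find_library(name) for name in names}


for name, location in find_cuda_libraries().items():
    status = location if location is not None else "not found"
    print(f"{name}: {status}")
```

A `not found` entry after installation would suggest the libraries ended up somewhere the loader does not search, which is worth ruling out before retrying `python web_demo.py`.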