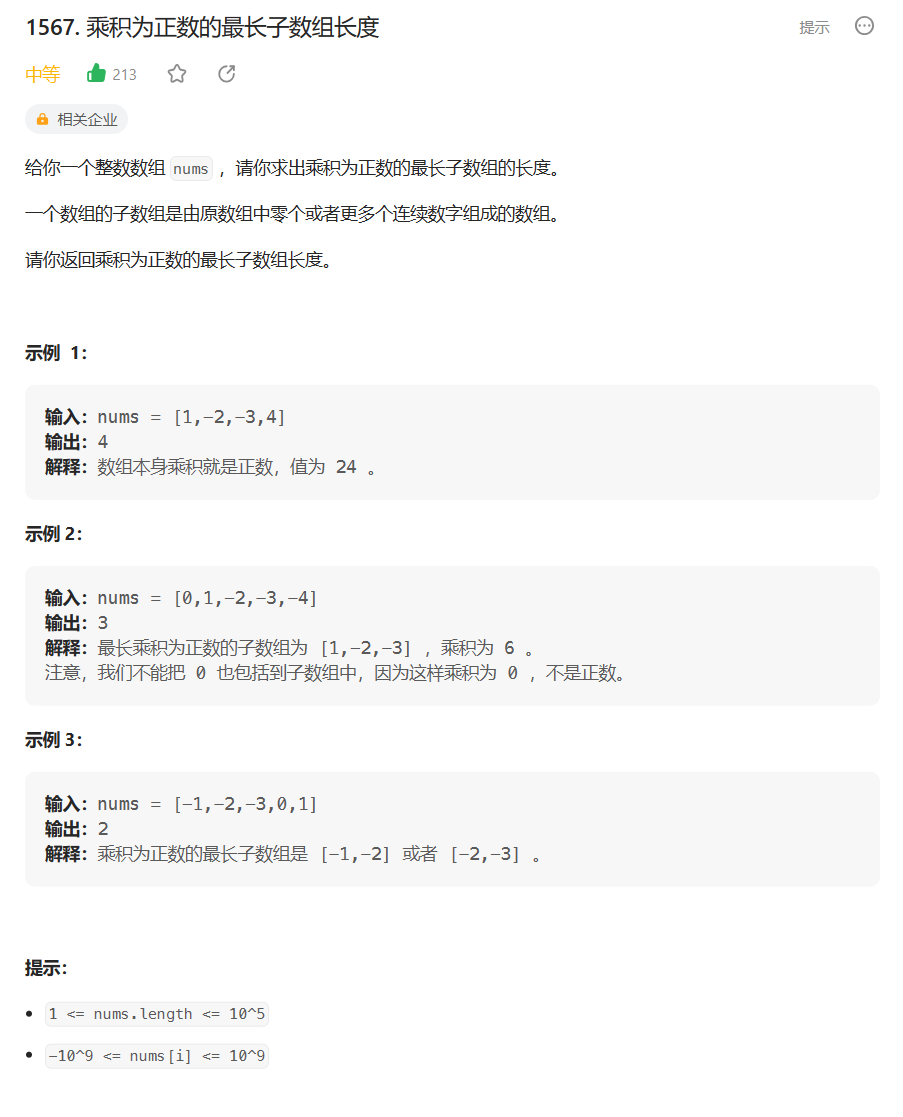

[oneAPI] Image Classification on CIFAR-10

- Image classification

- Parameters and packages

- Loading the data

- Model

- Training

- Results

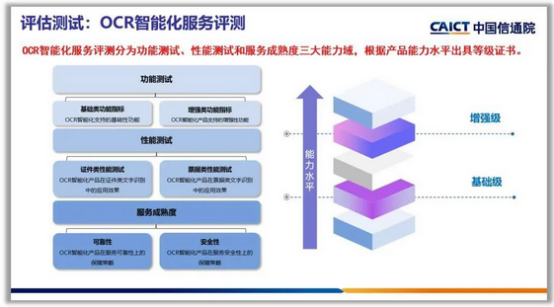

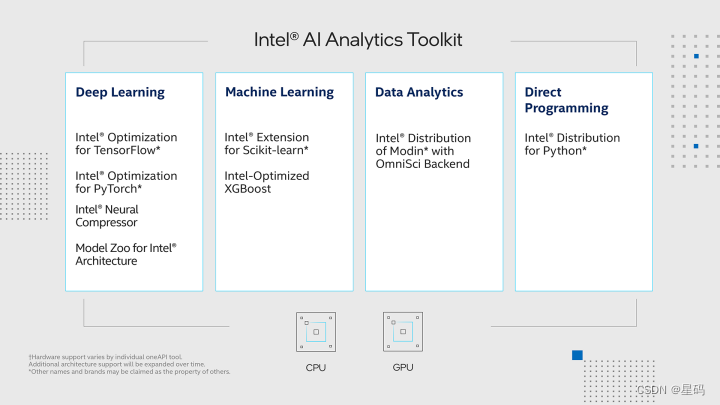

- oneAPI

Competition: https://marketing.csdn.net/p/f3e44fbfe46c465f4d9d6c23e38e0517

Intel® DevCloud for oneAPI: https://devcloud.intel.com/oneapi/get_started/aiAnalyticsToolkitSamples/

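Image classification

This project uses PyTorch together with Intel® Optimization for PyTorch, which extends PyTorch with optimizations that further improve performance on Intel hardware, so that the CIFAR-10 image classification task trains and runs faster and more efficiently.

The data comes from CIFAR-10, a widely used computer vision dataset of 60000 color images of 32x32 pixels in 10 classes, with 6000 images per class. It is commonly used to train and evaluate models for image classification and object recognition.

The dataset is split into a training set of 50000 images and a test set of 10000 images.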

CIFAR-10 contains the following 10 classes:

- airplane

- automobile

- bird

- cat

- deer

- dog

- frog

- horse

- ship

- truck
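For reference, torchvision's `CIFAR10` dataset numbers these classes 0 to 9 in the order listed above. Here is a minimal sketch of turning a predicted index back into a readable name; `CLASS_NAMES` and `label_to_name` are illustrative names and are not part of the original code.

```python
# Class names in the label order used by CIFAR-10 (index 0 to 9).
CLASS_NAMES = (
    "airplane", "automobile", "bird", "cat", "deer",
    "dog", "frog", "horse", "ship", "truck",
)


def label_to_name(label: int) -> str:
    """Map an integer label (0-9) to its class name (hypothetical helper)."""
    if not 0 <= label < len(CLASS_NAMES):
        raise ValueError(f"label must be in 0..{len(CLASS_NAMES) - 1}, got {label}")
    return CLASS_NAMES[label]


if __name__ == "__main__":
    print(label_to_name(3))
```

The same lookup works on the index returned by `torch.max(outputs, 1)` in the test loop, after converting it to a Python `int`.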

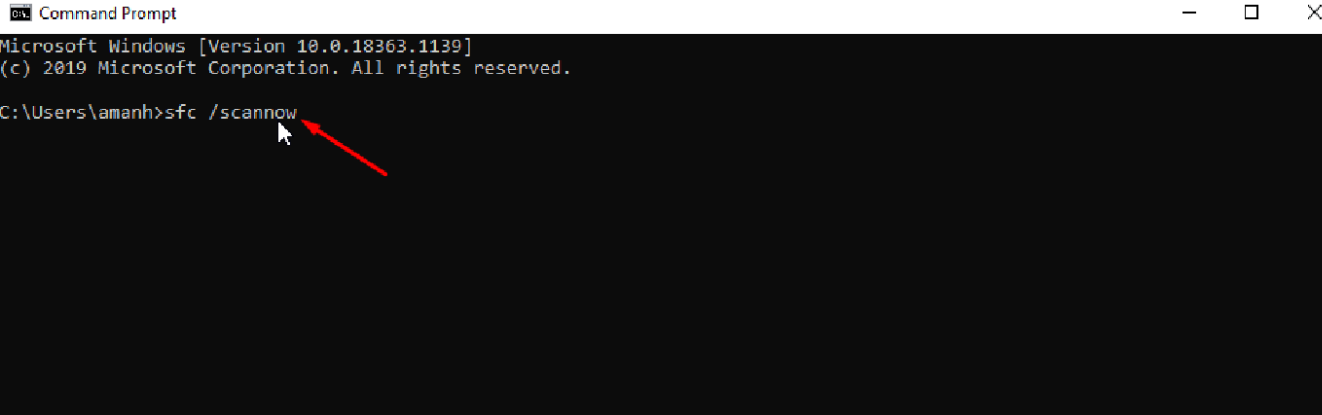

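Parameters and packages

    import torch
    import torch.nn as nn
    import torchvision
    import torchvision.transforms as transforms
    import intel_extension_for_pytorch as ipex

    # Device configuration: use an Intel GPU ('xpu') when one is available.
    # The check has to query the XPU backend; torch.cuda.is_available() only
    # reports NVIDIA GPUs and would pick the wrong device.
    device = torch.device('xpu' if hasattr(torch, 'xpu') and torch.xpu.is_available() else 'cpu')

    # Hyper-parameters
    num_epochs = 80
    batch_size = 100
    learning_rate = 0.001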
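To get a feel for what these hyper-parameters imply, the arithmetic below works out how many optimizer steps one epoch takes and how often the training loop will log. It is a small sketch: the 50000 figure comes from the dataset description, the 100-step logging interval from the training loop, and the variable names are illustrative.

```python
import math

# Values from the hyper-parameter block and the dataset description.
num_epochs = 80
batch_size = 100
train_images = 50_000   # size of the CIFAR-10 training set
log_every = 100         # the training loop prints the loss every 100 steps

# A DataLoader without drop_last yields ceil(N / batch_size) batches,
# which is the value len(train_loader) reports.
steps_per_epoch = math.ceil(train_images / batch_size)
total_updates = steps_per_epoch * num_epochs
logs_per_epoch = steps_per_epoch // log_every

print(steps_per_epoch, total_updates, logs_per_epoch)
```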

Loading the data

    # Image preprocessing modules (augmentation applied to training images only)
    transform = transforms.Compose([
        transforms.Pad(4),
        transforms.RandomHorizontalFlip(),
        transforms.RandomCrop(32),
        transforms.ToTensor()])

    # CIFAR-10 dataset
    train_dataset = torchvision.datasets.CIFAR10(root='./data/',
                                                 train=True,
                                                 transform=transform,
                                                 download=True)

    test_dataset = torchvision.datasets.CIFAR10(root='./data/',
                                                train=False,
                                                transform=transforms.ToTensor())

    # Data loader
    train_loader = torch.utils.data.DataLoader(dataset=train_dataset,
                                               batch_size=batch_size,
                                               shuffle=True)

    test_loader = torch.utils.data.DataLoader(dataset=test_dataset,
                                              batch_size=batch_size,
                                              shuffle=False)
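The augmentation pipeline pads each 32x32 image by 4 pixels per side (giving 40x40), flips it horizontally with probability 0.5, and then cuts out a random 32x32 window. The sketch below mimics that geometry on a plain nested list, without torchvision. `augment` and its arguments are hypothetical names, and zero padding is assumed, which is the default fill of `transforms.Pad`.

```python
import random


def augment(image, pad=4, size=32, rng=random):
    """Zero-pad a 2D image, maybe flip it, then take a random size x size crop."""
    height, width = len(image), len(image[0])
    padded_w = width + 2 * pad
    # Zero padding on all four sides.
    padded = [[0] * padded_w for _ in range(pad)]
    padded += [[0] * pad + list(row) + [0] * pad for row in image]
    padded += [[0] * padded_w for _ in range(pad)]
    # Horizontal flip with probability 0.5.
    if rng.random() < 0.5:
        padded = [row[::-1] for row in padded]
    # Random top-left corner chosen so the crop stays inside the padded image.
    top = rng.randint(0, height + 2 * pad - size)
    left = rng.randint(0, padded_w - size)
    return [row[left:left + size] for row in padded[top:top + size]]


if __name__ == "__main__":
    image = [[1] * 32 for _ in range(32)]
    out = augment(image, rng=random.Random(0))
    print(len(out), len(out[0]))
```

Because the crop offset ranges over 0 to 8 in each direction, the network sees the original content shifted by up to 4 pixels either way, with the uncovered border filled by zeros.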

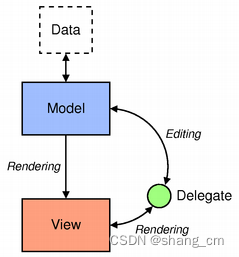

Model

    # 3x3 convolution
    def conv3x3(in_channels, out_channels, stride=1):
        return nn.Conv2d(in_channels, out_channels, kernel_size=3,
                         stride=stride, padding=1, bias=False)

    # Residual block
    class ResidualBlock(nn.Module):
        def __init__(self, in_channels, out_channels, stride=1, downsample=None):
            super(ResidualBlock, self).__init__()
            self.conv1 = conv3x3(in_channels, out_channels, stride)
            self.bn1 = nn.BatchNorm2d(out_channels)
            self.relu = nn.ReLU(inplace=True)
            self.conv2 = conv3x3(out_channels, out_channels)
            self.bn2 = nn.BatchNorm2d(out_channels)
            self.downsample = downsample

        def forward(self, x):
            residual = x
            out = self.conv1(x)
            out = self.bn1(out)
            out = self.relu(out)
            out = self.conv2(out)
            out = self.bn2(out)
            # Match the shortcut to the main path when shape or channels change
            if self.downsample is not None:
                residual = self.downsample(x)
            out += residual
            out = self.relu(out)
            return out

    # ResNet
    class ResNet(nn.Module):
        def __init__(self, block, layers, num_classes=10):
            super(ResNet, self).__init__()
            self.in_channels = 16
            self.conv = conv3x3(3, 16)
            self.bn = nn.BatchNorm2d(16)
            self.relu = nn.ReLU(inplace=True)
            self.layer1 = self.make_layer(block, 16, layers[0])
            self.layer2 = self.make_layer(block, 32, layers[1], 2)
            self.layer3 = self.make_layer(block, 64, layers[2], 2)
            self.avg_pool = nn.AvgPool2d(8)
            self.fc = nn.Linear(64, num_classes)

        def make_layer(self, block, out_channels, blocks, stride=1):
            downsample = None
            if (stride != 1) or (self.in_channels != out_channels):
                downsample = nn.Sequential(
                    conv3x3(self.in_channels, out_channels, stride=stride),
                    nn.BatchNorm2d(out_channels))
            layers = []
            layers.append(block(self.in_channels, out_channels, stride, downsample))
            self.in_channels = out_channels
            for i in range(1, blocks):
                layers.append(block(out_channels, out_channels))
            return nn.Sequential(*layers)

        def forward(self, x):
            out = self.conv(x)
            out = self.bn(out)
            out = self.relu(out)
            out = self.layer1(out)
            out = self.layer2(out)
            out = self.layer3(out)
            out = self.avg_pool(out)
            out = out.view(out.size(0), -1)  # flatten to (batch, 64)
            out = self.fc(out)
            return out
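It can help to check why the final layer is `nn.Linear(64, num_classes)`. The sketch below traces the spatial size of a 32x32 input through the network using the standard convolution output-size formula. `conv_out` is an illustrative helper, not part of the original code, and only the first convolution of each layer is traced because the remaining ones use stride 1 and keep the size unchanged.

```python
def conv_out(size, kernel=3, stride=1, padding=1):
    """Output side length of a square convolution or pooling window."""
    return (size + 2 * padding - kernel) // stride + 1


size = 32                                        # CIFAR-10 images are 32x32
stem = conv_out(size)                            # conv3x3(3, 16), stride 1
after_layer1 = conv_out(stem, stride=1)          # 16 channels
after_layer2 = conv_out(after_layer1, stride=2)  # 32 channels
after_layer3 = conv_out(after_layer2, stride=2)  # 64 channels
# AvgPool2d(8): kernel 8, stride 8, no padding
after_pool = conv_out(after_layer3, kernel=8, stride=8, padding=0)
fc_inputs = 64 * after_pool * after_pool         # features fed to the linear layer

print(after_layer1, after_layer2, after_layer3, after_pool, fc_inputs)
```

The feature map shrinks from 32 to 16 to 8 across the three layers, and the 8x8 average pool reduces it to 1x1, which leaves exactly 64 features per image.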

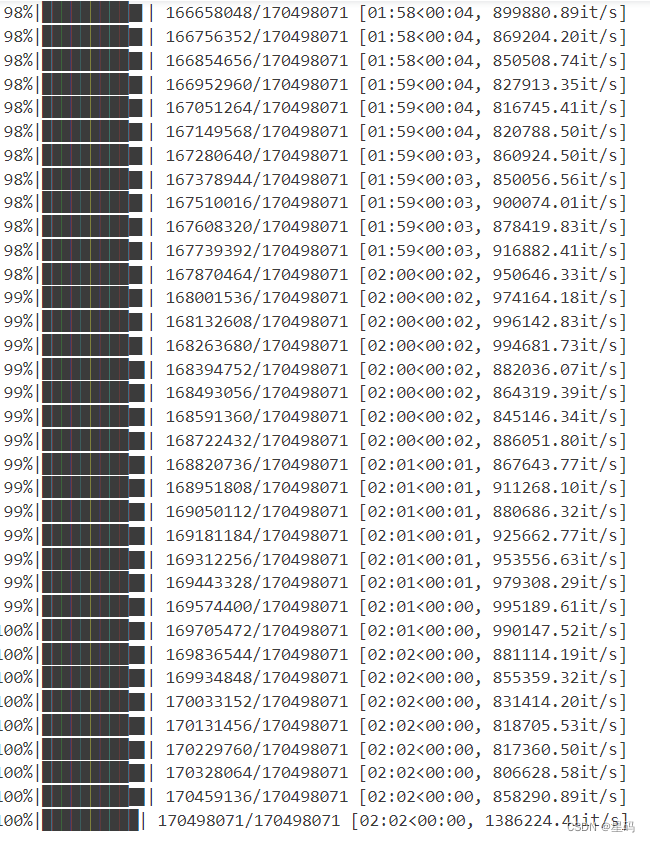

Training

    model = ResNet(ResidualBlock, [2, 2, 2]).to(device)

    # Loss and optimizer
    criterion = nn.CrossEntropyLoss()
    optimizer = torch.optim.Adam(model.parameters(), lr=learning_rate)

    # Apply Intel Extension for PyTorch optimization against the model object
    # and optimizer object. The model is put in training mode first, as the
    # extension's training examples do.
    model.train()
    model, optimizer = ipex.optimize(model, optimizer=optimizer)

    # For updating learning rate
    def update_lr(optimizer, lr):
        for param_group in optimizer.param_groups:
            param_group['lr'] = lr

    # Train the model
    total_step = len(train_loader)
    curr_lr = learning_rate

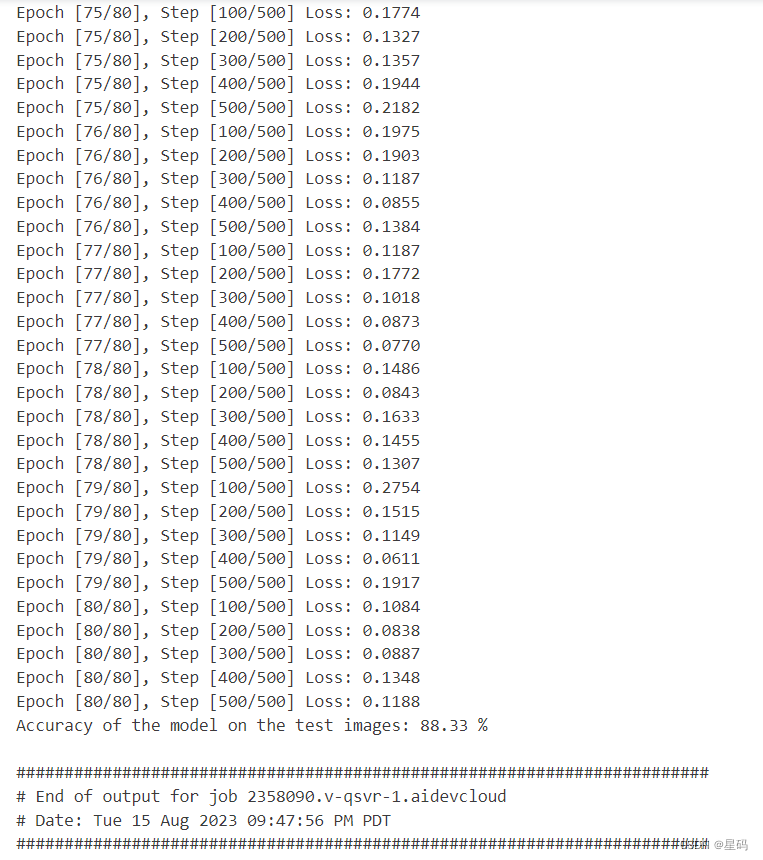

    for epoch in range(num_epochs):
        for i, (images, labels) in enumerate(train_loader):
            images = images.to(device)
            labels = labels.to(device)

            # Forward pass
            outputs = model(images)
            loss = criterion(outputs, labels)

            # Backward and optimize
            optimizer.zero_grad()
            loss.backward()
            optimizer.step()

            if (i + 1) % 100 == 0:
                print("Epoch [{}/{}], Step [{}/{}] Loss: {:.4f}"
                      .format(epoch + 1, num_epochs, i + 1, total_step, loss.item()))

        # Decay learning rate
        if (epoch + 1) % 20 == 0:
            curr_lr /= 3
            update_lr(optimizer, curr_lr)

    # Test the model
    model.eval()
    with torch.no_grad():
        correct = 0
        total = 0
        for images, labels in test_loader:
            images = images.to(device)
            labels = labels.to(device)
            outputs = model(images)
            _, predicted = torch.max(outputs.data, 1)
            total += labels.size(0)
            correct += (predicted == labels).sum().item()

        print('Accuracy of the model on the test images: {} %'.format(100 * correct / total))

    # Save the model checkpoint
    torch.save(model.state_dict(), 'resnet.ckpt')
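The training loop divides the learning rate by 3 at the end of every 20th epoch. As a rough sketch, the step schedule this produces can be written in closed form; `lr_for_epoch` is a hypothetical helper, and its defaults mirror the values used in this post.

```python
def lr_for_epoch(epoch, base_lr=0.001, every=20, factor=3.0):
    """Learning rate in effect during a 1-based epoch.

    The loop divides the rate by `factor` at the end of every `every`-th
    epoch, so epoch e has already seen (e - 1) // every decays.
    """
    return base_lr / factor ** ((epoch - 1) // every)


if __name__ == "__main__":
    for epoch in (1, 20, 21, 41, 61, 80):
        print(epoch, lr_for_epoch(epoch))
```

Epochs 1 to 20 run at the base rate, and each following block of 20 epochs runs at one third of the previous block's rate. The decay applied after epoch 80 never takes effect, because training has already finished.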

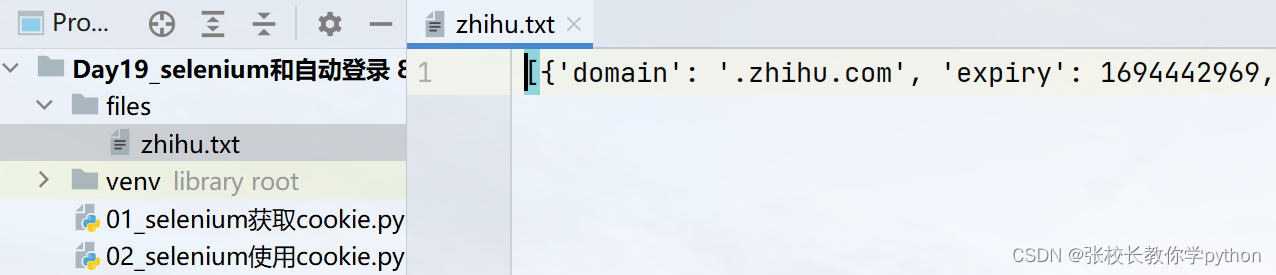

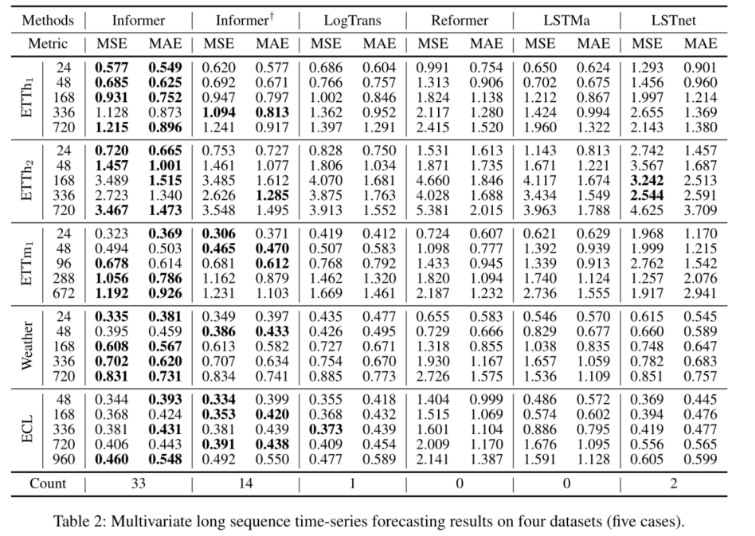

Results

oneAPI

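The parts of the code above that are specific to Intel Extension for PyTorch are collected here:

    import intel_extension_for_pytorch as ipex

    # Device configuration (query the XPU backend rather than CUDA)
    device = torch.device('xpu' if hasattr(torch, 'xpu') and torch.xpu.is_available() else 'cpu')

    # Model (the ResNet defined in the Model section)
    model = ResNet(ResidualBlock, [2, 2, 2]).to(device)

    # Loss and optimizer
    criterion = nn.CrossEntropyLoss()
    optimizer = torch.optim.Adam(model.parameters(), lr=learning_rate)

    # Apply Intel Extension for PyTorch optimization against the model object
    # and optimizer object.
    model.train()
    model, optimizer = ipex.optimize(model, optimizer=optimizer)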