目录

一、环境准备

1.1、主机初始化配置

1.2、部署docker环境

二、部署kubernetes集群

2.1、组件介绍

2.2、配置阿里云yum源

2.3、安装kubelet kubeadm kubectl

2.4、配置init-config.yaml

2.5、安装master节点

2.6、安装node节点

2.7、安装flannel、cni

2.8、部署测试应用

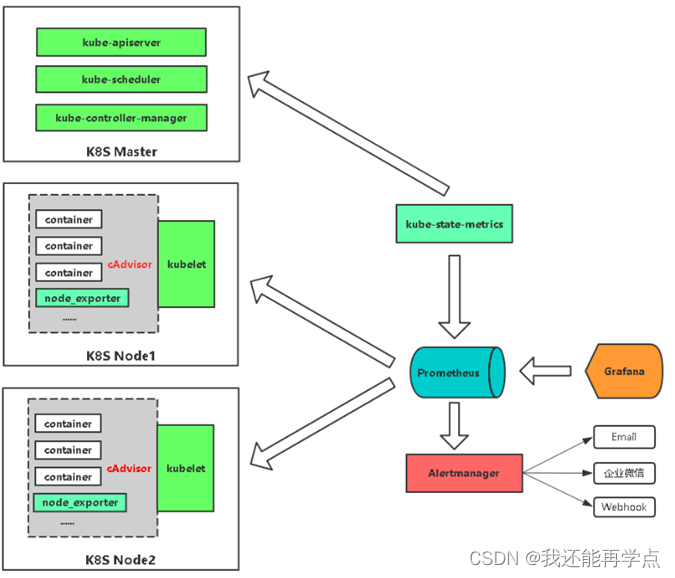

3、部署Prometheus监控平台

3.1、准备Prometheus相关YAML文件

3.2、部署prometheus

4、部署Grafana服务

4.1、部署Grafana相关yaml文件

4.2、配置Grafana数据源

1. Environment Preparation

| Operating system | IP address | Hostname | Components |
| --- | --- | --- | --- |
| CentOS 7.5 | 192.168.147.137 | k8s-master | kubeadm, kubelet, kubectl, docker-ce |
| CentOS 7.5 | 192.168.147.139 | k8s-node01 | kubeadm, kubelet, kubectl, docker-ce |
| CentOS 7.5 | 192.168.147.140 | k8s-node02 | kubeadm, kubelet, kubectl, docker-ce |

Note: each host should have at least 2 CPU cores and 2 GB of memory.

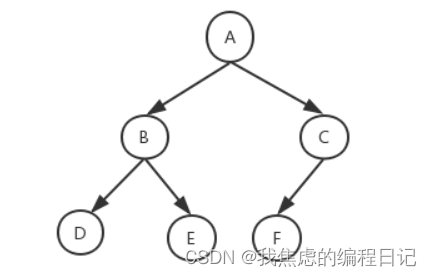

Project topology

1.1 Host initialization

On all hosts, disable the firewall, SELinux and NetworkManager:

[root@localhost ~]# setenforce 0

[root@localhost ~]# iptables -F

[root@localhost ~]# systemctl stop firewalld

[root@localhost ~]# systemctl disable firewalld

[root@localhost ~]# systemctl stop NetworkManager

[root@localhost ~]# systemctl disable NetworkManager

[root@localhost ~]# sed -i '/^SELINUX=/s/enforcing/disabled/' /etc/selinux/config

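Set each host's hostname (a different one on every host) and bind the names in /etc/hosts. hostnamectl makes the name persistent across reboots. On the master:

[root@localhost ~]# hostnamectl set-hostname k8s-master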

[root@localhost ~]# bash

[root@k8s-master ~]# cat << EOF >> /etc/hosts

192.168.147.137 k8s-master

192.168.147.139 k8s-node01

192.168.147.140 k8s-node02

EOF

[root@k8s-master ~]# scp /etc/hosts 192.168.147.139:/etc/

[root@k8s-master ~]# scp /etc/hosts 192.168.147.140:/etc/

On k8s-node01:

[root@localhost ~]# hostnamectl set-hostname k8s-node01

[root@localhost ~]# bash

[root@k8s-node01 ~]#

On k8s-node02:

[root@localhost ~]# hostnamectl set-hostname k8s-node02

[root@localhost ~]# bash

[root@k8s-node02 ~]#
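Re-running the `cat << EOF >> /etc/hosts` step appends the same lines again. A minimal idempotent alternative, assuming a POSIX shell with awk; `add_host_entry` is a hypothetical helper name, not part of any tool:

```shell
# add_host_entry FILE IP NAME
# Hypothetical helper: append "IP NAME" to FILE unless a non-comment line
# already lists NAME as one of its hostnames.
add_host_entry() {
    local file=$1 ip=$2 name=$3
    if [ -f "$file" ] && awk -v n="$name" '
        $1 !~ /^#/ { for (i = 2; i <= NF; i++) if ($i == n) found = 1 }
        END { exit !found }' "$file"; then
        return 0    # NAME already mapped, nothing to do
    fi
    printf '%s %s\n' "$ip" "$name" >> "$file"
}
```

Usage on each host: `for h in '192.168.147.137 k8s-master' '192.168.147.139 k8s-node01' '192.168.147.140 k8s-node02'; do add_host_entry /etc/hosts $h; done` (the unquoted `$h` deliberately splits into IP and name).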

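Basic host initialization (run on all hosts):

[root@k8s-master ~]# yum -y install vim wget net-tools lrzsz

Turn swap off now and keep it off after reboots (by default kubelet refuses to start while swap is enabled):

[root@k8s-master ~]# swapoff -a

[root@k8s-master ~]# sed -i '/swap/s/^/#/' /etc/fstab

Load br_netfilter and let bridged traffic pass through iptables:

[root@k8s-node01 ~]# cat << EOF >> /etc/sysctl.conf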

net.bridge.bridge-nf-call-ip6tables = 1

net.bridge.bridge-nf-call-iptables = 1

EOF

[root@k8s-node01 ~]# modprobe br_netfilter

[root@k8s-node01 ~]# sysctl -p
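`modprobe br_netfilter` only affects the running kernel, so the module is not loaded again after a reboot. A minimal sketch that persists both settings through systemd's drop-in directories (`/etc/modules-load.d`, `/etc/sysctl.d`), assuming a systemd host such as CentOS 7; the function name and the `k8s.conf` file names are arbitrary choices, and the optional ROOT argument only exists so the sketch can be tried on a scratch directory:

```shell
# persist_k8s_net_settings [ROOT]
# Hypothetical helper: write drop-in files so br_netfilter is loaded at boot
# and the bridge sysctls are re-applied. ROOT defaults to "" (the real /).
persist_k8s_net_settings() {
    local root=${1:-}
    mkdir -p "$root/etc/modules-load.d" "$root/etc/sysctl.d" || return 1
    # read by systemd-modules-load.service at boot
    printf 'br_netfilter\n' > "$root/etc/modules-load.d/k8s.conf"
    # read by systemd-sysctl.service at boot and by `sysctl --system`
    printf '%s\n' \
        'net.bridge.bridge-nf-call-ip6tables = 1' \
        'net.bridge.bridge-nf-call-iptables = 1' \
        > "$root/etc/sysctl.d/k8s.conf"
}
```

Run `persist_k8s_net_settings` as root, then `sysctl --system` to apply the drop-in immediately. Keeping the lines in /etc/sysctl.conf as well is harmless.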

1.2 Deploying Docker

Deploy Docker on all three hosts: in this setup (Kubernetes v1.19) Docker is the container runtime whose containers Kubernetes orchestrates.

[root@k8s-master ~]# wget -O /etc/yum.repos.d/CentOS-Base.repo http://mirrors.aliyun.com/repo/Centos-7.repo

[root@k8s-master ~]# yum install -y yum-utils device-mapper-persistent-data lvm2

When installing Docker with yum, the Aliyun mirror repository is recommended.

[root@k8s-master ~]# yum-config-manager --add-repo https://mirrors.aliyun.com/docker-ce/linux/centos/docker-ce.repo

[root@k8s-master ~]# yum clean all && yum makecache fast

[root@k8s-master ~]# yum -y install docker-ce

[root@k8s-master ~]# systemctl start docker

[root@k8s-master ~]# systemctl enable docker

Registry mirror (configure on all hosts):

[root@k8s-master ~]# cat << END > /etc/docker/daemon.json

{"registry-mirrors":[ "https://nyakyfun.mirror.aliyuncs.com" ]

}

END

[root@k8s-master ~]# systemctl daemon-reload

[root@k8s-master ~]# systemctl restart docker
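The heredoc above hard-codes a single mirror. If several mirrors are wanted, a small generator keeps the JSON well-formed. This is only a sketch: `docker_daemon_json` is a hypothetical name, and redirecting its output to /etc/docker/daemon.json replaces any other settings already in that file:

```shell
# docker_daemon_json MIRROR...
# Hypothetical helper: print a daemon.json containing only "registry-mirrors".
# Assumes the URLs contain no double quotes or backslashes.
docker_daemon_json() {
    local list="" m
    for m in "$@"; do
        # quote each URL and join the entries with ", "
        list="${list:+$list, }\"$m\""
    done
    printf '{\n  "registry-mirrors": [ %s ]\n}\n' "$list"
}
```

For example `docker_daemon_json https://nyakyfun.mirror.aliyuncs.com > /etc/docker/daemon.json`, followed by the same `systemctl daemon-reload` and `systemctl restart docker`.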

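2. Deploying the Kubernetes cluster

2.1 Components

All three nodes need the following three components:

- kubeadm: the installation tool; it runs all control-plane components as containers

- kubectl: the command-line client for the Kubernetes API

- kubelet: the agent that runs on every node and starts the Pods' containers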

2.2 Configuring the Aliyun yum repository

Likewise, the Aliyun mirror is recommended for installing Kubernetes with yum.

[root@k8s-master ~]# cat <<EOF > /etc/yum.repos.d/kubernetes.repo

[kubernetes]

name=Kubernetes

baseurl=https://mirrors.aliyun.com/kubernetes/yum/repos/kubernetes-el7-x86_64/

enabled=1

gpgcheck=1

repo_gpgcheck=1

gpgkey=https://mirrors.aliyun.com/kubernetes/yum/doc/yum-key.gpg https://mirrors.aliyun.com/kubernetes/yum/doc/rpm-package-key.gpg

EOF

[root@k8s-master ~]# ls /etc/yum.repos.d/

backup Centos-7.repo CentOS-Media.repo CentOS-x86_64-kernel.repo docker-ce.repo kubernetes.repo

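2.3 Installing kubelet, kubeadm and kubectl

On all hosts, install the packages pinned to the version used in this guide (v1.19.0); an unpinned install pulls the newest release, which will not match kubernetesVersion in init-config.yaml:

[root@k8s-master ~]# yum install -y kubelet-1.19.0 kubeadm-1.19.0 kubectl-1.19.0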

[root@k8s-master ~]# systemctl enable kubelet

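Right after installation, kubelet cannot be started with systemctl start kubelet: it only starts successfully once the node has been initialized as the master (kubeadm init) or has joined the cluster (kubeadm join).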
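Before moving on, it can help to confirm that the three components listed in 2.1 are on the PATH of every host. A minimal sketch; `missing_k8s_tools` is a hypothetical helper name:

```shell
# missing_k8s_tools
# Hypothetical helper: print the name of each required binary not found on PATH.
missing_k8s_tools() {
    local tool
    for tool in kubeadm kubelet kubectl; do
        command -v "$tool" >/dev/null 2>&1 || echo "$tool"
    done
}
```

Empty output means all three are installed; `kubeadm version -o short` should then report v1.19.0.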

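2.4 Configuring init-config.yaml

kubeadm has many configuration options. In a running cluster its configuration is stored in a ConfigMap, but it can also be kept in a configuration file, which makes complex settings easier to manage. The kubeadm config command writes the configuration to such a file.

Perform the following on the master node (192.168.147.137). Generate a default init-config.yaml with:

[root@k8s-master ~]# kubeadm config print init-defaults > init-config.yaml

Then edit init-config.yaml; the comments mark the values that were changed or are worth checking: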

[root@k8s-master ~]# cat init-config.yaml

apiVersion: kubeadm.k8s.io/v1beta2

bootstrapTokens:

- groups:

  - system:bootstrappers:kubeadm:default-node-token

  token: abcdef.0123456789abcdef

  ttl: 24h0m0s

  usages:

  - signing

  - authentication

kind: InitConfiguration

localAPIEndpoint:

  advertiseAddress: 192.168.147.137   # IP address of the master node

  bindPort: 6443

nodeRegistration:

  criSocket: /var/run/dockershim.sock

  name: k8s-master   # if you use a hostname, make sure it resolves; otherwise use the IP address

  taints:

  - effect: NoSchedule

    key: node-role.kubernetes.io/master

---

apiServer:

  timeoutForControlPlane: 4m0s

apiVersion: kubeadm.k8s.io/v1beta2

certificatesDir: /etc/kubernetes/pki

clusterName: kubernetes

controllerManager: {}

dns:

  type: CoreDNS

etcd:

  local:

    dataDir: /var/lib/etcd   # host directory mounted by the etcd container

imageRepository: registry.aliyuncs.com/google_containers   # changed to a mirror reachable from China

kind: ClusterConfiguration

kubernetesVersion: v1.19.0

networking:

  dnsDomain: cluster.local

  serviceSubnet: 10.96.0.0/12

  podSubnet: 10.244.0.0/16   # added: Pod network CIDR

scheduler: {}
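The edits to advertiseAddress, imageRepository and podSubnet can also be scripted so the file is reproducible. A minimal awk sketch: `patch_init_config` is a hypothetical helper, it copies the indentation of the line it rewrites (and of serviceSubnet for the new podSubnet line), and it does not touch nodeRegistration.name or any other key:

```shell
# patch_init_config FILE ADVERTISE_IP IMAGE_REPO POD_CIDR
# Hypothetical helper: rewrite advertiseAddress and imageRepository in place
# and (re)insert podSubnet right after serviceSubnet, reusing its indentation.
patch_init_config() {
    local file=$1 ip=$2 repo=$3 cidr=$4 tmp rc
    tmp=$(mktemp) || return 1
    awk -v ip="$ip" -v repo="$repo" -v cidr="$cidr" '
        { match($0, /^ */); indent = substr($0, 1, RLENGTH) }
        /^ *advertiseAddress:/ { print indent "advertiseAddress: " ip; next }
        /^ *imageRepository:/  { print indent "imageRepository: " repo; next }
        /^ *podSubnet:/        { next }   # dropped here, re-added below
        { print }
        /^ *serviceSubnet:/    { print indent "podSubnet: " cidr }
    ' "$file" > "$tmp" && cat "$tmp" > "$file"
    rc=$?
    rm -f "$tmp"
    return "$rc"
}
```

Usage: `patch_init_config init-config.yaml 192.168.147.137 registry.aliyuncs.com/google_containers 10.244.0.0/16`. Running it twice leaves a single podSubnet line; if the file has no serviceSubnet line, no podSubnet is written.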

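2.5 Installing the master node

List the required images. In this walkthrough they are loaded from image archives prepared in advance: the loop below runs docker load on every file in the current directory, so run it in a directory that contains only those archives. On a host with Internet access, kubeadm config images pull --config init-config.yaml pulls them instead.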

[root@k8s-master ~]# kubeadm config images list --config init-config.yaml

W0816 18:15:37.343955 20212 configset.go:348] WARNING: kubeadm cannot validate component configs for API groups [kubelet.config.k8s.io kubeproxy.config.k8s.io]

registry.aliyuncs.com/google_containers/kube-apiserver:v1.19.0

registry.aliyuncs.com/google_containers/kube-controller-manager:v1.19.0

registry.aliyuncs.com/google_containers/kube-scheduler:v1.19.0

registry.aliyuncs.com/google_containers/kube-proxy:v1.19.0

registry.aliyuncs.com/google_containers/pause:3.2

registry.aliyuncs.com/google_containers/etcd:3.4.9-1

registry.aliyuncs.com/google_containers/coredns:1.7.0

[root@k8s-master ~]# ls | while read line

do

docker load < "$line"

done
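Because the images come from local archives instead of being pulled, it is worth confirming that every image kubeadm expects is present before running kubeadm init. A sketch that compares the `kubeadm config images list` output with `docker images`; both function names are hypothetical:

```shell
# missing_images REQUIRED_FILE PRESENT_FILE
# Print each line of REQUIRED_FILE that does not appear verbatim in PRESENT_FILE.
missing_images() {
    grep -vxF -f "$2" "$1" || true   # grep exits 1 when nothing is missing
}

# check_required_images CONFIG
# Hypothetical wrapper: list images kubeadm needs for CONFIG that Docker lacks.
check_required_images() {
    local config=$1 required present
    required=$(mktemp) && present=$(mktemp) || return 1
    # discard stderr so warnings are not mistaken for image names
    kubeadm config images list --config "$config" 2>/dev/null > "$required"
    docker images --format '{{.Repository}}:{{.Tag}}' > "$present"
    missing_images "$required" "$present"
    rm -f "$required" "$present"
}
```

`check_required_images init-config.yaml` prints nothing when all seven images listed above have been loaded.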

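Initialize the master node

[root@k8s-master ~]# kubeadm init --config=init-config.yaml    # initialize the cluster, then follow the instructions it prints

By default kubectl looks for a config file in the .kube directory under the current user's home directory. Copy the admin.conf generated in the [kubeconfig] phase of the initialization to ~/.kube/config: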

[root@k8s-master ~]# mkdir -p $HOME/.kube

[root@k8s-master ~]# cp -i /etc/kubernetes/admin.conf $HOME/.kube/config

[root@k8s-master ~]# chown $(id -u):$(id -g) $HOME/.kube/config

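A kubeadm installation does not include a network plugin, so the freshly initialized cluster has no Pod networking: kubectl get nodes on k8s-master reports every node as NotReady, and the CoreDNS Pods cannot serve requests.

2.6 Installing the worker nodes

On each worker node, run the kubeadm join command printed at the end of the master installation: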

[root@k8s-node01 ~]# kubeadm join 192.168.147.137:6443 --token abcdef.0123456789abcdef --discovery-token-ca-cert-hash sha256:db9458ca9d8eaae330ab33da5e28f61778515af2ec06ff14f79d94285445ece9

[root@k8s-master ~]# kubectl get nodes

NAME STATUS ROLES AGE VERSION

k8s-master NotReady master 2m22s v1.19.0

k8s-node01 NotReady <none> 15s v1.19.0

k8s-node02 NotReady <none> 11s v1.19.0

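As mentioned above, no network plugin was configured when k8s-master was initialized, so the nodes cannot communicate properly and all report NotReady. The worker nodes added with kubeadm join are nevertheless already listed on k8s-master.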
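The join command appears at the end of the kubeadm init output, split over two lines. If that output was saved, for example with `kubeadm init --config=init-config.yaml | tee kubeadm-init.log` (the log file name is only an example), a sketch like the following pulls the command back out as a single line. The bootstrap token in init-config.yaml has `ttl: 24h0m0s`, so after a day run `kubeadm token create --print-join-command` on the master to get a fresh command. `extract_join_cmd` is a hypothetical helper:

```shell
# extract_join_cmd LOGFILE
# Hypothetical helper: print every "kubeadm join ..." command found in LOGFILE,
# joining backslash-continued lines into one line.
extract_join_cmd() {
    awk '
        /^[ \t]*kubeadm join/ { grab = 1; cmd = "" }
        grab {
            line = $0
            sub(/^[ \t]+/, "", line)              # drop leading indentation
            more = sub(/[ \t]*\\$/, "", line)     # 1 if the line ended with a backslash
            cmd = (cmd == "" ? line : cmd " " line)
            if (!more) { print cmd; grab = 0 }
        }
    ' "$1"
}
```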

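2.7 Installing flannel and CNI

The nodes are NotReady because no network plugin is installed yet, so the connection between the nodes and the master is not fully working. The most popular Kubernetes network plugins include Flannel, Calico, Canal and Weave; this guide uses Flannel.

Upload flannel_v0.12.0-amd64.tar and cni-plugins-linux-amd64-v0.8.6.tgz to all hosts, then on each host: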

[root@k8s-master ~]# docker load < flannel_v0.12.0-amd64.tar

[root@k8s-master ~]# tar xf cni-plugins-linux-amd64-v0.8.6.tgz

[root@k8s-master ~]# cp flannel /opt/cni/bin/

Upload kube-flannel.yml to the master.

On the master:

[root@k8s-master ~]# kubectl apply -f kube-flannel.yml

[root@k8s-master ~]# kubectl get nodes
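flannel's manifest carries its own Pod network (the `Network` field of `net-conf.json` in kube-flannel.yml, 10.244.0.0/16 in the upstream file), and it has to agree with podSubnet in init-config.yaml. A rough pre-apply check with sed, assuming one podSubnet line and one `"Network": "..."` line; `pod_cidr_matches_flannel` is a hypothetical name:

```shell
# pod_cidr_matches_flannel KUBEADM_CONFIG FLANNEL_MANIFEST
# Hypothetical check: succeed only if podSubnet equals flannel's "Network".
pod_cidr_matches_flannel() {
    local pod flannel
    # first "podSubnet: <cidr>" value, ignoring any trailing comment
    pod=$(sed -n 's/^ *podSubnet: *\([^ #]*\).*/\1/p' "$1" | head -n 1)
    # first "Network": "<cidr>" value inside net-conf.json
    flannel=$(sed -n 's/.*"Network": *"\([^"]*\)".*/\1/p' "$2" | head -n 1)
    echo "kubeadm podSubnet=$pod, flannel Network=$flannel"
    [ -n "$pod" ] && [ "$pod" = "$flannel" ]
}
```

Usage: `pod_cidr_matches_flannel init-config.yaml kube-flannel.yml && kubectl apply -f kube-flannel.yml`.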

NAME STATUS ROLES AGE VERSION

k8s-master Ready master 7m8s v1.19.0

k8s-node01 Ready <none> 5m1s v1.19.0

k8s-node02 Ready <none> 4m57s v1.19.0

[root@k8s-master ~]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-6d56c8448f-8282g 1/1 Running 0 7m19s

coredns-6d56c8448f-wrdw6 1/1 Running 0 7m19s

etcd-k8s-master 1/1 Running 0 7m30s

kube-apiserver-k8s-master 1/1 Running 0 7m30s

kube-controller-manager-k8s-master 1/1 Running 0 7m30s

kube-flannel-ds-amd64-pvzxl 1/1 Running 0 62s

kube-flannel-ds-amd64-qkjtd 1/1 Running 0 62s

kube-flannel-ds-amd64-szwp4 1/1 Running 0 62s

kube-proxy-9fbkb 1/1 Running 0 7m19s

kube-proxy-p2txx 1/1 Running 0 5m28s

kube-proxy-zpb98 1/1 Running 0 5m32s

kube-scheduler-k8s-master 1/1 Running 0 7m30s

All nodes are now in the Ready state.

2.8 Deploying a test application

Import the test image on all worker nodes:
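Instead of re-running kubectl get nodes by hand until flannel is up, the check can be polled. A sketch that parses the STATUS column of `kubectl get nodes --no-headers`; both function names and the 5-second interval are illustrative choices:

```shell
# not_ready_nodes: read `kubectl get nodes --no-headers` on stdin and print the
# name of every node whose STATUS does not start with "Ready"
# (a cordoned node shows "Ready,SchedulingDisabled" and counts as Ready here).
not_ready_nodes() {
    awk '{ split($2, s, ","); if (s[1] != "Ready") print $1 }'
}

# wait_for_nodes_ready [TIMEOUT_SECONDS]
# Hypothetical helper: poll every 5 s until all nodes are Ready or time runs out.
wait_for_nodes_ready() {
    local timeout=${1:-300} waited=0 out="" pending=""
    while :; do
        out=$(kubectl get nodes --no-headers 2>/dev/null) || out=""
        if [ -n "$out" ]; then
            pending=$(printf '%s\n' "$out" | not_ready_nodes)
            [ -z "$pending" ] && return 0
        fi
        if [ "$waited" -ge "$timeout" ]; then
            echo "nodes not Ready: ${pending:-unknown}" >&2
            return 1
        fi
        sleep 5
        waited=$((waited + 5))
    done
}
```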

[root@k8s-node01 ~]# docker load < nginx-1.19.tar

[root@k8s-node01 ~]# docker tag nginx nginx:1.19.6

Create a Deployment in the Kubernetes cluster to verify that Pods run normally.

[root@k8s-master demo]# rz -E

rz waiting to receive.

[root@k8s-master demo]# vim nginx-deployment.yaml

apiVersion: apps/v1

kind: Deployment

metadata:

  name: nginx-deployment

  labels:

    app: nginx

spec:

  replicas: 3

  selector:

    matchLabels:

      app: nginx

  template:

    metadata:

      labels:

        app: nginx

    spec:

      containers:

      - name: nginx

        image: nginx:1.19.6

        ports:

        - containerPort: 80

After writing the Deployment manifest, create it with kubectl create. kubectl get pods then shows that the three Pod replicas have been created automatically.

[root@k8s-master demo]# kubectl create -f nginx-deployment.yaml

[root@k8s-master demo]# kubectl get pods

NAME READY STATUS RESTARTS AGE

nginx-deployment-76ccf9dd9d-29ch8 1/1 Running 0 8s

nginx-deployment-76ccf9dd9d-lm7nl 1/1 Running 0 8s

nginx-deployment-76ccf9dd9d-lx29n 1/1 Running 0 8s

[root@k8s-master demo]# kubectl get pods -o wide

NAME READY STATUS RESTARTS AGE IP NODE NOMINATED NODE READINESS GATES

nginx-deployment-76ccf9dd9d-29ch8 1/1 Running 0 18s 10.244.2.4 k8s-node02 <none> <none>

nginx-deployment-76ccf9dd9d-lm7nl 1/1 Running 0 18s 10.244.1.3 k8s-node01 <none> <none>

nginx-deployment-76ccf9dd9d-lx29n 1/1 Running 0 18s 10.244.2.3 k8s-node02 <none> <none>

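Create the Service manifest

The nginx-service manifest defines a Service named nginx-service whose label selector is app: nginx. Its type is NodePort, which lets traffic from outside the cluster reach the containers. The ports list exposes port 80 on the Service (port) and forwards it to port 80 in the container (targetPort); the externally reachable node port is assigned automatically, 31487 in the output below.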

[root@k8s-master demo]# vim nginx-service.yaml

kind: Service

apiVersion: v1

metadata:

  name: nginx-service

spec:

  selector:

    app: nginx

  type: NodePort

  ports:

  - protocol: TCP

    port: 80

    targetPort: 80

[root@k8s-master demo]# kubectl create -f nginx-service.yaml

service/nginx-service created

[root@k8s-master demo]# kubectl get svc

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

kubernetes ClusterIP 10.96.0.1 <none> 443/TCP 7m46s

nginx-service NodePort 10.106.168.130 <none> 80:31487/TCP 10s
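The NodePort (31487 here) is picked from the NodePort range (30000-32767 by default), so it differs between clusters. A small sketch that reads it with kubectl's jsonpath output instead of copying it from the table; `nodeport_url` is a hypothetical helper and it assumes the Service's first port is the one you want:

```shell
# nodeport_url SERVICE NODE_IP [NAMESPACE]
# Hypothetical helper: print http://NODE_IP:<nodePort of the Service's first port>.
nodeport_url() {
    local svc=$1 node_ip=$2 ns=${3:-default} port
    port=$(kubectl -n "$ns" get svc "$svc" -o jsonpath='{.spec.ports[0].nodePort}') || return 1
    [ -n "$port" ] || return 1   # not a NodePort Service, or no ports
    echo "http://$node_ip:$port"
}
```

`curl -I "$(nodeport_url nginx-service 192.168.147.137)"` should return nginx's response headers, and `nodeport_url grafana 192.168.147.137 kube-system` gives the Grafana address needed later.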

3. Deploying the Prometheus monitoring platform

3.1 Preparing the Prometheus YAML files

Create a pgmonitor directory under /opt on the master node:

[root@k8s-master ~]# mkdir /opt/pgmonitor

[root@k8s-master ~]# cd /opt/pgmonitor

Upload the downloaded YAML archive to /opt/pgmonitor and unzip it:

[root@k8s-master pgmonitor]# unzip k8s-prometheus-grafana-master.zip

3.2 Deploying Prometheus

Deploy the node-exporter DaemonSet:

[root@k8s-master pgmonitor]# cd k8s-prometheus-grafana-master/

[root@k8s-master k8s-prometheus-grafana-master]# kubectl create -f node-exporter.yaml

daemonset.apps/node-exporter created

service/node-exporter created

Deploy the remaining YAML files

Change to the /opt/pgmonitor/k8s-prometheus-grafana-master/prometheus directory:

[root@k8s-master k8s-prometheus-grafana-master]# cd prometheus

Deploy rbac-setup.yaml, configmap.yaml, prometheus.deploy.yml and prometheus.svc.yml in that order:

[root@k8s-master prometheus]# kubectl create -f rbac-setup.yaml

clusterrole.rbac.authorization.k8s.io/prometheus created

serviceaccount/prometheus created

clusterrolebinding.rbac.authorization.k8s.io/prometheus created

[root@k8s-master prometheus]# kubectl create -f configmap.yaml

configmap/prometheus-config created

[root@k8s-master prometheus]# kubectl create -f prometheus.deploy.yml

deployment.apps/prometheus created

[root@k8s-master prometheus]# kubectl create -f prometheus.svc.yml

service/prometheus created

Check the Prometheus status:

[root@k8s-master prometheus]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-6d56c8448f-8zrt7 1/1 Running 0 14m

coredns-6d56c8448f-hzm5v 1/1 Running 0 14m

etcd-k8s-master 1/1 Running 0 15m

kube-apiserver-k8s-master 1/1 Running 0 15m

kube-controller-manager-k8s-master 1/1 Running 0 15m

kube-flannel-ds-amd64-4654f 1/1 Running 0 11m

kube-flannel-ds-amd64-bpx5q 1/1 Running 0 11m

kube-flannel-ds-amd64-nnhlh 1/1 Running 0 11m

kube-proxy-2sps9 1/1 Running 0 13m

kube-proxy-99hn4 1/1 Running 0 13m

kube-proxy-s624n 1/1 Running 0 14m

kube-scheduler-k8s-master 1/1 Running 0 15m

node-exporter-brgw6 1/1 Running 0 3m28s

node-exporter-kvvgp 1/1 Running 0 3m28s

prometheus-68546b8d9-vmjms 1/1 Running 0 87s
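Rather than scanning the whole listing by eye, the rows that are not fully up can be filtered out. A minimal awk sketch over `kubectl get pods -n kube-system --no-headers` (single-namespace output, so NAME is the first column); it flags a Pod whose STATUS is not Running or whose READY count is incomplete, so finished Job Pods (Completed) would be reported too. `unhealthy_pods` is a hypothetical name:

```shell
# unhealthy_pods: read `kubectl get pods --no-headers` (NAME READY STATUS ...)
# on stdin; print NAME READY STATUS for Pods not Running or not fully ready.
unhealthy_pods() {
    awk '{
        split($2, r, "/")                       # READY is "ready/total"
        if ($3 != "Running" || r[1] != r[2]) print $1, $2, $3
    }'
}
```

Usage: `kubectl get pods -n kube-system --no-headers | unhealthy_pods`; no output means every Pod is Running and ready.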

4. Deploying Grafana

4.1 Deploying the Grafana YAML files

Change to the /opt/pgmonitor/k8s-prometheus-grafana-master/grafana directory:

[root@k8s-master prometheus]# cd ../grafana/

Deploy grafana-deploy.yaml, grafana-svc.yaml and grafana-ing.yaml:


[root@k8s-master grafana]# kubectl create -f grafana-deploy.yaml

deployment.apps/grafana-core created

[root@k8s-master grafana]# kubectl create -f grafana-svc.yaml

service/grafana created

[root@k8s-master grafana]# kubectl create -f grafana-ing.yaml

Warning: extensions/v1beta1 Ingress is deprecated in v1.14+, unavailable in v1.22+; use networking.k8s.io/v1 Ingress

ingress.extensions/grafana created

Check the Grafana status:

[root@k8s-master grafana]# kubectl get pods -n kube-system

NAME READY STATUS RESTARTS AGE

coredns-6d56c8448f-8zrt7 1/1 Running 0 18m

coredns-6d56c8448f-hzm5v 1/1 Running 0 18m

etcd-k8s-master 1/1 Running 0 18m

grafana-core-6d6fb7566-vphhz 1/1 Running 0 115s

kube-apiserver-k8s-master 1/1 Running 0 18m

kube-controller-manager-k8s-master 1/1 Running 0 18m

kube-flannel-ds-amd64-4654f 1/1 Running 0 14m

kube-flannel-ds-amd64-bpx5q 1/1 Running 0 14m

kube-flannel-ds-amd64-nnhlh 1/1 Running 0 14m

kube-proxy-2sps9 1/1 Running 0 16m

kube-proxy-99hn4 1/1 Running 0 16m

kube-proxy-s624n 1/1 Running 0 18m

kube-scheduler-k8s-master 1/1 Running 0 18m

node-exporter-brgw6 1/1 Running 0 6m55s

node-exporter-kvvgp 1/1 Running 0 6m55s

prometheus-68546b8d9-vmjms 1/1 Running 0 4m54s

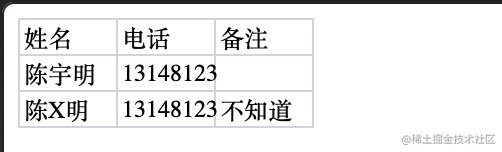

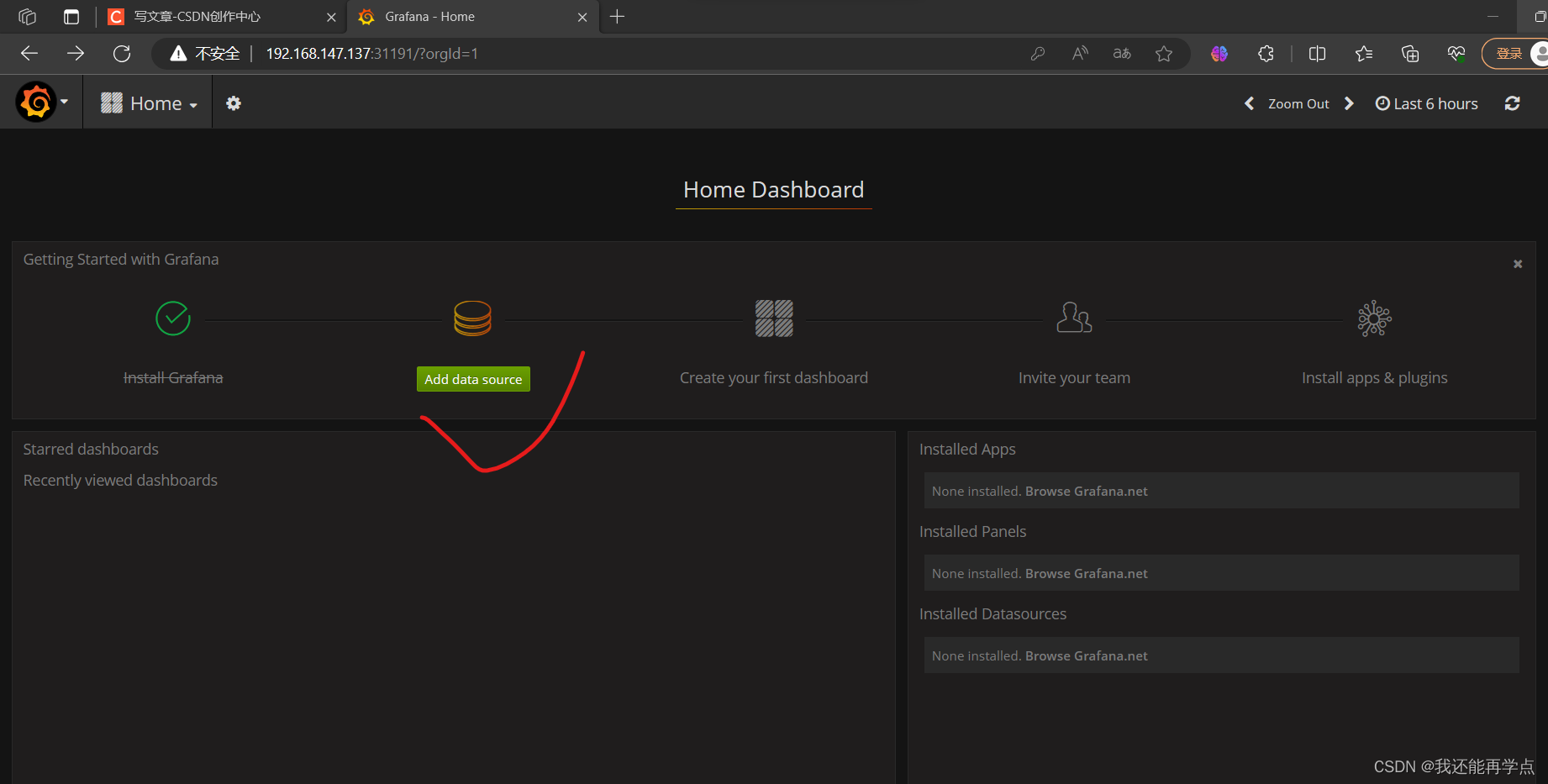

4.2 Configuring the Grafana data source

Check the Grafana port:

[root@k8s-master grafana]# kubectl get svc -n kube-system

NAME TYPE CLUSTER-IP EXTERNAL-IP PORT(S) AGE

grafana NodePort 10.105.158.0 <none> 3000:31191/TCP 2m19s

kube-dns ClusterIP 10.96.0.10 <none> 53/UDP,53/TCP,9153/TCP 19m

node-exporter NodePort 10.111.78.61 <none> 9100:31672/TCP 7m25s

prometheus NodePort 10.98.254.105 <none> 9090:30003/TCP 5m12s

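Open Grafana in a browser at http://[masterIP]:[Grafana NodePort]

For example http://192.168.147.137:31191. The default username and password are admin/admin.

Set up the data source

Give it any name and set the URL to the Prometheus Service's cluster IP and port, here http://10.98.254.105:9090.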
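A cluster IP is only reachable from inside the cluster, so this URL works when the data source's access mode is Server (called proxy in older Grafana releases), where Grafana's backend makes the requests; with Browser access the browser itself would have to reach that IP. The in-cluster DNS name http://prometheus.kube-system.svc.cluster.local:9090 is an alternative that survives the Service being deleted and recreated, whereas the cluster IP may change. A sketch that builds the cluster-IP URL with kubectl jsonpath, assuming the Service is named prometheus in kube-system as in the output above; `prometheus_datasource_url` is a hypothetical helper:

```shell
# prometheus_datasource_url [NAMESPACE]
# Hypothetical helper: print http://<clusterIP>:<port> of the "prometheus" Service.
prometheus_datasource_url() {
    local ns=${1:-kube-system} ip port
    ip=$(kubectl -n "$ns" get svc prometheus -o jsonpath='{.spec.clusterIP}') || return 1
    port=$(kubectl -n "$ns" get svc prometheus -o jsonpath='{.spec.ports[0].port}') || return 1
    [ -n "$ip" ] && [ -n "$port" ] || return 1
    echo "http://$ip:$port"
}
```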

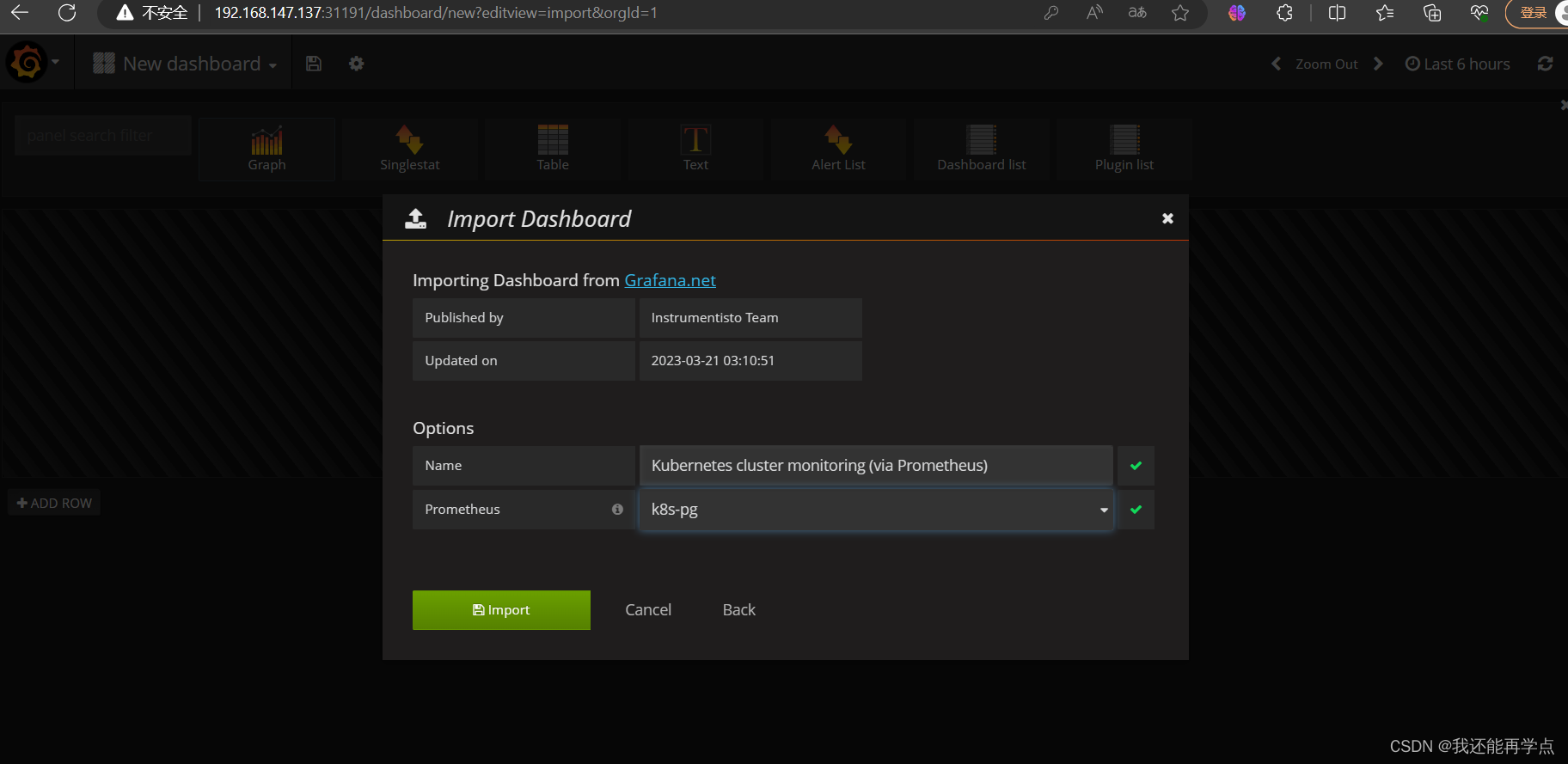

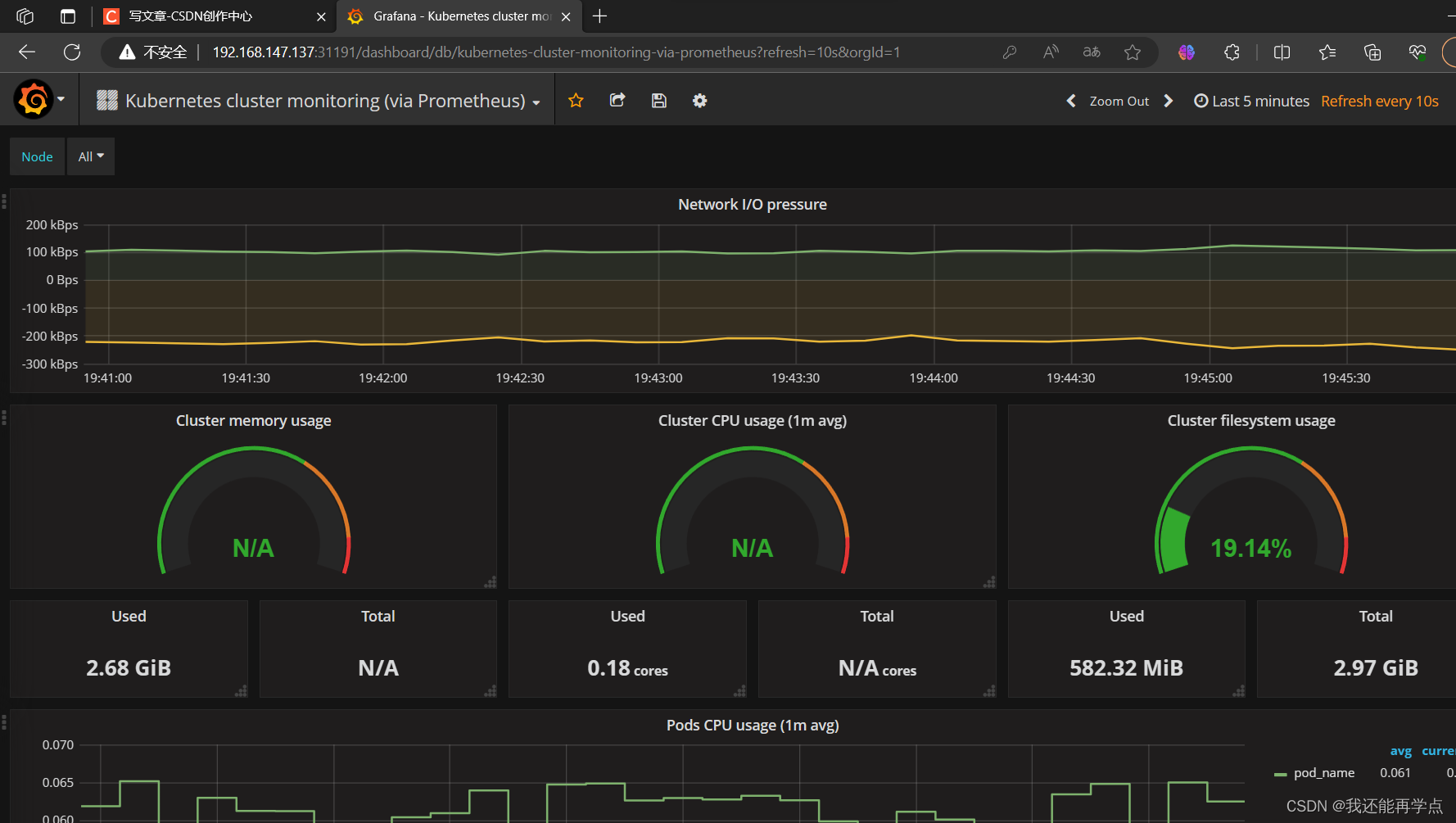

Open the Import page


Enter dashboard ID 315 and move the cursor out of the field; after a moment the next page appears.