WebRTC Audio/Video Calls: Customizing the Camera with a Custom RTCVideoCapturer

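In an earlier post I used WebRTC with an ossrs server to implement live video calls. In practice, however, the methods exposed by the RTCCameraVideoCapturer class do not let you change or tune camera settings such as lighting and exposure, so you need a custom RTCVideoCapturer that captures the frames itself.

For the iOS side of calling ossrs from WebRTC to implement live video calls, see:

https://blog.csdn.net/gloryFlow/article/details/132262724

This post covers the custom RTCVideoCapturer.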

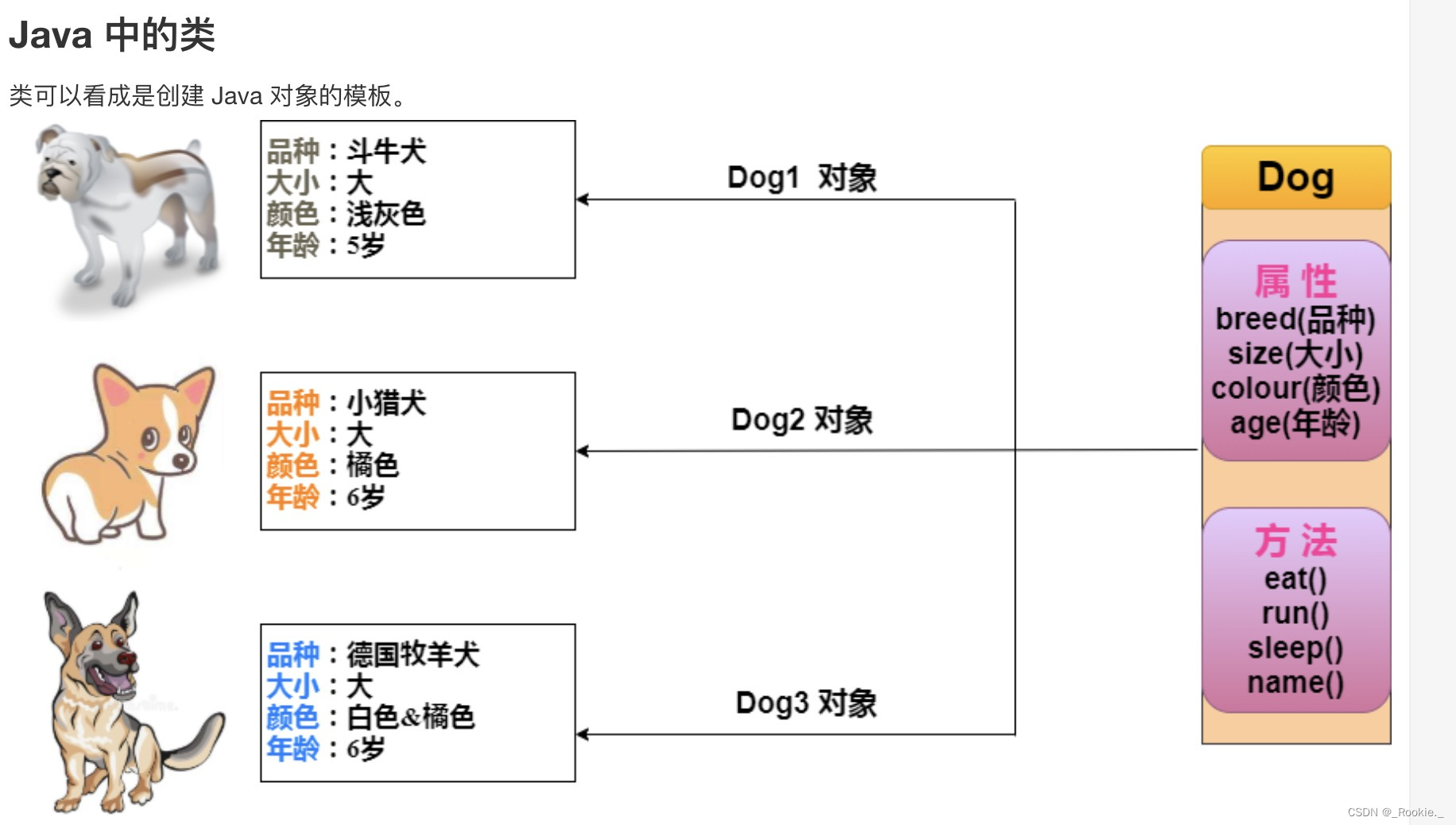

I. Classes needed for a custom camera

The following classes are involved:

- AVCaptureSession: the object iOS provides to manage and coordinate the flow of data from input devices to outputs.
- AVCaptureDevice: represents a hardware capture device, such as a camera.
- AVCaptureDeviceInput: captures input data from an AVCaptureDevice object.
- AVCaptureMetadataOutput: a capture output that processes the timed metadata produced by an AVCaptureSession.
- AVCaptureVideoDataOutput: a capture output that processes video frame data.
- AVCaptureVideoPreviewLayer: the preview layer that displays the video captured by the camera.

II. Capturing frames with a custom RTCVideoCapturer

For a custom camera, we need to add an AVCaptureVideoDataOutput to the AVCaptureSession as an output:

self.dataOutput = [[AVCaptureVideoDataOutput alloc] init];
[self.dataOutput setAlwaysDiscardsLateVideoFrames:YES];
self.dataOutput.videoSettings = @{(id)kCVPixelBufferPixelFormatTypeKey : @(needYuvOutput ? kCVPixelFormatType_420YpCbCr8BiPlanarFullRange : kCVPixelFormatType_32BGRA)};
self.dataOutput.alwaysDiscardsLateVideoFrames = YES;
[self.dataOutput setSampleBufferDelegate:self queue:self.bufferQueue];

if ([self.session canAddOutput:self.dataOutput]) {
    [self.session addOutput:self.dataOutput];
} else {
    NSLog(@"Could not add video data output to the session");
    return nil;
}

We also need to implement the AVCaptureVideoDataOutputSampleBufferDelegate method:

- (void)captureOutput:(AVCaptureOutput *)captureOutput didOutputSampleBuffer:(CMSampleBufferRef)sampleBuffer fromConnection:(AVCaptureConnection *)connection;

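In this callback, convert the CMSampleBufferRef to a CVPixelBufferRef, wrap the processed CVPixelBufferRef in an RTCVideoFrame, and hand the frame to WebRTC by calling the didCaptureVideoFrame method implemented by localVideoSource.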
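Besides the pixel buffer, an RTCVideoFrame also carries a rotation and a timestamp in nanoseconds. As a rough sketch of how those two values might be derived in the sample-buffer callback, the plain C helpers below (C compiles unchanged inside an Objective-C .m file) map a device orientation to a rotation in degrees, following the commented-out switch in the full listing, and convert a presentation time to nanoseconds. The `SDOrientation` enum, the `SDTime` struct, and both function names are hypothetical stand-ins for `UIDeviceOrientation`, `CMTime`, and your own helpers; they are not part of WebRTC or AVFoundation.

```c
#include <assert.h>
#include <stdbool.h>
#include <stdint.h>

/* Hypothetical stand-in for UIDeviceOrientation (only the cases that matter). */
typedef enum {
    SDOrientationPortrait,
    SDOrientationPortraitUpsideDown,
    SDOrientationLandscapeLeft,
    SDOrientationLandscapeRight,
    SDOrientationOther /* face up, face down, unknown */
} SDOrientation;

/* Hypothetical stand-in for CMTime: represents value / timescale seconds. */
typedef struct {
    int64_t value;
    int32_t timescale;
} SDTime;

/* Returns the frame rotation in degrees (0, 90, 180 or 270).
 * For orientations that carry no rotation information, `previous`
 * is returned so the last known rotation is kept. */
static int sd_rotation_degrees(SDOrientation orientation, bool usingFrontCamera, int previous) {
    switch (orientation) {
        case SDOrientationPortrait:           return 90;
        case SDOrientationPortraitUpsideDown: return 270;
        case SDOrientationLandscapeLeft:      return usingFrontCamera ? 180 : 0;
        case SDOrientationLandscapeRight:     return usingFrontCamera ? 0 : 180;
        default:                              return previous;
    }
}

/* Converts a presentation timestamp to whole nanoseconds.
 * Whole seconds and the remainder are scaled separately so the
 * intermediate products stay well inside the int64_t range. */
static int64_t sd_time_to_ns(SDTime t) {
    assert(t.timescale > 0);
    int64_t seconds = t.value / t.timescale;
    int64_t remainder = t.value % t.timescale;
    return seconds * 1000000000LL + (remainder * 1000000000LL) / t.timescale;
}
```

In the capturer, values like these would be passed when building the frame, for example through RTCVideoFrame's `initWithBuffer:rotation:timeStampNs:` initializer, before the frame is delivered to the video source via `capturer:didCaptureVideoFrame:`.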

The complete code follows.

SDCustomRTCCameraCapturer.h

#import <AVFoundation/AVFoundation.h>
#import <Foundation/Foundation.h>
#import <WebRTC/WebRTC.h>

@protocol SDCustomRTCCameraCapturerDelegate <NSObject>

- (void)rtcCameraVideoCapturer:(RTCVideoCapturer *)capturer didOutputSampleBuffer:(CMSampleBufferRef)sampleBuffer;

@end

@interface SDCustomRTCCameraCapturer : RTCVideoCapturer

@property(nonatomic, weak) id<SDCustomRTCCameraCapturerDelegate> delegate;

// Capture session that is used for capturing. Valid from initialization to dealloc.
@property(nonatomic, strong) AVCaptureSession *captureSession;

// Returns list of available capture devices that support video capture.
+ (NSArray<AVCaptureDevice *> *)captureDevices;

// Returns list of formats that are supported by this class for this device.
+ (NSArray<AVCaptureDeviceFormat *> *)supportedFormatsForDevice:(AVCaptureDevice *)device;

// Returns the most efficient supported output pixel format for this capturer.
- (FourCharCode)preferredOutputPixelFormat;

// Starts the capture session asynchronously and notifies callback on completion.
// The device will capture video in the format given in the `format` parameter. If the pixel format
// in `format` is supported by the WebRTC pipeline, the same pixel format will be used for the
// output. Otherwise, the format returned by `preferredOutputPixelFormat` will be used.
- (void)startCaptureWithDevice:(AVCaptureDevice *)device
                        format:(AVCaptureDeviceFormat *)format
                           fps:(NSInteger)fps
             completionHandler:(nullable void (^)(NSError *))completionHandler;

// Stops the capture session asynchronously and notifies callback on completion.
- (void)stopCaptureWithCompletionHandler:(nullable void (^)(void))completionHandler;

// Starts the capture session asynchronously.
- (void)startCaptureWithDevice:(AVCaptureDevice *)device
                        format:(AVCaptureDeviceFormat *)format
                           fps:(NSInteger)fps;

// Stops the capture session asynchronously.
- (void)stopCapture;

#pragma mark - Custom camera

@property (nonatomic, readonly) dispatch_queue_t bufferQueue;
@property (nonatomic, assign) AVCaptureDevicePosition devicePosition; // default AVCaptureDevicePositionFront
@property (nonatomic, assign) AVCaptureVideoOrientation videoOrientation;
@property (nonatomic, assign) BOOL needVideoMirrored;
@property (nonatomic, strong, readonly) AVCaptureConnection *videoConnection;
@property (nonatomic, copy) NSString *sessionPreset; // default 640x480
@property (nonatomic, strong) AVCaptureVideoPreviewLayer *previewLayer;
@property (nonatomic, assign) BOOL bSessionPause;
@property (nonatomic, assign) int iExpectedFPS;
@property (nonatomic, readwrite, strong) NSDictionary *videoCompressingSettings;

- (instancetype)initWithDevicePosition:(AVCaptureDevicePosition)iDevicePosition
                        sessionPresset:(AVCaptureSessionPreset)sessionPreset
                                   fps:(int)iFPS
                         needYuvOutput:(BOOL)needYuvOutput;

- (void)setExposurePoint:(CGPoint)point inPreviewFrame:(CGRect)frame;
- (void)setISOValue:(float)value;
- (void)startRunning;
- (void)stopRunning;
- (void)rotateCamera;
- (void)rotateCamera:(BOOL)isUseFrontCamera;
- (void)setWhiteBalance;
- (CGRect)getZoomedRectWithRect:(CGRect)rect scaleToFit:(BOOL)bScaleToFit;

@end

SDCustomRTCCameraCapturer.m

#import "SDCustomRTCCameraCapturer.h"
#import <UIKit/UIKit.h>
#import "EFMachineVersion.h"

//#import "base/RTCLogging.h"
//#import "base/RTCVideoFrameBuffer.h"
//#import "components/video_frame_buffer/RTCCVPixelBuffer.h"
//
//#if TARGET_OS_IPHONE
//#import "helpers/UIDevice+RTCDevice.h"
//#endif
//
//#import "helpers/AVCaptureSession+DevicePosition.h"
//#import "helpers/RTCDispatcher+Private.h"

typedef NS_ENUM(NSUInteger, STExposureModel) {
    STExposureModelPositive5,
    STExposureModelPositive4,
    STExposureModelPositive3,
    STExposureModelPositive2,
    STExposureModelPositive1,
    STExposureModel0,
    STExposureModelNegative1,
    STExposureModelNegative2,
    STExposureModelNegative3,
    STExposureModelNegative4,
    STExposureModelNegative5,
    STExposureModelNegative6,
    STExposureModelNegative7,
    STExposureModelNegative8,
};

static char * kEffectsCamera = "EffectsCamera";
static STExposureModel currentExposureMode;

@interface SDCustomRTCCameraCapturer ()<AVCaptureVideoDataOutputSampleBufferDelegate, AVCaptureAudioDataOutputSampleBufferDelegate, AVCaptureMetadataOutputObjectsDelegate>

@property(nonatomic, readonly) dispatch_queue_t frameQueue;
@property(nonatomic, strong) AVCaptureDevice *currentDevice;
@property(nonatomic, assign) BOOL hasRetriedOnFatalError;
@property(nonatomic, assign) BOOL isRunning;
// Will the session be running once all asynchronous operations have been completed?
@property(nonatomic, assign) BOOL willBeRunning;

@property (nonatomic, strong) AVCaptureDeviceInput *deviceInput;
@property (nonatomic, strong) AVCaptureVideoDataOutput *dataOutput;
@property (nonatomic, strong) AVCaptureMetadataOutput *metaOutput;
@property (nonatomic, strong) AVCaptureStillImageOutput *stillImageOutput;

@property (nonatomic, readwrite) dispatch_queue_t bufferQueue;
@property (nonatomic, strong, readwrite) AVCaptureConnection *videoConnection;
@property (nonatomic, strong) AVCaptureDevice *videoDevice;
@property (nonatomic, strong) AVCaptureSession *session;

@end

@implementation SDCustomRTCCameraCapturer {
    AVCaptureVideoDataOutput *_videoDataOutput;
    AVCaptureSession *_captureSession;
    FourCharCode _preferredOutputPixelFormat;
    FourCharCode _outputPixelFormat;
    RTCVideoRotation _rotation;
    float _autoISOValue;
#if TARGET_OS_IPHONE
    UIDeviceOrientation _orientation;
    BOOL _generatingOrientationNotifications;
#endif
}

@synthesize frameQueue = _frameQueue;

@synthesize captureSession = _captureSession;
@synthesize currentDevice = _currentDevice;
@synthesize hasRetriedOnFatalError = _hasRetriedOnFatalError;
@synthesize isRunning = _isRunning;
@synthesize willBeRunning = _willBeRunning;

- (instancetype)init {
    return [self initWithDelegate:nil captureSession:[[AVCaptureSession alloc] init]];
}

- (instancetype)initWithDelegate:(__weak id<RTCVideoCapturerDelegate>)delegate {
    return [self initWithDelegate:delegate captureSession:[[AVCaptureSession alloc] init]];
}

// This initializer is used for testing.
- (instancetype)initWithDelegate:(__weak id<RTCVideoCapturerDelegate>)delegate
                  captureSession:(AVCaptureSession *)captureSession {
    if (self = [super initWithDelegate:delegate]) {
        // Create the capture session and all relevant inputs and outputs. We need
        // to do this in init because the application may want the capture session
        // before we start the capturer for e.g. AVCapturePreviewLayer. All objects
        // created here are retained until dealloc and never recreated.
        if (![self setupCaptureSession:captureSession]) {
            return nil;
        }
        NSNotificationCenter *center = [NSNotificationCenter defaultCenter];
#if TARGET_OS_IPHONE
        _orientation = UIDeviceOrientationPortrait;
        _rotation = RTCVideoRotation_90;
        [center addObserver:self
                   selector:@selector(deviceOrientationDidChange:)
                       name:UIDeviceOrientationDidChangeNotification
                     object:nil];
        [center addObserver:self
                   selector:@selector(handleCaptureSessionInterruption:)
                       name:AVCaptureSessionWasInterruptedNotification
                     object:_captureSession];
        [center addObserver:self
                   selector:@selector(handleCaptureSessionInterruptionEnded:)
                       name:AVCaptureSessionInterruptionEndedNotification
                     object:_captureSession];
        [center addObserver:self
                   selector:@selector(handleApplicationDidBecomeActive:)
                       name:UIApplicationDidBecomeActiveNotification
                     object:[UIApplication sharedApplication]];
#endif
        [center addObserver:self
                   selector:@selector(handleCaptureSessionRuntimeError:)
                       name:AVCaptureSessionRuntimeErrorNotification
                     object:_captureSession];
        [center addObserver:self
                   selector:@selector(handleCaptureSessionDidStartRunning:)
                       name:AVCaptureSessionDidStartRunningNotification
                     object:_captureSession];
        [center addObserver:self
                   selector:@selector(handleCaptureSessionDidStopRunning:)
                       name:AVCaptureSessionDidStopRunningNotification
                     object:_captureSession];
    }
    return self;
}

//- (void)dealloc {
//  NSAssert(
//      !_willBeRunning,
//      @"Session was still running in RTCCameraVideoCapturer dealloc. Forgot to call stopCapture?");
//  [[NSNotificationCenter defaultCenter] removeObserver:self];
//}

+ (NSArray<AVCaptureDevice *> *)captureDevices {
#if defined(WEBRTC_IOS) && defined(__IPHONE_10_0) && \
    __IPHONE_OS_VERSION_MIN_REQUIRED >= __IPHONE_10_0
    AVCaptureDeviceDiscoverySession *session = [AVCaptureDeviceDiscoverySession
        discoverySessionWithDeviceTypes:@[ AVCaptureDeviceTypeBuiltInWideAngleCamera ]
                              mediaType:AVMediaTypeVideo
                               position:AVCaptureDevicePositionUnspecified];
    return session.devices;
#else
    return [AVCaptureDevice devicesWithMediaType:AVMediaTypeVideo];
#endif
}

+ (NSArray<AVCaptureDeviceFormat *> *)supportedFormatsForDevice:(AVCaptureDevice *)device {
    // Support opening the device in any format. We make sure it's converted to a format we
    // can handle, if needed, in the method `-setupVideoDataOutput`.
    return device.formats;
}

- (FourCharCode)preferredOutputPixelFormat {
    return _preferredOutputPixelFormat;
}

- (void)startCaptureWithDevice:(AVCaptureDevice *)device
                        format:(AVCaptureDeviceFormat *)format
                           fps:(NSInteger)fps {
    [self startCaptureWithDevice:device format:format fps:fps completionHandler:nil];
}

- (void)stopCapture {
    [self stopCaptureWithCompletionHandler:nil];

}

- (void)startCaptureWithDevice:(AVCaptureDevice *)device
                        format:(AVCaptureDeviceFormat *)format
                           fps:(NSInteger)fps
             completionHandler:(nullable void (^)(NSError *))completionHandler {
    _willBeRunning = YES;
    [RTCDispatcher dispatchAsyncOnType:RTCDispatcherTypeCaptureSession
                                 block:^{
        RTCLogInfo("startCaptureWithDevice %@ @ %ld fps", format, (long)fps);

#if TARGET_OS_IPHONE
        dispatch_async(dispatch_get_main_queue(), ^{
            if (!self->_generatingOrientationNotifications) {
                [[UIDevice currentDevice] beginGeneratingDeviceOrientationNotifications];
                self->_generatingOrientationNotifications = YES;
            }
        });
#endif

        self.currentDevice = device;

        NSError *error = nil;
        if (![self.currentDevice lockForConfiguration:&error]) {
            RTCLogError(@"Failed to lock device %@. Error: %@",
                        self.currentDevice,
                        error.userInfo);
            if (completionHandler) {
                completionHandler(error);
            }
            self.willBeRunning = NO;
            return;
        }
        [self reconfigureCaptureSessionInput];
        [self updateOrientation];
        [self updateDeviceCaptureFormat:format fps:fps];
        [self updateVideoDataOutputPixelFormat:format];
        [self.captureSession startRunning];
        [self.currentDevice unlockForConfiguration];
        self.isRunning = YES;
        if (completionHandler) {
            completionHandler(nil);
        }
    }];
}

- (void)stopCaptureWithCompletionHandler:(nullable void (^)(void))completionHandler {
    _willBeRunning = NO;
    [RTCDispatcher dispatchAsyncOnType:RTCDispatcherTypeCaptureSession
                                 block:^{
        RTCLogInfo("Stop");
        self.currentDevice = nil;
        for (AVCaptureDeviceInput *oldInput in [self.captureSession.inputs copy]) {
            [self.captureSession removeInput:oldInput];
        }
        [self.captureSession stopRunning];

#if TARGET_OS_IPHONE
        dispatch_async(dispatch_get_main_queue(), ^{
            if (self->_generatingOrientationNotifications) {
                [[UIDevice currentDevice] endGeneratingDeviceOrientationNotifications];
                self->_generatingOrientationNotifications = NO;
            }
        });
#endif
        self.isRunning = NO;
        if (completionHandler) {
            completionHandler();
        }
    }];
}

#pragma mark iOS notifications

#if TARGET_OS_IPHONE
- (void)deviceOrientationDidChange:(NSNotification *)notification {
    [RTCDispatcher dispatchAsyncOnType:RTCDispatcherTypeCaptureSession
                                 block:^{
        [self updateOrientation];
    }];
}
#endif

#pragma mark AVCaptureVideoDataOutputSampleBufferDelegate

//- (void)captureOutput:(AVCaptureOutput *)captureOutput

//    didOutputSampleBuffer:(CMSampleBufferRef)sampleBuffer
//           fromConnection:(AVCaptureConnection *)connection {
//  NSParameterAssert(captureOutput == _videoDataOutput);
//
//  if (CMSampleBufferGetNumSamples(sampleBuffer) != 1 || !CMSampleBufferIsValid(sampleBuffer) ||
//      !CMSampleBufferDataIsReady(sampleBuffer)) {
//    return;
//  }
//
//  CVPixelBufferRef pixelBuffer = CMSampleBufferGetImageBuffer(sampleBuffer);
//  if (pixelBuffer == nil) {
//    return;
//  }
//
//#if TARGET_OS_IPHONE
//  // Default to portrait orientation on iPhone.
//  BOOL usingFrontCamera = NO;
//  // Check the image's EXIF for the camera the image came from as the image could have been
//  // delayed as we set alwaysDiscardsLateVideoFrames to NO.
//
//  AVCaptureDeviceInput *deviceInput =
//      (AVCaptureDeviceInput *)((AVCaptureInputPort *)connection.inputPorts.firstObject).input;
//  usingFrontCamera = AVCaptureDevicePositionFront == deviceInput.device.position;
//
//  switch (_orientation) {
//    case UIDeviceOrientationPortrait:
//      _rotation = RTCVideoRotation_90;
//      break;
//    case UIDeviceOrientationPortraitUpsideDown:
//      _rotation = RTCVideoRotation_270;
//      break;
//    case UIDeviceOrientationLandscapeLeft:
//      _rotation = usingFrontCamera ? RTCVideoRotation_180 : RTCVideoRotation_0;
//      break;
//    case UIDeviceOrientationLandscapeRight:
//      _rotation = usingFrontCamera ? RTCVideoRotation_0 : RTCVideoRotation_180;
//      break;
//    case UIDeviceOrientationFaceUp:
//    case UIDeviceOrientationFaceDown:
//    case UIDeviceOrientationUnknown:
//      // Ignore.
//      break;
//  }
//#else
//  // No rotation on Mac.
//  _rotation = RTCVideoRotation_0;
//#endif
//
//  if (self.delegate && [self.delegate respondsToSelector:@selector(rtcCameraVideoCapturer:didOutputSampleBuffer:)]) {
//    [self.delegate rtcCameraVideoCapturer:self didOutputSampleBuffer:sampleBuffer];
//  }
//}

#pragma mark - AVCaptureSession notifications

- (void)handleCaptureSessionInterruption:(NSNotification *)notification {
    NSString *reasonString = nil;

#if TARGET_OS_IPHONE
    NSNumber *reason = notification.userInfo[AVCaptureSessionInterruptionReasonKey];
    if (reason) {
        switch (reason.intValue) {
            case AVCaptureSessionInterruptionReasonVideoDeviceNotAvailableInBackground:
                reasonString = @"VideoDeviceNotAvailableInBackground";
                break;
            case AVCaptureSessionInterruptionReasonAudioDeviceInUseByAnotherClient:
                reasonString = @"AudioDeviceInUseByAnotherClient";
                break;
            case AVCaptureSessionInterruptionReasonVideoDeviceInUseByAnotherClient:
                reasonString = @"VideoDeviceInUseByAnotherClient";
                break;
            case AVCaptureSessionInterruptionReasonVideoDeviceNotAvailableWithMultipleForegroundApps:
                reasonString = @"VideoDeviceNotAvailableWithMultipleForegroundApps";
                break;
        }
    }
#endif
    RTCLog(@"Capture session interrupted: %@", reasonString);
}

- (void)handleCaptureSessionInterruptionEnded:(NSNotification *)notification {
    RTCLog(@"Capture session interruption ended.");
}

- (void)handleCaptureSessionRuntimeError:(NSNotification *)notification {
    NSError *error = [notification.userInfo objectForKey:AVCaptureSessionErrorKey];
    RTCLogError(@"Capture session runtime error: %@", error);

    [RTCDispatcher dispatchAsyncOnType:RTCDispatcherTypeCaptureSession
                                 block:^{
#if TARGET_OS_IPHONE
        if (error.code == AVErrorMediaServicesWereReset) {
            [self handleNonFatalError];
        } else {
            [self handleFatalError];
        }
#else
        [self handleFatalError];
#endif
    }];
}

- (void)handleCaptureSessionDidStartRunning:(NSNotification *)notification {
    RTCLog(@"Capture session started.");

    [RTCDispatcher dispatchAsyncOnType:RTCDispatcherTypeCaptureSession
                                 block:^{
        // If we successfully restarted after an unknown error,
        // allow future retries on fatal errors.
        self.hasRetriedOnFatalError = NO;
    }];
}

- (void)handleCaptureSessionDidStopRunning:(NSNotification *)notification {
    RTCLog(@"Capture session stopped.");
}

- (void)handleFatalError {
    [RTCDispatcher dispatchAsyncOnType:RTCDispatcherTypeCaptureSession
                                 block:^{
        if (!self.hasRetriedOnFatalError) {
            RTCLogWarning(@"Attempting to recover from fatal capture error.");
            [self handleNonFatalError];
            self.hasRetriedOnFatalError = YES;
        } else {
            RTCLogError(@"Previous fatal error recovery failed.");
        }
    }];
}

- (void)handleNonFatalError {
    [RTCDispatcher dispatchAsyncOnType:RTCDispatcherTypeCaptureSession
                                 block:^{
        RTCLog(@"Restarting capture session after error.");
        if (self.isRunning) {
            [self.captureSession startRunning];
        }
    }];
}

#if TARGET_OS_IPHONE

#pragma mark - UIApplication notifications

- (void)handleApplicationDidBecomeActive:(NSNotification *)notification {
    [RTCDispatcher dispatchAsyncOnType:RTCDispatcherTypeCaptureSession
                                 block:^{
        if (self.isRunning && !self.captureSession.isRunning) {
            RTCLog(@"Restarting capture session on active.");
            [self.captureSession startRunning];
        }
    }];
}

#endif  // TARGET_OS_IPHONE

#pragma mark - Private

- (dispatch_queue_t)frameQueue {
    if (!_frameQueue) {
        _frameQueue =
            dispatch_queue_create("org.webrtc.cameravideocapturer.video", DISPATCH_QUEUE_SERIAL);
        dispatch_set_target_queue(_frameQueue,
                                  dispatch_get_global_queue(DISPATCH_QUEUE_PRIORITY_HIGH, 0));
    }
    return _frameQueue;
}

- (BOOL)setupCaptureSession:(AVCaptureSession *)captureSession {
    NSAssert(_captureSession == nil, @"Setup capture session called twice.");
    _captureSession = captureSession;
#if defined(WEBRTC_IOS)
    _captureSession.sessionPreset = AVCaptureSessionPresetInputPriority;
    _captureSession.usesApplicationAudioSession = NO;
#endif
    [self setupVideoDataOutput];
    // Add the output.
    if (![_captureSession canAddOutput:_videoDataOutput]) {
        RTCLogError(@"Video data output unsupported.");
        return NO;
    }
    [_captureSession addOutput:_videoDataOutput];

    return YES;

}

- (void)setupVideoDataOutput {
    NSAssert(_videoDataOutput == nil, @"Setup video data output called twice.");
    AVCaptureVideoDataOutput *videoDataOutput = [[AVCaptureVideoDataOutput alloc] init];

    // `videoDataOutput.availableVideoCVPixelFormatTypes` returns the pixel formats supported by the
    // device with the most efficient output format first. Find the first format that we support.
    NSSet<NSNumber *> *supportedPixelFormats = [RTCCVPixelBuffer supportedPixelFormats];
    NSMutableOrderedSet *availablePixelFormats =
        [NSMutableOrderedSet orderedSetWithArray:videoDataOutput.availableVideoCVPixelFormatTypes];
    [availablePixelFormats intersectSet:supportedPixelFormats];
    NSNumber *pixelFormat = availablePixelFormats.firstObject;
    NSAssert(pixelFormat, @"Output device has no supported formats.");

    _preferredOutputPixelFormat = [pixelFormat unsignedIntValue];
    _outputPixelFormat = _preferredOutputPixelFormat;
//    videoDataOutput.videoSettings = @{(NSString *)kCVPixelBufferPixelFormatTypeKey : pixelFormat};
//    [videoDataOutput setAlwaysDiscardsLateVideoFrames:YES];

    videoDataOutput.videoSettings = @{(id)kCVPixelBufferPixelFormatTypeKey : @(kCVPixelFormatType_32BGRA)};
    videoDataOutput.alwaysDiscardsLateVideoFrames = YES;
    [videoDataOutput setSampleBufferDelegate:self queue:self.frameQueue];
    _videoDataOutput = videoDataOutput;

//    // Set the video orientation and mirroring
//    AVCaptureConnection *connection = [videoDataOutput connectionWithMediaType:AVMediaTypeVideo];
//    [connection setVideoOrientation:AVCaptureVideoOrientationPortrait];
//    [connection setVideoMirrored:NO];
}

- (void)updateVideoDataOutputPixelFormat:(AVCaptureDeviceFormat *)format {
//  FourCharCode mediaSubType = CMFormatDescriptionGetMediaSubType(format.formatDescription);
//  if (![[RTCCVPixelBuffer supportedPixelFormats] containsObject:@(mediaSubType)]) {
//    mediaSubType = _preferredOutputPixelFormat;
//  }
//
//  if (mediaSubType != _outputPixelFormat) {
//    _outputPixelFormat = mediaSubType;
//    _videoDataOutput.videoSettings =
//        @{ (NSString *)kCVPixelBufferPixelFormatTypeKey : @(mediaSubType) };
//  }
}

#pragma mark - Private, called inside capture queue

- (void)updateDeviceCaptureFormat:(AVCaptureDeviceFormat *)format fps:(NSInteger)fps {
    NSAssert([RTCDispatcher isOnQueueForType:RTCDispatcherTypeCaptureSession],
             @"updateDeviceCaptureFormat must be called on the capture queue.");
    @try {
        _currentDevice.activeFormat = format;
        _currentDevice.activeVideoMinFrameDuration = CMTimeMake(1, fps);
    } @catch (NSException *exception) {
        RTCLogError(@"Failed to set active format!\n User info:%@", exception.userInfo);
        return;
    }
}

- (void)reconfigureCaptureSessionInput {
    NSAssert([RTCDispatcher isOnQueueForType:RTCDispatcherTypeCaptureSession],
             @"reconfigureCaptureSessionInput must be called on the capture queue.");
    NSError *error = nil;
    AVCaptureDeviceInput *input =
        [AVCaptureDeviceInput deviceInputWithDevice:_currentDevice error:&error];
    if (!input) {
        RTCLogError(@"Failed to create front camera input: %@", error.localizedDescription);
        return;
    }
    [_captureSession beginConfiguration];
    for (AVCaptureDeviceInput *oldInput in [_captureSession.inputs copy]) {
        [_captureSession removeInput:oldInput];
    }
    if ([_captureSession canAddInput:input]) {
        [_captureSession addInput:input];
    } else {
        RTCLogError(@"Cannot add camera as an input to the session.");
    }
    [_captureSession commitConfiguration];
}

- (void)updateOrientation {
    NSAssert([RTCDispatcher isOnQueueForType:RTCDispatcherTypeCaptureSession],
             @"updateOrientation must be called on the capture queue.");
#if TARGET_OS_IPHONE
    _orientation = [UIDevice currentDevice].orientation;
#endif

}

- (instancetype)initWithDevicePosition:(AVCaptureDevicePosition)iDevicePosition
                        sessionPresset:(AVCaptureSessionPreset)sessionPreset
                                   fps:(int)iFPS
                         needYuvOutput:(BOOL)needYuvOutput
{
    self = [super init];
    if (self) {
        self.bSessionPause = YES;
        self.bufferQueue = dispatch_queue_create("STCameraBufferQueue", NULL);
        self.session = [[AVCaptureSession alloc] init];
        self.videoDevice = [self cameraDeviceWithPosition:iDevicePosition];
        _devicePosition = iDevicePosition;

        NSError *error = nil;
        self.deviceInput = [AVCaptureDeviceInput deviceInputWithDevice:self.videoDevice
                                                                 error:&error];
        if (!self.deviceInput || error) {
            NSLog(@"create input error");
            return nil;
        }

        self.dataOutput = [[AVCaptureVideoDataOutput alloc] init];
        [self.dataOutput setAlwaysDiscardsLateVideoFrames:YES];
        self.dataOutput.videoSettings = @{(id)kCVPixelBufferPixelFormatTypeKey : @(needYuvOutput ? kCVPixelFormatType_420YpCbCr8BiPlanarFullRange : kCVPixelFormatType_32BGRA)};
        self.dataOutput.alwaysDiscardsLateVideoFrames = YES;
        [self.dataOutput setSampleBufferDelegate:self queue:self.bufferQueue];

        self.metaOutput = [[AVCaptureMetadataOutput alloc] init];
        [self.metaOutput setMetadataObjectsDelegate:self queue:self.bufferQueue];

        self.stillImageOutput = [[AVCaptureStillImageOutput alloc] init];
        self.stillImageOutput.outputSettings = @{AVVideoCodecKey : AVVideoCodecJPEG};
        if ([self.stillImageOutput respondsToSelector:@selector(setHighResolutionStillImageOutputEnabled:)]) {
            self.stillImageOutput.highResolutionStillImageOutputEnabled = YES;
        }

        [self.session beginConfiguration];

        if ([self.session canAddInput:self.deviceInput]) {
            [self.session addInput:self.deviceInput];
        } else {
            NSLog(@"Could not add device input to the session");
            return nil;
        }

        if ([self.session canSetSessionPreset:sessionPreset]) {
            [self.session setSessionPreset:sessionPreset];
            _sessionPreset = sessionPreset;
        } else if ([self.session canSetSessionPreset:AVCaptureSessionPreset1920x1080]) {
            [self.session setSessionPreset:AVCaptureSessionPreset1920x1080];
            _sessionPreset = AVCaptureSessionPreset1920x1080;
        } else if ([self.session canSetSessionPreset:AVCaptureSessionPreset1280x720]) {
            [self.session setSessionPreset:AVCaptureSessionPreset1280x720];
            _sessionPreset = AVCaptureSessionPreset1280x720;
        } else {
            [self.session setSessionPreset:AVCaptureSessionPreset640x480];
            _sessionPreset = AVCaptureSessionPreset640x480;
        }

        if ([self.session canAddOutput:self.dataOutput]) {
            [self.session addOutput:self.dataOutput];
        } else {
            NSLog(@"Could not add video data output to the session");
            return nil;
        }

        if ([self.session canAddOutput:self.metaOutput]) {
            [self.session addOutput:self.metaOutput];
            self.metaOutput.metadataObjectTypes = @[AVMetadataObjectTypeFace].copy;
        }

        if ([self.session canAddOutput:self.stillImageOutput]) {
            [self.session addOutput:self.stillImageOutput];
        } else {
            NSLog(@"Could not add still image output to the session");
        }

        self.videoConnection = [self.dataOutput connectionWithMediaType:AVMediaTypeVideo];

        if ([self.videoConnection isVideoOrientationSupported]) {
            [self.videoConnection setVideoOrientation:AVCaptureVideoOrientationPortrait];
            self.videoOrientation = AVCaptureVideoOrientationPortrait;
        }

        if ([self.videoConnection isVideoMirroringSupported]) {
            [self.videoConnection setVideoMirrored:YES];
            self.needVideoMirrored = YES;
        }

        if ([_videoDevice lockForConfiguration:NULL] == YES) {
//            _videoDevice.activeFormat = bestFormat;
            _videoDevice.activeVideoMinFrameDuration = CMTimeMake(1, iFPS);
            _videoDevice.activeVideoMaxFrameDuration = CMTimeMake(1, iFPS);
            [_videoDevice unlockForConfiguration];
        }

        [self.session commitConfiguration];

        NSMutableDictionary *tmpSettings = [[self.dataOutput recommendedVideoSettingsForAssetWriterWithOutputFileType:AVFileTypeQuickTimeMovie] mutableCopy];
        if (!EFMachineVersion.isiPhone5sOrLater) {
            NSNumber *tmpSettingValue = tmpSettings[AVVideoHeightKey];
            tmpSettings[AVVideoHeightKey] = tmpSettings[AVVideoWidthKey];
            tmpSettings[AVVideoWidthKey] = tmpSettingValue;
        }
        self.videoCompressingSettings = [tmpSettings copy];

        self.iExpectedFPS = iFPS;

        [self addObservers];
    }
    return self;
}

- (void)rotateCamera {
    if (self.devicePosition == AVCaptureDevicePositionFront) {
        self.devicePosition = AVCaptureDevicePositionBack;
    } else {
        self.devicePosition = AVCaptureDevicePositionFront;
    }
}

- (void)rotateCamera:(BOOL)isUseFrontCamera {
    if (isUseFrontCamera) {
        self.devicePosition = AVCaptureDevicePositionFront;
    } else {
        self.devicePosition = AVCaptureDevicePositionBack;
    }

}

- (void)setExposurePoint:(CGPoint)point inPreviewFrame:(CGRect)frame {
    BOOL isFrontCamera = self.devicePosition == AVCaptureDevicePositionFront;
    float fX = point.y / frame.size.height;
    float fY = isFrontCamera ? point.x / frame.size.width : (1 - point.x / frame.size.width);
    [self focusWithMode:self.videoDevice.focusMode exposureMode:self.videoDevice.exposureMode atPoint:CGPointMake(fX, fY)];
}

- (void)focusWithMode:(AVCaptureFocusMode)focusMode exposureMode:(AVCaptureExposureMode)exposureMode atPoint:(CGPoint)point
{
    NSError *error = nil;
    AVCaptureDevice *device = self.videoDevice;

    if ([device lockForConfiguration:&error]) {
        device.exposureMode = AVCaptureExposureModeContinuousAutoExposure;
//        - (void)setISOValue:(float)value{
        [self setISOValue:0];
        //device.exposureTargetBias
        // Setting (focus/exposure)PointOfInterest alone does not initiate a (focus/exposure) operation
        // Call -set(Focus/Exposure)Mode: to apply the new point of interest
        if (focusMode != AVCaptureFocusModeLocked && device.isFocusPointOfInterestSupported && [device isFocusModeSupported:focusMode]) {
            device.focusPointOfInterest = point;
            device.focusMode = focusMode;
        }

        if (exposureMode != AVCaptureExposureModeCustom && device.isExposurePointOfInterestSupported && [device isExposureModeSupported:exposureMode]) {
            device.exposurePointOfInterest = point;
            device.exposureMode = exposureMode;
        }

        device.subjectAreaChangeMonitoringEnabled = YES;
        [device unlockForConfiguration];
    }
}

- (void)setWhiteBalance {
    [self changeDeviceProperty:^(AVCaptureDevice *captureDevice) {
        if ([captureDevice isWhiteBalanceModeSupported:AVCaptureWhiteBalanceModeContinuousAutoWhiteBalance]) {
            [captureDevice setWhiteBalanceMode:AVCaptureWhiteBalanceModeContinuousAutoWhiteBalance];
        }
    }];
}

- (void)changeDeviceProperty:(void(^)(AVCaptureDevice *))propertyChange {
    AVCaptureDevice *captureDevice = self.videoDevice;
    NSError *error;
    if ([captureDevice lockForConfiguration:&error]) {
        propertyChange(captureDevice);
        [captureDevice unlockForConfiguration];
    } else {
        NSLog(@"Failed to configure the device, error: %@", error.localizedDescription);
    }
}

- (void)setISOValue:(float)value exposeDuration:(int)duration {
    // Clamp the requested ISO to the range supported by the active format.
    // The original code clamped to minISO and then overwrote the result when
    // clamping to maxISO, so values below minISO were passed through unchanged.
    // Note: `duration` is not used; the current exposure duration is kept.
    float minISO = self.videoDevice.activeFormat.minISO;
    float maxISO = self.videoDevice.activeFormat.maxISO;
    float currentISO = fminf(fmaxf(value, minISO), maxISO);

    NSError *error;
    if ([self.videoDevice lockForConfiguration:&error]) {
        [self.videoDevice setExposureModeCustomWithDuration:AVCaptureExposureDurationCurrent ISO:currentISO completionHandler:nil];
        [self.videoDevice unlockForConfiguration];
    }
}

// Despite its name, this method sets the exposure target bias, not the ISO.
- (void)setISOValue:(float)value {
//    float newVlaue = (value - 0.5) * (5.0 / 0.5); // mirror [0,1] to [-8,8]
    NSLog(@"%f", value);
    NSError *error = nil;
    if ([self.videoDevice lockForConfiguration:&error]) {
        [self.videoDevice setExposureTargetBias:value completionHandler:nil];
        [self.videoDevice unlockForConfiguration];
    } else {
        NSLog(@"Could not lock device for configuration: %@", error);
    }

}

- (void)setDevicePosition:(AVCaptureDevicePosition)devicePosition
{
    if (_devicePosition != devicePosition && devicePosition != AVCaptureDevicePositionUnspecified) {
        if (_session) {
            AVCaptureDevice *targetDevice = [self cameraDeviceWithPosition:devicePosition];
            if (targetDevice && [self judgeCameraAuthorization]) {
                NSError *error = nil;
                AVCaptureDeviceInput *deviceInput = [[AVCaptureDeviceInput alloc] initWithDevice:targetDevice error:&error];
                if (!deviceInput || error) {
                    NSLog(@"Error creating capture device input: %@", error.localizedDescription);
                    return;
                }

                _bSessionPause = YES;
                [_session beginConfiguration];
                [_session removeInput:_deviceInput];

                if ([_session canAddInput:deviceInput]) {
                    [_session addInput:deviceInput];
                    _deviceInput = deviceInput;
                    _videoDevice = targetDevice;
                    _devicePosition = devicePosition;
                }

                _videoConnection = [_dataOutput connectionWithMediaType:AVMediaTypeVideo];
                if ([_videoConnection isVideoOrientationSupported]) {
                    [_videoConnection setVideoOrientation:_videoOrientation];
                }
                if ([_videoConnection isVideoMirroringSupported]) {
                    [_videoConnection setVideoMirrored:devicePosition == AVCaptureDevicePositionFront];
                }

                [_session commitConfiguration];
                [self setSessionPreset:_sessionPreset];
                _bSessionPause = NO;
            }
        }
    }
}

- (void)setSessionPreset:(NSString *)sessionPreset
{
    if (_session && _sessionPreset) {
//        if (![sessionPreset isEqualToString:_sessionPreset]) {
        _bSessionPause = YES;
        [_session beginConfiguration];
        if ([_session canSetSessionPreset:sessionPreset]) {
            [_session setSessionPreset:sessionPreset];
            _sessionPreset = sessionPreset;
        }
        [_session commitConfiguration];
        self.videoCompressingSettings = [[self.dataOutput recommendedVideoSettingsForAssetWriterWithOutputFileType:AVFileTypeQuickTimeMovie] copy];
//        [self setIExpectedFPS:_iExpectedFPS];
        _bSessionPause = NO;
//        }
    }
}

- (void)setIExpectedFPS:(int)iExpectedFPS
{
    _iExpectedFPS = iExpectedFPS;
    if (iExpectedFPS <= 0 || !_dataOutput.videoSettings || !_videoDevice) {
        return;
    }

    CGFloat fWidth = [[_dataOutput.videoSettings objectForKey:@"Width"] floatValue];
    CGFloat fHeight = [[_dataOutput.videoSettings objectForKey:@"Height"] floatValue];

    AVCaptureDeviceFormat *bestFormat = nil;
    AVFrameRateRange *bestFrameRateRange = nil;
    for (AVCaptureDeviceFormat *format in [_videoDevice formats]) {
        CMFormatDescriptionRef description = format.formatDescription;
        if (CMFormatDescriptionGetMediaSubType(description) != kCVPixelFormatType_420YpCbCr8BiPlanarFullRange) {
            continue;
        }
        CMVideoDimensions videoDimension = CMVideoFormatDescriptionGetDimensions(description);
        if ((videoDimension.width == fWidth && videoDimension.height == fHeight)
            || (videoDimension.height == fWidth && videoDimension.width == fHeight)) {
            for (AVFrameRateRange *range in format.videoSupportedFrameRateRanges) {
                if (range.maxFrameRate >= bestFrameRateRange.maxFrameRate) {
                    bestFormat = format;
                    bestFrameRateRange = range;
                }
            }
        }
    }

    if (bestFormat) {
        CMTime minFrameDuration;
        if (bestFrameRateRange.minFrameDuration.timescale / bestFrameRateRange.minFrameDuration.value < iExpectedFPS) {
            minFrameDuration = bestFrameRateRange.minFrameDuration;
        } else {
            minFrameDuration = CMTimeMake(1, iExpectedFPS);
        }
        if ([_videoDevice lockForConfiguration:NULL] == YES) {
            _videoDevice.activeFormat = bestFormat;
            _videoDevice.activeVideoMinFrameDuration = minFrameDuration;
            _videoDevice.activeVideoMaxFrameDuration = minFrameDuration;
            [_videoDevice unlockForConfiguration];
        }
    }

}- (void)startRunning

{if (![self judgeCameraAuthorization]) {return;}if (!self.dataOutput) {return;}if (self.session && ![self.session isRunning]) {if (self.bufferQueue) {dispatch_async(self.bufferQueue, ^{[self.session startRunning];});}self.bSessionPause = NO;}

}- (void)stopRunning

{if (self.session && [self.session isRunning]) {if (self.bufferQueue) {dispatch_async(self.bufferQueue, ^{[self.session stopRunning];});}self.bSessionPause = YES;}

}- (CGRect)getZoomedRectWithRect:(CGRect)rect scaleToFit:(BOOL)bScaleToFit

{CGRect rectRet = rect;if (self.dataOutput.videoSettings) {CGFloat fWidth = [[self.dataOutput.videoSettings objectForKey:@"Width"] floatValue];CGFloat fHeight = [[self.dataOutput.videoSettings objectForKey:@"Height"] floatValue];float fScaleX = fWidth / CGRectGetWidth(rect);float fScaleY = fHeight / CGRectGetHeight(rect);float fScale = bScaleToFit ? fmaxf(fScaleX, fScaleY) : fminf(fScaleX, fScaleY);fWidth /= fScale;fHeight /= fScale;CGFloat fX = rect.origin.x - (fWidth - rect.size.width) / 2.0f;CGFloat fY = rect.origin.y - (fHeight - rect.size.height) / 2.0f;rectRet = CGRectMake(fX, fY, fWidth, fHeight);}return rectRet;

}- (BOOL)judgeCameraAuthorization

{AVAuthorizationStatus authStatus = [AVCaptureDevice authorizationStatusForMediaType:AVMediaTypeVideo];if (authStatus == AVAuthorizationStatusRestricted || authStatus == AVAuthorizationStatusDenied) {return NO;}return YES;

}- (AVCaptureDevice *)cameraDeviceWithPosition:(AVCaptureDevicePosition)position

{AVCaptureDevice *deviceRet = nil;if (position != AVCaptureDevicePositionUnspecified) {NSArray *devices = [AVCaptureDevice devicesWithMediaType:AVMediaTypeVideo];for (AVCaptureDevice *device in devices) {if ([device position] == position) {deviceRet = device;}}}return deviceRet;

}- (AVCaptureVideoPreviewLayer *)previewLayer

{if (!_previewLayer) {_previewLayer = [[AVCaptureVideoPreviewLayer alloc] initWithSession:self.session];}return _previewLayer;

}- (void)snapStillImageCompletionHandler:(void (^)(CMSampleBufferRef imageDataSampleBuffer, NSError *error))handler

{
    if ([self judgeCameraAuthorization]) {
        self.bSessionPause = YES;
        NSString *strSessionPreset = [self.sessionPreset mutableCopy];
        self.sessionPreset = AVCaptureSessionPresetPhoto;
        // Changing the preset makes the preview go black briefly
        [NSThread sleepForTimeInterval:0.3];
        dispatch_async(self.bufferQueue, ^{
            [self.stillImageOutput captureStillImageAsynchronouslyFromConnection:[self.stillImageOutput connectionWithMediaType:AVMediaTypeVideo] completionHandler:^(CMSampleBufferRef imageDataSampleBuffer, NSError *error) {
                self.bSessionPause = NO;
                self.sessionPreset = strSessionPreset;
                handler(imageDataSampleBuffer, error);
            }];
        });
    }

}

BOOL updateExporureModel(STExposureModel model){if (currentExposureMode == model) return NO;currentExposureMode = model;return YES;

}- (void)test:(AVCaptureExposureMode)model{if ([self.videoDevice lockForConfiguration:nil]) {if ([self.videoDevice isExposureModeSupported:model]) {[self.videoDevice setExposureMode:model];}[self.videoDevice unlockForConfiguration];}

}- (void)setExposureTime:(CMTime)time{if ([self.videoDevice lockForConfiguration:nil]) {if (@available(iOS 12.0, *)) {self.videoDevice.activeMaxExposureDuration = time;} else {// Fallback on earlier versions}[self.videoDevice unlockForConfiguration];}

}

- (void)setFPS:(float)fps{
    if ([_videoDevice lockForConfiguration:NULL] == YES) {
        // _videoDevice.activeFormat = bestFormat;
        _videoDevice.activeVideoMinFrameDuration = CMTimeMake(1, fps);
        _videoDevice.activeVideoMaxFrameDuration = CMTimeMake(1, fps);
        [_videoDevice unlockForConfiguration];
    }

}- (void)updateExposure:(CMSampleBufferRef)sampleBuffer{CFDictionaryRef metadataDict = CMCopyDictionaryOfAttachments(NULL,sampleBuffer, kCMAttachmentMode_ShouldPropagate);NSDictionary * metadata = [[NSMutableDictionary alloc] initWithDictionary:(__bridge NSDictionary *)metadataDict];CFRelease(metadataDict);NSDictionary *exifMetadata = [[metadata objectForKey:(NSString *)kCGImagePropertyExifDictionary] mutableCopy];float brightnessValue = [[exifMetadata objectForKey:(NSString *)kCGImagePropertyExifBrightnessValue] floatValue];if(brightnessValue > 2 && updateExporureModel(STExposureModelPositive2)){[self setISOValue:500 exposeDuration:30];[self test:AVCaptureExposureModeContinuousAutoExposure];[self setFPS:30];}else if(brightnessValue > 1 && brightnessValue < 2 && updateExporureModel(STExposureModelPositive1)){[self setISOValue:500 exposeDuration:30];[self test:AVCaptureExposureModeContinuousAutoExposure];[self setFPS:30];}else if(brightnessValue > 0 && brightnessValue < 1 && updateExporureModel(STExposureModel0)){[self setISOValue:500 exposeDuration:30];[self test:AVCaptureExposureModeContinuousAutoExposure];[self setFPS:30];}else if (brightnessValue > -1 && brightnessValue < 0 && updateExporureModel(STExposureModelNegative1)){[self setISOValue:self.videoDevice.activeFormat.maxISO - 200 exposeDuration:40];[self test:AVCaptureExposureModeContinuousAutoExposure];}else if (brightnessValue > -2 && brightnessValue < -1 && updateExporureModel(STExposureModelNegative2)){[self setISOValue:self.videoDevice.activeFormat.maxISO - 200 exposeDuration:35];[self test:AVCaptureExposureModeContinuousAutoExposure];}else if (brightnessValue > -2.5 && brightnessValue < -2 && updateExporureModel(STExposureModelNegative3)){[self setISOValue:self.videoDevice.activeFormat.maxISO - 200 exposeDuration:30];[self test:AVCaptureExposureModeContinuousAutoExposure];}else if (brightnessValue > -3 && brightnessValue < -2.5 && updateExporureModel(STExposureModelNegative4)){[self 
setISOValue:self.videoDevice.activeFormat.maxISO - 200 exposeDuration:25];[self test:AVCaptureExposureModeContinuousAutoExposure];}else if (brightnessValue > -3.5 && brightnessValue < -3 && updateExporureModel(STExposureModelNegative5)){[self setISOValue:self.videoDevice.activeFormat.maxISO - 200 exposeDuration:20];[self test:AVCaptureExposureModeContinuousAutoExposure];}else if (brightnessValue > -4 && brightnessValue < -3.5 && updateExporureModel(STExposureModelNegative6)){[self setISOValue:self.videoDevice.activeFormat.maxISO - 250 exposeDuration:15];[self test:AVCaptureExposureModeContinuousAutoExposure];}else if (brightnessValue > -5 && brightnessValue < -4 && updateExporureModel(STExposureModelNegative7)){[self setISOValue:self.videoDevice.activeFormat.maxISO - 200 exposeDuration:10];[self test:AVCaptureExposureModeContinuousAutoExposure];}else if(brightnessValue < -5 && updateExporureModel(STExposureModelNegative8)){[self setISOValue:self.videoDevice.activeFormat.maxISO - 150 exposeDuration:5];[self test:AVCaptureExposureModeContinuousAutoExposure];}// NSLog(@"current brightness %f min iso %f max iso %f min exposure %f max exposure %f", brightnessValue, self.videoDevice.activeFormat.minISO, self.videoDevice.activeFormat.maxISO, CMTimeGetSeconds(self.videoDevice.activeFormat.minExposureDuration), CMTimeGetSeconds(self.videoDevice.activeFormat.maxExposureDuration));

}- (void)captureOutput:(AVCaptureOutput *)captureOutput didOutputSampleBuffer:(CMSampleBufferRef)sampleBuffer fromConnection:(AVCaptureConnection *)connection

{
    if (!self.bSessionPause) {
        if (self.delegate && [self.delegate respondsToSelector:@selector(rtcCameraVideoCapturer:didOutputSampleBuffer:)]) {
            [self.delegate rtcCameraVideoCapturer:self didOutputSampleBuffer:sampleBuffer];
        }
        // if (self.delegate && [self.delegate respondsToSelector:@selector(captureOutput:didOutputSampleBuffer:fromConnection:)]) {
        //     //[connection setVideoOrientation:AVCaptureVideoOrientationPortrait];
        //     [self.delegate captureOutput:captureOutput didOutputSampleBuffer:sampleBuffer fromConnection:connection];
        // }
    }
    // [self updateExposure:sampleBuffer];

}- (void)captureOutput:(AVCaptureOutput *)output didOutputMetadataObjects:(NSArray<__kindof AVMetadataObject *> *)metadataObjects fromConnection:(AVCaptureConnection *)connection{AVMetadataFaceObject *faceObject = nil;for(AVMetadataObject *object in metadataObjects){if (AVMetadataObjectTypeFace == object.type) {faceObject = (AVMetadataFaceObject*)object;}}static BOOL hasFace = NO;if (!hasFace && faceObject.faceID) {hasFace = YES;}if (!faceObject.faceID) {hasFace = NO;}

}#pragma mark - Notifications

- (void)addObservers {
    [[NSNotificationCenter defaultCenter] addObserver:self selector:@selector(appWillResignActive) name:UIApplicationWillResignActiveNotification object:nil];
    [[NSNotificationCenter defaultCenter] addObserver:self selector:@selector(appDidBecomeActive) name:UIApplicationDidBecomeActiveNotification object:nil];
    [[NSNotificationCenter defaultCenter] addObserver:self selector:@selector(dealCaptureSessionRuntimeError:) name:AVCaptureSessionRuntimeErrorNotification object:self.session];

}- (void)removeObservers {[[NSNotificationCenter defaultCenter] removeObserver:self];

}#pragma mark - Notification

- (void)appWillResignActive {
    RTCLogInfo(@"SDCustomRTCCameraCapturer appWillResignActive");
}

- (void)appDidBecomeActive {
    RTCLogInfo(@"SDCustomRTCCameraCapturer appDidBecomeActive");
    [self startRunning];

}- (void)dealCaptureSessionRuntimeError:(NSNotification *)notification {NSError *error = [notification.userInfo objectForKey:AVCaptureSessionErrorKey];RTCLogError(@"SDCustomRTCCameraCapturer dealCaptureSessionRuntimeError error: %@", error);if (error.code == AVErrorMediaServicesWereReset) {[self startRunning];}

}#pragma mark - Dealloc

- (void)dealloc {DebugLog(@"SDCustomRTCCameraCapturer dealloc");[self removeObservers];if (self.session) {self.bSessionPause = YES;[self.session beginConfiguration];[self.session removeOutput:self.dataOutput];[self.session removeInput:self.deviceInput];[self.session commitConfiguration];if ([self.session isRunning]) {[self.session stopRunning];}self.session = nil;}

}@end
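The updateExposure: method above maps the EXIF brightness value to an exposure level with a chain of strict comparisons, so a reading that lands exactly on a threshold (for example 1.0 or -2.5) matches no branch. The following is a minimal sketch in plain C, which also compiles inside an Objective-C .m file, of the same bucketing done with half-open intervals. The function name exposure_level_for_brightness, the BrightnessBucket type and the integer level encoding are hypothetical and are not part of the original class. Assigning each threshold to the brighter bucket is an assumption.

```c
#include <stddef.h>

/* Hypothetical level encoding: +2 .. -8, mirroring
   STExposureModelPositive2 .. STExposureModelNegative8. */
typedef struct {
    float lower;  /* inclusive lower bound of the brightness interval */
    int   level;  /* level reported when brightness >= lower */
} BrightnessBucket;

/* Thresholds taken from the comparisons in updateExposure:,
   ordered from brightest to darkest. */
static const BrightnessBucket kBuckets[] = {
    { 2.0f,  2}, { 1.0f,  1}, { 0.0f,  0}, {-1.0f, -1}, {-2.0f, -2},
    {-2.5f, -3}, {-3.0f, -4}, {-3.5f, -5}, {-4.0f, -6}, {-5.0f, -7},
};

/* Returns the first bucket whose lower bound the brightness reaches;
   anything darker than the last threshold falls into level -8. */
int exposure_level_for_brightness(float brightness) {
    size_t count = sizeof(kBuckets) / sizeof(kBuckets[0]);
    for (size_t i = 0; i < count; i++) {
        if (brightness >= kBuckets[i].lower) {
            return kBuckets[i].level;
        }
    }
    return -8;
}
```

A caller could switch on the returned level to choose the ISO and exposure duration, which keeps the thresholds in one table and leaves no gaps at the boundaries.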

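3. Implementing video calls with WebRTC and ossrs

For the iOS-side WebRTC integration with ossrs that implements live video calling, see:

https://blog.csdn.net/gloryFlow/article/details/132262724

Only the places that need to change are listed here.

Use the SDCustomRTCCameraCapturer class in createVideoTrack: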

- (RTCVideoTrack *)createVideoTrack {
    RTCVideoSource *videoSource = [self.factory videoSource];
    self.localVideoSource = videoSource;

    // If running on the simulator
    if (TARGET_IPHONE_SIMULATOR) {
        if (@available(iOS 10, *)) {
            self.videoCapturer = [[RTCFileVideoCapturer alloc] initWithDelegate:self];
        } else {
            // Fallback on earlier versions
        }
    } else {
        self.videoCapturer = [[SDCustomRTCCameraCapturer alloc] initWithDevicePosition:AVCaptureDevicePositionFront sessionPresset:AVCaptureSessionPreset1920x1080 fps:20 needYuvOutput:NO];
        // self.videoCapturer = [[SDCustomRTCCameraCapturer alloc] initWithDelegate:self];
    }

    RTCVideoTrack *videoTrack = [self.factory videoTrackWithSource:videoSource trackId:@"video0"];
    return videoTrack;
}

To render the local video on screen, call startCaptureLocalVideo, which adds a render view to localVideoTrack. The renderer is an RTCMTLVideoView:

self.localRenderer = [[RTCMTLVideoView alloc] initWithFrame:CGRectZero];
self.localRenderer.delegate = self;

- (void)startCaptureLocalVideo:(id<RTCVideoRenderer>)renderer {
    if (!self.isPublish) {
        return;
    }
    if (!renderer) {
        return;
    }
    if (!self.videoCapturer) {
        return;
    }

    [self setDegradationPreference:RTCDegradationPreferenceMaintainResolution];

    RTCVideoCapturer *capturer = self.videoCapturer;
    if ([capturer isKindOfClass:[SDCustomRTCCameraCapturer class]]) {
        AVCaptureDevice *camera = [self findDeviceForPosition:self.usingFrontCamera ? AVCaptureDevicePositionFront : AVCaptureDevicePositionBack];
        SDCustomRTCCameraCapturer *cameraVideoCapturer = (SDCustomRTCCameraCapturer *)capturer;
        [cameraVideoCapturer setISOValue:0.0];
        [cameraVideoCapturer rotateCamera:self.usingFrontCamera];
        self.videoCapturer.delegate = self;

        AVCaptureDeviceFormat *formatNilable = [self selectFormatForDevice:camera];
        if (!formatNilable) {
            return;
        }
        DebugLog(@"formatNilable:%@", formatNilable);

        NSInteger fps = [self selectFpsForFormat:formatNilable];
        CMVideoDimensions videoVideoDimensions = CMVideoFormatDescriptionGetDimensions(formatNilable.formatDescription);
        float width = videoVideoDimensions.width;
        float height = videoVideoDimensions.height;
        DebugLog(@"videoVideoDimensions width:%f,height:%f", width, height);

        [cameraVideoCapturer startRunning];
        // [cameraVideoCapturer startCaptureWithDevice:camera format:formatNilable fps:fps completionHandler:^(NSError *error) {
        //     DebugLog(@"startCaptureWithDevice error:%@", error);
        // }];
        [self changeResolution:width height:height fps:(int)fps];
    }

    if (@available(iOS 10, *)) {
        if ([capturer isKindOfClass:[RTCFileVideoCapturer class]]) {
            RTCFileVideoCapturer *fileVideoCapturer = (RTCFileVideoCapturer *)capturer;
            [fileVideoCapturer startCapturingFromFileNamed:@"beautyPicture.mp4" onError:^(NSError * _Nonnull error) {
                DebugLog(@"startCaptureLocalVideo startCapturingFromFileNamed error:%@", error);
            }];
        }
    } else {
        // Fallback on earlier versions
    }

    [self.localVideoTrack addRenderer:renderer];
}
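startCaptureLocalVideo relies on selectFormatForDevice: (listed in the full WebRTCClient.m) to pick the capture format closest to the requested resolution. That method minimizes the sum of the absolute width and height differences. The following plain C sketch isolates that selection rule. The Resolution struct is a hypothetical stand-in for CMVideoDimensions, closest_resolution_index is an illustrative name, and the pixel-format tie-break used by the original method is left out.

```c
#include <limits.h>
#include <stdlib.h>

/* Hypothetical stand-in for CMVideoDimensions. */
typedef struct {
    int width;
    int height;
} Resolution;

/* Returns the index of the candidate whose width and height are closest
   to the target (smallest |dw| + |dh|), or -1 when count is 0.
   On a tie, the earlier candidate wins. */
int closest_resolution_index(const Resolution *candidates, int count,
                             int targetWidth, int targetHeight) {
    int bestIndex = -1;
    int bestDiff = INT_MAX;
    for (int i = 0; i < count; i++) {
        int diff = abs(targetWidth - candidates[i].width)
                 + abs(targetHeight - candidates[i].height);
        if (diff < bestDiff) {
            bestDiff = diff;
            bestIndex = i;
        }
    }
    return bestIndex;
}
```

Returning -1 for an empty list makes the "no usable format" case explicit, which corresponds to the nil check on formatNilable in startCaptureLocalVideo.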

Frames captured by the camera arrive through the delegate method - (void)rtcCameraVideoCapturer:(RTCVideoCapturer *)capturer didOutputSampleBuffer:(CMSampleBufferRef)sampleBuffer;

The code is as follows:

- (void)rtcCameraVideoCapturer:(RTCVideoCapturer *)capturer didOutputSampleBuffer:(CMSampleBufferRef)sampleBuffer {
    CVPixelBufferRef pixelBuffer = CMSampleBufferGetImageBuffer(sampleBuffer);
    RTCCVPixelBuffer *rtcPixelBuffer = [[RTCCVPixelBuffer alloc] initWithPixelBuffer:pixelBuffer];
    int64_t timeStampNs = CMTimeGetSeconds(CMSampleBufferGetPresentationTimeStamp(sampleBuffer)) * 1000000000;
    // Rotation is assumed to be RTCVideoRotation_0; adjust it to match the capture orientation.
    RTCVideoFrame *rtcVideoFrame = [[RTCVideoFrame alloc] initWithBuffer:rtcPixelBuffer rotation:RTCVideoRotation_0 timeStampNs:timeStampNs];
    [self.localVideoSource capturer:capturer didCaptureVideoFrame:rtcVideoFrame];
}
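The delegate method converts the presentation timestamp to nanoseconds by going through CMTimeGetSeconds, that is, through a floating-point number of seconds. A CMTime is a rational value (an int64 value over an int32 timescale), so the conversion can also be done with integers only. The following is a minimal plain C sketch of that arithmetic. RationalTime is a hypothetical stand-in for the value and timescale fields of CMTime, and rational_time_to_nanos is an illustrative name, not a CoreMedia function.

```c
#include <stdint.h>

/* Hypothetical stand-in for the value/timescale pair of a CMTime. */
typedef struct {
    int64_t value;      /* number of ticks */
    int32_t timescale;  /* ticks per second, expected to be > 0 */
} RationalTime;

#define NANOS_PER_SECOND INT64_C(1000000000)

/* Converts value/timescale seconds to nanoseconds using integer math.
   Whole seconds and leftover ticks are scaled separately so the
   intermediate product stays small; the result truncates toward zero. */
int64_t rational_time_to_nanos(RationalTime t) {
    if (t.timescale <= 0) {
        return 0;  /* this sketch treats invalid times as zero */
    }
    int64_t whole = t.value / t.timescale;  /* full seconds */
    int64_t rest  = t.value % t.timescale;  /* leftover ticks */
    return whole * NANOS_PER_SECOND + rest * NANOS_PER_SECOND / t.timescale;
}
```

In the delegate, the two fields would come from the CMTime returned by CMSampleBufferGetPresentationTimeStamp. Whether the extra precision matters for a given stream is not something the original article measures.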

The complete WebRTCClient code is as follows:

WebRTCClient.h

#import <Foundation/Foundation.h>

#import <WebRTC/WebRTC.h>

#import <UIKit/UIKit.h>

#import "SDCustomRTCCameraCapturer.h"

#define kSelectedResolution @"kSelectedResolutionIndex"
#define kFramerateLimit 30.0

@protocol WebRTCClientDelegate;

@interface WebRTCClient : NSObject

@property (nonatomic, weak) id<WebRTCClientDelegate> delegate;

/** Peer connection factory */
@property (nonatomic, strong) RTCPeerConnectionFactory *factory;

/** Whether this client publishes (pushes) a stream */
@property (nonatomic, assign) BOOL isPublish;

/** Peer connection */
@property (nonatomic, strong) RTCPeerConnection *peerConnection;

/** RTCAudioSession */
@property (nonatomic, strong) RTCAudioSession *rtcAudioSession;

/** DispatchQueue */
@property (nonatomic) dispatch_queue_t audioQueue;

/** mediaConstrains */
@property (nonatomic, strong) NSDictionary *mediaConstrains;

/** publishMediaConstrains */
@property (nonatomic, strong) NSDictionary *publishMediaConstrains;

/** playMediaConstrains */
@property (nonatomic, strong) NSDictionary *playMediaConstrains;

/** optionalConstraints */
@property (nonatomic, strong) NSDictionary *optionalConstraints;

/** RTCVideoCapturer: the camera capturer */
@property (nonatomic, strong) RTCVideoCapturer *videoCapturer;

/** Local audio track */
@property (nonatomic, strong) RTCAudioTrack *localAudioTrack;

/** localVideoTrack */
@property (nonatomic, strong) RTCVideoTrack *localVideoTrack;

/** remoteVideoTrack */
@property (nonatomic, strong) RTCVideoTrack *remoteVideoTrack;

/** RTCVideoRenderer */
@property (nonatomic, weak) id<RTCVideoRenderer> remoteRenderView;

/** localDataChannel */
@property (nonatomic, strong) RTCDataChannel *localDataChannel;

/** remoteDataChannel */
@property (nonatomic, strong) RTCDataChannel *remoteDataChannel;

/** RTCVideoSource */
@property (nonatomic, strong) RTCVideoSource *localVideoSource;

- (instancetype)initWithPublish:(BOOL)isPublish;
- (void)startCaptureLocalVideo:(id<RTCVideoRenderer>)renderer;
- (void)addIceCandidate:(RTCIceCandidate *)candidate;
- (void)answer:(void (^)(RTCSessionDescription *sdp))completionHandler;
- (void)offer:(void (^)(RTCSessionDescription *sdp))completionHandler;
- (void)setRemoteSdp:(RTCSessionDescription *)remoteSdp completion:(void (^)(NSError *error))completion;
- (void)setRemoteCandidate:(RTCIceCandidate *)remoteCandidate;
- (BOOL)changeResolution:(int)width height:(int)height fps:(int)fps;
- (NSArray<NSString *> *)availableVideoResolutions;

#pragma mark - switchCamera
- (void)switchCamera:(id<RTCVideoRenderer>)renderer;

#pragma mark - Hide or show Video
- (void)hidenVideo;
- (void)showVideo;

#pragma mark - Hide or show Audio
- (void)muteAudio;
- (void)unmuteAudio;
- (void)speakOff;
- (void)speakOn;

#pragma mark - Set video bitrate (BitrateBps)
- (void)setMaxBitrate:(int)maxBitrate;
- (void)setMinBitrate:(int)minBitrate;

#pragma mark - Set video frame rate
- (void)setMaxFramerate:(int)maxFramerate;

- (void)close;

@end

@protocol WebRTCClientDelegate <NSObject>

// Handle beauty-filter processing
- (RTCVideoFrame *)webRTCClient:(WebRTCClient *)client didCaptureSampleBuffer:(CMSampleBufferRef)sampleBuffer;
- (void)webRTCClient:(WebRTCClient *)client didDiscoverLocalCandidate:(RTCIceCandidate *)candidate;
- (void)webRTCClient:(WebRTCClient *)client didChangeConnectionState:(RTCIceConnectionState)state;
- (void)webRTCClient:(WebRTCClient *)client didReceiveData:(NSData *)data;

@end

WebRTCClient.m

#import "WebRTCClient.h"

@interface WebRTCClient ()<RTCPeerConnectionDelegate, RTCDataChannelDelegate, RTCVideoCapturerDelegate, SDCustomRTCCameraCapturerDelegate>

@property (nonatomic, assign) BOOL usingFrontCamera;

@end

@implementation WebRTCClient

- (instancetype)initWithPublish:(BOOL)isPublish {
    self = [super init];
    if (self) {
        self.isPublish = isPublish;
        self.usingFrontCamera = YES;

        RTCMediaConstraints *constraints = [[RTCMediaConstraints alloc] initWithMandatoryConstraints:self.publishMediaConstrains optionalConstraints:self.optionalConstraints];
        RTCConfiguration *newConfig = [[RTCConfiguration alloc] init];
        newConfig.sdpSemantics = RTCSdpSemanticsUnifiedPlan;
        newConfig.continualGatheringPolicy = RTCContinualGatheringPolicyGatherContinually;
        self.peerConnection = [self.factory peerConnectionWithConfiguration:newConfig constraints:constraints delegate:nil];

        [self createMediaSenders];
        [self createMediaReceivers];

        // SRS does not support data channels.
        // self.createDataChannel()
        [self configureAudioSession];

        self.peerConnection.delegate = self;
    }
    return self;

}

- (void)createMediaSenders {
    if (!self.isPublish) {
        return;
    }

    NSString *streamId = @"stream";

    // Audio
    RTCAudioTrack *audioTrack = [self createAudioTrack];
    self.localAudioTrack = audioTrack;

    RTCRtpTransceiverInit *audioTrackTransceiver = [[RTCRtpTransceiverInit alloc] init];
    audioTrackTransceiver.direction = RTCRtpTransceiverDirectionSendOnly;
    audioTrackTransceiver.streamIds = @[streamId];

    [self.peerConnection addTransceiverWithTrack:audioTrack init:audioTrackTransceiver];

    // Video
    RTCRtpEncodingParameters *encodingParameters = [[RTCRtpEncodingParameters alloc] init];
    encodingParameters.maxBitrateBps = @(6000000);
    // encodingParameters.bitratePriority = 1.0;

    RTCVideoTrack *videoTrack = [self createVideoTrack];
    self.localVideoTrack = videoTrack;

    RTCRtpTransceiverInit *videoTrackTransceiver = [[RTCRtpTransceiverInit alloc] init];
    videoTrackTransceiver.direction = RTCRtpTransceiverDirectionSendOnly;
    videoTrackTransceiver.streamIds = @[streamId];

    // With this property set, the SRS server cannot play the video
    // videoTrackTransceiver.sendEncodings = @[encodingParameters];
    [self.peerConnection addTransceiverWithTrack:videoTrack init:videoTrackTransceiver];

    [self setDegradationPreference:RTCDegradationPreferenceBalanced];
    [self setMaxBitrate:6000000];

}- (void)createMediaReceivers {if (!self.isPublish) {return;}if (self.peerConnection.transceivers.count > 0) {RTCRtpTransceiver *transceiver = self.peerConnection.transceivers.firstObject;if (transceiver.mediaType == RTCRtpMediaTypeVideo) {RTCVideoTrack *track = (RTCVideoTrack *)transceiver.receiver.track;self.remoteVideoTrack = track;}}

}

- (void)configureAudioSession {
    [self.rtcAudioSession lockForConfiguration];
    @try {
        RTCAudioSessionConfiguration *configuration = [[RTCAudioSessionConfiguration alloc] init];
        configuration.category = AVAudioSessionCategoryPlayAndRecord;
        configuration.categoryOptions = AVAudioSessionCategoryOptionDefaultToSpeaker;
        configuration.mode = AVAudioSessionModeDefault;

        BOOL hasSucceeded = NO;
        NSError *error = nil;
        if (self.rtcAudioSession.isActive) {
            hasSucceeded = [self.rtcAudioSession setConfiguration:configuration error:&error];
        } else {
            hasSucceeded = [self.rtcAudioSession setConfiguration:configuration active:YES error:&error];
        }
        if (!hasSucceeded) {
            DebugLog(@"Error setting configuration: %@", error.localizedDescription);
        }
    } @catch (NSException *exception) {
        DebugLog(@"configureAudioSession exception:%@", exception);
    }
    [self.rtcAudioSession unlockForConfiguration];

}- (RTCAudioTrack *)createAudioTrack {/// enable google 3A algorithm.NSDictionary *mandatory = @{@"googEchoCancellation": kRTCMediaConstraintsValueTrue,@"googAutoGainControl": kRTCMediaConstraintsValueTrue,@"googNoiseSuppression": kRTCMediaConstraintsValueTrue,};RTCMediaConstraints *audioConstrains = [[RTCMediaConstraints alloc] initWithMandatoryConstraints:mandatory optionalConstraints:self.optionalConstraints];RTCAudioSource *audioSource = [self.factory audioSourceWithConstraints:audioConstrains];RTCAudioTrack *audioTrack = [self.factory audioTrackWithSource:audioSource trackId:@"audio0"];return audioTrack;

}

- (RTCVideoTrack *)createVideoTrack {
    RTCVideoSource *videoSource = [self.factory videoSource];
    self.localVideoSource = videoSource;

    // If running on the simulator
    if (TARGET_IPHONE_SIMULATOR) {
        if (@available(iOS 10, *)) {
            self.videoCapturer = [[RTCFileVideoCapturer alloc] initWithDelegate:self];
        } else {
            // Fallback on earlier versions
        }
    } else {
        self.videoCapturer = [[SDCustomRTCCameraCapturer alloc] initWithDevicePosition:AVCaptureDevicePositionFront sessionPresset:AVCaptureSessionPreset1920x1080 fps:20 needYuvOutput:NO];
        // self.videoCapturer = [[SDCustomRTCCameraCapturer alloc] initWithDelegate:self];
    }

    RTCVideoTrack *videoTrack = [self.factory videoTrackWithSource:videoSource trackId:@"video0"];
    return videoTrack;

}- (void)addIceCandidate:(RTCIceCandidate *)candidate {[self.peerConnection addIceCandidate:candidate];

}- (void)offer:(void (^)(RTCSessionDescription *sdp))completion {if (self.isPublish) {self.mediaConstrains = self.publishMediaConstrains;} else {self.mediaConstrains = self.playMediaConstrains;}RTCMediaConstraints *constrains = [[RTCMediaConstraints alloc] initWithMandatoryConstraints:self.mediaConstrains optionalConstraints:self.optionalConstraints];DebugLog(@"peerConnection:%@",self.peerConnection);__weak typeof(self) weakSelf = self;[weakSelf.peerConnection offerForConstraints:constrains completionHandler:^(RTCSessionDescription * _Nullable sdp, NSError * _Nullable error) {if (error) {DebugLog(@"offer offerForConstraints error:%@", error);}if (sdp) {[weakSelf.peerConnection setLocalDescription:sdp completionHandler:^(NSError * _Nullable error) {if (error) {DebugLog(@"offer setLocalDescription error:%@", error);}if (completion) {completion(sdp);}}];}}];

}- (void)answer:(void (^)(RTCSessionDescription *sdp))completion {RTCMediaConstraints *constrains = [[RTCMediaConstraints alloc] initWithMandatoryConstraints:self.mediaConstrains optionalConstraints:self.optionalConstraints];__weak typeof(self) weakSelf = self;[weakSelf.peerConnection answerForConstraints:constrains completionHandler:^(RTCSessionDescription * _Nullable sdp, NSError * _Nullable error) {if (error) {DebugLog(@"answer answerForConstraints error:%@", error);}if (sdp) {[weakSelf.peerConnection setLocalDescription:sdp completionHandler:^(NSError * _Nullable error) {if (error) {DebugLog(@"answer setLocalDescription error:%@", error);}if (completion) {completion(sdp);}}];}}];

}- (void)setRemoteSdp:(RTCSessionDescription *)remoteSdp completion:(void (^)(NSError *error))completion {[self.peerConnection setRemoteDescription:remoteSdp completionHandler:completion];

}- (void)setRemoteCandidate:(RTCIceCandidate *)remoteCandidate {[self.peerConnection addIceCandidate:remoteCandidate];

}- (void)setMaxBitrate:(int)maxBitrate {if (self.peerConnection.senders.count > 0) {RTCRtpSender *videoSender = self.peerConnection.senders.firstObject;RTCRtpParameters *parametersToModify = videoSender.parameters;for (RTCRtpEncodingParameters *encoding in parametersToModify.encodings) {encoding.maxBitrateBps = @(maxBitrate);}[videoSender setParameters:parametersToModify];}

}- (void)setDegradationPreference:(RTCDegradationPreference)degradationPreference {// RTCDegradationPreferenceMaintainResolutionNSMutableArray *videoSenders = [NSMutableArray arrayWithCapacity:0];for (RTCRtpSender *sender in self.peerConnection.senders) {if (sender.track && [kRTCMediaStreamTrackKindVideo isEqualToString:sender.track.kind]) {// [videoSenders addObject:sender];RTCRtpParameters *parameters = sender.parameters;parameters.degradationPreference = [NSNumber numberWithInteger:degradationPreference];[sender setParameters:parameters];[videoSenders addObject:sender];}}for (RTCRtpSender *sender in videoSenders) {RTCRtpParameters *parameters = sender.parameters;parameters.degradationPreference = [NSNumber numberWithInteger:degradationPreference];[sender setParameters:parameters];}

}- (void)setMinBitrate:(int)minBitrate {if (self.peerConnection.senders.count > 0) {RTCRtpSender *videoSender = self.peerConnection.senders.firstObject;RTCRtpParameters *parametersToModify = videoSender.parameters;for (RTCRtpEncodingParameters *encoding in parametersToModify.encodings) {encoding.minBitrateBps = @(minBitrate);}[videoSender setParameters:parametersToModify];}

}

- (void)setMaxFramerate:(int)maxFramerate {
    if (self.peerConnection.senders.count > 0) {
        RTCRtpSender *videoSender = self.peerConnection.senders.firstObject;
        RTCRtpParameters *parametersToModify = videoSender.parameters;
        // This WebRTC version does not yet expose maxFramerate; update to the latest version to use it
        for (RTCRtpEncodingParameters *encoding in parametersToModify.encodings) {
            encoding.maxFramerate = @(maxFramerate);
        }
        [videoSender setParameters:parametersToModify];
    }

}#pragma mark - Private

- (AVCaptureDevice *)findDeviceForPosition:(AVCaptureDevicePosition)position {
    NSArray<AVCaptureDevice *> *captureDevices = [SDCustomRTCCameraCapturer captureDevices];
    for (AVCaptureDevice *device in captureDevices) {
        if (device.position == position) {
            return device;
        }
    }
    // firstObject returns nil instead of throwing when no capture device is available
    return captureDevices.firstObject;

}- (NSArray<NSString *> *)availableVideoResolutions {NSMutableSet<NSArray<NSNumber *> *> *resolutions =[[NSMutableSet<NSArray<NSNumber *> *> alloc] init];for (AVCaptureDevice *device in [SDCustomRTCCameraCapturer captureDevices]) {for (AVCaptureDeviceFormat *format in[SDCustomRTCCameraCapturer supportedFormatsForDevice:device]) {CMVideoDimensions resolution =CMVideoFormatDescriptionGetDimensions(format.formatDescription);NSArray<NSNumber *> *resolutionObject = @[@(resolution.width), @(resolution.height) ];[resolutions addObject:resolutionObject];}}NSArray<NSArray<NSNumber *> *> *sortedResolutions =[[resolutions allObjects] sortedArrayUsingComparator:^NSComparisonResult(NSArray<NSNumber *> *obj1, NSArray<NSNumber *> *obj2) {NSComparisonResult cmp = [obj1.firstObject compare:obj2.firstObject];if (cmp != NSOrderedSame) {return cmp;}return [obj1.lastObject compare:obj2.lastObject];}];NSMutableArray<NSString *> *resolutionStrings = [[NSMutableArray<NSString *> alloc] init];for (NSArray<NSNumber *> *resolution in sortedResolutions) {NSString *resolutionString =[NSString stringWithFormat:@"%@x%@", resolution.firstObject, resolution.lastObject];[resolutionStrings addObject:resolutionString];}return [resolutionStrings copy];

}- (int)videoResolutionComponentAtIndex:(int)index inString:(NSString *)resolution {if (index != 0 && index != 1) {return 0;}NSArray<NSString *> *components = [resolution componentsSeparatedByString:@"x"];if (components.count != 2) {return 0;}return components[index].intValue;

}- (AVCaptureDeviceFormat *)selectFormatForDevice:(AVCaptureDevice *)device {RTCVideoCapturer *capturer = self.videoCapturer;if ([capturer isKindOfClass:[SDCustomRTCCameraCapturer class]]) {SDCustomRTCCameraCapturer *cameraVideoCapturer = (SDCustomRTCCameraCapturer *)capturer;NSArray *availableVideoResolutions = [self availableVideoResolutions];NSString *selectedIndexStr = [[NSUserDefaults standardUserDefaults] objectForKey:kSelectedResolution];int selectedIndex = 0;if (selectedIndexStr && selectedIndexStr.length > 0) {selectedIndex = [selectedIndexStr intValue];}NSString *videoResolution = [availableVideoResolutions objectAtIndex:selectedIndex];DebugLog(@"availableVideoResolutions:%@, videoResolution:%@", availableVideoResolutions, videoResolution);NSArray<AVCaptureDeviceFormat *> *formats =[SDCustomRTCCameraCapturer supportedFormatsForDevice:device];int targetWidth = [self videoResolutionComponentAtIndex:0 inString:videoResolution];int targetHeight = [self videoResolutionComponentAtIndex:1 inString:videoResolution];AVCaptureDeviceFormat *selectedFormat = nil;int currentDiff = INT_MAX;for (AVCaptureDeviceFormat *format in formats) {CMVideoDimensions dimension = CMVideoFormatDescriptionGetDimensions(format.formatDescription);FourCharCode pixelFormat = CMFormatDescriptionGetMediaSubType(format.formatDescription);int diff = abs(targetWidth - dimension.width) + abs(targetHeight - dimension.height);if (diff < currentDiff) {selectedFormat = format;currentDiff = diff;} else if (diff == currentDiff && pixelFormat == [cameraVideoCapturer preferredOutputPixelFormat]) {selectedFormat = format;}}return selectedFormat;}return nil;

}

- (NSInteger)selectFpsForFormat:(AVCaptureDeviceFormat *)format {
    Float64 maxSupportedFramerate = 0;
    for (AVFrameRateRange *fpsRange in format.videoSupportedFrameRateRanges) {
        maxSupportedFramerate = fmax(maxSupportedFramerate, fpsRange.maxFrameRate);
    }
    // return fmin(maxSupportedFramerate, kFramerateLimit);
    return 20;

}

- (void)startCaptureLocalVideo:(id<RTCVideoRenderer>)renderer {
    if (!self.isPublish) {
        return;
    }
    if (!renderer) {
        return;
    }
    if (!self.videoCapturer) {
        return;
    }

    [self setDegradationPreference:RTCDegradationPreferenceMaintainResolution];

    RTCVideoCapturer *capturer = self.videoCapturer;
    if ([capturer isKindOfClass:[SDCustomRTCCameraCapturer class]]) {
        AVCaptureDevice *camera = [self findDeviceForPosition:self.usingFrontCamera ? AVCaptureDevicePositionFront : AVCaptureDevicePositionBack];
        SDCustomRTCCameraCapturer *cameraVideoCapturer = (SDCustomRTCCameraCapturer *)capturer;
        [cameraVideoCapturer setISOValue:0.0];
        [cameraVideoCapturer rotateCamera:self.usingFrontCamera];
        self.videoCapturer.delegate = self;

        AVCaptureDeviceFormat *formatNilable = [self selectFormatForDevice:camera];
        if (!formatNilable) {
            return;
        }
        DebugLog(@"formatNilable:%@", formatNilable);

        NSInteger fps = [self selectFpsForFormat:formatNilable];
        CMVideoDimensions videoVideoDimensions = CMVideoFormatDescriptionGetDimensions(formatNilable.formatDescription);
        float width = videoVideoDimensions.width;
        float height = videoVideoDimensions.height;
        DebugLog(@"videoVideoDimensions width:%f,height:%f", width, height);

        [cameraVideoCapturer startRunning];
        // [cameraVideoCapturer startCaptureWithDevice:camera format:formatNilable fps:fps completionHandler:^(NSError *error) {
        //     DebugLog(@"startCaptureWithDevice error:%@", error);
        // }];
        [self changeResolution:width height:height fps:(int)fps];
    }

    if (@available(iOS 10, *)) {
        if ([capturer isKindOfClass:[RTCFileVideoCapturer class]]) {
            RTCFileVideoCapturer *fileVideoCapturer = (RTCFileVideoCapturer *)capturer;
            [fileVideoCapturer startCapturingFromFileNamed:@"beautyPicture.mp4" onError:^(NSError * _Nonnull error) {
                DebugLog(@"startCaptureLocalVideo startCapturingFromFileNamed error:%@", error);
            }];
        }
    } else {
        // Fallback on earlier versions
    }

    [self.localVideoTrack addRenderer:renderer];

}- (void)renderRemoteVideo:(id<RTCVideoRenderer>)renderer {if (!self.isPublish) {return;}self.remoteRenderView = renderer;

}- (RTCDataChannel *)createDataChannel {RTCDataChannelConfiguration *config = [[RTCDataChannelConfiguration alloc] init];RTCDataChannel *dataChannel = [self.peerConnection dataChannelForLabel:@"WebRTCData" configuration:config];if (!dataChannel) {return nil;}dataChannel.delegate = self;self.localDataChannel = dataChannel;return dataChannel;

}- (void)sendData:(NSData *)data {RTCDataBuffer *buffer = [[RTCDataBuffer alloc] initWithData:data isBinary:YES];[self.remoteDataChannel sendData:buffer];

}#pragma mark - switchCamera

- (void)switchCamera:(id<RTCVideoRenderer>)renderer {self.usingFrontCamera = !self.usingFrontCamera;[self startCaptureLocalVideo:renderer];

}

#pragma mark - Change resolution

- (BOOL)changeResolution:(int)width height:(int)height fps:(int)fps {if (!self.localVideoSource) {RTCVideoSource *videoSource = [self.factory videoSource];[videoSource adaptOutputFormatToWidth:width height:height fps:fps];} else {[self.localVideoSource adaptOutputFormatToWidth:width height:height fps:fps];}return YES;

}

#pragma mark - Hide or show video

- (void)hidenVideo {
    [self setVideoEnabled:NO];
    self.localVideoTrack.isEnabled = NO;
}

- (void)showVideo {
    [self setVideoEnabled:YES];
    self.localVideoTrack.isEnabled = YES;
}

- (void)setVideoEnabled:(BOOL)isEnabled {
    [self setTrackEnabled:[RTCVideoTrack class] isEnabled:isEnabled];
}

- (void)setTrackEnabled:(Class)track isEnabled:(BOOL)isEnabled {
    for (RTCRtpTransceiver *transceiver in self.peerConnection.transceivers) {
        // Check the class of the sender's track; the transceiver itself is never a track.
        RTCMediaStreamTrack *senderTrack = transceiver.sender.track;
        if (senderTrack && [senderTrack isKindOfClass:track]) {
            senderTrack.isEnabled = isEnabled;
        }
    }

}

#pragma mark - Mute or unmute audio

- (void)muteAudio {
    [self setAudioEnabled:NO];
    self.localAudioTrack.isEnabled = NO;
}

- (void)unmuteAudio {
    [self setAudioEnabled:YES];
    self.localAudioTrack.isEnabled = YES;
}

- (void)speakOff {
    __weak typeof(self) weakSelf = self;
    dispatch_async(self.audioQueue, ^{
        // Use weakSelf throughout the block so the block does not retain self.
        [weakSelf.rtcAudioSession lockForConfiguration];
        @try {
            NSError *error;
            [weakSelf.rtcAudioSession setCategory:AVAudioSessionCategoryPlayAndRecord withOptions:AVAudioSessionCategoryOptionDefaultToSpeaker error:&error];
            NSError *ooapError;
            [weakSelf.rtcAudioSession overrideOutputAudioPort:AVAudioSessionPortOverrideNone error:&ooapError];
            DebugLog(@"speakOff error:%@, ooapError:%@", error, ooapError);
        } @catch (NSException *exception) {
            DebugLog(@"speakOff exception:%@", exception);
        }
        [weakSelf.rtcAudioSession unlockForConfiguration];
    });
}

- (void)speakOn {
    __weak typeof(self) weakSelf = self;
    dispatch_async(self.audioQueue, ^{
        [weakSelf.rtcAudioSession lockForConfiguration];
        @try {
            NSError *error;
            [weakSelf.rtcAudioSession setCategory:AVAudioSessionCategoryPlayAndRecord withOptions:AVAudioSessionCategoryOptionDefaultToSpeaker error:&error];
            NSError *ooapError;
            [weakSelf.rtcAudioSession overrideOutputAudioPort:AVAudioSessionPortOverrideSpeaker error:&ooapError];
            NSError *activeError;
            [weakSelf.rtcAudioSession setActive:YES error:&activeError];
            DebugLog(@"speakOn error:%@, ooapError:%@, activeError:%@", error, ooapError, activeError);
        } @catch (NSException *exception) {
            DebugLog(@"speakOn exception:%@", exception);
        }
        [weakSelf.rtcAudioSession unlockForConfiguration];
    });
}

- (void)setAudioEnabled:(BOOL)isEnabled {
    [self setTrackEnabled:[RTCAudioTrack class] isEnabled:isEnabled];

}

- (void)close {
    RTCVideoCapturer *capturer = self.videoCapturer;
    if ([capturer isKindOfClass:[SDCustomRTCCameraCapturer class]]) {
        SDCustomRTCCameraCapturer *cameraVideoCapturer = (SDCustomRTCCameraCapturer *)capturer;
        [cameraVideoCapturer stopRunning];
    }
    [self.peerConnection close];
    self.peerConnection = nil;
    self.videoCapturer = nil;
}

#pragma mark - RTCPeerConnectionDelegate

/** Called when the SignalingState changed. */
- (void)peerConnection:(RTCPeerConnection *)peerConnection
    didChangeSignalingState:(RTCSignalingState)stateChanged {
    DebugLog(@"peerConnection didChangeSignalingState:%ld", (long)stateChanged);

}

/** Called when media is received on a new stream from remote peer. */
- (void)peerConnection:(RTCPeerConnection *)peerConnection didAddStream:(RTCMediaStream *)stream {
    DebugLog(@"peerConnection didAddStream");
    if (self.isPublish) {
        return;
    }

    NSArray *videoTracks = stream.videoTracks;
    if (videoTracks && videoTracks.count > 0) {
        RTCVideoTrack *track = videoTracks.firstObject;
        self.remoteVideoTrack = track;
    }

    if (self.remoteVideoTrack && self.remoteRenderView) {
        id<RTCVideoRenderer> remoteRenderView = self.remoteRenderView;
        RTCVideoTrack *remoteVideoTrack = self.remoteVideoTrack;
        [remoteVideoTrack addRenderer:remoteRenderView];
    }

    /**
     if let audioTrack = stream.audioTracks.first {
         print("audio track found")
         audioTrack.source.volume = 8
     }
     */

}

/** Called when a remote peer closes a stream.
 * This is not called when RTCSdpSemanticsUnifiedPlan is specified.
 */
- (void)peerConnection:(RTCPeerConnection *)peerConnection didRemoveStream:(RTCMediaStream *)stream {
    DebugLog(@"peerConnection didRemoveStream");
}

/** Called when negotiation is needed, for example ICE has restarted. */
- (void)peerConnectionShouldNegotiate:(RTCPeerConnection *)peerConnection {
    DebugLog(@"peerConnection peerConnectionShouldNegotiate");

}

/** Called any time the IceConnectionState changes. */
- (void)peerConnection:(RTCPeerConnection *)peerConnection
    didChangeIceConnectionState:(RTCIceConnectionState)newState {
    DebugLog(@"peerConnection didChangeIceConnectionState:%ld", (long)newState);
    if (self.delegate && [self.delegate respondsToSelector:@selector(webRTCClient:didChangeConnectionState:)]) {
        [self.delegate webRTCClient:self didChangeConnectionState:newState];
    }
    if (RTCIceConnectionStateConnected == newState || RTCIceConnectionStateChecking == newState) {
        [self setDegradationPreference:RTCDegradationPreferenceMaintainResolution];
    }
}

/** Called any time the IceGatheringState changes. */
- (void)peerConnection:(RTCPeerConnection *)peerConnection
    didChangeIceGatheringState:(RTCIceGatheringState)newState {
    DebugLog(@"peerConnection didChangeIceGatheringState:%ld", (long)newState);
}

/** New ice candidate has been found. */
- (void)peerConnection:(RTCPeerConnection *)peerConnection
    didGenerateIceCandidate:(RTCIceCandidate *)candidate {
    DebugLog(@"peerConnection didGenerateIceCandidate:%@", candidate);
    if (self.delegate && [self.delegate respondsToSelector:@selector(webRTCClient:didDiscoverLocalCandidate:)]) {
        [self.delegate webRTCClient:self didDiscoverLocalCandidate:candidate];
    }
}

/** Called when a group of local Ice candidates have been removed. */
- (void)peerConnection:(RTCPeerConnection *)peerConnection
    didRemoveIceCandidates:(NSArray<RTCIceCandidate *> *)candidates {
    DebugLog(@"peerConnection didRemoveIceCandidates:%@", candidates);

}

/** New data channel has been opened. */
- (void)peerConnection:(RTCPeerConnection *)peerConnection
    didOpenDataChannel:(RTCDataChannel *)dataChannel {
    DebugLog(@"peerConnection didOpenDataChannel:%@", dataChannel);
    self.remoteDataChannel = dataChannel;
}

/** Called when signaling indicates a transceiver will be receiving media from
 * the remote endpoint.
 * This is only called with RTCSdpSemanticsUnifiedPlan specified.
 */
- (void)peerConnection:(RTCPeerConnection *)peerConnection
    didStartReceivingOnTransceiver:(RTCRtpTransceiver *)transceiver {
    DebugLog(@"peerConnection didStartReceivingOnTransceiver:%@", transceiver);
}

/** Called when a receiver and its track are created. */
- (void)peerConnection:(RTCPeerConnection *)peerConnection
    didAddReceiver:(RTCRtpReceiver *)rtpReceiver
    streams:(NSArray<RTCMediaStream *> *)mediaStreams {
    DebugLog(@"peerConnection didAddReceiver");
}

/** Called when the receiver and its track are removed. */
- (void)peerConnection:(RTCPeerConnection *)peerConnection
    didRemoveReceiver:(RTCRtpReceiver *)rtpReceiver {
    DebugLog(@"peerConnection didRemoveReceiver");

}

#pragma mark - RTCDataChannelDelegate

/** The data channel state changed. */
- (void)dataChannelDidChangeState:(RTCDataChannel *)dataChannel {
    DebugLog(@"dataChannelDidChangeState:%@", dataChannel);
}

/** The data channel successfully received a data buffer. */
- (void)dataChannel:(RTCDataChannel *)dataChannel
    didReceiveMessageWithBuffer:(RTCDataBuffer *)buffer {
    if (self.delegate && [self.delegate respondsToSelector:@selector(webRTCClient:didReceiveData:)]) {
        [self.delegate webRTCClient:self didReceiveData:buffer.data];
    }

}

#pragma mark - RTCVideoCapturerDelegate

- (void)capturer:(RTCVideoCapturer *)capturer didCaptureVideoFrame:(RTCVideoFrame *)frame {
    // DebugLog(@"capturer:%@ didCaptureVideoFrame:%@", capturer, frame);
    // RTCVideoFrame *aFilterVideoFrame;
    // if (self.delegate && [self.delegate respondsToSelector:@selector(webRTCClient:didCaptureVideoFrame:)]) {
    //     aFilterVideoFrame = [self.delegate webRTCClient:self didCaptureVideoFrame:frame];
    // }

    // C-level buffers must be released manually, otherwise memory usage balloons:
    // CVPixelBufferRelease(_buffer)

    // Getting the pixelBuffer from the frame:
    // ((RTCCVPixelBuffer*)frame.buffer).pixelBuffer

    // if (!aFilterVideoFrame) {
    //     aFilterVideoFrame = frame;
    // }
    //
    // [self.localVideoSource capturer:capturer didCaptureVideoFrame:frame];

}

- (void)rtcCameraVideoCapturer:(RTCVideoCapturer *)capturer didOutputSampleBuffer:(CMSampleBufferRef)sampleBuffer {
    // Wrap the camera's pixel buffer so WebRTC can consume it.
    CVPixelBufferRef pixelBuffer = CMSampleBufferGetImageBuffer(sampleBuffer);
    RTCCVPixelBuffer *rtcPixelBuffer = [[RTCCVPixelBuffer alloc] initWithPixelBuffer:pixelBuffer];

    // Presentation timestamp converted from seconds to nanoseconds.
    int64_t timeStampNs = CMTimeGetSeconds(CMSampleBufferGetPresentationTimeStamp(sampleBuffer)) * 1000000000;

    // Rotation is assumed to be RTCVideoRotation_0 here; adjust it if frames arrive rotated.
    RTCVideoFrame *rtcVideoFrame = [[RTCVideoFrame alloc] initWithBuffer:rtcPixelBuffer rotation:RTCVideoRotation_0 timeStampNs:timeStampNs];

    // Hand the frame to the local video source, which feeds the local track.
    [self.localVideoSource capturer:capturer didCaptureVideoFrame:rtcVideoFrame];

}

#pragma mark - Lazy

- (RTCPeerConnectionFactory *)factory {
    if (!_factory) {
        RTCInitializeSSL();
        RTCDefaultVideoEncoderFactory *videoEncoderFactory = [[RTCDefaultVideoEncoderFactory alloc] init];
        RTCDefaultVideoDecoderFactory *videoDecoderFactory = [[RTCDefaultVideoDecoderFactory alloc] init];
        _factory = [[RTCPeerConnectionFactory alloc] initWithEncoderFactory:videoEncoderFactory decoderFactory:videoDecoderFactory];
    }
    return _factory;
}

- (dispatch_queue_t)audioQueue {
    if (!_audioQueue) {
        _audioQueue = dispatch_queue_create("cn.dface.webrtc", NULL);
    }
    return _audioQueue;
}

- (RTCAudioSession *)rtcAudioSession {
    if (!_rtcAudioSession) {
        _rtcAudioSession = [RTCAudioSession sharedInstance];
    }
    return _rtcAudioSession;
}

- (NSDictionary *)mediaConstrains {
    if (!_mediaConstrains) {
        _mediaConstrains = [[NSDictionary alloc] initWithObjectsAndKeys:
                            kRTCMediaConstraintsValueFalse, kRTCMediaConstraintsOfferToReceiveAudio,
                            kRTCMediaConstraintsValueFalse, kRTCMediaConstraintsOfferToReceiveVideo,
                            kRTCMediaConstraintsValueTrue, @"IceRestart",
                            nil];
    }
    return _mediaConstrains;

}

- (NSDictionary *)publishMediaConstrains {
    if (!_publishMediaConstrains) {
        _publishMediaConstrains = [[NSDictionary alloc] initWithObjectsAndKeys:
                                   kRTCMediaConstraintsValueFalse, kRTCMediaConstraintsOfferToReceiveAudio,
                                   kRTCMediaConstraintsValueFalse, kRTCMediaConstraintsOfferToReceiveVideo,
                                   kRTCMediaConstraintsValueTrue, @"IceRestart",
                                   kRTCMediaConstraintsValueTrue, @"googCpuOveruseDetection",
                                   kRTCMediaConstraintsValueFalse, @"googBandwidthLimitedResolution",
                                   // @"2160", kRTCMediaConstraintsMinWidth,
                                   // @"3840", kRTCMediaConstraintsMinHeight,
                                   // @"0.25", kRTCMediaConstraintsMinAspectRatio,
                                   // @"1", kRTCMediaConstraintsMaxAspectRatio,
                                   nil];
    }
    return _publishMediaConstrains;

}

- (NSDictionary *)playMediaConstrains {
    if (!_playMediaConstrains) {
        _playMediaConstrains = [[NSDictionary alloc] initWithObjectsAndKeys:
                                kRTCMediaConstraintsValueTrue, kRTCMediaConstraintsOfferToReceiveAudio,
                                kRTCMediaConstraintsValueTrue, kRTCMediaConstraintsOfferToReceiveVideo,
                                kRTCMediaConstraintsValueTrue, @"IceRestart",
                                kRTCMediaConstraintsValueTrue, @"googCpuOveruseDetection",
                                kRTCMediaConstraintsValueFalse, @"googBandwidthLimitedResolution",
                                // @"2160", kRTCMediaConstraintsMinWidth,
                                // @"3840", kRTCMediaConstraintsMinHeight,
                                // @"0.25", kRTCMediaConstraintsMinAspectRatio,
                                // @"1", kRTCMediaConstraintsMaxAspectRatio,
                                nil];
    }
    return _playMediaConstrains;

}

- (NSDictionary *)optionalConstraints {
    if (!_optionalConstraints) {
        _optionalConstraints = [[NSDictionary alloc] initWithObjectsAndKeys:
                                kRTCMediaConstraintsValueTrue, @"DtlsSrtpKeyAgreement",
                                kRTCMediaConstraintsValueTrue, @"googCpuOveruseDetection",
                                nil];
    }
    return _optionalConstraints;
}

- (void)dealloc {
    [self.peerConnection close];
    self.peerConnection = nil;
}

@end

That completes the custom RTCVideoCapturer camera for WebRTC video.

Related posts

For setting up the ossrs server, see: https://blog.csdn.net/gloryFlow/article/details/132257196

For the iOS client that calls ossrs for audio and video calls, see: https://blog.csdn.net/gloryFlow/article/details/132262724

For the issue where high-resolution WebRTC calls show no picture, see: https://blog.csdn.net/gloryFlow/article/details/132240952

For changing the bitrate in the SDP, see: https://blog.csdn.net/gloryFlow/article/details/132263021

For beauty filters in video calls with GPUImage, see: https://blog.csdn.net/gloryFlow/article/details/132265842

For streaming a local video or an album video over RTC, see: https://blog.csdn.net/gloryFlow/article/details/132267068

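4. Summary

This post covered building a custom RTCVideoCapturer camera for WebRTC audio and video calls. The core of it is to obtain the CVPixelBufferRef captured by the camera, wrap the processed CVPixelBufferRef in an RTCVideoFrame, and pass that frame to WebRTC by calling the didCaptureVideoFrame method implemented by localVideoSource. There is a lot of material here and some descriptions may be imprecise; please bear with me.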
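Each RTCVideoFrame built from a sample buffer carries a timestamp in nanoseconds, which the listing derives by multiplying floating-point seconds by 1000000000. As a possible alternative, the sketch below does the same conversion with integer arithmetic only. It is plain C, which compiles unchanged inside an Objective-C file. RationalTime and rational_time_to_ns are hypothetical names introduced for illustration; the struct only mirrors the value/timescale pair that CMTime exposes, so the sketch builds without CoreMedia.

```c
#include <stdint.h>

/* Hypothetical stand-in for the value/timescale pair of a CMTime. */
typedef struct {
    int64_t value;      /* number of ticks */
    int32_t timescale;  /* ticks per second */
} RationalTime;

/* Convert ticks to nanoseconds without going through floating point.
 * Whole seconds and the sub-second remainder are scaled separately so
 * the intermediate product stays within int64_t for realistic inputs.
 * Returns 0 for an invalid (non-positive) timescale. */
static int64_t rational_time_to_ns(RationalTime t) {
    if (t.timescale <= 0) {
        return 0;
    }
    int64_t whole = t.value / t.timescale;
    int64_t remainder = t.value % t.timescale;
    return whole * 1000000000LL + (remainder * 1000000000LL) / t.timescale;
}
```

In the delegate method one could, under these assumptions, fill a RationalTime from the presentation timestamp's value and timescale fields and pass the result as timeStampNs. For example, 315000 ticks at a timescale of 90000 corresponds to 3.5 seconds, or 3500000000 nanoseconds.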

https://blog.csdn.net/gloryFlow/article/details/132308673

Study notes; keep making progress every day.