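This is the simplest, clearest …

artificial neural network written in C++, with forward propagation and back propagation … and an arbitrary number of nodes …

First-year university students, and even secondary-school students, can follow it.

It is aimed at programmers who have never studied calculus … and who want to write AI and deep-learning neural networks by following a worked example …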

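Copyright belongs to the author. For commercial reprints, contact the author for authorization; for non-commercial reprints, credit the source.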

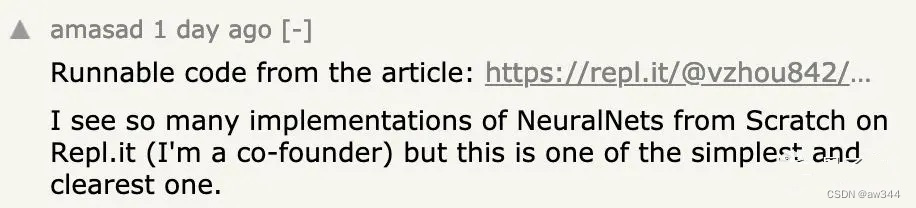

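"I have seen many neural network implementations online, but this one is the simplest and clearest."

That was the verdict of Amjad Masad, co-founder of the online coding site Repl.it, after reading an introductory neural network tutorial written by Along Asong, a Chinese developer from UMass.

Within a day of publication, the tutorial collected 574 upvotes on Hacker News. Programmers praised the code as well written, with consistent variable names that make it easy to follow at a glance.

Let's learn neural networks from scratch: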

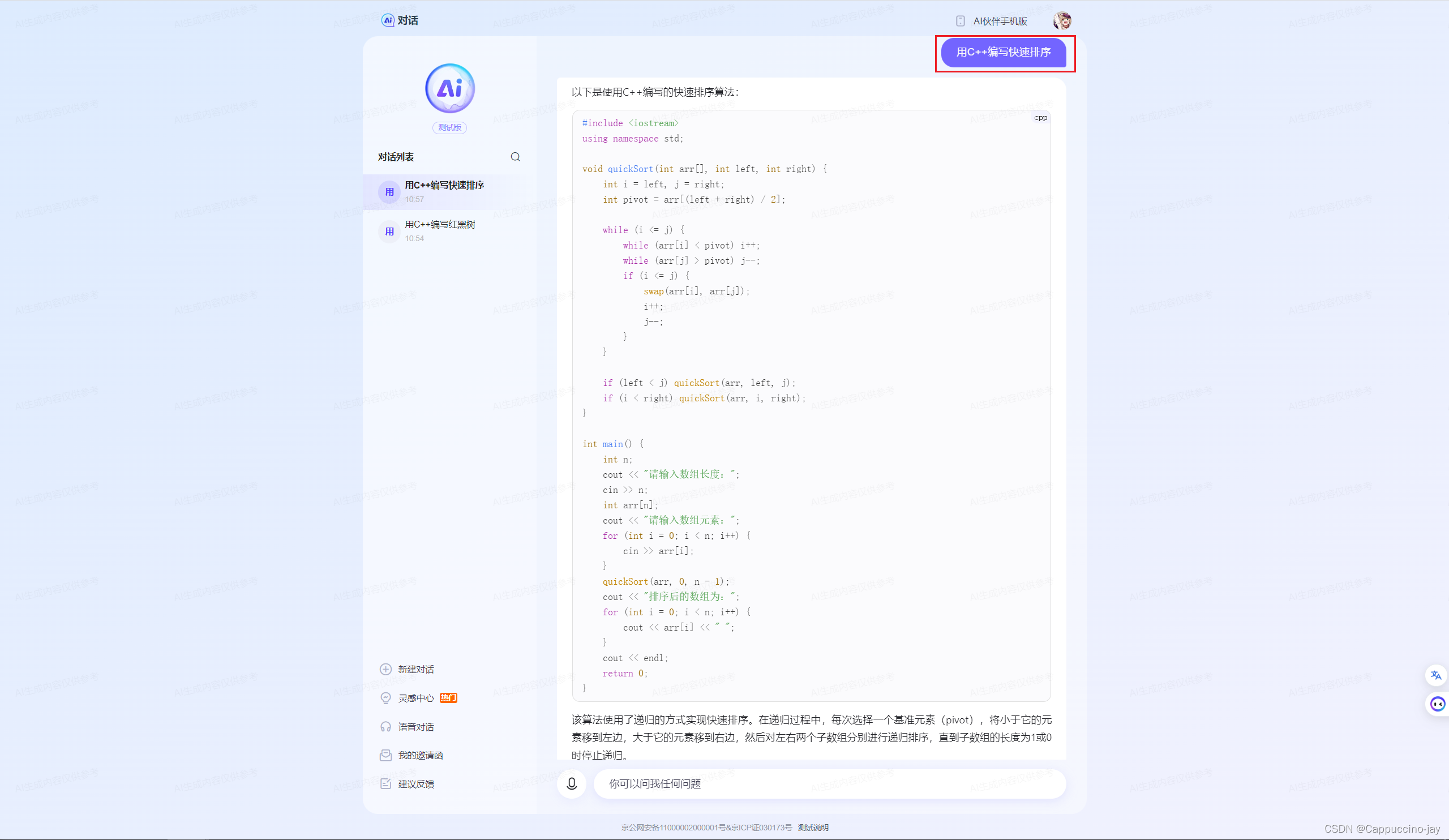

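Write a complete artificial neural network in C++ with 9 nodes in the input layer, one hidden layer of 12 nodes, and 5 nodes in the output layer, … including back propagation and gradient-descent updates of the weights and biases.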

- Structure of the neural network

- Forward propagation

- Back propagation

- Updating the weights and biases (gradient descent)
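The four items above can be written as equations. The notation is an assumption introduced here for illustration: $x_i$ is an input, $h_j$ a hidden activation, $y_k$ an output, $t_k$ a target, $\eta$ the learning rate, and $W^{(1)}, b^{(1)}, W^{(2)}, b^{(2)}$ correspond to `weights1`, `bias1`, `weights2`, `bias2` in the program.

Forward propagation with the sigmoid activation:

$$
\sigma(z) = \frac{1}{1 + e^{-z}}, \qquad \sigma'(z) = \sigma(z)\,\bigl(1 - \sigma(z)\bigr)
$$

$$
z^{(1)}_j = \sum_i x_i W^{(1)}_{ij} + b^{(1)}_j, \quad h_j = \sigma\bigl(z^{(1)}_j\bigr), \qquad
z^{(2)}_k = \sum_j h_j W^{(2)}_{jk} + b^{(2)}_k, \quad y_k = \sigma\bigl(z^{(2)}_k\bigr)
$$

Back propagation of the error:

$$
\delta^{(2)}_k = (t_k - y_k)\,\sigma'\bigl(z^{(2)}_k\bigr), \qquad
\delta^{(1)}_j = \Bigl(\sum_k W^{(2)}_{jk}\,\delta^{(2)}_k\Bigr)\,\sigma'\bigl(z^{(1)}_j\bigr)
$$

Gradient-descent update:

$$
W^{(2)}_{jk} \leftarrow W^{(2)}_{jk} + \eta\,\delta^{(2)}_k h_j, \quad
b^{(2)}_k \leftarrow b^{(2)}_k + \eta\,\delta^{(2)}_k, \quad
W^{(1)}_{ij} \leftarrow W^{(1)}_{ij} + \eta\,\delta^{(1)}_j x_i, \quad
b^{(1)}_j \leftarrow b^{(1)}_j + \eta\,\delta^{(1)}_j
$$

The plus signs appear because the error is defined as $t_k - y_k$; the updates are ordinary gradient descent on the squared-error loss $E = \tfrac{1}{2}\sum_k (t_k - y_k)^2$.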

Below is a basic implementation:

// c++人工神经网络反向传播梯度下降更新权重偏置230810a18.cpp : 此文件包含 "main" 函数。程序执行将在此处开始并结束。

#include <iostream>

#include <vector>

#include <cmath>

#include <ctime>

#include <cstdlib>

#include <string>

#include <sstream>

#include <iomanip> // 引入setprecisionint Ninpu9t = 9; //输入层Nodes数

int Nhidde12n = 12;//隐藏层Nodes数 4;// 11;

int nOutpu2t = 5;//输出层Nodes数 2;// 3;double Lost001 = 9.0;// 使用sigmoid作为激活函数

double sigmoid(double x) {return 1.0 / (1.0 + std::exp(-x));

}double sigmoid_derivative(double x) {double s = sigmoid(x);return s * (1 - s);

}class NeuralNetwork {

private:std::vector<std::vector<double>> weights1, weights2; // weightsstd::vector<double> bias1, bias2; // biasesdouble learning_rate;public:NeuralNetwork() : learning_rate(0.1) { //01) {srand(time(nullptr));// 初始化权重和偏置weights1.resize(Ninpu9t, std::vector<double>(Nhidde12n));weights2.resize(Nhidde12n, std::vector<double>(nOutpu2t));bias1.resize(Nhidde12n);bias2.resize(nOutpu2t);for (int i = 0; i < Ninpu9t; ++i)for (int j = 0; j < Nhidde12n; ++j)weights1[i][j] = (rand() % 2000 - 1000) / 1000.0; // [-1, 1]for (int i = 0; i < Nhidde12n; ++i) {//for1100ibias1[i] = (rand() % 2000 - 1000) / 1000.0; // [-1, 1]for (int j = 0; j < nOutpu2t; ++j)weights2[i][j] = (rand() % 2000 - 1000) / 1000.0; // [-1, 1]}//for1100ifor (int i = 0; i < nOutpu2t; ++i)bias2[i] = (rand() % 2000 - 1000) / 1000.0; // [-1, 1]}std::vector<double> forward(const std::vector<double>& input) {std::vector<double> hidden(Nhidde12n);std::vector<double> output(nOutpu2t);for (int i = 0; i < Nhidde12n; ++i) {//for110ihidden[i] = 0;for (int j = 0; j < Ninpu9t; ++j){hidden[i] += input[j] * weights1[j][i];}hidden[i] += bias1[i];hidden[i] = sigmoid(hidden[i]);}//for110ifor (int i = 0; i < nOutpu2t; ++i) {//for220ioutput[i] = 0;for (int j = 0; j < Nhidde12n; ++j){output[i] += hidden[j] * weights2[j][i];}output[i] += bias2[i];output[i] = sigmoid(output[i]);}//for220ireturn output;}void train(const std::vector<double>& input, const std::vector<double>& target) {// Forwardstd::vector<double> hidden(Nhidde12n);std::vector<double> output(nOutpu2t);std::vector<double> hidden_sum(Nhidde12n, 0);std::vector<double> output_sum(nOutpu2t, 0);for (int i = 0; i < Nhidde12n; ++i) {for (int j = 0; j < Ninpu9t; ++j){hidden_sum[i] += input[j] * weights1[j][i];}hidden_sum[i] += bias1[i];hidden[i] = sigmoid(hidden_sum[i]);}//for110ifor (int i = 0; i < nOutpu2t; ++i) {//for220ifor (int j = 0; j < Nhidde12n; ++j)output_sum[i] += hidden[j] * weights2[j][i]; //注意 output_sum[]output_sum[i] += bias2[i];output[i] = sigmoid(output_sum[i]); 
//激活函数正向传播}//for220i//反向传播Backpropagationstd::vector<double> output_errors(nOutpu2t);for (int i = 0; i < nOutpu2t; ++i) {//for2240ioutput_errors[i] = target[i] - output[i];//算损失综合总和:Lost001 = 0.0;Lost001 += fabs(output_errors[i]);}//for2240istd::vector<double> d_output(nOutpu2t);for (int i = 0; i < nOutpu2t; ++i)d_output[i] = output_errors[i] * sigmoid_derivative(output_sum[i]); //对output_sum[]做 激活函数的 导数 传播std::vector<double> hidden_errors(Nhidde12n, 0);for (int i = 0; i < Nhidde12n; ++i) {//for440ifor (int j = 0; j < nOutpu2t; ++j)hidden_errors[i] += weights2[i][j] * d_output[j];}//for440istd::vector<double> d_hidden(Nhidde12n);for (int i = 0; i < Nhidde12n; ++i)d_hidden[i] = hidden_errors[i] * sigmoid_derivative(hidden_sum[i]); //对 hidden_errors层 做激活函数的 导数 传播// Update weights and biasesfor (int i = 0; i < Nhidde12n; ++i) {//for66ifor (int j = 0; j < nOutpu2t; ++j)weights2[i][j] += learning_rate * d_output[j] * hidden[i]; //修改 隐藏层的 weights2}//for66ifor (int i = 0; i < nOutpu2t; ++i)bias2[i] += learning_rate * d_output[i];for (int i = 0; i < Ninpu9t; ++i) {//for990ifor (int j = 0; j < Nhidde12n; ++j)weights1[i][j] += learning_rate * d_hidden[j] * input[i]; //修改输入层的 weight1}//for990ifor (int i = 0; i < Nhidde12n; ++i)bias1[i] += learning_rate * d_hidden[i];}//void train(const std::vector<double>& input, const std::vector<double>& target

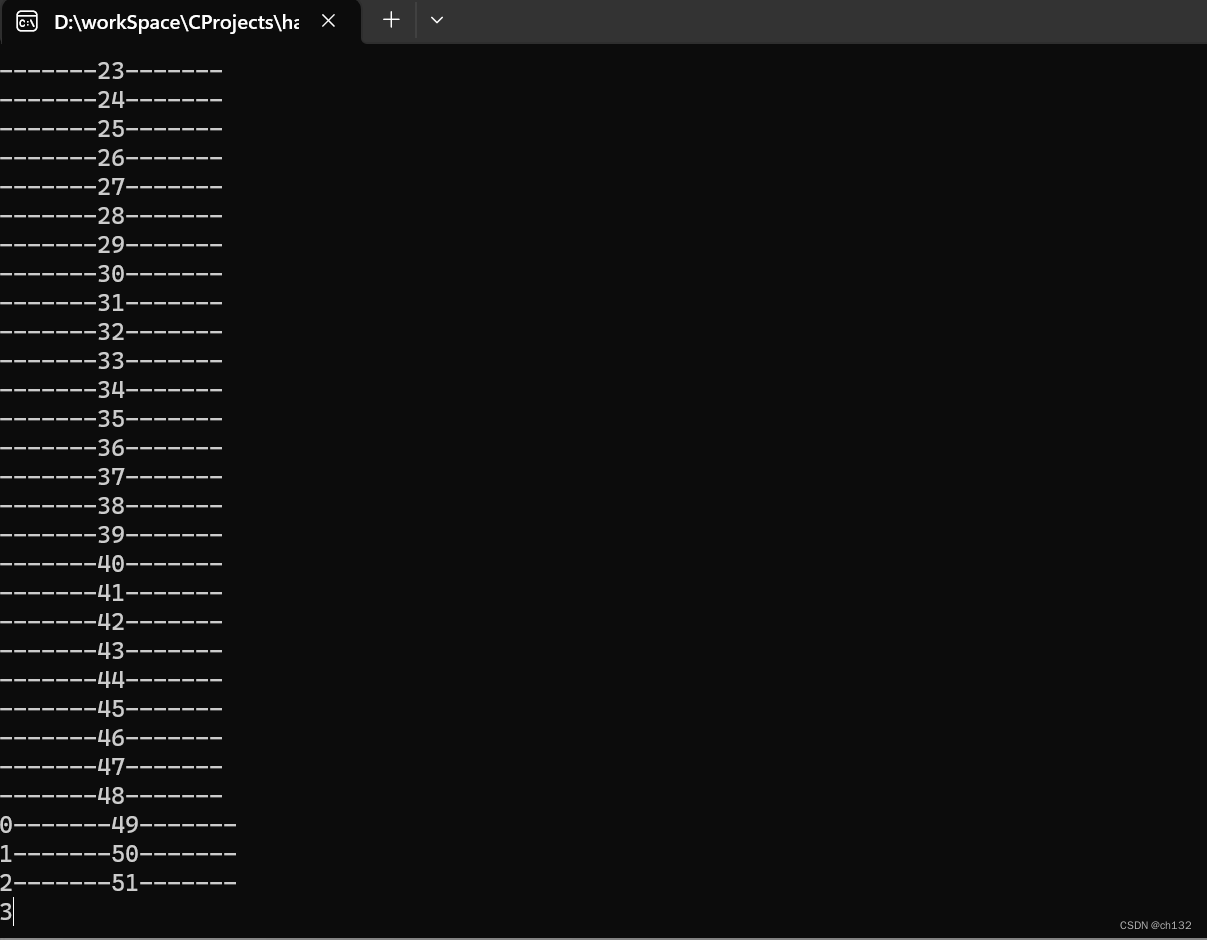

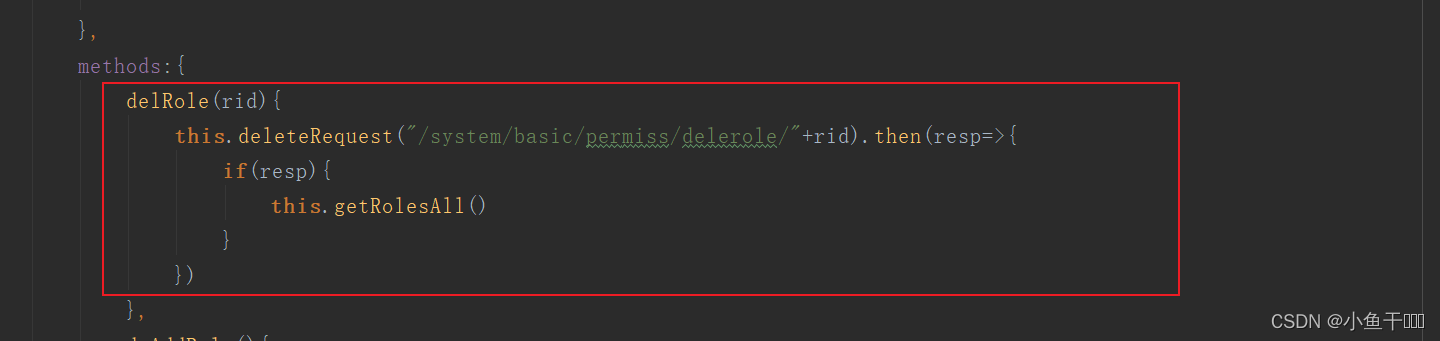

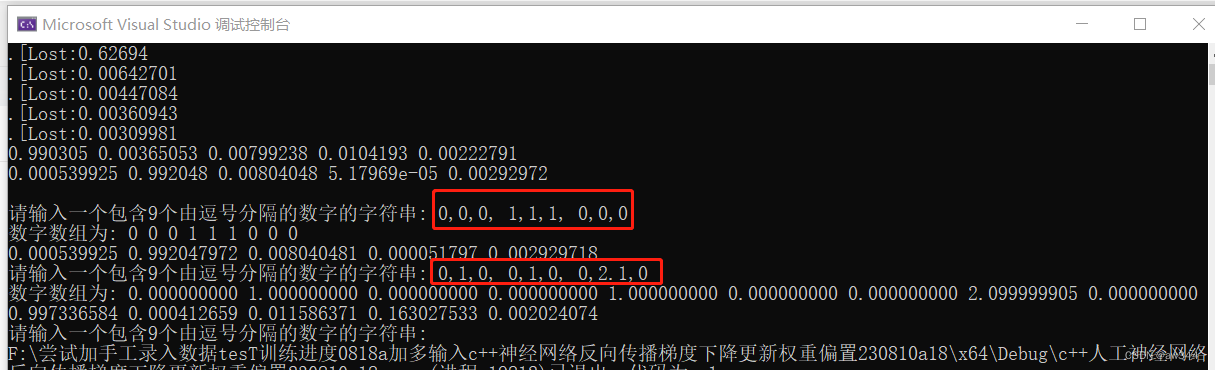

}; //class NeuralNetwork {int main() {NeuralNetwork nn;// Examplestd::vector<double> input[5];input[0] = { 0,1,0, 0,1,0, 0,1,0 }; //1“竖线”或 “1”字{ 1.0, 0.5, 0.25, 0.125 };input[1] = { 0,0,0, 1,1,1,0,0,0 }; //-“横线”或 “-”减号{ 1.0, 0.5, 0.25, 0.125 };input[2] = { 0,1,0, 1,1,1, 0,1,0 }; //+“+”加号{ 1.0, 0.5, 0.25, 0.125 };input[3] = { 0,1,0, 0,2, 0, 0,3, 0.12 }; // '1'或 '|'字型{ 1.0, 0.5, 0.25, 0.125 };input[4] = { 1,1,0, 9,0,9.8, 1,1,1 }; //“口”字型+{ 1.0, 0.5, 0.25, 0.125 };std::vector<double> target[5];target[0] = { 1.0, 0,0,0,0 };//1 , 0}; //0.0, 1.0, 0.5}; //{ 0.0, 1.0 };target[1] = { 0, 1.0 ,0,0,0};//- 91.0, 0};// , 0, 0}; //target[2] = { 0,0,1.0,0,0 };//+ 1.0, 0.5};target[3] = { 1.0 ,0,0, 0.5 ,0}; //1target[4] = { 0,0,0,0,1.0 }; //“口”// Trainingfor (int i = 0; i < 50000/*00 */; ++i) {//for220ifor (int jj = 0; jj < 4; ++jj) {nn.train(input[jj], target[jj]);}//for2230jjif (0 == i % 10000) {//if2250printf(".");std::cout << "[Lost:" << Lost001 << std::endl;Lost001 = 0;}//if2250

}//for220i// Testinput[0] = { 0,1,0, 0,1,0, 0,1,0 }; //1{ 1.0, 0.5, 0.25, 0.125 };std::vector<double> output = nn.forward(input[0]);for (auto& val : output)std::cout << val << " ";std::cout << std::endl;input[1] = { 0,0,0, 1,1,1, 0,0,0 };//std::vector<double> output = nn.forward(input[1]);for (auto& val : output)std::cout << val << " ";std::cout << std::endl;//-----------------------------------------------std::string S;// int D[9];do {std::cout << std::endl << "请输入一个包含9个由逗号分隔的数字的字符串: ";std::getline(std::cin, S);std::stringstream ss(S);for (int i = 0; i < 9; ++i) {std::string temp;std::getline(ss, temp, ',');input[1][i] = (double)std::stof(temp); // 将字符串转化为整数}std::cout << "数字数组为: ";for (int i = 0; i < 9; ++i) {std::cout << input[1][i] << " ";}output = nn.forward(input[1]);std::cout << std::endl;for (auto& val : output)std::cout <<std::fixed<< std::setprecision(9)<< val << " ";} while (1 == 1);//====================================================return 0;

}//main运行结果:

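For the complete neural network program and how to use it, send me a private message or an email …

I have long worked in artificial intelligence, neural networks, and embedded C/C++ development and training … inquiries are welcome!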

41313989

41313989#QQ.com