前言:

本篇文章主要作为一个爬虫项目的小练习,来给大家进行一下爬虫的大致分析过程以及来帮助大家在以后的爬虫编写中有一个更加清晰的认识。

一:环境配置

Python版本:3.7

IDE:PyCharm

所需库:requests,bs4,xlwt

二:网页分析

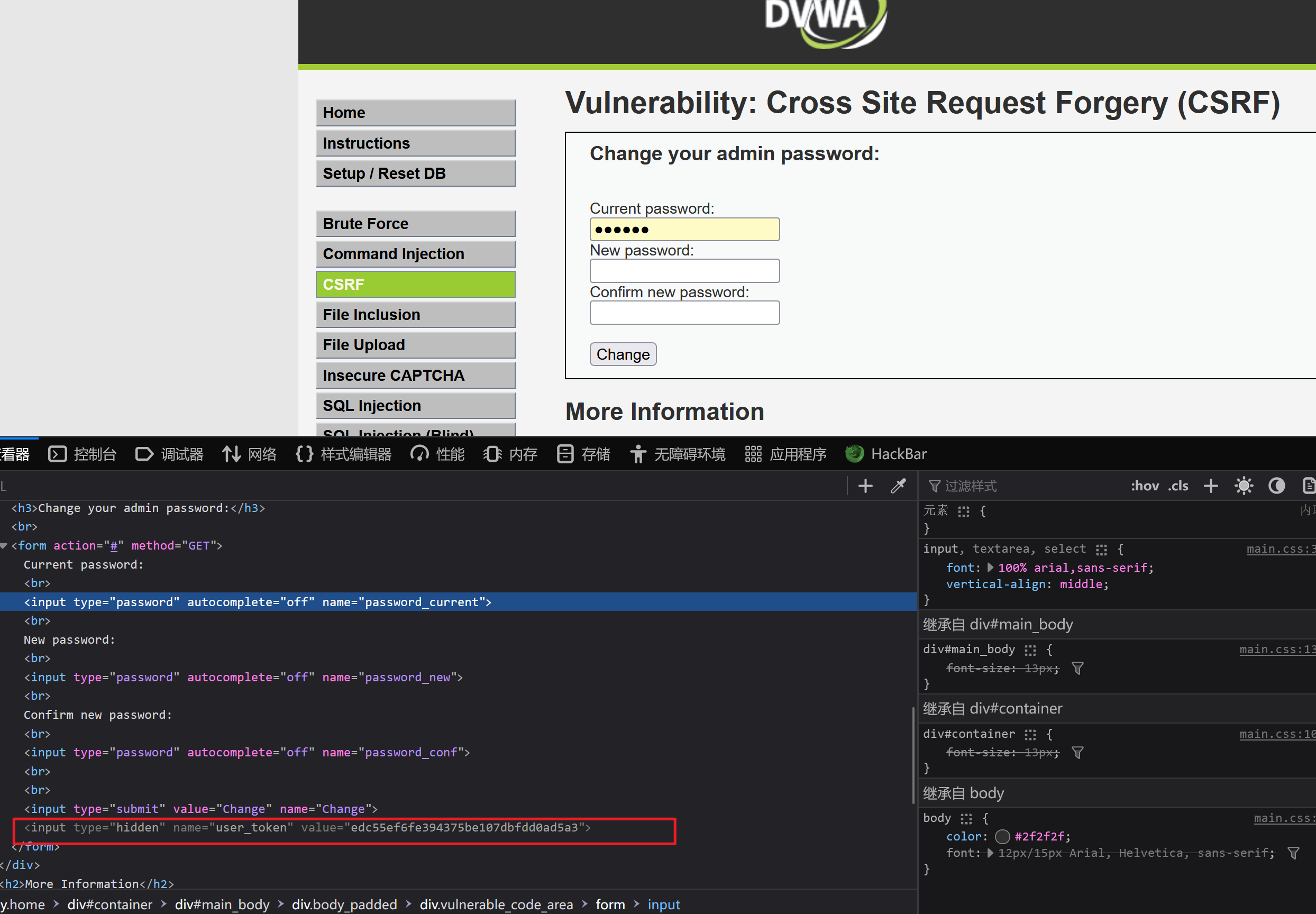

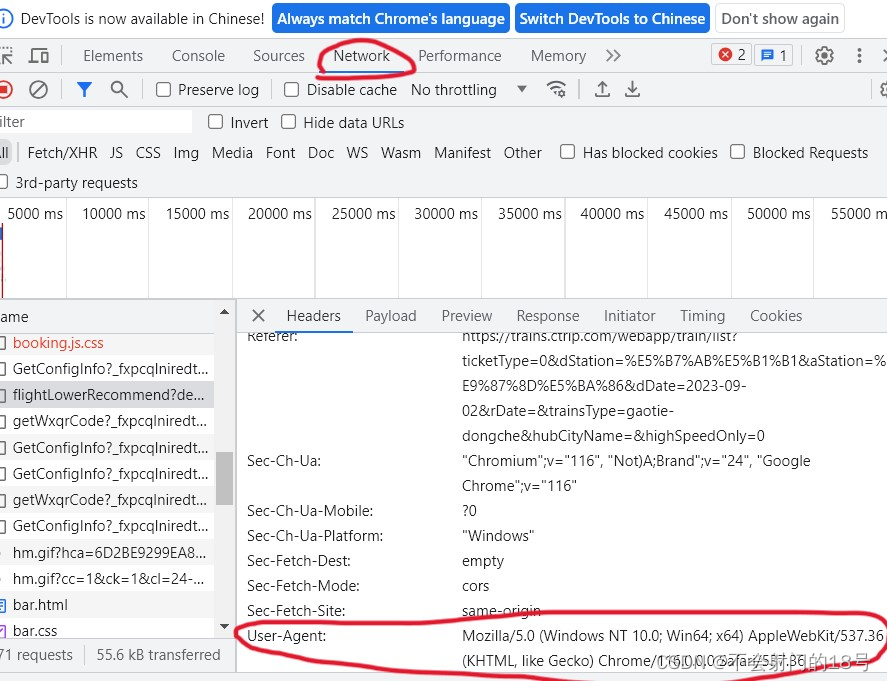

1我们需要去找到user-Agent

三:编写代码

1:导入所需库

import requests

from bs4 import BeautifulSoup

import xlwt2:编写请求头与参数

url = 'https://trains.ctrip.com/TrainBooking/Search.aspx'

headers={'User-Agent':'Mozilla/5.0 (Windows NT 10.0; Win64; x64) AppleWebKit/537.36 (KHTML, like Gecko) Chrome/116.0.0.0 Safari/537.36','Cookie':'Union=OUID=index&AllianceID=4897&SID=155952&SourceID=&createtime=1693561627&Expires=1694166426834; MKT_OrderClick=ASID=4897155952&AID=4897&CSID=155952&OUID=index&CT=1693561626835&CURL=https%3A%2F%2Fwww.ctrip.com%2F%3Fsid%3D155952%26allianceid%3D4897%26ouid%3Dindex&VAL={}; _ubtstatus=%7B%22vid%22%3A%221693561626984.ex3rp%22%2C%22sid%22%3A1%2C%22pvid%22%3A1%2C%22pid%22%3A102001%7D; MKT_CKID=1693561627205.kumds.y2nu; MKT_CKID_LMT=1693561627205; GUID=09031035213146004963; _jzqco=%7C%7C%7C%7C1693561627595%7C1.1256646287.1693561627210.1693561627210.1693561627210.1693561627210.1693561627210.0.0.0.1.1; _RF1=183.230.199.69; _RSG=..qaukvM.m2ykJjUVrQ3T8; _RDG=28437eee4e4c56259b173f8be0c752f59b; _RGUID=2c3e5b9b-b893-4fbe-8743-6b57deb53bbc; MKT_Pagesource=PC; _bfaStatusPVSend=1; _bfi=p1%3D102001%26p2%3D0%26v1%3D1%26v2%3D0; _bfaStatus=success; nfes_isSupportWebP=1; nfes_isSupportWebP=1; Hm_lvt_576acc2e13e286aa1847d8280cd967a5=1693561632; UBT_VID=1693561626984.ex3rp; __zpspc=9.1.1693561627.1693561631.3%232%7Cwww.baidu.com%7C%7C%7C%25E6%2590%25BA%25E7%25A8%258B%7C%23; _resDomain=https%3A%2F%2Fbd-s.tripcdn.cn; Hm_lpvt_576acc2e13e286aa1847d8280cd967a5=1693580464; _bfa=1.1693561626984.ex3rp.1.1693580463154.1693580623580.1.6.10650065554; _pd=%7B%22_o%22%3A30%2C%22s%22%3A154%2C%22_s%22%3A1%7D'

}

params={'from':'wushan','to':'chongqing','dayday':'false','fronCn':'巫山','toCn':'重庆','date':'2023-09-02',

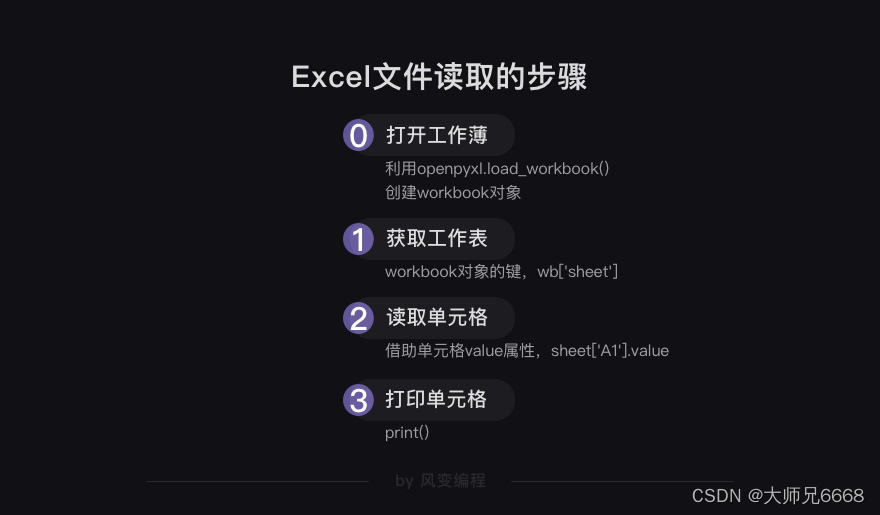

}3:发送请求并编写表头,把数据写入excel文件

response=requests.get(url=url,headers=headers,params=params)

soup=BeautifulSoup(response.text,'html.parser')

ticket_list=soup.select('#div_Result > .list_item')workbook =xlwt.Workbook(encoding='utf-8')

worksheet=workbook.add_sheet('Ticket Info',cell_overwrite_ok=True)worksheet.write(0,0,label='车次')

worksheet.write(0,1,label='出发时间')

worksheet.write(0,2,label='到达时间')

worksheet.write(0,3,label='历时')

worksheet.write(0,4,label='余票')row=1

for ticket in ticket_list:train_no=ticket.select('.num>a')[0].text.strip()start_time=ticket.select('.cds > .start_time')[0].text.strip()end_time = ticket.select('.cds > .end_time')[0].text.strip()duration = ticket.select('.cds > .time')[0].text.strip()remarks = ticket.select('.cds > .note')[0].text.strip()ticket_url = 'https://trains.ctrip.com/TrainBooking/TrainQuery.aspx'ticket_params={'from':'wushan','to':'chongqing','dayday':'false','date':'2023-09-02','trainNo':train_no,}ticket_response=requests.get(ticket_url,headers=headers,params=ticket_params)ticket_soup=BeautifulSoup(ticket_response.text,'html.parser')ticket_remaining=ticket_soup.select('.new_situation > p >span')[0].text.strip()worksheet(row,0,label=train_no)worksheet(row, 1,label=start_time)worksheet(row, 2,label=end_time)worksheet(row, 3,label=duration)worksheet(row, 4,label=ticket_remaining)row +=1print(train_no,start_time,end_time,duration,remarks,ticket_remaining)

workbook.save('ticket_info.xls')以上便是基本的源码,由于12306官网具有严格的反爬机制,所以不建议对12306官网进行爬取,如果未经授权将会承担相关责任,所以请选择其他软件进行示范,不过其他软件也会具有一些反爬机制,会导致爬取失败。