树叶分类竞赛(Baseline)-kaggle的GPU使用

文章目录

- 树叶分类竞赛(Baseline)-kaggle的GPU使用

- 竞赛的步骤

- 代码实现

- 创建自定义dataset

- 定义data_loader

- 模型定义

- 超参数

- 训练模型

- 预测和保存结果

- kaggle使用

竞赛的步骤

本文来自于Neko Kiku提供的Baseline,感谢大佬提供代码,来自于https://www.kaggle.com/nekokiku/simple-resnet-baseline

总结一下数据处理步骤:

- 先观察数据,发现数据有三类,测试和训练两类csv文件,以及image文件。

csv文件处理:读取训练文件,观察种类个数,同时将种类和标签相绑定

image文件:可以利用tensorboard进行图片的观察,也可以用image图片展示

- 创建自定义的dataset,自定义不同的类型;接着创建DataLoader定义一系列参数

- 模型定义,利用选定好的模型restnet34,记得最后接一个全连接层将数据压缩至种类个数

- 超参数可以有效提高辨别概率,自己电脑GPU太拉跨,选定好参数再进行跑模型,提高模型效率

- 训练模型

- 预测和保存结果

学习到的优化方法:

- 数据增强: 在数据加载阶段,可以使用数据增强技术来增加数据的多样性,帮助模型更好地泛化。可以使用

torchvision.transforms来实现。 - 调整超参数: 尝试不同的超参数,如学习率、batch size、weight decay 等,找到最佳组合。

代码实现

- 文件处理

# 首先导入包

import torch

import torch.nn as nn

import pandas as pd

import numpy as np

from torch.utils.data import Dataset, DataLoader

from torchvision import transforms

from PIL import Image

import os

import matplotlib.pyplot as plt

import torchvision.models as models

# This is for the progress bar.

from tqdm import tqdm

import seaborn as sns

- 观察给出的几个文件类型以及内容,得出标签类型以及频度

# 看看label文件长啥样,先了解训练集,再看对应的标签类型

labels_dataframe = pd.read_csv('../input/classify-leaves/train.csv')

labels_dataframe.head(5)

# pd.describe()函数生成描述性统计数据,统计数据集的集中趋势,分散和行列的分布情况,不包括 NaN值。

labels_dataframe.describe()

- 坐标展示数据,可视化种类的分布情况

#function to show bar lengthdef barw(ax): for p in ax.patches:val = p.get_width() #height of the barx = p.get_x()+ p.get_width() # x- position y = p.get_y() + p.get_height()/2 #y-positionax.annotate(round(val,2),(x,y))#finding top leaves

plt.figure(figsize = (15,30))

ax0=sns.countplot(y=labels_dataframe['label'],order=labels_dataframe['label'].value_counts().index)

barw(ax0)

plt.show()

- 将label和数字相对应,建立字典

# 把label文件排个序

leaves_labels = sorted(list(set(labels_dataframe['label'])))

n_classes = len(leaves_labels)

print(n_classes)

leaves_labels[:10]

# 把label转成对应的数字

class_to_num = dict(zip(leaves_labels, range(n_classes)))

class_to_num

# 再转换回来,方便最后预测的时候使用

num_to_class = {v : k for k, v in class_to_num.items()}

'abies_concolor': 0,'abies_nordmanniana': 1,'acer_campestre': 2,'acer_ginnala': 3,'acer_griseum': 4,'acer_negundo': 5,'acer_palmatum': 6,'acer_pensylvanicum': 7,'acer_platanoides': 8,'acer_pseudoplatanus': 9,'acer_rubrum': 10,

创建自定义dataset

dataset分为init,getitem,len三部分

init函数:定义照片尺寸和文件路径,根据不同模式对数据进行不同的处理。

getitem函数:获取文件的函数,同样对于不同模式的请求对数据有不同的处理。学习train中数据增强的手段

len函数:返回真实长度

# 继承pytorch的dataset,创建自己的

class LeavesData(Dataset):def __init__(self, csv_path, file_path, mode='train', valid_ratio=0.2, resize_height=256, resize_width=256):"""Args:csv_path (string): csv 文件路径img_path (string): 图像文件所在路径mode (string): 训练模式还是测试模式valid_ratio (float): 验证集比例"""# 需要调整后的照片尺寸,我这里每张图片的大小尺寸不一致#self.resize_height = resize_heightself.resize_width = resize_widthself.file_path = file_pathself.mode = mode# 读取 csv 文件# 利用pandas读取csv文件self.data_info = pd.read_csv(csv_path, header=None) #header=None是去掉表头部分# 计算 lengthself.data_len = len(self.data_info.index) - 1self.train_len = int(self.data_len * (1 - valid_ratio))if mode == 'train':# 第一列包含图像文件的名称self.train_image = np.asarray(self.data_info.iloc[1:self.train_len, 0]) #self.data_info.iloc[1:,0]表示读取第一列,从第二行开始到train_len# 第二列是图像的 labelself.train_label = np.asarray(self.data_info.iloc[1:self.train_len, 1])self.image_arr = self.train_image self.label_arr = self.train_labelelif mode == 'valid':self.valid_image = np.asarray(self.data_info.iloc[self.train_len:, 0]) self.valid_label = np.asarray(self.data_info.iloc[self.train_len:, 1])self.image_arr = self.valid_imageself.label_arr = self.valid_labelelif mode == 'test':self.test_image = np.asarray(self.data_info.iloc[1:, 0])self.image_arr = self.test_imageself.real_len = len(self.image_arr)print('Finished reading the {} set of Leaves Dataset ({} samples found)'.format(mode, self.real_len))def __getitem__(self, index):# 从 image_arr中得到索引对应的文件名single_image_name = self.image_arr[index]# 读取图像文件img_as_img = Image.open(self.file_path + single_image_name)#如果需要将RGB三通道的图片转换成灰度图片可参考下面两行

# if img_as_img.mode != 'L':

# img_as_img = img_as_img.convert('L')#设置好需要转换的变量,还可以包括一系列的nomarlize等等操作if self.mode == 'train':transform = transforms.Compose([transforms.Resize((224, 224)),transforms.RandomHorizontalFlip(p=0.5), #随机水平翻转 选择一个概率transforms.ToTensor()])else:# valid和test不做数据增强transform = transforms.Compose([transforms.Resize((224, 224)),transforms.ToTensor()])img_as_img = transform(img_as_img)if self.mode == 'test':return img_as_imgelse:# 得到图像的 string labellabel = self.label_arr[index]# number labelnumber_label = class_to_num[label]return img_as_img, number_label #返回每一个index对应的图片数据和对应的labeldef __len__(self):return self.real_len

# 设置文件路径,读取文件

train_path = '../input/classify-leaves/train.csv'

test_path = '../input/classify-leaves/test.csv'

# csv文件中已经images的路径了,因此这里只到上一级目录

img_path = '../input/classify-leaves/'train_dataset = LeavesData(train_path, img_path, mode='train')

val_dataset = LeavesData(train_path, img_path, mode='valid')

test_dataset = LeavesData(test_path, img_path, mode='test')

print(train_dataset)

print(val_dataset)

print(test_dataset)

定义data_loader

# 定义data loader,如果报错的话,改变num_workers为0

train_loader = torch.utils.data.DataLoader(dataset=train_dataset,batch_size=8, shuffle=False,num_workers=5)val_loader = torch.utils.data.DataLoader(dataset=val_dataset,batch_size=8, shuffle=False,num_workers=5)

test_loader = torch.utils.data.DataLoader(dataset=test_dataset,batch_size=8, shuffle=False,num_workers=5)

# 给大家展示一下数据长啥样

def im_convert(tensor):""" 展示数据"""image = tensor.to("cpu").clone().detach()image = image.numpy().squeeze()image = image.transpose(1,2,0)image = image.clip(0, 1)return imagefig=plt.figure(figsize=(20, 12))

columns = 4

rows = 2dataiter = iter(val_loader)

inputs, classes = dataiter.next()for idx in range (columns*rows):ax = fig.add_subplot(rows, columns, idx+1, xticks=[], yticks=[])ax.set_title(num_to_class[int(classes[idx])])plt.imshow(im_convert(inputs[idx]))

plt.show()

- 可以自己尝试一下用tensorboard展示一下图片,我贴出我的代码

from torch.utils.tensorboard import SummaryWriter

from PIL import Image

import numpy as np

import os# 注意这个图片的路径和之前的路径不同

image_path = './dataset/images'

writer = SummaryWriter("logs")

image_filenames = os.listdir(image_path)

batch_size = 10for i in range(0, int(len(image_filenames)/1000), batch_size):batch_images = image_filenames[i:i + batch_size]for j, img_filename in enumerate(batch_images):img_concise = os.path.join(image_path, img_filename)img = Image.open(img_concise)img_array = np.array(img)# writer.add_image(f"test/batch_{i // batch_size}/img_{j}", img_array, j, dataformats='HWC')writer.add_image(f"test_batch{i}", img_array, j, dataformats='HWC')writer.close()

# 观察是否在GPU上运行

def get_device():return 'cuda' if torch.cuda.is_available() else 'cpu'device = get_device()

print(device)

模型定义

在查阅下了解到利用了迁移学习,通过不改变模型前面一些层的参数可以加快训练速度,也称作冻结层,我在下面贴上冻结层的目的;模型利用上节课学到的resnet模型,模型全封装好了直接调用,只需要在最后一层添加一个全连接层,使得最后的输出结果为模型的类型即可。

- 减少训练时间:通过不更新某些层的参数,可以减少计算量,从而加快训练速度。

- 防止过拟合:冻结一些层的参数可以避免模型在小数据集上过拟合,特别是在迁移学习中,预训练的层通常已经学到了良好的特征。

# 是否要冻住模型的前面一些层

def set_parameter_requires_grad(model, feature_extracting):if feature_extracting:model = modelfor param in model.parameters():param.requires_grad = False

# resnet34模型

def res_model(num_classes, feature_extract = False, use_pretrained=True):model_ft = models.resnet34(pretrained=use_pretrained)set_parameter_requires_grad(model_ft, feature_extract)num_ftrs = model_ft.fc.in_featuresmodel_ft.fc = nn.Sequential(nn.Linear(num_ftrs, num_classes))return model_ft

超参数

# 超参数

learning_rate = 3e-4

weight_decay = 1e-3

num_epoch = 50

model_path = './pre_res_model.ckpt'

训练模型

- 训练和验证分类模型的模版,需要很大时间去训练,可以利用kaggle的GPU来跑模型

# Initialize a model, and put it on the device specified.

model = res_model(176) # 之前观察到树叶的类别有176个

model = model.to(device)

model.device = device

# For the classification task, we use cross-entropy as the measurement of performance.

criterion = nn.CrossEntropyLoss()# Initialize optimizer, you may fine-tune some hyperparameters such as learning rate on your own.

optimizer = torch.optim.Adam(model.parameters(), lr = learning_rate, weight_decay=weight_decay)# The number of training epochs.

n_epochs = num_epochbest_acc = 0.0

for epoch in range(n_epochs):# ---------- Training ----------# Make sure the model is in train mode before training.model.train() # These are used to record information in training.train_loss = []train_accs = []# Iterate the training set by batches.for batch in tqdm(train_loader):# A batch consists of image data and corresponding labels.imgs, labels = batchimgs = imgs.to(device)labels = labels.to(device)# Forward the data. (Make sure data and model are on the same device.)logits = model(imgs)# Calculate the cross-entropy loss.# We don't need to apply softmax before computing cross-entropy as it is done automatically.loss = criterion(logits, labels)# Gradients stored in the parameters in the previous step should be cleared out first.optimizer.zero_grad()# Compute the gradients for parameters.loss.backward()# Update the parameters with computed gradients.optimizer.step()# Compute the accuracy for current batch.acc = (logits.argmax(dim=-1) == labels).float().mean()# Record the loss and accuracy.train_loss.append(loss.item())train_accs.append(acc)# The average loss and accuracy of the training set is the average of the recorded values.train_loss = sum(train_loss) / len(train_loss)train_acc = sum(train_accs) / len(train_accs)# Print the information.print(f"[ Train | {epoch + 1:03d}/{n_epochs:03d} ] loss = {train_loss:.5f}, acc = {train_acc:.5f}")# ---------- Validation ----------# Make sure the model is in eval mode so that some modules like dropout are disabled and work normally.model.eval()# These are used to record information in validation.valid_loss = []valid_accs = []# Iterate the validation set by batches.for batch in tqdm(val_loader):imgs, labels = batch# We don't need gradient in validation.# Using torch.no_grad() accelerates the forward process.with torch.no_grad():logits = model(imgs.to(device))# We can still compute the loss (but not the gradient).loss = criterion(logits, labels.to(device))# Compute the accuracy for current batch.acc = (logits.argmax(dim=-1) == labels.to(device)).float().mean()# Record the loss and accuracy.valid_loss.append(loss.item())valid_accs.append(acc)# The average loss and accuracy for entire validation set is the average of the recorded values.valid_loss = sum(valid_loss) / len(valid_loss)valid_acc = sum(valid_accs) / len(valid_accs)# Print the information.print(f"[ Valid | {epoch + 1:03d}/{n_epochs:03d} ] loss = {valid_loss:.5f}, acc = {valid_acc:.5f}")# if the model improves, save a checkpoint at this epochif valid_acc > best_acc:best_acc = valid_acctorch.save(model.state_dict(), model_path)print('saving model with acc {:.3f}'.format(best_acc))

预测和保存结果

saveFileName = './submission.csv'

## predict

model = res_model(176)# create model and load weights from checkpoint

model = model.to(device)

model.load_state_dict(torch.load(model_path))# Make sure the model is in eval mode.

# Some modules like Dropout or BatchNorm affect if the model is in training mode.

model.eval()# Initialize a list to store the predictions.

predictions = []

# Iterate the testing set by batches.

for batch in tqdm(test_loader):imgs = batchwith torch.no_grad():logits = model(imgs.to(device))# Take the class with greatest logit as prediction and record it.predictions.extend(logits.argmax(dim=-1).cpu().numpy().tolist())preds = []

for i in predictions:preds.append(num_to_class[i])test_data = pd.read_csv(test_path)

test_data['label'] = pd.Series(preds)

submission = pd.concat([test_data['image'], test_data['label']], axis=1)

submission.to_csv(saveFileName, index=False)

print("Done!!!!!!!!!!!!!!!!!!!!!!!!!!!")

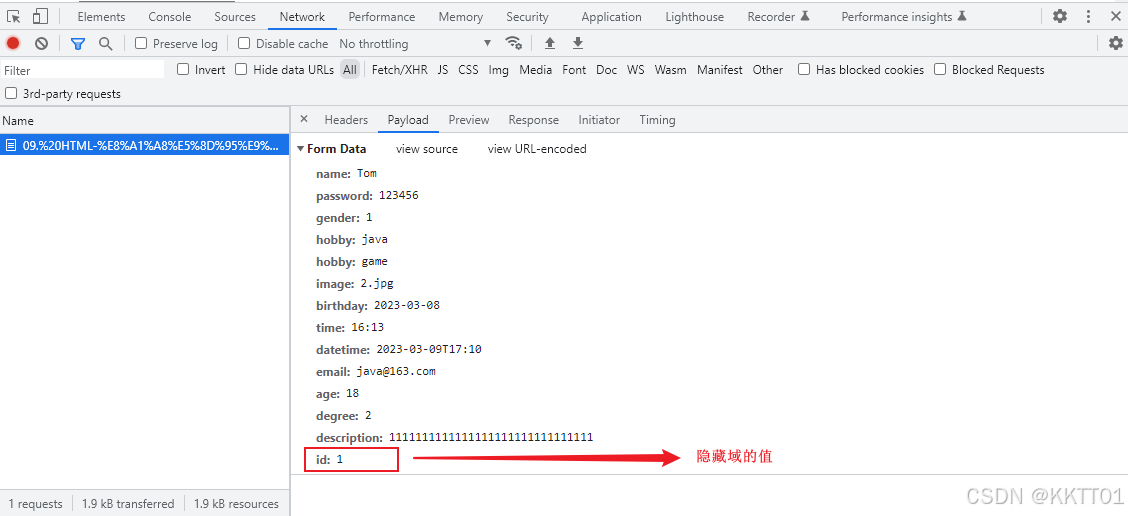

kaggle使用

- 创建kaggle账户

- 创建一个Dataset,将树叶信息导入/还可以通过kaggle自身的数据集进行数据的导入

- 创建一个Notebook

- 导入的文件放在了/kaggle/input,将导入的文件放在根目录下使得代码中的导入文件路径不会变

cp -r /文件 命名

cp -r /kaggle/input/leaf-dataset dataset

- 打开右侧边栏,将其修改为GPUP100,每周有30个小时的运行时间

- 运行代码,发现输出的结果都在根目录下,将输出的结果导出到/kaggle/Working

cp -r submission.csv /kaggle/working 导出输出文件即可