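8.1 Loss functions

① A loss function measures the gap between the network's actual output and the target.

② It also gives us a basis for updating the output: through backpropagation, the loss yields the gradients used to adjust the network.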

8.2 L1Loss

① The mathematical formula for L1Loss is shown in the figure below, followed by an example.

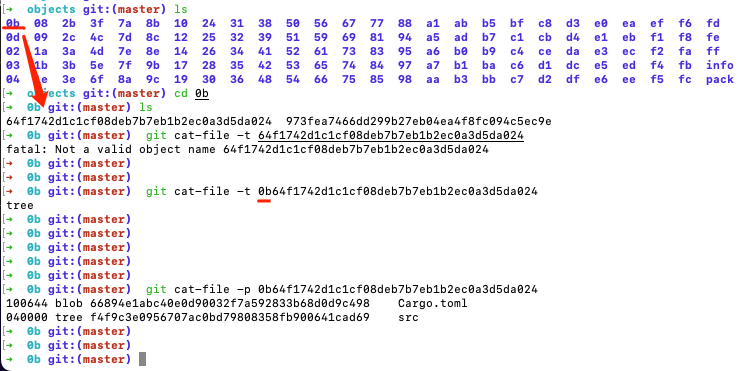

import torch

from torch.nn import L1Loss

inputs = torch.tensor([1,2,3],dtype=torch.float32)

targets = torch.tensor([1,2,5],dtype=torch.float32)

inputs = torch.reshape(inputs,(1,1,1,3))

targets = torch.reshape(targets,(1,1,1,3))

loss = L1Loss() # reduction defaults to 'mean'

result = loss(inputs,targets)

print(result)

Result:

tensor(0.6667)

import torch

from torch.nn import L1Loss

inputs = torch.tensor([1,2,3],dtype=torch.float32)

targets = torch.tensor([1,2,5],dtype=torch.float32)

inputs = torch.reshape(inputs,(1,1,1,3))

targets = torch.reshape(targets,(1,1,1,3))

loss = L1Loss(reduction='sum') # switch to 'sum': take the three absolute differences, then add them up

result = loss(inputs,targets)

print(result)

Result:

tensor(2.)
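The two results above can be reproduced by hand. The following is a minimal sketch, assuming the standard definition of L1 loss as the element-wise absolute difference followed by a mean or a sum; the variable names `abs_diff`, `manual_mean`, and `manual_sum` are introduced here for illustration only.

```python
import torch
from torch.nn import L1Loss

inputs = torch.tensor([1.0, 2.0, 3.0])
targets = torch.tensor([1.0, 2.0, 5.0])

# Element-wise |x_i - y_i| gives [0., 0., 2.]
abs_diff = (inputs - targets).abs()

manual_mean = abs_diff.mean()  # (0 + 0 + 2) / 3
manual_sum = abs_diff.sum()    # 0 + 0 + 2

# The hand-computed values should match the built-in reductions.
assert torch.isclose(manual_mean, L1Loss()(inputs, targets))
assert torch.isclose(manual_sum, L1Loss(reduction="sum")(inputs, targets))
print(manual_mean, manual_sum)
```

The reshape to (1,1,1,3) in the examples above does not change these values, because the reduction runs over all elements regardless of shape.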

8.3 MSELoss

① The mathematical formula for the MSE (mean squared error) loss is shown in the figure below.

import torch

from torch.nn import L1Loss

from torch import nn

inputs = torch.tensor([1,2,3],dtype=torch.float32)

targets = torch.tensor([1,2,5],dtype=torch.float32)

inputs = torch.reshape(inputs,(1,1,1,3))

targets = torch.reshape(targets,(1,1,1,3))

loss_mse = nn.MSELoss()

result_mse = loss_mse(inputs,targets)

print(result_mse)

Result:

tensor(1.3333)

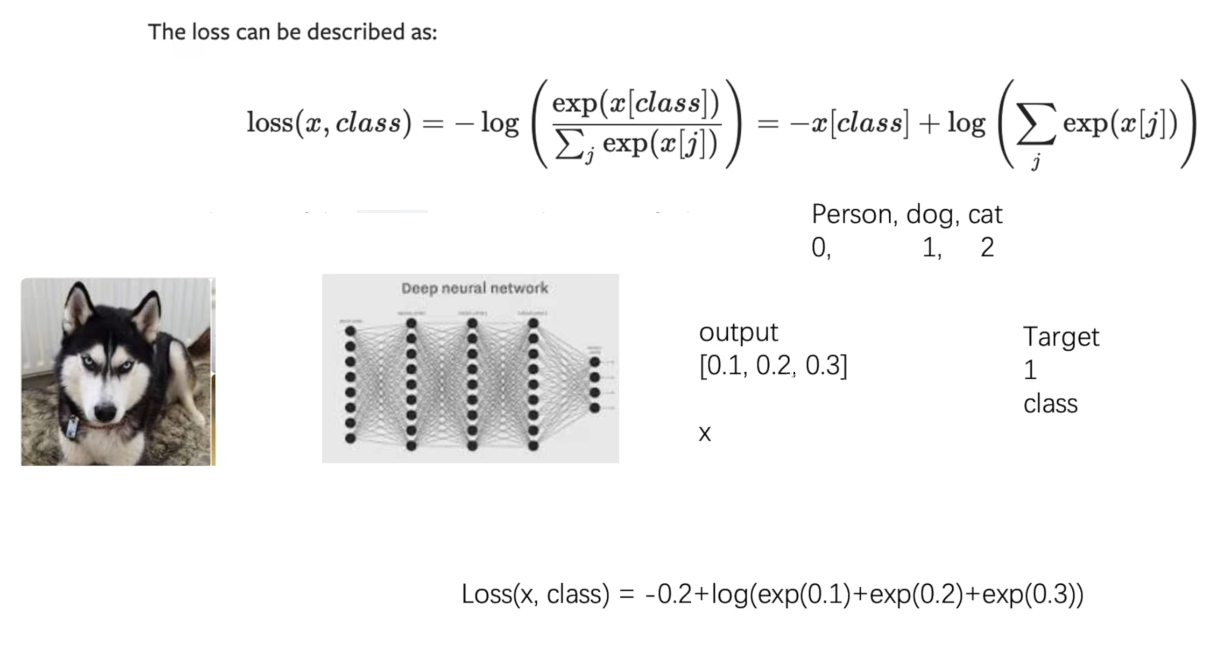
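As a cross-check on the value 1.3333, the same number can be computed by hand. This is a small sketch that assumes the usual definition of MSE (the mean of the squared element-wise differences); `squared_diff` and `manual_mse` are illustrative names, not part of the original example.

```python
import torch
from torch import nn

inputs = torch.tensor([1.0, 2.0, 3.0])
targets = torch.tensor([1.0, 2.0, 5.0])

# Element-wise (x_i - y_i)^2 gives [0., 0., 4.]
squared_diff = (inputs - targets) ** 2
manual_mse = squared_diff.mean()  # (0 + 0 + 4) / 3

builtin_mse = nn.MSELoss()(inputs, targets)
assert torch.isclose(manual_mse, builtin_mse)
print(manual_mse)
```

On the same data, L1Loss gave 0.6667: squaring makes the single error of 2 count for 4, so MSE penalizes large errors more heavily than L1.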

8.4 Cross-entropy loss

① The mathematical formula for the cross-entropy loss is shown in the figure below.

import torch

from torch.nn import L1Loss

from torch import nn

x = torch.tensor([0.1,0.2,0.3])

y = torch.tensor([1])

x = torch.reshape(x,(1,3)) # batch_size of 1, three classes

loss_cross = nn.CrossEntropyLoss()

result_cross = loss_cross(x,y)

print(result_cross)

Result:

tensor(1.1019)

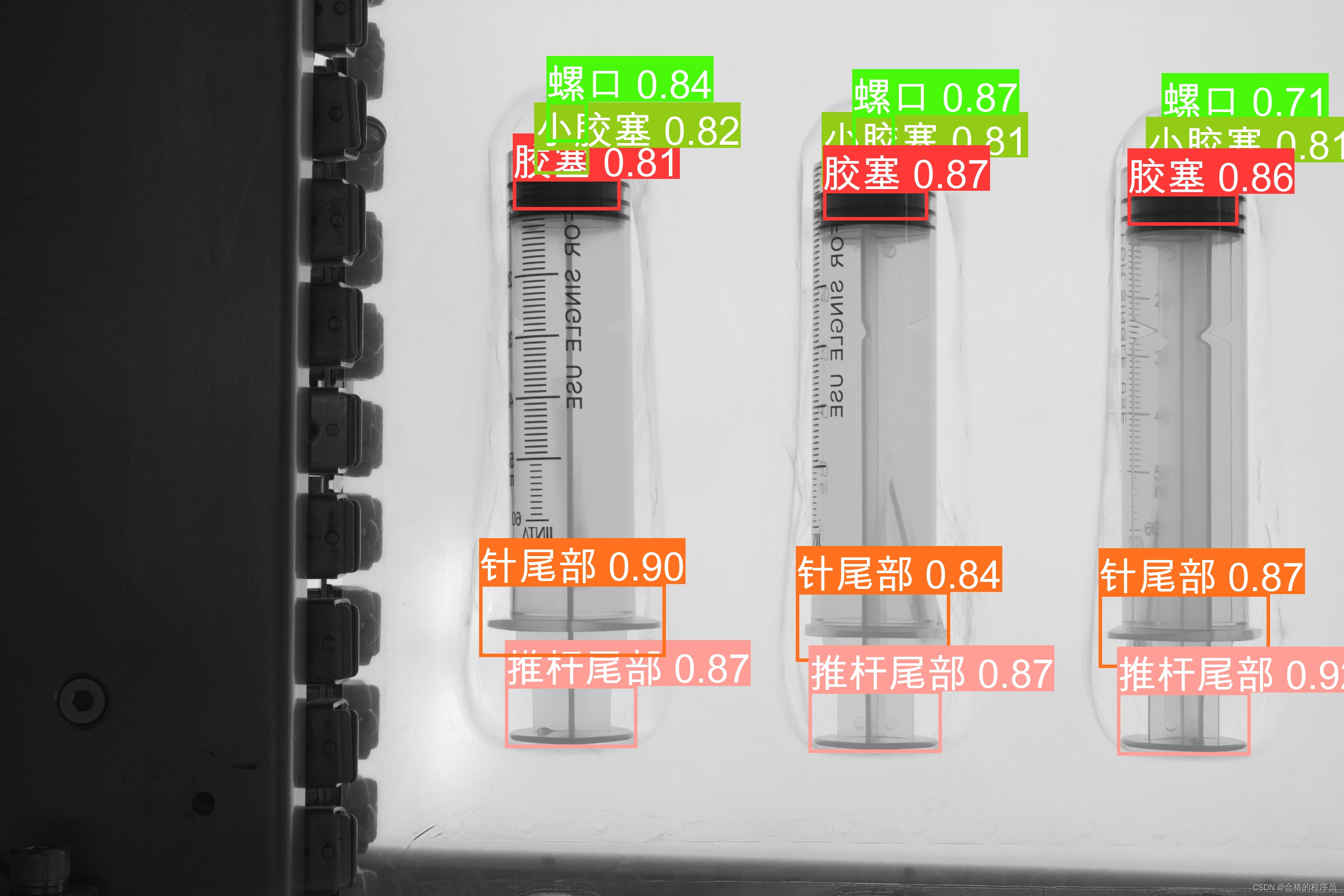
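The value 1.1019 can be checked by hand. The sketch below assumes the formula used by `nn.CrossEntropyLoss` for a class-index target, loss = -x[class] + log(sum_j exp(x[j])), applied to the same logits; `manual` and `builtin` are illustrative names.

```python
import torch
from torch import nn

x = torch.tensor([[0.1, 0.2, 0.3]])  # raw scores (logits), shape (batch_size=1, num_classes=3)
y = torch.tensor([1])                # index of the correct class

# loss = -x[class] + log(sum_j exp(x[j]))
manual = -x[0, y[0]] + torch.logsumexp(x[0], dim=0)

builtin = nn.CrossEntropyLoss()(x, y)
assert torch.isclose(manual, builtin)
print(manual)
```

Note that `nn.CrossEntropyLoss` takes unnormalized scores and applies log-softmax internally, so the network's final layer should not add its own softmax.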

8.5 Building the neural network

import torch

import torchvision

from torch import nn

from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential

from torch.utils.data import DataLoader

from torch.utils.tensorboard import SummaryWriter

dataset = torchvision.datasets.CIFAR10("./dataset",train=False,transform=torchvision.transforms.ToTensor(),download=True)

dataloader = DataLoader(dataset, batch_size=1,drop_last=True)

class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        self.model1 = Sequential(
            Conv2d(3,32,5,padding=2),
            MaxPool2d(2),
            Conv2d(32,32,5,padding=2),
            MaxPool2d(2),
            Conv2d(32,64,5,padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024,64),
            Linear(64,10)
        )

    def forward(self, x):
        x = self.model1(x)
        return x

tudui = Tudui()

for data in dataloader:
    imgs, targets = data
    outputs = tudui(imgs)
    print(outputs)
    print(targets)

Result:

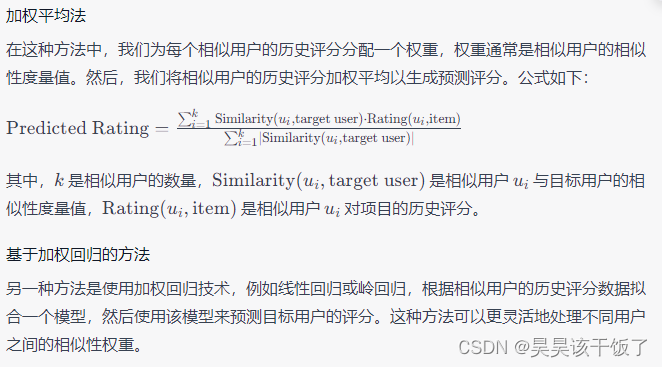

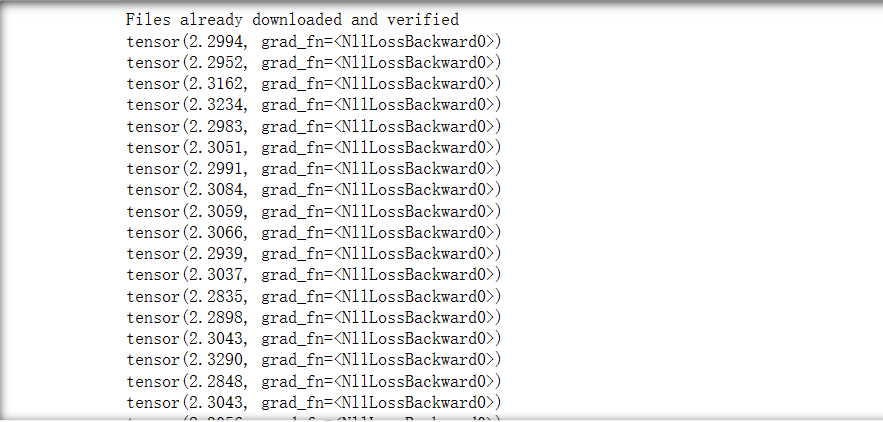
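To see why the first linear layer takes 1024 inputs, it can help to trace the tensor shapes through the same layer stack. This is a rough sketch that feeds a dummy batch of ones instead of real CIFAR10 images, so no download is needed; `model` and `dummy` are stand-in names, and the shape comments assume 32x32 RGB inputs.

```python
import torch
from torch import nn

model = nn.Sequential(
    nn.Conv2d(3, 32, 5, padding=2),   # (N, 3, 32, 32) -> (N, 32, 32, 32)
    nn.MaxPool2d(2),                  # -> (N, 32, 16, 16)
    nn.Conv2d(32, 32, 5, padding=2),  # -> (N, 32, 16, 16)
    nn.MaxPool2d(2),                  # -> (N, 32, 8, 8)
    nn.Conv2d(32, 64, 5, padding=2),  # -> (N, 64, 8, 8)
    nn.MaxPool2d(2),                  # -> (N, 64, 4, 4)
    nn.Flatten(),                     # -> (N, 64 * 4 * 4) = (N, 1024)
    nn.Linear(1024, 64),              # -> (N, 64)
    nn.Linear(64, 10),                # -> (N, 10), one score per class
)

dummy = torch.ones((64, 3, 32, 32))   # stand-in batch, not real CIFAR10 data
output = model(dummy)
print(output.shape)
```

Each image yields 10 scores, one per CIFAR10 class, which is the (batch_size, num_classes) layout that the cross-entropy loss in 8.4 expects.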

8.6 Computing the loss on a dataset

import torch

import torchvision

from torch import nn

from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential

from torch.utils.data import DataLoader

from torch.utils.tensorboard import SummaryWriter

dataset = torchvision.datasets.CIFAR10("./dataset",train=False,transform=torchvision.transforms.ToTensor(),download=True)

dataloader = DataLoader(dataset, batch_size=64,drop_last=True)

class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        self.model1 = Sequential(
            Conv2d(3,32,5,padding=2),
            MaxPool2d(2),
            Conv2d(32,32,5,padding=2),
            MaxPool2d(2),
            Conv2d(32,64,5,padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024,64),
            Linear(64,10)
        )

    def forward(self, x):
        x = self.model1(x)
        return x

loss = nn.CrossEntropyLoss() # cross-entropy

tudui = Tudui()

for data in dataloader:
    imgs, targets = data
    outputs = tudui(imgs)
    result_loss = loss(outputs, targets) # gap between the actual output and the target
    print(result_loss)

Result:

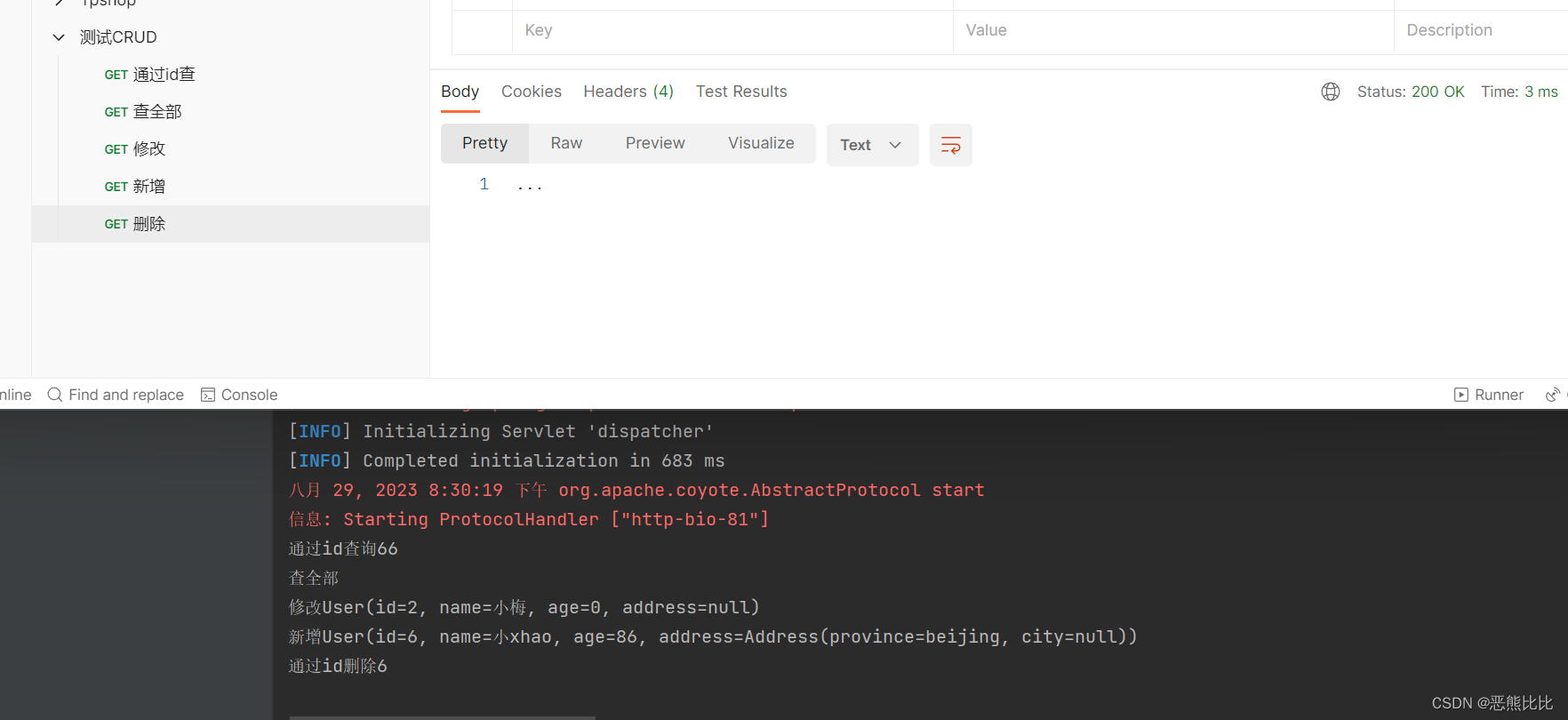
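Each value printed by the loop is a single scalar for the whole batch of 64 images. The sketch below illustrates how that scalar relates to the individual images, using random stand-in tensors in place of `tudui(imgs)` and the real labels (an assumption made to keep the example self-contained); `per_sample` and `batch_loss` are illustrative names.

```python
import torch
from torch import nn

torch.manual_seed(0)
outputs = torch.randn(64, 10)          # stand-in for tudui(imgs): 64 images, 10 class scores each
targets = torch.randint(0, 10, (64,))  # stand-in labels, integers in [0, 9]

# reduction="none" keeps one loss value per image instead of averaging.
per_sample = nn.CrossEntropyLoss(reduction="none")(outputs, targets)
batch_loss = nn.CrossEntropyLoss()(outputs, targets)  # default reduction is 'mean'

assert per_sample.shape == (64,)
assert torch.isclose(per_sample.mean(), batch_loss)
print(batch_loss)
```

With the default reduction, the batch loss is the average of the per-image losses, so its scale does not depend on the batch size.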

8.7 Backpropagating the loss

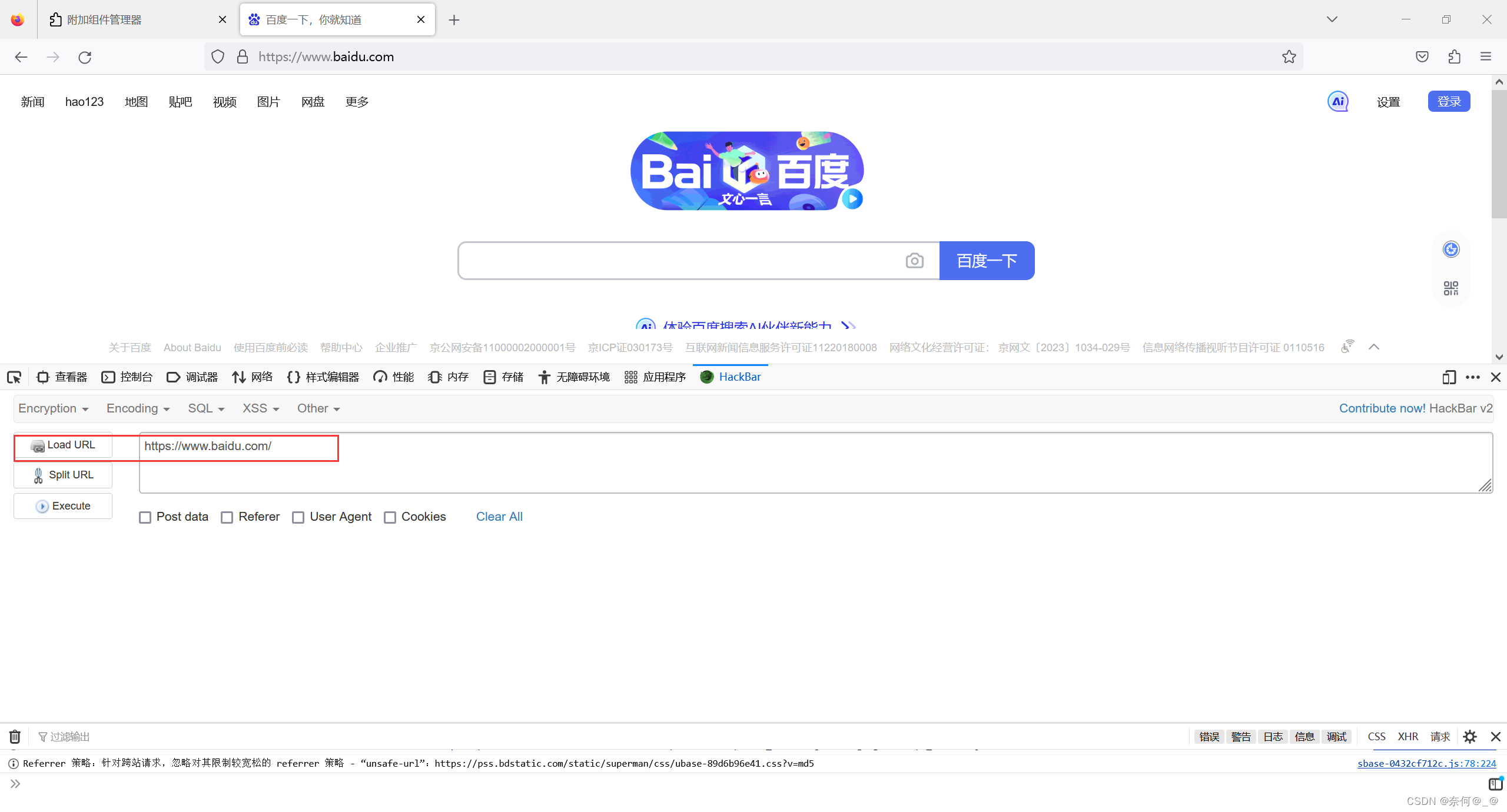

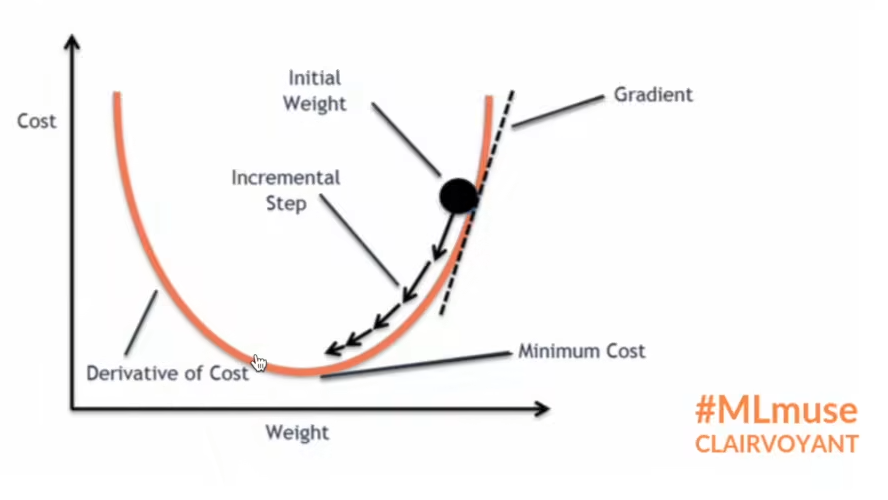

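① Backpropagation computes the gradients that are used to update the parameters so that the loss is minimized, as shown in the figure.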

import torch

import torchvision

from torch import nn

from torch.nn import Conv2d, MaxPool2d, Flatten, Linear, Sequential

from torch.utils.data import DataLoader

from torch.utils.tensorboard import SummaryWriter

dataset = torchvision.datasets.CIFAR10("./dataset",train=False,transform=torchvision.transforms.ToTensor(),download=True)

dataloader = DataLoader(dataset, batch_size=64,drop_last=True)

class Tudui(nn.Module):
    def __init__(self):
        super(Tudui, self).__init__()
        self.model1 = Sequential(
            Conv2d(3,32,5,padding=2),
            MaxPool2d(2),
            Conv2d(32,32,5,padding=2),
            MaxPool2d(2),
            Conv2d(32,64,5,padding=2),
            MaxPool2d(2),
            Flatten(),
            Linear(1024,64),
            Linear(64,10)
        )

    def forward(self, x):
        x = self.model1(x)
        return x

loss = nn.CrossEntropyLoss() # cross-entropy

tudui = Tudui()

for data in dataloader:
    imgs, targets = data
    outputs = tudui(imgs)
    result_loss = loss(outputs, targets) # gap between the actual output and the target
    # The computed loss has a backward() method; calling it backpropagates
    # through the network and computes a gradient for every parameter.
    # The parameters' grad attribute is empty (None) at first and is only
    # filled in after backward() has run. An optimizer will later use these
    # gradients to update the network's parameters.
    result_loss.backward()
    print("ok")
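The remark about the `grad` attribute can be checked without a debugger. This is a minimal sketch that swaps in a tiny `nn.Linear(3, 2)` layer as a stand-in for Tudui (an assumption made to keep the example short) and inspects its weight gradient before and after `backward()`; `grad_before` and `grad_after` are illustrative names.

```python
import torch
from torch import nn

model = nn.Linear(3, 2)          # tiny stand-in for Tudui: 3 inputs, 2 classes
loss_fn = nn.CrossEntropyLoss()

x = torch.tensor([[0.1, 0.2, 0.3]])
y = torch.tensor([1])

grad_before = model.weight.grad  # None: no backward pass has run yet

result_loss = loss_fn(model(x), y)
result_loss.backward()           # populates .grad on every parameter

grad_after = model.weight.grad   # now a tensor with the same shape as the weight
print(grad_before)
print(grad_after.shape)
```

Calling `backward()` only computes gradients; the parameter values themselves stay unchanged until an optimizer step is applied.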