Paper: 😈

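Contents

I. Environment Setup and Dataset Preparation

Dataset preparation

Dataset format

Environment setup: follow the official instructions

II. Some Adjustments

Pretrained weights for vit_base

Remote debugging setup

Tokenizer initialization fails

Adjusting the loader to read images from the web

III. Training Process

Image Encoder Layer

Text Encoder

Text Decoder

Loss functions

ITC (Image-Text Contrastive)

ITM (Image-Text Matching)

LM (Language Modeling)

Network structure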

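I. Environment Setup and Dataset Preparation

Dataset preparation

The official repository provides links for downloading the dataset JSON files. However, they may not open in a browser because they are hosted on Google Cloud Storage:

https://storage.googleapis.com/

Don't worry. Even if the page won't open, we can fetch the file with Python.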

```python
# Read the remote file with urllib's urlopen
import json
from urllib.request import urlopen

json_url = 'https://storage.googleapis.com/sfr-vision-language-research/BLIP/datasets/ccs_filtered.json'
response = urlopen(json_url)
# Read the raw bytes
req = response.read()
# Write them to a local file (set the save path as needed);
# the with-block closes the file automatically
with open('ccs_filtered.json', 'wb') as f:
    f.write(req)
# Optionally parse the JSON
# file_urllid = json.loads(req)
# print(file_urllid)
```
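Because the full JSON file is large, reading the whole response into memory with response.read() can be wasteful. The sketch below is an alternative that is not from the original post: it streams the download to disk in chunks with shutil.copyfileobj. The helper name stream_download and its chunk_size/timeout defaults are illustrative assumptions.

```python
import shutil
from urllib.request import urlopen


def stream_download(url: str, dest_path: str, chunk_size: int = 1 << 20, timeout: float = 60.0) -> int:
    """Copy the resource at `url` to `dest_path` chunk by chunk and return the bytes written.

    Hypothetical helper (not part of BLIP): only `chunk_size` bytes are held in memory at a time.
    """
    with urlopen(url, timeout=timeout) as response, open(dest_path, 'wb') as out:
        shutil.copyfileobj(response, out, chunk_size)
        return out.tell()  # file position after writing == number of bytes written
```

With the URL above, usage would be stream_download(json_url, 'ccs_filtered.json').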

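Dataset format

According to the official README, each entry in the JSON file has the form {'image': path_of_image, 'caption': text_of_image}. An excerpt of the file I downloaded is shown below. Note that it actually uses a 'url' key (see Part II):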

```
[{"caption": "a wooden chair in the living room", "url": "http://static.flickr.com/2723/4385058960_b0f291553e.jpg"},
 {"caption": "the plate near the door almost makes it look like the cctv camera is the logo of the hotel", "url": "http://static.flickr.com/3348/3183472382_156bcbc461.jpg"},
 {"caption": "a close look at winter stoneflies reveals mottled wings and black or brown bodies photo by jason du pont", "url": "http://static.flickr.com/114/291586170_82b2c80750.jpg"},
 {"caption": "karipol leipzig, germany, abandoned factory for house und car cleaning supplies in leipzig founded in 1897, closed in 1995", "url": "http://static.flickr.com/5170/5208095391_f030f31dd1.jpg"},
 {"caption": "this train car is parked permanently in a yard in royal, nebraska", "url": "http://static.flickr.com/3067/4554530891_f0d45b0967.jpg"},
 {"caption": "another shot of the building next to the sixth floor museum in dallas", "url": "http://static.flickr.com/3145/2858049135_0944b40a34.jpg"},
 {"caption": "a tropical forest in the train station how cool is that", "url": "http://static.flickr.com/4113/5212209590_6c7d40a7f6.jpg"},
 {"caption": "le hast le hill in le pink le shirt", "url": "http://static.flickr.com/1338/4597496270_7d91f01f88.jpg"},
 {"caption": "daniel up by the red bag tackling", "url": "http://static.flickr.com/3129/2783268248_4930260b9d.jpg"},
 {"caption": "a window on a service building is seen at allerton park tuesday, oct 27, 2009, in monticello, ill", "url": "http://static.flickr.com/2516/4054292066_3b74bfcf89.jpg"},
 {"caption": "girls jumping off of the 50ft cliff at abique lake in santa fe new mexico", "url": "http://static.flickr.com/2637/4371962912_77b4c74878.jpg"},
 {"caption": "a young chhantyal girl in traditional dress", "url": "http://static.flickr.com/3075/3240630067_772ebf5504.jpg"},
 {"caption": "view from the train to snowdon hidden in the cloud", "url": "http://static.flickr.com/4114/4803115610_711924e2ab.jpg"},
 {"caption": "the only non white tiger in the habitat when i went there", "url": "http://static.flickr.com/2481/3873285144_25aa3013ca.jpg"},
 {"caption": "the contrast of the flowering pear tree against the bare branches of the other trees caught my eye", "url": "http://static.flickr.com/3198/3124550410_b70442da56.jpg"},
 {"caption": "this is my friend taking a nap in my sleeping bag with our friend's dog for company", "url": "http://static.flickr.com/1167/1466307446_c1a332c5ec.jpg"},
 {"caption": "view of old castle in field ii", "url": "http://static.flickr.com/4101/4758652582_8a1d44a1a0.jpg"},
 ...
```

(Example images: "a wooden chair in the living room"; "this is my friend taking a nap in my sleeping bag with our friend's dog for company".)
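The excerpt above uses a 'url' key, not the 'image' key that the README describes. Before training, it can help to check which schema a file actually uses. Below is a minimal sketch. summarize_keys and detect_schema are hypothetical helpers, not part of BLIP, and the two key names come from the formats quoted above.

```python
from collections import Counter
from typing import Dict, List


def summarize_keys(entries: List[Dict[str, str]]) -> Counter:
    """Count how many annotation entries contain each key."""
    counts: Counter = Counter()
    for entry in entries:
        counts.update(entry.keys())
    return counts


def detect_schema(entries: List[Dict[str, str]]) -> str:
    """Return 'image' or 'url' if every entry has that key, 'mixed' otherwise, 'empty' for no entries."""
    if not entries:
        return 'empty'
    counts = summarize_keys(entries)
    if counts.get('image', 0) == len(entries):
        return 'image'
    if counts.get('url', 0) == len(entries):
        return 'url'
    return 'mixed'
```

For example, detect_schema(json.load(open('ccs_filtered.json'))) should report 'url' for the file shown above.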

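Environment setup: follow the official instructions

```shell
# Not meant to be run verbatim in this order; just the general idea
conda create -n BLIP python=3.8
conda activate BLIP
pip install -r requirements.txt
pip install opencv-python
pip install opencv-python-headless
```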

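Following the official instructions, edit the JSON file paths in configs/pretrain.yaml. Then create the output directory yourself, for example:

```shell
mkdir -p output/Pretrain
```

Then run a test (only one GPU is used here):

```shell
python -m torch.distributed.run --nproc_per_node=1 pretrain.py --config ./configs/Pretrain.yaml --output_dir output/Pretrain
```

Problem: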

ModuleNotFoundError: No module named 'ruamel_yaml'

Fix:

```python
# import ruamel_yaml as yaml   # original line
import yaml                     # use PyYAML instead
```

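Problem:

FileNotFoundError: [Errno 2] No such file or directory: './configs/Pretrain.yaml'

Fix:

Use an absolute path instead. Also note that the config file is configs/pretrain.yaml (lowercase), as mentioned above. On a case-sensitive filesystem, passing Pretrain.yaml can cause this error by itself.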

Problem:

RuntimeError: The NVIDIA driver on your system is too old (found version 11060). Please update your GPU driver by downloading and installing a new version from the URL: http://www.nvidia.com/Download/index.aspx Alternatively, go to: https://pytorch.org to install a PyTorch version that has been compiled with your version of the CUDA driver.

Fix:

See here 😼

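Problem:

FileNotFoundError: [Errno 2] No such file or directory: 'configs/bert_config.json'

Fix:

Use an absolute path instead. Relative paths are resolved against the current working directory, not against the location of the script.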
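Instead of hard-coding absolute paths, one option is to resolve repo-relative paths against a known repository root. The sketch below assumes that approach. resolve_repo_path and repo_root are hypothetical names. Inside a module one directory below the repo root, repo_root could for example be computed as Path(__file__).resolve().parents[1].

```python
from pathlib import Path


def resolve_repo_path(rel_path: str, repo_root: str) -> str:
    """Return an absolute path for `rel_path`, interpreting it relative to `repo_root`.

    Paths that are already absolute are only normalized, so applying the helper twice is harmless.
    """
    p = Path(rel_path)
    if not p.is_absolute():
        p = Path(repo_root) / p
    return str(p.resolve())
```

Usage, under that assumption: med_config = resolve_repo_path('configs/bert_config.json', repo_root).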

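Problem:

TypeError: '<=' not supported between instances of 'float' and 'str'

Fix:

YAML loads values such as 3e-4 as strings (str) instead of floats. With PyYAML (after the change above), this happens because it follows YAML 1.1, whose float syntax requires a decimal point, so 3e-4 is not recognized as a number.

Either convert the value explicitly, e.g. lr=float(config['init_lr']) (and likewise for the other learning-rate values), or edit the YAML file and replace values such as

3e-4 → 0.0003

1e-6 → 0.000001

Writing them with a decimal point, e.g. 3.0e-4, should also work.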
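If the config has many such values, fixing them one at a time is tedious. Below is a minimal sketch. It assumes the config has already been loaded into a plain dict (e.g. by yaml.load), walks that dict, and turns strings that parse as floats into floats. coerce_numeric_strings is a hypothetical helper. It converts any string that merely looks numeric (including "nan" or "inf"), so check that the config has no such text fields you need to keep.

```python
def coerce_numeric_strings(obj):
    """Recursively convert strings such as '3e-4' into floats inside nested dicts and lists."""
    if isinstance(obj, dict):
        return {k: coerce_numeric_strings(v) for k, v in obj.items()}
    if isinstance(obj, list):
        return [coerce_numeric_strings(v) for v in obj]
    if isinstance(obj, str):
        try:
            return float(obj)
        except ValueError:
            return obj  # not numeric: keep the original string (paths, model names, ...)
    return obj  # ints, floats, bools, None are left untouched
```

Usage, under that assumption: config = coerce_numeric_strings(yaml.load(open(args.config, 'r'), Loader=yaml.Loader)).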

Problem:

image = Image.open(ann['image']).convert('RGB')

KeyError: 'image'

Fix:

See "Adjusting the loader to read images from the web" in Part II of this post.

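II. Some Adjustments

Pretrained weights for vit_base

When training starts, the code downloads this pretrained model from the internet. To avoid downloading it again on every run, save the downloaded checkpoint to a local folder and change the loading code to point to it:

```python
checkpoint = torch.load('******/deit_base_patch16_224-b5f2ef4d.pth', map_location="cpu")
```

Remote debugging setup

Problem encountered:

error:unrecognized argument: --local-rank=0

Fix: see here 🐰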

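Tokenizer initialization fails

Initialization downloads model files from Hugging Face, so it may fail because of network problems:

TimeoutError: [Errno 110] Connection timed out

Fix: see here 😼

self.text_encoder and self.text_decoder also load pretrained BERT weights from Hugging Face, so they need the same change.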

Adjusting the loader to read images from the web

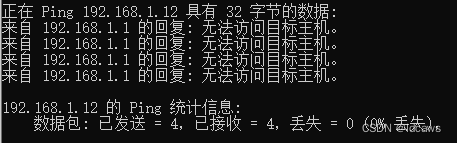

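The original JSON file is too large, so I wrote a script to extract part of it: only 4 MB, 20,000 images.

I'm not sure why, but although the official README says each entry has the form {'image': path_of_image, 'caption': text_of_image}, the JSON file I downloaded uses {'url': url_of_image, 'caption': text_of_image}. Possibly this is because what I downloaded is the web-sourced CC3M+CC12M+SBU set. For COCO, the format is probably the one described in the README.

So, to fetch images from the web and load them, the source code needs a small change (based on this 🐰).

In pretrain_dataset.py:

```python
# Import
from skimage import io

# In __init__, add a switch:
self.use_url = True

# New method: download an image from a URL
def read_image_from_url(self, url):
    image = io.imread(url)
    # convert('RGB') so grayscale/RGBA images also give 3 channels, like the local-file branch
    image = Image.fromarray(image).convert('RGB')
    return image

# Modified __getitem__
def __getitem__(self, index):
    ann = self.annotation[index]
    if self.use_url:
        image = self.read_image_from_url(ann['url'])
    else:
        image = Image.open(ann['image']).convert('RGB')
    image = self.transform(image)
    caption = pre_caption(ann['caption'], 30)
    return image, caption
```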
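Reading images directly from URLs can fail in several ways: 403 responses, dead links, or hangs. Below is a minimal sketch of a more defensive fetch using only urllib.request. It assumes that sending a browser-like User-Agent and setting a timeout helps with the hosts involved. That is not guaranteed, and some of the Flickr links may simply be gone. fetch_image_bytes and DEFAULT_HEADERS are hypothetical names. The returned bytes could then be opened with PIL.Image.open(io.BytesIO(data)).convert('RGB'). Inside a Dataset, a None result would still need handling, for example by falling back to another index.

```python
from typing import Optional
from urllib.request import Request, urlopen

# Assumption: some servers answer 403 to urllib's default "Python-urllib/x.y" User-Agent.
DEFAULT_HEADERS = {'User-Agent': 'Mozilla/5.0'}


def fetch_image_bytes(url: str, timeout: float = 10.0) -> Optional[bytes]:
    """Return the raw bytes at `url`, or None if the request fails (403, 404, timeout, bad URL)."""
    try:
        request = Request(url, headers=DEFAULT_HEADERS)  # raises ValueError for malformed URLs
        with urlopen(request, timeout=timeout) as response:
            return response.read()
    except (ValueError, OSError):
        # URLError, HTTPError and socket timeouts are all subclasses of OSError
        return None
```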

However, network problems (e.g. HTTP 403) may still prevent some images from being read. Since this was only a test, I wrote scripts to download the images for a subset of the data and rewrite the JSON into the {'image': path_of_image, 'caption': text_of_image} format. First, I took the first 300 entries of the original JSON file:

```python
import json

path = '/root/data/zjx/Code-subject/BLIP/ccs_filtered.json'
save_path = '/root/data/zjx/Code-subject/BLIP/cut_css_filtered.json'
save_len = 300

with open(path, 'r') as f:
    data = json.load(f)

# Keep only the first save_len entries and write them out as a JSON list
with open(save_path, 'w') as f2:
    json.dump(data[:save_len], f2)

print('finish')
```

Then download the images into a folder and build the new JSON file:

```python
import json
import os

from skimage import io
from tqdm import tqdm


def download_from_image(url, save_path, ind):
    image = io.imread(url)
    # Zero-padded file name: 0000.jpg, 0001.jpg, ...
    file_name = f'{ind:04d}.jpg'
    io.imsave(os.path.join(save_path, file_name), image)
    return file_name


def do_this_work(ori_json_path, new_json_path, yunduan_path, save_image_path, save_image_path_name):
    # yunduan_path: absolute path of the project on the remote server
    with open(ori_json_path, 'r') as f:
        dict_data = json.load(f)
    os.makedirs(save_image_path, exist_ok=True)  # io.imsave does not create folders
    new_data = []
    for i, dict_info in enumerate(tqdm(dict_data)):
        image_name = download_from_image(dict_info['url'], save_image_path, i)
        new_data.append({
            'caption': dict_info['caption'],
            'image': yunduan_path + '/' + save_image_path_name + '/' + image_name,
        })
    with open(new_json_path, 'w') as f2:
        json.dump(new_data, f2)


if __name__ == '__main__':
    path = './cut_css_filtered.json'
    save_image_path = './save_imgae'
    save_image_path_name = 'save_imgae'
    save_new_json_path = './new_ccs_filtered.json'
    yunduan_path = '/root/data/zjx/Code-subject/BLIP'
    do_this_work(path, save_new_json_path, yunduan_path, save_image_path, save_image_path_name)
```

Next, I reduced the batch size in the YAML file.
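The download script above stops at the first URL that fails, for example with a 403. Below is a minimal sketch of the same conversion that skips failed downloads instead. The downloader is passed in as a function, so the logic can be tested without network access. convert_annotations and its parameters are hypothetical names. The zero-padded file names follow the script above.

```python
import os
from typing import Callable, Dict, List


def convert_annotations(
    entries: List[Dict[str, str]],
    download: Callable[[str, str], None],
    image_dir: str,
) -> List[Dict[str, str]]:
    """Turn {'url', 'caption'} entries into {'image', 'caption'} entries.

    `download(url, dest_path)` should save the image or raise on failure;
    failed entries are skipped, so one bad URL does not abort the whole run.
    """
    converted = []
    for i, entry in enumerate(entries):
        dest = os.path.join(image_dir, f'{i:04d}.jpg')
        try:
            download(entry['url'], dest)
        except Exception as exc:  # broad on purpose: any download/decode error skips the entry
            print(f"skip {entry['url']}: {exc}")
            continue
        converted.append({'image': dest, 'caption': entry['caption']})
    return converted
```

With scikit-image, the downloader could be something like lambda url, dest: io.imsave(dest, io.imread(url)).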

Debug configuration in the local IDE

Parameters passed to torch's distributed/launch.py (the script mapped below):

```
--nproc_per_node=1
--use_env
/root/data/zjx/Code-subject/BLIP/BLIP-main/pretrain.py
--config
/root/data/zjx/Code-subject/BLIP/BLIP-main/configs/pretrain.yaml
--output_dir
output/Pretrain
```

Path mapping (remote path, then the local PyCharm copy):

```
/root/anaconda3/envs/BLIP/lib/python3.8/site-packages/torch/distributed/launch.py
C:\Users\Lenovo\AppData\Local\JetBrains\PyCharm2021.2\remote_sources\2036786058\597056065\torch\distributed\launch.py
```

III. Training Process

Image Encoder Layer

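It uses a ViT architecture. The rough flow is as follows.

The [CLS] output is then projected by a linear layer (vision_proj, 768 → 256) and L2-normalized.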

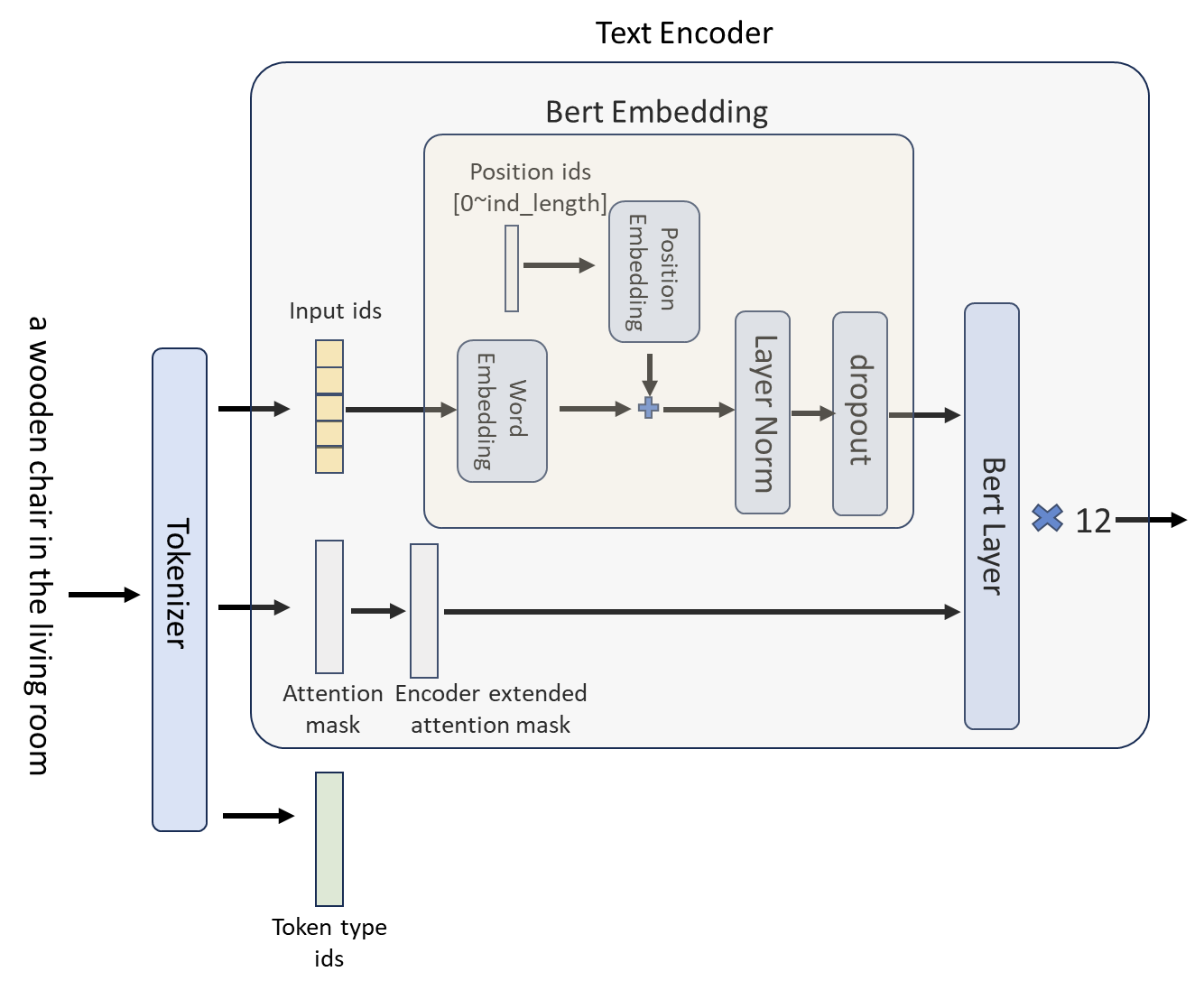
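As a worked example of the shapes involved, assume the 224×224 input of the deit_base_patch16_224 checkpoint. The patch embedding is a Conv2d with kernel size and stride 16 (see the network structure at the end), so the image becomes a 14×14 grid: 196 patch tokens plus one [CLS] token, each 768-dimensional. The pure-Python sketch below shows that arithmetic and the L2 normalization step. The helper names are illustrative, and l2_normalize mirrors what F.normalize(x, dim=-1) does for one row.

```python
import math
from typing import List


def vit_num_tokens(image_size: int, patch_size: int) -> int:
    """Tokens produced by a ViT: (image_size / patch_size)^2 patches + 1 [CLS] token."""
    if image_size % patch_size:
        raise ValueError('image_size must be divisible by patch_size')
    grid = image_size // patch_size
    return grid * grid + 1


def l2_normalize(vec: List[float], eps: float = 1e-12) -> List[float]:
    """Scale a vector to unit L2 norm; eps guards against division by zero."""
    norm = math.sqrt(sum(x * x for x in vec))
    return [x / max(norm, eps) for x in vec]


print(vit_num_tokens(224, 16))  # 197, i.e. image embeddings of shape (B, 197, 768)
```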

Text Encoder

First, the captions are tokenized. The tokenizer returns a BatchEncoding whose fields look like the example below. The maximum encoded length chosen here is 30.

```
{'input_ids': tensor([[  101,  1037,  2485,  2298,  2012,  3467,  2962, 24019,  7657,  9587,
         26328,  2094,  4777,  1998,  2304,  2030,  2829,  4230,  6302,  2011,
          4463,  4241, 21179,   102,     0,     0,     0,     0,     0,     0],
        [  101,  1037,  4799,  3242,  1999,  1996,  2542,  2282,   102,     0,
             0,     0,     0,     0,     0,     0,     0,     0,     0,     0,
             0,     0,     0,     0,     0,     0,     0,     0,     0,     0]],
       device='cuda:0'),
 'token_type_ids': tensor([[0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,
         0, 0, 0, 0, 0, 0],
        [0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,
         0, 0, 0, 0, 0, 0]], device='cuda:0'),
 'attention_mask': tensor([[1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1, 1,
         0, 0, 0, 0, 0, 0],
        [1, 1, 1, 1, 1, 1, 1, 1, 1, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0, 0,
         0, 0, 0, 0, 0, 0]], device='cuda:0')}
```

The number of 1s in each row of attention_mask equals the length of the tokenized sentence before padding.
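The padding and mask pattern above can be reproduced by hand. Below is a pure-Python sketch. It assumes pad id 0 and a maximum length of 30, as in the example. In BLIP's pretraining code, the tokenizer call with padding and truncation options appears to handle this. pad_and_mask is a hypothetical helper and does not implement truncation.

```python
from typing import List, Tuple


def pad_and_mask(token_ids: List[int], max_length: int = 30, pad_id: int = 0) -> Tuple[List[int], List[int]]:
    """Right-pad token ids to max_length and build the matching attention mask."""
    if len(token_ids) > max_length:
        raise ValueError('sequence longer than max_length; truncate it first')
    n_pad = max_length - len(token_ids)
    input_ids = token_ids + [pad_id] * n_pad
    attention_mask = [1] * len(token_ids) + [0] * n_pad  # 1 = real token, 0 = padding
    return input_ids, attention_mask


# Second sequence from the example above (starts with [CLS]=101, ends with [SEP]=102)
ids, mask = pad_and_mask([101, 1037, 4799, 3242, 1999, 1996, 2542, 2282, 102])
print(len(ids), sum(mask))  # 30 9
```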

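The text encoder uses the BertModel architecture.

Mask handling:

1. get_extended_attention_mask

The resulting extended_attention_mask is None.

2. get_head_mask

The result is [None, None, None, None, None, None, None, None, None, None, None, None].

Its length equals the number of hidden layers, 12.

The rough flow is as follows: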

Text Decoder

Loss functions

ITC (Image-Text Contrastive)

Implemented following ALBEF. For the detailed procedure, see here 🐸
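At its core, the ITC loss is a symmetric contrastive (InfoNCE) loss over the in-batch image-text similarity matrix. The pure-Python sketch below is simplified. It uses hard one-hot targets and omits the momentum encoders, feature queues and soft pseudo-targets that the ALBEF-style implementation adds. It assumes the features are already L2-normalized. itc_loss is a hypothetical name, and temp=0.07 is an illustrative temperature.

```python
import math
from typing import List

Vector = List[float]


def _cross_entropy(logits: Vector, target: int) -> float:
    """-log softmax(logits)[target], computed with the max-subtraction trick for stability."""
    m = max(logits)
    log_sum_exp = m + math.log(sum(math.exp(x - m) for x in logits))
    return log_sum_exp - logits[target]


def itc_loss(image_feats: List[Vector], text_feats: List[Vector], temp: float = 0.07) -> float:
    """Symmetric image-text contrastive loss; pair i is the positive for row/column i."""
    sim_i2t = [[sum(a * b for a, b in zip(img, txt)) / temp for txt in text_feats] for img in image_feats]
    sim_t2i = [list(col) for col in zip(*sim_i2t)]  # transpose: text-to-image similarities
    n = len(image_feats)
    loss_i2t = sum(_cross_entropy(sim_i2t[i], i) for i in range(n)) / n
    loss_t2i = sum(_cross_entropy(sim_t2i[i], i) for i in range(n)) / n
    return (loss_i2t + loss_t2i) / 2
```

Matched pairs give a loss close to 0. Swapping the texts makes the loss large.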

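ITM (Image-Text Matching)

1. Feed the image tokens and text tokens into the text encoder to obtain the hidden states of the positive pairs.

2. Build the negative samples. For each sample, every other sample in the same batch is a candidate negative, and one negative per sample is enough. Negatives are drawn in proportion to their similarity, so harder negatives are more likely to be picked. The sampling weights are built as follows ([:, :bs] keeps only the in-batch columns):

```python
with torch.no_grad():
    weights_t2i = F.softmax(sim_t2i[:, :bs], dim=1) + 1e-4  # (2, 2)
    weights_t2i.fill_diagonal_(0)                            # (2, 2), main diagonal set to 0
    weights_i2t = F.softmax(sim_i2t[:, :bs], dim=1) + 1e-4  # (2, 2)
    weights_i2t.fill_diagonal_(0)                            # (2, 2)
```

Only the main-diagonal entries are 0, and every other entry has a positive weight (the weight matrix is square, with side equal to the batch size). torch.multinomial then samples indices according to these weights, which guarantees that a sample is never chosen as its own negative.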

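After sampling, the negatives are aligned with the positives by concatenation:

```
TextTokenPos  -- (cat) -- TextTokenNeg
      |                        |
ImageTokenNeg -- (cat) -- ImageTokenPos
```

That is, each positive text is paired with a sampled negative image, and each sampled negative text is paired with its positive image. This completes the negative samples (the attention masks are concatenated in the same way).

3. Feed the concatenated text tokens and image tokens into the text encoder to obtain the hidden states of the negative pairs.

4. Concatenate the first-token hidden states of the positive and negative pairs and pass them through a linear head (itm_head, 768 → 2) to get class predictions.

5. Labels: label = [1, 1, ..., 0, 0, ..., 0], with batch-size 1s and 2 × batch-size 0s (each sample contributes two negative pairs).

Compute the loss as two-class cross-entropy.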
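Steps 2 to 5 can be sketched in pure Python. This is a simplified illustration, not BLIP's tensor code. It swaps torch.multinomial for the standard library's random.choices (both draw an index with probability proportional to its weight). It represents samples by their batch indices and leaves out the encoder and itm_head. sample_negatives and build_itm_pairs are hypothetical names. In the real code, the logits and labels would go to F.cross_entropy.

```python
import math
import random
from typing import List, Tuple


def sample_negatives(sim: List[List[float]], rng: random.Random) -> List[int]:
    """For each row i, draw one column j != i with probability ~ softmax(sim[i]) (needs batch size >= 2)."""
    negatives = []
    for i, row in enumerate(sim):
        m = max(row)
        exps = [math.exp(x - m) for x in row]
        total = sum(exps)
        weights = [e / total + 1e-4 for e in exps]
        weights[i] = 0.0  # like fill_diagonal_(0): a sample is never its own negative
        negatives.append(rng.choices(range(len(row)), weights=weights, k=1)[0])
    return negatives


def build_itm_pairs(
    bs: int, neg_image_for_text: List[int], neg_text_for_image: List[int]
) -> Tuple[List[Tuple[int, int]], List[int]]:
    """Return (text_idx, image_idx) pairs and labels: bs positives, then 2*bs negatives."""
    positives = [(i, i) for i in range(bs)]
    # text_all = cat(text_pos, text_neg); image_all = cat(image_neg, image_pos)
    negatives = [(i, neg_image_for_text[i]) for i in range(bs)]
    negatives += [(neg_text_for_image[i], i) for i in range(bs)]
    labels = [1] * bs + [0] * (2 * bs)
    return positives + negatives, labels


rng = random.Random(0)
sim_t2i = [[5.0, 1.0, 0.5], [0.2, 4.0, 3.0], [1.0, 0.1, 6.0]]
neg_imgs = sample_negatives(sim_t2i, rng)  # one negative image per text
```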

LM (Language Modeling)

Example inputs and labels are shown below. input: the tokenized text, except that the first position is set to BOS (id 30522 here, in place of [CLS] = 101). label: the same sequence, with padding positions set to -100 so the loss ignores them.

```
label: tensor([[30522,  1037,  2485,  2298,  2012,  3467,  2962, 24019,  7657,  9587,
         26328,  2094,  4777,  1998,  2304,  2030,  2829,  4230,  6302,  2011,
          4463,  4241, 21179,   102,  -100,  -100,  -100,  -100,  -100,  -100],
        [30522,  1037,  4799,  3242,  1999,  1996,  2542,  2282,   102,  -100,
          -100,  -100,  -100,  -100,  -100,  -100,  -100,  -100,  -100,  -100,
          -100,  -100,  -100,  -100,  -100,  -100,  -100,  -100,  -100,  -100]],
       device='cuda:0')
```

```
input: tensor([[30522,  1037,  2485,  2298,  2012,  3467,  2962, 24019,  7657,  9587,
         26328,  2094,  4777,  1998,  2304,  2030,  2829,  4230,  6302,  2011,
          4463,  4241, 21179,   102,     0,     0,     0,     0,     0,     0],
        [30522,  1037,  4799,  3242,  1999,  1996,  2542,  2282,   102,     0,
             0,     0,     0,     0,     0,     0,     0,     0,     0,     0,
             0,     0,     0,     0,     0,     0,     0,     0,     0,     0]],
       device='cuda:0')
```

1. The input passes through the text decoder, producing a (2, 30, 768) tensor, and then through BertOnlyMLMHead, producing predictions of shape (2, 30, 30524). 30524 is the vocabulary size.

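2. Compute the loss, i.e. the negative log-likelihood.

The loss compares the decoder's predictions at the first 29 positions with the labels at the last 29 positions, so each position predicts the next token.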

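The paper describes the LM objective as autoregressive. Thanks to the Transformer's parallelism (with a causal mask), training handles all positions in a single forward pass. At test or demo time, sentences must be generated autoregressively, token by token.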
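The relation between the decoder inputs and labels above, and the one-position shift in the loss, can be sketched in pure Python. Assumptions: BOS id 30522 and pad id 0 as in the example tensors, and -100 as the ignore index. Toy per-position log-probabilities stand in for the real (2, 30, 30524) predictions. make_decoder_io and shifted_nll are hypothetical names.

```python
from typing import Dict, List, Tuple

BOS_ID = 30522      # value at position 0 of the example decoder inputs
PAD_ID = 0
IGNORE_INDEX = -100


def make_decoder_io(token_ids: List[int]) -> Tuple[List[int], List[int]]:
    """Replace the first token ([CLS]) with BOS for the input; labels are a copy with pads set to -100."""
    inputs = [BOS_ID] + token_ids[1:]
    labels = [IGNORE_INDEX if t == PAD_ID else t for t in inputs]
    return inputs, labels


def shifted_nll(log_probs: List[Dict[int, float]], labels: List[int]) -> float:
    """Mean negative log-likelihood where the prediction at position t is scored against label t+1.

    log_probs[t] maps token id -> log-probability at position t (a toy stand-in for logits).
    """
    preds = log_probs[:-1]   # first L-1 predictions
    targets = labels[1:]     # last L-1 labels
    terms = [-p[y] for p, y in zip(preds, targets) if y != IGNORE_INDEX]
    if not terms:
        raise ValueError('no non-ignored targets')
    return sum(terms) / len(terms)
```

With a maximum length of 30, this compares 29 predictions with 29 labels, as described above.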

The total loss is the sum of the three: loss = loss_ITC + loss_ITM + loss_LM.

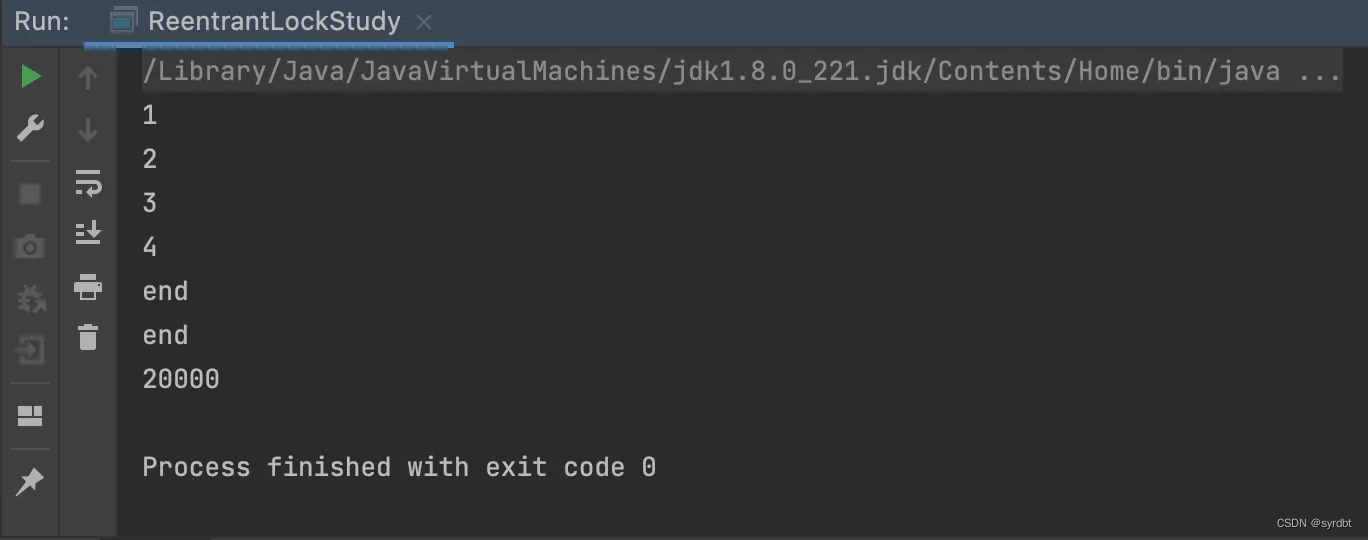

Network structure

BLIP_Pretrain((visual_encoder): VisionTransformer((patch_embed): PatchEmbed((proj): Conv2d(3, 768, kernel_size=(16, 16), stride=(16, 16))(norm): Identity())(pos_drop): Dropout(p=0.0, inplace=False)(blocks): ModuleList((0): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(1): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(2): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(3): Block((norm1): 
LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(4): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(5): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(6): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): 
Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(7): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(8): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(9): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, 
out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(10): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(11): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False))))(norm): LayerNorm((768,), eps=1e-06, elementwise_affine=True))(text_encoder): BertModel((embeddings): BertEmbeddings((word_embeddings): Embedding(30524, 768)(position_embeddings): Embedding(512, 768)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False))(encoder): BertEncoder((layer): ModuleList((0): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), 
eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(1): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): 
LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(2): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(3): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, 
bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(4): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(5): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, 
out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(6): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, 
elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(7): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(8): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): 
LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(9): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, 
bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(10): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(11): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, 
out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False))))))(vision_proj): Linear(in_features=768, out_features=256, bias=True)(text_proj): Linear(in_features=768, out_features=256, bias=True)(itm_head): Linear(in_features=768, out_features=2, bias=True)(visual_encoder_m): VisionTransformer((patch_embed): PatchEmbed((proj): Conv2d(3, 768, kernel_size=(16, 16), stride=(16, 16))(norm): Identity())(pos_drop): Dropout(p=0.0, inplace=False)(blocks): ModuleList((0): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(1): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): 
Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(2): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(3): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(4): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(5): Block((norm1): LayerNorm((768,), eps=1e-06, 
elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(6): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(7): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(8): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, 
inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(9): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(10): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, bias=True)(drop): Dropout(p=0.0, inplace=False)))(11): Block((norm1): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(attn): Attention((qkv): Linear(in_features=768, out_features=2304, bias=True)(attn_drop): Dropout(p=0.0, inplace=False)(proj): Linear(in_features=768, out_features=768, bias=True)(proj_drop): Dropout(p=0.0, inplace=False))(drop_path): Identity()(norm2): LayerNorm((768,), eps=1e-06, elementwise_affine=True)(mlp): Mlp((fc1): Linear(in_features=768, out_features=3072, bias=True)(act): GELU(approximate='none')(fc2): Linear(in_features=3072, out_features=768, 
bias=True)(drop): Dropout(p=0.0, inplace=False))))(norm): LayerNorm((768,), eps=1e-06, elementwise_affine=True))(vision_proj_m): Linear(in_features=768, out_features=256, bias=True)(text_encoder_m): BertModel((embeddings): BertEmbeddings((word_embeddings): Embedding(30524, 768, padding_idx=0)(position_embeddings): Embedding(512, 768)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False))(encoder): BertEncoder((layer): ModuleList((0): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(1): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): 
Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(2): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): 
Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(3): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(4): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): 
BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(5): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(6): 
BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(7): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, 
inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(8): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(9): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, 
bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(10): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): 
BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(11): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False))))))(text_proj_m): Linear(in_features=768, out_features=256, bias=True)(text_decoder): BertLMHeadModel((bert): BertModel((embeddings): BertEmbeddings((word_embeddings): Embedding(30524, 768)(position_embeddings): Embedding(512, 768)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False))(encoder): BertEncoder((layer): ModuleList((0): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, 
out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(1): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, 
bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(2): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(3): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, 
out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): Linear(in_features=3072, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(4): BertLayer((attention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(crossattention): BertAttention((self): BertSelfAttention((query): Linear(in_features=768, out_features=768, bias=True)(key): Linear(in_features=768, out_features=768, bias=True)(value): Linear(in_features=768, out_features=768, bias=True)(dropout): Dropout(p=0.1, inplace=False))(output): BertSelfOutput((dense): Linear(in_features=768, out_features=768, bias=True)(LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)(dropout): Dropout(p=0.1, inplace=False)))(intermediate): BertIntermediate((dense): Linear(in_features=768, out_features=3072, bias=True))(output): BertOutput((dense): 
Linear(in_features=3072, out_features=768, bias=True)
              (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
              (dropout): Dropout(p=0.1, inplace=False)
            )
          )
          (5): BertLayer(
            (attention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (crossattention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (intermediate): BertIntermediate(
              (dense): Linear(in_features=768, out_features=3072, bias=True)
            )
            (output): BertOutput(
              (dense): Linear(in_features=3072, out_features=768, bias=True)
              (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
              (dropout): Dropout(p=0.1, inplace=False)
            )
          )
          (6): BertLayer(
            (attention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (crossattention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (intermediate): BertIntermediate(
              (dense): Linear(in_features=768, out_features=3072, bias=True)
            )
            (output): BertOutput(
              (dense): Linear(in_features=3072, out_features=768, bias=True)
              (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
              (dropout): Dropout(p=0.1, inplace=False)
            )
          )
          (7): BertLayer(
            (attention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (crossattention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (intermediate): BertIntermediate(
              (dense): Linear(in_features=768, out_features=3072, bias=True)
            )
            (output): BertOutput(
              (dense): Linear(in_features=3072, out_features=768, bias=True)
              (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
              (dropout): Dropout(p=0.1, inplace=False)
            )
          )
          (8): BertLayer(
            (attention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (crossattention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (intermediate): BertIntermediate(
              (dense): Linear(in_features=768, out_features=3072, bias=True)
            )
            (output): BertOutput(
              (dense): Linear(in_features=3072, out_features=768, bias=True)
              (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
              (dropout): Dropout(p=0.1, inplace=False)
            )
          )
          (9): BertLayer(
            (attention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (crossattention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (intermediate): BertIntermediate(
              (dense): Linear(in_features=768, out_features=3072, bias=True)
            )
            (output): BertOutput(
              (dense): Linear(in_features=3072, out_features=768, bias=True)
              (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
              (dropout): Dropout(p=0.1, inplace=False)
            )
          )
          (10): BertLayer(
            (attention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (crossattention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (intermediate): BertIntermediate(
              (dense): Linear(in_features=768, out_features=3072, bias=True)
            )
            (output): BertOutput(
              (dense): Linear(in_features=3072, out_features=768, bias=True)
              (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
              (dropout): Dropout(p=0.1, inplace=False)
            )
          )
          (11): BertLayer(
            (attention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (crossattention): BertAttention(
              (self): BertSelfAttention(
                (query): Linear(in_features=768, out_features=768, bias=True)
                (key): Linear(in_features=768, out_features=768, bias=True)
                (value): Linear(in_features=768, out_features=768, bias=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
              (output): BertSelfOutput(
                (dense): Linear(in_features=768, out_features=768, bias=True)
                (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
                (dropout): Dropout(p=0.1, inplace=False)
              )
            )
            (intermediate): BertIntermediate(
              (dense): Linear(in_features=768, out_features=3072, bias=True)
            )
            (output): BertOutput(
              (dense): Linear(in_features=3072, out_features=768, bias=True)
              (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
              (dropout): Dropout(p=0.1, inplace=False)
            )
          )
        )
      )
    )
    (cls): BertOnlyMLMHead(
      (predictions): BertLMPredictionHead(
        (transform): BertPredictionHeadTransform(
          (dense): Linear(in_features=768, out_features=768, bias=True)
          (LayerNorm): LayerNorm((768,), eps=1e-12, elementwise_affine=True)
        )
        (decoder): Linear(in_features=768, out_features=30524, bias=True)
      )
    )
  )
)
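The printed shapes are enough to work out how large each decoder layer is. Below is a minimal sketch of that arithmetic. It assumes every `Linear` carries a bias (all of them print `bias=True`) and that every `LayerNorm` has a weight and a bias (`elementwise_affine=True`). The helper names are illustrative only and do not come from the BLIP code. The key/value projections of `crossattention` take `in_features=768` here, the width of the image-encoder output, so the sketch keeps that input width as a separate constant.

```python
# Parameter count of one text-decoder BertLayer, derived from the printed
# module shapes (illustrative helpers, not part of the BLIP code base).

HIDDEN = 768         # hidden size of every BertLayer
INTERMEDIATE = 3072  # out_features of BertIntermediate.dense
IMAGE_WIDTH = 768    # in_features of crossattention key/value


def linear_params(in_features: int, out_features: int, bias: bool = True) -> int:
    """Weight matrix plus optional bias vector of an nn.Linear."""
    return in_features * out_features + (out_features if bias else 0)


def layernorm_params(dim: int) -> int:
    """LayerNorm with elementwise_affine=True has a weight and a bias of size dim."""
    return 2 * dim


def attention_params(hidden: int, kv_in: int) -> int:
    """BertAttention = query/key/value projections + BertSelfOutput (dense + LayerNorm)."""
    qkv = linear_params(hidden, hidden) + 2 * linear_params(kv_in, hidden)
    self_output = linear_params(hidden, hidden) + layernorm_params(hidden)
    return qkv + self_output


def bert_layer_params(with_cross_attention: bool = True) -> int:
    total = attention_params(HIDDEN, HIDDEN)                 # (attention)
    if with_cross_attention:
        total += attention_params(HIDDEN, IMAGE_WIDTH)       # (crossattention)
    total += linear_params(HIDDEN, INTERMEDIATE)             # (intermediate)
    total += linear_params(INTERMEDIATE, HIDDEN) + layernorm_params(HIDDEN)  # (output)
    return total


if __name__ == "__main__":
    per_layer = bert_layer_params()
    print(f"one decoder layer : {per_layer:,}")                 # 9,451,776
    print(f"without crossattn : {bert_layer_params(False):,}")  # 7,087,872
    print(f"12 decoder layers : {12 * per_layer:,}")            # 113,421,312
```

The count covers only the 12 `BertLayer` blocks, indices (0) to (11). The embeddings and the `cls` head are left out. The comparison shows that the `crossattention` sublayer adds about a third on top of a plain BERT layer.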

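The `crossattention` sublayer is what sets these layers apart from a plain BERT layer. Its query is computed from the text hidden states, while key and value are projected from the image-encoder output. Below is a minimal single-head sketch in pure Python. It assumes the query/key/value projections have already been applied, and it ignores multi-head splitting, dropout, attention masks and the `BertSelfOutput` residual plus LayerNorm. All function names are hypothetical and not BLIP's API.

```python
import math
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain (n x k) @ (k x m) matrix product."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def transpose(a: Matrix) -> Matrix:
    return [list(col) for col in zip(*a)]


def softmax(row: List[float]) -> List[float]:
    """Numerically stable softmax over one row of scores."""
    m = max(row)
    exps = [math.exp(v - m) for v in row]
    total = sum(exps)
    return [e / total for e in exps]


def cross_attention(text_q: Matrix, image_k: Matrix, image_v: Matrix) -> Matrix:
    """Scaled dot-product attention: text queries attend over image keys/values.

    text_q: (num_text_tokens, d); image_k, image_v: (num_patches, d).
    Returns (num_text_tokens, d): one image-conditioned vector per text token.
    """
    d = len(text_q[0])
    scores = matmul(text_q, transpose(image_k))  # (num_text_tokens, num_patches)
    weights = [softmax([s / math.sqrt(d) for s in row]) for row in scores]
    return matmul(weights, image_v)


if __name__ == "__main__":
    text_q = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]  # 3 text tokens, d = 2
    image_k = [[1.0, 0.0], [0.0, 1.0]]              # 2 image patches
    image_v = [[10.0, 0.0], [0.0, 10.0]]
    for row in cross_attention(text_q, image_k, image_v):
        print([round(v, 3) for v in row])
```

The output has one row per text token, whatever the number of image patches. That is why the decoder can keep its 768-dim hidden states while reading the image. With the toy inputs, the third query scores both patches equally and therefore receives the average of the two value vectors, `[5.0, 5.0]`.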